


In computing, floating-point arithmetic (FP) is arithmetic on subsets of real numbers formed by a ''significand'' (a signed sequence of a fixed number of digits in some base) multiplied by an integer power of that base.

Numbers of this form are called floating-point numbers.

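For example, the number 2469/200 is a floating-point number in base ten with five digits:

$$\frac{2469}{200} = 12.345 = 12345 \times 10^{-3},$$

with significand 12345, base 10, and exponent −3.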

However, 7716/625 = 12.3456 is not a floating-point number in base ten with five digits—it needs six digits.

The nearest floating-point number with only five digits is 12.346.

And 1/3 = 0.3333… is not a floating-point number in base ten with any finite number of digits.

In practice, most floating-point systems use base two, though base ten (decimal floating point) is also common.

Floating-point arithmetic operations, such as addition and division, approximate the corresponding real number arithmetic operations by rounding any result that is not a floating-point number itself to a nearby floating-point number.

For example, in a floating-point arithmetic with five base-ten digits, the sum 12.345 + 1.0001 = 13.3451 might be rounded to 13.345.

The term ''floating point'' refers to the fact that the number's radix point can "float" anywhere to the left, right, or between the significant digits of the number. This position is indicated by the exponent, so floating point can be considered a form of scientific notation.

A floating-point system can be used to represent, with a fixed number of digits, numbers of very different orders of magnitude, such as the number of meters between galaxies or between protons in an atom. For this reason, floating-point arithmetic is often used to allow very small and very large real numbers that require fast processing times. The result of this dynamic range is that the numbers that can be represented are not uniformly spaced; the difference between two consecutive representable numbers varies with their exponent.

Over the years, a variety of floating-point representations have been used in computers. In 1985, the IEEE 754 Standard for Floating-Point Arithmetic was established, and since the 1990s, the most commonly encountered representations are those defined by the IEEE.

The speed of floating-point operations, commonly measured in terms of FLOPS, is an important characteristic of a computer system, especially for applications that involve intensive mathematical calculations.

A floating-point unit (FPU, colloquially a math coprocessor) is a part of a computer system specially designed to carry out operations on floating-point numbers.

Overview

Floating-point numbers

A number representation specifies some way of encoding a number, usually as a string of digits. There are several mechanisms by which strings of digits can represent numbers. In standard mathematical notation, the digit string can be of any length, and the location of the radix point is indicated by placing an explicit "point" character (dot or comma) there. If the radix point is not specified, then the string implicitly represents an integer and the unstated radix point would be off the right-hand end of the string, next to the least significant digit. In fixed-point systems, a position in the string is specified for the radix point. So a fixed-point scheme might use a string of 8 decimal digits with the decimal point in the middle, whereby "00012345" would represent 0001.2345.

In scientific notation, the given number is scaled by a power of 10, so that it lies within a specific range, typically between 1 and 10, with the radix point appearing immediately after the first digit. As a power of ten, the scaling factor is then indicated separately at the end of the number. For example, the orbital period of Jupiter's moon Io is 152,853.5047 seconds, a value that would be represented in standard-form scientific notation as $1.528535047 \times 10^{5}$ seconds.

Floating-point representation is similar in concept to scientific notation. Logically, a floating-point number consists of:

* A signed (meaning positive or negative) digit string of a given length in a given radix (or base). This digit string is referred to as the ''significand'', ''mantissa'', or ''coefficient''. The length of the significand determines the ''precision'' to which numbers can be represented. The radix point position is assumed always to be somewhere within the significand, often just after or just before the most significant digit, or to the right of the rightmost (least significant) digit. This article generally follows the convention that the radix point is set just after the most significant (leftmost) digit.

* A signed integer exponent (also referred to as the ''characteristic'', or ''scale''), which modifies the magnitude of the number.

To derive the value of the floating-point number, the ''significand'' is multiplied by the ''base'' raised to the power of the ''exponent'', equivalent to shifting the radix point from its implied position by a number of places equal to the value of the exponent—to the right if the exponent is positive or to the left if the exponent is negative.

Using base-10 (the familiar decimal notation) as an example, the number 152,853.5047, which has ten decimal digits of precision, is represented as the significand 1,528,535,047 together with 5 as the exponent. To determine the actual value, a decimal point is placed after the first digit of the significand and the result is multiplied by $10^{5}$ to give $1.528535047 \times 10^{5}$, or 152,853.5047. In storing such a number, the base (10) need not be stored, since it will be the same for the entire range of supported numbers, and can thus be inferred.

Symbolically, this final value is:

$$\frac{s}{b^{\,p-1}} \times b^{e},$$

where $s$ is the significand (ignoring any implied decimal point), $p$ is the precision (the number of digits in the significand), $b$ is the base (in our example, this is the number ''ten''), and $e$ is the exponent.

Historically, several number bases have been used for representing floating-point numbers, with base two (binary) being the most common, followed by base ten (decimal floating point), and other less common varieties, such as base sixteen (hexadecimal floating point), base eight (octal floating point), base four (quaternary floating point), base three (balanced ternary floating point) and even base 256 and base 65,536.

A floating-point number is a rational number, because it can be represented as one integer divided by another; for example $1.45 \times 10^{3}$ is $(145/100) \times 1000$ or $145{,}000/100$. The base determines the fractions that can be represented; for instance, 1/5 cannot be represented exactly as a floating-point number using a binary base, but 1/5 can be represented exactly using a decimal base ($0.2$, or $2 \times 10^{-1}$). However, 1/3 cannot be represented exactly by either binary (0.010101...) or decimal (0.333...), but in base 3, it is trivial (0.1 or $1 \times 3^{-1}$). The occasions on which infinite expansions occur depend on the base and its prime factors.

The way in which the significand (including its sign) and exponent are stored in a computer is implementation-dependent. The common IEEE formats are described in detail later and elsewhere. As an example, in the binary single-precision (32-bit) floating-point representation, $p = 24$, and so the significand is a string of 24 bits. For instance, the number π's first 33 bits are:

$$11001001\ 00001111\ 1101101\underline{0}\ 10100010\ 0$$

In this binary expansion, let us denote the positions from 0 (leftmost bit, or most significant bit) to 32 (rightmost bit). The 24-bit significand will stop at position 23, shown as the underlined bit above. The next bit, at position 24, is called the ''round bit'' or ''rounding bit''. It is used to round the 33-bit approximation to the nearest 24-bit number (there are specific rules for halfway values, which is not the case here). This bit, which is 1 in this example, is added to the integer formed by the leftmost 24 bits, yielding:

$$11001001\ 00001111\ 1101101\underline{1}$$

When this is stored in memory using the IEEE 754 encoding, this becomes the significand $s$. The significand is assumed to have a binary point to the right of the leftmost bit. So, the binary representation of π is calculated from left to right as follows:

$$\left(\sum_{n=0}^{p-1} \text{bit}_n \times 2^{-n}\right) \times 2^{e} = \left(1 \times 2^{-0} + 1 \times 2^{-1} + 0 \times 2^{-2} + 0 \times 2^{-3} + 1 \times 2^{-4} + \cdots + 1 \times 2^{-23}\right) \times 2^{1} = 1.57079637\ldots \times 2 = 3.14159274\ldots$$

where $p$ is the precision (24 in this example), $n$ is the position of the bit of the significand from the left (starting at 0 and finishing at 23 here) and $e$ is the exponent (1 in this example).

It can be required that the most significant digit of the significand of a non-zero number be non-zero (except when the corresponding exponent would be smaller than the minimum one). This process is called ''normalization''. For binary formats (which use only the digits 0 and 1), this non-zero digit is necessarily 1. Therefore, it does not need to be represented in memory, allowing the format to have one more bit of precision. This rule is variously called the ''leading bit convention'', the ''implicit bit convention'', the ''hidden bit convention'', or the ''assumed bit convention''.

Alternatives to floating-point numbers

The floating-point representation is by far the most common way of representing in computers an approximation to real numbers. However, there are alternatives:

* Fixed-point representation uses integer hardware operations controlled by a software implementation of a specific convention about the location of the binary or decimal point, for example, 6 bits or digits from the right. The hardware to manipulate these representations is less costly than floating point, and it can be used to perform normal integer operations, too. Binary fixed point is usually used in special-purpose applications on embedded processors that can only do integer arithmetic, but decimal fixed point is common in commercial applications.

* Logarithmic number systems (LNSs) represent a real number by the logarithm of its absolute value and a sign bit. The value distribution is similar to floating point, but the value-to-representation curve (''i.e.'', the graph of the logarithm function) is smooth (except at 0). Conversely to floating-point arithmetic, in a logarithmic number system multiplication, division and exponentiation are simple to implement, but addition and subtraction are complex. The (symmetric) level-index arithmetic (LI and SLI) of Charles Clenshaw, Frank Olver and Peter Turner is a scheme based on a generalized logarithm representation.

* Tapered floating-point representation, used in Unum.

* Some simple rational numbers (''e.g.'', 1/3 and 1/10) cannot be represented exactly in binary floating point, no matter what the precision is. Using a different radix allows one to represent some of them (''e.g.'', 1/10 in decimal floating point), but the possibilities remain limited. Software packages that perform rational arithmetic represent numbers as fractions with integral numerator and denominator, and can therefore represent any rational number exactly. Such packages generally need to use "bignum" arithmetic for the individual integers.

* Interval arithmetic allows one to represent numbers as intervals and obtain guaranteed bounds on results. It is generally based on other arithmetics, in particular floating point.

* Computer algebra systems such as Mathematica, Maxima, and Maple can often handle irrational numbers like $\pi$ or $\sqrt{3}$ in a completely "formal" way (symbolic computation), without dealing with a specific encoding of the significand. Such a program can evaluate expressions like "$\sin(3\pi)$" exactly, because it is programmed to process the underlying mathematics directly, instead of using approximate values for each intermediate calculation.

History

In 1914, the Spanish engineer Leonardo Torres Quevedo published ''Essays on Automatics'', where he designed a special-purpose electromechanical calculator based on Charles Babbage's analytical engine and described a way to store floating-point numbers in a consistent manner. He stated that numbers will be stored in exponential format as $n \times 10^{m}$, and offered three rules by which consistent manipulation of floating-point numbers by machines could be implemented. For Torres, "''n'' will always be the same number of digits (e.g. six), the first digit of ''n'' will be of order of tenths, the second of hundredths, etc., and one will write each quantity in the form: ''n''; ''m''." The format he proposed shows the need for a fixed-sized significand as is presently used for floating-point data, fixing the location of the decimal point in the significand so that each representation was unique, and how to format such numbers by specifying a syntax to be used that could be entered through a typewriter, as was the case of his Electromechanical Arithmometer in 1920. (Randell, Brian. "Digital Computers, History of Origins" (pdf), p. 545, ''Digital Computers: Origins'', Encyclopedia of Computer Science, January 2003.)

In 1938, Konrad Zuse of Berlin completed the Z1, the first binary, programmable mechanical computer; it uses a 24-bit binary floating-point number representation with a 7-bit signed exponent, a 17-bit significand (including one implicit bit), and a sign bit. The more reliable relay-based Z3, completed in 1941, has representations for both positive and negative infinities; in particular, it implements defined operations with infinity, such as $1/\infty = 0$, and it stops on undefined operations, such as $0 \times \infty$.

Zuse also proposed, but did not complete, carefully rounded floating-point arithmetic that includes $\pm\infty$ and NaN representations, anticipating features of the IEEE Standard by four decades. In contrast, von Neumann recommended against floating-point numbers for the 1951 IAS machine, arguing that fixed-point arithmetic is preferable.

The first ''commercial'' computer with floating-point hardware was Zuse's Z4 computer, designed in 1942–1945. In 1946, Bell Laboratories introduced the Model V, which implemented decimal floating-point numbers.

The Pilot ACE has binary floating-point arithmetic, and it became operational in 1950 at the National Physical Laboratory, UK. Thirty-three were later sold commercially as the English Electric DEUCE. The arithmetic is actually implemented in software, but with a one megahertz clock rate, the speed of floating-point and fixed-point operations in this machine was initially faster than that of many competing computers.

The mass-produced IBM 704 followed in 1954; it introduced the use of a biased exponent. For many decades after that, floating-point hardware was typically an optional feature, and computers that had it were said to be "scientific computers", or to have "scientific computation" (SC) capability (see also Extensions for Scientific Computation (XSC)). It was not until the launch of the Intel i486 in 1989 that ''general-purpose'' personal computers had floating-point capability in hardware as a standard feature.

The UNIVAC 1100/2200 series, introduced in 1962, supported two floating-point representations:

* ''Single precision'': 36 bits, organized as a 1-bit sign, an 8-bit exponent, and a 27-bit significand.

* ''Double precision'': 72 bits, organized as a 1-bit sign, an 11-bit exponent, and a 60-bit significand.

The IBM 7094, also introduced in 1962, supported single-precision and double-precision representations, but with no relation to the UNIVAC's representations. Indeed, in 1964, IBM introduced hexadecimal floating-point representations in its System/360 mainframes; these same representations are still available for use in modern z/Architecture systems. In 1998, IBM implemented IEEE-compatible binary floating-point arithmetic in its mainframes; in 2005, IBM also added IEEE-compatible decimal floating-point arithmetic.

Initially, computers used many different representations for floating-point numbers. The lack of standardization at the mainframe level was an ongoing problem by the early 1970s for those writing and maintaining higher-level source code; these manufacturer floating-point standards differed in the word sizes, the representations, and the rounding behavior and general accuracy of operations. Floating-point compatibility across multiple computing systems was in desperate need of standardization by the early 1980s, leading to the creation of the IEEE 754 standard once the 32-bit (or 64-bit) word had become commonplace. This standard was significantly based on a proposal from Intel, which was designing the i8087 numerical coprocessor; Motorola, which was designing the 68000 around the same time, gave significant input as well.

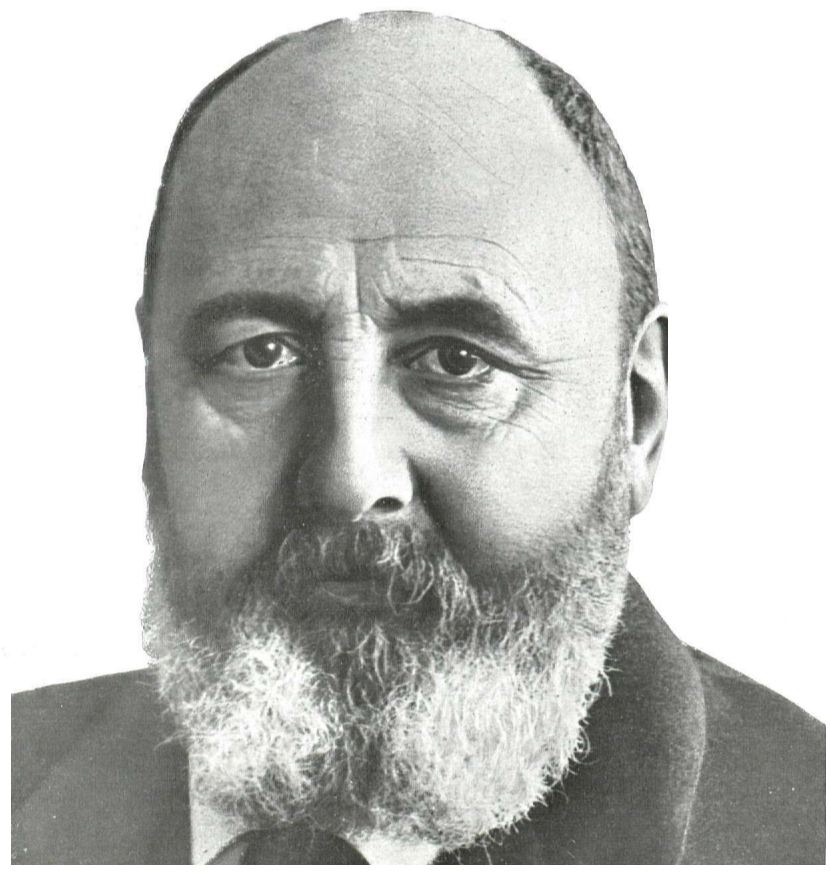

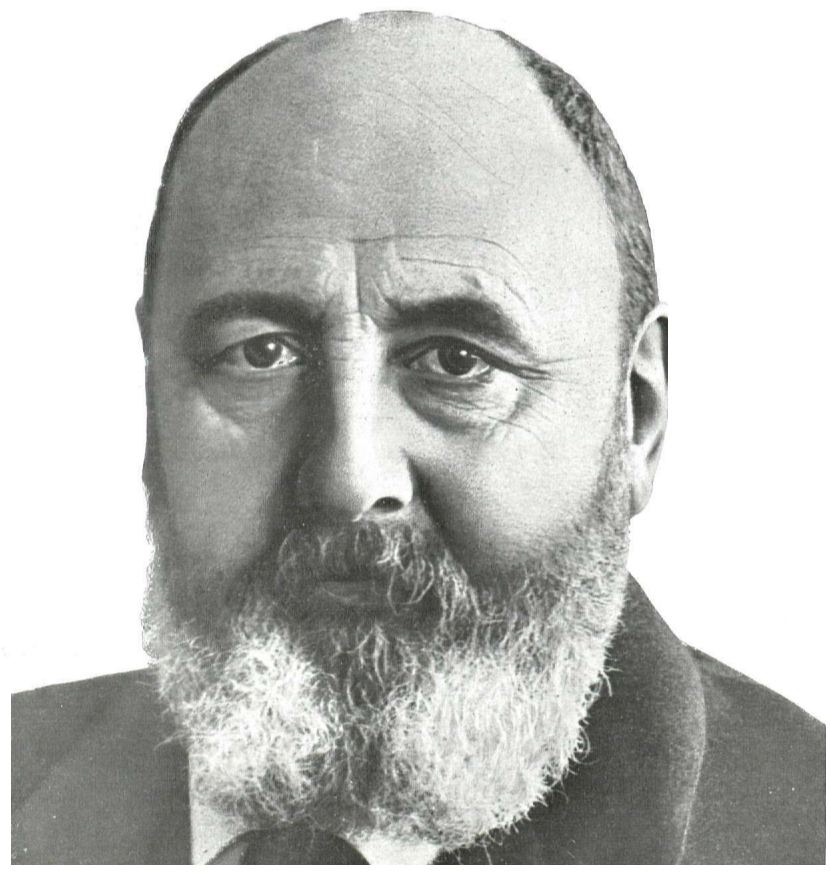

In 1989, mathematician and computer scientist William Kahan was honored with the Turing Award for being the primary architect behind this proposal; he was aided by his student Jerome Coonen and a visiting professor, Harold Stone.

Among the x86 (more specifically i8087) innovations are these:

* A precisely specified floating-point representation at the bit-string level, so that all compliant computers interpret bit patterns the same way. This makes it possible to accurately and efficiently transfer floating-point numbers from one computer to another (after accounting for endianness).

* A precisely specified behavior for the arithmetic operations: A result is required to be produced as if infinitely precise arithmetic were used to yield a value that is then rounded according to specific rules. This means that a compliant computer program would always produce the same result when given a particular input, thus mitigating the almost mystical reputation that floating-point computation had developed for its hitherto seemingly non-deterministic behavior.

* The ability of exceptional conditions (overflow, divide by zero, etc.) to propagate through a computation in a benign manner and then be handled by the software in a controlled fashion.

These features would be inherited into IEEE 754-1985 (with the exception of the encoding of special values and exceptions), though the extended internal precision of x87 means it requires explicit rounding of exact results directly to the destination precision in order to match standard IEEE 754 results. However, the behavior may not be the same as a rounding to the destination format due to a possible wider exponent range of the extended format.

Range of floating-point numbers

A floating-point number consists of two fixed-point components, whose range depends exclusively on the number of bits or digits in their representation. The range of the floating-point number depends linearly on the range of the significand but exponentially on the range of the exponent, which gives it a far wider range than a fixed-point number of the same width. On a typical computer system, a ''double-precision'' (64-bit) binary floating-point number has a coefficient of 53 bits (including 1 implied bit), an exponent of 11 bits, and 1 sign bit. Since $2^{10} = 1024$, the complete range of the positive normal floating-point numbers in this format is from $2^{-1022} \approx 2 \times 10^{-308}$ to approximately $2^{1024} \approx 2 \times 10^{308}$.

The number of normal floating-point numbers in a system $(B, P, L, U)$, where

* $B$ is the base of the system,

* $P$ is the precision of the significand (in base $B$),

* $L$ is the smallest exponent of the system,

* $U$ is the largest exponent of the system,

is $2(B-1)B^{P-1}(U-L+1)$.

There is a smallest positive normal floating-point number,

$$\text{Underflow level} = \text{UFL} = B^{L},$$

which has a 1 as the leading digit and 0 for the remaining digits of the significand, and the smallest possible value for the exponent.

There is a largest floating-point number,

$$\text{Overflow level} = \text{OFL} = (1 - B^{-P})\,B^{U+1},$$

which has $B - 1$ as the value for each digit of the significand and the largest possible value for the exponent.

In addition, there are representable values strictly between −UFL and UFL, namely positive and negative zeros, as well as subnormal numbers.

IEEE 754: floating point in modern computers

The IEEE standardized the computer representation for binary floating-point numbers in IEEE 754 (a.k.a. IEC 60559) in 1985. This first standard is followed by almost all modern machines. It was revised in 2008. IBM mainframes support IBM's own hexadecimal floating-point format and IEEE 754-2008 decimal floating point in addition to the IEEE 754 binary format. The Cray T90 series had an IEEE version, but the SV1 still uses Cray floating-point format.

The standard provides for many closely related formats, differing in only a few details. Five of these formats are called ''basic formats'', and others are termed ''extended precision formats'' and ''extendable precision format''. Three formats are especially widely used in computer hardware and languages:

* Single precision (binary32), usually used to represent the "float" type in the C language family. This is a binary format that occupies 32 bits (4 bytes) and its significand has a precision of 24 bits (about 7 decimal digits).

* Double precision (binary64), usually used to represent the "double" type in the C language family. This is a binary format that occupies 64 bits (8 bytes) and its significand has a precision of 53 bits (about 16 decimal digits).

* Double extended, also ambiguously called "extended precision" format. This is a binary format that occupies at least 79 bits (80 if the hidden/implicit bit rule is not used) and its significand has a precision of at least 64 bits (about 19 decimal digits). The C99 and C11 standards of the C language family, in their annex F ("IEC 60559 floating-point arithmetic"), recommend such an extended format to be provided as "long double". A format satisfying the minimal requirements (64-bit significand precision, 15-bit exponent, thus fitting on 80 bits) is provided by the x86 architecture. Often on such processors, this format can be used with "long double", though extended precision is not available with MSVC. For alignment purposes, many tools store this 80-bit value in a 96-bit or 128-bit space. On other processors, "long double" may stand for a larger format, such as quadruple precision, or just double precision, if any form of extended precision is not available.
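You can check the significand widths quoted above empirically on a given platform. The following is a minimal C sketch. It assumes an IEEE 754 host in the default round-to-nearest mode, and the function names are hypothetical. It halves a step until adding it to 1 no longer changes the value. The number of halvings equals the significand precision in bits, which `<float.h>` also reports as `FLT_MANT_DIG` and `DBL_MANT_DIG`.

```c
#include <assert.h>
#include <float.h>

/* Halve eps until 1 + eps rounds back to 1. With ties-to-even, 1 + 2^-p rounds
   to 1 exactly when p is the precision, so the loop count equals p. volatile
   forces every intermediate to be stored, and therefore rounded, in the target
   type; this matters on x87, which may keep wider intermediates in registers. */
static int float_precision_bits(void)
{
    volatile float one = 1.0f, eps = 1.0f, sum;
    int bits = 0;
    do {
        eps = eps / 2.0f;
        sum = one + eps;
        bits++;
    } while (sum != one);
    return bits;
}

static int double_precision_bits(void)
{
    volatile double one = 1.0, eps = 1.0, sum;
    int bits = 0;
    do {
        eps = eps / 2.0;
        sum = one + eps;
        bits++;
    } while (sum != one);
    return bits;
}
```

On a typical IEEE 754 system these return 24 and 53, matching the binary32 and binary64 entries in the list.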

Increasing the precision of the floating-point representation generally reduces the amount of accumulated round-off error caused by intermediate calculations.
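A small C experiment illustrates the effect. It is only a sketch, and the step 0.1 and count of 1,000,000 are arbitrary choices made for illustration. The same value is added repeatedly in single and in double precision. Each running sum's error is then measured against the exact product n × step, computed in double.

```c
#include <assert.h>
#include <math.h>

/* Add `step` to a running sum n times, in float and in double, and report each
   sum's absolute error. The float reference uses the float-rounded step, so the
   measured error comes only from the repeated additions. */
static void accumulate(long n, double step, double *err_float, double *err_double)
{
    float  stepf = (float)step;
    float  sf = 0.0f;
    double sd = 0.0;
    for (long i = 0; i < n; i++) {
        sf += stepf;   /* each addition rounds to 24 significand bits */
        sd += step;    /* each addition rounds to 53 significand bits */
    }
    /* n * stepf is exact in double for n < 2^29 (24 + 29 bits <= 53). */
    *err_float  = fabs((double)sf - (double)n * (double)stepf);
    *err_double = fabs(sd - (double)n * step);
}
```

With n = 1,000,000 and step = 0.1, the single-precision sum typically drifts by hundreds, while the double-precision error stays around 10^−6.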

Other IEEE formats include:

* Decimal64 and decimal128 floating-point formats. These formats (especially decimal128) are pervasive in financial transactions because, along with the decimal32 format, they allow correct decimal rounding.

* Quadruple precision (binary128). This is a binary format that occupies 128 bits (16 bytes) and its significand has a precision of 113 bits (about 34 decimal digits).

* Half precision, also called binary16, a 16-bit floating-point value. It is used in the NVIDIA Cg graphics language and in the OpenEXR standard (where it actually predates its introduction in the IEEE 754 standard).

Any integer with absolute value less than $2^{24}$ can be exactly represented in the single-precision format, and any integer with absolute value less than $2^{53}$ can be exactly represented in the double-precision format. Furthermore, a wide range of powers of 2 times such a number can be represented. These properties are sometimes used for purely integer data, to get 53-bit integers on platforms that have double-precision floats but only 32-bit integers.
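These limits can be checked directly. Below is a minimal C sketch that assumes IEEE 754 `float` and `double` and the default round-to-nearest mode. The helper names `exact_in_float` and `exact_in_double` are hypothetical. An integer is exactly representable precisely when converting it to the floating type and back leaves it unchanged.

```c
#include <assert.h>
#include <stdint.h>

/* True if n survives a round trip through double unchanged.
   |n| < 2^62 keeps the conversion back to int64_t within range. */
static int exact_in_double(int64_t n)
{
    assert(n > -(INT64_C(1) << 62) && n < (INT64_C(1) << 62));
    return (int64_t)(double)n == n;
}

/* Same check for float; |n| < 2^30 keeps the conversion back to int32_t in range. */
static int exact_in_float(int32_t n)
{
    assert(n > -(INT32_C(1) << 30) && n < (INT32_C(1) << 30));
    return (int32_t)(float)n == n;
}
```

For example, 2^24 + 1 = 16777217 is the first positive integer that `float` cannot hold: it lies exactly halfway between 2^24 and 2^24 + 2 and rounds to the even neighbour 2^24. The same happens to 2^53 + 1 in `double`.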

The standard specifies some special values, and their representation: positive infinity (+∞), negative infinity (−∞), a negative zero (−0) distinct from ordinary ("positive") zero, and "not a number" values (NaNs).

Comparison of floating-point numbers, as defined by the IEEE standard, is a bit different from usual integer comparison. Negative and positive zero compare equal, and every NaN compares unequal to every value, including itself. All finite floating-point numbers are strictly smaller than +∞ and strictly greater than −∞, and they are ordered in the same way as their values (in the set of real numbers).
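You can observe these comparison rules directly in C99. The sketch below is illustrative, and `ieee_relation` and `same_bits` are hypothetical helpers. The first reports how two values compare under IEEE 754 rules. The second compares the stored bit patterns, which shows that +0 and −0 compare equal even though their representations differ.

```c
#include <assert.h>
#include <math.h>
#include <string.h>

/* IEEE 754 relation between a and b. Any comparison involving a NaN is
   "unordered": <, == and > all evaluate to false. */
static const char *ieee_relation(double a, double b)
{
    if (isnan(a) || isnan(b)) return "unordered";
    if (a < b)  return "less";
    if (a > b)  return "greater";
    return "equal";               /* also covers +0 compared with -0 */
}

/* Bitwise identity of the stored representations, unlike ==. */
static int same_bits(double a, double b)
{
    return memcmp(&a, &b, sizeof a) == 0;
}
```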

Internal representation

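Floating-point numbers are typically packed into a computer datum as the sign bit, the exponent field, and a field for the significand, from left to right. For the IEEE 754 binary formats (basic and extended) that have extant hardware implementations, they are apportioned as follows:

* Half (binary16): 1 sign bit, 5 exponent bits, 10 significand bits (16 bits in total); exponent bias 15; 11 bits of precision.

* Single (binary32): 1 sign bit, 8 exponent bits, 23 significand bits (32 bits in total); exponent bias 127; 24 bits of precision.

* Double (binary64): 1 sign bit, 11 exponent bits, 52 significand bits (64 bits in total); exponent bias 1023; 53 bits of precision.

* x86 extended precision: 1 sign bit, 15 exponent bits, 64 significand bits (80 bits in total, leading bit stored explicitly); exponent bias 16383; 64 bits of precision.

* Quad (binary128): 1 sign bit, 15 exponent bits, 112 significand bits (128 bits in total); exponent bias 16383; 113 bits of precision.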

While the exponent can be positive or negative, in binary formats it is stored as an unsigned number that has a fixed "bias" added to it. Values of all 0s in this field are reserved for the zeros and subnormal numbers; values of all 1s are reserved for the infinities and NaNs. The exponent range for normal numbers is [−126, 127] for single precision, [−1022, 1023] for double, or [−16382, 16383] for quad. Normal numbers exclude subnormal values, zeros, infinities, and NaNs.
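The field rules above are enough to classify any single-precision bit pattern. Below is a minimal C sketch with hypothetical names. It assumes the binary32 layout: 1 sign bit, an 8-bit exponent field with bias 127, and a 23-bit trailing significand.

```c
#include <assert.h>
#include <stdint.h>

enum b32_class { B32_ZERO, B32_SUBNORMAL, B32_NORMAL, B32_INFINITE, B32_NAN };

/* Classify a binary32 bit pattern from its fields alone:
   sign (bit 31) | biased exponent (bits 30..23) | trailing significand (bits 22..0). */
static enum b32_class classify_b32(uint32_t bits)
{
    uint32_t exp  = (bits >> 23) & 0xFFu;
    uint32_t frac = bits & 0x7FFFFFu;
    if (exp == 0)    return frac == 0 ? B32_ZERO : B32_SUBNORMAL;  /* all 0s */
    if (exp == 0xFF) return frac == 0 ? B32_INFINITE : B32_NAN;    /* all 1s */
    return B32_NORMAL;
}

/* Unbiased exponent of a normal binary32 value: stored field minus the bias 127.
   Stored fields 1..254 give the normal range [-126, 127]. */
static int b32_unbiased_exponent(uint32_t bits)
{
    assert(classify_b32(bits) == B32_NORMAL);
    return (int)((bits >> 23) & 0xFFu) - 127;
}
```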

In the IEEE binary interchange formats the leading bit of a normalized significand is not actually stored in the computer datum, since it is always 1. It is called the "hidden" or "implicit" bit. Because of this, the single-precision format actually has a significand with 24 bits of precision, the double-precision format has 53, quad has 113, and octuple has 237.

For example, it was shown above that π, rounded to 24 bits of precision, has:

* sign = 0 ; ''e'' = 1 ; ''s'' = 110010010000111111011011 (including the hidden bit)

The sum of the exponent bias (127) and the exponent (1) is 128, so this is represented in the single-precision format as

* 0 10000000 10010010000111111011011 (excluding the hidden bit) = 40490FDB as a hexadecimal number.
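You can confirm this encoding by copying a `float`'s bytes into a 32-bit integer. Below is a minimal C sketch that assumes `float` is IEEE 754 binary32; the struct and function names are hypothetical. It uses `memcpy` rather than a pointer cast, because the cast would violate C's aliasing rules.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

/* Fields of an IEEE 754 binary32 value. */
struct b32_fields {
    unsigned sign;        /* 1 bit */
    unsigned biased_exp;  /* 8 bits, bias 127 */
    uint32_t fraction;    /* 23 stored bits; the hidden leading 1 is not stored */
};

/* Copy the float's storage into a uint32_t and split it into its fields.
   If raw is non-NULL, the whole 32-bit pattern is also returned through it. */
static struct b32_fields split_float(float f, uint32_t *raw)
{
    uint32_t bits;
    memcpy(&bits, &f, sizeof bits);
    if (raw != NULL)
        *raw = bits;
    struct b32_fields r = { bits >> 31, (bits >> 23) & 0xFFu, bits & 0x7FFFFFu };
    return r;
}
```

For π rounded to single precision, this yields the pattern 40490FDB, with sign 0, biased exponent 128 and fraction 490FDB (binary 10010010000111111011011).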

[Figure: example layout of a 32-bit floating-point number; the 64-bit ("double") layout is similar.]

Other notable floating-point formats

In addition to the widely used IEEE 754 standard formats, other floating-point formats are used, or have been used, in certain domain-specific areas.

* The Microsoft Binary Format (MBF) was developed for the Microsoft BASIC language products, including Microsoft's first ever product, the Altair BASIC (1975), TRS-80 LEVEL II, CP/M's MBASIC, IBM PC 5150's BASICA, MS-DOS's GW-BASIC and QuickBASIC prior to version 4.00. QuickBASIC versions 4.00 and 4.50 switched to the IEEE 754-1985 format but can revert to the MBF format using the /MBF command option. MBF was designed and developed on a simulated Intel 8080 by Monte Davidoff, a dormmate of Bill Gates, during spring of 1975 for the MITS Altair 8800. The initial release of July 1975 supported a single-precision (32 bits) format due to the cost of the MITS Altair 8800's 4-kilobyte memory. In December 1975, the 8-kilobyte version added a double-precision (64 bits) format. A single-precision (40 bits) variant format was adopted for other CPUs, notably the MOS 6502 (Apple II, Commodore PET, Atari), Motorola 6800 (MITS Altair 680) and Motorola 6809 (TRS-80 Color Computer). All Microsoft language products from 1975 through 1987 used the Microsoft Binary Format, until Microsoft adopted the IEEE 754 standard format in all its products from 1988 through their current releases. MBF consists of the MBF single-precision format (32 bits, "6-digit BASIC"), the MBF extended-precision format (40 bits, "9-digit BASIC"), and the MBF double-precision format (64 bits); each of them is represented with an 8-bit exponent, followed by a sign bit, followed by a significand of respectively 23, 31, and 55 bits.

* The bfloat16 format requires the same amount of memory (16 bits) as the IEEE 754 half-precision format, but allocates 8 bits to the exponent instead of 5, thus providing the same range as an IEEE 754 single-precision number. The tradeoff is a reduced precision, as the trailing significand field is reduced from 10 to 7 bits. This format is mainly used in the training of machine learning models, where range is more valuable than precision. Many machine learning accelerators provide hardware support for this format.

* The TensorFloat-32 format combines the 8 bits of exponent of the bfloat16 with the 10 bits of trailing significand field of half-precision formats, resulting in a size of 19 bits. This format was introduced by Nvidia, which provides hardware support for it in the Tensor Cores of its GPUs based on the Nvidia Ampere architecture. The drawback of this format is its size, which is not a power of 2. However, according to Nvidia, this format should only be used internally by hardware to speed up computations, while inputs and outputs should be stored in the 32-bit single-precision IEEE 754 format.

* The Hopper architecture GPUs provide two FP8 formats: one with the same numerical range as half-precision (E5M2) and one with higher precision, but less range (E4M3).

* The Blackwell GPU architecture includes support for FP6 (E3M2 and E2M3) and FP4 (E2M1) formats. FP4 is the smallest floating-point format which allows for all IEEE 754 principles (see minifloat).
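The reduced-precision formats above can be emulated in software by rounding a binary32 value's trailing significand field. The following C sketch is an illustration, not Nvidia's or any library's API. The helper name `round_significand` is hypothetical, and the code assumes `float` is IEEE 754 binary32. Keeping 7 trailing bits mimics bfloat16, and keeping 10 mimics TensorFloat-32. Both formats keep the 8-bit exponent, so the single-precision range is preserved and only precision is lost. Hardware may treat subnormals or NaN payloads differently.

```c
#include <assert.h>
#include <math.h>
#include <stdint.h>
#include <string.h>

/* Round a binary32 value to `kept` trailing significand bits (1 <= kept <= 22)
   with round-to-nearest, ties-to-even; the result is still stored as a float.
   kept = 7 mimics bfloat16, kept = 10 mimics TensorFloat-32. */
static float round_significand(float f, int kept)
{
    assert(kept >= 1 && kept <= 22);
    if (!isfinite(f))                        /* infinities and NaNs pass through */
        return f;
    uint32_t bits;
    memcpy(&bits, &f, sizeof bits);
    int drop = 23 - kept;                    /* low fraction bits to discard */
    uint32_t lsb = (bits >> drop) & 1u;      /* lowest bit that is kept */
    /* Add just under one half; on an exact tie the extra lsb rounds odd values up. */
    bits += ((1u << (drop - 1)) - 1u) + lsb;
    bits &= ~((1u << drop) - 1u);            /* clear the discarded bits */
    /* A carry out of the fraction bumps the exponent, and rounding past the
       largest finite value produces the infinity bit pattern. */
    memcpy(&f, &bits, sizeof f);
    return f;
}
```

For example, single-precision π becomes 3.140625 with 7 kept bits. A value near the top of the single-precision range can round up to infinity, because bfloat16's largest finite value is slightly smaller.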

Representable numbers, conversion and rounding

By their nature, all numbers expressed in floating-point format are rational numbers with a terminating expansion in the relevant base (for example, a terminating decimal expansion in base-10, or a terminating binary expansion in base-2). Irrational numbers, such as π or √2, or non-terminating rational numbers, must be approximated. The number of digits (or bits) of precision also limits the set of rational numbers that can be represented exactly. For example, the decimal number 123456789 cannot be exactly represented if only eight decimal digits of precision are available (it would be rounded to one of the two straddling representable values, 12345678 × 10^1 or 12345679 × 10^1); the same applies to non-terminating digits (0.555..., with the 5 repeating, would be rounded to either 0.55555555 or 0.55555556).

When a number is represented in some format (such as a character string) which is not a native floating-point representation supported in a computer implementation, then it will require a conversion before it can be used in that implementation. If the number can be represented exactly in the floating-point format then the conversion is exact. If there is not an exact representation then the conversion requires a choice of which floating-point number to use to represent the original value. The representation chosen will have a different value from the original, and the value thus adjusted is called the ''rounded value''.

Whether or not a rational number has a terminating expansion depends on the base. For example, in base-10 the number 1/2 has a terminating expansion (0.5) while the number 1/3 does not (0.333...). In base-2 only rationals with denominators that are powers of 2 (such as 1/2 or 3/16) are terminating. Any rational with a denominator that has a prime factor other than 2 will have an infinite binary expansion. This means that numbers that appear to be short and exact when written in decimal format may need to be approximated when converted to binary floating-point. For example, the decimal number 0.1 is not representable in binary floating-point of any finite precision; the exact binary representation would have a "1100" sequence continuing endlessly:

: ''e'' = −4; ''s'' = 1100110011001100110011001100110011...,

where, as previously, ''s'' is the significand and ''e'' is the exponent.

When rounded to 24 bits this becomes

: ''e'' = −4; ''s'' = 110011001100110011001101,

which is actually 0.100000001490116119384765625 in decimal.
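Every binary floating-point value is an integer multiple of a power of two, so its exact decimal expansion is finite and can be printed in full. Below is a short C sketch. It assumes a C library whose `printf` conversions are exact, as glibc's are (some other libraries stop producing exact digits after a fixed count). The helper name is hypothetical.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Format a float with `digits` digits after the decimal point. A float whose
   lowest significand bit is worth 2^-k is reproduced exactly with k digits. */
static void float_to_fixed(float f, int digits, char *buf, size_t size)
{
    int n = snprintf(buf, size, "%.*f", digits, (double)f);
    assert(n > 0 && (size_t)n < size);   /* output was not truncated */
}
```

Since 0.1f equals 13421773 × 2^−27, 27 digits after the point reproduce the value quoted above exactly.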

As a further example, the real number π, represented in binary as an infinite sequence of bits is

: 11.0010010000111111011010101000100010000101101000110000100011010011...

but is

: 11.0010010000111111011011

when approximated by rounding to a precision of 24 bits.

In binary single-precision floating-point, this is represented as ''s'' = 1.10010010000111111011011 with ''e'' = 1.

This has a decimal value of

: 3.1415927410125732421875,

whereas a more accurate approximation of the true value of π is

: 3.14159265358979323846264338327950...

The result of rounding differs from the true value by about 0.03 parts per million, and matches the decimal representation of π in the first 7 digits. The difference is the discretization error and is limited by the machine epsilon.
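You can check the size of this discretization error numerically. Below is a minimal C sketch that assumes binary32 `float`; the function name is hypothetical. It rounds a double to float and reports the relative error. Under round-to-nearest, this error is bounded by half the machine epsilon, `FLT_EPSILON / 2` = 2^−24.

```c
#include <assert.h>
#include <float.h>
#include <math.h>

/* Relative error introduced by rounding x (held in double) to float.
   Assumes x is nonzero and within the normal float range. */
static double float_rounding_rel_error(double x)
{
    assert(x != 0.0);
    float r = (float)x;                     /* round to nearest binary32 */
    return fabs((double)r - x) / fabs(x);
}
```

For π this gives about 2.8 × 10^−8, which is the "about 0.03 parts per million" stated above.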

The arithmetical difference between two consecutive representable floating-point numbers which have the same exponent is called a unit in the last place (ULP). For example, if there is no representable number lying between the representable numbers $1.45\mathrm{A70C22}_{16}$ and $1.45\mathrm{A70C24}_{16}$, the ULP is $2 \times 16^{-8}$, or $2^{-31}$. For numbers with a base-2 exponent part of 0, i.e. numbers with an absolute value higher than or equal to 1 but lower than 2, an ULP is exactly $2^{-23}$ or about $10^{-7}$ in single precision, and exactly $2^{-52}$ or about $2 \times 10^{-16}$ in double precision. In the default round-to-nearest mode, IEEE-compliant hardware is required to return a result within one-half of a ULP of the exact value.

Rounding modes

Rounding is used when the exact result of a floating-point operation (or a conversion to floating-point format) would need more digits than there are digits in the significand. IEEE 754 requires ''correct rounding'': that is, the rounded result is as if infinitely precise arithmetic was used to compute the value and then rounded (although in implementation only three extra bits are needed to ensure this). There are several different rounding schemes (or ''rounding modes''). Historically, truncation was the typical approach. Since the introduction of IEEE 754, the default method (''round to nearest, ties to even'', sometimes called Banker's Rounding) is more commonly used. This method rounds the ideal (infinitely precise) result of an arithmetic operation to the nearest representable value, and gives that representation as the result. In the case of a tie, the value that would make the significand end in an even digit is chosen. The IEEE 754 standard requires the same rounding to be applied to all fundamental algebraic operations, including square root and conversions, when there is a numeric (non-NaN) result. It means that the results of IEEE 754 operations are completely determined in all bits of the result, except for the representation of NaNs. (Correct rounding is not mandated for "library" functions such as cosine and log.)

Alternative rounding options are also available. IEEE 754 specifies the following rounding modes:

* round to nearest, where ties round to the nearest even digit in the required position (the default and by far the most common mode)

* round to nearest, where ties round away from zero (optional for binary floating-point and commonly used in decimal)

* round up (toward +∞; negative results thus round toward zero)

* round down (toward −∞; negative results thus round away from zero)

* round toward zero (truncation; it is similar to the common behavior of float-to-integer conversions, which convert −3.9 to −3 and 3.9 to 3)

Alternative modes are useful when the amount of error being introduced must be bounded. Applications that require a bounded error include multi-precision floating-point and interval arithmetic.

The alternative rounding modes are also useful in diagnosing numerical instability: if the results of a subroutine vary substantially between rounding toward +∞ and toward −∞, then it is likely numerically unstable and affected by round-off error.

Binary-to-decimal conversion with minimal number of digits

Converting a double-precision binary floating-point number to a decimal string is a common operation, but an algorithm producing results that are both accurate and minimal did not appear in print until 1990, with Steele and White's Dragon4. Some of the improvements since then include:

* David M. Gay's ''dtoa.c'', a practical open-source implementation of many ideas in Dragon4.

* Grisu3, with a 4× speedup as it removes the use of bignums. It must be used with a fallback, as it fails for about 0.5% of cases.

* Errol3, an always-succeeding algorithm similar to, but slower than, Grisu3. It is apparently not as good as an early-terminating Grisu with fallback.

* Ryū, an always-succeeding algorithm that is faster and simpler than Grisu3.

* Schubfach, an always-succeeding algorithm that is based on a similar idea to Ryū, developed almost simultaneously and independently. Performs better than Ryū and Grisu3 in certain benchmarks.

Many modern language runtimes use Grisu3 with a Dragon4 fallback.
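These algorithms share a goal: producing the shortest decimal string that converts back to the same double. A brute-force C sketch can illustrate that goal. It is not Dragon4, Grisu, Ryū or Schubfach. It simply tries 1 to 17 significant digits with `snprintf` and checks each candidate with `strtod`. It relies on the C library to convert correctly in both directions, as glibc does. The function name is hypothetical. Seventeen significant digits always suffice for binary64.

```c
#include <assert.h>
#include <stdio.h>
#include <stdlib.h>
#include <string.h>

/* Write into buf the shortest "%.*g" form of a finite x that strtod converts
   back to exactly x. Brute force: at most 17 formatting attempts. */
static void shortest_roundtrip(double x, char *buf, size_t size)
{
    for (int digits = 1; digits <= 17; digits++) {
        int n = snprintf(buf, size, "%.*g", digits, x);
        assert(n > 0 && (size_t)n < size);
        if (strtod(buf, NULL) == x)
            return;                /* shortest round-tripping string found */
    }
    assert(0 && "17 significant digits always round-trip a double");
}
```

For example, 0.1 prints as "0.1", while 0.1 + 0.2 needs all 17 digits: "0.30000000000000004".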

Decimal-to-binary conversion

The problem of parsing a decimal string into a binary FP representation is complex, with an accurate parser not appearing until Clinger's 1990 work (implemented in dtoa.c). Further work has likewise progressed in the direction of faster parsing.

Floating-point operations

For ease of presentation and understanding, decimal radix with 7-digit precision will be used in the examples, as in the IEEE 754 ''decimal32'' format. The fundamental principles are the same in any radix or precision, except that normalization is optional (it does not affect the numerical value of the result). Here, ''s'' denotes the significand and ''e'' denotes the exponent.

Addition and subtraction

A simple method to add floating-point numbers is to first represent them with the same exponent. In the example below, the second number (with the smaller exponent) is shifted right by three digits, and one then proceeds with the usual addition method:

123456.7 = 1.234567 × 10^5

101.7654 = 1.017654 × 10^2 = 0.001017654 × 10^5

Hence:

123456.7 + 101.7654 = (1.234567 × 10^5) + (1.017654 × 10^2)

= (1.234567 × 10^5) + (0.001017654 × 10^5)

= (1.234567 + 0.001017654) × 10^5

= 1.235584654 × 10^5

In detail:

e=5; s=1.234567 (123456.7)

+ e=2; s=1.017654 (101.7654)

e=5; s=1.234567

+ e=5; s=0.001017654 (after shifting)

--------------------

e=5; s=1.235584654 (true sum: 123558.4654)

This is the true result, the exact sum of the operands. It will be rounded to seven digits and then normalized if necessary. The final result is

e=5; s=1.235585 (final sum: 123558.5)

The lowest three digits of the second operand (654) are essentially lost. This is round-off error. In extreme cases, the sum of two non-zero numbers may be equal to one of them:

e=5; s=1.234567

+ e=−3; s=9.876543

e=5; s=1.234567

+ e=5; s=0.00000009876543 (after shifting)

----------------------

e=5; s=1.23456709876543 (true sum)

e=5; s=1.234567 (after rounding and normalization)
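The same absorption happens in binary arithmetic. Below is a minimal C sketch that assumes IEEE 754 binary64 `double` with round-to-nearest; the helper name is hypothetical. Near 10^16, consecutive doubles are 2 apart. Adding 1 therefore produces an exact tie, which rounds back to the even neighbour, the original value.

```c
#include <assert.h>

/* Returns 1 if adding y to x leaves x unchanged, i.e. y is absorbed.
   volatile forces the sum to be rounded to double before the comparison. */
static int absorbed(double x, double y)
{
    volatile double sum = x + y;
    return sum == x;
}
```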

In the above conceptual examples it would appear that a large number of extra digits would need to be provided by the adder to ensure correct rounding; however, for binary addition or subtraction using careful implementation techniques only a ''guard'' bit, a ''rounding'' bit and one extra ''sticky'' bit need to be carried beyond the precision of the operands.

Another problem of loss of significance occurs when ''approximations'' to two nearly equal numbers are subtracted. In the following example ''e'' = 5; ''s'' = 1.234571 and ''e'' = 5; ''s'' = 1.234567 are approximations to the rationals 123457.1467 and 123456.659.

e=5; s=1.234571

− e=5; s=1.234567

----------------

e=5; s=0.000004

e=−1; s=4.000000 (after rounding and normalization)

The floating-point difference is computed exactly because the numbers are close; the Sterbenz lemma guarantees this, even in case of underflow when gradual underflow is supported. Despite this, the difference of the original numbers is ''e'' = −1; ''s'' = 4.877000, which differs by more than 20% from the difference ''e'' = −1; ''s'' = 4.000000 of the approximations. In extreme cases, all significant digits of precision can be lost. This ''cancellation'' illustrates the danger in assuming that all of the digits of a computed result are meaningful. Dealing with the consequences of these errors is a topic in numerical analysis; see also Accuracy problems.

Multiplication and division

To multiply, the significands are multiplied while the exponents are added, and the result is rounded and normalized.

e=3; s=4.734612

× e=5; s=5.417242

-----------------------

e=8; s=25.648538980104 (true product)

e=8; s=25.64854 (after rounding)

e=9; s=2.564854 (after normalization)

Similarly, division is accomplished by subtracting the divisor's exponent from the dividend's exponent, and dividing the dividend's significand by the divisor's significand. There are no cancellation or absorption problems with multiplication or division, though small errors may accumulate as operations are performed in succession. In practice, the way these operations are carried out in digital logic can be quite complex (see Booth's multiplication algorithm and Division algorithm).

Literal syntax

Literals for floating-point numbers depend on languages. They typically use e or E to denote scientific notation. The C programming language and the IEEE 754 standard also define a hexadecimal literal syntax with a base-2 exponent instead of 10. In languages like C, when the decimal exponent is omitted, a decimal point is needed to differentiate them from integers. Other languages do not have an integer type (such as JavaScript), or allow overloading of numeric types (such as Haskell). In these cases, digit strings such as 123 may also be floating-point literals.

Examples of floating-point literals are:

* 99.9

* -5000.12

* 6.02e23

* -3e-45

* 0x1.fffffep+127 in C and IEEE 754
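The hexadecimal form is useful because it states a binary value exactly. Below is a short C check that assumes binary32 `float` and a C99 compiler and library, since hexadecimal floating literals and `strtod` support for them arrived in C99. The last literal above is the largest finite single-precision value, and `strtod` parses the same syntax at run time. The helper name is hypothetical.

```c
#include <assert.h>
#include <float.h>
#include <stdlib.h>

/* 0x1.fffffep+127 = (2 - 2^-23) * 2^127, the largest finite binary32 value. */
static const float largest_float = 0x1.fffffep+127f;

/* Parse a complete literal string at run time, e.g. "0x1.8p1" = 1.5 * 2^1 = 3. */
static double parse_literal(const char *text)
{
    char *end;
    double v = strtod(text, &end);
    assert(*end == '\0');        /* the whole string was consumed */
    return v;
}
```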

Dealing with exceptional cases

Floating-point computation in a computer can run into three kinds of problems:

* An operation can be mathematically undefined, such as ∞/∞, or division by zero.

* An operation can be legal in principle, but not supported by the specific format, for example, calculating the square root of −1 or the inverse sine of 2 (both of which result in complex numbers).

* An operation can be legal in principle, but the result can be impossible to represent in the specified format, because the exponent is too large or too small to encode in the exponent field. Such an event is called an overflow (exponent too large), underflow (exponent too small) or denormalization (precision loss).

Prior to the IEEE standard, such conditions usually caused the program to terminate, or triggered some kind of trap that the programmer might be able to catch. How this worked was system-dependent, meaning that floating-point programs were not portable. (The term "exception" as used in IEEE 754 is a general term meaning an exceptional condition, which is not necessarily an error. This usage differs from that typically defined in programming languages such as C++ or Java, in which an "exception" is an alternative flow of control, closer to what is termed a "trap" in IEEE 754 terminology.)

Here, the required default method of handling exceptions according to IEEE 754 is discussed (the IEEE 754 optional trapping and other "alternate exception handling" modes are not discussed). Arithmetic exceptions are (by default) required to be recorded in "sticky" status flag bits. That they are "sticky" means that they are not reset by the next (arithmetic) operation, but stay set until explicitly reset. The use of "sticky" flags thus allows testing of exceptional conditions to be delayed until after a full floating-point expression or subroutine. Without them, exceptional conditions that could not otherwise be ignored would require explicit testing immediately after every floating-point operation. By default, an operation always returns a result according to specification without interrupting computation. For instance, 1/0 returns +∞, while also setting the divide-by-zero flag bit (this default of ∞ is designed to often return a finite result when used in subsequent operations and so be safely ignored).

The original IEEE 754 standard, however, failed to recommend operations to handle such sets of arithmetic exception flag bits. So while these were implemented in hardware, initially programming language implementations typically did not provide a means to access them (apart from assembler). Over time some programming language standards (e.g., C99/C11 and Fortran) have been updated to specify methods to access and change status flag bits. The 2008 version of the IEEE 754 standard now specifies a few operations for accessing and handling the arithmetic flag bits. The programming model is based on a single thread of execution, and use of the flags by multiple threads has to be handled by a means outside of the standard (e.g. C11 specifies that the flags have thread-local storage).

IEEE 754 specifies five arithmetic exceptions that are to be recorded in the status flags ("sticky bits"):

* inexact, set if the rounded (and returned) value is different from the mathematically exact result of the operation.

* underflow, set if the rounded value is tiny (as specified in IEEE 754) ''and'' inexact (or, as permitted by the 1985 version of IEEE 754, possibly only if there is denormalization loss), returning a subnormal value including the zeros.

* overflow, set if the absolute value of the rounded value is too large to be represented. An infinity or maximal finite value is returned, depending on which rounding is used.

* divide-by-zero, set if the result is infinite given finite operands, returning an infinity, either +∞ or −∞.

* invalid, set if a finite or infinite result cannot be returned, e.g. sqrt(−1) or 0/0, returning a quiet NaN.

The default return value for each of the exceptions is designed to give the correct result in the majority of cases, so that the exceptions can be ignored in the majority of codes. ''inexact'' returns a correctly rounded result, and ''underflow'' returns a value less than or equal to the smallest positive normal number in magnitude and can almost always be ignored. ''divide-by-zero'' returns infinity exactly, which will typically then divide a finite number and so give zero, or else will give an ''invalid'' exception subsequently if not, and so can also typically be ignored. For example, the effective resistance of ''n'' resistors in parallel (see fig. 1) is given by $R_\text{tot} = 1/(1/R_1 + 1/R_2 + \cdots + 1/R_n)$. If a short-circuit develops with $R_1$ set to 0, $1/R_1$ will return +infinity, which will give a final $R_\text{tot}$ of 0, as expected (see the continued fraction example of IEEE 754 design rationale for another example).
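The parallel-resistance formula can be written directly in C, relying on the default results 1/0 = +∞ and 1/(+∞) = 0 rather than special-casing a zero resistance. This is a sketch, and parallel_resistance is a hypothetical name.

```c
#include <stddef.h>

/* Effective resistance of n resistors in parallel:
   R_tot = 1 / (1/R_1 + 1/R_2 + ... + 1/R_n).
   Uses IEEE 754 defaults: 1/0 gives +inf, and 1/(+inf) gives 0. */
double parallel_resistance(const double *r, size_t n)
{
    double conductance = 0.0;            /* running sum of 1/R_i */
    for (size_t i = 0; i < n; i++)
        conductance += 1.0 / r[i];       /* a shorted resistor adds +inf */
    return 1.0 / conductance;
}
```

With resistances {0.0, 100.0, 47.0}, the conductance sum becomes +∞ and the function returns 0, the resistance of a short circuit, without any explicit test for zero.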

''Overflow'' and ''invalid'' exceptions typically cannot be ignored, but do not necessarily represent errors: for example, a root-finding routine, as part of its normal operation, may evaluate a passed-in function at values outside of its domain, returning NaN and an ''invalid'' exception flag to be ignored until finding a useful start point.

Accuracy problems

The fact that floating-point numbers cannot accurately represent all real numbers, and that floating-point operations cannot accurately represent true arithmetic operations, leads to many surprising situations. This is related to the finite precision with which computers generally represent numbers. For example, the decimal numbers 0.1 and 0.01 cannot be represented exactly as binary floating-point numbers. In the IEEE 754 binary32 format with its 24-bit significand, the result of attempting to square the approximation to 0.1 is neither 0.01 nor the representable number closest to it. The decimal number 0.1 is represented in binary as e = −4; s = 110011001100110011001101, which is 0.100000001490116119384765625 exactly. Squaring this number gives 0.010000000298023226097399174250313080847263336181640625 exactly. Squaring it with rounding to the 24-bit precision gives 0.010000000707805156707763671875 exactly. But the representable number closest to 0.01 is 0.009999999776482582092285156250 exactly.

Also, the non-representability of π (and π/2) means that an attempted computation of tan(π/2) will not yield a result of infinity, nor will it even overflow in the usual floating-point formats (assuming an accurate implementation of tan). It is simply not possible for standard floating-point hardware to attempt to compute tan(π/2), because π/2 cannot be represented exactly. In C, computing tan(pi/2.0) in double precision, with pi initialized to 3.1415926535897932384626433832795, will give a result of 16331239353195370.0. In single precision (using the tanf function), the result will be −22877332.0.
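The binary32 squaring of 0.1 can be reproduced in C, assuming float is IEEE 754 binary32 (as on common platforms). The function name is hypothetical. The hexadecimal literals 0x1.47ae14p-7 and 0x1.47ae16p-7 spell out exactly the two neighboring binary32 values around 0.01.

```c
/* Squares the binary32 approximation of 0.1 and returns a float,
   so the exact product is rounded to 24 significant bits. */
float square_of_tenth(void)
{
    volatile float tenth = 0.1f;  /* exactly 0.100000001490116119384765625 */
    return tenth * tenth;         /* rounds up to 0x1.47ae16p-7 */
}
```

The float nearest to 0.01 is 0x1.47ae14p-7 (0.00999999977648258209228515625), one unit in the last place below the computed square.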

By the same token, an attempted computation of sin(π) will not yield zero. The result will be (approximately) 0.1225 × 10^−15 in double precision, or −0.8742 × 10^−7 in single precision.

While floating-point addition and multiplication are both commutative ($a + b = b + a$ and $a \times b = b \times a$), they are not necessarily associative. That is, $(a + b) + c$ is not necessarily equal to $a + (b + c)$. Using 7-digit significand decimal arithmetic:

a = 1234.567, b = 45.67834, c = 0.0004

(a + b) + c:

1234.567 (a)

+ 45.67834 (b)

____________

1280.24534 rounds to 1280.245

1280.245 (a + b)

+ 0.0004 (c)

____________

1280.2454 rounds to 1280.245 ← (a + b) + c

a + (b + c):

45.67834 (b)

+ 0.0004 (c)

____________

45.67874

1234.567 (a)

+ 45.67874 (b + c)

____________

1280.24574 rounds to 1280.246 ← a + (b + c)
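The same effect appears in binary double precision. In this sketch (function names hypothetical), (0.1 + 0.2) + 0.3 and 0.1 + (0.2 + 0.3) round to different doubles: 0.6000000000000001 and 0.6 respectively. This assumes IEEE 754 binary64 with round-to-nearest.

```c
/* Two groupings of the same three-term sum. */
double sum_left(double a, double b, double c)  { return (a + b) + c; }
double sum_right(double a, double b, double c) { return a + (b + c); }
```

Compilers normally preserve the written grouping. Options such as GCC's -ffast-math permit reassociation and can change such results.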

They are also not necessarily distributive. That is, $(a + b) \times c$ may not be the same as $a \times c + b \times c$:

1234.567 × 3.333333 = 4115.223

1.234567 × 3.333333 = 4.115223

4115.223 + 4.115223 = 4119.338

but

1234.567 + 1.234567 = 1235.802

1235.802 × 3.333333 = 4119.340
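A binary double-precision counterpart follows, again as a sketch with hypothetical function names. With a = 0.1, b = 0.2 and c = 10, (a + b) × c gives 3.0000000000000004, while a × c + b × c gives exactly 3. This assumes IEEE 754 binary64 with round-to-nearest.

```c
/* (a + b) * c: the rounding error of a + b is scaled by c. */
double factored(double a, double b, double c) { return (a + b) * c; }

/* a * c + b * c: each product here happens to round to an integer. */
double expanded(double a, double b, double c) { return a * c + b * c; }
```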

In addition to loss of significance, inability to represent numbers such as π and 0.1 exactly, and other slight inaccuracies, the following phenomena may occur:

Incidents

* On 25 February 1991, a loss of significance in a MIM-104 Patriot missile battery prevented it from intercepting an incoming Scud missile in Dhahran, Saudi Arabia, contributing to the death of 28 soldiers from the U.S. Army's 14th Quartermaster Detachment. The error was actually introduced by a fixed-point computation, but the underlying issue would have been the same with floating-point arithmetic.


Machine precision and backward error analysis

''Machine precision'' is a quantity that characterizes the accuracy of a floating-point system, and is used in backward error analysis of floating-point algorithms. It is also known as unit roundoff or ''machine epsilon''. Usually denoted $\mathrm{E}_\text{mach}$, its value depends on the particular rounding being used. With rounding to zero,

$$\mathrm{E}_\text{mach} = B^{1-P},$$

whereas with rounding to nearest,

$$\mathrm{E}_\text{mach} = \tfrac{1}{2} B^{1-P},$$

where ''B'' is the base of the system and ''P'' is the precision of the significand (in base ''B''). This is important since it bounds the ''relative error'' in representing any non-zero real number ''x'' within the normalized range of a floating-point system:

$$\left| \frac{\operatorname{fl}(x) - x}{x} \right| \le \mathrm{E}_\text{mach}.$$

Backward error analysis, the theory of which was developed and popularized by James H. Wilkinson, can be used to establish that an algorithm implementing a numerical function is numerically stable. The basic approach is to show that although the calculated result, due to roundoff errors, will not be exactly correct, it is the exact solution to a nearby problem with slightly perturbed input data. If the perturbation required is small, on the order of the uncertainty in the input data, then the results are in some sense as accurate as the data "deserves". The algorithm is then defined as ''backward stable''. Stability is a measure of the sensitivity to rounding errors of a given numerical procedure; by contrast, the condition number of a function for a given problem indicates the inherent sensitivity of the function to small perturbations in its input and is independent of the implementation used to solve the problem.

As a trivial example, consider a simple expression giving the inner product of (length two) vectors $x$ and $y$. Writing fl() for correctly rounded floating-point arithmetic,

$$\begin{aligned}
\operatorname{fl}(x \cdot y) &= \operatorname{fl}\big(\operatorname{fl}(x_1 y_1) + \operatorname{fl}(x_2 y_2)\big) \\
&= \operatorname{fl}\big(x_1 y_1 (1 + \delta_1) + x_2 y_2 (1 + \delta_2)\big) \\
&= \big(x_1 y_1 (1 + \delta_1) + x_2 y_2 (1 + \delta_2)\big)(1 + \delta_3) \\
&= x_1 y_1 (1 + \delta_1)(1 + \delta_3) + x_2 y_2 (1 + \delta_2)(1 + \delta_3),
\end{aligned}$$

and so

$$\operatorname{fl}(x \cdot y) = \hat{x} \cdot \hat{y},$$

where

$$\hat{x}_1 = x_1 (1 + \delta_1), \quad \hat{x}_2 = x_2 (1 + \delta_2), \quad \hat{y}_1 = y_1 (1 + \delta_3), \quad \hat{y}_2 = y_2 (1 + \delta_3),$$

with $|\delta_n| \le \mathrm{E}_\text{mach}$ by definition. The computed result is thus the exact inner product of two slightly perturbed (on the order of $\mathrm{E}_\text{mach}$) input data, and so is backward stable. For more realistic examples in numerical linear algebra, see Higham 2002 and other references below.

Minimizing the effect of accuracy problems

Although individual arithmetic operations of IEEE 754 are guaranteed accurate to within half a ULP, more complicated formulae can suffer from larger errors for a variety of reasons. The loss of accuracy can be substantial if a problem or its data are ill-conditioned, meaning that the correct result is hypersensitive to tiny perturbations in its data. However, even functions that are well-conditioned can suffer from large loss of accuracy if an algorithm numerically unstable for that data is used: apparently equivalent formulations of expressions in a programming language can differ markedly in their numerical stability. One approach to remove the risk of such loss of accuracy is the design and analysis of numerically stable algorithms, which is an aim of the branch of mathematics known as numerical analysis. Another approach that can protect against the risk of numerical instabilities is the computation of intermediate (scratch) values in an algorithm at a higher precision than the final result requires, which can remove, or reduce by orders of magnitude, such risk: IEEE 754 quadruple precision and extended precision are designed for this purpose when computing at double precision.
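The benefit of a wider scratch value can be seen in a simple summation, here with double acting as the wider format for a float result. The sketch below (function names hypothetical) adds 1 repeatedly. The float accumulator stalls at 2^24 = 16777216, while a double accumulator keeps the exact count until a single final rounding. It assumes FLT_EVAL_METHOD == 0, as on typical x86-64 and AArch64 builds. On targets that evaluate float in extended precision, the float loop may not stall.

```c
/* Float accumulator: once sum reaches 2^24, sum + 1 is a tie between
   two representable floats and rounds (to even) back to 2^24. */
float count_with_float(long n)
{
    float sum = 0.0f;
    for (long i = 0; i < n; i++)
        sum += 1.0f;
    return sum;
}

/* Double accumulator: the intermediate sum is carried at higher
   precision and rounded to float only once, at the end. */
float count_with_double(long n)
{
    double sum = 0.0;
    for (long i = 0; i < n; i++)
        sum += 1.0;
    return (float)sum;
}
```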

For example, the following algorithm is a direct implementation to compute the function $A(x) = \frac{x - 1}{\exp(x - 1) - 1}$, which is well-conditioned at 1.0; however, it can be shown to be numerically unstable and lose up to half the significant digits carried by the arithmetic when computed near 1.0.

compiler
