
Variance-stabilizing Transformation

In applied statistics, a variance-stabilizing transformation is a data transformation that is specifically chosen either to simplify considerations in graphical exploratory data analysis or to allow the application of simple regression-based or analysis of variance techniques. Overview: The aim behind the choice of a variance-stabilizing transformation is to find a simple function ''ƒ'' to apply to values ''x'' in a data set to create new values ''y'' = ''ƒ''(''x'') such that the variability of the values ''y'' is not related to their mean value. For example, suppose that the values ''x'' are realizations from different Poisson distributions, i.e. the distributions each have different mean values ''μ''. Then, because for the Poisson distribution the variance is identical to the mean, the variance varies with the mean. However, if the simple variance-stabilizing transformation y = \sqrt{x} is applied, the sampling variance associated with each observation will be nearly constant: see Anscombe transform for ...
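The Poisson example above can be checked with a small simulation. The following is a minimal sketch, not a reference implementation: the helper names `sample_poisson` and `variance_before_after`, the sample size and the seed are all illustrative assumptions, and the sampler uses Knuth's multiplication method because the Python standard library has no built-in Poisson generator.

```python
import math
import random
import statistics


def sample_poisson(rng, lam):
    """Draw one Poisson(lam) variate with Knuth's multiplication method."""
    threshold = math.exp(-lam)
    k, p = 0, 1.0
    while True:
        p *= rng.random()
        if p <= threshold:
            return k
        k += 1


def variance_before_after(lam, n=20000, seed=0):
    """Return (variance of x, variance of sqrt(x)) for n simulated counts."""
    rng = random.Random(seed)  # fixed seed: an assumption made for repeatability
    xs = [sample_poisson(rng, lam) for _ in range(n)]
    raw = statistics.pvariance(xs)
    stabilized = statistics.pvariance([math.sqrt(x) for x in xs])
    return raw, stabilized


if __name__ == "__main__":
    for lam in (5, 20, 50):
        raw, stabilized = variance_before_after(lam)
        print(f"mean={lam:>3}  var(x)={raw:7.3f}  var(sqrt(x))={stabilized:.3f}")
```

Under these assumptions the raw variance should track the mean, while the variance of the square-rooted counts should stay close to 1/4 across the different means.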

Statistics

Statistics (from German: ''Statistik'', "description of a state, a country") is the discipline that concerns the collection, organization, analysis, interpretation, and presentation of data. In applying statistics to a scientific, industrial, or social problem, it is conventional to begin with a statistical population or a statistical model to be studied. Populations can be diverse groups of people or objects such as "all people living in a country" or "every atom composing a crystal". Statistics deals with every aspect of data, including the planning of data collection in terms of the design of surveys and experiments. When census data (comprising every member of the target population) cannot be collected, statisticians collect data by developing specific experiment designs and survey samples. Representative sampling assures that inferences and conclusions can reasonably extend from the sample ...

Data Transformation (statistics)

In statistics, data transformation is the application of a deterministic mathematical function to each point in a data set; that is, each data point ''z_i'' is replaced with the transformed value ''y_i'' = ''f''(''z_i''), where ''f'' is a function. Transforms are usually applied so that the data appear to more closely meet the assumptions of a statistical inference procedure that is to be applied, or to improve the interpretability or appearance of graphs. Nearly always, the function that is used to transform the data is invertible, and generally is continuous. The transformation is usually applied to a collection of comparable measurements. For example, if we are working with data on people's incomes in some currency unit, it would be common to transform each person's income value by the logarithm function. Motivation: Guidance for how data should be transformed, or whether a transformation should be applied at all, should come from the particular statistical analysis to be pe ...

Analysis Of Variance

Analysis of variance (ANOVA) is a family of statistical methods used to compare the means of two or more groups by analyzing variance. Specifically, ANOVA compares the amount of variation ''between'' the group means to the amount of variation ''within'' each group. If the between-group variation is substantially larger than the within-group variation, it suggests that the group means are likely different. This comparison is done using an F-test. The underlying principle of ANOVA is based on the law of total variance, which states that the total variance in a dataset can be broken down into components attributable to different sources. In the case of ANOVA, these sources are the variation between groups and the variation within groups. ANOVA was developed by the statistician Ronald Fisher. In its simplest form, it provides a statistical test of whether two or more population means are equal, and therefore generalizes the ''t''- ...
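The between-group versus within-group comparison can be made concrete with the usual one-way F statistic. This is a minimal sketch under stated assumptions: the function name `one_way_anova_f` and the toy data are illustrative, and only the statistic is computed, not its p-value.

```python
def one_way_anova_f(groups):
    """Return (F, SS_between, SS_within) for a list of numeric groups."""
    all_values = [x for g in groups for x in g]
    n_total = len(all_values)
    k = len(groups)
    grand_mean = sum(all_values) / n_total

    # Variation of the group means around the grand mean.
    ss_between = sum(
        len(g) * (sum(g) / len(g) - grand_mean) ** 2 for g in groups
    )
    # Variation of the observations around their own group mean.
    ss_within = sum(
        (x - sum(g) / len(g)) ** 2 for g in groups for x in g
    )

    ms_between = ss_between / (k - 1)        # k - 1 degrees of freedom
    ms_within = ss_within / (n_total - k)    # N - k degrees of freedom
    return ms_between / ms_within, ss_between, ss_within


if __name__ == "__main__":
    toy = [[1, 2, 3], [2, 3, 4], [5, 6, 7]]  # hypothetical measurements
    f_stat, ssb, ssw = one_way_anova_f(toy)
    print(f"F = {f_stat:.3f}, SS_between = {ssb:.3f}, SS_within = {ssw:.3f}")
```

The two sums of squares add up to the total sum of squares around the grand mean, which is the decomposition that the description above refers to.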

Poisson Distribution

In probability theory and statistics, the Poisson distribution is a discrete probability distribution that expresses the probability of a given number of events occurring in a fixed interval of time if these events occur with a known constant mean rate and independently of the time since the last event. It can also be used for the number of events in other types of intervals than time, and in dimension greater than 1 (e.g., number of events in a given area or volume). The Poisson distribution is named after French mathematician Siméon Denis Poisson. It plays an important role for discrete-stable distributions. Under a Poisson distribution with the expectation of ''λ'' events in a given interval, the probability of ''k'' events in the same interval is \frac{\lambda^k e^{-\lambda}}{k!}. For instance, consider a call center which receives an average of ''λ'' = 3 calls per minute at all times of day. If the calls are independent, receiving one does not change the probability of when the next on ...
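The probability formula can be evaluated directly for the call-center example with ''λ'' = 3. The following is a small sketch; the function name `poisson_pmf` is an illustrative choice.

```python
import math


def poisson_pmf(k, lam):
    """Probability of exactly k events when the expected count is lam."""
    return lam ** k * math.exp(-lam) / math.factorial(k)


if __name__ == "__main__":
    lam = 3  # average calls per minute, as in the example above
    for k in range(7):
        print(f"P(K = {k}) = {poisson_pmf(k, lam):.4f}")
```

The direct formula is fine for small counts; for large ''k'' or ''λ'' a log-space evaluation would be the safer choice.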

Anscombe Transform

In statistics, the Anscombe transform, named after Francis Anscombe, is a variance-stabilizing transformation that transforms a random variable with a Poisson distribution into one with an approximately standard Gaussian distribution. The Anscombe transform is widely used in photon-limited imaging (astronomy, X-ray) where images naturally follow the Poisson law. The Anscombe transform is usually used to pre-process the data in order to make the standard deviation approximately constant. Then denoising algorithms designed for the framework of additive white Gaussian noise are used; the final estimate is then obtained by applying an inverse Anscombe transformation to the denoised data. Definition: For the Poisson distribution the mean m and variance v are not independent: m = v. The Anscombe transform A: x \mapsto 2\sqrt{x + \tfrac{3}{8}} aims at transforming the data so that the variance is approximately 1 for large enough mean; for mean zero, the variance is still zero. It transform ...
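The claim that the variance becomes approximately 1 can be checked without simulation by summing over the Poisson probabilities. This is a sketch under assumptions: it uses the standard form of the transform, 2·sqrt(x + 3/8), the helper names are illustrative, and the truncation point of the sum is a heuristic chosen for this example.

```python
import math


def anscombe(x):
    """Anscombe transform in its standard form, 2 * sqrt(x + 3/8)."""
    return 2.0 * math.sqrt(x + 0.375)


def poisson_pmf(k, lam):
    """Poisson probability computed in log space to avoid overflow."""
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


def transformed_variance(lam):
    """Variance of anscombe(X) for X ~ Poisson(lam), by direct summation."""
    # Heuristic cut-off: the mass beyond lam + 20 standard deviations is negligible.
    k_max = int(lam + 20 * math.sqrt(lam) + 20)
    first = sum(poisson_pmf(k, lam) * anscombe(k) for k in range(k_max + 1))
    second = sum(poisson_pmf(k, lam) * anscombe(k) ** 2 for k in range(k_max + 1))
    return second - first ** 2


if __name__ == "__main__":
    for lam in (10, 20, 50, 200):
        print(f"mean={lam:>4}  var(A(X))={transformed_variance(lam):.4f}")
```

For small means the approximation degrades, which is consistent with the "large enough mean" qualifier above.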

Binomial Distribution

In probability theory and statistics, the binomial distribution with parameters ''n'' and ''p'' is the discrete probability distribution of the number of successes in a sequence of ''n'' independent experiments, each asking a yes–no question, and each with its own Boolean-valued outcome: ''success'' (with probability ''p'') or ''failure'' (with probability ''q'' = 1 − ''p''). A single success/failure experiment is also called a Bernoulli trial or Bernoulli experiment, and a sequence of outcomes is called a Bernoulli process; for a single trial, i.e., ''n'' = 1, the binomial distribution is a Bernoulli distribution. The binomial distribution is the basis for the binomial test of statistical significance. The binomial distribution is frequently used to model the number of successes in a sample of size ''n'' drawn with replacement from a population of size ''N''. If the sampling is carried out without replacement, the draws ar ...

Power Transform

In statistics, a power transform is a family of functions applied to create a monotonic transformation of data using power functions. It is a data transformation technique used to stabilize variance, make the data more normal distribution-like, improve the validity of measures of association (such as the Pearson correlation between variables), and for other data stabilization procedures. Power transforms are used in multiple fields, including multi-resolution and wavelet analysis, statistical data analysis, medical research, modeling of physical processes, geochemical data analysis, epidemiology and many other clinical, environmental and social research areas. Definition: The power tr ...

Relative Error

The approximation error in a given data value represents the significant discrepancy that arises when an exact, true value is compared against some approximation derived for it. This inherent error in approximation can be quantified and expressed in two principal ways: as an absolute error, which denotes the direct numerical magnitude of this discrepancy irrespective of the true value's scale, or as a relative error, which provides a scaled measure of the error by considering the absolute error in proportion to the exact data value, thus offering a context-dependent assessment of the error's significance. An approximation error can manifest due to a multitude of diverse reasons. Prominent among these are limitations related to computing machine precision, where digital systems cannot represent all real numbers with perfect accuracy, leading to unavoidable truncation or rounding. Another common source is inherent measurement error, stemming from the practical limitations of inst ...

Inverse Hyperbolic Sine

In mathematics, the inverse hyperbolic functions are inverses of the hyperbolic functions, analogous to the inverse circular functions. There are six in common use: inverse hyperbolic sine, inverse hyperbolic cosine, inverse hyperbolic tangent, inverse hyperbolic cosecant, inverse hyperbolic secant, and inverse hyperbolic cotangent. They are commonly denoted by the symbols for the hyperbolic functions, prefixed with ''arc-'' or ''ar-'' or with a superscript (for example arcsinh, arsinh, or \sinh^{-1}). For a given value of a hyperbolic function, the inverse hyperbolic function provides the corresponding hyperbolic angle measure, for example \operatorname{arsinh}(\sinh a) = a and \sinh(\operatorname{arsinh} x) = x. Hyperbolic angle measure is the length of an arc of a unit hyperbola x^2 - y^2 = 1 as measured in the Lorentzian plane (''not'' the length of a hyperbolic arc in the Euclidean plane), and twice the area of the corresponding hyperbolic sector. This is analogous to the way circular angle measure i ...

Fisher Transformation

In statistics, the Fisher transformation (or Fisher ''z''-transformation) of a Pearson correlation coefficient is its inverse hyperbolic tangent (artanh). When the sample correlation coefficient ''r'' is near 1 or -1, its distribution is highly skewed, which makes it difficult to estimate confidence intervals and apply tests of significance for the population correlation coefficient ''ρ''. The Fisher transformation solves this problem by yielding a variable whose distribution is approximately normal, with a variance that is stable over different values of ''r''. Definition: Given a set of ''N'' bivariate sample pairs (''X_i'', ''Y_i''), ''i'' = 1, ..., ''N'', the sample correlation coefficient ''r'' is given by r = \frac{\operatorname{cov}(X,Y)}{\sigma_X \sigma_Y} = \frac{\sum_{i=1}^N (X_i - \bar{X})(Y_i - \bar{Y})}{\sqrt{\sum_{i=1}^N (X_i - \bar{X})^2} \sqrt{\sum_{i=1}^N (Y_i - \bar{Y})^2}}. Here \operatorname{cov}(X,Y) stands for the covariance between the variables X and Y and \sigma stands for the standard deviation of the respective variable. Fisher's z-transformation of ''r'' is defined a ...
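A common use of the transformation is an approximate confidence interval for the population correlation. The sketch below relies on an assumption not stated in the excerpt above: the widely used large-sample standard error 1/sqrt(''N'' − 3) for the transformed value under bivariate normality. The function name `fisher_confidence_interval` and the example inputs are illustrative.

```python
import math
from statistics import NormalDist


def fisher_confidence_interval(r, n, level=0.95):
    """Approximate confidence interval for a correlation via artanh.

    Assumes the usual large-sample standard error 1 / sqrt(n - 3),
    which requires n > 3 and -1 < r < 1.
    """
    z = math.atanh(r)                       # Fisher z-transformation
    se = 1.0 / math.sqrt(n - 3)             # assumed standard error of z
    z_crit = NormalDist().inv_cdf(0.5 + level / 2.0)
    lower = math.tanh(z - z_crit * se)      # back-transform to the r scale
    upper = math.tanh(z + z_crit * se)
    return lower, upper


if __name__ == "__main__":
    lower, upper = fisher_confidence_interval(r=0.9, n=30)  # hypothetical sample
    print(f"95% interval for rho: ({lower:.3f}, {upper:.3f})")
```

Because the interval is symmetric on the ''z'' scale and then mapped back through tanh, it is asymmetric around ''r'' when ''r'' is near ±1, which reflects the skewness described above.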

Delta Method

In statistics, the delta method is a method of deriving the asymptotic distribution of a random variable. It is applicable when the random variable being considered can be defined as a differentiable function of a random variable which is asymptotically Gaussian. History: The delta method was derived from propagation of error, and the idea behind it was known in the early 20th century. Its statistical application can be traced as far back as 1928 by T. L. Kelley. A formal description of the method was presented by J. L. Doob in 1935. Robert Dorfman also described a version of it in 1938. Univariate delta method: While the delta method generalizes easily to a multivariate setting, careful motivation of the technique is more easily demonstrated in univariate terms. Roughly, if there is a sequence of random variables ''X_n'' satisfying \sqrt{n}[X_n - \theta] \xrightarrow{D} \mathcal{N}(0, \sigma^2), where ''θ'' and ''σ''² are finite valued constants and \xrightar ...
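As a worked illustration, the standard univariate conclusion of the delta method can be written out and applied to the square-root transformation of a Poisson count. This is a first-order sketch, assuming ''g'' is differentiable at ''θ'' with ''g''′(''θ'') ≠ 0; the symbols ''g'', ''X'' and ''μ'' are introduced here for illustration.

```latex
% Univariate delta method (first-order statement):
\sqrt{n}\,[X_n - \theta] \xrightarrow{D} \mathcal{N}(0, \sigma^2)
\quad\Longrightarrow\quad
\sqrt{n}\,[g(X_n) - g(\theta)] \xrightarrow{D} \mathcal{N}\bigl(0, \sigma^2\,[g'(\theta)]^2\bigr)

% Heuristic application to a Poisson count X with mean and variance \mu,
% using g(x) = \sqrt{x}, so that g'(\mu) = 1 / (2\sqrt{\mu}):
\operatorname{Var}\bigl(\sqrt{X}\bigr)
\approx [g'(\mu)]^2 \operatorname{Var}(X)
= \frac{1}{4\mu}\cdot\mu
= \frac{1}{4}
```

The approximate variance no longer depends on ''μ'', which is why the square root acts as a variance-stabilizing transformation for Poisson data when the mean is not too small.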

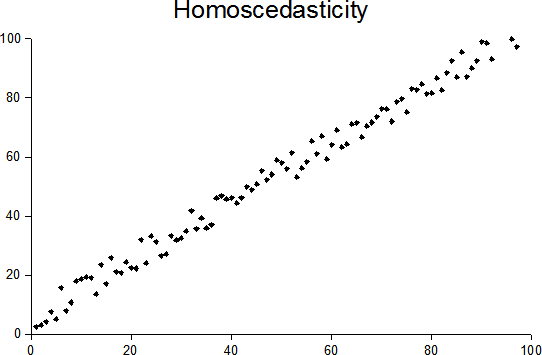

Heteroscedasticity

In statistics, a sequence of random variables is homoscedastic if all its random variables have the same finite variance; this is also known as homogeneity of variance. The complementary notion is called heteroscedasticity, also known as heterogeneity of variance. The spellings ''homoskedasticity'' and ''heteroskedasticity'' are also frequently used. “Skedasticity” comes from the Ancient Greek word “skedánnymi”, meaning “to scatter”. Assuming a variable is homoscedastic when in reality it is heteroscedastic results in unbiased but inefficient point estimates and in biased estimates of standard errors, and may result in overestimating the goodness of fit as measured by the Pearson coefficient. The existence of heteroscedasticity is a major concern in regression analysis and the analysis of variance, as it invalidates statistical tests of significance that assume that the modelling errors all have the same variance. While the ordinary least squares ...