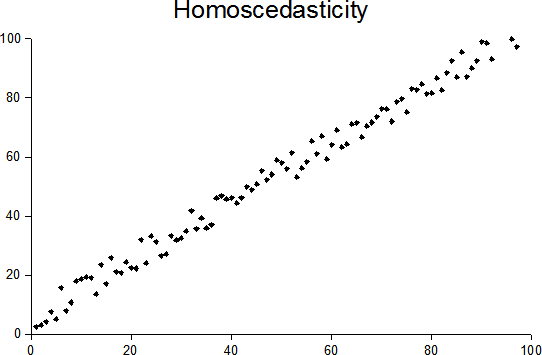

Heteroscedasticity

In statistics, a sequence of random variables is homoscedastic if all its random variables have the same finite variance; this is also known as homogeneity of variance. The complementary notion is called heteroscedasticity, also known as heterogeneity of variance. The spellings “homoskedasticity” and “heteroskedasticity” are also frequently used. “Skedasticity” comes from the Ancient Greek word “skedánnymi”, meaning “to scatter”. Assuming a variable is homoscedastic when in reality it is heteroscedastic results in unbiased but inefficient point estimates and in biased estimates of standard errors, and may result in overestimating the goodness of fit as measured by the Pearson coefficient. The existence of heteroscedasticity is a major concern in regression analysis and the analysis of variance, as it invalidates statistical tests of significance that assume that the modelling errors all have the same variance. While the ordinary least squares ...
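
The claim that point estimates stay unbiased while standard errors become biased can be checked with a small simulation. The sketch below is only an illustration under assumed settings that do not come from the summary above: a true line y = 2 + 3x, error standard deviation 0.5·x, 20 design points, and 4000 replications. The helper names are hypothetical.

```python
import math
import random
import statistics


def ols_slope_and_naive_se(x, y):
    """Fit y = a + b*x by OLS; return b and its textbook standard error,
    which assumes all errors share one variance."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    rss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    naive_se = math.sqrt(rss / (n - 2) / sxx)
    return slope, naive_se


def simulate(reps=4000, seed=0):
    """Repeatedly draw heteroscedastic data (assumed error sd = 0.5 * x)."""
    rng = random.Random(seed)
    x = [float(i) for i in range(1, 21)]
    slopes = []
    naive_ses = []
    for _ in range(reps):
        y = [2.0 + 3.0 * xi + rng.gauss(0.0, 0.5 * xi) for xi in x]
        slope, se = ols_slope_and_naive_se(x, y)
        slopes.append(slope)
        naive_ses.append(se)
    return statistics.mean(slopes), statistics.stdev(slopes), statistics.mean(naive_ses)


mean_slope, empirical_sd, mean_naive_se = simulate()
print("average slope estimate:", round(mean_slope, 3))
print("actual spread of slope estimates:", round(empirical_sd, 3))
print("average reported standard error:", round(mean_naive_se, 3))
```

In this setup the average slope should sit close to the true value 3, while the reported standard error should understate the actual spread of the estimates, because the error variance is largest at the high-leverage end of the design.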

Generalized Least Squares

In statistics, generalized least squares (GLS) is a method used to estimate the unknown parameters in a linear regression model. It is used when there is a non-zero amount of correlation between the residuals in the regression model. GLS is employed to improve statistical efficiency and reduce the risk of drawing erroneous inferences, as compared to conventional least squares and weighted least squares methods. It was first described by Alexander Aitken in 1935. It requires knowledge of the covariance matrix for the residuals. If this is unknown, estimating the covariance matrix gives the method of feasible generalized least squares (FGLS). However, FGLS provides fewer guarantees of improvement. Method: In standard linear regression models, one observes data on n statistical units with k − 1 predictor values and one response value each. The response values are placed in a vector ...
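
As a rough sketch of the estimator described above, the code below computes the standard GLS solution β = (XᵀΩ⁻¹X)⁻¹XᵀΩ⁻¹y with plain Python lists. The covariance matrix Ω is assumed known here and is given an AR(1)-style form with ρ = 0.6 purely for illustration; the data values and helper names are hypothetical.

```python
def transpose(a):
    return [list(row) for row in zip(*a)]


def matmul(a, b):
    return [[sum(p * q for p, q in zip(row, col)) for col in zip(*b)] for row in a]


def solve(a, b):
    """Solve a @ z = b (b given as a list of rows) by Gauss-Jordan
    elimination with partial pivoting."""
    n = len(a)
    m = [row_a[:] + row_b[:] for row_a, row_b in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [[m[i][j] / m[i][i] for j in range(n, len(m[i]))] for i in range(n)]


def gls(x, y, omega):
    """Return the GLS coefficients for design matrix x, responses y,
    and known error covariance matrix omega."""
    omega_inv_x = solve(omega, x)                   # Omega^{-1} X
    omega_inv_y = solve(omega, [[v] for v in y])    # Omega^{-1} y
    xt = transpose(x)
    beta = solve(matmul(xt, omega_inv_x), matmul(xt, omega_inv_y))
    return [row[0] for row in beta]


n = 6
rho = 0.6  # assumed correlation between neighbouring errors
design = [[1.0, float(t)] for t in range(n)]  # intercept column plus one predictor
omega = [[rho ** abs(i - j) for j in range(n)] for i in range(n)]
y_obs = [1.2, 2.7, 5.1, 7.3, 8.8, 11.1]  # made-up responses

print("GLS coefficients:", gls(design, y_obs, omega))
```

Solving linear systems instead of forming Ω⁻¹ explicitly is a design choice for numerical stability, not a requirement of the method. When Ω is the identity matrix the same function reduces to ordinary least squares.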

Autoregressive Conditional Heteroscedasticity

In econometrics, the autoregressive conditional heteroskedasticity (ARCH) model is a statistical model for time series data that describes the variance of the current error term or innovation as a function of the actual sizes of the previous time periods' error terms; often the variance is related to the squares of the previous innovations. The ARCH model is appropriate when the error variance in a time series follows an autoregressive (AR) model; if an autoregressive moving average (ARMA) model is assumed for the error variance, the model is a generalized autoregressive conditional heteroskedasticity (GARCH) model. ARCH models are commonly employed in modeling financial time series that exhibit time-varying volatility and volatility clustering, i.e. periods of swings interspersed with periods of relative calm (that is, when the time series exhibits heteroskedasticity). ARCH-type models are sometimes considered to be in the family of stochastic volatility models, although t ...
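
To make the idea of a variance that depends on past squared innovations concrete, here is a minimal ARCH(1) simulation. It assumes the common first-order form σ²ₜ = α₀ + α₁·ε²ₜ₋₁ with εₜ = σₜ·zₜ and standard normal zₜ; the parameter values α₀ = 0.6 and α₁ = 0.4, the sample size, and the function names are illustrative choices, not taken from the summary above.

```python
import random


def arch1_variance(alpha0, alpha1, prev_innovation):
    """Conditional variance of the current innovation given the previous one."""
    return alpha0 + alpha1 * prev_innovation ** 2


def simulate_arch1(n, alpha0=0.6, alpha1=0.4, seed=0):
    """Draw n innovations from an ARCH(1) process started at zero."""
    rng = random.Random(seed)
    innovations = []
    prev = 0.0
    for _ in range(n):
        sigma2 = arch1_variance(alpha0, alpha1, prev)
        prev = (sigma2 ** 0.5) * rng.gauss(0.0, 1.0)
        innovations.append(prev)
    return innovations


def lag1_autocorrelation(values):
    """Sample autocorrelation at lag 1."""
    n = len(values)
    mean = sum(values) / n
    num = sum((a - mean) * (b - mean) for a, b in zip(values, values[1:]))
    den = sum((v - mean) ** 2 for v in values)
    return num / den


eps = simulate_arch1(20000)
squares = [e * e for e in eps]
sample_variance = sum(squares) / len(squares)

print("sample variance:", round(sample_variance, 3))
print("lag-1 autocorrelation of innovations:", round(lag1_autocorrelation(eps), 3))
print("lag-1 autocorrelation of squared innovations:", round(lag1_autocorrelation(squares), 3))
```

With these parameters the long-run variance is α₀ / (1 − α₁) = 1. The innovations themselves should look roughly uncorrelated, while their squares should be clearly positively correlated, which is the volatility clustering the model is meant to capture.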

Ordinary Least Squares

In statistics, ordinary least squares (OLS) is a type of linear least squares method for choosing the unknown parameters in a linear regression model (with fixed level-one effects of a linear function of a set of explanatory variables) by the principle of least squares: minimizing the sum of the squares of the differences between the observed dependent variable (values of the variable being observed) in the input dataset and the output of the (linear) function of the independent variable. Some sources consider OLS to be linear regression. Geometrically, this is seen as the sum of the squared distances, parallel to the axis of the dependent variable, between each data point in the set and the corresponding point on the regression ...
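
The least-squares principle described above can be sketched by solving the normal equations XᵀXβ = Xᵀy and confirming that moving away from the solution increases the sum of squared differences. The two-predictor data set and the helper names below are made up for illustration.

```python
def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def ols(rows, y):
    """Return coefficients minimizing the residual sum of squares."""
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    return solve_linear_system(xtx, xty)


def rss(rows, y, beta):
    """Sum of squared differences between observed and fitted values."""
    return sum((yi - sum(b * v for b, v in zip(beta, r))) ** 2 for r, yi in zip(rows, y))


# Each row is (1, a, b): an intercept term and two explanatory variables.
rows = [[1.0, 0.0, 1.0], [1.0, 1.0, 0.0], [1.0, 2.0, 2.0],
        [1.0, 3.0, 1.0], [1.0, 4.0, 3.0], [1.0, 5.0, 0.0]]
y_obs = [-1.8, 3.1, -0.9, 3.8, 0.2, 11.1]  # made-up responses

beta_hat = ols(rows, y_obs)
print("coefficients:", beta_hat)
print("RSS at the OLS solution:", rss(rows, y_obs, beta_hat))
print("RSS after nudging every coefficient by 0.1:",
      rss(rows, y_obs, [b + 0.1 for b in beta_hat]))
```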

Errors And Residuals In Statistics

In statistics and optimization, errors and residuals are two closely related and easily confused measures of the deviation of an observed value of an element of a statistical sample from its "true value" (not necessarily observable). The error of an observation is the deviation of the observed value from the true value of a quantity of interest (for example, a population mean). The residual is the difference between the observed value and the estimated value of the quantity of interest (for example, a sample mean). The distinction is most important in regression analysis, where the concepts are sometimes called the regression errors and regression residuals and where they lead to the concept of studentized residuals. In econometrics, "errors" are also called disturbances. Introduction: Suppose there is a series of observations from a univariate distribution and we want to estimate the mean of that distribution (the so-called location model). In this case, the errors a ...
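
A tiny numeric example may help separate the two notions. It assumes, for illustration only, that the population mean is known to be 5.0 (in practice it usually is not observable); the sample values are made up.

```python
population_mean = 5.0  # assumed known here; normally unobservable
sample = [4.8, 5.6, 4.9, 5.3, 5.9]

sample_mean = sum(sample) / len(sample)

# Errors measure deviation from the true value of the quantity of interest.
errors = [x - population_mean for x in sample]

# Residuals measure deviation from the estimated value (the sample mean).
residuals = [x - sample_mean for x in sample]

print("sample mean:", sample_mean)
print("errors:", [round(e, 2) for e in errors])
print("residuals:", [round(r, 2) for r in residuals])
print("sum of errors:", round(sum(errors), 2))
print("sum of residuals:", round(sum(residuals), 2))
```

The residuals sum to zero by construction, because the sample mean is computed from the same data, whereas the errors need not sum to zero.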

Skedastic Function

In probability theory and statistics, a conditional variance is the variance of a random variable given the value(s) of one or more other variables. Particularly in econometrics, the conditional variance is also known as the scedastic function or skedastic function. Conditional variances are important parts of autoregressive conditional heteroskedasticity (ARCH) models. Definition: The conditional variance of a random variable Y given another random variable X is $\operatorname{Var}(Y\mid X) = \operatorname{E}\Big(\big(Y - \operatorname{E}(Y\mid X)\big)^{2} \;\Big|\; X\Big)$. The conditional variance tells us how much variance is left if we use $\operatorname{E}(Y\mid X)$ to "predict" Y. Here, as usual, $\operatorname{E}(Y\mid X)$ stands for the conditional expectation of Y given X, which, we may recall, is a random variable itself (a function of X, determined up to probability one). As a result, $\operatorname{Var}(Y\mid X)$ itself is a random variable (and is a function of X). Expla ...
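
The definition can be worked through on a small discrete example. The joint distribution below is invented for illustration: X is 0 or 1 with equal probability, and Y is ±1 when X = 0 and ±3 when X = 1. The function name is hypothetical.

```python
from collections import defaultdict
from fractions import Fraction


def conditional_variance(joint):
    """Map each value x to Var(Y | X = x) for a joint pmf {(x, y): probability}."""
    p_x = defaultdict(Fraction)
    for (x, _), p in joint.items():
        p_x[x] += p

    cond_mean = defaultdict(Fraction)  # E(Y | X = x)
    for (x, y), p in joint.items():
        cond_mean[x] += y * p / p_x[x]

    cond_var = defaultdict(Fraction)   # E((Y - E(Y | X))^2 | X = x)
    for (x, y), p in joint.items():
        cond_var[x] += (y - cond_mean[x]) ** 2 * p / p_x[x]
    return dict(cond_var)


quarter = Fraction(1, 4)
joint_pmf = {(0, -1): quarter, (0, 1): quarter, (1, -3): quarter, (1, 3): quarter}

skedastic = conditional_variance(joint_pmf)
print(skedastic)
```

The result is a function of X: the variance of Y is 1 when X = 0 and 9 when X = 1, so this toy distribution is heteroscedastic.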

Gauss–Markov Theorem

In statistics, the Gauss–Markov theorem (or simply Gauss theorem for some authors) states that the ordinary least squares (OLS) estimator has the lowest sampling variance within the class of linear unbiased estimators, if the errors in the linear regression model are uncorrelated, have equal variances and expectation value of zero. The errors do not need to be normal, nor do they need to be independent and identically distributed (only uncorrelated with mean zero and homoscedastic with finite variance). The requirement that the estimator be unbiased cannot be dropped, since biased estimators exist with lower variance. See, for example, the James–Stein estimator (which also drops linearity), ridge regression, or simply any degenerate estimator. The theorem was named after Carl Friedrich Gauss and Andrey Markov, although Gauss' work significantly predates Markov's. But while Gauss derived the result under the assumption of independence and normality, Markov r ...
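
One way to see the theorem at work is to compare OLS with another linear unbiased estimator of the slope. The sketch below uses a deliberately crude competitor, the line through the first and last points, chosen only for illustration. Any linear slope estimator can be written as Σ wᵢyᵢ; it is unbiased when Σ wᵢ = 0 and Σ wᵢxᵢ = 1, and under uncorrelated errors with common variance σ² its variance is σ²·Σ wᵢ². The design points and names are assumptions.

```python
def ols_slope_weights(x):
    """Weights w_i such that the OLS slope equals sum(w_i * y_i)."""
    x_bar = sum(x) / len(x)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    return [(xi - x_bar) / sxx for xi in x]


def endpoint_slope_weights(x):
    """Weights for the slope of the line through the first and last points."""
    span = x[-1] - x[0]
    weights = [0.0] * len(x)
    weights[0] = -1.0 / span
    weights[-1] = 1.0 / span
    return weights


def is_unbiased_for_slope(weights, x, tol=1e-12):
    """Check sum(w) = 0 and sum(w * x) = 1."""
    total = sum(weights)
    weighted = sum(w * xi for w, xi in zip(weights, x))
    return abs(total) < tol and abs(weighted - 1.0) < tol


def variance_factor(weights):
    """Estimator variance divided by the common error variance sigma^2."""
    return sum(w * w for w in weights)


x_design = [0.0, 1.0, 2.0, 3.0, 4.0]
w_ols = ols_slope_weights(x_design)
w_end = endpoint_slope_weights(x_design)

print("OLS unbiased:", is_unbiased_for_slope(w_ols, x_design),
      "variance factor:", variance_factor(w_ols))
print("endpoint unbiased:", is_unbiased_for_slope(w_end, x_design),
      "variance factor:", variance_factor(w_end))
```

Both estimators pass the unbiasedness check, but the OLS weights give the smaller variance factor, as the theorem predicts for this class of estimators.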

Scedastic Function

In probability theory and statistics, a conditional variance is the variance of a random variable given the value(s) of one or more other variables. Particularly in econometrics, the conditional variance is also known as the scedastic function or skedastic function. Conditional variances are important parts of autoregressive conditional heteroskedasticity (ARCH) models. Definition: The conditional variance of a random variable Y given another random variable X is $\operatorname{Var}(Y\mid X) = \operatorname{E}\Big(\big(Y - \operatorname{E}(Y\mid X)\big)^{2} \;\Big|\; X\Big)$. The conditional variance tells us how much variance is left if we use $\operatorname{E}(Y\mid X)$ to "predict" Y. Here, as usual, $\operatorname{E}(Y\mid X)$ stands for the conditional expectation of Y given X, which, we may recall, is a random variable itself (a function of X, determined up to probability one). As a result, $\operatorname{Var}(Y\mid X)$ itself is a random variable (and is a function of X). Exp ...

Simple Linear Regression

In statistics, simple linear regression (SLR) is a linear regression model with a single explanatory variable. That is, it concerns two-dimensional sample points with one independent variable and one dependent variable (conventionally, the x and y coordinates in a Cartesian coordinate system) and finds a linear function (a non-vertical straight line) that, as accurately as possible, predicts the dependent variable values as a function of the independent variable. The adjective "simple" refers to the fact that the outcome variable is related to a single predictor. It is common to make the additional stipulation that the ordinary least squares (OLS) method should be used: the accuracy of each predicted value is measured by its squared residual (vertical distance between the point of the data set and the fitted line), and the goal is to make the sum of these squared deviations as small as possible. In this case, the slope of the fitted line is equal to the corre ...
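
For a single predictor the OLS fit has a closed form, sketched below on made-up data. The code also checks a standard identity that is assumed here rather than quoted from the truncated summary: the fitted slope equals the sample correlation multiplied by the ratio of the standard deviations of y and x.

```python
import math


def simple_linear_regression(x, y):
    """Return (intercept, slope) of the OLS line through the points (x, y)."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


def correlation_and_sds(x, y):
    """Return the sample correlation and the sample standard deviations of x and y."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    r = sxy / math.sqrt(sxx * syy)
    return r, math.sqrt(sxx / (n - 1)), math.sqrt(syy / (n - 1))


xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [2.1, 3.9, 6.2, 7.8, 10.1]  # made-up observations

intercept, slope = simple_linear_regression(xs, ys)
r, sd_x, sd_y = correlation_and_sds(xs, ys)

print("intercept:", round(intercept, 4), "slope:", round(slope, 4))
print("r * sd_y / sd_x:", round(r * sd_y / sd_x, 4))
```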

Statistics

Statistics (from German: Statistik, "description of a state, a country") is the discipline that concerns the collection, organization, analysis, interpretation, and presentation of data. In applying statistics to a scientific, industrial, or social problem, it is conventional to begin with a statistical population or a statistical model to be studied. Populations can be diverse groups of people or objects such as "all people living in a country" or "every atom composing a crystal". Statistics deals with every aspect of data, including the planning of data collection in terms of the design of surveys and experiments. When census data (comprising every member of the target population) cannot be collected, statisticians collect data by developing specific experiment designs and survey samples. Representative sampling assures that inferences and conclusions can reasonably extend from the sample ...