
Encoder-decoder Model

The transformer is a deep learning architecture based on the multi-head attention mechanism. Text is first converted to numerical representations called tokens, and each token is converted into a vector via lookup from a word embedding table. At each layer, each token is then contextualized with the other (unmasked) tokens in the context window via a parallel multi-head attention mechanism, which amplifies the signal for key tokens and diminishes that of less important ones. Because transformers have no recurrent units, they require less training time than earlier recurrent neural network (RNN) architectures such as long short-term memory (LSTM). Later variations have been widely adopted for training large language models (LLMs) on large language datasets. The modern version of the transformer was proposed in the 2017 paper "Attention Is All You Need" by researchers at Google. Transformers were first developed as an improvement over ...
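The lookup-then-contextualize step described above can be sketched in a few lines. This is a minimal illustration using only the Python standard library, not a reference implementation: the toy vocabulary, the embedding values, and the function names `softmax` and `attention` are all assumptions made up for the example. It shows a single attention head and omits the learned query, key, and value projections that a real transformer applies; multi-head attention would run several such heads in parallel and combine their outputs.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values, mask=None):
    """Scaled dot-product attention over lists of vectors.

    mask[i][j] is True when position i may attend to position j.
    """
    d = len(keys[0])
    out = []
    for i, q in enumerate(queries):
        scores = []
        for j, k in enumerate(keys):
            if mask is not None and not mask[i][j]:
                scores.append(float("-inf"))  # masked tokens get zero weight
            else:
                scores.append(sum(a * b for a, b in zip(q, k)) / math.sqrt(d))
        weights = softmax(scores)
        # Each output vector is a weighted average of the value vectors.
        out.append([sum(w * v[c] for w, v in zip(weights, values))
                    for c in range(len(values[0]))])
    return out

# Hypothetical toy vocabulary and embedding table (values are made up).
vocab = {"the": 0, "cat": 1, "sat": 2}
embedding_table = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

token_ids = [vocab[w] for w in "the cat sat".split()]
x = [embedding_table[t] for t in token_ids]  # embedding lookup

# Causal mask: each token may attend only to itself and earlier tokens.
causal = [[j <= i for j in range(len(x))] for i in range(len(x))]
contextualized = attention(x, x, x, mask=causal)
```

With the causal mask, the first token can attend only to itself, so its output equals its own embedding; later tokens become weighted blends of the embeddings they are allowed to see.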

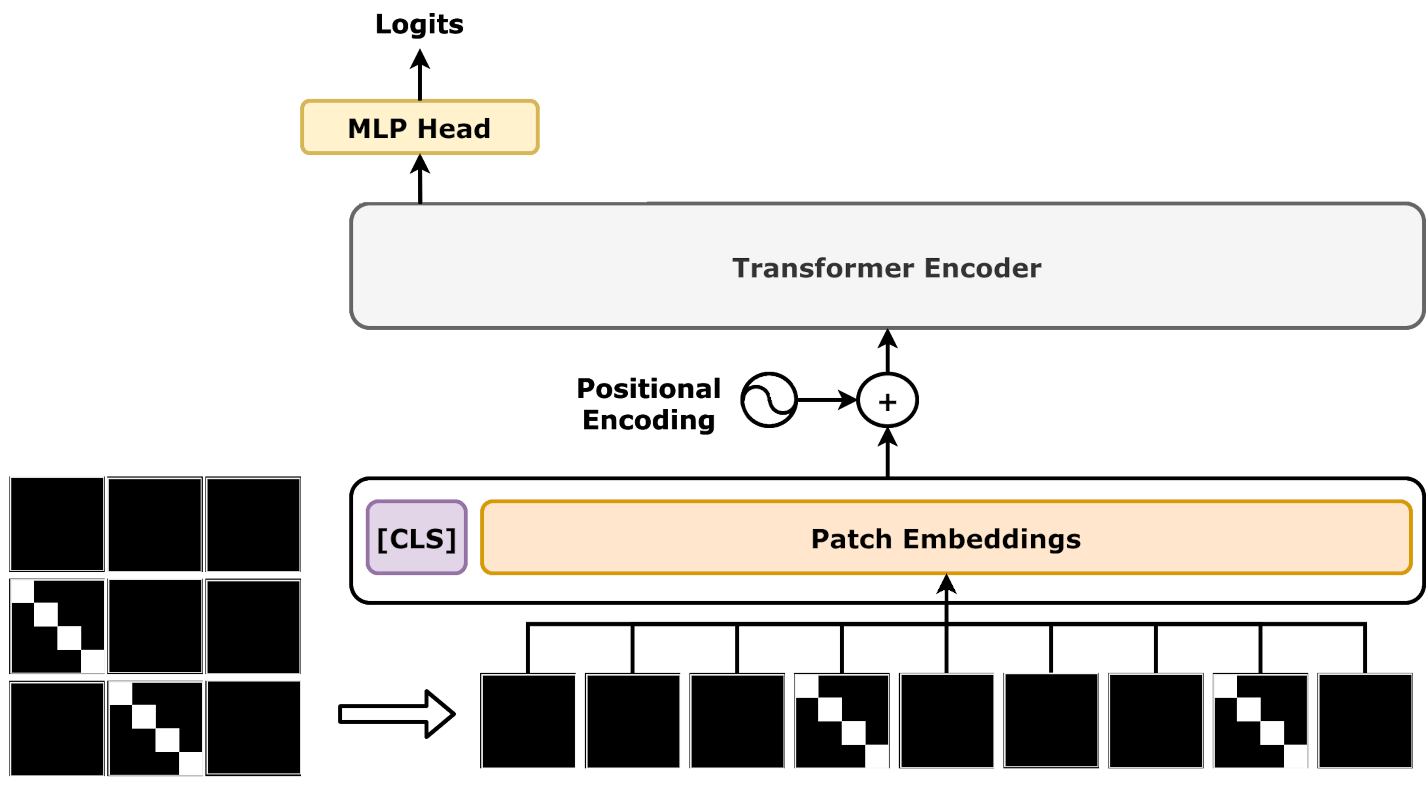

Vision Transformer

A vision transformer (ViT) is a transformer designed for computer vision. A ViT decomposes an input image into a series of patches (rather than text into tokens), serializes each patch into a vector, and maps it to a smaller dimension with a single matrix multiplication. These vector embeddings are then processed by a transformer encoder as if they were token embeddings. ViTs were designed as alternatives to convolutional neural networks (CNNs) in computer vision applications. They differ from CNNs in inductive biases, training stability, and data efficiency. Compared to CNNs, ViTs are less data efficient but have higher capacity. Some of the largest modern computer vision models are ViTs, such as one with 22B parameters. Since the original publication, many variants have been proposed, including hybrid architectures that combine features of ViTs and CNNs. ViTs have found application in image recognition, ...
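The patch pipeline described above (split into patches, serialize each patch, project with one matrix multiplication) can be sketched as follows. This is an illustrative sketch in plain Python under stated assumptions: the function names `patchify` and `project`, the 4 x 4 single-channel image, and the projection weights are all hypothetical. In a real ViT the projection matrix is learned, and position information is added before the encoder; both are left out here.

```python
def patchify(image, patch):
    """Split an H x W x C image (nested lists) into flattened patch vectors."""
    height, width = len(image), len(image[0])
    assert height % patch == 0 and width % patch == 0
    patches = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            vec = []
            for r in range(top, top + patch):
                for c in range(left, left + patch):
                    vec.extend(image[r][c])  # append all channels of the pixel
            patches.append(vec)
    return patches

def project(vectors, weight):
    """Map each vector to a smaller dimension with one matrix multiplication.

    weight has shape (input_dim, output_dim), given as a list of rows.
    """
    return [[sum(x * w for x, w in zip(vec, col)) for col in zip(*weight)]
            for vec in vectors]

# Hypothetical 4 x 4 image with one channel; pixel value is row * 4 + column.
image = [[[float(r * 4 + c)] for c in range(4)] for r in range(4)]

patches = patchify(image, patch=2)  # 4 patches, each of dimension 2 * 2 * 1

# Made-up projection from 4 to 2 dimensions:
# column 0 averages the patch, column 1 keeps its top-left pixel.
weight = [[0.25, 1.0],
          [0.25, 0.0],
          [0.25, 0.0],
          [0.25, 0.0]]
embeddings = project(patches, weight)
```

Each row of `embeddings` plays the role of one token embedding, so the resulting sequence could be passed to an encoder in the same way as a sequence of text tokens.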