|

Data Compression

In information theory, data compression, source coding, or bit-rate reduction is the process of encoding information using fewer bits than the original representation. Any particular compression is either lossy or lossless. Lossless compression reduces bits by identifying and eliminating statistical redundancy. No information is lost in lossless compression. Lossy compression reduces bits by removing unnecessary or less important information. Typically, a device that performs data compression is referred to as an encoder, and one that performs the reversal of the process (decompression) as a decoder. The process of reducing the size of a data file is often referred to as data compression. In the context of data transmission, it is called source coding: encoding is done at the source of the data before it is stored or transmitted. Source coding should not be confused with channel coding, for error detection and correction or line coding, the means for mapping data onto a sig ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Information Theory

Information theory is the mathematical study of the quantification (science), quantification, Data storage, storage, and telecommunications, communication of information. The field was established and formalized by Claude Shannon in the 1940s, though early contributions were made in the 1920s through the works of Harry Nyquist and Ralph Hartley. It is at the intersection of electronic engineering, mathematics, statistics, computer science, Neuroscience, neurobiology, physics, and electrical engineering. A key measure in information theory is information entropy, entropy. Entropy quantifies the amount of uncertainty involved in the value of a random variable or the outcome of a random process. For example, identifying the outcome of a Fair coin, fair coin flip (which has two equally likely outcomes) provides less information (lower entropy, less uncertainty) than identifying the outcome from a roll of a dice, die (which has six equally likely outcomes). Some other important measu ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Arithmetic Coding

Arithmetic coding (AC) is a form of entropy encoding used in lossless data compression. Normally, a String (computer science), string of characters is represented using a fixed number of bits per character, as in the American Standard Code for Information Interchange, ASCII code. When a string is converted to arithmetic encoding, frequently used characters will be stored with fewer bits and not-so-frequently occurring characters will be stored with more bits, resulting in fewer bits used in total. Arithmetic coding differs from other forms of entropy encoding, such as Huffman coding, in that rather than separating the input into component symbols and replacing each with a code, arithmetic coding encodes the entire message into a single number, an arbitrary-precision arithmetic, arbitrary-precision fraction ''q'', where . It represents the current information as a range, defined by two numbers. A recent family of entropy coders called asymmetric numeral systems allows for faster imp ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Probabilistic Model

A statistical model is a mathematical model that embodies a set of statistical assumptions concerning the generation of sample data (and similar data from a larger population). A statistical model represents, often in considerably idealized form, the data-generating process. When referring specifically to probabilities, the corresponding term is probabilistic model. All statistical hypothesis tests and all statistical estimators are derived via statistical models. More generally, statistical models are part of the foundation of statistical inference. A statistical model is usually specified as a mathematical relationship between one or more random variables and other non-random variables. As such, a statistical model is "a formal representation of a theory" ( Herman Adèr quoting Kenneth Bollen). Introduction Informally, a statistical model can be thought of as a statistical assumption (or set of statistical assumptions) with a certain property: that the assumption allows u ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Burrows–Wheeler Transform

The Burrows–Wheeler transform (BWT) rearranges a character string into runs of similar characters, in a manner that can be reversed to recover the original string. Since compression techniques such as move-to-front transform and run-length encoding are more effective when such runs are present, the BWT can be used as a preparatory step to improve the efficiency of a compression algorithm, and is used this way in software such as bzip2. The algorithm can be implemented efficiently using a suffix array thus reaching linear time complexity. It was invented by David Wheeler in 1983, and later published by him and Michael Burrows in 1994. Their paper included a compression algorithm, called the Block-sorting Lossless Data Compression Algorithm or BSLDCA, that compresses data by using the BWT followed by move-to-front coding and Huffman coding or arithmetic coding. Description The transform is done by constructing a matrix (known as the Burrows-Wheeler Matrix) whose rows are the ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Prediction By Partial Matching

Prediction by partial matching (PPM) is an adaptive statistical data compression technique based on context modeling and prediction. PPM models use a set of previous symbols in the uncompressed symbol stream to predict the next symbol in the stream. PPM algorithms can also be used to cluster data into predicted groupings in cluster analysis. Theory Predictions are usually reduced to symbol rankings. Each symbol (a letter, bit or any other amount of data) is ranked before it is compressed, and the ranking system determines the corresponding codeword (and therefore the compression rate). In many compression algorithms, the ranking is equivalent to probability mass function estimation. Given the previous letters (or given a context), each symbol is assigned with a probability. For instance, in arithmetic coding the symbols are ranked by their probabilities to appear after previous symbols, and the whole sequence is compressed into a single fraction that is computed according t ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Randomized Algorithm

A randomized algorithm is an algorithm that employs a degree of randomness as part of its logic or procedure. The algorithm typically uses uniformly random bits as an auxiliary input to guide its behavior, in the hope of achieving good performance in the "average case" over all possible choices of random determined by the random bits; thus either the running time, or the output (or both) are random variables. There is a distinction between algorithms that use the random input so that they always terminate with the correct answer, but where the expected running time is finite (Las Vegas algorithms, for example Quicksort), and algorithms which have a chance of producing an incorrect result ( Monte Carlo algorithms, for example the Monte Carlo algorithm for the MFAS problem) or fail to produce a result either by signaling a failure or failing to terminate. In some cases, probabilistic algorithms are the only practical means of solving a problem. In common practice, randomized alg ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Re-Pair

Re-Pair (short for recursive pairing) is a grammar-based compression algorithm that, given an input text, builds a straight-line program, i.e. a context-free grammar In formal language theory, a context-free grammar (CFG) is a formal grammar whose production rules can be applied to a nonterminal symbol regardless of its context. In particular, in a context-free grammar, each production rule is of the fo ... generating a single string: the input text. In order to perform the compression in linear time, it consumes the amount of memory that is approximately five times the size of its input. The grammar is built by recursively replacing the most frequent pair of characters occurring in the text. Once there is no pair of characters occurring twice, the resulting string is used as the axiom of the grammar. Therefore, the output grammar is such that all rules but the axiom have two symbols on the right-hand side. How it works Re-Pair was first introduced by N. J. Larsson ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Sequitur Algorithm

Sequitur (or Nevill-Manning–Witten algorithm) is a recursive algorithm developed by Craig Nevill-Manning and Ian H. Witten in 1997 that infers a hierarchical structure (context-free grammar) from a sequence of discrete symbols. The algorithm operates in linear space and time. It can be used in data compression software applications. Constraints The sequitur algorithm constructs a grammar by substituting repeating phrases in the given sequence with new rules and therefore produces a concise representation of the sequence. For example, if the sequence is : S→abcab, the algorithm will produce : S→AcA, A→ab. While scanning the input sequence, the algorithm follows two constraints for generating its grammar efficiently: digram uniqueness and rule utility. Digram uniqueness Whenever a new symbol is scanned from the sequence, it is appended with the last scanned symbol to form a new digram. If this digram has been formed earlier then a new rule is made to replace both occurr ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Data Collection

Data collection or data gathering is the process of gathering and measuring information on targeted variables in an established system, which then enables one to answer relevant questions and evaluate outcomes. Data collection is a research component in all study fields, including physical science, physical and social sciences, humanities, and business. While methods vary by discipline, the emphasis on ensuring accurate and honest collection remains the same. The goal for all data collection is to capture evidence that allows data analysis to lead to the formulation of credible answers to the questions that have been posed. Regardless of the field of or preference for defining data (Quantitative method, quantitative or Qualitative method, qualitative), accurate data collection is essential to maintain research integrity. The selection of appropriate data collection instruments (existing, modified, or newly developed) and delineated instructions for their correct use reduce the l ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Grammar-based Codes

Grammar-based codes or grammar-based compression are Data compression, compression algorithms based on the idea of constructing a context-free grammar (CFG) for the string to be compressed. Examples include universal lossless data compression algorithms. To compress a data sequence x = x_1 \cdots x_n, a grammar-based code transforms x into a context-free grammar G. The problem of finding a smallest grammar for an input sequence (smallest grammar problem) is known to be NP-hard, so many grammar-transform algorithms are proposed from theoretical and practical viewpoints. Generally, the produced grammar G is further compressed by statistical encoders like arithmetic coding. Examples and characteristics The class of grammar-based codes is very broad. It includes block codes, the multilevel pattern matching (MPM) algorithm, variations of the incremental parsing LZ77 and LZ78, Lempel-Ziv code, and many other new universal lossless compression algorithms. Grammar-based codes are univers ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

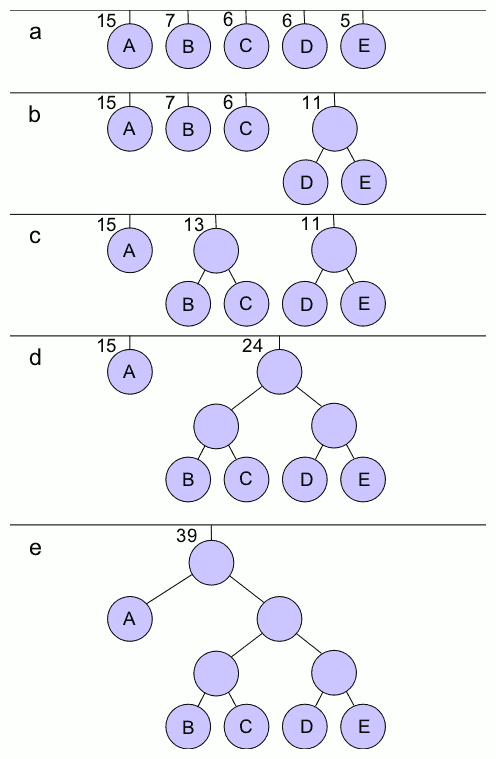

Huffman Coding

In computer science and information theory, a Huffman code is a particular type of optimal prefix code that is commonly used for lossless data compression. The process of finding or using such a code is Huffman coding, an algorithm developed by David A. Huffman while he was a Doctor of Science, Sc.D. student at Massachusetts Institute of Technology, MIT, and published in the 1952 paper "A Method for the Construction of Minimum-Redundancy Codes". The output from Huffman's algorithm can be viewed as a variable-length code table for encoding a source symbol (such as a character in a file). The algorithm derives this table from the estimated probability or frequency of occurrence (''weight'') for each possible value of the source symbol. As in other entropy encoding methods, more common symbols are generally represented using fewer bits than less common symbols. Huffman's method can be efficiently implemented, finding a code in time linear time, linear to the number of input weigh ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |