Skewness

In probability theory and statistics, skewness is a measure of the asymmetry of the probability distribution of a real-valued random variable about its mean. The skewness value can be positive, zero, negative, or undefined.

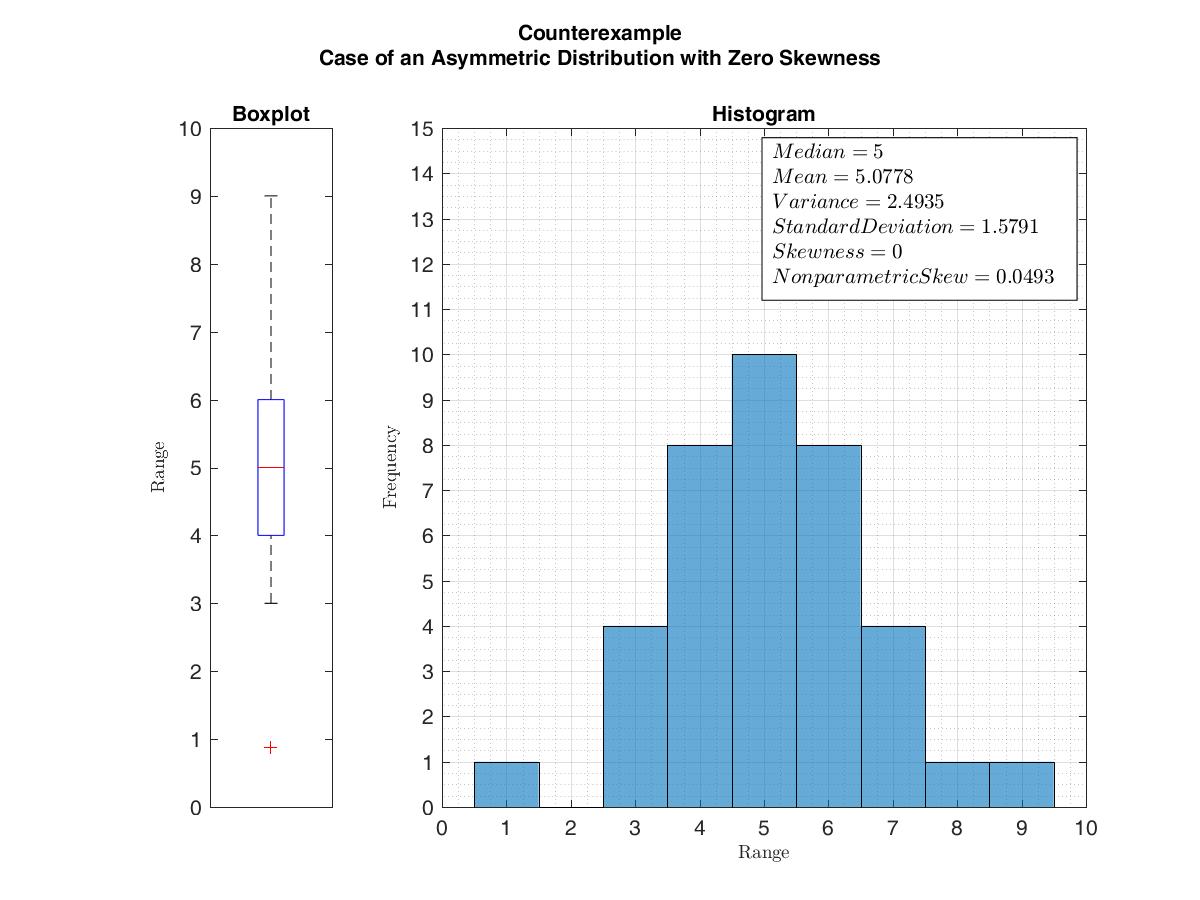

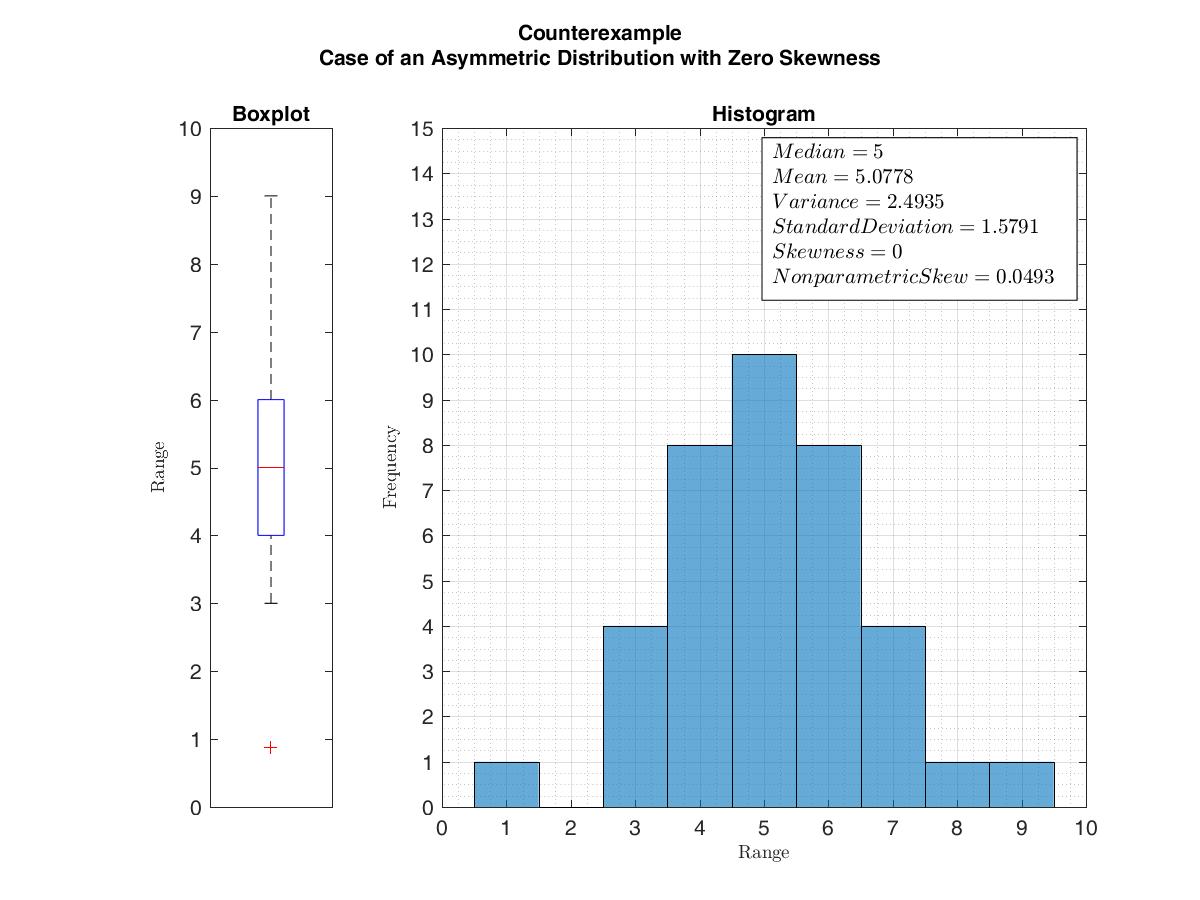

For a unimodal distribution (a distribution with a single peak), negative skew commonly indicates that the tail is on the left side of the distribution, and positive skew indicates that the tail is on the right. In cases where one tail is long but the other tail is fat, skewness does not obey a simple rule. For example, a zero value in skewness means that the tails on both sides of the mean balance out overall; this is the case for a symmetric distribution but can also be true for an asymmetric distribution where one tail is long and thin, and the other is short but fat. Judging the symmetry of a distribution from its skewness alone is therefore risky; the shape of the distribution must also be taken into account.
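The following Python sketch illustrates this last point. It uses a hypothetical helper, `moment_skewness`, that computes the moment coefficient m3 / m2^(3/2) of a data set, and applies it to a hand-built asymmetric sample: one value at −3 (a long, thin left tail), five values at −1 and four values at 2 (a short, fat right side). Both the deviations from the mean and their cubes sum to zero, so the skewness is exactly zero even though the data are clearly not symmetric.

```python
import statistics

def moment_skewness(xs):
    """Moment coefficient of skewness m3 / m2**1.5 of a data set."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n  # second central moment
    m3 = sum((x - mean) ** 3 for x in xs) / n  # third central moment
    return m3 / m2 ** 1.5

# One value at -3 forms a long, thin left tail; four values at 2 form a
# short, fat right side.
data = [-3] + [-1] * 5 + [2] * 4
print(moment_skewness(data))  # 0.0
print(statistics.mean(data), statistics.median(data))
```

The same sample has mean 0 but median −1, so zero skewness does not force the mean and median to coincide either.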

Introduction

Consider the two distributions in the figure. Within each graph, the values on the right side of the distribution taper differently from the values on the left side. These tapering sides are called tails, and they provide a visual means to determine which of the two kinds of skewness a distribution has:

* Negative skew: The left tail is longer; the mass of the distribution is concentrated on the right of the figure. The distribution is said to be left-skewed, left-tailed, or skewed to the left, despite the fact that the curve itself appears to be skewed or leaning to the right; left instead refers to the left tail being drawn out and, often, the mean being skewed to the left of a typical center of the data. A left-skewed distribution usually appears as a right-leaning curve.
* Positive skew: The right tail is longer; the mass of the distribution is concentrated on the left of the figure. The distribution is said to be right-skewed, right-tailed, or skewed to the right, despite the fact that the curve itself appears to be skewed or leaning to the left; right instead refers to the right tail being drawn out and, often, the mean being skewed to the right of a typical center of the data. A right-skewed distribution usually appears as a left-leaning curve.

Skewness in a data series may sometimes be observed not only graphically but by simple inspection of the values. For instance, consider the numeric sequence (49, 50, 51), whose values are evenly distributed around a central value of 50. We can transform this sequence into a negatively skewed distribution by adding a value far below the mean, which is probably a negative outlier, e.g. (40, 49, 50, 51). The mean of the sequence then becomes 47.5, and the median is 49.5. Based on the formula of nonparametric skew, defined as $(\mu - \nu)/\sigma$ (the mean minus the median, divided by the standard deviation), the skew is negative. Similarly, we can make the sequence positively skewed by adding a value far above the mean, which is probably a positive outlier, e.g. (49, 50, 51, 60), where the mean is 52.5, and the median is 50.5.
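The arithmetic in this example can be checked with Python's standard `statistics` module. The helper name `nonparametric_skew` is hypothetical, and dividing by the population standard deviation (`pstdev`) rather than the sample one is an arbitrary choice that does not change the sign.

```python
import statistics

def nonparametric_skew(xs):
    """(mean - median) / standard deviation, using the population SD."""
    return (statistics.mean(xs) - statistics.median(xs)) / statistics.pstdev(xs)

for seq in [(49, 50, 51), (40, 49, 50, 51), (49, 50, 51, 60)]:
    # Prints the sequence, its mean, its median and its nonparametric skew.
    print(seq, statistics.mean(seq), statistics.median(seq), nonparametric_skew(seq))
```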

As mentioned earlier, a unimodal distribution with zero skewness is not necessarily symmetric. However, a symmetric unimodal or multimodal distribution always has zero skewness whenever its skewness is defined.

Relationship of mean and median

The skewness is not directly related to the relationship between the mean and median: a distribution with negative skew can have its mean greater than or less than the median, and likewise for positive skew.

In the older notion of nonparametric skew, defined as $(\mu - \nu)/\sigma$, where $\mu$ is the mean, $\nu$ is the median, and $\sigma$ is the standard deviation, the skewness is defined in terms of this relationship: positive/right nonparametric skew means the mean is greater than (to the right of) the median, while negative/left nonparametric skew means the mean is less than (to the left of) the median. However, the modern definition of skewness and the traditional nonparametric definition do not always have the same sign: while they agree for some families of distributions, they differ in some of the cases, and conflating them is misleading.
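A small hypothetical data set shows the two definitions disagreeing in sign. In the sample below (one value at −4, five at −1, four at 2), the mean −0.1 lies to the right of the median −1, so the nonparametric skew is positive, while the moment skewness is negative because the single far-left value dominates the cubed deviations. Both helper names are hypothetical.

```python
import statistics

def moment_skewness(xs):
    """Moment coefficient of skewness m3 / m2**1.5 of a data set."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n
    m3 = sum((x - mean) ** 3 for x in xs) / n
    return m3 / m2 ** 1.5

def nonparametric_skew(xs):
    """(mean - median) / standard deviation, using the population SD."""
    return (statistics.mean(xs) - statistics.median(xs)) / statistics.pstdev(xs)

data = [-4] + [-1] * 5 + [2] * 4
print(statistics.mean(data), statistics.median(data))
print(nonparametric_skew(data))  # positive: the mean lies right of the median
print(moment_skewness(data))     # negative: the value at -4 dominates the cubes
```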

If the distribution is symmetric (and its mean exists), then the mean is equal to the median, and the distribution has zero skewness. If the distribution is both symmetric and unimodal, then the mean = median = mode. The mean and median also coincide for symmetric distributions that are not unimodal, such as a fair coin toss or a finite evenly spaced series such as 1, 2, 3, 4, 5. Note, however, that the converse is not true in general, i.e. zero skewness (defined below) does not imply that the mean is equal to the median.

A 2005 journal article points out: "Many textbooks teach a rule of thumb stating that the mean is right of the median under right skew, and left of the median under left skew. This rule fails with surprising frequency. It can fail in multimodal distributions, or in distributions where one tail is long but the other is heavy. Most commonly, though, the rule fails in discrete distributions where the areas to the left and right of the median are not equal. Such distributions not only contradict the textbook relationship between mean, median, and skew, they also contradict the textbook interpretation of the median."

For example, in the distribution of adult residents across US households, the skew is to the right. However, because the majority of cases are less than or equal to the mode, which is also the median, the mean sits in the heavier left tail. As a result, the rule of thumb that the mean is right of the median under right skew fails.
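The same failure can be reproduced with a small discrete data set. The counts below are invented for illustration and are not the US household data: a variable taking the values 1 to 4 with frequencies 6, 10, 3 and 1. Its mode and median are both 2 and its mean is 1.95, yet its moment skewness is positive. The helper name is hypothetical.

```python
import statistics

def moment_skewness(xs):
    """Moment coefficient of skewness m3 / m2**1.5 of a data set."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n
    m3 = sum((x - mean) ** 3 for x in xs) / n
    return m3 / m2 ** 1.5

# Hypothetical frequencies, not survey data.
data = [1] * 6 + [2] * 10 + [3] * 3 + [4] * 1
print(statistics.mode(data), statistics.median(data), statistics.mean(data))
print(moment_skewness(data))  # right skew, although the mean is left of the median
```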


Definition

Fisher's moment coefficient of skewness

The skewness of a random variable $X$ is the third standardized moment $\tilde{\mu}_3$ (also written $\gamma_1$), defined as:

$$\tilde{\mu}_3 = E\left[\left(\frac{X - \mu}{\sigma}\right)^3\right] = \frac{\mu_3}{\sigma^3} = \frac{E\left[(X - \mu)^3\right]}{\left(E\left[(X - \mu)^2\right]\right)^{3/2}} = \frac{\kappa_3}{\kappa_2^{3/2}}$$

where $\mu$ is the mean, $\sigma$ is the standard deviation, $E$ is the expectation operator, $\mu_3$ is the third central moment, and $\kappa_t$ are the $t$-th cumulants. It is sometimes referred to as Pearson's moment coefficient of skewness (Pearson's moment coefficient of skewness, FXSolver.com), or simply the moment coefficient of skewness (2008–2016 by Stan Brown, Oak Road Systems), but should not be confused with Pearson's other skewness statistics (see below). The last equality expresses skewness in terms of the ratio of the third cumulant $\kappa_3$ to the 1.5th power of the second cumulant $\kappa_2$. This is analogous to the definition of kurtosis as the fourth cumulant normalized by the square of the second cumulant. The skewness is also sometimes denoted $\operatorname{Skew}[X]$.

If $\sigma$ is finite and $\mu$ is finite too, then skewness can be expressed in terms of the non-central moment $E[X^3]$ by expanding the previous formula:

$$\tilde{\mu}_3 = \frac{E[X^3] - 3\mu E[X^2] + 3\mu^2 E[X] - \mu^3}{\sigma^3} = \frac{E[X^3] - 3\mu\left(E[X^2] - \mu^2\right) - \mu^3}{\sigma^3} = \frac{E[X^3] - 3\mu\sigma^2 - \mu^3}{\sigma^3}$$
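The raw-moment expansion can be checked with exact rational arithmetic. The sketch below (hypothetical function name) uses Python's `fractions.Fraction` on a Bernoulli distribution with p = 1/4, computing the third central moment both directly and as E[X³] − 3μσ² − μ³. The resulting skewness can be compared with the known Bernoulli value (1 − 2p)/√(p(1 − p)), which is 2/√3 for p = 1/4.

```python
from fractions import Fraction

def third_moment_two_ways(pmf):
    """Return (direct m3, expanded m3, skewness) for a finite pmf {value: probability}."""
    mu = sum(p * x for x, p in pmf.items())
    var = sum(p * (x - mu) ** 2 for x, p in pmf.items())
    m3_direct = sum(p * (x - mu) ** 3 for x, p in pmf.items())  # E[(X - mu)^3]
    ex3 = sum(p * x ** 3 for x, p in pmf.items())               # raw moment E[X^3]
    m3_expanded = ex3 - 3 * mu * var - mu ** 3                  # E[X^3] - 3 mu sigma^2 - mu^3
    skewness = float(m3_direct) / float(var) ** 1.5
    return m3_direct, m3_expanded, skewness

bernoulli = {0: Fraction(3, 4), 1: Fraction(1, 4)}  # Bernoulli with p = 1/4
print(third_moment_two_ways(bernoulli))
```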

Examples

Skewness can be infinite, when the third cumulant is infinite, or undefined, when the third cumulant is undefined. Examples of distributions with finite skewness include the following.

* A normal distribution and any other symmetric distribution with finite third moment has a skewness of 0
* A half-normal distribution has a skewness just below 1
* An exponential distribution has a skewness of 2
* A lognormal distribution can have a skewness of any positive value, depending on its parameters
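The values for the exponential and half-normal distributions can be checked by integrating the density numerically. The sketch below applies a plain trapezoidal rule on a truncated range (an assumption: the tails beyond the cut-off are negligible for these two densities) and computes the third standardized moment straight from the definition. All helper names are hypothetical.

```python
import math

def trapezoid(f, lo, hi, n=20_000):
    """Composite trapezoidal rule for the integral of f over [lo, hi]."""
    h = (hi - lo) / n
    total = 0.5 * (f(lo) + f(hi))
    for i in range(1, n):
        total += f(lo + i * h)
    return total * h

def skewness_from_pdf(pdf, lo, hi):
    """Third standardized moment of a density truncated to [lo, hi]."""
    mean = trapezoid(lambda x: x * pdf(x), lo, hi)
    var = trapezoid(lambda x: (x - mean) ** 2 * pdf(x), lo, hi)
    m3 = trapezoid(lambda x: (x - mean) ** 3 * pdf(x), lo, hi)
    return m3 / var ** 1.5

def exponential_pdf(x):
    return math.exp(-x)  # rate 1, defined for x >= 0

def half_normal_pdf(x):
    return math.sqrt(2 / math.pi) * math.exp(-x * x / 2)  # scale 1, x >= 0

print(skewness_from_pdf(exponential_pdf, 0.0, 50.0))  # close to 2
print(skewness_from_pdf(half_normal_pdf, 0.0, 12.0))  # close to 0.995
```

The half-normal result can be compared with the closed form √2(4 − π)/(π − 2)^(3/2) ≈ 0.995. For the lognormal distribution, the closed form (e^(σ²) + 2)√(e^(σ²) − 1), where σ is the standard deviation of the logarithm, grows without bound as σ increases.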

Sample skewness

For a sample of $n$ values, two natural estimators of the population skewness are

$$b_1 = \frac{m_3}{s^3} = \frac{\frac{1}{n}\sum_{i=1}^{n}(x_i - \overline{x})^3}{\left[\frac{1}{n-1}\sum_{i=1}^{n}(x_i - \overline{x})^2\right]^{3/2}}$$

and

$$g_1 = \frac{m_3}{m_2^{3/2}} = \frac{\frac{1}{n}\sum_{i=1}^{n}(x_i - \overline{x})^3}{\left[\frac{1}{n}\sum_{i=1}^{n}(x_i - \overline{x})^2\right]^{3/2}}$$

where $\overline{x}$ is the sample mean, $s$ is the sample standard deviation, $m_2$ is the (biased) sample second central moment, and $m_3$ is the (biased) sample third central moment. $g_1$ is a method of moments estimator. Another common definition of the sample skewness is (Doane, David P., and Lori E. Seward. "Measuring skewness: a forgotten statistic." Journal of Statistics Education 19.2 (2011): 1–18. (Page 7))

$$G_1 = \frac{k_3}{k_2^{3/2}} = \frac{\sqrt{n(n-1)}}{n-2}\, g_1$$

where $k_3$ is the unique symmetric unbiased estimator of the third cumulant and $k_2 = s^2$ is the symmetric unbiased estimator of the second cumulant (i.e. the sample variance). This adjusted Fisher–Pearson standardized moment coefficient $G_1$ is the version found in Excel and several statistical packages including Minitab, SAS and SPSS.
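A minimal Python sketch of the three estimators g1, b1 and G1 defined above; the function name `sample_skewness` is hypothetical. It computes G1 from the unbiased cumulant estimators k3 and k2 rather than from the shortcut formula, so the two expressions can be compared, and it requires at least three values because G1 divides by n − 2.

```python
import math

def sample_skewness(xs):
    """Return (g1, b1, G1) for a sample of at least three values."""
    n = len(xs)
    if n < 3:
        raise ValueError("G1 needs at least three values")
    mean = sum(xs) / n
    s2 = sum((x - mean) ** 2 for x in xs)  # sum of squared deviations
    s3 = sum((x - mean) ** 3 for x in xs)  # sum of cubed deviations
    m2, m3 = s2 / n, s3 / n                # biased sample central moments
    s = math.sqrt(s2 / (n - 1))            # sample standard deviation
    g1 = m3 / m2 ** 1.5                    # method-of-moments estimator
    b1 = m3 / s ** 3
    k2 = s2 / (n - 1)                      # unbiased estimator of kappa_2 (sample variance)
    k3 = n * s3 / ((n - 1) * (n - 2))      # unbiased estimator of kappa_3
    G1 = k3 / k2 ** 1.5                    # adjusted Fisher-Pearson coefficient
    return g1, b1, G1

print(sample_skewness([49, 50, 51, 60]))
```

Since b1 and G1 are fixed multiples of g1, namely ((n − 1)/n)^(3/2) and √(n(n − 1))/(n − 2), positively skewed data always give b1 < g1 < G1.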

Under the assumption that the underlying random variable $X$ is normally distributed, it can be shown that all three ratios $b_1$, $g_1$ and $G_1$ are unbiased and consistent estimators of the population skewness $\gamma_1 = 0$, with $\sqrt{n}\, b_1 \xrightarrow{d} N(0, 6)$, i.e., their distributions converge to a normal distribution with mean 0 and variance 6 (Fisher, 1930). The variance of the sample skewness is thus approximately $6/n$ for sufficiently large samples. More precisely, in a random sample of size $n$ from a normal distribution (Duncan Cramer (1997) Fundamental Statistics for Social Research. Routledge. (p 85)),

$$\operatorname{var}(G_1) = \frac{6n(n-1)}{(n-2)(n+1)(n+3)}.$$
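To see the finite-sample formula at work, the sketch below simulates normal samples with Python's `random.gauss` and compares the empirical variance of G1 with var(G1) = 6n(n − 1)/((n − 2)(n + 1)(n + 3)) and with the large-sample approximation 6/n. The sample size, number of replications and seed are arbitrary choices for illustration, the helper names are hypothetical, and the simulated value only approximates the exact one.

```python
import math
import random

def adjusted_skewness(xs):
    """G1 = sqrt(n(n-1)) / (n-2) * g1."""
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n
    m3 = sum((x - mean) ** 3 for x in xs) / n
    return math.sqrt(n * (n - 1)) / (n - 2) * m3 / m2 ** 1.5

def exact_var_G1(n):
    """Exact variance of G1 for a normal sample of size n."""
    return 6 * n * (n - 1) / ((n - 2) * (n + 1) * (n + 3))

def simulated_var_G1(n, reps, seed=0):
    """Monte Carlo variance of G1 over `reps` standard normal samples of size n."""
    rng = random.Random(seed)
    values = [adjusted_skewness([rng.gauss(0.0, 1.0) for _ in range(n)])
              for _ in range(reps)]
    mean = sum(values) / reps
    return sum((v - mean) ** 2 for v in values) / (reps - 1)

n = 50
print(exact_var_G1(n), 6 / n, simulated_var_G1(n, reps=4000))
```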

In normal samples, $b_1$ has the smallest variance of the three estimators, with $\operatorname{var}(b_1) < \operatorname{var}(g_1) < \operatorname{var}(G_1)$.

For non-normal distributions, $b_1$, $g_1$ and $G_1$ are generally biased estimators of the population skewness $\gamma_1$; their expected values can even have the opposite sign from the true skewness. For instance, a mixed distribution consisting of very thin Gaussians centred at −99, 0.5, and 2 with weights 0.01, 0.66, and 0.33 has a skewness $\gamma_1$ of about −9.77, but in a sample of 3, $G_1$ has an expected value of about 0.32, since usually all three samples are in the positive-valued part of the distribution, which is skewed the other way.
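Both numbers in this example can be checked by exact enumeration. The sketch assumes the "very thin Gaussians" are thin enough to be treated as point masses at −99, 0.5 and 2, and that a sample whose three values all come from the same component contributes an expected G1 of 0 (by the symmetry of a Gaussian). Helper names are hypothetical.

```python
import itertools
import math

# Point masses standing in for the very thin Gaussians (an approximation).
points = [-99.0, 0.5, 2.0]
weights = [0.01, 0.66, 0.33]

def central_moment(values, probs, k):
    """k-th central moment of a finite discrete distribution."""
    mean = sum(p * v for v, p in zip(values, probs))
    return sum(p * (v - mean) ** k for v, p in zip(values, probs))

def adjusted_G1(xs):
    n = len(xs)
    mean = sum(xs) / n
    m2 = sum((x - mean) ** 2 for x in xs) / n
    if m2 == 0:
        # All draws from one thin Gaussian: by symmetry the expected G1 is 0.
        return 0.0
    m3 = sum((x - mean) ** 3 for x in xs) / n
    return math.sqrt(n * (n - 1)) / (n - 2) * m3 / m2 ** 1.5

population_skew = central_moment(points, weights, 3) / central_moment(points, weights, 2) ** 1.5

# Exact expectation of G1 over all 3**3 ordered samples of size 3.
expected_G1 = 0.0
for idx in itertools.product(range(3), repeat=3):
    prob = math.prod(weights[i] for i in idx)
    expected_G1 += prob * adjusted_G1([points[i] for i in idx])

print(round(population_skew, 2), round(expected_G1, 2))  # -9.77 0.32
```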

Applications

Skewness is a descriptive statistic that can be used in conjunction with the histogram and the normal quantile plot to characterize the data or distribution.

Skewness indicates the direction and relative magnitude of a distribution's deviation from the normal distribution.

With pronounced skewness, standard statistical inference procedures such as a confidence interval for a mean will not only be incorrect, in the sense that the true coverage level will differ from the nominal (e.g., 95%) level, but will also have unequal error probabilities on each side.

Skewness can be used to obtain approximate probabilities and quantiles of distributions (such as value at risk in finance) via the Cornish–Fisher expansion.
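As a rough illustration of the Cornish–Fisher idea, the sketch below adjusts a standard normal quantile z using the commonly quoted four-term expansion in the skewness S and excess kurtosis K: z + (z² − 1)S/6 + (z³ − 3z)K/24 − (2z³ − 5z)S²/36. It relies on `statistics.NormalDist().inv_cdf` for z. The function name and inputs are hypothetical, and truncating the series at these terms is an assumption; the approximation can misbehave for large S or K.

```python
from statistics import NormalDist

def cornish_fisher_quantile(p, skew, excess_kurtosis=0.0):
    """Approximate standardized p-quantile adjusted for skewness and excess kurtosis.

    Four-term Cornish-Fisher expansion around the standard normal quantile z;
    higher-order terms are ignored.
    """
    z = NormalDist().inv_cdf(p)
    return (z
            + (z ** 2 - 1) * skew / 6
            + (z ** 3 - 3 * z) * excess_kurtosis / 24
            - (2 * z ** 3 - 5 * z) * skew ** 2 / 36)

# Hypothetical inputs: the 5% left-tail quantile of a negatively skewed variable.
print(NormalDist().inv_cdf(0.05))                # plain normal quantile
print(cornish_fisher_quantile(0.05, skew=-0.5))  # pushed further into the left tail
```

For a value-at-risk style calculation, the adjusted standardized quantile would then be rescaled by the mean and standard deviation of the returns in question.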

Many models assume a normal distribution, that is, data that are symmetric about the mean. The normal distribution has a skewness of zero. But in reality, data points may not be perfectly symmetric, so the skewness of a dataset indicates whether the larger deviations from the mean tend to be positive or negative.

D'Agostino's K-squared test is a goodness-of-fit normality test based on sample skewness and sample kurtosis.

Other measures of skewness

Other measures of skewness have been used, including simpler calculations suggested by Karl Pearson (not to be confused with Pearson's moment coefficient of skewness, see above). These other measures are:

Pearson's first skewness coefficient (mode skewness)

The Pearson mode skewness, or first skewness coefficient, is defined as

$$\frac{\text{mean} - \text{mode}}{\text{standard deviation}}.$$

Pearson's second skewness coefficient (median skewness)

The Pearson median skewness, or second skewness coefficient, is defined as

$$\frac{3(\text{mean} - \text{median})}{\text{standard deviation}},$$

which is a simple multiple of the nonparametric skew.

Quantile-based measures

Bowley's measure of skewness (from 1901) (Kenney JF and Keeping ES (1962) Mathematics of Statistics, Pt. 1, 3rd ed., Van Nostrand, (page 102)), also called Yule's coefficient (from 1912), is defined as:

$$\frac{\frac{Q(3/4) + Q(1/4)}{2} - Q(1/2)}{\frac{Q(3/4) - Q(1/4)}{2}} = \frac{Q(3/4) + Q(1/4) - 2Q(1/2)}{Q(3/4) - Q(1/4)}$$

where $Q$ is the quantile function (i.e., the inverse of the cumulative distribution function). The numerator is the difference between the average of the upper and lower quartiles (a measure of location) and the median (another measure of location), while the denominator is the semi-interquartile range $(Q(3/4) - Q(1/4))/2$, which for symmetric distributions is equal to the MAD measure of dispersion.

Other names for this measure are Galton's measure of skewness (p. 3 and p. 40), the Yule–Kendall index (Wilks DS (1995) Statistical Methods in the Atmospheric Sciences, p 27. Academic Press.) and the quartile skewness.
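A sample version of Bowley's measure can be sketched by plugging sample quartiles into the formula. This assumes Python's `statistics.quantiles` with its default "exclusive" interpolation; other packages use different quartile conventions and can give different values on small samples. The function name is hypothetical.

```python
import statistics

def bowley_skewness(xs):
    """(Q3 + Q1 - 2*Q2) / (Q3 - Q1) using sample quartiles."""
    q1, q2, q3 = statistics.quantiles(xs, n=4)  # default method="exclusive"
    return (q3 + q1 - 2 * q2) / (q3 - q1)

print(bowley_skewness(range(1, 10)))                  # symmetric data
print(bowley_skewness([1, 2, 2, 3, 3, 3, 4, 10, 20]))  # right-skewed data
```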

Similarly, Kelly's measure of skewness is defined as

$$\frac{Q(9/10) + Q(1/10) - 2Q(1/2)}{Q(9/10) - Q(1/10)}.$$

A more general formulation of a skewness function was described by Groeneveld, R. A. and Meeden, G. (1984) (MacGillivray (1992); Hinkley DV (1975) "On power transformations to symmetry", Biometrika, 62, 101–111):

$$\gamma(u) = \frac{Q(u) + Q(1 - u) - 2Q(1/2)}{Q(u) - Q(1 - u)}$$

The function $\gamma(u)$ satisfies $-1 \le \gamma(u) \le 1$ and is well defined without requiring the existence of any moments of the distribution. Bowley's measure of skewness is $\gamma(u)$ evaluated at $u = 3/4$, while Kelly's measure of skewness is $\gamma(u)$ evaluated at $u = 9/10$. This definition leads to a corresponding overall measure of skewness (MacGillivray (1992)), defined as the supremum of this over the range $1/2 \le u < 1$. Another measure can be obtained by integrating the numerator and denominator of this expression.
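Because γ(u) needs only a quantile function, it can be evaluated even where moments do not exist. The sketch below (hypothetical helper names) uses the closed-form quantile functions of the rate-1 exponential distribution, Q(u) = −ln(1 − u), and of the standard Cauchy distribution, Q(u) = tan(π(u − 1/2)), which has no finite moments. Setting u = 3/4 gives Bowley's measure and u = 9/10 gives Kelly's.

```python
import math

def skewness_function(Q, u):
    """gamma(u) for a quantile function Q, with 1/2 < u < 1."""
    return (Q(u) + Q(1 - u) - 2 * Q(0.5)) / (Q(u) - Q(1 - u))

def exponential_Q(u):
    return -math.log(1 - u)  # rate-1 exponential

def cauchy_Q(u):
    return math.tan(math.pi * (u - 0.5))  # standard Cauchy: no moments exist

print(skewness_function(exponential_Q, 0.75))  # Bowley's measure
print(skewness_function(exponential_Q, 0.9))   # Kelly's measure
print(skewness_function(cauchy_Q, 0.75))       # about 0 for a symmetric law
```

For the exponential distribution this gives ln(4/3)/ln 3 ≈ 0.262 for Bowley's measure and ln(25/9)/ln 9 ≈ 0.465 for Kelly's, so the value depends on which u is chosen, while the symmetric Cauchy distribution gives 0.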

Quantile-based skewness measures are at first glance easy to interpret, but they often show significantly larger sample variations than moment-based methods. As a result, samples from a symmetric distribution (such as the uniform distribution) often have a large quantile-based skewness just by chance.

Groeneveld and Meeden's coefficient

Groeneveld and Meeden have suggested, as an alternative measure of skewness,

$$\operatorname{skew}(X) = \frac{\mu - \nu}{E(|X - \nu|)},$$

where $\mu$ is the mean, $\nu$ is the median, $|\cdot|$ is the absolute value, and $E(\cdot)$ is the expectation operator. This is closely related in form to Pearson's second skewness coefficient.
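A plug-in sample version simply replaces the mean, median and expectation by their sample counterparts. This estimator and its function name are a hypothetical illustration, not taken from Groeneveld and Meeden.

```python
import statistics

def groeneveld_meeden_sample(xs):
    """Plug-in (mean - median) / mean absolute deviation about the median."""
    nu = statistics.median(xs)
    mean_abs_dev = statistics.mean([abs(x - nu) for x in xs])
    return (statistics.mean(xs) - nu) / mean_abs_dev

print(groeneveld_meeden_sample([49, 50, 51, 60]))  # about 0.667
```

Because |E(X − ν)| ≤ E|X − ν|, the population measure always lies between −1 and 1. For the sequence (49, 50, 51, 60) from the introduction, the sample version is (52.5 − 50.5)/3 = 2/3.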

L-moments

Use of L-moments in place of moments provides a measure of skewness known as the L-skewness.

Distance skewness

A value of skewness equal to zero does not imply that the probability distribution is symmetric. Thus there is a need for another measure of asymmetry that has this property: such a measure was introduced in 2000. It is called distance skewness and denoted by dSkew. If $X$ is a random variable taking values in the $d$-dimensional Euclidean space, $X$ has finite expectation, $X'$ is an independent identically distributed copy of $X$, and $\|\cdot\|$ denotes the norm in the Euclidean space, then a simple measure of asymmetry with respect to location parameter $\theta$ is

$$\operatorname{dSkew}(X) := 1 - \frac{E\|X - X'\|}{E\|X + X' - 2\theta\|} \quad \text{if } \Pr(X = \theta) \neq 1$$

and $\operatorname{dSkew}(X) := 0$ for $X = \theta$ (with probability 1). Distance skewness is always between 0 and 1, equals 0 if and only if $X$ is diagonally symmetric with respect to $\theta$ ($X$ and $2\theta - X$ have the same probability distribution) and equals 1 if and only if $X$ is a constant $c$ ($c \neq \theta$) with probability one (Szekely, G. J. and Mori, T. F. (2001) "A characteristic measure of asymmetry and its application for testing diagonal symmetry", Communications in Statistics – Theory and Methods 30/8&9, 1633–1639). Thus there is a simple consistent statistical test of diagonal symmetry based on the sample distance skewness:

$$\operatorname{dSkew}_n(X) := 1 - \frac{\sum_{i,j} \|x_i - x_j\|}{\sum_{i,j} \|x_i + x_j - 2\theta\|}.$$
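The sample distance skewness translates directly into code. This is a minimal O(n²) sketch with a hypothetical function name; points are tuples, so the same code covers the d-dimensional case, and the location θ must be supplied by the caller.

```python
import math

def sample_distance_skewness(points, theta):
    """dSkew_n for points given as equal-length tuples and a location theta."""
    num = sum(math.dist(a, b) for a in points for b in points)
    den = sum(
        math.hypot(*(ai + bi - 2 * ti for ai, bi, ti in zip(a, b, theta)))
        for a in points for b in points
    )
    if den == 0:
        return 0.0  # every point equals theta, matching dSkew(X) := 0 for X = theta
    return 1 - num / den

print(sample_distance_skewness([(-2,), (-1,), (1,), (2,)], (0,)))  # 0.0: symmetric about 0
print(sample_distance_skewness([(3,), (3,), (3,)], (0,)))          # 1.0: constant c != theta
```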

Medcouple

The medcouple is a scale-invariant robust measure of skewness, with a breakdown point of 25%. It is the median of the values of the kernel function

$$h(x_i, x_j) = \frac{(x_i - x_m) - (x_m - x_j)}{x_i - x_j}$$

taken over all couples $(x_i, x_j)$ such that $x_i \geq x_m \geq x_j$, where $x_m$ is the median of the sample $\{x_1, x_2, \ldots, x_n\}$. It can be seen as the median of all possible quantile skewness measures.
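A naive O(n²) sketch of this definition follows; the function name is hypothetical. It assumes no sample value equals the median, because a couple with x_i = x_j = x_m makes the kernel 0/0, and the full definition handles such ties with a special kernel that this sketch omits. Faster O(n log n) algorithms exist for practical use.

```python
import statistics

def naive_medcouple(xs):
    """O(n^2) medcouple; assumes no sample value equals the sample median."""
    xm = statistics.median(xs)
    if any(x == xm for x in xs):
        raise ValueError("ties at the median need the special kernel, not handled here")
    upper = [x for x in xs if x > xm]
    lower = [x for x in xs if x < xm]
    # Kernel h(x_i, x_j) over all couples with x_i above and x_j below the median.
    kernel = [((xi - xm) - (xm - xj)) / (xi - xj) for xi in upper for xj in lower]
    return statistics.median(kernel)

print(naive_medcouple([1, 2, 3, 4, 5, 6]))    # 0.0 for symmetric data
print(naive_medcouple([1, 2, 4, 7, 11, 20]))  # positive for right-skewed data
```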

See also

* Bragg peak * Coskewness * Kurtosis * Shape parameters * Skew normal distribution *Skewness risk

Skewness risk in forecasting models utilized in the financial field is the risk that results when observations are not spread symmetrically around an average value, but instead have a skewed distribution. As a result, the mean and the median can ...

External links

* Skewness Measures for the Weibull Distribution
* by Michel Petitjean
* On More Robust Estimation of Skewness and Kurtosis: Comparison of skew estimators by Kim and White
