|

Weisfeiler Leman Graph Isomorphism Test

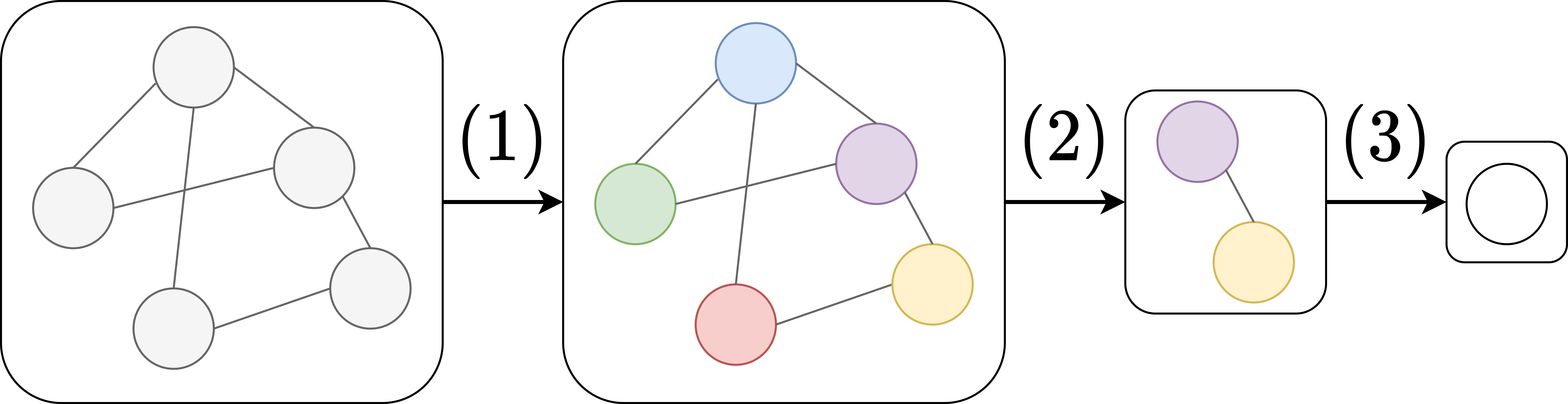

In graph theory, the Weisfeiler Leman graph isomorphism test is a heuristic test for the existence of an isomorphism between two graphs ''G'' and ''H''. It is based on a normal form for graphs first described in an article by Weisfeiler and Leman in 1968. There are several versions of the test referred to in the literature by various names, which easily leads to confusion. The basic Weisfeiler Leman graph isomorphism test The basic version called WLtest takes a graph as input, produces a partition of the nodes which is invariant under automorphisms and outputs a string certificate encoding the partition. When applied to two graphs ''G'' and ''H'' we can compare the certificates. If the certificates do not agree the test fails and ''G'' and ''H'' cannot be isomorphic. If the certificates agree, ''G'' and ''H'' may or may not be isomorphic. The partition is produced in several rounds starting from the trivial partition where all nodes belong to the same component. In each r ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Graph Neural Network

A graph neural network (GNN) belongs to a class of artificial neural networks for processing data that can be represented as graphs. In the more general subject of "geometric deep learning", certain existing neural network architectures can be interpreted as GNNs operating on suitably defined graphs. A convolutional neural network layer, in the context of computer vision, can be seen as a GNN applied to graphs whose nodes are pixels and only adjacent pixels are connected by edges in the graph. A transformer layer, in natural language processing, can be seen as a GNN applied to complete graphs whose nodes are words or tokens in a passage of natural language text. The key design element of GNNs is the use of ''pairwise message passing'', such that graph nodes iteratively update their representations by exchanging information with their neighbors. Since their inception, several different GNN architectures have been proposed, which implement different flavors of message passing, s ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Graph Theory

In mathematics, graph theory is the study of '' graphs'', which are mathematical structures used to model pairwise relations between objects. A graph in this context is made up of '' vertices'' (also called ''nodes'' or ''points'') which are connected by ''edges'' (also called ''links'' or ''lines''). A distinction is made between undirected graphs, where edges link two vertices symmetrically, and directed graphs, where edges link two vertices asymmetrically. Graphs are one of the principal objects of study in discrete mathematics. Definitions Definitions in graph theory vary. The following are some of the more basic ways of defining graphs and related mathematical structures. Graph In one restricted but very common sense of the term, a graph is an ordered pair G=(V,E) comprising: * V, a set of vertices (also called nodes or points); * E \subseteq \, a set of edges (also called links or lines), which are unordered pairs of vertices (that is, an edge is associated with t ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Kernelization

In computer science, a kernelization is a technique for designing efficient algorithms that achieve their efficiency by a preprocessing stage in which inputs to the algorithm are replaced by a smaller input, called a "kernel". The result of solving the problem on the kernel should either be the same as on the original input, or it should be easy to transform the output on the kernel to the desired output for the original problem. Kernelization is often achieved by applying a set of reduction rules that cut away parts of the instance that are easy to handle. In parameterized complexity theory, it is often possible to prove that a kernel with guaranteed bounds on the size of a kernel (as a function of some parameter associated to the problem) can be found in polynomial time. When this is possible, it results in a fixed-parameter tractable algorithm whose running time is the sum of the (polynomial time) kernelization step and the (non-polynomial but bounded by the parameter) time to ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Graph Kernel

In structure mining, a graph kernel is a kernel function that computes an inner product on graphs. Graph kernels can be intuitively understood as functions measuring the similarity of pairs of graphs. They allow kernelized learning algorithms such as support vector machines to work directly on graphs, without having to do feature extraction to transform them to fixed-length, real-valued feature vectors. They find applications in bioinformatics, in chemoinformatics (as a type of molecule kernels), and in social network analysis. Concepts of graph kernels have been around since the 1999, when D. Haussler introduced convolutional kernels on discrete structures. The term graph kernels was more officially coined in 2002 by R. I. Kondor and J. Lafferty as kernels ''on'' graphs, i.e. similarity functions between the nodes of a single graph, with the World Wide Web hyperlink graph as a suggested application. In 2003, Gaertner ''et al.'' and Kashima ''et al.'' defined kernels ''betwee ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Artificial Neural Network

Artificial neural networks (ANNs), usually simply called neural networks (NNs) or neural nets, are computing systems inspired by the biological neural networks that constitute animal brains. An ANN is based on a collection of connected units or nodes called artificial neurons, which loosely model the neurons in a biological brain. Each connection, like the synapses in a biological brain, can transmit a signal to other neurons. An artificial neuron receives signals then processes them and can signal neurons connected to it. The "signal" at a connection is a real number, and the output of each neuron is computed by some non-linear function of the sum of its inputs. The connections are called ''edges''. Neurons and edges typically have a ''weight'' that adjusts as learning proceeds. The weight increases or decreases the strength of the signal at a connection. Neurons may have a threshold such that a signal is sent only if the aggregate signal crosses that threshold. Typically, ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Graph Kernel

In structure mining, a graph kernel is a kernel function that computes an inner product on graphs. Graph kernels can be intuitively understood as functions measuring the similarity of pairs of graphs. They allow kernelized learning algorithms such as support vector machines to work directly on graphs, without having to do feature extraction to transform them to fixed-length, real-valued feature vectors. They find applications in bioinformatics, in chemoinformatics (as a type of molecule kernels), and in social network analysis. Concepts of graph kernels have been around since the 1999, when D. Haussler introduced convolutional kernels on discrete structures. The term graph kernels was more officially coined in 2002 by R. I. Kondor and J. Lafferty as kernels ''on'' graphs, i.e. similarity functions between the nodes of a single graph, with the World Wide Web hyperlink graph as a suggested application. In 2003, Gaertner ''et al.'' and Kashima ''et al.'' defined kernels ''betwee ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Support Vector Machine

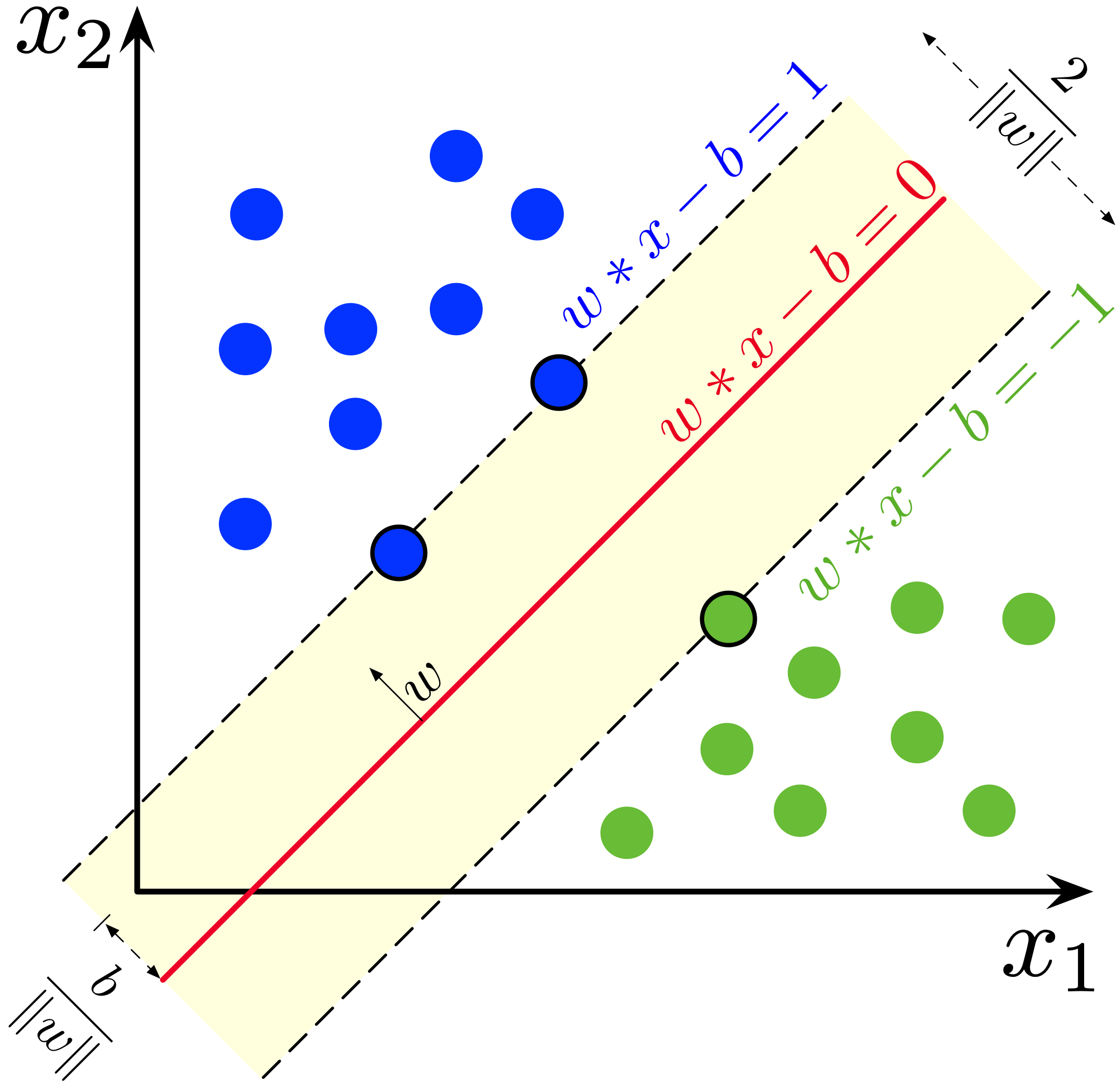

In machine learning, support vector machines (SVMs, also support vector networks) are supervised learning models with associated learning algorithms that analyze data for classification and regression analysis. Developed at AT&T Bell Laboratories by Vladimir Vapnik with colleagues (Boser et al., 1992, Guyon et al., 1993, Cortes and Vapnik, 1995, Vapnik et al., 1997) SVMs are one of the most robust prediction methods, being based on statistical learning frameworks or VC theory proposed by Vapnik (1982, 1995) and Chervonenkis (1974). Given a set of training examples, each marked as belonging to one of two categories, an SVM training algorithm builds a model that assigns new examples to one category or the other, making it a non-probabilistic binary linear classifier (although methods such as Platt scaling exist to use SVM in a probabilistic classification setting). SVM maps training examples to points in space so as to maximise the width of the gap between the two categories. N ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Support Vector Machines

In machine learning, support vector machines (SVMs, also support vector networks) are supervised learning models with associated learning algorithms that analyze data for classification and regression analysis. Developed at AT&T Bell Laboratories by Vladimir Vapnik with colleagues (Boser et al., 1992, Guyon et al., 1993, Cortes and Vapnik, 1995, Vapnik et al., 1997) SVMs are one of the most robust prediction methods, being based on statistical learning frameworks or VC theory proposed by Vapnik (1982, 1995) and Chervonenkis (1974). Given a set of training examples, each marked as belonging to one of two categories, an SVM training algorithm builds a model that assigns new examples to one category or the other, making it a non-probabilistic binary linear classifier (although methods such as Platt scaling exist to use SVM in a probabilistic classification setting). SVM maps training examples to points in space so as to maximise the width of the gap between the two categories. New ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Kernel Method

In machine learning, kernel machines are a class of algorithms for pattern analysis, whose best known member is the support-vector machine (SVM). The general task of pattern analysis is to find and study general types of relations (for example clusters, rankings, principal components, correlations, classifications) in datasets. For many algorithms that solve these tasks, the data in raw representation have to be explicitly transformed into feature vector representations via a user-specified ''feature map'': in contrast, kernel methods require only a user-specified ''kernel'', i.e., a similarity function over all pairs of data points computed using Inner products. The feature map in kernel machines is infinite dimensional but only requires a finite dimensional matrix from user-input according to the Representer theorem. Kernel machines are slow to compute for datasets larger than a couple of thousand examples without parallel processing. Kernel methods owe their name to th ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Machine Learning

Machine learning (ML) is a field of inquiry devoted to understanding and building methods that 'learn', that is, methods that leverage data to improve performance on some set of tasks. It is seen as a part of artificial intelligence. Machine learning algorithms build a model based on sample data, known as training data, in order to make predictions or decisions without being explicitly programmed to do so. Machine learning algorithms are used in a wide variety of applications, such as in medicine, email filtering, speech recognition, agriculture, and computer vision, where it is difficult or unfeasible to develop conventional algorithms to perform the needed tasks.Hu, J.; Niu, H.; Carrasco, J.; Lennox, B.; Arvin, F.,Voronoi-Based Multi-Robot Autonomous Exploration in Unknown Environments via Deep Reinforcement Learning IEEE Transactions on Vehicular Technology, 2020. A subset of machine learning is closely related to computational statistics, which focuses on making pred ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |