
Sequential Linear-quadratic Programming

Sequential linear-quadratic programming (SLQP) is an iterative method for nonlinear optimization problems in which the objective function and the constraints are twice continuously differentiable. Like sequential quadratic programming (SQP), SLQP proceeds by solving a sequence of optimization subproblems. The two approaches differ as follows:
* in SQP, each subproblem is a quadratic program, with a quadratic model of the objective subject to a linearization of the constraints
* in SLQP, two subproblems are solved at each step: a linear program (LP) used to determine an active set, followed by an equality-constrained quadratic program (EQP) used to compute the total step

This decomposition makes SLQP suitable for large-scale optimization problems, for which efficient LP and EQP solvers are available, these problems being easier to scale than full-fledged quadratic programs. It may be considered related to, but distinct from, quasi-Newton methods.

Algorithm basics

Con ...
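The EQP phase described above can be illustrated with a minimal sketch. It assumes the active set has already been identified (the LP phase is not shown), that the quadratic model is written as 1/2 x^T H x + c^T x with the active constraints expressed as A x = b, and that the problem is small enough for a dense solve of the optimality system. The names `solve_linear` and `solve_eqp` are hypothetical helpers, and large-scale SLQP codes would use sparse or iterative solvers, usually with a trust region, instead.

```python
def solve_linear(matrix, rhs):
    """Solve matrix * x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [[float(v) for v in row] + [float(r)] for row, r in zip(matrix, rhs)]
    for col in range(n):
        # Pick the row with the largest pivot to limit rounding error.
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-14:
            raise ValueError("system is singular to working precision")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for k in range(col, n + 1):
                aug[r][k] -= factor * aug[col][k]
    x = [0.0] * n
    for i in reversed(range(n)):
        partial = sum(aug[i][k] * x[k] for k in range(i + 1, n))
        x[i] = (aug[i][n] - partial) / aug[i][i]
    return x


def solve_eqp(H, c, A, b):
    """Minimize 1/2 x^T H x + c^T x subject to A x = b (assumed formulation).

    Solves the optimality system
        [H  A^T] [x  ]   [-c]
        [A   0 ] [lam] = [ b]
    and returns (x, lam).
    """
    n, m = len(c), len(b)
    kkt = [list(H[i]) + [A[j][i] for j in range(m)] for i in range(n)]
    kkt += [list(A[j]) + [0.0] * m for j in range(m)]
    rhs = [-ci for ci in c] + list(b)
    sol = solve_linear(kkt, rhs)
    return sol[:n], sol[n:]


# Illustrative data: minimize (x - 1)^2 + (y - 2)^2 subject to x + y = 1.
x, lam = solve_eqp([[2.0, 0.0], [0.0, 2.0]], [-2.0, -4.0], [[1.0, 1.0]], [1.0])
```

For this illustrative problem the minimizer is the projection of (1, 2) onto the line x + y = 1, which gives a quick sanity check on the returned step.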

Iterative Method

In computational mathematics, an iterative method is a mathematical procedure that uses an initial value to generate a sequence of improving approximate solutions for a class of problems, in which the ''i''-th approximation (called an "iterate") is derived from the previous ones. A specific implementation with termination criteria for a given iterative method, such as gradient descent, hill climbing, Newton's method, or quasi-Newton methods like BFGS, is an algorithm of an iterative method or a method of successive approximation. An iterative method is called ''convergent'' if the corresponding sequence converges for given initial approximations. A mathematically rigorous convergence analysis of an iterative method is usually performed; however, heuristic-based iterative methods are also common. In contrast, direct methods attempt to solve the problem by a finit ...
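As a minimal sketch of an iterative method paired with a termination criterion, the following fixed-point iteration generates iterates x_{k+1} = g(x_k) and stops when successive iterates agree to within a tolerance. The function name `fixed_point`, the tolerance, the iteration cap, and the choice of g = cos are all illustrative assumptions, not part of the source.

```python
import math


def fixed_point(g, x0, tol=1e-10, max_iter=100):
    """Iterate x_{k+1} = g(x_k) until two successive iterates differ by < tol.

    Returns the final iterate and the number of iterations used.
    """
    x = x0
    for k in range(1, max_iter + 1):
        x_next = g(x)
        # Termination criterion: the step between iterates is small enough.
        if abs(x_next - x) < tol:
            return x_next, k
        x = x_next
    raise RuntimeError("no convergence within max_iter iterations")


# Illustrative use: approximate the solution of x = cos(x) starting from 1.0.
root, iterations = fixed_point(math.cos, 1.0)
```

The iteration cap plays the role of a safeguard: without it, a non-convergent sequence would loop forever.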

Nonlinear Programming

In mathematics, nonlinear programming (NLP) is the process of solving an optimization problem where some of the constraints are not linear equalities or the objective function is not a linear function. An optimization problem is the calculation of the extrema (maxima, minima or stationary points) of an objective function over a set of unknown real variables, subject to the satisfaction of a system of equalities and inequalities, collectively termed constraints. It is the sub-field of mathematical optimization that deals with problems that are not linear.

Definition and discussion

Let ''n'', ''m'', and ''p'' be positive integers. Let ''X'' be a subset of ''R''^''n'' (usually a box-constrained one), and let ''f'', ''g_i'', and ''h_j'' be real-valued functions on ''X'' for each ''i'' in {1, ..., ''m''} and each ''j'' in {1, ..., ''p''}, with at least one of ''f'', ''g_i'', and ''h_j'' being nonlinear. A nonlinear programming problem is an optimization problem of the form : \begin \text & f(x) \\ \text ...
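The displayed problem is cut off in this excerpt. As a hedged illustration only, one common way to write a problem with the ingredients named above is shown below; the direction of the inequalities and the use of minimization are assumed conventions and may differ from the original display.

```latex
\begin{aligned}
\text{minimize}   \quad & f(x) \\
\text{subject to} \quad & g_i(x) \le 0, \quad i \in \{1, \dots, m\}, \\
                        & h_j(x) = 0,  \quad j \in \{1, \dots, p\}, \\
                        & x \in X .
\end{aligned}
```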

Objective Function

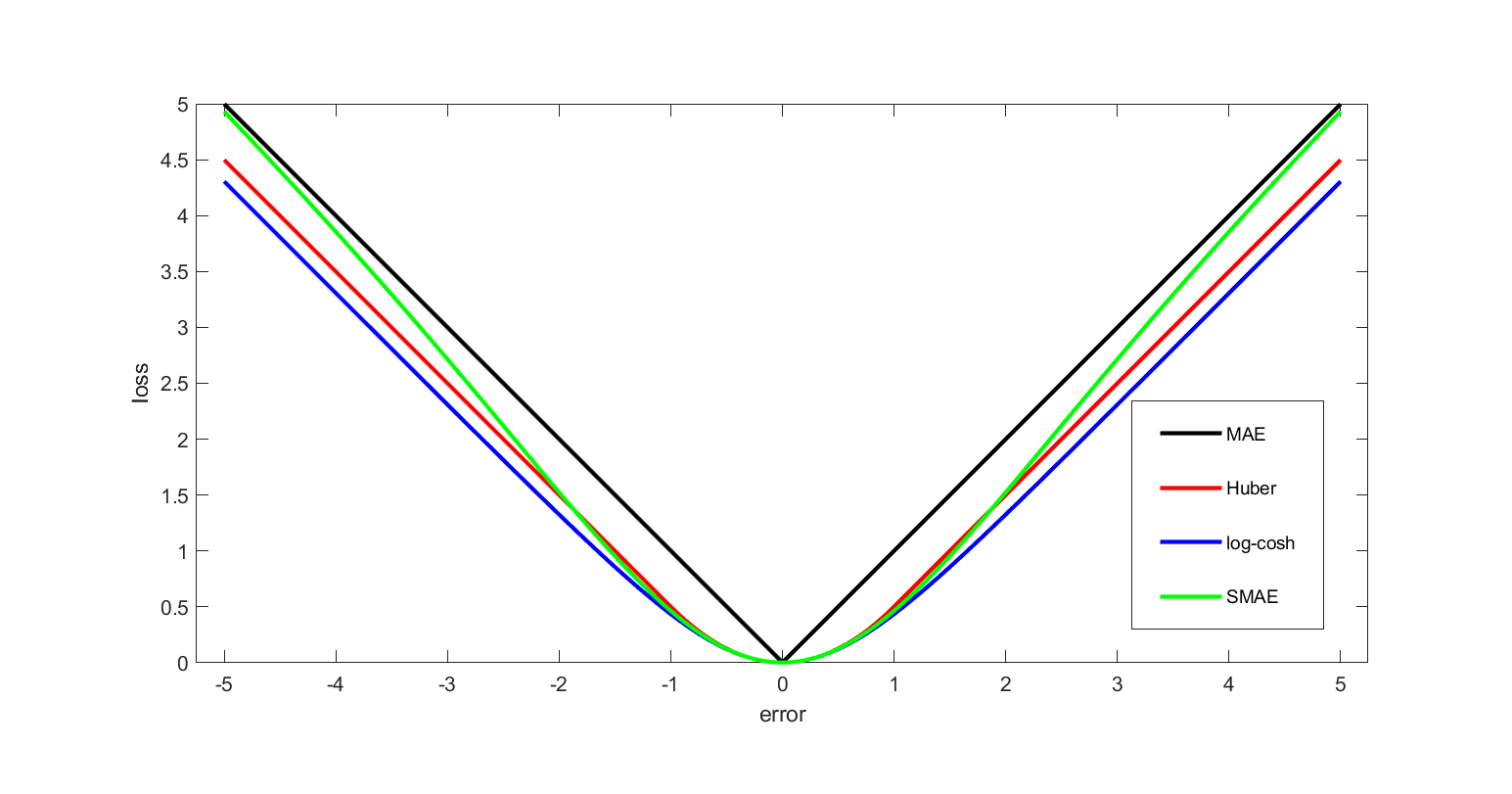

In mathematical optimization and decision theory, a loss function or cost function (sometimes also called an error function) is a function that maps an event or values of one or more variables onto a real number intuitively representing some "cost" associated with the event. An optimization problem seeks to minimize a loss function. An objective function is either a loss function or its opposite (in specific domains, variously called a reward function, a profit function, a utility function, a fitness function, etc.), in which case it is to be maximized. The loss function could include terms from several levels of the hierarchy. In statistics, typically a loss function is used for parameter estimation, and the event in question is some function of the difference between estimated and true values for an instance of data. The concept, as old as Laplace, was reintroduced in statistics by Abraham Wald in the middle of the 20th century. In the context of economics, for example, ...

Continuously Differentiable

In mathematics, a differentiable function of one real variable is a function whose derivative exists at each point in its domain. In other words, the graph of a differentiable function has a non-vertical tangent line at each interior point in its domain. A differentiable function is smooth (the function is locally well approximated as a linear function at each interior point) and does not contain any break, angle, or cusp. If x_0 is an interior point in the domain of a function f, then f is said to be ''differentiable at'' x_0 if the derivative f'(x_0) exists. In other words, the graph of f has a non-vertical tangent line at the point (x_0, f(x_0)). f is said to be differentiable on a set if it is differentiable at every point of that set. f is said to be ''continuously differentiable'' if its derivative is also a continuous function over the domain of f. Generally speaking, i ...

Sequential Quadratic Programming

Sequential quadratic programming (SQP) is an iterative method for constrained nonlinear optimization, also known as the Lagrange–Newton method. SQP methods are used on mathematical problems for which the objective function and the constraints are twice continuously differentiable, but not necessarily convex. SQP methods solve a sequence of optimization subproblems, each of which optimizes a quadratic model of the objective subject to a linearization of the constraints. If the problem is unconstrained, then the method reduces to Newton's method for finding a point where the gradient of the objective vanishes. If the problem has only equality constraints, then the method is equivalent to applying Newton's method to the first-order optimality conditions, or Karush–Kuhn–Tucker conditions, of the problem.

Algorithm basics

Consider a nonlinear programming problem of the form: :\begin{array}{rl} \min\limits_{x} & f(x) \\ \mbox{subject to} & h(x) \ge 0 \\ & g(x) = 0. \end{array} where x \in \mathbb{R}^n, f: \mathbb ...

Quadratic Program

Quadratic programming (QP) is the process of solving certain mathematical optimization problems involving quadratic functions. Specifically, one seeks to optimize (minimize or maximize) a multivariate quadratic function subject to linear constraints on the variables. Quadratic programming is a type of nonlinear programming. "Programming" in this context refers to a formal procedure for solving mathematical problems. This usage dates to the 1940s and is not specifically tied to the more recent notion of "computer programming." To avoid confusion, some practitioners prefer the term "optimization" (e.g., "quadratic optimization").

Problem formulation

The quadratic programming problem with n variables and m constraints can be formulated as follows. Given:
* a real-valued, n-dimensional vector c,
* an n×n-dimensional real symmetric matrix Q,
* an m×n-dimensional real matrix A, and
* an m-dimensional real vector b,

the objective of quadratic programming is to find an n-dimensional vector x, that wi ...

Linear Program

Linear programming (LP), also called linear optimization, is a method to achieve the best outcome (such as maximum profit or lowest cost) in a mathematical model whose requirements and objective are represented by linear relationships. Linear programming is a special case of mathematical programming (also known as mathematical optimization). More formally, linear programming is a technique for the optimization of a linear objective function, subject to linear equality and linear inequality constraints. Its feasible region is a convex polytope, which is a set defined as the intersection of finitely many half spaces, each of which is defined by a linear inequality. Its objective function is a real-valued affine (linear) function defined on this polytope. A linear programming algorithm finds a point in the polytope where this function has the largest (or smallest) value if such a point exists. Linear programs are problems that can be expressed in standard form as: : \beg ...

Active Set

In mathematical optimization, the active-set method is an algorithm used to identify the active constraints in a set of inequality constraints. The active constraints are then expressed as equality constraints, thereby transforming an inequality-constrained problem into a simpler equality-constrained subproblem. An optimization problem is defined using an objective function to minimize or maximize, and a set of constraints : g_1(x) \ge 0, \dots, g_k(x) \ge 0 that define the feasible region, that is, the set of all ''x'' to search for the optimal solution. Given a point x_0 in the feasible region, a constraint : g_i(x) \ge 0 is called active at x_0 if g_i(x_0) = 0, and inactive at x_0 if g_i(x_0) > 0. Equality constraints are always active. The active set at x_0 is made up of those constraints g_i that are active at the current point x_0. The active set is particularly important in optimization theory, as it determines which constraints will influence the final result of o ...
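The definition of the active set translates directly into a short check. The sketch below assumes constraints are supplied as Python callables g_i with the convention g_i(x) >= 0, and uses a small tolerance in place of exact equality because of floating-point rounding; the function name `active_set`, the tolerance, and the example constraints are illustrative assumptions.

```python
def active_set(constraints, x0, tol=1e-9):
    """Return the indices i for which g_i(x0) = 0 (within tol).

    Each constraint is a callable g_i, interpreted as g_i(x) >= 0.
    Raises ValueError if x0 violates any constraint by more than tol.
    """
    values = [g(x0) for g in constraints]
    if any(v < -tol for v in values):
        raise ValueError("x0 is not in the feasible region")
    return [i for i, v in enumerate(values) if abs(v) <= tol]


# Illustrative feasible region: the triangle x >= 0, y >= 0, x + y <= 1.
constraints = [
    lambda x: x[0],                # g_0: x >= 0
    lambda x: x[1],                # g_1: y >= 0
    lambda x: 1.0 - x[0] - x[1],   # g_2: 1 - x - y >= 0
]

corner = active_set(constraints, (0.0, 1.0))      # g_0 and g_2 hold with equality
interior = active_set(constraints, (0.25, 0.25))  # no constraint is active
```

At a vertex of the triangle two constraints are active, while at an interior point none are, which matches the definition above.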

Quasi-Newton Method

In numerical analysis, a quasi-Newton method is an iterative numerical method used either to find zeroes or to find local maxima and minima of functions via an iterative recurrence formula much like the one for Newton's method, except using approximations of the derivatives of the functions in place of exact derivatives. Newton's method requires the Jacobian matrix of all partial derivatives of a multivariate function when used to search for zeros or the Hessian matrix when used for finding extrema. Quasi-Newton methods, on the other hand, can be used when the Jacobian matrices or Hessian matrices are unavailable or are impractical to compute at every iteration. Some iterative methods that reduce to Newton's method, such as sequential quadratic programming, may also be considered quasi-Newton methods.

Search for zeros: root finding

Newton's method to find zeroes of a function g of multiple variables is given by x_{n+1} = x_n - [J_g(x_n)]^{-1} g(x_n), where [J_g(x_n)]^{-1} is the left inverse ...
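The idea of replacing an exact derivative with an approximation can be seen in one dimension with the secant method, which is used here as a simple stand-in for the multivariate quasi-Newton updates and is not taken from the source. The derivative in Newton's formula is approximated by the slope through the two most recent iterates. The function name `secant`, the tolerance, and the test function are illustrative assumptions.

```python
def secant(g, x0, x1, tol=1e-12, max_iter=50):
    """Find a zero of g using a finite-difference slope instead of g'.

    The update is x_{n+1} = x_n - g(x_n) / s_n, where
    s_n = (g(x_n) - g(x_{n-1})) / (x_n - x_{n-1}) approximates g'(x_n).
    """
    g0, g1 = g(x0), g(x1)
    for _ in range(max_iter):
        if g1 == g0:
            raise ZeroDivisionError("secant slope is zero")
        slope = (g1 - g0) / (x1 - x0)  # derivative approximation
        x2 = x1 - g1 / slope           # Newton-like step with approximate slope
        if abs(x2 - x1) < tol:
            return x2
        x0, g0 = x1, g1
        x1, g1 = x2, g(x2)
    raise RuntimeError("no convergence within max_iter iterations")


# Illustrative use: a zero of g(x) = x^2 - 2, starting from 1.0 and 2.0.
root = secant(lambda x: x * x - 2.0, 1.0, 2.0)
```

No derivative of g is ever evaluated, which is the property that makes this family of methods useful when derivatives are unavailable or expensive.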

Lagrange Multipliers

In mathematical optimization, the method of Lagrange multipliers is a strategy for finding the local maxima and minima of a function subject to equation constraints (i.e., subject to the condition that one or more equations have to be satisfied exactly by the chosen values of the variables). It is named after the mathematician Joseph-Louis Lagrange.

Summary and rationale

The basic idea is to convert a constrained problem into a form such that the derivative test of an unconstrained problem can still be applied. The relationship between the gradient of the function and gradients of the constraints rather naturally leads to a reformulation of the original problem, known as the Lagrangian function or Lagrangian. In the general case, the Lagrangian is defined as \mathcal{L}(x, \lambda) \equiv f(x) + \langle \lambda, g(x)\rangle for functions f, g; the notation \langle \cdot, \cdot \rangle denotes an inner product. The value \lambda is called the Lagrange multiplier. In simple ca ...
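A small worked example may help make the Lagrangian concrete. The problem below is an illustrative choice, not taken from the source: minimize f(x, y) = x^2 + y^2 subject to the single constraint g(x, y) = x + y - 1 = 0, using the sign convention of the definition above.

```latex
\begin{aligned}
\mathcal{L}(x, y, \lambda) &= x^2 + y^2 + \lambda\,(x + y - 1), \\
\frac{\partial \mathcal{L}}{\partial x} &= 2x + \lambda = 0, \qquad
\frac{\partial \mathcal{L}}{\partial y} = 2y + \lambda = 0, \qquad
\frac{\partial \mathcal{L}}{\partial \lambda} = x + y - 1 = 0, \\
\Rightarrow\quad x &= y = \tfrac{1}{2}, \qquad \lambda = -1 .
\end{aligned}
```

The first two equations force x = y, and the constraint then fixes both values; the multiplier follows from either stationarity equation.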

Newton's Method

In numerical analysis, the Newton–Raphson method, also known simply as Newton's method, named after Isaac Newton and Joseph Raphson, is a root-finding algorithm which produces successively better approximations to the roots (or zeroes) of a real-valued function. The most basic version starts with a real-valued function f, its derivative f', and an initial guess x_0 for a root of f. If f satisfies certain assumptions and the initial guess is close, then x_1 = x_0 - \frac{f(x_0)}{f'(x_0)} is a better approximation of the root than x_0. Geometrically, (x_1, 0) is the x-intercept of the tangent of the graph of f at (x_0, f(x_0)): that is, the improved guess, x_1, is the unique root of the linear approximation of f at the initial guess, x_0. The process is repeated as x_{n+1} = x_n - \frac{f(x_n)}{f'(x_n)} until a sufficiently precise value is reached. The number of correct digits roughly doubles with each step. This algorithm is first in the class of Householder's methods, and was succeeded by Halley's method. The method can also be extended t ...
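The recurrence x_{n+1} = x_n - f(x_n) / f'(x_n) can be sketched in a few lines. The function name `newton`, the stopping rule based on the step size, the iteration cap, and the test function f(x) = x^2 - 2 are illustrative assumptions rather than part of the source.

```python
def newton(f, fprime, x0, tol=1e-12, max_iter=50):
    """Approximate a root of f by repeating x_{n+1} = x_n - f(x_n) / f'(x_n)."""
    x = x0
    for _ in range(max_iter):
        slope = fprime(x)
        if slope == 0.0:
            raise ZeroDivisionError("derivative vanished; tangent has no x-intercept")
        x_next = x - f(x) / slope  # x-intercept of the tangent line at x
        if abs(x_next - x) < tol:
            return x_next
        x = x_next
    raise RuntimeError("no convergence within max_iter iterations")


# Illustrative use: the positive root of f(x) = x^2 - 2, starting from 1.0.
root = newton(lambda x: x * x - 2.0, lambda x: 2.0 * x, 1.0)
```

Starting close to the root matters: the guarantee quoted above holds only when f satisfies certain assumptions and the initial guess is near enough.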