
Quantile-parameterized Distribution

A quantile-parameterized distribution (QPD) is a probability distribution that is directly parameterized by data. QPDs were created to meet the need for easy-to-use continuous probability distributions flexible enough to represent a wide range of uncertainties, such as those commonly encountered in business, economics, engineering, and science. Because QPDs are directly parameterized by data, they have the practical advantage of avoiding the intermediate step of parameter estimation, a time-consuming process that typically requires non-linear iterative methods to estimate probability-distribution parameters from data. Some QPDs have virtually unlimited shape flexibility and closed-form moments as well.

History. The development of quantile-parameterized distributions was inspired by the practical need for flexible continuous probability distributions that are easy to fit to data. Historically, the Pearson and Johnson fam ...

Estimation Theory

Estimation theory is a branch of statistics that deals with estimating the values of parameters based on measured empirical data that has a random component. The parameters describe an underlying physical setting in such a way that their value affects the distribution of the measured data. An ''estimator'' attempts to approximate the unknown parameters using the measurements. In estimation theory, two approaches are generally considered:

* The probabilistic approach (described in this article) assumes that the measured data is random with a probability distribution dependent on the parameters of interest.
* The set-membership approach assumes that the measured data vector belongs to a set which depends on the parameter vector.

Examples. Suppose one wants to estimate the proportion of a population of voters who will vote for a particular candidate. That proportion is the parameter sought; the estimate is based on a small random sa ...
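The voter example can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the true proportion (0.55), the sample size, and the helper name `estimate_proportion` are hypothetical and do not come from the text above. The estimator used is the plain sample proportion.

```python
import random


def estimate_proportion(sample):
    """Sample proportion: the fraction of 1s in a list of 0/1 votes."""
    return sum(sample) / len(sample)


# Hypothetical population in which 55% of voters support the candidate.
TRUE_PROPORTION = 0.55
rng = random.Random(0)  # fixed seed so the run is repeatable

# Draw a random sample of voters (1 = will vote for the candidate).
sample = [1 if rng.random() < TRUE_PROPORTION else 0 for _ in range(1000)]

p_hat = estimate_proportion(sample)
# Approximate standard error of the sample proportion.
std_error = (p_hat * (1 - p_hat) / len(sample)) ** 0.5
print(f"estimate = {p_hat:.3f}, standard error = {std_error:.3f}")
```

The estimate varies from sample to sample, which is the random component that estimation theory tries to account for.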

Convex Optimization

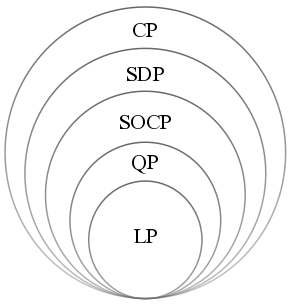

Convex optimization is a subfield of mathematical optimization that studies the problem of minimizing convex functions over convex sets (or, equivalently, maximizing concave functions over convex sets). Many classes of convex optimization problems admit polynomial-time algorithms, whereas mathematical optimization is in general NP-hard.

Definition (abstract form). A convex optimization problem is defined by two ingredients:

* The ''objective function'', which is a real-valued convex function of ''n'' variables, $f : \mathcal{D} \subseteq \mathbb{R}^n \to \mathbb{R}$;
* The ''feasible set'', which is a convex subset $C \subseteq \mathbb{R}^n$.

The goal of the problem is to find some $\mathbf{x}^\ast \in C$ attaining $\inf \{ f(\mathbf{x}) : \mathbf{x} \in C \}$.

In general, there are three options regarding the existence of a solution:

* If such a point ''x''* exists, it is referred to as an ''optimal point'' or ''solution''; the set of all optimal points is called the ''optimal set''; and the problem is called ''solvable''.
* If f is unbou ...
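As a small illustration of a convex objective minimized over a convex feasible set, the sketch below uses projected gradient descent, which is one simple method among many and is not described in the text above. The objective (x - 3)^2, the feasible interval [0, 2], the step size, and all function names are assumptions chosen for the example.

```python
def f(x):
    """Convex objective: a parabola with its unconstrained minimum at x = 3."""
    return (x - 3.0) ** 2


def grad_f(x):
    """Derivative of the objective."""
    return 2.0 * (x - 3.0)


def project(x, lower=0.0, upper=2.0):
    """Projection onto the convex feasible set C = [lower, upper]."""
    return min(max(x, lower), upper)


def projected_gradient_descent(x0, step=0.1, iterations=100):
    """Alternate a gradient step with a projection back onto C."""
    x = project(x0)
    for _ in range(iterations):
        x = project(x - step * grad_f(x))
    return x


x_star = projected_gradient_descent(x0=0.0)
print(f"optimal point = {x_star:.4f}, optimal value = {f(x_star):.4f}")
```

Because the unconstrained minimizer lies outside the interval, the optimal point sits on the boundary of the feasible set.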

Probability Theory

Probability theory or probability calculus is the branch of mathematics concerned with probability. Although there are several different probability interpretations, probability theory treats the concept in a rigorous mathematical manner by expressing it through a set of axioms. Typically these axioms formalise probability in terms of a probability space, which assigns a measure taking values between 0 and 1, termed the probability measure, to a set of outcomes called the sample space. Any specified subset of the sample space is called an event. Central subjects in probability theory include discrete and continuous random variables, probability distributions, and stochastic processes (which provide mathematical abstractions of non-deterministic or uncertain processes or measured quantities that may either be single occurrences or evolve over time in a random fashion). Although it is no ...
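The notions of sample space, event, and probability measure can be made concrete with a finite example. The sketch below models one roll of a fair six-sided die; the die, the event names, and the `probability` helper are illustrative assumptions, not part of the text above.

```python
from fractions import Fraction

# Sample space: the possible outcomes of one roll of a fair die.
sample_space = {1, 2, 3, 4, 5, 6}

# Probability measure on single outcomes (each equally likely).
measure = {outcome: Fraction(1, 6) for outcome in sample_space}


def probability(event):
    """Probability of an event, i.e. of a subset of the sample space."""
    if not event <= sample_space:
        raise ValueError("an event must be a subset of the sample space")
    return sum((measure[outcome] for outcome in event), Fraction(0))


even = {2, 4, 6}
high = {5, 6}

print(probability(even))          # probability of rolling an even number
print(probability(even | high))   # probability of "even or high"
```

Exact fractions are used so that the values stay between 0 and 1 without rounding error and the whole sample space has probability exactly 1.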

Chebyshev Polynomial

The Chebyshev polynomials are two sequences of orthogonal polynomials related to the cosine and sine functions, notated as $T_n(x)$ and $U_n(x)$. They can be defined in several equivalent ways, one of which starts with trigonometric functions: The Chebyshev polynomials of the first kind $T_n$ are defined by $T_n(\cos \theta) = \cos(n\theta)$. Similarly, the Chebyshev polynomials of the second kind $U_n$ are defined by $U_n(\cos \theta) \sin \theta = \sin\big((n + 1)\theta\big)$. That these expressions define polynomials in $\cos\theta$ is not obvious at first sight but can be shown using de Moivre's formula (see Trigonometric definition, below). The Chebyshev polynomials $T_n$ are polynomials with the largest possible leading coefficient whose absolute value on the interval $[-1, 1]$ is bounded by 1. They are also the "extremal" polynomials for many other properties. In 1952, Cornelius Lanczos showed that the Chebyshev polynomials are important in a ...
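The two trigonometric definitions can be checked numerically. The sketch below evaluates both kinds with the standard three-term recurrence (each next polynomial is 2x times the current one minus the previous one), which is not stated in the text above, and compares the results with cos(nθ) and sin((n + 1)θ). The function names are hypothetical.

```python
import math


def chebyshev_T(n, x):
    """First kind: T_0 = 1, T_1 = x, then T_{k+1} = 2x T_k - T_{k-1}."""
    if n == 0:
        return 1.0
    prev, curr = 1.0, x
    for _ in range(n - 1):
        prev, curr = curr, 2.0 * x * curr - prev
    return curr


def chebyshev_U(n, x):
    """Second kind: U_0 = 1, U_1 = 2x, then the same recurrence."""
    if n == 0:
        return 1.0
    prev, curr = 1.0, 2.0 * x
    for _ in range(n - 1):
        prev, curr = curr, 2.0 * x * curr - prev
    return curr


# Largest deviation from the trigonometric definitions on a grid of angles.
max_error = 0.0
for n in range(9):
    for k in range(1, 31):
        theta = 0.1 * k
        x = math.cos(theta)
        err_T = abs(chebyshev_T(n, x) - math.cos(n * theta))
        err_U = abs(chebyshev_U(n, x) * math.sin(theta) - math.sin((n + 1) * theta))
        max_error = max(max_error, err_T, err_U)

print(f"largest deviation: {max_error:.2e}")
```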

Polynomial

In mathematics, a polynomial is a mathematical expression consisting of indeterminates (also called variables) and coefficients, that involves only the operations of addition, subtraction, multiplication and exponentiation to nonnegative integer powers, and has a finite number of terms. An example of a polynomial of a single indeterminate $x$ is $x^2 - 4x + 7$. An example with three indeterminates is $x^3 + 2xyz^2 - yz + 1$. Polynomials appear in many areas of mathematics and science. For example, they are used to form polynomial equations, which encode a wide range of problems, from elementary word problems to complicated scientific problems; they are used to define polynomial functions, which appear in settings ranging from basic chemistry and physics to economics and social science; and they are used in calculus and numerical analysis to approximate other functions. In advanced mathematics, polynomials are ...

Logistic Distribution

In probability theory and statistics, the logistic distribution is a continuous probability distribution. Its cumulative distribution function is the logistic function, which appears in logistic regression and feedforward neural networks. It resembles the normal distribution in shape but has heavier tails (higher kurtosis). The logistic distribution is a special case of the Tukey lambda distribution.

Specification: cumulative distribution function. The logistic distribution receives its name from its cumulative distribution function, which is an instance of the family of logistic functions. The cumulative distribution function of the logistic distribution is also a scaled version of the hyperbolic tangent.

$$F(x; \mu, s) = \frac{1}{1 + e^{-(x - \mu)/s}} = \frac12 + \frac12 \tanh\left(\frac{x - \mu}{2s}\right).$$

In this equation $\mu$ is the mean, and $s$ is a scale parameter proportional to the standard deviation.

Probability density function. The probability density function is the partia ...
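The agreement between the exponential form and the hyperbolic-tangent form of the cumulative distribution function can be verified numerically. The sketch below is a minimal check; the function names and the chosen parameter values (mean 1.5, scale 0.8) are assumptions made for illustration.

```python
import math


def logistic_cdf(x, mu=0.0, s=1.0):
    """Logistic CDF written with the exponential function."""
    return 1.0 / (1.0 + math.exp(-(x - mu) / s))


def logistic_cdf_tanh(x, mu=0.0, s=1.0):
    """The same CDF written as a shifted and scaled hyperbolic tangent."""
    return 0.5 + 0.5 * math.tanh((x - mu) / (2.0 * s))


# Compare the two forms on a grid of points from -10 to 10.
mu, s = 1.5, 0.8
max_gap = max(
    abs(logistic_cdf(x, mu, s) - logistic_cdf_tanh(x, mu, s))
    for x in [k * 0.25 for k in range(-40, 41)]
)
print(f"largest difference between the two forms: {max_gap:.2e}")
```

At the mean the CDF equals one half in both forms, which reflects the symmetry of the distribution.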

Cauchy Distribution

The Cauchy distribution, named after Augustin-Louis Cauchy, is a continuous probability distribution. It is also known, especially among physicists, as the Lorentz distribution (after Hendrik Lorentz), Cauchy–Lorentz distribution, Lorentz(ian) function, or Breit–Wigner distribution. The Cauchy distribution $f(x; x_0, \gamma)$ is the distribution of the $x$-intercept of a ray issuing from $(x_0, \gamma)$ with a uniformly distributed angle. It is also the distribution of the ratio of two independent normally distributed random variables with mean zero. The Cauchy distribution is often used in statistics as the canonical example of a "pathological" distribution since both its expected value and its variance are undefined. The Cauchy distribution does not have finite moments of order greater than or equal to one; only fractional absolute moments exist. The Cauchy dist ...
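The ratio characterization can be explored by simulation. The sketch below draws ratios of two independent standard normal variables, which gives a standard Cauchy variable (location 0, scale 1), and checks two order statistics that exist even though the mean does not: the median should be near 0 and about half of the draws should lie in [-1, 1]. The seed, the sample size, and the names are assumptions.

```python
import random
import statistics

rng = random.Random(3)  # fixed seed so the run is repeatable


def cauchy_draw():
    """Ratio of two independent standard normal draws."""
    while True:
        numerator = rng.gauss(0.0, 1.0)
        denominator = rng.gauss(0.0, 1.0)
        if denominator != 0.0:  # guard against an exact zero
            return numerator / denominator


draws = [cauchy_draw() for _ in range(20000)]

sample_median = statistics.median(draws)
# For a standard Cauchy variable the quartiles are -1 and +1.
fraction_within_one = sum(1 for x in draws if abs(x) <= 1.0) / len(draws)

print(f"median = {sample_median:.3f}")
print(f"fraction in [-1, 1] = {fraction_within_one:.3f}")
```

The sample mean is deliberately not reported: with undefined expected value it does not settle down as the sample grows.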

Gumbel Distribution

In probability theory and statistics, the Gumbel distribution (also known as the type-I generalized extreme value distribution) is used to model the distribution of the maximum (or the minimum) of a number of samples of various distributions. This distribution might be used to represent the distribution of the maximum level of a river in a particular year if there was a list of maximum values for the past ten years. It is useful in predicting the chance that an extreme earthquake, flood or other natural disaster will occur. The potential applicability of the Gumbel distribution to represent the distribution of maxima relates to extreme value theory, which indicates that it is likely to be useful if the distribution of the underlying sample data is of the normal or exponential type. The Gumbel distribution is a particular case of the generalized extreme value distribution (also known as the Fisher–Tippett distribution). It is also known as the ''log-Weibull distribution'' and the ...
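The link between maxima of exponential-type samples and the Gumbel distribution can be illustrated by simulation. The sketch below takes the maximum of blocks of unit-rate exponential draws, shifts each maximum by the logarithm of the block size, and compares an empirical probability with the standard Gumbel CDF exp(-exp(-x)). That CDF, the block sizes, and the names are assumptions not given in the text above.

```python
import math
import random

rng = random.Random(7)  # fixed seed so the run is repeatable

N_PER_BLOCK = 100   # number of exponential draws per block
N_BLOCKS = 2000     # number of block maxima to collect


def block_maximum():
    """Largest of N_PER_BLOCK independent unit-rate exponential draws."""
    return max(rng.expovariate(1.0) for _ in range(N_PER_BLOCK))


def gumbel_cdf(x):
    """CDF of the standard Gumbel distribution (location 0, scale 1)."""
    return math.exp(-math.exp(-x))


# Centre each maximum by log(block size) so the limit is standard Gumbel.
centered = [block_maximum() - math.log(N_PER_BLOCK) for _ in range(N_BLOCKS)]

threshold = 1.0
empirical = sum(1 for m in centered if m <= threshold) / N_BLOCKS
print(f"empirical P(max <= {threshold}) = {empirical:.3f}")
print(f"Gumbel CDF at {threshold}        = {gumbel_cdf(threshold):.3f}")
```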

Monte Carlo Method

Monte Carlo methods, or Monte Carlo experiments, are a broad class of computational algorithms that rely on repeated random sampling to obtain numerical results. The underlying concept is to use randomness to solve problems that might be deterministic in principle. The name comes from the Monte Carlo Casino in Monaco, where the primary developer of the method, mathematician Stanisław Ulam, was inspired by his uncle's gambling habits. Monte Carlo methods are mainly used in three distinct problem classes: optimization, numerical integration, and generating draws from a probability distribution. They can also be used to model phenomena with significant uncertainty in inputs, such as calculating the risk of a nuclear power plant failure. Monte Carlo methods are often implemented using computer simulations, and they can provide approximate solutions to problems that are otherwise intractable or too complex to analyze mathematically. Monte Carlo methods are widely used in va ...
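A classic small instance of Monte Carlo numerical integration is estimating π: the area of a quarter of the unit disc is π/4, so the fraction of uniformly random points in the unit square that fall inside the quarter disc approximates π/4. The sketch below is illustrative only; the function name, seed, and sample size are assumptions.

```python
import random


def estimate_pi(n_points, seed=0):
    """Estimate pi from the share of random points inside a quarter disc."""
    rng = random.Random(seed)
    inside = 0
    for _ in range(n_points):
        x, y = rng.random(), rng.random()  # uniform point in the unit square
        if x * x + y * y <= 1.0:
            inside += 1
    return 4.0 * inside / n_points


pi_estimate = estimate_pi(100_000)
print(f"Monte Carlo estimate of pi: {pi_estimate:.4f}")
```

The problem is deterministic in principle, yet repeated random sampling gives a usable approximation whose error shrinks roughly with the square root of the number of points.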

Metalog Distribution

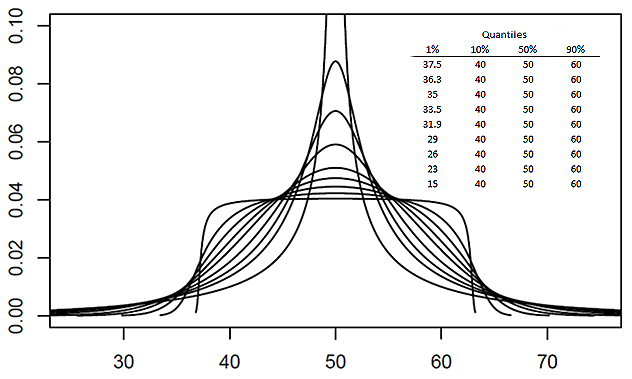

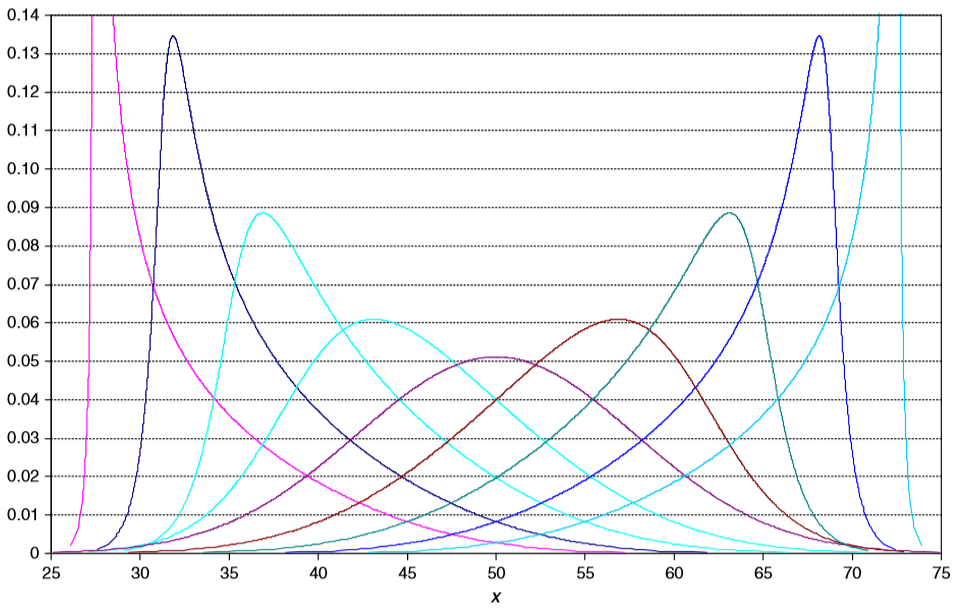

The metalog distribution is a flexible continuous probability distribution designed for ease of use in practice. Together with its transforms, the metalog family of continuous distributions is unique because it embodies ''all'' of the following properties: virtually unlimited shape flexibility; a choice among unbounded, semi-bounded, and bounded distributions; ease of fitting to data with linear least squares; simple, closed-form quantile function (inverse CDF) equations that facilitate simulation; a simple, closed-form PDF; and Bayesian updating in closed form in light of new data. Moreover, like a Taylor series, metalog distributions may have any number of terms, depending on the degree of shape flexibility desired and other application needs. Applications where metalog distributions can be useful typically involve fitting empirical data, simulated data, or expert-elicited ...
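Fitting by a linear solve and simulating through the quantile function can be sketched as follows. The example assumes the commonly cited three-term unbounded metalog quantile function, a1 + a2·ln(y/(1−y)) + a3·(y − 0.5)·ln(y/(1−y)), which is not spelled out in the text above. The three quantile pairs are hypothetical, and with three points and three terms the least-squares fit reduces to solving a 3×3 linear system exactly.

```python
import math
import random


def metalog_basis(y):
    """Basis terms of the assumed three-term metalog at probability y."""
    logit = math.log(y / (1.0 - y))
    return [1.0, logit, (y - 0.5) * logit]


def solve_linear(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(M[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (M[r][n] - tail) / M[r][r]
    return x


def metalog_quantile(coeffs, y):
    """Quantile function: value at cumulative probability y (0 < y < 1)."""
    return sum(a * g for a, g in zip(coeffs, metalog_basis(y)))


# Hypothetical elicited quantiles as (cumulative probability, value) pairs.
data = [(0.1, 17.0), (0.5, 24.0), (0.9, 35.0)]
coeffs = solve_linear([metalog_basis(y) for y, _ in data], [x for _, x in data])

# Inverse transform sampling: push uniform draws through the quantile function.
rng = random.Random(1)
samples = [
    metalog_quantile(coeffs, min(max(rng.random(), 1e-12), 1.0 - 1e-12))
    for _ in range(5)
]
print("coefficients:", [round(a, 4) for a in coeffs])
print("samples:", [round(s, 2) for s in samples])
```

No iterative estimation is involved: the coefficients are a linear function of the data, which is the practical appeal described above. A fitted quantile function should still be checked for monotonicity, since not every coefficient set yields a valid distribution.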

Log-normal Distribution

In probability theory, a log-normal (or lognormal) distribution is a continuous probability distribution of a random variable whose logarithm is normally distributed. Thus, if the random variable $X$ is log-normally distributed, then $Y = \ln(X)$ has a normal distribution. Equivalently, if $Y$ has a normal distribution, then the exponential function of $Y$, $X = \exp(Y)$, has a log-normal distribution. A random variable which is log-normally distributed takes only positive real values. It is a convenient and useful model for measurements in exact and engineering sciences, as well as medicine, economics and other topics (e.g., energies, concentrations, lengths, prices of financial instruments, and other metrics). The distribution is occasionally referred to as the Galton distribution or Galton's distribution, after Francis Galton. The log-normal distribution has also been associated with other names, such as McAlister, Gibrat and Cobb–Douglas. A l ...
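The relationship between the normal and log-normal distributions can be checked by simulation: exponentiating normal draws gives positive values, and taking logarithms recovers the original normal parameters. The parameter values (mean 1.0 and standard deviation 0.5 on the log scale), the seed, and the variable names below are assumptions for illustration.

```python
import math
import random
import statistics

MU, SIGMA = 1.0, 0.5    # parameters of the underlying normal distribution
rng = random.Random(42)  # fixed seed so the run is repeatable

# Normal draws and their exponentials, which are log-normally distributed.
normal_draws = [rng.gauss(MU, SIGMA) for _ in range(20000)]
lognormal_draws = [math.exp(y) for y in normal_draws]

# Taking logarithms maps the log-normal draws back to the normal scale.
recovered = [math.log(x) for x in lognormal_draws]

print(f"smallest log-normal draw: {min(lognormal_draws):.4f}")
print(f"mean of logs:  {statistics.mean(recovered):.3f}")
print(f"stdev of logs: {statistics.stdev(recovered):.3f}")
```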

Kolmogorov–Smirnov Test

In statistics, the Kolmogorov–Smirnov test (also K–S test or KS test) is a nonparametric test of the equality of continuous (or discontinuous, see Section 2.2), one-dimensional probability distributions. It can be used to test whether a sample came from a given reference probability distribution (one-sample K–S test), or to test whether two samples came from the same distribution (two-sample K–S test). Intuitively, it provides a method to qualitatively answer the question "How likely is it that we would see a collection of samples like this if they were drawn from that probability distribution?" or, in the second case, "How likely is it that we would see two sets of samples like this if they were drawn from the same (but unknown) probability distribution?". It is named after Andrey Kolmogorov and Nikolai Smirnov. The Kolmogorov–Smirnov statistic quantifies ...
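A minimal sketch of the one-sample statistic follows, assuming the usual definition as the largest vertical gap between the empirical distribution function of the sample and the reference CDF. It computes only the statistic, not a p-value; the function names and the tiny data set are hypothetical.

```python
def ks_statistic(sample, cdf):
    """Largest gap between the sample's empirical CDF and a reference CDF."""
    xs = sorted(sample)
    n = len(xs)
    d = 0.0
    for i, x in enumerate(xs, start=1):
        f = cdf(x)
        # The empirical CDF jumps from (i - 1)/n to i/n at x, so the gap is
        # checked on both sides of the jump.
        d = max(d, i / n - f, f - (i - 1) / n)
    return d


def uniform_cdf(x):
    """CDF of the uniform distribution on [0, 1]."""
    return min(1.0, max(0.0, x))


data = [0.1, 0.4, 0.7]
d_n = ks_statistic(data, uniform_cdf)
print(f"K-S statistic against Uniform(0, 1): {d_n:.4f}")
```

A small value indicates that the empirical distribution stays close to the reference distribution everywhere; turning the statistic into a test decision additionally requires its null distribution.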