
Helmholtz Machine

The Helmholtz machine (named after Hermann von Helmholtz and his concept of Helmholtz free energy) is a type of artificial neural network that can account for the hidden structure of a set of data by being trained to create a generative model of the original set of data. The hope is that by learning economical representations of the data, the underlying structure of the generative model should reasonably approximate the hidden structure of the data set. A Helmholtz machine contains two networks, a bottom-up "recognition" network that takes the data as input and produces a distribution over hidden variables, and a top-down "generative" network that generates values of the hidden variables and the data itself. At the time of their introduction, Helmholtz machines were one of a handful of learning architectures that used feedback as well as feedforward connections to ensure the quality of learned models. Helmholtz machines are usually trained using an unsupervised learning algorithm, such as the wake-sleep algorithm. T ...

Hermann von Helmholtz

Hermann Ludwig Ferdinand von Helmholtz (31 August 1821 – 8 September 1894; "von" since 1883) was a German physicist and physician who made significant contributions in several scientific fields, particularly hydrodynamic stability. The Helmholtz Association, the largest German association of research institutions, was named in his honour. In the fields of physiology and psychology, Helmholtz is known for his mathematics concerning the eye, theories of vision, ideas on the visual perception of space, colour vision research, the sensation of tone, perceptions of sound, and empiricism in the physiology of perception. In physics, he is known for his theories on the conservation of energy and on the electrical double layer, work in electrodynamics, chemical thermodynamics, and on a mechanical foundation of thermodynamics. Although credit is shared with Julius von Mayer, James Joule, and Daniel Bernoulli, among others, for the energy conservation principles that e ...

Helmholtz Free Energy

In thermodynamics, the Helmholtz free energy (or Helmholtz energy) is a thermodynamic potential that measures the useful work obtainable from a closed thermodynamic system at a constant temperature (isothermal). The change in the Helmholtz energy during a process is equal to the maximum amount of work that the system can perform in a thermodynamic process in which temperature is held constant. At constant temperature, the Helmholtz free energy is minimized at equilibrium. In contrast, the Gibbs free energy or free enthalpy is most commonly used as a measure of thermodynamic potential (especially in chemistry) when it is convenient for applications that occur at constant pressure. For example, in explosives research Helmholtz free energy is often used, since explosive reactions by their nature induce pressure changes. It is also frequently used to define fundamental equations of state of pure substances. The concept of free energy was developed by Hermann von Helmholtz, ...
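The statements above can be written compactly. The snippet does not define any symbols, so the notation here is the conventional textbook one and should be read as an assumption: $F$ is the Helmholtz free energy, $U$ the internal energy, $T$ the absolute temperature, $S$ the entropy, $p$ the pressure, $V$ the volume, and $W$ the work done by the system.

$$
F = U - TS, \qquad W \le -\Delta F \quad \text{(constant } T\text{)}
$$

$$
G = U + pV - TS = F + pV
$$

The first line restates that the decrease in $F$ bounds the work obtainable at constant temperature; the second shows how the Gibbs free energy $G$ differs from $F$ only by the $pV$ term, which is why $G$ is the convenient potential at constant pressure.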

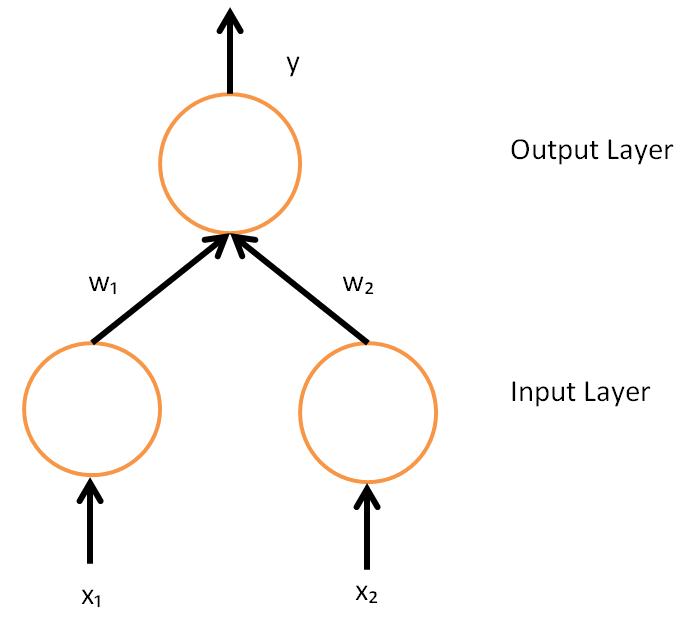

Artificial Neural Network

In machine learning, a neural network (also artificial neural network or neural net, abbreviated ANN or NN) is a computational model inspired by the structure and functions of biological neural networks. A neural network consists of connected units or nodes called artificial neurons, which loosely model the neurons in the brain. Artificial neuron models that mimic biological neurons more closely have also been recently investigated and shown to significantly improve performance. These are connected by edges, which model the synapses in the brain. Each artificial neuron receives signals from connected neurons, then processes them and sends a signal to other connected neurons. The "signal" is a real number, and the output of each neuron is computed by some non-linear function of the sum of its inputs, called the activation function. The strength of the signal at each connection is determined by a weight, which adjusts during the learning process. Typically, ne ...
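As a rough illustration of the single-neuron computation described above, the following sketch sums weighted inputs and applies a non-linear activation. The logistic function, the `bias` term, and the name `neuron_output` are assumptions chosen for the example; the text does not prescribe a particular activation.

```python
import math


def neuron_output(inputs, weights, bias=0.0):
    """Return the activation of one artificial neuron.

    Each input signal is scaled by the weight of its connection, the
    results are summed, and a logistic activation (an assumption made
    for this sketch) maps the sum to a value between 0 and 1.
    """
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1.0 / (1.0 + math.exp(-total))


# Two incoming signals whose weighted contributions cancel out,
# so the weighted sum is zero before the activation is applied.
out = neuron_output([1.0, 0.5], [0.4, -0.8])
print(out)
```

Learning would then consist of adjusting `weights` (and `bias`) so that the outputs move closer to the desired ones.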

Generative Model

In statistical classification, two main approaches are called the generative approach and the discriminative approach. These compute classifiers by different approaches, differing in the degree of statistical modelling. Terminology is inconsistent, but three major types can be distinguished:

1. A generative model is a statistical model of the joint probability distribution P(X, Y) on a given observable variable X and target variable Y: "Generative classifiers learn a model of the joint probability, p(x, y), of the inputs x and the label y, and make their predictions by using Bayes rules to calculate p(y | x), and then picking the most likely label y." A generative model can be used to "generate" random instances (outcomes) of an observation x.
2. A discriminative model is a model of the conditional probability P(Y | X = x) of the target Y, given an observation x. It can be used to "discriminate" the value of the target variable Y, given an ...
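The generative route to classification described above can be sketched with a tiny discrete joint distribution. The table `joint`, its weather-themed values, and the function names are all made up for illustration; only the procedure (model p(x, y), apply Bayes' rule, pick the most likely label, or sample from the joint) comes from the text.

```python
import random

# Hypothetical joint distribution p(x, y) over an observation x and a label y.
joint = {
    ("cloudy", "rain"): 0.30,
    ("cloudy", "dry"): 0.10,
    ("clear", "rain"): 0.05,
    ("clear", "dry"): 0.55,
}


def posterior(joint, x):
    """Bayes' rule: p(y | x) = p(x, y) / sum over y' of p(x, y')."""
    evidence = sum(p for (xi, _), p in joint.items() if xi == x)
    return {y: p / evidence for (xi, y), p in joint.items() if xi == x}


def classify(joint, x):
    """Pick the most likely label under p(y | x)."""
    post = posterior(joint, x)
    return max(post, key=post.get)


def generate(joint, rng):
    """Draw a random (x, y) instance from the joint distribution."""
    pairs = list(joint)
    return rng.choices(pairs, weights=[joint[p] for p in pairs], k=1)[0]


post_cloudy = posterior(joint, "cloudy")
label = classify(joint, "cloudy")
sample = generate(joint, random.Random(0))
print(post_cloudy, label, sample)
```

A discriminative model would instead store or learn only the conditional table returned by `posterior`, and so could classify but not generate new (x, y) pairs.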

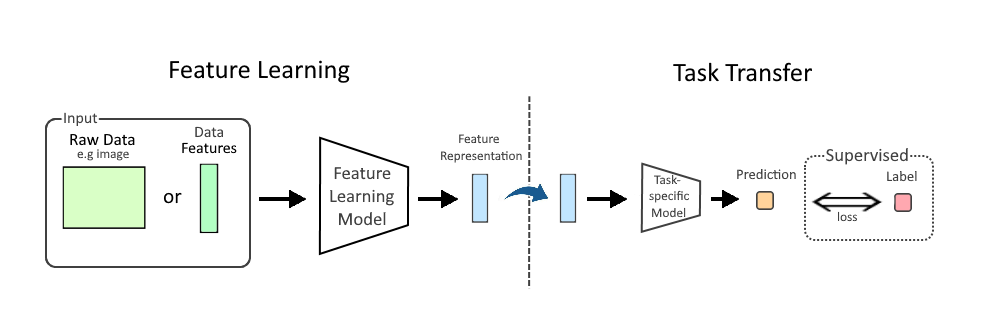

Representation Learning

In machine learning (ML), feature learning or representation learning is a set of techniques that allow a system to automatically discover the representations needed for feature detection or classification from raw data. This replaces manual feature engineering and allows a machine to both learn the features and use them to perform a specific task. Feature learning is motivated by the fact that ML tasks such as classification often require input that is mathematically and computationally convenient to process. However, real-world data, such as image, video, and sensor data, have not yielded to attempts to algorithmically define specific features. An alternative is to discover such features or representations through examination, without relying on explicit algorithms. Feature learning can be either supervised, unsupervised, or self-supervised:

* In supervised feature learning, features are learned using labeled input data. Labeled data includes input-label pairs where the inp ...

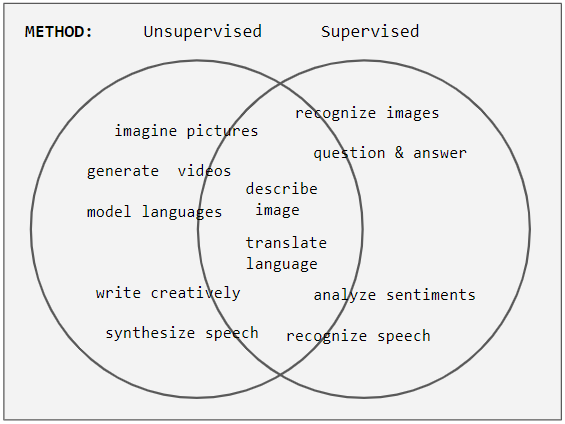

Unsupervised Learning

Unsupervised learning is a framework in machine learning where, in contrast to supervised learning, algorithms learn patterns exclusively from unlabeled data. Other frameworks in the spectrum of supervisions include weak- or semi-supervision, where a small portion of the data is tagged, and self-supervision. Some researchers consider self-supervised learning a form of unsupervised learning. Conceptually, unsupervised learning divides into the aspects of data, training, algorithm, and downstream applications. Typically, the dataset is harvested cheaply "in the wild", such as a massive text corpus obtained by web crawling, with only minor filtering (such as Common Crawl). This compares favorably to supervised learning, where the dataset (such as the ImageNet1000) is typically constructed manually, which is much more expensive. There were algorithms designed specifically for unsupervised learning, such as clustering algorithms like k-means, dimensionality reduction techniques l ...

Wake-sleep Algorithm

The wake-sleep algorithm is an unsupervised learning algorithm for deep generative models, especially Helmholtz machines. The algorithm is similar to the expectation-maximization algorithm, and optimizes the model likelihood for observed data. The name of the algorithm derives from its use of two learning phases, the “wake” phase and the “sleep” phase, which are performed alternately. It can be conceived as a model for learning in the brain, but is also being applied for machine learning.

Description: The goal of the wake-sleep algorithm is to find a hierarchical representation of observed data. In a graphical representation of the algorithm, data is applied to the algorithm at the bottom, while higher layers form gradually more abstract representations. Between each pair of layers are two sets of weights: Recognition weights, which define how representations are Bayesian infere ...
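The alternation of the two phases can be sketched for a Helmholtz machine with a single hidden layer of binary units. The excerpt above is cut off before it gives any update rules, so the local delta-rule updates below follow the usual textbook presentation of wake-sleep and are an assumption, as are the class name `HelmholtzMachine`, the layer sizes, the learning rate, and the toy data.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def sample_bits(probs, rng):
    """Sample independent binary units with the given 'on' probabilities."""
    return [1 if rng.random() < p else 0 for p in probs]


class HelmholtzMachine:
    """One hidden layer; separate recognition and generative weights."""

    def __init__(self, n_visible, n_hidden):
        self.gen_bias_h = [0.0] * n_hidden                         # prior over hidden units
        self.gen_w = [[0.0] * n_hidden for _ in range(n_visible)]  # hidden -> visible
        self.gen_bias_v = [0.0] * n_visible
        self.rec_w = [[0.0] * n_visible for _ in range(n_hidden)]  # visible -> hidden
        self.rec_bias = [0.0] * n_hidden

    def recognize(self, v):
        """Bottom-up pass: probability that each hidden unit is on, given v."""
        return [sigmoid(b + sum(w * x for w, x in zip(row, v)))
                for row, b in zip(self.rec_w, self.rec_bias)]

    def generate_visible(self, h):
        """Top-down pass: probability that each visible unit is on, given h."""
        return [sigmoid(b + sum(w * x for w, x in zip(row, h)))
                for row, b in zip(self.gen_w, self.gen_bias_v)]

    def wake(self, v, lr, rng):
        """Wake phase: recognize real data, then adjust the generative weights."""
        h = sample_bits(self.recognize(v), rng)
        prior = [sigmoid(b) for b in self.gen_bias_h]
        pred_v = self.generate_visible(h)
        for j in range(len(h)):
            self.gen_bias_h[j] += lr * (h[j] - prior[j])
        for i in range(len(v)):
            err = v[i] - pred_v[i]
            self.gen_bias_v[i] += lr * err
            for j in range(len(h)):
                self.gen_w[i][j] += lr * err * h[j]

    def sleep(self, lr, rng):
        """Sleep phase: generate a fantasy, then adjust the recognition weights."""
        h = sample_bits([sigmoid(b) for b in self.gen_bias_h], rng)
        v = sample_bits(self.generate_visible(h), rng)
        pred_h = self.recognize(v)
        for j in range(len(h)):
            err = h[j] - pred_h[j]
            self.rec_bias[j] += lr * err
            for i in range(len(v)):
                self.rec_w[j][i] += lr * err * v[i]


rng = random.Random(0)
data = [[1, 1, 0, 0], [0, 0, 1, 1]]  # toy binary patterns, made up for the sketch
model = HelmholtzMachine(n_visible=4, n_hidden=2)
for _ in range(2000):
    model.wake(rng.choice(data), lr=0.1, rng=rng)
    model.sleep(lr=0.1, rng=rng)
code_probs = model.recognize(data[0])
```

Each phase trains one network using samples produced by the other, so no update needs information that is not locally available at the connection being changed.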

Variational Autoencoder

In machine learning, a variational autoencoder (VAE) is an artificial neural network architecture introduced by Diederik P. Kingma and Max Welling. It is part of the families of probabilistic graphical models and variational Bayesian methods. In addition to being seen as an autoencoder neural network architecture, variational autoencoders can also be studied within the mathematical formulation of variational Bayesian methods, connecting a neural encoder network to its decoder through a probabilistic latent space (for example, as a multivariate Gaussian distribution) that corresponds to the parameters of a variational distribution. Thus, the encoder maps each point (such as an image) from a large complex dataset into a distribution within the latent space, rather than to a single point in that space. The decoder has the opposite function, which is to map from the latent space to the input space, again according to a distribution (although in practice, noise is rarely a ...

Backpropagation

In machine learning, backpropagation is a gradient computation method commonly used for training a neural network to compute its parameter updates. It is an efficient application of the chain rule to neural networks. Backpropagation computes the gradient of a loss function with respect to the weights of the network for a single input–output example, and does so efficiently, computing the gradient one layer at a time, iterating backward from the last layer to avoid redundant calculations of intermediate terms in the chain rule; this can be derived through dynamic programming. Strictly speaking, the term backpropagation refers only to an algorithm for efficiently computing the gradient, not how the gradient is used; but the term is often used loosely to refer to the entire learning algorithm – including how the gradient is used, such as by stochastic gradient descent, or as an intermediate step in a more complicated optimizer, such as Adaptive Moment Estimation. The ...
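To make the backward, layer-by-layer use of the chain rule concrete, here is a minimal sketch for a network with one tanh hidden unit and one linear output unit, for a single input–output example. The network shape, the squared-error loss, and the parameter values are assumptions chosen for the example. A central finite-difference estimate is included only as a sanity check on the analytic gradient.

```python
import math


def forward(params, x):
    """Forward pass: input -> tanh hidden unit -> linear output."""
    w1, b1, w2, b2 = params
    z = w1 * x + b1
    h = math.tanh(z)
    y = w2 * h + b2
    return z, h, y


def loss(params, x, target):
    """Squared-error loss for one example."""
    _, _, y = forward(params, x)
    return 0.5 * (y - target) ** 2


def backprop(params, x, target):
    """Gradient of the loss, computed from the last layer backward."""
    w1, b1, w2, b2 = params
    z, h, y = forward(params, x)
    dy = y - target             # dL/dy at the output
    dw2 = dy * h                # output-layer weight
    db2 = dy                    # output-layer bias
    dh = dy * w2                # propagate to the hidden activation
    dz = dh * (1.0 - h * h)     # through tanh: d tanh(z)/dz = 1 - tanh(z)^2
    dw1 = dz * x                # hidden-layer weight
    db1 = dz                    # hidden-layer bias
    return [dw1, db1, dw2, db2]


def numeric_grad(params, x, target, eps=1e-6):
    """Central finite differences, used only to check backprop."""
    grads = []
    for k in range(len(params)):
        up = list(params)
        up[k] += eps
        down = list(params)
        down[k] -= eps
        grads.append((loss(up, x, target) - loss(down, x, target)) / (2 * eps))
    return grads


params = [0.5, -0.3, 0.8, 0.1]
analytic = backprop(params, x=1.5, target=0.2)
numeric = numeric_grad(params, x=1.5, target=0.2)
```

Note that `dy` and `dz` are each computed once and reused for two parameters. That reuse of intermediate terms is what makes the backward pass cheaper than differentiating each weight separately.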

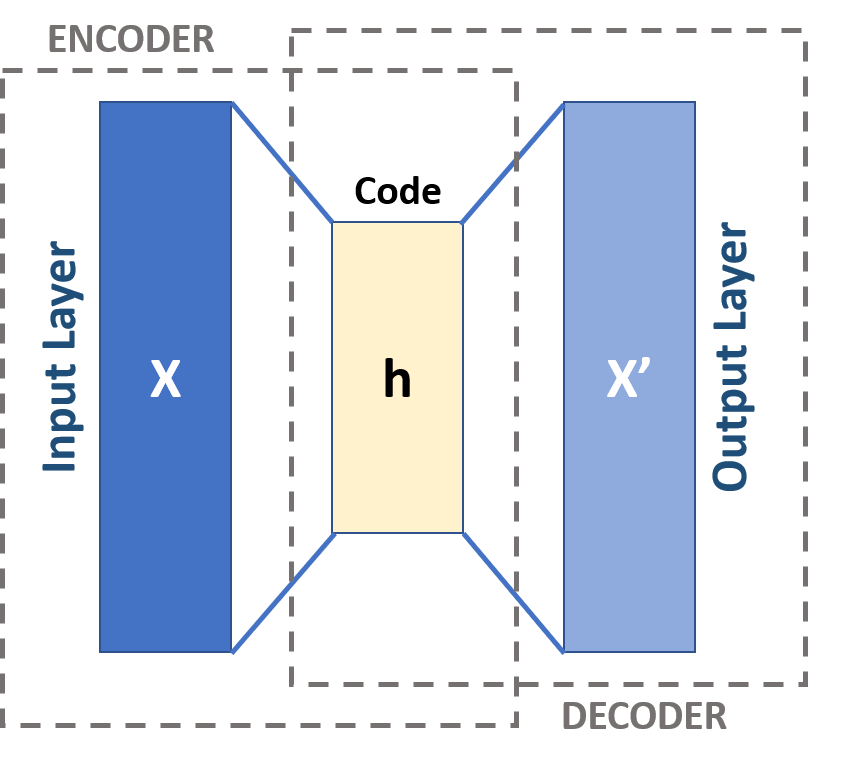

Autoencoder

An autoencoder is a type of artificial neural network used to learn efficient codings of unlabeled data (unsupervised learning). An autoencoder learns two functions: an encoding function that transforms the input data, and a decoding function that recreates the input data from the encoded representation. The autoencoder learns an efficient representation (encoding) for a set of data, typically for dimensionality reduction, to generate lower-dimensional embeddings for subsequent use by other machine learning algorithms. Variants exist which aim to make the learned representations assume useful properties. Examples are regularized autoencoders (sparse, denoising and contractive autoencoders), which are effective in learning representations for subsequent classification tasks, and variational autoencoders, which can be used as generative models. Autoencoders are applied to many problems, including facial recognition, feature detection, anomaly detection, and l ...

Boltzmann Machine

A Boltzmann machine (also called Sherrington–Kirkpatrick model with external field or stochastic Ising model), named after Ludwig Boltzmann, is a spin-glass model with an external field, i.e., a Sherrington–Kirkpatrick model, that is a stochastic Ising model. It is a statistical physics technique applied in the context of cognitive science. It is also classified as a Markov random field. Boltzmann machines are theoretically intriguing because of the locality and Hebbian nature of their training algorithm (being trained by Hebb's rule), and because of their parallelism and the resemblance of their dynamics to simple physical processes. Boltzmann machines with unconstrained connectivity have not been proven useful for practical problems in machine learning or inference, but if the connectivity is properly constrained, the learning can be made efficient enough to be useful for practical problems. Th ...

Hopfield Network

A Hopfield network (or associative memory) is a form of recurrent neural network, or a spin glass system, that can serve as a content-addressable memory. The Hopfield network, named for John Hopfield, consists of a single layer of neurons, where each neuron is connected to every other neuron except itself. These connections are bidirectional and symmetric, meaning the weight of the connection from neuron i to neuron j is the same as the weight from neuron j to neuron i. Patterns are associatively recalled by fixing certain inputs and dynamically evolving the network to minimize an energy function, towards local energy minimum states that correspond to stored patterns. Patterns are associatively learned (or "stored") by a Hebbian learning algorithm. One of the key features of Hopfield networks is their ability to recover complete patterns from partial or noisy inputs, making them robust in the face of incomplete or corrupted data. Their connection to statistical mech ...
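The storage and recall behaviour described above can be sketched in a few lines. This is a minimal example, assuming bipolar (+1/-1) units, a Hebbian outer-product rule scaled by the number of units, and asynchronous updates in a fixed order. The two stored patterns are made up for the illustration and chosen to be orthogonal.

```python
def train_hebbian(patterns):
    """Build symmetric weights with no self-connections from bipolar patterns."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    w[i][j] += p[i] * p[j] / n
    return w


def energy(w, s):
    """Energy of state s; asynchronous updates never increase it."""
    n = len(s)
    return -0.5 * sum(w[i][j] * s[i] * s[j] for i in range(n) for j in range(n))


def recall(w, state, sweeps=5):
    """Update one neuron at a time toward the sign of its local field."""
    s = list(state)
    for _ in range(sweeps):
        for i in range(len(s)):
            field = sum(w[i][j] * s[j] for j in range(len(s)))
            s[i] = 1 if field >= 0 else -1
    return s


stored = [
    [1, 1, 1, 1, -1, -1, -1, -1],
    [1, -1, 1, -1, 1, -1, 1, -1],
]
weights = train_hebbian(stored)

noisy = list(stored[0])
noisy[0] = -noisy[0]          # corrupt one unit of the first pattern
recovered = recall(weights, noisy)
print(recovered == stored[0], energy(weights, noisy), energy(weights, recovered))
```

The corrupted input settles into the nearest stored pattern, and the energy of the final state is lower than that of the noisy starting state.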