
GPT-3.5

Generative Pre-trained Transformer 3 (GPT-3) is an autoregressive language model that uses deep learning to produce human-like text. Given an initial text as a prompt, it produces text that continues the prompt. The architecture is a standard transformer network (with a few engineering tweaks) of unprecedented size: a 2048-token-long context and 175 billion parameters (requiring 800 GB of storage). The training method is "generative pretraining", meaning that it is trained to predict what the next token is. The model demonstrated strong few-shot learning on many text-based tasks. It is the third-generation language prediction model in the GPT-n series (and the successor to GPT-2) created by OpenAI, a San Francisco-based artificial intelligence research laboratory. GPT-3, which was introduced in May 2020 and was in beta testing as of July 2020, is part of a trend in natural language processing (NLP) systems of pre-trained language representations. The quality of the ...
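
As a rough illustration of what "autoregressive" means here, the sketch below runs the generation loop: the model returns a distribution over the next token, the most probable token is appended, and the extended text is fed back in as the new context. It assumes a hypothetical toy bigram table (`TOY_BIGRAMS`) in place of the 175-billion-parameter transformer and uses greedy decoding rather than sampling; `generate`, `context_window` and the `<eos>` marker are illustrative names, not part of any OpenAI API.

```python
from typing import Callable, Dict, List

# Hypothetical stand-in for a trained network. GPT-3 would compute these
# probabilities with a transformer over the whole context; this toy table
# only looks at the most recent token.
TOY_BIGRAMS: Dict[str, Dict[str, float]] = {
    "the": {"cat": 0.6, "dog": 0.4},
    "cat": {"sat": 0.7, "ran": 0.3},
    "sat": {"down": 0.9, "<eos>": 0.1},
    "down": {"<eos>": 1.0},
}


def toy_next_token_probs(context: List[str]) -> Dict[str, float]:
    """Return a next-token distribution given the visible context."""
    return TOY_BIGRAMS.get(context[-1], {"<eos>": 1.0})


def generate(prompt: List[str],
             next_token_probs: Callable[[List[str]], Dict[str, float]],
             max_new_tokens: int = 10,
             context_window: int = 2048) -> List[str]:
    """Greedy autoregressive decoding: each predicted token is appended and fed back in."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        context = tokens[-context_window:]       # the model sees at most the last N tokens
        probs = next_token_probs(context)
        next_tok = max(probs, key=probs.get)     # greedy choice of the most probable token
        if next_tok == "<eos>":                  # hypothetical end-of-sequence marker
            break
        tokens.append(next_tok)
    return tokens


print(" ".join(generate(["the"], toy_next_token_probs)))  # the cat sat down
```

Swapping `toy_next_token_probs` for a real model changes only how the distribution is computed; the append-and-repeat loop stays the same.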

Language Model

A language model is a probability distribution over sequences of words. Given any sequence of words of length $m$, a language model assigns a probability $P(w_1,\ldots,w_m)$ to the whole sequence. Language models generate probabilities by training on text corpora in one or many languages. Given that languages can be used to express an infinite variety of valid sentences (the property of digital infinity), language modeling faces the problem of assigning non-zero probabilities to linguistically valid sequences that may never be encountered in the training data. Several modelling approaches have been designed to surmount this problem, such as applying the Markov assumption or using neural architectures such as recurrent neural networks or transformers. Language models are useful for a variety of problems in computational linguistics: from initial applications in speech recognition, where they ensure that nonsensical (i.e. low-probability) word sequences are not predicted, to wider use in machine tran ...
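
A minimal sketch of one approach mentioned above, assuming a bigram (first-order Markov) model: with sentence-boundary markers `<s>` and `</s>`, the sequence probability is factored as $P(w_1,\ldots,w_m) \approx \prod_i P(w_i \mid w_{i-1})$, and add-one (Laplace) smoothing is used as one simple way to keep unseen sequences at non-zero probability. The helper names and the two-sentence corpus are hypothetical.

```python
from collections import Counter
from typing import List, Set, Tuple


def train_bigram_lm(corpus: List[List[str]]) -> Tuple[Counter, Counter, Set[str]]:
    """Count bigrams and their histories; <s> and </s> mark sentence boundaries."""
    histories: Counter = Counter()
    bigrams: Counter = Counter()
    vocab: Set[str] = set()
    for sent in corpus:
        toks = ["<s>"] + sent + ["</s>"]
        vocab.update(toks[1:])                    # every token that can follow a history
        histories.update(toks[:-1])               # every token that is followed by another
        bigrams.update(zip(toks[:-1], toks[1:]))
    return histories, bigrams, vocab


def sequence_prob(sent: List[str], histories: Counter, bigrams: Counter, vocab: Set[str]) -> float:
    """P(w_1..w_m) under the Markov assumption, with add-one (Laplace) smoothing."""
    toks = ["<s>"] + sent + ["</s>"]
    v = len(vocab)
    p = 1.0
    for prev, cur in zip(toks[:-1], toks[1:]):
        p *= (bigrams[(prev, cur)] + 1) / (histories[prev] + v)
    return p


corpus = [["the", "cat", "sat"], ["the", "dog", "sat"]]
model = train_bigram_lm(corpus)
seen = sequence_prob(["the", "cat", "sat"], *model)
unseen = sequence_prob(["dog", "the", "sat"], *model)
print(seen, unseen)  # the unseen ordering is less likely, but not impossible
```

Neural models replace the count table with a learned function of a longer context, but the chain-rule factorization is the same.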

OpenAI

OpenAI is an artificial intelligence (AI) research laboratory consisting of the for-profit corporation OpenAI LP and its parent company, the non-profit OpenAI Inc. The company conducts research in the field of AI with the stated goal of promoting and developing friendly AI in a way that benefits humanity as a whole. The organization was founded in San Francisco in late 2015 by Sam Altman, Elon Musk, and others, who collectively pledged US$1 billion. Musk resigned from the board in February 2018 but remained a donor. In 2019, OpenAI LP received a US$1 billion investment from Microsoft. OpenAI is headquartered at the Pioneer Building in Mission District, San Francisco.

In December 2015, Sam Altman, Elon Musk, Greg Brockman, Reid Hoffman, Jessica Livingston, Peter Thiel, Amazon Web Services (AWS), Infosys, and YC Research announced the formation of OpenAI and pledged over US$1 billion to the venture. The organization stated it would "freely collabora ...

Machine Learning

Machine learning (ML) is a field of inquiry devoted to understanding and building methods that 'learn', that is, methods that leverage data to improve performance on some set of tasks. It is seen as a part of artificial intelligence. Machine learning algorithms build a model based on sample data, known as training data, in order to make predictions or decisions without being explicitly programmed to do so. Machine learning algorithms are used in a wide variety of applications, such as in medicine, email filtering, speech recognition, agriculture, and computer vision, where it is difficult or infeasible to develop conventional algorithms to perform the needed tasks (Hu, J.; Niu, H.; Carrasco, J.; Lennox, B.; Arvin, F., "Voronoi-Based Multi-Robot Autonomous Exploration in Unknown Environments via Deep Reinforcement Learning", IEEE Transactions on Vehicular Technology, 2020). A subset of machine learning is closely related to computational statistics, which focuses on making pred ...
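
To make "build a model based on sample data" concrete, here is a small sketch that fits a straight line $y \approx wx + b$ to training data by gradient descent on the mean squared error, then uses the fitted model to predict an unseen input. Linear regression is only one of many possible models; the learning rate, epoch count and data are assumptions chosen for this toy example.

```python
from typing import List, Tuple


def fit_line(xs: List[float], ys: List[float],
             lr: float = 0.05, epochs: int = 2000) -> Tuple[float, float]:
    """Fit y ≈ w*x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        # Partial derivatives of (1/n) * sum(error^2) with respect to w and b.
        grad_w = 2 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2 * sum(errors) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Training data sampled from y = 2x + 1 (no noise, for clarity).
train_x = [0.0, 1.0, 2.0, 3.0, 4.0]
train_y = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = fit_line(train_x, train_y)
print(f"prediction for x=10: {w * 10 + b:.3f}")  # close to 21
```

No rule "multiply by 2 and add 1" is programmed in; the parameters `w` and `b` are recovered from the examples alone.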

Byte Pair Encoding

Byte pair encoding, or digram coding, is a simple form of data compression in which the most common pair of consecutive bytes of data is replaced with a byte that does not occur within that data. A table of the replacements is required to rebuild the original data. The algorithm was first described publicly by Philip Gage in a February 1994 article "A New Algorithm for Data Compression" in the C Users Journal. A variant of the technique has been shown to be useful in several natural language processing (NLP) applications, such as Google's SentencePiece and OpenAI's GPT-3. Here, the goal is not data compression but encoding text in a given language as a sequence of 'tokens', using a fixed vocabulary of different tokens. Typically, mos ...
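
The compression scheme described above can be sketched as follows, under stated assumptions: ties between equally frequent pairs are broken by first occurrence, only pairs occurring at least twice are replaced, and the smallest unused byte value serves as the replacement. This is an illustrative reconstruction, not Gage's original C code, and it does not show the NLP tokenizer variant; the function names are hypothetical.

```python
from collections import Counter
from typing import List, Tuple

Table = List[Tuple[int, Tuple[int, int]]]  # (replacement byte, original pair)


def bpe_compress(data: bytes) -> Tuple[bytes, Table]:
    """Repeatedly replace the most common adjacent byte pair with an unused byte."""
    seq = list(data)
    table: Table = []
    while len(seq) >= 2:
        pair, count = Counter(zip(seq, seq[1:])).most_common(1)[0]
        if count < 2:
            break                          # assumption: a pair seen once is not worth a table entry
        used = set(seq) | {code for code, _ in table}
        free = next((v for v in range(256) if v not in used), None)
        if free is None:
            break                          # no byte value left to use as a replacement
        out, i = [], 0
        while i < len(seq):                # replace non-overlapping occurrences, left to right
            if i + 1 < len(seq) and (seq[i], seq[i + 1]) == pair:
                out.append(free)
                i += 2
            else:
                out.append(seq[i])
                i += 1
        seq = out
        table.append((free, pair))
    return bytes(seq), table


def bpe_decompress(data: bytes, table: Table) -> bytes:
    """Undo the replacements in reverse order using the table."""
    seq = list(data)
    for code, (a, b) in reversed(table):
        expanded: List[int] = []
        for x in seq:
            expanded.extend((a, b) if x == code else (x,))
        seq = expanded
    return bytes(seq)


original = b"aaabdaaabac"
packed, table = bpe_compress(original)
print(len(original), "->", len(packed), "bytes plus a", len(table), "entry table")
```

On `aaabdaaabac`, three rounds of replacement shrink the 11 bytes to 5, and `bpe_decompress` restores the input by expanding the most recent replacement first.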

Common Crawl

Common Crawl is a nonprofit 501(c)(3) organization that crawls the web and freely provides its archives and datasets to the public. Common Crawl's web archive consists of petabytes of data collected since 2011. It generally completes a crawl every month. Common Crawl was founded by Gil Elbaz. Advisors to the nonprofit include Peter Norvig and Joi Ito. The organization's crawlers respect nofollow and robots.txt policies. Open-source code for processing Common Crawl's data set is publicly available. The Common Crawl dataset includes copyrighted work and is distributed from the US under fair use claims. Researchers in other countries have made use of techniques such as shuffling sentences or referencing the Common Crawl dataset to work around copyright law in other legal jurisdictions.

Amazon Web Services began hosting Common Crawl's archive through its Public Data Sets program in 2012. The organization began releasing metadata files and the text output of the craw ...
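
A crawler that honours robots.txt checks each URL against the site's rules before fetching it. The sketch below does this with Python's standard `urllib.robotparser` module on an inline robots.txt. The `CCBot` user-agent string is assumed to be Common Crawl's crawler name; the rules and URLs are hypothetical, and nofollow handling (which applies to links inside fetched pages) is not shown.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: CCBot may crawl everything except /private/,
# and every other crawler is asked to stay out entirely.
ROBOTS_TXT = """\
User-agent: CCBot
Disallow: /private/

User-agent: *
Disallow: /
"""


def may_fetch(url: str, user_agent: str = "CCBot", robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if the robots.txt rules allow user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())   # parse rules from text instead of fetching them
    return parser.can_fetch(user_agent, url)


for url in ("https://example.com/public/page.html", "https://example.com/private/page.html"):
    print(url, may_fetch(url))
```

A real crawler would call `set_url(...)` and `read()` to download each site's robots.txt instead of parsing an inline string.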

Parameter

A parameter, generally, is any characteristic that can help in defining or classifying a particular system (meaning an event, project, object, situation, etc.). That is, a parameter is an element of a system that is useful, or critical, when identifying the system, or when evaluating its performance, status, condition, etc. The word "parameter" has more specific meanings within various disciplines, including mathematics, computer programming, engineering, statistics, logic, linguistics, and electronic musical composition. In addition to its technical uses, there are also extended uses, especially in non-scientific contexts, where it is used to mean defining characteristics or boundaries, as in the phrases 'test parameters' or 'game play parameters'.

When a system is modeled by equations, the values that describe the system are called parameters. For example, in mechanics, the masses, the dimensions and shapes (for solid bodies), the densities and the viscosit ...

ArXiv

arXiv (pronounced "archive"; the X represents the Greek letter chi ⟨χ⟩) is an open-access repository of electronic preprints and postprints (known as e-prints) approved for posting after moderation, but not peer review. It consists of scientific papers in the fields of mathematics, physics, astronomy, electrical engineering, computer science, quantitative biology, statistics, mathematical finance and economics, which can be accessed online. In many fields of mathematics and physics, almost all scientific papers are self-archived on the arXiv repository before publication in a peer-reviewed journal. Some publishers also grant permission for authors to archive the peer-reviewed postprint. Begun on August 14, 1991, arXiv.org passed the half-million-article milestone on October 3, 2008, and had hit a million by the end of 2014. As of April 2021, the submission rate is about 16,000 articles per month.

arXiv was made possible by the compact TeX file ...

Question Answering

Question answering (QA) is a computer science discipline within the fields of information retrieval and natural language processing (NLP), which is concerned with building systems that automatically answer questions posed by humans in a natural language.

A question answering implementation, usually a computer program, may construct its answers by querying a structured database of knowledge or information, usually a knowledge base. More commonly, question answering systems can pull answers from an unstructured collection of natural language documents. Some examples of natural language document collections used for question answering systems include:

* a local collection of reference texts
* internal organization documents and web pages
* compiled newswire reports
* a set of Wikipedia pages
* a subset of World Wide Web pages

Question answering research attempts to deal with a wide range of question types including: fact, list, definition, Ho ...
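
A minimal sketch of answering from an unstructured document collection, assuming a deliberately simple retrieval heuristic: split the documents into sentences and return the sentence that shares the most non-stopword terms with the question. Real QA systems use far stronger retrieval and answer extraction; the stopword list, the `answer` function and the sample documents (built from facts stated elsewhere on this page) are illustrative.

```python
import re
from typing import List, Set

# Hypothetical stopword list; real systems use larger, curated lists.
STOPWORDS = {"a", "an", "and", "by", "did", "in", "is", "of", "the", "to",
             "was", "what", "when", "where", "who"}


def content_words(text: str) -> Set[str]:
    """Lower-case word tokens with stopwords removed."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def answer(question: str, documents: List[str]) -> str:
    """Return the sentence sharing the most content words with the question."""
    q = content_words(question)
    sentences = [s.strip() for doc in documents
                 for s in re.split(r"(?<=[.!?])\s+", doc) if s.strip()]
    return max(sentences, key=lambda s: len(q & content_words(s)))


docs = [
    "Common Crawl was founded by Gil Elbaz. Its archive holds petabytes of data.",
    "OpenAI is headquartered at the Pioneer Building in Mission District, San Francisco.",
    "arXiv was begun on August 14, 1991.",
]
print(answer("Who founded Common Crawl?", docs))
```

This returns a whole supporting sentence rather than a precise answer span such as "Gil Elbaz"; extracting the span would be a further step.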

Automatic Summarization

Automatic summarization is the process of shortening a set of data computationally, to create a subset (a summary) that represents the most important or relevant information within the original content. Artificial intelligence algorithms are commonly developed and employed to achieve this, specialized for different types of data. Text summarization is usually implemented by natural language processing methods, designed to locate the most informative sentences in a given document. On the other hand, visual content can be summarized using computer vision algorithms. Image summarization is the subject of ongoing research; existing approaches typically attempt to display the most representative images from a given image collection, or generate a video that only includes the most important content from the entire collection. Video summarization algorithms identify and extract from the original video content the most important frames (key-frames), and/or the most important video segm ...
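
One simple way to "locate the most informative sentences" is frequency-based extractive summarization: score each sentence by the average document-wide frequency of its non-stopword words and keep the top k, in their original order. The sketch below assumes this heuristic; it is one classic baseline, not the method of any particular system, and the stopword list is a hypothetical placeholder.

```python
import re
from collections import Counter
from typing import List

# Hypothetical stopword list for the example.
STOPWORDS = {"a", "an", "and", "are", "in", "is", "it", "of", "that", "the", "to"}


def words(text: str) -> List[str]:
    """Lower-case word tokens with stopwords removed."""
    return [w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS]


def summarize(text: str, k: int = 1) -> List[str]:
    """Extractive summary: keep the k sentences whose words are most frequent overall."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    freq = Counter(words(text))

    def score(sentence: str) -> float:
        toks = words(sentence)
        return sum(freq[w] for w in toks) / len(toks) if toks else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    return [sentences[i] for i in sorted(ranked[:k])]   # restore original sentence order


text = "Summarization shortens text. Summarization keeps important text. Cats are cute."
print(summarize(text, k=1))
```

Averaging rather than summing the word scores keeps long sentences from winning merely by being long.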

Unsupervised Learning

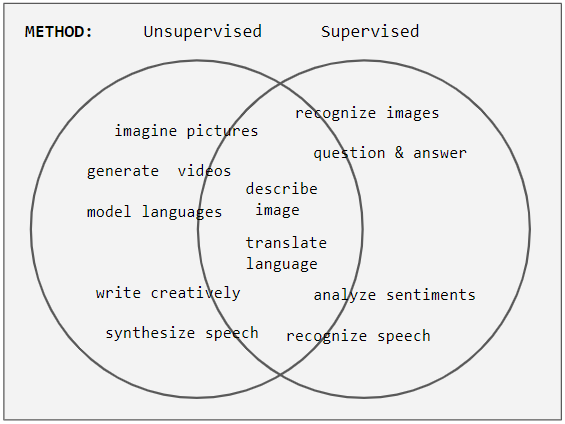

Unsupervised learning is a type of algorithm that learns patterns from untagged data. The hope is that, through mimicry, which is an important mode of learning in people, the machine is forced to build a concise representation of its world and then generate imaginative content from it. In contrast to supervised learning, where data is tagged by an expert (e.g. tagged as a "ball" or "fish"), unsupervised methods exhibit self-organization that captures patterns as probability densities or a combination of neural feature preferences encoded in the machine's weights and activations. The other levels in the supervision spectrum are reinforcement learning, where the machine is given only a numerical performance score as guidance, and semi-supervised learning, where a small portion of the data is tagged.

Neural network tasks are often categorized as discriminative (recognition) or generative (imagination). Often but not always, discriminative ...
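
As a concrete instance of learning from untagged data, the sketch below runs k-means clustering on 2-D points that carry no labels; the algorithm groups them by alternately assigning each point to its nearest centroid and moving each centroid to the mean of its assigned points. The choice of k-means, the naive first-k initialization and the sample points are assumptions for illustration.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def kmeans(points: List[Point], k: int, iters: int = 20) -> Tuple[List[Point], List[int]]:
    """Plain k-means: alternate nearest-centroid assignment and centroid updates."""
    centroids = list(points[:k])              # naive initialization: the first k points
    labels = [0] * len(points)
    for _ in range(iters):
        # Assignment step: each point joins its nearest centroid.
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        # Update step: move each centroid to the mean of its assigned points.
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                centroids[c] = (sum(x for x, _ in members) / len(members),
                                sum(y for _, y in members) / len(members))
    return centroids, labels


pts = [(0.0, 0.0), (10.0, 10.0), (0.0, 1.0), (1.0, 0.0), (10.0, 11.0), (11.0, 10.0)]
centroids, labels = kmeans(pts, k=2)
print(labels)  # [0, 1, 0, 0, 1, 1]
```

The cluster ids are arbitrary names the algorithm invents; no expert ever said which points belong together.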

Discriminative Model

Discriminative models, also referred to as conditional models, are a class of models used for classification or regression. They distinguish decision boundaries through observed data, such as pass/fail, win/lose, alive/dead or healthy/sick. Typical discriminative models include logistic regression (LR), conditional random fields (CRFs) (specified over an undirected graph), decision trees, and many others. Typical generative model approaches include naive Bayes classifiers, Gaussian mixture models, variational autoencoders, generative adversarial networks and others.

Unlike generative modelling, which studies the joint probability $P(x,y)$, discriminative modeling studies the conditional probability $P(y \mid x)$, that is, it maps the observed variables $x$ (the training samples) to a class label $y$ (the target). For example, in object recognition, $x$ is likely to be a vector of raw pixels (or features extracted from the raw pixels of the image). Within a pro ...
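
Logistic regression, listed above as a typical discriminative model, fits $P(y \mid x)$ directly instead of the joint $P(x,y)$. The sketch below trains a one-feature model by gradient descent on the mean log-loss for a hypothetical pass/fail dataset (hours studied versus outcome); the data, learning rate and function names are assumptions.

```python
import math
from typing import List, Tuple


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_logreg(xs: List[float], ys: List[int],
                 lr: float = 0.5, epochs: int = 2000) -> Tuple[float, float]:
    """Fit P(y=1 | x) = sigmoid(w*x + b) by gradient descent on the mean log-loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        residuals = [sigmoid(w * x + b) - y for x, y in zip(xs, ys)]
        w -= lr * sum(r * x for r, x in zip(residuals, xs)) / n
        b -= lr * sum(residuals) / n
    return w, b


# Hypothetical data: hours studied -> fail (0) / pass (1).
hours = [0.5, 1.0, 1.5, 2.0, 3.0, 3.5, 4.0, 5.0]
passed = [0, 0, 0, 0, 1, 1, 1, 1]
w, b = train_logreg(hours, passed)
print(f"P(pass | 1 hour) = {sigmoid(w * 1.0 + b):.2f}")
print(f"P(pass | 4.5 hours) = {sigmoid(w * 4.5 + b):.2f}")
```

The model never estimates how hours are distributed; it only learns the decision boundary, which is what makes it discriminative rather than generative.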

Generative Pre-training

Generative may refer to:

* Generative actor, a person who instigates social change
* Generative art, art that has been created using an autonomous system that is frequently, but not necessarily, implemented using a computer
* Generative music, music that is ever-different and changing, and that is created by a system

Mathematics and science:

* Generative anthropology, a field of study based on the theory that history of human culture is a genetic or "generative" development stemming from the development of language
* Generative model, a model for randomly generating observable data in probability and statistics
* Generative programming, a type of computer programming in which some mechanism generates a computer program to allow human programmers to write code at a higher abstraction level
* Generative sciences, an interdisciplinary and multidisciplinary science that explores the natural world and its complex behaviours as a generative process
* Generative systems, systems that use ...
