Data Leakage

In statistics and machine learning, leakage (also known as data leakage or target leakage) is the use of information in the model training process that would not be expected to be available at prediction time, causing the predictive scores (metrics) to overestimate the model's utility when run in a production environment. Leakage is often subtle and indirect, making it hard to detect and eliminate. Leakage can cause a statistician or modeler to select a suboptimal model, which could be outperformed by a leakage-free model.

Leakage modes

Leakage can occur at many steps in the machine learning process. Its causes can be grouped into two possible sources of leakage for a model: features and training examples.

Feature leakage

Feature or column-wise leakage is caused by the inclusion of columns which are one of the following: a duplicate label, a proxy for the label, or the label itself. These features, known as anachronisms, will not be available when the model is ...
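As a rough illustration of feature leakage, the sketch below flags columns whose values determine the label almost perfectly, which is one symptom of a duplicate label or a proxy for it. The function name `flag_suspect_columns`, the `threshold` parameter, and the toy churn data are all hypothetical and not taken from the article; a flagged column still needs human review to decide whether it would really be unavailable at prediction time.

```python
def flag_suspect_columns(rows, label_key, threshold=0.99):
    """Return feature names whose values predict the label almost perfectly.

    Assumption: columns are low-cardinality (categorical or binary). A column
    with a unique value per row (such as an ID) would pass this check
    trivially, so this heuristic is not meaningful for such columns.
    """
    suspects = []
    feature_keys = [key for key in rows[0] if key != label_key]
    for key in feature_keys:
        # For each distinct feature value, count the labels seen with it.
        counts = {}
        for row in rows:
            per_value = counts.setdefault(row[key], {})
            per_value[row[label_key]] = per_value.get(row[label_key], 0) + 1
        # Accuracy of the rule "predict the majority label for this value".
        correct = sum(max(per_value.values()) for per_value in counts.values())
        if correct / len(rows) >= threshold:
            suspects.append(key)
    return suspects


# Hypothetical toy data: "account_closed" is only known after the customer
# has churned, so it acts as a proxy for the label "churned".
rows = [
    {"plan": "basic", "account_closed": 1, "churned": 1},
    {"plan": "basic", "account_closed": 0, "churned": 0},
    {"plan": "pro", "account_closed": 1, "churned": 1},
    {"plan": "pro", "account_closed": 0, "churned": 0},
    {"plan": "basic", "account_closed": 0, "churned": 0},
    {"plan": "pro", "account_closed": 1, "churned": 1},
]
suspects = flag_suspect_columns(rows, "churned")
```

In this toy data only `account_closed` is flagged; `plan` predicts the label for four of six rows and stays below the threshold.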

Statistics

Statistics (from German: Statistik, "description of a state, a country") is the discipline that concerns the collection, organization, analysis, interpretation, and presentation of data. In applying statistics to a scientific, industrial, or social problem, it is conventional to begin with a statistical population or a statistical model to be studied. Populations can be diverse groups of people or objects such as "all people living in a country" or "every atom composing a crystal". Statistics deals with every aspect of data, including the planning of data collection in terms of the design of surveys and experiments. When census data (comprising every member of the target population) cannot be collected, statisticians collect data by developing specific experiment designs and survey samples. Representative sampling assures that inferences and conclusions can reasonably extend from the sample ...

Independent And Identically Distributed Random Variables

Independent or Independents may refer to:

Arts, entertainment, and media

Artist groups
* Independents (artist group), a group of modernist painters based in Pennsylvania, United States
* Independentes (English: Independents), a Portuguese artist group

Music

Groups, labels, and genres
* Independent music, a number of genres associated with independent labels
* Independent record label, a record label not associated with a major label
* Independent Albums, American albums chart

Albums
* Independent (Ai album), 2012
* Independent (Faze album), 2006
* Independent (Sacred Reich album), 1993

Songs
* "Independent" (song), a 2007 song by Webbie
* "Independent", a 2002 song by Ayumi Hamasaki from H

News media organizations
* Independent Media Center (also known as Indymedia or IMC), an open publishing network of journalist collectives that report on political and social issues, e.g., in The Indypendent newspaper of NYC
* ITV (TV network) (Independent Televi ...

Training, Validation, And Test Sets

In machine learning, a common task is the study and construction of algorithms that can learn from and make predictions on data. Such algorithms function by making data-driven predictions or decisions, through building a mathematical model from input data. These input data used to build the model are usually divided into multiple data sets. In particular, three data sets are commonly used in different stages of the creation of the model: training, validation, and test sets. The model is initially fit on a training data set, which is a set of examples used to fit the parameters (e.g. weights of connections between neurons in artificial neural networks) of the model. The model (e.g. a naive Bayes classifier) is trained on the training data set using a supervised learning method, for example using optimization methods such as gradient descent or stochastic gradient descent. In practice, the training data set often consists of pairs of an input vector (or scalar) and the correspondin ...
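The division into training, validation, and test sets can be sketched as a single shuffled partition. This is a minimal illustration only: the helper name `three_way_split`, the 60/20/20 proportions, and the fixed seed are assumptions, not something the text prescribes. A random shuffle like this also assumes the examples are independent; time-ordered or grouped data would usually need a different splitting rule.

```python
import random


def three_way_split(examples, val_fraction=0.2, test_fraction=0.2, seed=0):
    """Shuffle the examples once and cut them into train/validation/test."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)  # seeded so the split is repeatable
    n_test = int(len(shuffled) * test_fraction)
    n_val = int(len(shuffled) * val_fraction)
    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]  # everything left over is used for fitting
    return train, val, test


train, val, test = three_way_split(range(10))
```

Each example lands in exactly one of the three sets, so no example used for fitting is also used for evaluation.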

Supervised Learning

In machine learning, supervised learning (SL) is a paradigm where a model is trained using input objects (e.g. a vector of predictor variables) and desired output values (also known as a supervisory signal), which are often human-made labels. The training process builds a function that maps new data to expected output values. In an optimal scenario, the algorithm accurately determines output values for unseen instances. This requires the learning algorithm to generalize from the training data to unseen situations in a reasonable way (see inductive bias). This statistical quality of an algorithm is measured via a generalization error.

Steps to follow

To solve a given problem of supervised learning, the following steps must be performed:
1. Determine the type of training samples. Before doing anything else, the user should decide what kind of data is to be used as a trainin ...

Resampling (statistics)

In statistics, resampling is the creation of new samples based on one observed sample. Resampling methods are:
1. Permutation tests (also re-randomization tests) for generating counterfactual samples
2. Bootstrapping
3. Cross validation
4. Jackknife

Permutation tests

Permutation tests rely on resampling the original data assuming the null hypothesis. Based on the resampled data it can be concluded how likely the original data is to occur under the null hypothesis.

Bootstrap

Bootstrapping is a statistical method for estimating the sampling distribution of an estimator by sampling with replacement from the original sample, most often with the purpose of deriving robust estimates of standard errors and confidence intervals of a population parameter like a mean, median, proportion, odds ratio, correlation coefficient or regression coefficient. It has been called the plug-in principle, Logan, J. David and Wolesensky, Willian R. Mathematical methods in biology. Pure and Ap ...
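The permutation-test idea described above can be sketched for one common case, a two-sided test on the difference of two group means. The function name `permutation_p_value`, the number of permutations, and the "+1" correction in the returned estimate are illustrative choices rather than anything stated in the text.

```python
import random


def permutation_p_value(a, b, n_permutations=2000, seed=0):
    """Estimate a two-sided p-value for the difference in means of a and b.

    Under the null hypothesis the group labels are exchangeable, so the
    pooled values are reshuffled and re-cut into groups of the same sizes.
    """
    rng = random.Random(seed)
    observed = abs(sum(a) / len(a) - sum(b) / len(b))
    pooled = list(a) + list(b)
    hits = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        perm_a, perm_b = pooled[:len(a)], pooled[len(a):]
        diff = abs(sum(perm_a) / len(perm_a) - sum(perm_b) / len(perm_b))
        if diff >= observed:
            hits += 1
    # Counting the observed arrangement itself keeps the estimate above zero.
    return (hits + 1) / (n_permutations + 1)


p_same = permutation_p_value([1, 2, 3], [1, 2, 3])
p_separated = permutation_p_value([0.0] * 8, [10.0] * 8)
```

Identical groups give an observed difference of zero, so every permutation is at least as extreme and the estimate is 1; clearly separated groups give a small value.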

Overfitting

In mathematical modeling, overfitting is "the production of an analysis that corresponds too closely or exactly to a particular set of data, and may therefore fail to fit to additional data or predict future observations reliably". An overfitted model is a mathematical model that contains more parameters than can be justified by the data. In the special case where the model consists of a polynomial function, these parameters represent the degree of a polynomial. The essence of overfitting is to have unknowingly extracted some of the residual variation (i.e., the noise) as if that variation represented underlying model structure. Underfitting occurs when a mathematical model cannot adequately capture the underlying structure of the data. An under-fitted model is a model where some parameters or terms that would appear in a correctly specified model are missing. Underfitting would occur, for example, when fitting a linear model to nonlinear data. Such a model ...
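A small experiment can make the definition concrete. In the sketch below, a "memorizer" (a nearest-neighbour lookup, chosen here purely for illustration) reproduces the training data exactly, noise included, while a least-squares line captures only the underlying trend. The synthetic data (a line with slope 2 plus Gaussian noise), the helper names, and the sample sizes are all assumptions.

```python
import random


def make_data(n, rng, noise=1.0):
    """Draw n points from y = 2x + Gaussian noise (synthetic, for illustration)."""
    xs = [rng.uniform(0, 10) for _ in range(n)]
    ys = [2.0 * x + rng.gauss(0, noise) for x in xs]
    return xs, ys


def fit_line(xs, ys):
    """Ordinary least-squares fit of a straight line."""
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    return lambda x: mean_y + slope * (x - mean_x)


def fit_memorizer(xs, ys):
    """Return the stored y of the nearest training x: one 'parameter' per point."""
    pairs = list(zip(xs, ys))
    return lambda x: min(pairs, key=lambda pair: abs(pair[0] - x))[1]


def mse(model, xs, ys):
    """Mean squared error of the model on the given points."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


rng = random.Random(0)
train_x, train_y = make_data(50, rng)
test_x, test_y = make_data(50, rng)
line = fit_line(train_x, train_y)
memorizer = fit_memorizer(train_x, train_y)

memorizer_train_error = mse(memorizer, train_x, train_y)
memorizer_test_error = mse(memorizer, test_x, test_y)
line_test_error = mse(line, test_x, test_y)
```

The memorizer's training error is zero because it has fitted the noise, yet its error on fresh points is not; comparing `memorizer_test_error` with `line_test_error` shows how much of that exact fit fails to carry over to new data.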

Concept Drift

In predictive analytics, data science, machine learning and related fields, concept drift or drift is an evolution of data that invalidates the data model. It happens when the statistical properties of the target variable, which the model is trying to predict, change over time in unforeseen ways. This causes problems because the predictions become less accurate as time passes. Drift detection and drift adaptation are of paramount importance in the fields that involve dynamically changing data and data models.

Predictive model decay

In machine learning and predictive analytics this drift phenomenon is called concept drift. In machine learning, a common element of a data model is its statistical properties, such as the probability distribution of the actual data. If they deviate from the statistical properties of the training data set, the learned predictions may become invalid if the drift is not addressed.

Data configuration decay

Another important area is software engineering, ...

AutoML

Automated machine learning (AutoML) is the process of automating the tasks of applying machine learning to real-world problems. It is the combination of automation and ML. AutoML potentially includes every stage from beginning with a raw dataset to building a machine learning model ready for deployment. AutoML was proposed as an artificial intelligence-based solution to the growing challenge of applying machine learning. The high degree of automation in AutoML aims to allow non-experts to make use of machine learning models and techniques without requiring them to become experts in machine learning. Automating the process of applying machine learning end-to-end additionally offers the advantages of producing simpler solutions, faster creation of those solutions, and models that often outperform hand-designed models. Common techniques used in AutoML include hyperparameter optimization, meta-learning and neural architecture search.

Comparison to the standard approach

In a ...

Replication Crisis

The replication crisis, also known as the reproducibility or replicability crisis, refers to the growing number of published scientific results that other researchers have been unable to reproduce or verify. Because the reproducibility of empirical results is an essential part of the scientific method, such failures undermine the credibility of theories that build on them and can call into question substantial parts of scientific knowledge. The replication crisis is frequently discussed in relation to psychology and medicine, wherein considerable efforts have been undertaken to reinvestigate the results of classic studies to determine whether they are reliable, and if they turn out not to be, the reasons for the failure. Data strongly indicate that other natural and social sciences are also affected. The phrase "replication crisis" was coined in the early 2010s as part of a growing awareness of the problem. Considerations of causes and remedies have given rise ...

Andrew Ng

Andrew Yan-Tak Ng (born April 18, 1976) is a British-American computer scientist and technology entrepreneur focusing on machine learning and artificial intelligence (AI). Ng was a cofounder and head of Google Brain and was the former Chief Scientist at Baidu, building the company's Artificial Intelligence Group into a team of several thousand people. Ng is an adjunct professor at Stanford University (formerly associate professor and Director of its Stanford AI Lab or SAIL). Ng has also worked in the field of online education, cofounding Coursera and DeepLearning.AI. He has spearheaded many efforts to "democratize deep learning", teaching over 8 million students through his online courses. Ng is renowned globally in computer science, recognized in Time magazine's 100 Most Influential People in 2012 and Fast Company's Most Creative People in 2014. His influence extends to being named in the Time100 AI Most Influenti ...

Bootstrapping (statistics)

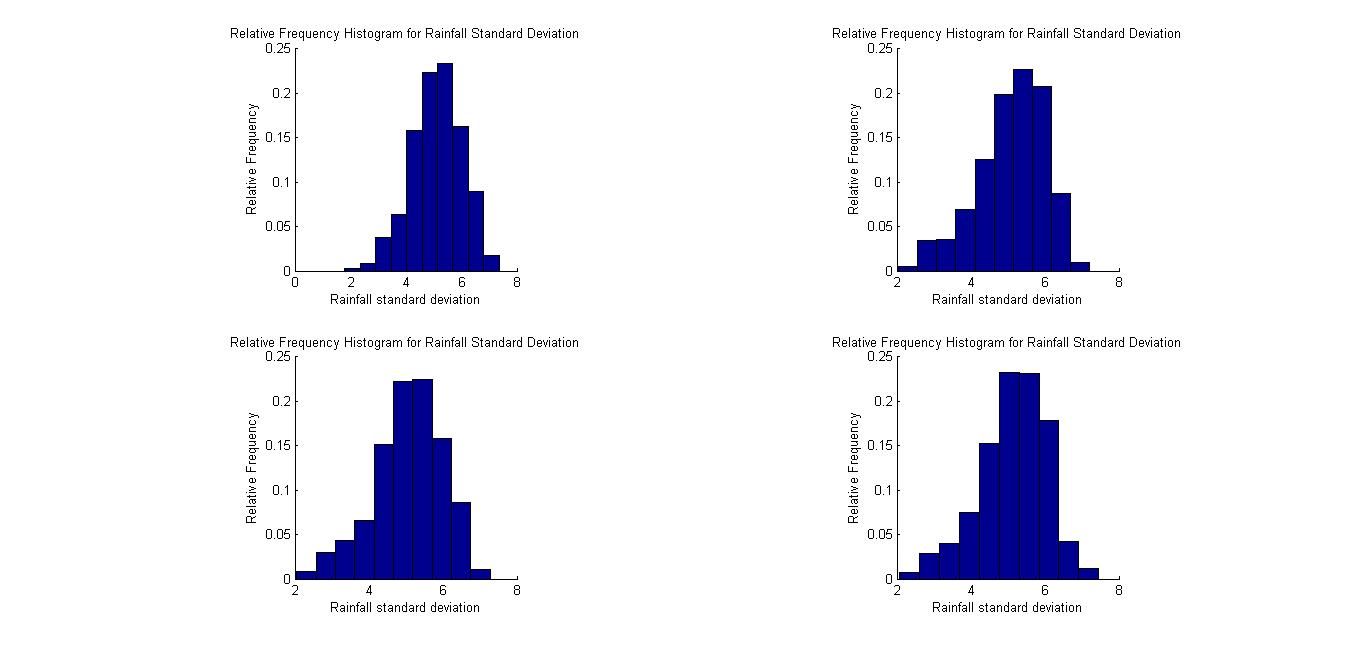

Bootstrapping is a procedure for estimating the distribution of an estimator by resampling (often with replacement) one's data or a model estimated from the data. Bootstrapping assigns measures of accuracy (bias, variance, confidence intervals, prediction error, etc.) to sample estimates. This technique allows estimation of the sampling distribution of almost any statistic using random sampling methods. Bootstrapping estimates the properties of an estimand (such as its variance) by measuring those properties when sampling from an approximating distribution. One standard choice for an approximating distributi ...
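A minimal sketch of the resampling procedure described above, here estimating the standard error of a statistic by drawing resamples with replacement from the observed data. The function name `bootstrap_standard_error`, the default of 1000 resamples, and the fixed seed are assumptions made for this illustration.

```python
import random
import statistics


def bootstrap_standard_error(sample, statistic=statistics.mean,
                             n_resamples=1000, seed=0):
    """Estimate the standard error of `statistic` by bootstrapping.

    Each resample has the same size as the original sample and is drawn
    from it with replacement.
    """
    rng = random.Random(seed)
    data = list(sample)
    estimates = [
        statistic(rng.choices(data, k=len(data)))  # one bootstrap replicate
        for _ in range(n_resamples)
    ]
    # The spread of the replicates approximates the estimator's standard error.
    return statistics.stdev(estimates)


se_constant = bootstrap_standard_error([5, 5, 5, 5])
se_varied = bootstrap_standard_error([1, 2, 3, 4, 5, 6, 7, 8])
se_median = bootstrap_standard_error([1, 2, 3, 4, 5, 6, 7, 8],
                                     statistic=statistics.median)
```

Passing a different `statistic`, as in the last call, reuses the same procedure for the median, which reflects the point that the method applies to almost any statistic.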

Machine Learning

Machine learning (ML) is a field of study in artificial intelligence concerned with the development and study of statistical algorithms that can learn from data and generalise to unseen data, and thus perform tasks without explicit instructions. Within a subdiscipline in machine learning, advances in the field of deep learning have allowed neural networks, a class of statistical algorithms, to surpass many previous machine learning approaches in performance. ML finds application in many fields, including natural language processing, computer vision, speech recognition, email filtering, agriculture, and medicine. The application of ML to business problems is known as predictive analytics. Statistics and mathematical optimisation (mathematical programming) methods comprise the foundations of machine learning. Data mining is a related field of study, focusing on exploratory data analysi ...