Chebyshev Inequality

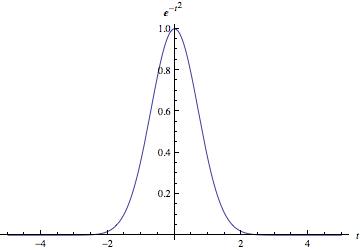

In probability theory, Chebyshev's inequality (also called the Bienaymé–Chebyshev inequality) provides an upper bound on the probability that a random variable with finite variance deviates from its mean. More specifically, the probability that a random variable deviates from its mean by more than $k\sigma$ is at most $1/k^2$, where $k$ is any positive constant and $\sigma$ is the standard deviation (the square root of the variance). In statistics, the rule is often called Chebyshev's theorem, about the range of standard deviations around the mean. The inequality has great utility because it can be applied to any probability distribution in which the mean and variance are defined. For example, it can be used to prove the weak law of large numbers. Its practical usage is similar to the 68–95–99.7 rule, which applies only to normal distributions. Chebyshev's inequality is more general, stating that a minimum of just 75% of values must lie within two standard deviations of the ...
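
The bound can be checked numerically. The following is a minimal sketch, not part of the original article: the helper name `chebyshev_check` and the choice of an exponential sample are illustrative assumptions. It compares the fraction of samples lying at least $k\sigma$ from the mean with the bound $1/k^2$, using the sample's own mean and population standard deviation.

```python
import random
import statistics


def chebyshev_check(samples, k):
    """Return (empirical tail fraction, Chebyshev bound 1/k^2).

    The mean and (population) standard deviation are those of the
    sample itself, so the bound holds for this empirical distribution.
    """
    mu = statistics.fmean(samples)
    sigma = statistics.pstdev(samples)
    tail = sum(abs(x - mu) >= k * sigma for x in samples) / len(samples)
    return tail, 1.0 / k**2


# Illustrative data: a skewed, clearly non-normal distribution.
rng = random.Random(0)
samples = [rng.expovariate(1.0) for _ in range(10_000)]

results = {k: chebyshev_check(samples, k) for k in (1.5, 2.0, 3.0)}
for k, (tail, bound) in results.items():
    print(f"k={k}: empirical tail={tail:.4f}, Chebyshev bound={bound:.4f}")
```

For most distributions the empirical tail is far below the bound, which reflects that the inequality trades sharpness for generality.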

Probability Theory

Probability theory or probability calculus is the branch of mathematics concerned with probability. Although there are several different probability interpretations, probability theory treats the concept in a rigorous mathematical manner by expressing it through a set of axioms. Typically these axioms formalise probability in terms of a probability space, which assigns a measure taking values between 0 and 1, termed the probability measure, to a set of outcomes called the sample space. Any specified subset of the sample space is called an event. Central subjects in probability theory include discrete and continuous random variables, probability distributions, and stochastic processes (which provide mathematical abstractions of non-deterministic or uncertain processes or measured quantities that may either be single occurrences or evolve over time in a random fashion). Although it is no ...

Coverage Probability

In statistical estimation theory, the coverage probability, or coverage for short, is the probability that a confidence interval or confidence region will include the true value (parameter) of interest. It can be defined as the proportion of instances where the interval surrounds the true value as assessed by long-run frequency. In statistical prediction, the coverage probability is the probability that a prediction interval will include an out-of-sample value of the random variable. The coverage probability can be defined as the proportion of instances where the interval surrounds an out-of-sample value as assessed by long-run frequency.

Concept: The fixed degree of certainty pre-specified by the analyst, referred to as the *confidence level* or *confidence coefficient* of the constructed interval, is effectively the nominal coverage probability of the procedure for constructing confidence intervals. Hence, referring to a "nominal confidence level" or "nominal confi ...
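
The long-run-frequency definition lends itself to simulation. The sketch below is an illustration under stated assumptions, not a procedure from the source: it assumes normally distributed data with known standard deviation, uses the textbook z-interval for the mean, and the function name `estimate_coverage` and all parameter values are hypothetical.

```python
import random
import statistics


def estimate_coverage(n_trials=4000, n=30, mu=5.0, sigma=2.0, level=0.95, seed=0):
    """Estimate how often a z-interval for the mean contains the true mean."""
    rng = random.Random(seed)
    # Two-sided critical value for the nominal confidence level.
    z = statistics.NormalDist().inv_cdf(0.5 + level / 2)
    half_width = z * sigma / n**0.5
    hits = 0
    for _ in range(n_trials):
        sample = [rng.gauss(mu, sigma) for _ in range(n)]
        xbar = statistics.fmean(sample)
        if xbar - half_width <= mu <= xbar + half_width:
            hits += 1
    return hits / n_trials


coverage = estimate_coverage()
print(f"nominal level: 0.95, estimated coverage: {coverage:.3f}")
```

Because the interval's assumptions are satisfied exactly here, the estimated coverage should sit close to the nominal level; when assumptions are violated, the two can diverge.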

Mahalanobis Distance

The Mahalanobis distance is a measure of the distance between a point $P$ and a probability distribution $D$, introduced by P. C. Mahalanobis in 1936. The mathematical details of Mahalanobis distance first appeared in the *Journal of The Asiatic Society of Bengal* in 1936. Mahalanobis's definition was prompted by the problem of identifying the similarities of skulls based on measurements (the earliest work related to similarities of skulls is from 1922 and another later work is from 1927). R. C. Bose later obtained the sampling distribution of Mahalanobis distance, under the assumption of equal dispersion. It is a multivariate generalization of the square of the standard score $z=(x-\mu)/\sigma$: how many standard deviations away $P$ is from the mean of $D$. This distance is zero for $P$ at the mean of $D$ and grows as $P$ moves away from the mean along each principal component axis. If each of these axes ...
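
As a minimal sketch of the idea, assuming NumPy is available and that the distribution is summarized by a known mean vector and an invertible covariance matrix (the function name `mahalanobis` and the example numbers are illustrative), the distance can be computed as the square root of the quadratic form $(x-\mu)^T \Sigma^{-1} (x-\mu)$:

```python
import numpy as np


def mahalanobis(x, mean, cov):
    """Mahalanobis distance of point x from a distribution with the given
    mean vector and (invertible) covariance matrix."""
    diff = np.asarray(x, dtype=float) - np.asarray(mean, dtype=float)
    # Solve cov @ z = diff instead of forming the inverse explicitly.
    z = np.linalg.solve(np.asarray(cov, dtype=float), diff)
    return float(np.sqrt(diff @ z))


mean = [0.0, 0.0]
cov = [[4.0, 0.0], [0.0, 1.0]]  # standard deviations 2 and 1 along the axes

d_center = mahalanobis(mean, mean, cov)      # 0.0 at the mean
d_x = mahalanobis([2.0, 0.0], mean, cov)     # one standard deviation along x
d_y = mahalanobis([0.0, 2.0], mean, cov)     # two standard deviations along y
print(d_center, d_x, d_y)
```

The two test points are equally far from the mean in Euclidean terms, yet their Mahalanobis distances differ because the spread of the distribution differs along each axis.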

Transpose

In linear algebra, the transpose of a matrix is an operator which flips a matrix over its diagonal; that is, it switches the row and column indices of the matrix $A$ by producing another matrix, often denoted by $A^{\mathsf{T}}$ (among other notations). The transpose of a matrix was introduced in 1858 by the British mathematician Arthur Cayley.

Definition. The transpose of a matrix $A$, denoted by $A^{\mathsf{T}}$ (among several other notations), may be constructed by any one of the following methods:

1. Reflect $A$ over its main diagonal (which runs from top-left to bottom-right) to obtain $A^{\mathsf{T}}$.
2. Write the rows of $A$ as the columns of $A^{\mathsf{T}}$.
3. Write the columns of $A$ as the rows of $A^{\mathsf{T}}$.

Formally, the $i$-th row, $j$-th column element of $A^{\mathsf{T}}$ is the $j$-th row, $i$-th column element of $A$:

$$\left[A^{\mathsf{T}}\right]_{ij} = \left[A\right]_{ji}.$$

If $A$ is an $m \times n$ matrix, then $A^{\mathsf{T}}$ is an $n \times m$ matrix. In the case of square matrices, $A^{\mathsf{T}}$ may also denote the $T$th power of the matrix $A$. For avoiding a possibl ...
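
The "rows become columns" construction can be sketched in a few lines of plain Python. This is only an illustration on nested lists (the function name `transpose` is an assumption, not an established API); numerical libraries provide their own transpose operations.

```python
def transpose(matrix):
    """Return the transpose of a rectangular matrix given as a list of rows."""
    # zip(*matrix) groups the j-th entries of every row, i.e. the j-th column.
    return [list(column) for column in zip(*matrix)]


A = [[1, 2, 3],
     [4, 5, 6]]          # a 2 x 3 matrix

A_T = transpose(A)       # a 3 x 2 matrix
print(A_T)               # [[1, 4], [2, 5], [3, 6]]
```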

Covariance Matrix

In probability theory and statistics, a covariance matrix (also known as auto-covariance matrix, dispersion matrix, variance matrix, or variance–covariance matrix) is a square matrix giving the covariance between each pair of elements of a given random vector. Intuitively, the covariance matrix generalizes the notion of variance to multiple dimensions. As an example, the variation in a collection of random points in two-dimensional space cannot be characterized fully by a single number, nor would the variances in the $x$ and $y$ directions contain all of the necessary information; a $2 \times 2$ matrix would be necessary to fully characterize the two-dimensional variation. Any covariance matrix is symmetric and positive semi-definite and its main diagonal contains variances (i.e., the covariance of each element with itself). The covariance matrix of a random vector $\mathbf{X}$ is typically denoted by $\operatorname{K}_{\mathbf{X}\mathbf{X}}$, $\Sigma$ or $S$.

Definition. Throughout this article, boldfaced u ...
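
The stated properties (symmetry, positive semi-definiteness, variances on the diagonal) can be observed on a sample covariance matrix. The sketch below assumes NumPy and uses synthetic two-dimensional points; the data-generating choices are purely illustrative.

```python
import numpy as np

# Illustrative correlated 2D points: y depends partly on x.
rng = np.random.default_rng(0)
x = rng.normal(size=500)
y = 0.8 * x + rng.normal(scale=0.5, size=500)
points = np.column_stack([x, y])            # shape (500, 2), one row per point

cov = np.cov(points, rowvar=False)          # 2 x 2 sample covariance matrix

is_symmetric = bool(np.allclose(cov, cov.T))
eigenvalues = np.linalg.eigvalsh(cov)       # real eigenvalues of a symmetric matrix
is_psd = bool(np.all(eigenvalues >= -1e-12))
diag_is_variance = bool(np.allclose(np.diag(cov), points.var(axis=0, ddof=1)))

print(cov)
print(is_symmetric, is_psd, diag_is_variance)
```

The off-diagonal entry captures how `x` and `y` vary together, which is exactly the information that the two per-axis variances alone would miss.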

Dimension

In physics and mathematics, the dimension of a mathematical space (or object) is informally defined as the minimum number of coordinates needed to specify any point within it. Thus, a line has a dimension of one (1D) because only one coordinate is needed to specify a point on it; for example, the point at 5 on a number line. A surface, such as the boundary of a cylinder or sphere, has a dimension of two (2D) because two coordinates are needed to specify a point on it; for example, both a latitude and longitude are required to locate a point on the surface of a sphere. A two-dimensional Euclidean space is a two-dimensional space on the plane. The inside of a cube, a cylinder or a sphere is three-dimensional (3D) because three coordinates are needed to locate a point within these spaces. In classical mechanics, space and time are different categories and refer to absolute space and time. That conception of the world is a four-dimensional space but not the one that w ...

Multidimensional Chebyshev's Inequality

In probability theory, the multidimensional Chebyshev's inequality is a generalization of Chebyshev's inequality, which puts a bound on the probability of the event that a random variable differs from its expected value by more than a specified amount. Let $X$ be an $N$-dimensional random vector with expected value $\mu = \operatorname{E}[X]$ and covariance matrix

$$V = \operatorname{E}\left[(X - \mu)(X - \mu)^T\right].$$

If $V$ is a positive-definite matrix, then for any real number $t > 0$:

$$\Pr\left(\sqrt{(X - \mu)^T V^{-1} (X - \mu)} > t\right) \le \frac{N}{t^2}.$$

Proof. Since $V$ is positive-definite, so is $V^{-1}$. Define the random variable

$$y = (X - \mu)^T V^{-1} (X - \mu).$$

Since $y$ is non-negative, Markov's inequality holds:

$$\Pr\left(\sqrt{(X - \mu)^T V^{-1} (X - \mu)} > t\right) = \Pr\left(\sqrt{y} > t\right) = \Pr\left(y > t^2\right) \le \frac{\operatorname{E}[y]}{t^2}.$$

Finally,

$$\begin{aligned}
\operatorname{E}[y] &= \operatorname{E}\left[(X - \mu)^T V^{-1} (X - \mu)\right] \\
&= \operatorname{E}\left[\operatorname{trace}\left(V^{-1} (X - \mu)(X - \mu)^T\right)\right] \\
&= \operatorname{trace}\left(V^{-1} V\right) = N.
\end{aligned}$$

Infinite dimensions. There is a straightforward extension of the vector version of Chebyshev's ...
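
The bound and the key step of the proof (the expectation of the quadratic form equals $N$) can be checked on data. The following is a hedged sketch assuming NumPy: the function name `chebyshev_nd` and the synthetic samples are illustrative, and the mean and covariance are taken from the sample itself (with the biased `1/n` normalization) so that the statement applies exactly to the empirical distribution.

```python
import numpy as np


def chebyshev_nd(samples, t):
    """Return (empirical tail fraction, bound N / t^2, mean of the quadratic form)."""
    X = np.asarray(samples, dtype=float)
    mu = X.mean(axis=0)
    V = np.cov(X, rowvar=False, bias=True)   # empirical covariance (1/n normalization)
    diff = X - mu
    # y_i = (x_i - mu)^T V^{-1} (x_i - mu), computed row by row.
    y = np.einsum("ij,ij->i", diff, np.linalg.solve(V, diff.T).T)
    tail = float(np.mean(y > t**2))          # same event as sqrt(y) > t
    return tail, X.shape[1] / t**2, float(y.mean())


# Illustrative skewed, correlated 3-dimensional data.
rng = np.random.default_rng(0)
mixing = np.array([[1.0, 0.5, 0.0],
                   [0.0, 1.0, 0.3],
                   [0.0, 0.0, 1.0]])
samples = rng.standard_exponential(size=(5000, 3)) @ mixing

tail, bound, mean_y = chebyshev_nd(samples, t=3.0)
print(f"tail={tail:.4f}, bound={bound:.4f}, mean of y={mean_y:.4f}")
```

The printed mean of `y` matches the dimension (here 3) up to rounding, mirroring the trace computation in the proof.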

Multivariate Random Variable

In probability and statistics, a multivariate random variable or random vector is a list or vector of mathematical variables each of whose value is unknown, either because the value has not yet occurred or because there is imperfect knowledge of its value. The individual variables in a random vector are grouped together because they are all part of a single mathematical system; often they represent different properties of an individual statistical unit. For example, while a given person has a specific age, height and weight, the representation of these features of *an unspecified person* from within a group would be a random vector. Normally each element of a random vector is a real number. Random vectors are often used as the underlying implementation of various types of aggregate random variables, e.g. a random matrix, random tree, random sequence, stochastic process, etc. Formally, a multivariate random variable is a column vector $\mathbf{X} = (X_1,\dots,X_n)^\mathsf{T}$ ...

Expected Value

In probability theory, the expected value (also called expectation, expectancy, expectation operator, mathematical expectation, mean, expectation value, or first moment) is a generalization of the weighted average. Informally, the expected value is the mean of the possible values a random variable can take, weighted by the probability of those outcomes. Since it is obtained through arithmetic, the expected value sometimes may not even be included in the sample data set; it is not the value you would expect to get in reality. The expected value of a random variable with a finite number of outcomes is a weighted average of all possible outcomes. In the case of a continuum of possible outcomes, the expectation is defined by integration. In the axiomatic foundation for probability provided by measure theory, the expectation is given by Lebesgue integration. The expected value of a random variable $X$ is often denoted by $\operatorname{E}(X)$, $\operatorname{E}[X]$, or $\operatorname{E}X$, with a ...
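
For a finite set of outcomes, the weighted-average definition is short enough to write out directly. This sketch uses a fair six-sided die as an illustrative example (the function name `expected_value` is an assumption) and exact fractions to avoid rounding.

```python
from fractions import Fraction


def expected_value(dist):
    """Expected value of a finite distribution given as {outcome: probability}."""
    if sum(dist.values()) != 1:
        raise ValueError("probabilities must sum to 1")
    return sum(outcome * prob for outcome, prob in dist.items())


die = {face: Fraction(1, 6) for face in range(1, 7)}
mean = expected_value(die)

print(mean)          # 7/2
print(mean in die)   # False: 3.5 is not a face of the die
```

The second line of output illustrates the remark above: the expected value need not be one of the values the variable can actually take.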

Conditional Expectation

In probability theory, the conditional expectation, conditional expected value, or conditional mean of a random variable is its expected value evaluated with respect to the conditional probability distribution. If the random variable can take on only a finite number of values, the "conditions" are that the variable can only take on a subset of those values. More formally, in the case when the random variable is defined over a discrete probability space, the "conditions" are a partition of this probability space. Depending on the context, the conditional expectation can be either a random variable or a function. The random variable is denoted $E(X\mid Y)$ analogously to conditional probability. The function form is either denoted $E(X\mid Y=y)$ or a separate function symbol such as $f(y)$ is introduced with the meaning $E(X\mid Y) = f(Y)$.

Example 1: Dice rolling. Consider the roll of a fair die and let $A = 1$ if the number is even (i.e., 2, 4, or 6) and ...
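
Conditioning on an event of a finite distribution can be sketched as follows. This is a separate illustration and does not attempt to complete the truncated example above: it conditions the value of a fair die on the parity of the roll, and the function name `conditional_expectation` is an assumption.

```python
from fractions import Fraction


def conditional_expectation(dist, event):
    """E[X | event] for a finite distribution {value: probability}.

    `event` is a predicate on values; it must have positive probability.
    """
    p_event = sum(p for x, p in dist.items() if event(x))
    if p_event == 0:
        raise ValueError("conditioning event has probability zero")
    return sum(x * p for x, p in dist.items() if event(x)) / p_event


die = {face: Fraction(1, 6) for face in range(1, 7)}

e_even = conditional_expectation(die, lambda x: x % 2 == 0)  # mean of 2, 4, 6
e_odd = conditional_expectation(die, lambda x: x % 2 == 1)   # mean of 1, 3, 5

# The events "even" and "odd" partition the sample space, so weighting the
# conditional means by the event probabilities recovers the overall mean.
overall = Fraction(1, 2) * e_even + Fraction(1, 2) * e_odd
print(e_even, e_odd, overall)
```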

Affine Transformation

In Euclidean geometry, an affine transformation or affinity (from the Latin *affinis*, "connected with") is a geometric transformation that preserves lines and parallelism, but not necessarily Euclidean distances and angles. More generally, an affine transformation is an automorphism of an affine space (Euclidean spaces are specific affine spaces), that is, a function which maps an affine space onto itself while preserving both the dimension of any affine subspaces (meaning that it sends points to points, lines to lines, planes to planes, and so on) and the ratios of the lengths of parallel line segments. Consequently, sets of parallel affine subspaces remain parallel after an affine transformation. An affine transformation does not necessarily preserve angles between lines or distances between points, though it does preserve ratios of distances between points lying on a straight line. If $X$ is the point set of an affine space, then every affine transformation on $X$ can ...
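
These preservation properties can be observed on a concrete map. The sketch below assumes the common coordinate form $x \mapsto Ax + b$ in the plane with an invertible matrix $A$; the particular matrix, offset, and points are arbitrary illustrative choices.

```python
def affine(A, b, p):
    """Apply the map x -> A x + b to a 2D point p (A is 2x2, b has length 2)."""
    return (A[0][0] * p[0] + A[0][1] * p[1] + b[0],
            A[1][0] * p[0] + A[1][1] * p[1] + b[1])


def direction(p, q):
    return (q[0] - p[0], q[1] - p[1])


def cross(u, v):
    """2D cross product; zero exactly when u and v are parallel."""
    return u[0] * v[1] - u[1] * v[0]


def sq_dist(p, q):
    return (q[0] - p[0]) ** 2 + (q[1] - p[1]) ** 2


A = [[2, 1], [0, 3]]     # invertible (determinant 6)
b = [5, -1]

P, Q = (0, 0), (4, 2)
M = (2, 1)               # midpoint of PQ
R, S = (1, 5), (5, 7)    # segment RS is parallel to PQ

fP, fQ, fM, fR, fS = (affine(A, b, pt) for pt in (P, Q, M, R, S))

# Parallel segments stay parallel.
still_parallel = cross(direction(fP, fQ), direction(fR, fS)) == 0
# Ratios along a line are preserved: the midpoint maps to the midpoint.
midpoint_preserved = fM == ((fP[0] + fQ[0]) / 2, (fP[1] + fQ[1]) / 2)
# Distances are generally not preserved.
distance_changed = sq_dist(P, Q) != sq_dist(fP, fQ)

print(still_parallel, midpoint_preserved, distance_changed)
```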

Markov Inequality

In probability theory, Markov's inequality gives an upper bound on the probability that a non-negative random variable is greater than or equal to some positive constant. Markov's inequality is tight in the sense that for each chosen positive constant, there exists a random variable such that the inequality is in fact an equality. It is named after the Russian mathematician Andrey Markov, although it appeared earlier in the work of Pafnuty Chebyshev (Markov's teacher), and many sources, especially in analysis, refer to it as Chebyshev's inequality (sometimes calling it the first Chebyshev inequality, while referring to Chebyshev's inequality as the second Chebyshev inequality) or Bienaymé's inequality. Markov's inequality (and other similar inequalities) relates probabilities to expectations, and provides (frequently loose but still useful) bounds for the cumulative distribution function of a random variable. Markov's inequality can also be used to upper bound the expectation o ...
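
Both the bound $\Pr(X \ge a) \le \operatorname{E}[X]/a$ and its tightness can be illustrated with small finite distributions. This is a minimal sketch with exact fractions; the function name `tail_and_bound` and the two example distributions are illustrative assumptions.

```python
from fractions import Fraction


def tail_and_bound(dist, a):
    """Return (P(X >= a), E[X] / a) for a non-negative finite distribution.

    `dist` maps non-negative values to probabilities; `a` must be positive.
    """
    mean = sum(x * p for x, p in dist.items())
    tail = sum(p for x, p in dist.items() if x >= a)
    return tail, mean / a


# A fair die: the bound holds but is loose.
die = {face: Fraction(1, 6) for face in range(1, 7)}
die_tail, die_bound = tail_and_bound(die, 5)

# A two-point distribution on {0, a}: the bound is attained exactly.
two_point = {0: Fraction(3, 4), 4: Fraction(1, 4)}
tp_tail, tp_bound = tail_and_bound(two_point, 4)

print(die_tail, die_bound)   # 1/3 7/10
print(tp_tail, tp_bound)     # 1/4 1/4
```

The two-point case shows why the inequality cannot be improved without further assumptions: all of the mass that contributes to the mean sits exactly at the threshold.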