
Statistical Mechanics

In physics, statistical mechanics is a mathematical framework that applies statistical methods and probability theory to large assemblies of microscopic entities. It does not assume or postulate any natural laws, but explains the macroscopic behavior of nature from the behavior of such ensembles. Statistical mechanics arose out of the development of classical thermodynamics, a field in which it succeeded in explaining macroscopic physical properties (such as temperature, pressure, and heat capacity) in terms of microscopic parameters that fluctuate about average values and are characterized by probability distributions. This established the fields of statistical thermodynamics and statistical physics. The founding of the field of statistical mechanics is generally credited to three physicists:
* Ludwig Boltzmann, who developed the fundamental interpretation of entropy in terms of a collection of microstates
* James Clerk Maxwell, who developed models of probability distr ...
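Boltzmann's interpretation is usually summarized as S = k_B \ln W, where W counts the microstates compatible with a given macrostate. The sketch below assumes a toy system of N independent two-state spins (an illustrative choice, not one the text specifies) and counts W for macrostates with different numbers of "up" spins.

```python
import math

# Boltzmann constant in J/K (exact value in the 2019 SI)
K_B = 1.380649e-23


def microstate_count(n_spins: int, n_up: int) -> int:
    """W: number of ways to choose which n_up of n_spins two-state spins point up."""
    return math.comb(n_spins, n_up)


def boltzmann_entropy(w: int) -> float:
    """Boltzmann entropy S = k_B ln W, in J/K."""
    return K_B * math.log(w)


# Toy system of 100 spins: compare three macrostates.
n = 100
for n_up in (0, 25, 50):
    w = microstate_count(n, n_up)
    print(f"n_up={n_up:3d}  W={w:.3e}  S={boltzmann_entropy(w):.3e} J/K")
```

The fully ordered macrostate (n_up = 0) has a single microstate and zero entropy, while the evenly split macrostate groups the most microstates and therefore has the largest entropy.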

Physics

Physics is the natural science that studies matter, its fundamental constituents, its motion and behavior through space and time, and the related entities of energy and force. "Physical science is that department of knowledge which relates to the order of nature, or, in other words, to the regular succession of events." Physics is one of the most fundamental scientific disciplines, with its main goal being to understand how the universe behaves. "Physics is one of the most fundamental of the sciences. Scientists of all disciplines use the ideas of physics, including chemists who study the structure of molecules, paleontologists who try to reconstruct how dinosaurs walked, and climatologists who study how human activities affect the atmosphere and oceans. Physics is also the foundation of all engineering and technology. No engineer could design a flat-screen TV, an interplanetary spacecraft, or even a better mousetrap without first understanding the basic laws of physic ...

Fluctuation–Dissipation Theorem

The fluctuation–dissipation theorem (FDT) or fluctuation–dissipation relation (FDR) is a powerful tool in statistical physics for predicting the behavior of systems that obey detailed balance. Given that a system obeys detailed balance, the theorem proves that thermodynamic fluctuations in a physical variable predict the response quantified by the admittance or impedance (understood in the general sense, not only in electromagnetic terms) of the same physical variable (such as voltage or temperature difference), and vice versa. The fluctuation–dissipation theorem applies to both classical and quantum mechanical systems. It was proven by Herbert Callen and Theodore Welton in 1951 and expanded by Ryogo Kubo. There are antecedents to the general theorem, including Einstein's explanation of Brownian motion during his ''annus mirabilis'' and Harry Nyquist's explanation in 1928 of Johnson noise in electrical resistors. Qualitat ...
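Nyquist's result is the standard worked instance of the theorem. In the classical limit (k_B T much larger than the photon energy hf at the frequencies of interest), the mean-square open-circuit noise voltage of a resistor R at temperature T in a bandwidth \Delta f is \langle V^2 \rangle = 4 k_B T R \Delta f, so the size of the fluctuation is fixed by the dissipative element R. The component values below are illustrative assumptions.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K


def johnson_noise_vrms(resistance_ohm: float, temperature_k: float,
                       bandwidth_hz: float) -> float:
    """RMS open-circuit thermal noise voltage of a resistor, from the
    classical Johnson-Nyquist formula <V^2> = 4 k_B T R df."""
    return math.sqrt(4.0 * K_B * temperature_k * resistance_ohm * bandwidth_hz)


# Illustrative values: a 1 kOhm resistor at 300 K observed over a 10 kHz bandwidth.
v_rms = johnson_noise_vrms(1e3, 300.0, 1e4)
print(f"{v_rms * 1e6:.3f} uV")  # about 0.407 uV
```

Because the noise scales as \sqrt{R}, quadrupling the resistance doubles the RMS voltage: larger dissipation goes with larger fluctuations.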

Liouville's Theorem (Hamiltonian)

In physics, Liouville's theorem, named after the French mathematician Joseph Liouville, is a key theorem in classical statistical and Hamiltonian mechanics. It asserts that ''the phase-space distribution function is constant along the trajectories of the system''; that is, the density of system points in the vicinity of a given system point traveling through phase space is constant with time. In statistical mechanics, this time-independent density is known as the classical a priori probability. There are related mathematical results in symplectic topology and ergodic theory; systems obeying Liouville's theorem are examples of incompressible dynamical systems. There are extensions of Liouville's theorem to stochastic systems. Liouville equations: The Liouville equation describes the time evolution of the ''phase space distribution function''. Although the equation is usually referred to as the "Liouville equation", Josiah Willard Gibbs was the first to recognize the impor ...
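Incompressibility can be checked numerically: a small patch of system points moved by a Hamiltonian flow keeps its phase-space area. The sketch below assumes the harmonic oscillator H = (p^2 + q^2)/2 (units with m = k = 1), whose exact flow is known in closed form, and tracks the triangle spanned by three nearby system points.

```python
import math


def evolve(q0: float, p0: float, t: float) -> tuple[float, float]:
    """Exact flow of the harmonic oscillator H = (p**2 + q**2) / 2 (m = k = 1),
    i.e. the solution of Hamilton's equations dq/dt = p, dp/dt = -q."""
    c, s = math.cos(t), math.sin(t)
    return q0 * c + p0 * s, -q0 * s + p0 * c


def triangle_area(points: list[tuple[float, float]]) -> float:
    """Shoelace formula for the area of a triangle in the (q, p) plane."""
    (x1, y1), (x2, y2), (x3, y3) = points
    return abs((x2 - x1) * (y3 - y1) - (x3 - x1) * (y2 - y1)) / 2.0


# Three nearby system points spanning a small patch of phase space.
cloud = [(1.0, 0.0), (1.2, 0.1), (0.9, 0.3)]
area0 = triangle_area(cloud)
for t in (0.5, 2.0, 10.0):
    moved = [evolve(q, p, t) for q, p in cloud]
    print(f"t={t:5.1f}  area={triangle_area(moved):.12f}  (initial {area0:.12f})")
```

This flow is linear (a rotation in the (q, p) plane with unit determinant), so the triangle stays a triangle. For a nonlinear Hamiltonian the patch would stretch and fold, but Liouville's theorem says its area would still be conserved.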

Empirical Probability

The empirical probability, relative frequency, or experimental probability of an event is the ratio of the number of outcomes in which a specified event occurs to the total number of trials, not in a theoretical sample space but in an actual experiment. More generally, empirical probability estimates probabilities from experience and observation. Given an event ''A'' in a sample space, the relative frequency of ''A'' is the ratio ''m/n'', ''m'' being the number of outcomes in which the event ''A'' occurs, and ''n'' being the total number of outcomes of the experiment. In statistical terms, the empirical probability is an ''estimate'' or estimator of a probability. In simple cases, where the result of a trial only determines whether or not the specified event has occurred, modelling using a binomial distribution might be appropriate and then the empirical estimate is the maximum likelihood estimate. It is the Bayesian estimate for the same case if certain assumptions are made f ...
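The ratio m/n can be computed directly from a record of trials. The sketch below assumes a simulated fair six-sided die as the "experiment" (a hypothetical stand-in for real observations) and estimates the probability of the event A = "roll a six". In this binomial setting, m/n is also the maximum likelihood estimate.

```python
import random


def relative_frequency(outcomes: list[bool]) -> float:
    """Empirical probability m/n, where m counts trials in which the event
    occurred and n is the total number of trials."""
    if not outcomes:
        raise ValueError("need at least one trial")
    return sum(outcomes) / len(outcomes)


# Hypothetical experiment: 10,000 rolls of a simulated fair die (seeded for
# reproducibility); event A = "the roll is a six".
rng = random.Random(42)
rolls = [rng.randint(1, 6) for _ in range(10_000)]
estimate = relative_frequency([r == 6 for r in rolls])
print(f"m/n = {estimate:.4f}  (model probability 1/6 = {1 / 6:.4f})")
```

The estimate is close to, but generally not exactly, 1/6; its spread shrinks roughly as 1/\sqrt{n} as more trials are recorded.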

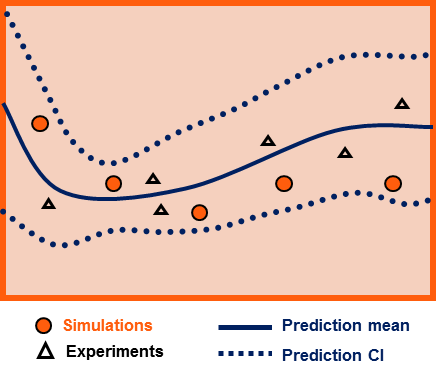

Epistemic Probability

Uncertainty quantification (UQ) is the science of quantitative characterization and reduction of uncertainties in both computational and real-world applications. It tries to determine how likely certain outcomes are if some aspects of the system are not exactly known. An example would be to predict the acceleration of a human body in a head-on crash with another car: even if the speed were exactly known, small differences in the manufacturing of individual cars, how tightly every bolt has been tightened, etc., will lead to different results that can only be predicted in a statistical sense. Many problems in the natural sciences and engineering are also rife with sources of uncertainty. Computer experiments on computer simulations are the most common approach to study problems in uncertainty quantification. Sources: Uncertainty can enter mathematical models and experimental measurements in various contexts. One way to categorize the sources of uncertainty is to consider: Paramet ...
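A minimal sketch of the sampling approach mentioned above, using a deliberately crude, hypothetical model of the crash example: the car stops from a precisely known speed v over a crush distance d with constant deceleration, so a = v^2/(2d), and manufacturing variation is represented by drawing d uniformly from an assumed range. The numbers are illustrative only, not crash data.

```python
import random
import statistics


def crash_deceleration(speed_m_s: float, crush_distance_m: float) -> float:
    """Hypothetical toy model: constant deceleration from speed v to rest
    over distance d, a = v**2 / (2 d), in m/s^2."""
    return speed_m_s ** 2 / (2.0 * crush_distance_m)


def propagate_uncertainty(speed_m_s: float, d_low: float, d_high: float,
                          n_samples: int = 20_000, seed: int = 0) -> tuple[float, float]:
    """Monte Carlo propagation: sample the uncertain input d ~ Uniform(d_low, d_high),
    push each sample through the model, and summarize the output distribution."""
    rng = random.Random(seed)
    samples = [crash_deceleration(speed_m_s, rng.uniform(d_low, d_high))
               for _ in range(n_samples)]
    return statistics.mean(samples), statistics.stdev(samples)


# Speed known exactly (15 m/s); crush distance assumed to vary between 0.5 m and 0.7 m.
mean_a, std_a = propagate_uncertainty(15.0, 0.5, 0.7)
print(f"deceleration = {mean_a:.1f} +/- {std_a:.1f} m/s^2")
```

For this toy model the exact mean is (v^2/2) \ln(d_{high}/d_{low})/(d_{high} - d_{low}), about 189.3 m/s^2, which gives a check on the sampled estimate. Real UQ studies replace the one-line model with an expensive simulation, which is why sample counts and sampling designs matter.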

Density Matrix

In quantum mechanics, a density matrix (or density operator) is a matrix that describes the quantum state of a physical system. It allows for the calculation of the probabilities of the outcomes of any measurement performed upon this system, using the Born rule. It is a generalization of the more usual state vectors or wavefunctions: while those can only represent pure states, density matrices can also represent ''mixed states''. Mixed states arise in quantum mechanics in two different situations: first when the preparation of the system is not fully known, and thus one must deal with a statistical ensemble of possible preparations, and second when one wants to describe a physical system which is entangled with another, without describing their combined state. Density matrices are thus crucial tools in areas of quantum mechanics that deal with mixed states, such as quantum statistical mechanics, open quantum systems, quantum decoherence, and quantum information. Definition and ...
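The first situation (an incompletely known preparation) can be made concrete. The sketch below assumes a single qubit with states stored as two-component lists of complex amplitudes. It builds \rho = \sum_i w_i |\psi_i\rangle\langle\psi_i|, applies the Born rule p = \langle\phi|\rho|\phi\rangle, and uses the purity Tr(\rho^2) to tell pure from mixed states.

```python
import math


def outer(psi: list[complex]) -> list[list[complex]]:
    """|psi><psi| as a nested list: entry [r][c] = psi[r] * conj(psi[c])."""
    return [[a * b.conjugate() for b in psi] for a in psi]


def mixture(weights: list[float], states: list[list[complex]]) -> list[list[complex]]:
    """Density matrix of a statistical ensemble: rho = sum_i w_i |psi_i><psi_i|."""
    dim = len(states[0])
    rho = [[0j] * dim for _ in range(dim)]
    for w, psi in zip(weights, states):
        op = outer(psi)
        for r in range(dim):
            for c in range(dim):
                rho[r][c] += w * op[r][c]
    return rho


def born_probability(rho: list[list[complex]], phi: list[complex]) -> float:
    """Born rule for outcome |phi>: p = <phi| rho |phi> = Tr(rho |phi><phi|)."""
    n = len(phi)
    amp = sum(phi[r].conjugate() * rho[r][c] * phi[c] for r in range(n) for c in range(n))
    return amp.real


def purity(rho: list[list[complex]]) -> float:
    """Tr(rho^2): equals 1 for a pure state and is < 1 for a mixed state."""
    n = len(rho)
    return sum(rho[r][c] * rho[c][r] for r in range(n) for c in range(n)).real


s = 1 / math.sqrt(2)
ket0, ket1, plus = [1 + 0j, 0j], [0j, 1 + 0j], [s + 0j, s + 0j]

rho_pure = mixture([1.0], [plus])              # the pure superposition |+>
rho_mixed = mixture([0.5, 0.5], [ket0, ket1])  # 50/50 ensemble of |0> and |1>
for name, rho in (("pure |+>", rho_pure), ("mixed", rho_mixed)):
    print(name, "P(+) =", round(born_probability(rho, plus), 6),
          "purity =", round(purity(rho), 6))
```

Both states give probability 1/2 for outcome |0\rangle, yet they differ for outcome |+\rangle (1 versus 1/2). A state vector alone cannot describe the 50/50 ensemble, which is why the density matrix is needed.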

Quantum Superposition

Quantum superposition is a fundamental principle of quantum mechanics. It states that, much like waves in classical physics, any two (or more) quantum states can be added together ("superposed") and the result will be another valid quantum state; and conversely, that every quantum state can be represented as a sum of two or more other distinct states. Mathematically, it refers to a property of solutions to the Schrödinger equation: since the Schrödinger equation is linear, any linear combination of solutions is also a solution. One physically observable manifestation of the wave nature of quantum systems is the pattern of interference peaks produced by an electron beam in a double-slit experiment. The pattern is very similar to the one obtained by diffraction of classical waves. Another example is a quantum logical qubit state, as used in quantum information processing, which is a quantum superposition of the "basis states" |0\rangle and |1\rangle. Here |0\rangle ...
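A qubit superposition \alpha|0\rangle + \beta|1\rangle yields outcome 0 with probability |\alpha|^2 and outcome 1 with probability |\beta|^2 (the Born rule). The sketch below assumes the basis states are stored as two-component lists of complex amplitudes. It forms a linear combination of |0\rangle and |1\rangle and rescales it to unit length, since physical states are conventionally normalized.

```python
import math


def normalize(amplitudes: list[complex]) -> list[complex]:
    """Scale a state vector to unit length so its Born probabilities sum to 1."""
    norm = math.sqrt(sum(abs(a) ** 2 for a in amplitudes))
    return [a / norm for a in amplitudes]


def superpose(c1: complex, psi1: list[complex],
              c2: complex, psi2: list[complex]) -> list[complex]:
    """Linear combination c1|psi1> + c2|psi2>, renormalized to a valid state."""
    return normalize([c1 * a + c2 * b for a, b in zip(psi1, psi2)])


ket0 = [1 + 0j, 0j]
ket1 = [0j, 1 + 0j]
psi = superpose(1, ket0, 1j, ket1)  # (|0> + i|1>) / sqrt(2)
probs = [abs(a) ** 2 for a in psi]  # probabilities of reading 0 and 1
print(probs)
```

Any choice of nonzero coefficients produces another valid state. The relative phase (here i) does not affect these two probabilities but does affect measurements in other bases.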

Canonical Coordinates

In mathematics and classical mechanics, canonical coordinates are sets of coordinates on phase space which can be used to describe a physical system at any given point in time. Canonical coordinates are used in the Hamiltonian formulation of classical mechanics. A closely related concept also appears in quantum mechanics; see the Stone–von Neumann theorem and canonical commutation relations for details. Just as Hamiltonian mechanics is generalized by symplectic geometry and canonical transformations are generalized by contact transformations, the 19th-century definition of canonical coordinates in classical mechanics may be generalized to a more abstract 20th-century definition of coordinates on the cotangent bundle of a manifold (the mathematical notion of phase space). Definition in classical mechanics: In classical mechanics, canonical coordinates are coordinates q^i and p_i in phase space that are used in the Hamiltonian formalism. The canonical coordinates satisfy ...
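The excerpt breaks off before stating the defining condition. For reference, one standard way to express it is through the fundamental Poisson brackets, shown here with the sign convention written in the first line (conventions differ between textbooks):

```latex
\{f, g\} = \sum_{k} \left( \frac{\partial f}{\partial q^k}\,\frac{\partial g}{\partial p_k}
         - \frac{\partial f}{\partial p_k}\,\frac{\partial g}{\partial q^k} \right),
\qquad
\{q^i, q^j\} = 0, \quad \{p_i, p_j\} = 0, \quad \{q^i, p_j\} = \delta^i_j .
```

Substituting f = q^i and g = p_j into the first line, the second term vanishes and the first survives only for k = i, which gives \delta^i_j.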

Statistical Ensemble (Mathematical Physics)

In physics, specifically statistical mechanics, an ensemble (also statistical ensemble) is an idealization consisting of a large number of virtual copies (sometimes infinitely many) of a system, considered all at once, each of which represents a possible state that the real system might be in. In other words, a statistical ensemble is a set of systems of particles used in statistical mechanics to describe a single system. The concept of an ensemble was introduced by J. Willard Gibbs in 1902. A thermodynamic ensemble is a specific variety of statistical ensemble that, among other properties, is in statistical equilibrium (defined below), and is used to derive the properties of thermodynamic systems from the laws of classical or quantum mechanics. Physical considerations: The ensemble formalises the notion that an experimenter repeating an experiment again and again under the same macroscopic conditions, but unable to control the microscopic details, may expect to observe a rang ...
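An ensemble average is a weighted average over the virtual copies. As one concrete case (the canonical ensemble, a system in equilibrium with a heat bath at temperature T, which this excerpt does not itself describe), a copy is found in state i with probability e^{-E_i/k_B T}/Z, where Z = \sum_i e^{-E_i/k_B T}. The sketch below assumes a hypothetical two-level system with energies 0 and 1 in arbitrary units, with k_B T given in the same units.

```python
import math


def canonical_average_energy(levels: list[float], kT: float) -> float:
    """Canonical-ensemble average <E> = sum_i E_i exp(-E_i/kT) / Z.

    Energies are shifted by their minimum before exponentiating; the shift
    cancels between the numerator and Z but avoids overflow and underflow."""
    e_min = min(levels)
    weights = [math.exp(-(e - e_min) / kT) for e in levels]
    z = sum(weights)
    return sum(e * w for e, w in zip(levels, weights)) / z


# Hypothetical two-level system with energies 0 and 1 (arbitrary units).
for kT in (0.1, 1.0, 10.0):
    print(kT, canonical_average_energy([0.0, 1.0], kT))
```

At low temperature almost every copy sits in the ground state and the average tends to 0; at high temperature both levels are nearly equally populated and the average tends to 1/2.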

Schrödinger Equation

The Schrödinger equation is a linear partial differential equation that governs the wave function of a quantum-mechanical system. It is a key result in quantum mechanics, and its discovery was a significant landmark in the development of the subject. The equation is named after Erwin Schrödinger, who postulated the equation in 1925, and published it in 1926, forming the basis for the work that resulted in his Nobel Prize in Physics in 1933. Conceptually, the Schrödinger equation is the quantum counterpart of Newton's second law in classical mechanics. Given a set of known initial conditions, Newton's second law makes a mathematical prediction as to what path a given physical system will take over time. The Schrödinger equation gives the evolution over time of a wave function, the quantum-mechanical characterization of an isolated physical system. The equation can be derived from the fact that the time-evolution operator must be unitary, and must therefore be generated by t ...
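For a time-independent Hamiltonian, the solution of i\hbar \partial_t \psi = H\psi is \psi(t) = e^{-iHt/\hbar}\psi(0). The propagator is unitary, so the total probability stays 1. The sketch below assumes a two-level system with the hypothetical Hamiltonian H = (\hbar\Omega/2)\sigma_x, in units with \hbar = 1, for which the propagator has a closed form.

```python
import math


def evolve_two_level(psi0: tuple[complex, complex], omega: float,
                     t: float) -> tuple[complex, complex]:
    """psi(t) = exp(-i H t) psi0 for the hypothetical H = (omega / 2) * sigma_x,
    in units with hbar = 1. Because sigma_x**2 = I, the propagator is
    U(t) = cos(omega t / 2) I - i sin(omega t / 2) sigma_x."""
    c, s = math.cos(omega * t / 2), math.sin(omega * t / 2)
    a, b = psi0
    return c * a - 1j * s * b, -1j * s * a + c * b


omega = 2.0
psi0 = (1 + 0j, 0j)  # start in |0>
for t in (0.0, math.pi / (2 * omega), math.pi / omega):
    a, b = evolve_two_level(psi0, omega, t)
    norm = abs(a) ** 2 + abs(b) ** 2
    print(f"t={t:.3f}  P(|1>)={abs(b) ** 2:.3f}  norm={norm:.12f}")
```

The population of |1\rangle oscillates as \sin^2(\Omega t/2) (Rabi oscillation), while the norm stays at 1 for every t, as unitarity requires.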

Hamiltonian Mechanics

Hamiltonian mechanics emerged in 1833 as a reformulation of Lagrangian mechanics. Introduced by Sir William Rowan Hamilton, Hamiltonian mechanics replaces (generalized) velocities \dot q^i used in Lagrangian mechanics with (generalized) ''momenta''. Both theories provide interpretations of classical mechanics and describe the same physical phenomena. Hamiltonian mechanics has a close relationship with geometry (notably, symplectic geometry and Poisson structures) and serves as a link between classical and quantum mechanics. Overview: phase space coordinates (p,q) and Hamiltonian H. Let (M, \mathcal L) be a mechanical system with the configuration space M and the smooth Lagrangian \mathcal L. Select a standard coordinate system (\boldsymbol{q},\boldsymbol{\dot q}) on M. The quantities \textstyle p_i(\boldsymbol{q},\boldsymbol{\dot q},t) ~\stackrel{\text{def}}{=}~ \partial \mathcal L / \partial \dot q^i are called ''momenta'' (also ''generalized momenta'', ''conjugate momenta'', and ''canonical momenta''). For a time instant t, the Legendre transformat ...
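As a worked case, for \mathcal L = \tfrac{m}{2}\dot q^2 - V(q) the definition gives p = \partial \mathcal L/\partial \dot q = m\dot q, and the Legendre transform gives H = p^2/(2m) + V(q). Hamilton's equations are then \dot q = \partial H/\partial p and \dot p = -\partial H/\partial q. The sketch below integrates them with the leapfrog scheme, a symplectic integrator chosen here for illustration rather than taken from the text. It assumes a hypothetical harmonic potential V(q) = kq^2/2.

```python
def leapfrog(q: float, p: float, mass: float, dV_dq, dt: float,
             n_steps: int) -> tuple[float, float]:
    """Integrate Hamilton's equations for a separable H(q, p) = p**2 / (2m) + V(q):
        dq/dt =  dH/dp = p / m
        dp/dt = -dH/dq = -V'(q)
    using the kick-drift-kick leapfrog (velocity Verlet) scheme."""
    for _ in range(n_steps):
        p -= 0.5 * dt * dV_dq(q)  # half kick
        q += dt * p / mass        # full drift
        p -= 0.5 * dt * dV_dq(q)  # half kick
    return q, p


# Hypothetical example: harmonic oscillator V(q) = k q^2 / 2 with m = 1, k = 4.
M, K = 1.0, 4.0


def hamiltonian(q: float, p: float) -> float:
    return p ** 2 / (2 * M) + 0.5 * K * q ** 2


q_end, p_end = leapfrog(1.0, 0.0, M, lambda q: K * q, dt=0.01, n_steps=10_000)
print(hamiltonian(1.0, 0.0), hamiltonian(q_end, p_end))
```

Because leapfrog preserves the symplectic structure of the Hamiltonian flow, the energy error stays small and bounded over long runs instead of drifting steadily, as it would with the plain forward Euler method.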

Quantum State Vector

In quantum physics, a quantum state is a mathematical entity that provides a probability distribution for the outcomes of each possible measurement on a system. Knowledge of the quantum state together with the rules for the system's evolution in time exhausts all that can be predicted about the system's behavior. A mixture of quantum states is again a quantum state. Quantum states that cannot be written as a mixture of other states are called pure quantum states, while all other states are called mixed quantum states. A pure quantum state can be represented by a ray in a Hilbert space over the complex numbers, while mixed states are represented by density matrices, which are positive semidefinite operators that act on Hilbert spaces. Pure states are also known as state vectors or wave functions, the latter term applying particularly when they are represented as functions of position or momentum. For example, when dealing with the energy spectrum of the electron in a hydrogen ato ...
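Representing a pure state by a ray means that vectors differing only by an overall complex factor describe the same state. For normalized vectors that factor is a global phase e^{i\theta}, which drops out of every outcome probability |\langle\phi|\psi\rangle|^2. A relative phase between components, by contrast, changes the state. The sketch below assumes a qubit written as a two-component list and uses |+\rangle = (|0\rangle + |1\rangle)/\sqrt{2} as the measured outcome, an illustrative choice.

```python
import cmath
import math


def outcome_probability(phi: list[complex], psi: list[complex]) -> float:
    """|<phi|psi>|^2: probability of outcome |phi> for a normalized state |psi>."""
    amp = sum(a.conjugate() * b for a, b in zip(phi, psi))
    return abs(amp) ** 2


s = 1 / math.sqrt(2)
plus = [s + 0j, s + 0j]                              # measurement outcome |+>
psi = [0.6 + 0j, 0.8 + 0j]                           # 0.6|0> + 0.8|1>, normalized
global_phase = [cmath.exp(1.234j) * c for c in psi]  # same ray: e^{i theta}|psi>
relative_phase = [psi[0], -psi[1]]                   # different ray: 0.6|0> - 0.8|1>

for name, state in (("psi", psi), ("global", global_phase), ("relative", relative_phase)):
    print(name, round(outcome_probability(plus, state), 6))
```

The globally rephased vector reproduces the original probability (0.98), while flipping the sign of one component changes it to 0.02, even though the probabilities in the |0\rangle, |1\rangle basis are unchanged.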
