
Variational Message Passing

Variational message passing (VMP) is an approximate inference technique for continuous- or discrete-valued Bayesian networks with conjugate-exponential parents, developed by John Winn. VMP was developed as a means of generalizing the approximate variational methods used by such techniques as latent Dirichlet allocation, and works by updating an approximate distribution at each node through messages in the node's Markov blanket.

Likelihood lower bound: Given some set of hidden variables $H$ and observed variables $V$, the goal of approximate inference is to lower-bound the probability that a graphical model is in the configuration $V$. Over some probability distribution $Q$ (to be defined later),

$$\ln P(V) = \sum_H Q(H) \ln \frac{P(H,V)}{P(H \mid V)} = \sum_H Q(H) \Bigg[ \ln \frac{P(H,V)}{Q(H)} - \ln \frac{P(H \mid V)}{Q(H)} \Bigg].$$

So, if we define our lower bound to be

$$L(Q) = \sum_H Q(H) \ln \frac{P(H,V)}{Q(H)},$$

then the log-likelihood $\ln P(V)$ is simply this bound plus the relative entropy between $Q$ and the posterior $P(H \mid V)$. Because the relative entropy is non-negative, the funct ...
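
The decomposition of the log-likelihood into a lower bound plus a relative entropy can be checked numerically. The following is a minimal sketch, assuming a single hidden variable with three states; the joint probabilities and the distribution `q` are made-up illustrative numbers, not values from any real model.

```python
import math

# Hypothetical joint P(H=h, V=v) for one fixed observation v
# (illustrative numbers only; they need not sum to 1 over h).
joint = [0.10, 0.25, 0.05]
evidence = sum(joint)                        # P(V=v)
posterior = [p / evidence for p in joint]    # P(H | V=v)

# An arbitrary approximating distribution Q(H).
q = [0.2, 0.5, 0.3]

# Lower bound L(Q) = sum_H Q(H) ln( P(H,V) / Q(H) ).
lower_bound = sum(qh * math.log(ph / qh) for qh, ph in zip(q, joint))

# Relative entropy KL(Q || P(H|V)) = sum_H Q(H) ln( Q(H) / P(H|V) ).
kl = sum(qh * math.log(qh / ph) for qh, ph in zip(q, posterior))

log_evidence = math.log(evidence)
print(f"ln P(V)      = {log_evidence:.6f}")
print(f"L(Q) + KL    = {lower_bound + kl:.6f}")
print(f"L(Q) alone   = {lower_bound:.6f}")
```

Because the relative entropy term is never negative, any choice of `q` gives `lower_bound <= log_evidence`, with equality only when `q` matches the posterior.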

Approximate Inference

Approximate inference methods make it possible to learn realistic models from big data by trading off computation time for accuracy, when exact learning and inference are computationally intractable.

Major method classes:

* Laplace's approximation
* Variational Bayesian methods
* Markov chain Monte Carlo
* Expectation propagation
* Markov random fields
* Bayesian networks
  * Variational message passing
* Loopy and generalized belief propagation

See also:

* Statistical inference
* Fuzzy logic
* Data mining

External links:

* Tom Minka, Microsoft Research (Nov 2, 2009). "Machine Learning Summer School (MLSS), Cambridge 2009, Approximate Inference". http://videolectures.net/mlss09uk_minka_ai/ ...

Exponential Family

In probability and statistics, an exponential family is a parametric set of probability distributions of a certain form, specified below. This special form is chosen for mathematical convenience, since its algebraic properties let one calculate expectations and covariances by differentiation, and for generality, since exponential families are in a sense very natural sets of distributions to consider. The term exponential class is sometimes used in place of "exponential family", as is the older term Koopman–Darmois family. The terms "distribution" and "family" are often used loosely: specifically, ''an'' exponential family is a ''set'' of distributions, where the specific distribution varies with the parameter; however, a parametric ''family'' of distributions is often referred to as "''a'' distribution" (like "the normal distribution", meaning "the family of normal distributions"), and the set of all exponential families is sometimes l ...
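
The remark about calculating expectations by differentiation can be illustrated with a small sketch. It assumes the Poisson family written in natural form, with natural parameter η = ln(rate) and log-partition function A(η) = exp(η); this choice of family and the finite-difference helpers are illustrative assumptions, not part of the text above.

```python
import math

def log_partition(eta):
    # Log-partition function A(eta) = exp(eta) of the Poisson family,
    # where eta = ln(rate) is the natural parameter.
    return math.exp(eta)

def derivative(f, x, h=1e-5):
    # Central finite-difference approximation of f'(x).
    return (f(x + h) - f(x - h)) / (2 * h)

def second_derivative(f, x, h=1e-4):
    # Central finite-difference approximation of f''(x).
    return (f(x + h) - 2 * f(x) + f(x - h)) / (h * h)

rate = 3.0
eta = math.log(rate)

# For an exponential family, A'(eta) is the mean of the sufficient
# statistic and A''(eta) is its variance; for the Poisson both equal the rate.
mean_from_derivative = derivative(log_partition, eta)
variance_from_derivative = second_derivative(log_partition, eta)
print(mean_from_derivative, variance_from_derivative)
```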

Poisson Distribution

In probability theory and statistics, the Poisson distribution is a discrete probability distribution that expresses the probability of a given number of events occurring in a fixed interval of time or space if these events occur with a known constant mean rate and independently of the time since the last event. It is named after French mathematician Siméon Denis Poisson. The Poisson distribution can also be used for the number of events in other specified interval types such as distance, area, or volume. For instance, a call center receives an average of 180 calls per hour, 24 hours a day. The calls are independent; receiving one does not change the probability of when the next one will arrive. The number of calls received during any minute has a Poisson probability distribution with mean 3: the most likely numbers are 2 and 3, but 1 and 4 are also likely; there is a small probability of it being as low as zero and a very small probability that it could be 10. ...
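
The call-center figures can be reproduced with the standard Poisson probability mass function, P(k) = e^(−m) m^k / k!. The sketch below is a minimal illustration; the function and variable names are chosen here for the example.

```python
import math

def poisson_pmf(k, mean):
    # Probability of exactly k events when the expected count is `mean`.
    return math.exp(-mean) * mean**k / math.factorial(k)

calls_per_hour = 180
mean_per_minute = calls_per_hour / 60   # 3 calls per minute on average

# Probabilities of receiving 0..10 calls in a given minute.
probabilities = {k: poisson_pmf(k, mean_per_minute) for k in range(11)}
for k, p in probabilities.items():
    print(f"{k:2d} calls: {p:.4f}")
```

With a mean of 3, the counts 2 and 3 are exactly equally likely, since P(3)/P(2) = 3/3 = 1.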

Dirichlet Distribution

In probability and statistics, the Dirichlet distribution (after Peter Gustav Lejeune Dirichlet), often denoted $\operatorname{Dir}(\boldsymbol\alpha)$, is a family of continuous multivariate probability distributions parameterized by a vector $\boldsymbol\alpha$ of positive reals. It is a multivariate generalization of the beta distribution, hence its alternative name of multivariate beta distribution (MBD). Dirichlet distributions are commonly used as prior distributions in Bayesian statistics, and in fact, the Dirichlet distribution is the conjugate prior of the categorical distribution and multinomial distribution. The infinite-dimensional generalization of the Dirichlet distribution is the ''Dirichlet process''.

Definitions. Probability density function: The Dirichlet distribution of order $K \ge 2$ with parameters $\alpha_1, \ldots, \alpha_K > 0$ has a probability density function with respect to Leb ...
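
The conjugacy with the categorical distribution mentioned above means that observing category counts simply adds those counts to the Dirichlet parameters. The following is a minimal sketch under that standard result; the prior, the counts, and the helper names are hypothetical.

```python
def dirichlet_posterior(alpha, counts):
    # Conjugate update: posterior parameters are prior parameters plus counts.
    return [a + n for a, n in zip(alpha, counts)]

def dirichlet_mean(alpha):
    # Mean of a Dirichlet distribution: each parameter over the total.
    total = sum(alpha)
    return [a / total for a in alpha]

prior_alpha = [1.0, 1.0, 1.0]   # uniform prior over three categories (assumed)
counts = [5, 2, 1]              # hypothetical observed category counts

posterior_alpha = dirichlet_posterior(prior_alpha, counts)
posterior_mean = dirichlet_mean(posterior_alpha)
print(posterior_alpha)
print(posterior_mean)
```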

Gamma Distribution

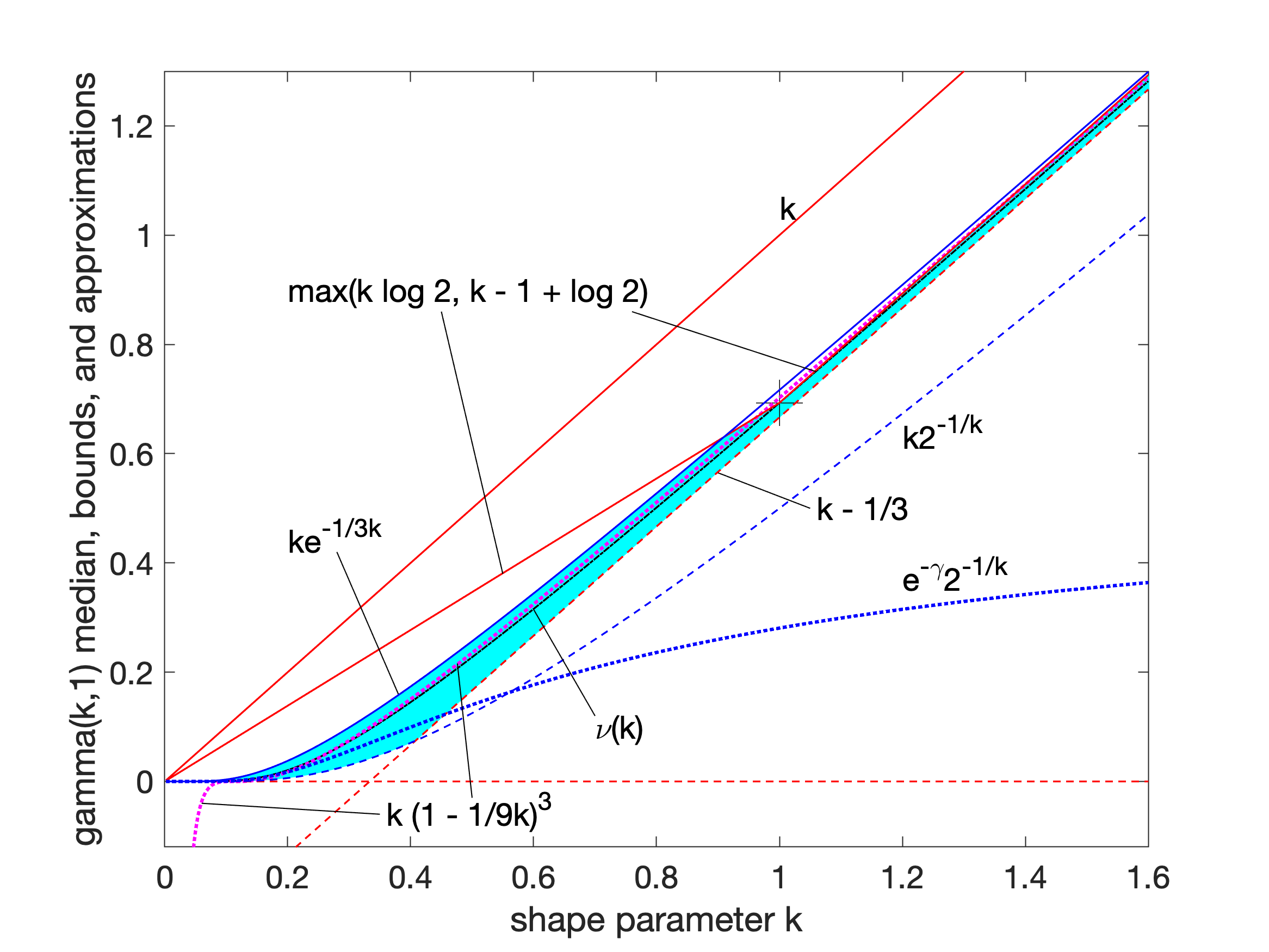

In probability theory and statistics, the gamma distribution is a two-parameter family of continuous probability distributions. The exponential distribution, Erlang distribution, and chi-square distribution are special cases of the gamma distribution. There are two equivalent parameterizations in common use:

1. With a shape parameter $k$ and a scale parameter $\theta$.
2. With a shape parameter $\alpha = k$ and an inverse scale parameter $\beta = 1/\theta$, called a rate parameter.

In each of these forms, both parameters are positive real numbers. The gamma distribution is the maximum entropy probability distribution (both with respect to a uniform base measure and a $1/x$ base measure) for a random variable $X$ for which $E[X] = k\theta = \alpha/\beta$ is fixed and greater than zero, and $E[\ln X] = \psi(k) + \ln\theta = \psi(\alpha) - \ln\beta$ is fixed ($\psi$ is the digamma function).

Definitions: The parameterization with $k$ and $\theta$ appears to be more common ...
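
The equivalence of the two parameterizations can be checked directly by evaluating both forms of the density at the same points. This is a minimal sketch using the standard gamma density formulas; the parameter values are arbitrary.

```python
import math

def gamma_pdf_shape_scale(x, k, theta):
    # Density with shape k and scale theta.
    return x**(k - 1) * math.exp(-x / theta) / (math.gamma(k) * theta**k)

def gamma_pdf_shape_rate(x, alpha, beta):
    # Density with shape alpha and rate beta = 1 / theta.
    return beta**alpha * x**(alpha - 1) * math.exp(-beta * x) / math.gamma(alpha)

k, theta = 2.5, 2.0             # arbitrary illustrative values
alpha, beta = k, 1.0 / theta    # the same distribution in rate form

points = [0.5, 1.0, 4.0]
differences = [
    abs(gamma_pdf_shape_scale(x, k, theta) - gamma_pdf_shape_rate(x, alpha, beta))
    for x in points
]
mean_scale_form = k * theta
mean_rate_form = alpha / beta
print(differences, mean_scale_form, mean_rate_form)
```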

Mean

There are several kinds of mean in mathematics, especially in statistics. Each mean serves to summarize a given group of data, often to better understand the overall value (magnitude and sign) of a given data set. For a data set, the ''arithmetic mean'', also known as "arithmetic average", is a measure of central tendency of a finite set of numbers: specifically, the sum of the values divided by the number of values. The arithmetic mean of a set of numbers $x_1, x_2, \ldots, x_n$ is typically denoted using an overhead bar, $\bar{x}$. If the data set is based on a series of observations obtained by sampling from a statistical population, the arithmetic mean is called the ''sample mean'' ($\bar{x}$) to distinguish it from the mean, or expected value, of the underlying distribution, the ''population mean'' (denoted $\mu$ or $\mu_x$) (Underhill, L.G.; Bradfield, D. (1998), ''Introstat'', Juta and Company Ltd., p. 181). Outside probability and statistics, a wide range of other notions of m ...
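
As a short worked example of the definition, the arithmetic mean is the sum of the values divided by their count. The data below are made up for illustration; `statistics.fmean` from the Python standard library is shown only as a cross-check.

```python
import statistics

values = [2, 4, 4, 5, 10]   # hypothetical data set

# Sum of the values divided by the number of values.
arithmetic_mean = sum(values) / len(values)

# The standard library computes the same quantity.
library_mean = statistics.fmean(values)
print(arithmetic_mean, library_mean)
```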

Gaussian Distribution

In statistics, a normal distribution or Gaussian distribution is a type of continuous probability distribution for a real-valued random variable. The general form of its probability density function is

$$f(x) = \frac{1}{\sigma\sqrt{2\pi}} e^{-\frac{1}{2}\left(\frac{x-\mu}{\sigma}\right)^2}.$$

The parameter $\mu$ is the mean or expectation of the distribution (and also its median and mode), while the parameter $\sigma$ is its standard deviation. The variance of the distribution is $\sigma^2$. A random variable with a Gaussian distribution is said to be normally distributed, and is called a normal deviate. Normal distributions are important in statistics and are often used in the natural and social sciences to represent real-valued random variables whose distributions are not known. Their importance is partly due to the central limit theorem. It states that, under some conditions, the average of many samples (observations) of a random variable with finite mean and variance is itself a random variable whose distribution converges to a normal distr ...
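
The roles of the two parameters can be confirmed numerically: integrating the density should give total probability 1, mean μ, and variance σ². The sketch below uses a simple trapezoidal rule over μ ± 10σ; the parameter values and helper names are illustrative assumptions.

```python
import math

def normal_pdf(x, mu, sigma):
    # Gaussian probability density with mean mu and standard deviation sigma.
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))

def integrate(f, a, b, n=20000):
    # Composite trapezoidal rule on [a, b] with n subintervals.
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b))
    for i in range(1, n):
        total += f(a + i * h)
    return total * h

mu, sigma = 1.0, 2.0                       # arbitrary illustrative values
lo, hi = mu - 10 * sigma, mu + 10 * sigma  # tails beyond this are negligible

total_probability = integrate(lambda x: normal_pdf(x, mu, sigma), lo, hi)
mean = integrate(lambda x: x * normal_pdf(x, mu, sigma), lo, hi)
variance = integrate(lambda x: (x - mu) ** 2 * normal_pdf(x, mu, sigma), lo, hi)
print(total_probability, mean, variance)
```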

Normalization Factor

The concept of a normalizing constant arises in probability theory and a variety of other areas of mathematics. The normalizing constant is used to reduce any probability function to a probability density function with total probability of one.

Definition: In probability theory, a normalizing constant is a constant by which an everywhere non-negative function must be multiplied so the area under its graph is 1, e.g., to make it a probability density function or a probability mass function.

Examples: If we start from the simple Gaussian function

$$p(x) = e^{-x^2/2}, \quad x \in (-\infty, \infty),$$

we have the corresponding Gaussian integral

$$\int_{-\infty}^\infty p(x) \, dx = \int_{-\infty}^\infty e^{-x^2/2} \, dx = \sqrt{2\pi}.$$

Now if we use the latter's reciprocal value as a normalizing constant for the former, defining a function $\varphi(x)$ as

$$\varphi(x) = \frac{1}{\sqrt{2\pi}} p(x) = \frac{1}{\sqrt{2\pi}} e^{-x^2/2},$$

so that its integral is unity,

$$\int_{-\infty}^\infty \varphi(x) \, dx = \int_{-\infty}^\infty \frac{1}{\sqrt{2\pi}} e^{-x^2/2} \, dx = 1,$$

then the function $\varphi(x)$ is a probability d ...
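
The Gaussian example can be reproduced numerically: integrate the unnormalized function, take the reciprocal as the normalizing constant, and confirm that the rescaled function integrates to one. This is a minimal sketch; the integration range of ±12 and the trapezoidal helper are choices made here for illustration.

```python
import math

def unnormalized(x):
    # The simple Gaussian function p(x) = exp(-x^2 / 2).
    return math.exp(-x * x / 2.0)

def trapezoid(f, a, b, n=20000):
    # Composite trapezoidal rule on [a, b] with n subintervals.
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b))
    for i in range(1, n):
        total += f(a + i * h)
    return total * h

# The tails beyond |x| = 12 contribute a negligible amount.
integral = trapezoid(unnormalized, -12.0, 12.0)
normalizing_constant = 1.0 / integral

def phi(x):
    # Normalized density obtained by multiplying by the constant.
    return normalizing_constant * unnormalized(x)

area = trapezoid(phi, -12.0, 12.0)
print(integral, math.sqrt(2.0 * math.pi), area)
```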

Conjugate Prior

In Bayesian probability theory, if the posterior distribution $p(\theta \mid x)$ is in the same probability distribution family as the prior probability distribution $p(\theta)$, the prior and posterior are then called conjugate distributions, and the prior is called a conjugate prior for the likelihood function $p(x \mid \theta)$. A conjugate prior is an algebraic convenience, giving a closed-form expression for the posterior; otherwise, numerical integration may be necessary. Further, conjugate priors may give intuition by more transparently showing how a likelihood function updates a prior distribution. The concept, as well as the term "conjugate prior", was introduced by Howard Raiffa and Robert Schlaifer in their work on Bayesian decision theory (Howard Raiffa and Robert Schlaifer, ''Applied Statistical Decision Theory'', Division of Research, Graduate School of Business Administration, Harvard University, 1961). A similar concept had been discovered independently by George ...
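
The closed-form property can be seen in a small sketch. It assumes a Beta prior with a Bernoulli likelihood, a standard conjugate pair chosen here for illustration (the text above does not name a specific pair); the prior parameters and data are hypothetical. If the prior is conjugate, the product of prior and likelihood must be a constant multiple of a Beta density with updated parameters.

```python
import math

def beta_pdf(t, a, b):
    # Beta density on (0, 1) with parameters a and b.
    norm = math.gamma(a + b) / (math.gamma(a) * math.gamma(b))
    return norm * t ** (a - 1) * (1 - t) ** (b - 1)

a, b = 2.0, 3.0                  # hypothetical prior parameters
data = [1, 0, 1, 1, 0, 1]        # hypothetical Bernoulli observations
successes = sum(data)
failures = len(data) - successes

def unnormalized_posterior(t):
    # Prior density times the Bernoulli likelihood of the data.
    return beta_pdf(t, a, b) * t ** successes * (1 - t) ** failures

# Closed-form conjugate update: Beta(a + successes, b + failures).
posterior_a, posterior_b = a + successes, b + failures

# The ratio should be the same constant (the marginal likelihood) at every point.
grid = [0.1, 0.3, 0.5, 0.7, 0.9]
ratios = [unnormalized_posterior(t) / beta_pdf(t, posterior_a, posterior_b) for t in grid]
print(posterior_a, posterior_b, ratios)
```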

Natural Parameter

In probability and statistics, an exponential family is a parametric set of probability distributions of a certain form, specified below. This special form is chosen for mathematical convenience, since its algebraic properties let one calculate expectations and covariances by differentiation, and for generality, since exponential families are in a sense very natural sets of distributions to consider. The term exponential class is sometimes used in place of "exponential family", as is the older term Koopman–Darmois family. The terms "distribution" and "family" are often used loosely: specifically, ''an'' exponential family is a ''set'' of distributions, where the specific distribution varies with the parameter; however, a parametric ''family'' of distributions is often referred to as "''a'' distribution" (like "the normal distribution", meaning "the family of normal distributions"), and the set of all exponential families is sometimes l ...

Bayesian Networks

A Bayesian network (also known as a Bayes network, Bayes net, belief network, or decision network) is a probabilistic graphical model that represents a set of variables and their conditional dependencies via a directed acyclic graph (DAG). Bayesian networks are ideal for taking an event that occurred and predicting the likelihood that any one of several possible known causes was the contributing factor. For example, a Bayesian network could represent the probabilistic relationships between diseases and symptoms. Given symptoms, the network can be used to compute the probabilities of the presence of various diseases. Efficient algorithms can perform inference and learning in Bayesian networks. Bayesian networks that model sequences of variables (''e.g.'' speech signals or protein sequences) are called dynamic Bayesian networks. Generalizations of Bayesian networks that can represent and solve decision problems under uncertainty are called influence diagrams. Graphical mo ...
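
The disease and symptom example can be made concrete with the smallest possible network: one disease node with one symptom node as its child. The sketch below applies Bayes' rule to this two-node graph; all probabilities are invented for illustration and do not describe any real condition.

```python
# Hypothetical two-node network: Disease -> Symptom.
p_disease = 0.01                   # prior P(disease), assumed
p_symptom_given_disease = 0.90     # P(symptom | disease), assumed
p_symptom_given_healthy = 0.05     # P(symptom | no disease), assumed

# Joint probabilities of each disease state together with the symptom.
joint_disease = p_disease * p_symptom_given_disease
joint_healthy = (1.0 - p_disease) * p_symptom_given_healthy

# Marginal probability of observing the symptom.
p_symptom = joint_disease + joint_healthy

# Posterior probability of the disease given the symptom (Bayes' rule).
p_disease_given_symptom = joint_disease / p_symptom
print(f"P(disease | symptom) = {p_disease_given_symptom:.4f}")
```

Even with a fairly reliable symptom, the posterior stays well below one half here because the assumed prior probability of the disease is small.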

Sufficient Statistic

In statistics, a statistic is ''sufficient'' with respect to a statistical model and its associated unknown parameter if "no other statistic that can be calculated from the same sample provides any additional information as to the value of the parameter". In particular, a statistic is sufficient for a family of probability distributions if the sample from which it is calculated gives no more information than the statistic does as to which of those probability distributions is the sampling distribution. A related concept is that of linear sufficiency, which is weaker than ''sufficiency'' but can be applied in some cases where there is no sufficient statistic, although it is restricted to linear estimators. The Kolmogorov structure function deals with individual finite data; the related notion there is the algorithmic sufficient statistic. The concept is due to Sir Ronald Fisher in 1920. Stephen Stigler noted in 1973 that the concept of sufficiency had fallen out of favor in des ...
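
A small sketch can show what sufficiency means in practice. It assumes independent Bernoulli observations, for which the number of successes is a sufficient statistic; this model and the two samples are chosen here for illustration. Two samples with the same number of successes yield the same likelihood for every parameter value, so the order of outcomes carries no further information about the parameter.

```python
import math

def bernoulli_likelihood(sample, p):
    # Probability of observing this exact sequence of 0/1 outcomes.
    likelihood = 1.0
    for outcome in sample:
        likelihood *= p if outcome == 1 else (1.0 - p)
    return likelihood

# Two hypothetical samples with the same sum (three successes in five trials).
sample_a = [1, 1, 0, 0, 1]
sample_b = [0, 1, 1, 1, 0]

parameter_grid = [0.1, 0.25, 0.5, 0.75, 0.9]
likelihoods_a = [bernoulli_likelihood(sample_a, p) for p in parameter_grid]
likelihoods_b = [bernoulli_likelihood(sample_b, p) for p in parameter_grid]

same_likelihood = all(
    math.isclose(la, lb, rel_tol=1e-12)
    for la, lb in zip(likelihoods_a, likelihoods_b)
)
print(same_likelihood)
```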