Kernel-independent Component Analysis

In statistics, kernel-independent component analysis (kernel ICA) is an efficient algorithm for independent component analysis which estimates source components by optimizing a generalized variance contrast function based on representations in a reproducing kernel Hilbert space. Those contrast functions use the notion of mutual information as a measure of statistical independence.

Main idea: Kernel ICA is based on the idea that correlations between two random variables can be represented in a reproducing kernel Hilbert space (RKHS), denoted by $\mathcal{F}$, associated with a feature map $L_x: \mathcal{F} \mapsto \mathbb{R}$ defined for a fixed $x \in \mathbb{R}$. The $\mathcal{F}$-correlation between two random variables $X$ and $Y$ is defined as

$$\rho_{\mathcal{F}}(X,Y) = \max_{f, g \in \mathcal{F}} \operatorname{corr}\left( \langle L_X, f \rangle, \langle L_Y, g \rangle \right)$$

where the functions $f, g: \mathbb{R}$ ...

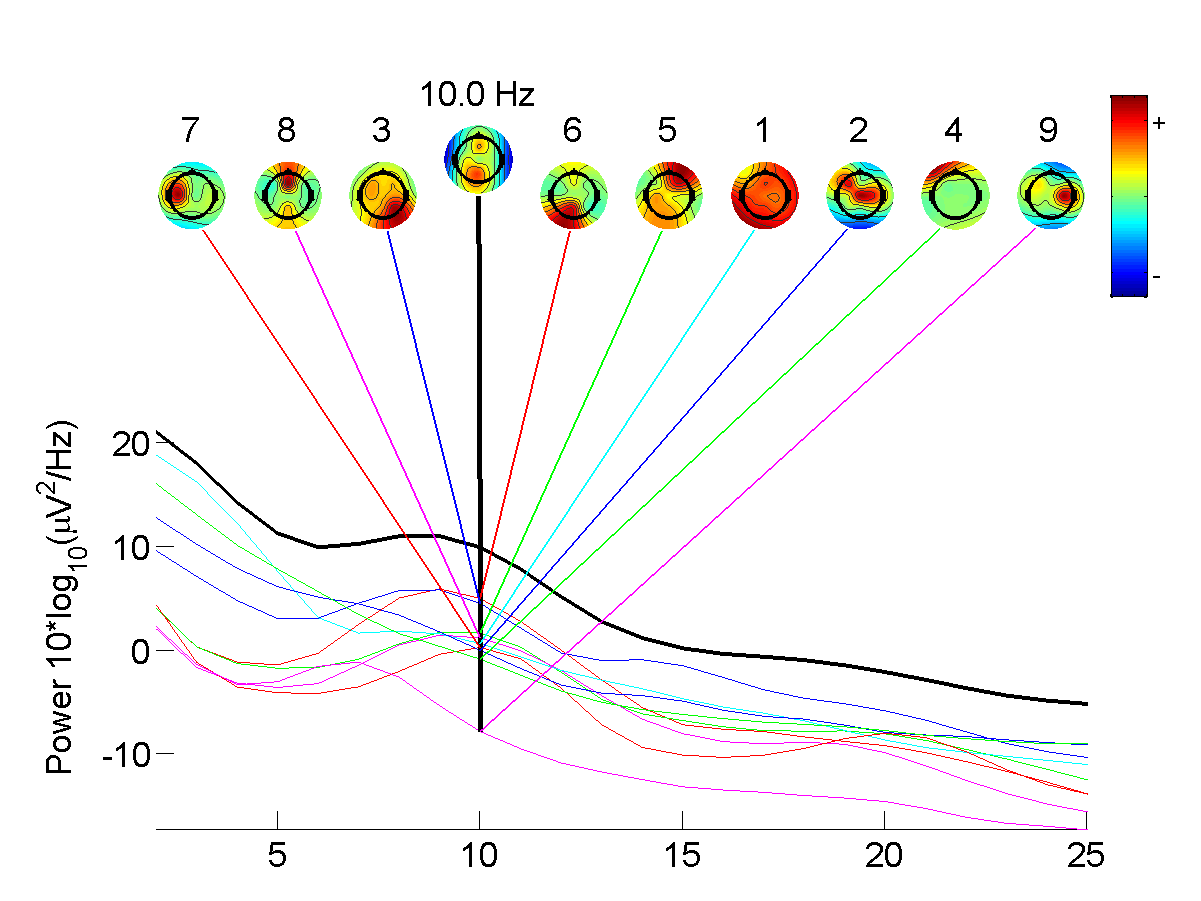

Independent Component Analysis

In signal processing, independent component analysis (ICA) is a computational method for separating a multivariate signal into additive subcomponents. This is done by assuming that at most one subcomponent is Gaussian and that the subcomponents are statistically independent from each other. ICA is a special case of blind source separation. A common example application is the "cocktail party problem" of listening in on one person's speech in a noisy room.

Introduction: Independent component analysis attempts to decompose a multivariate signal into independent non-Gaussian signals. As an example, sound is usually a signal that is composed of the numerical addition, at each time t, of signals from several sources. The question then is whether it is possible to separate these contributing sources from the observed total signal. When the statistical independence assumption is correct, blind ICA separation of a mixed signal gives very good results. It is also used for signals that a ...
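The mixing-and-separation idea described above can be illustrated with a small toy sketch. It is not any particular published ICA algorithm: it assumes two hypothetical sources (uniform noise and a sine tone), a made-up mixing matrix, whitening via a 2x2 Cholesky factor, and a brute-force search for the rotation that maximizes the absolute excess kurtosis (one common measure of non-Gaussianity). All names and constants are assumptions chosen for illustration.

```python
import math
import random

def center(v):
    """Subtract the sample mean."""
    m = sum(v) / len(v)
    return [a - m for a in v]

def mean_prod(u, v):
    """Sample mean of the elementwise product."""
    return sum(a * b for a, b in zip(u, v)) / len(u)

def excess_kurtosis(v):
    """Fourth standardized moment minus 3 (zero for a Gaussian)."""
    v = center(v)
    m2 = mean_prod(v, v)
    m4 = sum(a ** 4 for a in v) / len(v)
    return m4 / (m2 * m2) - 3.0

def abs_corr(u, v):
    """Absolute Pearson correlation."""
    u, v = center(u), center(v)
    return abs(mean_prod(u, v)) / math.sqrt(mean_prod(u, u) * mean_prod(v, v))

rng = random.Random(0)
n = 2000
s1 = [rng.uniform(-1.0, 1.0) for _ in range(n)]  # hypothetical noise-like source
s2 = [math.sin(0.37 * t) for t in range(n)]       # hypothetical tone-like source

# Observed signals: each one is a numerical sum of both sources (assumed mixing weights).
x1 = center([p + 0.8 * q for p, q in zip(s1, s2)])
x2 = center([0.7 * p + q for p, q in zip(s1, s2)])

# Whiten: multiply by the inverse Cholesky factor of the 2x2 sample covariance.
c11, c12, c22 = mean_prod(x1, x1), mean_prod(x1, x2), mean_prod(x2, x2)
la = math.sqrt(c11)
lb = c12 / la
lc = math.sqrt(c22 - lb * lb)
z1 = [u / la for u in x1]
z2 = [-lb * u / (la * lc) + v / lc for u, v in zip(x1, x2)]

def rotate(theta):
    """Rotate the whitened pair (z1, z2) by -theta."""
    ct, st = math.cos(theta), math.sin(theta)
    y1 = [ct * u + st * v for u, v in zip(z1, z2)]
    y2 = [-st * u + ct * v for u, v in zip(z1, z2)]
    return y1, y2

# Grid search over rotations; a quarter turn covers all cases up to order and sign.
best_theta, best_score = 0.0, -1.0
for deg in range(90):
    theta = math.radians(deg)
    y1, y2 = rotate(theta)
    score = abs(excess_kurtosis(y1)) + abs(excess_kurtosis(y2))
    if score > best_score:
        best_theta, best_score = theta, score

y1, y2 = rotate(best_theta)

# ICA recovers sources only up to permutation and sign, so test both pairings.
direct = min(abs_corr(y1, s1), abs_corr(y2, s2))
swapped = min(abs_corr(y1, s2), abs_corr(y2, s1))
recovery = max(direct, swapped)
print(round(recovery, 3))
```

The comparison against `s1` and `s2` is only possible because this is a simulation; in a genuinely blind setting the sources are unknown and only the contrast score is available.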

Reproducing Kernel Hilbert Space

In functional analysis (a branch of mathematics), a reproducing kernel Hilbert space (RKHS) is a Hilbert space of functions in which point evaluation is a continuous linear functional. Roughly speaking, this means that if two functions $f$ and $g$ in the RKHS are close in norm, i.e., $\|f-g\|$ is small, then $f$ and $g$ are also pointwise close, i.e., $|f(x)-g(x)|$ is small for all $x$. The converse does not need to be true. Informally, this can be shown by looking at the supremum norm: the sequence of functions $\sin^n(x)$ converges pointwise, but does not converge uniformly, i.e. does not converge with respect to the supremum norm (note that this is not a counterexample because the supremum norm does not arise from any inner product due to not satisfying the parallelogram law). It is not entirely straightforward to construct a Hilbert space of functions which is not an RKHS. Some examples, however, have been found. Note that $L^2$ spaces are not Hilbert spaces of functions (and hence not RK ...
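The "close in norm implies pointwise close" statement can be checked numerically in a simple case. The sketch below assumes a Gaussian kernel (for which $k(x,x)=1$) and two hypothetical functions built as finite kernel expansions; the centers and coefficients are made up. Under those assumptions the reproducing property and the Cauchy-Schwarz inequality give $|f(x)-g(x)| \le \|f-g\|\sqrt{k(x,x)} = \|f-g\|$.

```python
import math

def k(x, y, gamma=1.0):
    """Gaussian kernel; k(x, x) = 1 for every x."""
    return math.exp(-gamma * (x - y) ** 2)

centers = [-1.0, 0.0, 0.5, 2.0]   # hypothetical expansion points
a = [0.7, -0.3, 1.1, 0.4]         # coefficients of f (made up)
b = [0.6, -0.2, 1.0, 0.5]         # coefficients of g (made up)

def evaluate(coef, x):
    """Evaluate the function sum_i coef[i] * k(centers[i], .) at the point x."""
    return sum(c * k(xi, x) for c, xi in zip(coef, centers))

def rkhs_norm(coef):
    """RKHS norm of a finite kernel expansion: sqrt(coef^T K coef)."""
    sq = sum(
        ci * cj * k(xi, xj)
        for ci, xi in zip(coef, centers)
        for cj, xj in zip(coef, centers)
    )
    return math.sqrt(max(sq, 0.0))

# f - g is itself a kernel expansion with coefficients a - b.
diff = [ai - bi for ai, bi in zip(a, b)]
norm_diff = rkhs_norm(diff)

# Largest pointwise gap on a grid; it should never exceed the RKHS norm of f - g.
grid = [-3.0 + 0.1 * i for i in range(61)]
max_gap = max(abs(evaluate(a, x) - evaluate(b, x)) for x in grid)

print(max_gap <= norm_diff)
```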

Independence (Probability Theory)

Independence is a fundamental notion in probability theory, as in statistics and the theory of stochastic processes. Two events are independent, statistically independent, or stochastically independent if, informally speaking, the occurrence of one does not affect the probability of occurrence of the other or, equivalently, does not affect the odds. Similarly, two random variables are independent if the realization of one does not affect the probability distribution of the other. When dealing with collections of more than two events, two notions of independence need to be distinguished. The events are called pairwise independent if any two events in the collection are independent of each other, while mutual independence (or collective independence) of events means, informally speaking, that each event is independent of any combination of other events in the collection. A similar notion exists for collections of random variables. Mutual independence implies pairwise independen ...
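The difference between pairwise and mutual independence can be made concrete with a standard textbook example, sketched here under the assumption of two fair coin tosses. The events A, B and C are illustrative choices, not taken from the text above: A is "first toss is heads", B is "second toss is heads", and C is "both tosses agree".

```python
from fractions import Fraction
from itertools import product

# Sample space: two fair coin tosses, four equally likely outcomes.
outcomes = list(product("HT", repeat=2))

def prob(event):
    """Exact probability of an event given as a predicate on outcomes."""
    return Fraction(sum(1 for o in outcomes if event(o)), len(outcomes))

def A(o):
    return o[0] == "H"      # first toss is heads

def B(o):
    return o[1] == "H"      # second toss is heads

def C(o):
    return o[0] == o[1]     # both tosses agree

def both(e1, e2):
    """Intersection of two events."""
    return lambda o: e1(o) and e2(o)

# Pairwise independence: every pair factorizes.
pairwise = all(
    prob(both(e1, e2)) == prob(e1) * prob(e2)
    for e1, e2 in [(A, B), (A, C), (B, C)]
)

# Mutual independence additionally requires the triple intersection to factorize.
p_all = prob(lambda o: A(o) and B(o) and C(o))
mutual = pairwise and p_all == prob(A) * prob(B) * prob(C)

print(pairwise)  # True
print(mutual)    # False
```

Here the triple intersection has probability 1/4 while the product of the three individual probabilities is 1/8, so the events are pairwise but not mutually independent.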

Whitening Transformation

A whitening transformation or sphering transformation is a linear transformation that transforms a vector of random variables with a known covariance matrix into a set of new variables whose covariance is the identity matrix, meaning that they are uncorrelated and each have variance 1. The transformation is called "whitening" because it changes the input vector into a white noise vector. Several other transformations are closely related to whitening:

1. the decorrelation transform removes only the correlations but leaves variances intact,
2. the standardization transform sets variances to 1 but leaves correlations intact,
3. a coloring transformation transforms a vector of white random variables into a random vector with a specified covariance matrix.

Definition: Suppose $X$ is a random (column) vector with non-singular covariance matrix $\Sigma$ and mean $0$. Then the transformation $Y = W X$ with a whitening matrix $W$ satisfying the condition $W^{\mathrm{T}} W = \Sigma^{-1}$ yields the whitened ran ...
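As a small numerical check of the condition $W^{\mathrm{T}} W = \Sigma^{-1}$, the sketch below builds one possible whitening matrix for a 2x2 case. It assumes a made-up covariance matrix and uses the inverse of the Cholesky factor, $W = L^{-1}$ with $\Sigma = L L^{\mathrm{T}}$; this is only one of several valid choices of $W$, and the helper names are hypothetical.

```python
import math

def matmul(A, B):
    """Product of two small matrices stored as lists of rows."""
    return [
        [sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
        for i in range(len(A))
    ]

def transpose(A):
    return [list(row) for row in zip(*A)]

def cholesky_whitening_2x2(sigma):
    """Return W = L^{-1}, where sigma = L L^T and L = [[a, 0], [b, c]]."""
    a = math.sqrt(sigma[0][0])
    b = sigma[1][0] / a
    c = math.sqrt(sigma[1][1] - b * b)
    return [[1.0 / a, 0.0], [-b / (a * c), 1.0 / c]]

sigma = [[4.0, 1.2], [1.2, 1.0]]   # hypothetical covariance matrix
W = cholesky_whitening_2x2(sigma)

# Covariance of Y = W X is W Sigma W^T, which should be the identity.
cov_Y = matmul(matmul(W, sigma), transpose(W))

# The defining condition: W^T W should equal the inverse of Sigma.
WtW = matmul(transpose(W), W)
det = sigma[0][0] * sigma[1][1] - sigma[0][1] * sigma[1][0]
sigma_inv = [
    [sigma[1][1] / det, -sigma[0][1] / det],
    [-sigma[1][0] / det, sigma[0][0] / det],
]

for row in cov_Y:
    print([round(v, 6) for v in row])
```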