|

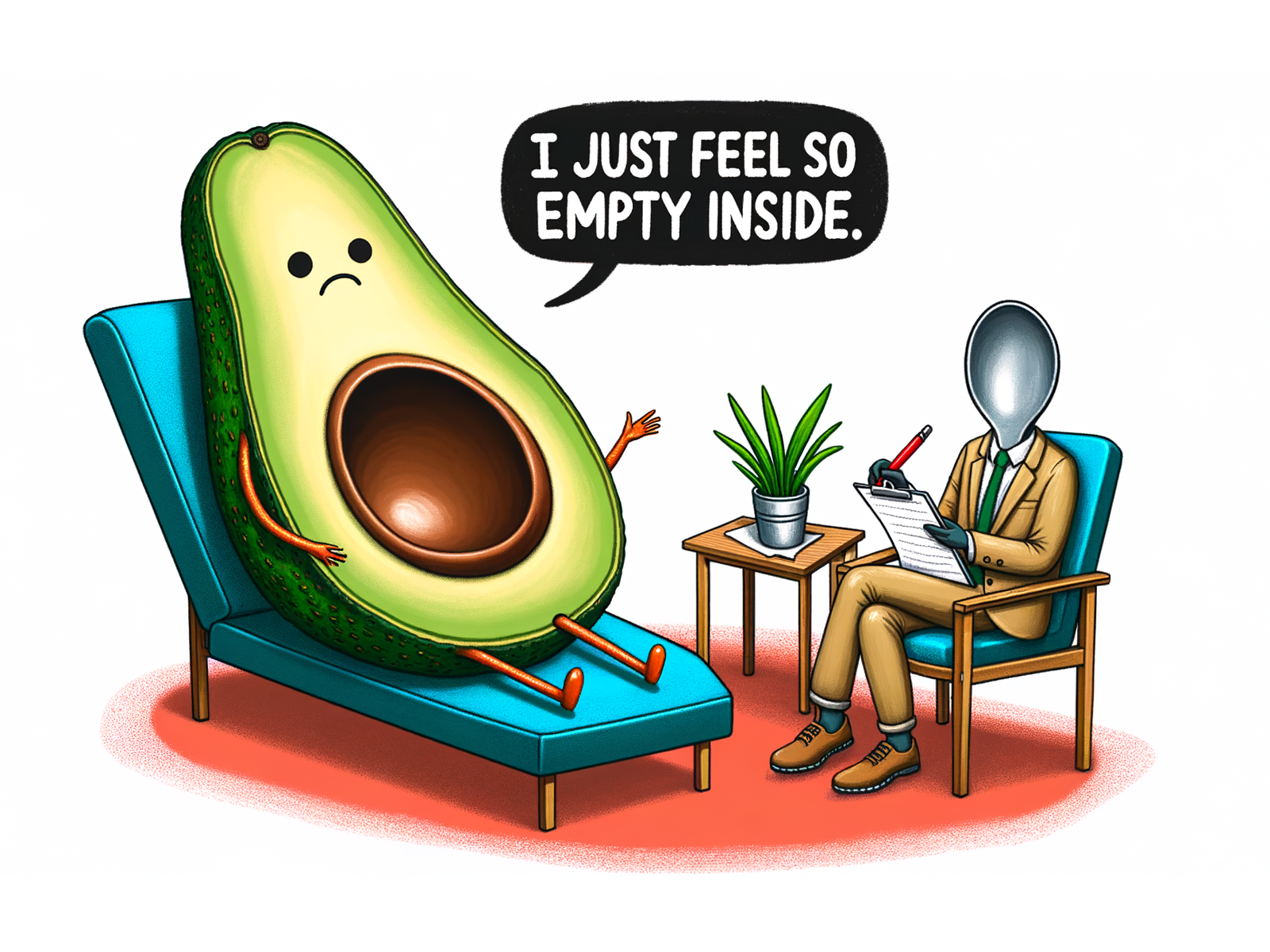

DALL-E 3 Example AI Generated Image

DALL-E, DALL-E 2, and DALL-E 3 (stylised DALL·E) are text-to-image models developed by OpenAI using deep learning methodologies to generate digital images from natural language descriptions known as ''prompts''. The first version of DALL-E was announced in January 2021. In the following year, its successor DALL-E 2 was released. DALL-E 3 was released natively into ChatGPT for ChatGPT Plus and ChatGPT Enterprise customers in October 2023, with availability via OpenAI's API and "Labs" platform provided in early November. Microsoft implemented the model in Bing's Image Creator tool and plans to implement it into their Designer app. With Bing's Image Creator tool, Microsoft Copilot runs on DALL-E 3. In March 2025, DALL-E-3 was replaced in ChatGPT by GPT Image 1's native image-generation capabilities. History and background DALL-E was revealed by OpenAI in a blog post on 5 January 2021, and uses a version of GPT-3 modified to generate images. On 6 April 2022, OpenAI announced ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

OpenAI

OpenAI, Inc. is an American artificial intelligence (AI) organization founded in December 2015 and headquartered in San Francisco, California. It aims to develop "safe and beneficial" artificial general intelligence (AGI), which it defines as "highly autonomous systems that outperform humans at most economically valuable work". As a leading organization in the ongoing AI boom, OpenAI is known for the GPT family of large language models, the DALL-E series of text-to-image models, and a text-to-video model named Sora (text-to-video model), Sora. Its release of ChatGPT in November 2022 has been credited with catalyzing widespread interest in generative AI. The organization has a complex corporate structure. As of April 2025, it is led by the Nonprofit organization, non-profit OpenAI, Inc., Delaware General Corporation Law, registered in Delaware, and has multiple for-profit subsidiaries including OpenAI Holdings, LLC and OpenAI Global, LLC. Microsoft has invested US$13 billion ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

WALL-E (character)

WALL-E (short for ''Waste Allocation Load Lifter: Earth-Class'') is the main protagonist of the 2008 Disney/ Pixar animated film of the same name. He is primarily voiced by Ben Burtt. WALL-E was created by director, Andrew Stanton, and writer, Jim Reardon. In the film, he's a solitary robot on a future, uninhabitable, deserted Earth in 2805, left to clean up garbage. He is visited by a probe sent by the starship ''Axiom'', a robot called EVE (short for ''Extraterrestrial Vegetation Evaluator)'', with whom he falls in love and pursues across the galaxy. Development Director, Andrew Stanton made WALL-E a trash compactor as the idea was instantly understandable, and because it was a low-status menial job that made him sympathetic. Stanton also liked the imagery of stacked cubes of garbage. Before they turned their attention to other projects, Stanton and John Lasseter thought about having WALL-E fall in love, as it was the necessary progression away from loneliness. WALL-E w ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Diffusion Model

In machine learning, diffusion models, also known as diffusion-based generative models or score-based generative models, are a class of latent variable model, latent variable generative model, generative models. A diffusion model consists of two major components: the forward diffusion process, and the reverse sampling process. The goal of diffusion models is to learn a diffusion process for a given dataset, such that the process can generate new elements that are distributed similarly as the original dataset. A diffusion model models data as generated by a diffusion process, whereby a new datum performs a Wiener process, random walk with drift through the space of all possible data. A trained diffusion model can be sampled in many ways, with different efficiency and quality. There are various equivalent formalisms, including Markov chains, denoising diffusion probabilistic models, noise conditioned score networks, and stochastic differential equations. They are typically trained ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Web Scraping

Web scraping, web harvesting, or web data extraction is data scraping used for data extraction, extracting data from websites. Web scraping software may directly access the World Wide Web using the Hypertext Transfer Protocol or a web browser. While web scraping can be done manually by a software user, the term typically refers to automated processes implemented using a Internet bot, bot or web crawler. It is a form of copying in which specific data is gathered and copied from the web, typically into a central local database or spreadsheet, for later data retrieval, retrieval or data analysis, analysis. Scraping a web page involves fetching it and then extracting data from it. Fetching is the downloading of a page (which a browser does when a user views a page). Therefore, web crawling is a main component of web scraping, to fetch pages for later processing. Having fetched, extraction can take place. The content of a page may be Parsing, parsed, searched and reformatted, and its ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Contrastive Learning

Self-supervised learning (SSL) is a paradigm in machine learning where a model is trained on a task using the data itself to generate supervisory signals, rather than relying on externally-provided labels. In the context of neural networks, self-supervised learning aims to leverage inherent structures or relationships within the input data to create meaningful training signals. SSL tasks are designed so that solving them requires capturing essential features or relationships in the data. The input data is typically augmented or transformed in a way that creates pairs of related samples, where one sample serves as the input, and the other is used to formulate the supervisory signal. This augmentation can involve introducing noise, cropping, rotation, or other transformations. Self-supervised learning more closely imitates the way humans learn to classify objects. During SSL, the model learns in two steps. First, the task is solved based on an auxiliary or pretext classification ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Contrastive Language-Image Pre-training

Contrastive Language-Image Pre-training (CLIP) is a technique for training a pair of neural network models, one for image understanding and one for text understanding, using a contrastive objective. This method has enabled broad applications across multiple domains, including cross-modal retrieval, text-to-image generation, and aesthetic ranking. Algorithm The CLIP method trains a pair of models contrastively. One model takes in a piece of text as input and outputs a single vector representing its semantic content. The other model takes in an image and similarly outputs a single vector representing its visual content. The models are trained so that the vectors corresponding to semantically similar text-image pairs are close together in the shared vector space, while those corresponding to dissimilar pairs are far apart. To train a pair of CLIP models, one would start by preparing a large dataset of image-caption pairs. During training, the models are presented with batches ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Variational Autoencoder

In machine learning, a variational autoencoder (VAE) is an artificial neural network architecture introduced by Diederik P. Kingma and Max Welling. It is part of the families of probabilistic graphical models and variational Bayesian methods. In addition to being seen as an autoencoder neural network architecture, variational autoencoders can also be studied within the mathematical formulation of variational Bayesian methods, connecting a neural encoder network to its decoder through a probabilistic latent space (for example, as a multivariate Gaussian distribution) that corresponds to the parameters of a variational distribution. Thus, the encoder maps each point (such as an image) from a large complex dataset into a distribution within the latent space, rather than to a single point in that space. The decoder has the opposite function, which is to map from the latent space to the input space, again according to a distribution (although in practice, noise is rarely a ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Byte Pair Encoding

Byte-pair encoding (also known as BPE, or digram coding) is an algorithm, first described in 1994 by Philip Gage, for encoding strings of text into smaller strings by creating and using a translation table. A slightly modified version of the algorithm is used in large language model tokenizers. The original version of the algorithm focused on compression. It replaces the highest-frequency pair of bytes with a new byte that was not contained in the initial dataset. A lookup table of the replacements is required to rebuild the initial dataset. The modified version builds "tokens" (units of recognition) that match varying amounts of source text, from single characters (including single digits or single punctuation marks) to whole words (even long compound words). Original algorithm The original BPE algorithm operates by iteratively replacing the most common contiguous sequences of characters in a target text with unused 'placeholder' bytes. The iteration ends when no sequences can be ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Variational Autoencoder

In machine learning, a variational autoencoder (VAE) is an artificial neural network architecture introduced by Diederik P. Kingma and Max Welling. It is part of the families of probabilistic graphical models and variational Bayesian methods. In addition to being seen as an autoencoder neural network architecture, variational autoencoders can also be studied within the mathematical formulation of variational Bayesian methods, connecting a neural encoder network to its decoder through a probabilistic latent space (for example, as a multivariate Gaussian distribution) that corresponds to the parameters of a variational distribution. Thus, the encoder maps each point (such as an image) from a large complex dataset into a distribution within the latent space, rather than to a single point in that space. The decoder has the opposite function, which is to map from the latent space to the input space, again according to a distribution (although in practice, noise is rarely a ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

GPT-2

Generative Pre-trained Transformer 2 (GPT-2) is a large language model by OpenAI and the second in their foundational series of Generative pre-trained transformer, GPT models. GPT-2 was pre-trained on a dataset of 8 million web pages. It was partially released in February 2019, followed by full release of the 1.5-billion-parameter model on November 5, 2019. GPT-2 was created as a "direct scale-up" of GPT-1 with a ten-fold increase in both its parameter count and the size of its training dataset. It is a general-purpose learner and its ability to perform the various tasks was a consequence of its general ability to accurately predict the next item in a sequence, which enabled it to machine translation, translate texts, question answering, answer questions about a topic from a text, automatic summarization, summarize passages from a larger text, and natural language generation, generate text output on a level sometimes Turing test, indistinguishable from that of humans; however, it ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Transformer (machine Learning Model)

The transformer is a deep learning architecture based on the multi-head attention mechanism, in which text is converted to numerical representations called tokens, and each token is converted into a vector via lookup from a word embedding table. At each layer, each token is then contextualized within the scope of the context window with other (unmasked) tokens via a parallel multi-head attention mechanism, allowing the signal for key tokens to be amplified and less important tokens to be diminished. Transformers have the advantage of having no recurrent units, therefore requiring less training time than earlier recurrent neural architectures (RNNs) such as long short-term memory (LSTM). Later variations have been widely adopted for training large language models (LLM) on large (language) datasets. The modern version of the transformer was proposed in the 2017 paper " Attention Is All You Need" by researchers at Google. Transformers were first developed as an improvement ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Generative Pre-trained Transformer

A generative pre-trained transformer (GPT) is a type of large language model (LLM) and a prominent framework for generative artificial intelligence. It is an Neural network (machine learning), artificial neural network that is used in natural language processing by machines. It is based on the Transformer (deep learning architecture), transformer deep learning architecture, pre-trained on large data sets of unlabeled text, and able to generate novel human-like content. As of 2023, most LLMs had these characteristics and are sometimes referred to broadly as GPTs. The first GPT was introduced in 2018 by OpenAI. OpenAI has released significant #Foundation models, GPT foundation models that have been sequentially numbered, to comprise its "GPT-''n''" series. Each of these was significantly more capable than the previous, due to increased size (number of trainable parameters) and training. The most recent of these, GPT-4o, was released in May 2024. Such models have been the basis fo ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |