|

Asynchronous Array Of Simple Processors

The asynchronous array of simple processors (AsAP) architecture comprises a 2-D array of reduced complexity programmable processors with small scratchpad memories interconnected by a reconfigurable mesh network. AsAP was developed by researchers in the VLSI Computation Laboratory (VCL) at the University of California, Davis and achieves high performance and energy efficiency, while using a relatively small circuit area. It was made in 2006. AsAP processors are well suited for implementation in future fabrication technologies, and are clocked in a globally asynchronous locally synchronous (GALS) fashion. Individual oscillators fully halt (leakage only) in 9 cycles when there is no work to do, and restart at full speed in less than one cycle after work is available. The chip requires no crystal oscillators, phase-locked loops, delay-locked loops, global clock signal, or any global frequency or phase-related signals whatsoever. The multi-processor architecture makes use of task-lev ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Scratchpad Memories

Scratchpad memory (SPM), also known as scratchpad, scratchpad RAM or local store in computer terminology, is an internal memory, usually high-speed, used for temporary storage of calculations, data, and other work in progress. In reference to a microprocessor (or CPU), scratchpad refers to a special high-speed memory used to hold small items of data for rapid retrieval. It is similar to the usage and size of a scratchpad in life: a pad of paper for preliminary notes or sketches or writings, etc. When the scratchpad is a hidden portion of the main memory then it is sometimes referred to as ''bump storage''. In some systems it can be considered similar to the L1 cache in that it is the next closest memory to the ALU after the processor registers, with explicit instructions to move data to and from main memory, often using DMA-based data transfer. In contrast to a system that uses caches, a system with scratchpads is a system with non-uniform memory access (NUMA) latencies, becaus ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Fast Fourier Transform

A fast Fourier transform (FFT) is an algorithm that computes the discrete Fourier transform (DFT) of a sequence, or its inverse (IDFT). A Fourier transform converts a signal from its original domain (often time or space) to a representation in the frequency domain and vice versa. The DFT is obtained by decomposing a sequence of values into components of different frequencies. This operation is useful in many fields, but computing it directly from the definition is often too slow to be practical. An FFT rapidly computes such transformations by Matrix decomposition, factorizing the DFT matrix into a product of Sparse matrix, sparse (mostly zero) factors. As a result, it manages to reduce the Computational complexity theory, complexity of computing the DFT from O(n^2), which arises if one simply applies the definition of DFT, to O(n \log n), where is the data size. The difference in speed can be enormous, especially for long data sets where may be in the thousands or millions. ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

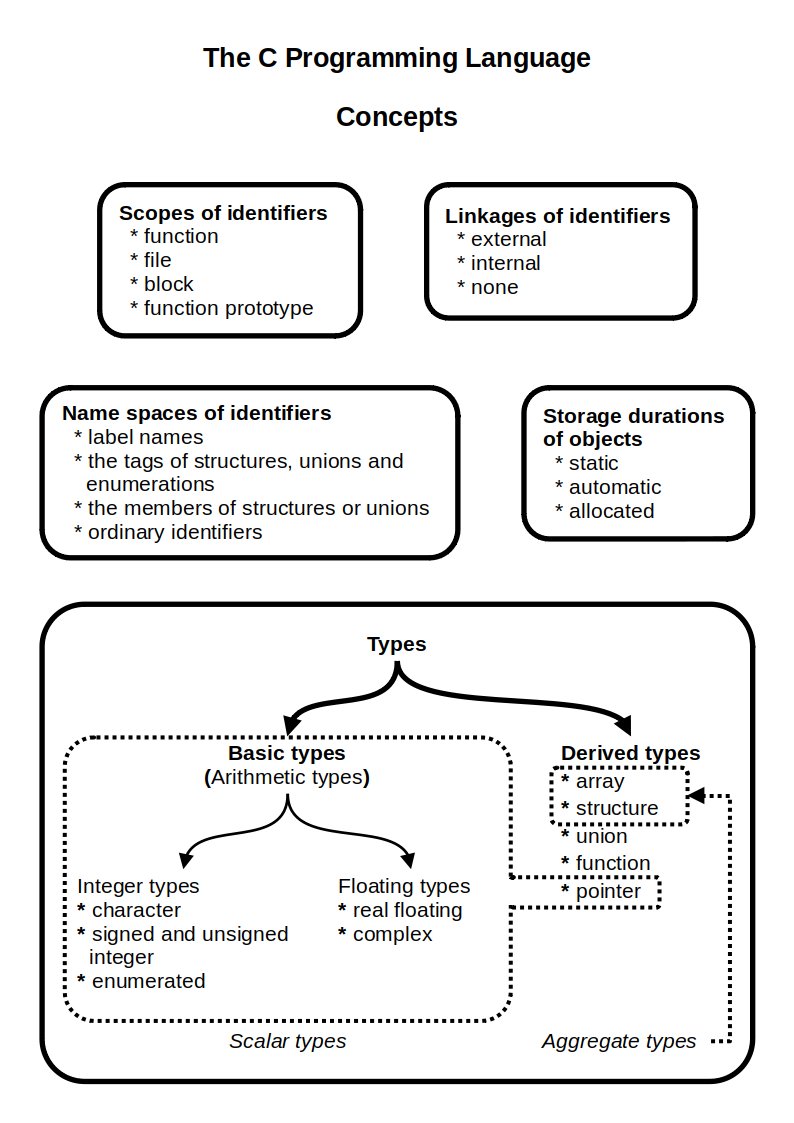

C (programming Language)

C (''pronounced'' '' – like the letter c'') is a general-purpose programming language. It was created in the 1970s by Dennis Ritchie and remains very widely used and influential. By design, C's features cleanly reflect the capabilities of the targeted Central processing unit, CPUs. It has found lasting use in operating systems code (especially in Kernel (operating system), kernels), device drivers, and protocol stacks, but its use in application software has been decreasing. C is commonly used on computer architectures that range from the largest supercomputers to the smallest microcontrollers and embedded systems. A successor to the programming language B (programming language), B, C was originally developed at Bell Labs by Ritchie between 1972 and 1973 to construct utilities running on Unix. It was applied to re-implementing the kernel of the Unix operating system. During the 1980s, C gradually gained popularity. It has become one of the most widely used programming langu ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

CAVLC

Context-adaptive variable-length coding (CAVLC) is a form of entropy coding used in H.264/MPEG-4 AVC video encoding. It is an inherently lossless compression technique, like almost all entropy-coders. In H.264/MPEG-4 AVC, it is used to encode residual, zig-zag order, blocks of transform coefficients. It is an alternative to context-adaptive binary arithmetic coding (CABAC). CAVLC requires considerably less processing to decode than CABAC, although it does not compress the data quite as effectively. CAVLC is supported in all H.264 profiles, unlike CABAC which is not supported in Baseline and Extended profiles. CAVLC is used to encode residual, zig-zag ordered 4×4 (and 2×2) blocks of transform coefficients. CAVLC is designed to take advantage of several characteristics of quantized 4×4 blocks: * After prediction, transformation and quantization, blocks are typically sparse (containing mostly zeros). * The highest non-zero coefficients after zig-zag scan are often sequences of + ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

JPEG

JPEG ( , short for Joint Photographic Experts Group and sometimes retroactively referred to as JPEG 1) is a commonly used method of lossy compression for digital images, particularly for those images produced by digital photography. The degree of compression can be adjusted, allowing a selectable trade off between storage size and image quality. JPEG typically achieves 10:1 compression with noticeable, but widely agreed to be acceptable perceptible loss in image quality. Since its introduction in 1992, JPEG has been the most widely used image compression standard in the world, and the most widely used digital image format, with several billion JPEG images produced every day as of 2015. The Joint Photographic Experts Group created the standard in 1992, based on the discrete cosine transform (DCT) algorithm. JPEG was largely responsible for the proliferation of digital images and digital photos across the Internet and later social media. JPEG compression is used in a number of ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Matrix Multiplication

In mathematics, specifically in linear algebra, matrix multiplication is a binary operation that produces a matrix (mathematics), matrix from two matrices. For matrix multiplication, the number of columns in the first matrix must be equal to the number of rows in the second matrix. The resulting matrix, known as the matrix product, has the number of rows of the first and the number of columns of the second matrix. The product of matrices and is denoted as . Matrix multiplication was first described by the French mathematician Jacques Philippe Marie Binet in 1812, to represent the composition of functions, composition of linear maps that are represented by matrices. Matrix multiplication is thus a basic tool of linear algebra, and as such has numerous applications in many areas of mathematics, as well as in applied mathematics, statistics, physics, economics, and engineering. Computing matrix products is a central operation in all computational applications of linear algebra. Not ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

CORDIC

CORDIC, short for coordinate rotation digital computer, is a simple and efficient algorithm to calculate trigonometric functions, hyperbolic functions, square roots, multiplications, divisions, and exponentials and logarithms In mathematics, the logarithm of a number is the exponent by which another fixed value, the base, must be raised to produce that number. For example, the logarithm of to base is , because is to the rd power: . More generally, if , the ... with arbitrary base, typically converging with one digit (or bit) per iteration. CORDIC is therefore also an example of digit-by-digit algorithms. The original system is sometimes referred to as Volder's algorithm. CORDIC and closely related methods known as pseudo-multiplication and pseudo-division or factor combining are commonly used when no hardware multiplier is available (e.g. in simple microcontrollers and field-programmable gate arrays or FPGAs), as the only operations they require are addition, s ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Convolutional Coders

In telecommunication, a convolutional code is a type of error-correcting code that generates parity symbols via the sliding application of a boolean polynomial function to a data stream. The sliding application represents the 'convolution' of the encoder over the data, which gives rise to the term 'convolutional coding'. The sliding nature of the convolutional codes facilitates trellis decoding using a time-invariant trellis. Time invariant trellis decoding allows convolutional codes to be maximum-likelihood soft-decision decoded with reasonable complexity. The ability to perform economical maximum likelihood soft decision decoding is one of the major benefits of convolutional codes. This is in contrast to classic block codes, which are generally represented by a time-variant trellis and therefore are typically hard-decision decoded. Convolutional codes are often characterized by the base code rate and the depth (or memory) of the encoder ,k,K/math>. The base code rate is ty ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Multiple Instruction, Multiple Data

In computing, multiple instruction, multiple data (MIMD) is a technique employed to achieve parallelism. Machines using MIMD have a number of processor cores that function asynchronously and independently. At any time, different processors may be executing different instructions on different pieces of data. MIMD architectures may be used in a number of application areas such as computer-aided design/computer-aided manufacturing, simulation, modeling, and as communication switches. MIMD machines can be of either shared memory or distributed memory categories. These classifications are based on how MIMD processors access memory. Shared memory machines may be of the bus-based, extended, or hierarchical type. Distributed memory machines may have hypercube or mesh interconnection schemes. Examples An example of MIMD system is Intel Xeon Phi, descended from Larrabee microarchitecture. These processors have multiple processing cores (up to 61 as of 2015) that can execute differen ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Arithmetic Logic Unit

In computing, an arithmetic logic unit (ALU) is a Combinational logic, combinational digital circuit that performs arithmetic and bitwise operations on integer binary numbers. This is in contrast to a floating-point unit (FPU), which operates on floating point numbers. It is a fundamental building block of many types of computing circuits, including the central processing unit (CPU) of computers, FPUs, and graphics processing units (GPUs). The inputs to an ALU are the data to be operated on, called operands, and a code indicating the operation to be performed (opcode); the ALU's output is the result of the performed operation. In many designs, the ALU also has status inputs or outputs, or both, which convey information about a previous operation or the current operation, respectively, between the ALU and external status registers. Signals An ALU has a variety of input and output net (electronics), nets, which are the electrical conductors used to convey Digital signal (electroni ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Dynamic Frequency Scaling

Dynamic frequency scaling (also known as CPU throttling) is a power management technique in computer architecture whereby the frequency of a microprocessor can be automatically adjusted "on the fly" depending on the actual needs, to conserve power and reduce the amount of heat generated by the chip. Dynamic frequency scaling helps preserve battery on mobile devices and decrease cooling cost and noise on quiet computing settings, or can be useful as a security measure for overheated systems (e.g. after poor overclocking). Dynamic frequency scaling almost always appear in conjunction with dynamic voltage scaling, since higher frequencies require higher supply voltages for the digital circuit to yield correct results. The combined topic is known as dynamic voltage and frequency scaling (DVFS). Operation The dynamic power ('' switching power'') dissipated by a chip is ''C·V2·A·f'', where C is the capacitance being switched per clock cycle, V is voltage, A is the ''acti ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |