The Chinese room argument holds that a computer executing a program cannot have a mind, understanding, or consciousness, regardless of how intelligently or human-like the program may make the computer behave. The argument was presented in a 1980 paper by the philosopher John Searle entitled "Minds, Brains, and Programs" and published in the journal ''Behavioral and Brain Sciences''. Before Searle, similar arguments had been presented by figures including Gottfried Wilhelm Leibniz (1714), Anatoly Dneprov (1961), Lawrence Davis (1974) and Ned Block (1978). Searle's version has been widely discussed in the years since. The centerpiece of Searle's argument is a thought experiment known as the Chinese room.

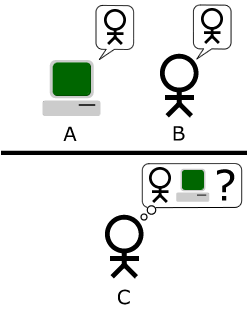

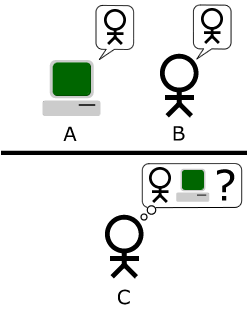

In the thought experiment, Searle imagines a person who does not understand Chinese isolated in a room with a book containing detailed instructions for manipulating Chinese symbols. When Chinese text is passed into the room, the person follows the book's instructions to produce Chinese symbols that, to fluent Chinese speakers outside the room, appear to be appropriate responses. According to Searle, the person is just following ''syntactic'' rules without ''semantic'' comprehension, and neither the human nor the room as a whole understands Chinese. He contends that when computers execute programs, they are similarly just applying syntactic rules without any real understanding or thinking.

The argument is directed against the philosophical positions of functionalism and computationalism, which hold that the mind may be viewed as an information-processing system operating on formal symbols, and that simulation of a given mental state is sufficient for its presence. Specifically, the argument is intended to refute a position Searle calls the strong AI hypothesis: "The appropriately programmed computer with the right inputs and outputs would thereby have a mind in exactly the same sense human beings have minds."

Although its proponents originally presented the argument in reaction to statements of artificial intelligence (AI) researchers, it is not an argument against the goals of mainstream AI research because it does not show a limit in the amount of intelligent behavior a machine can display. The argument applies only to digital computers running programs and does not apply to machines in general. While widely discussed, the argument has been subject to significant criticism and remains controversial among philosophers of mind and AI researchers.

Searle's thought experiment

Suppose that artificial intelligence research has succeeded in programming a computer to behave as if it understands Chinese. The machine accepts Chinese characters as input, carries out each instruction of the program step by step, and then produces Chinese characters as output. The machine does this so perfectly that no one can tell that they are communicating with a machine and not a hidden Chinese speaker.

The questions at issue are these: does the machine actually understand the conversation, or is it just simulating the ability to understand the conversation? Does the machine have a mind in exactly the same sense that people do, or is it just acting as if it had a mind?

Now suppose that Searle is in a room with an English version of the program, along with sufficient pencils, paper, erasers and filing cabinets. Chinese characters are slipped in under the door, and he follows the program step by step until it instructs him to slide other Chinese characters back out under the door. If the computer had passed the Turing test this way, it follows that Searle would do so as well, simply by running the program by hand.

Searle asserts that there is no essential difference between the roles of the computer and himself in the experiment. Each simply follows a program, step-by-step, producing behavior that makes them appear to understand. However, Searle would not be able to understand the conversation. Therefore, he argues, it follows that the computer would not be able to understand the conversation either.
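The operator's role can be sketched in code. The following is a minimal illustration under strong simplifying assumptions, not Searle's own formulation: the program is reduced to a lookup table, and the names `RULE_BOOK`, `FALLBACK`, and `run_room` are hypothetical. The point it shows is that the function only compares and copies strings by their form; nothing in it depends on what the symbols mean.

```python
# Hypothetical rule book: each incoming string of symbols is paired with
# the string of symbols to pass back out. A real conversational program
# would be vastly larger; a lookup table is assumed here for brevity.
RULE_BOOK = {
    "你好吗？": "我很好，谢谢。",
    "你会说中文吗？": "会，一点点。",
}

# Symbols to hand back when no rule matches (also an assumption).
FALLBACK = "请再说一遍。"


def run_room(symbols, rule_book=RULE_BOOK):
    """Return the reply the rule book dictates for the given symbols.

    The lookup matches the input purely by its form (string equality).
    The operator, like this function, needs no knowledge of what either
    the input or the output says.
    """
    return rule_book.get(symbols, FALLBACK)


if __name__ == "__main__":
    print(run_room("你好吗？"))
```

Whether such a system "understands" anything is exactly what the argument disputes; the sketch only makes concrete what "following the program by hand" amounts to.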

Searle argues that, without "understanding" (or "intentionality"), we cannot describe what the machine is doing as "thinking" and, since it does not think, it does not have a "mind" in the normal sense of the word. Therefore, he concludes that the strong AI hypothesis is false: a computer running a program that simulates a mind would not have a mind in the same sense that human beings have a mind.

History

Gottfried Leibniz made a similar argument in 1714 against mechanism (the idea that everything that makes up a human being could, in principle, be explained in mechanical terms; in other words, that a person, including their mind, is merely a very complex machine). Leibniz used the thought experiment of expanding the brain until it was the size of a mill. Leibniz found it difficult to imagine that a "mind" capable of "perception" could be constructed using only mechanical processes.

Peter Winch made the same point in his book ''The Idea of a Social Science and its Relation to Philosophy'' (1958), where he provides an argument to show that "a man who understands Chinese is not a man who has a firm grasp of the statistical probabilities for the occurrence of the various words in the Chinese language" (p. 108).

Soviet cyberneticist Anatoly Dneprov made an essentially identical argument in 1961, in the form of the short story "The Game". In it, a stadium of people act as switches and memory cells implementing a program to translate a sentence of Portuguese, a language that none of them know. The game was organized by a "Professor Zarubin" to answer the question "Can mathematical machines think?" Speaking through Zarubin, Dneprov writes "the only way to prove that machines can think is to turn yourself into a machine and examine your thinking process" and he concludes, as Searle does, "We've proven that even the most perfect simulation of machine thinking is not the thinking process itself."

In 1974, Lawrence H. Davis imagined duplicating the brain using telephone lines and offices staffed by people, and in 1978 Ned Block envisioned the entire population of China involved in such a brain simulation. This thought experiment is called the China brain, also the "Chinese Nation" or the "Chinese Gym".

Searle's version appeared in his 1980 paper "Minds, Brains, and Programs", published in ''Behavioral and Brain Sciences''. It eventually became the journal's "most influential target article", generating an enormous number of commentaries and responses in the ensuing decades, and Searle has continued to defend and refine the argument in many papers, popular articles and books. David Cole writes that "the Chinese Room argument has probably been the most widely discussed philosophical argument in cognitive science to appear in the past 25 years".

Most of the discussion consists of attempts to refute it. "The overwhelming majority", notes ''Behavioral and Brain Sciences'' editor Stevan Harnad, "still think that the Chinese Room Argument is dead wrong". The sheer volume of the literature that has grown up around it inspired Pat Hayes to comment that the field of cognitive science ought to be redefined as "the ongoing research program of showing Searle's Chinese Room Argument to be false".

Searle's argument has become "something of a classic in cognitive science", according to Harnad. Varol Akman agrees, and has described the original paper as "an exemplar of philosophical clarity and purity".

Philosophy

Although the Chinese Room argument was originally presented in reaction to the statements of artificial intelligence researchers, philosophers have come to consider it as an important part of the philosophy of mind. It is a challenge to functionalism and the computational theory of mind, and is related to such questions as the mind–body problem, the problem of other minds, the symbol grounding problem, and the hard problem of consciousness.

Strong AI

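Searle identified a philosophical position he calls "strong AI": "The appropriately programmed computer with the right inputs and outputs would thereby have a mind in exactly the same sense human beings have minds."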

The definition depends on the distinction between simulating a mind and actually having one. Searle writes that "according to Strong AI, the correct simulation really is a mind. According to Weak AI, the correct simulation is a model of the mind."

The claim is implicit in some of the statements of early AI researchers and analysts. For example, in 1955, AI founder Herbert A. Simon declared that "there are now in the world machines that think, that learn and create". Simon, together with Allen Newell and Cliff Shaw, after having completed the first program that could do formal reasoning (the Logic Theorist), claimed that they had "solved the venerable mind–body problem, explaining how a system composed of matter can have the properties of mind."

John Haugeland wrote that "AI wants only the genuine article: machines with minds, in the full and literal sense. This is not science fiction, but real science, based on a theoretical conception as deep as it is daring: namely, we are, at root, computers ourselves."

Searle also ascribes the following claims to advocates of strong AI:

* AI systems can be used to explain the mind;

* The study of the brain is irrelevant to the study of the mind; and

* The Turing test is adequate for establishing the existence of mental states.

Strong AI as computationalism or functionalism

In more recent presentations of the Chinese room argument, Searle has identified "strong AI" as "computer functionalism" (a term he attributes to Daniel Dennett). Functionalism is a position in modern philosophy of mind that holds that we can define mental phenomena (such as beliefs, desires, and perceptions) by describing their functions in relation to each other and to the outside world. Because a computer program can accurately represent functional relationships as relationships between symbols, a computer can have mental phenomena if it runs the right program, according to functionalism.

Stevan Harnad argues that Searle's depictions of strong AI can be reformulated as "recognizable tenets of computationalism, a position (unlike "strong AI") that is actually held by many thinkers, and hence one worth refuting." Computationalism is the position in the philosophy of mind which argues that the mind can be accurately described as an information-processing system.

Each of the following, according to Harnad, is a "tenet" of computationalism:

* Mental states are computational states (which is why computers can have mental states and help to explain the mind);

* Computational states are implementation-independent: it is the software that determines the computational state, not the hardware (which is why the brain, being hardware, is irrelevant); and

* Since implementation is unimportant, the only empirical data that matters is how the system functions; hence the Turing test is definitive.
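The implementation-independence tenet can be illustrated with a small sketch. It is only an illustration of what the tenet claims, not an argument for it, and every name in it (`PROGRAM`, `run_iterative`, `run_functional`) is hypothetical. The same transition table, standing in for "software", is executed by two differently built interpreters, standing in for "hardware", and both pass through the same computational states.

```python
from functools import reduce

# Hypothetical "software": the transition table of a two-state machine
# that tracks whether it has seen an even or odd number of "1" symbols.
PROGRAM = {
    ("even", "0"): "even",
    ("even", "1"): "odd",
    ("odd", "0"): "odd",
    ("odd", "1"): "even",
}


def run_iterative(program, tape, state="even"):
    """First 'implementation': an explicit loop with a mutable state."""
    for symbol in tape:
        state = program[(state, symbol)]
    return state


def run_functional(program, tape, state="even"):
    """Second 'implementation': a fold over the tape, no mutation."""
    return reduce(lambda s, sym: program[(s, sym)], tape, state)


if __name__ == "__main__":
    tape = "1101"
    # Both interpreters end in the same state because the state is fixed
    # by the table and the input, not by how the table is executed.
    print(run_iterative(PROGRAM, tape), run_functional(PROGRAM, tape))
```

On the computationalist view summarized above, this equivalence is what licenses ignoring the hardware; Searle's argument denies that the equivalence carries over to understanding.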

Recent philosophical discussions have revisited the implications of computationalism for artificial intelligence. Goldstein and Levinstein explore whether large language models (LLMs) like ChatGPT can possess minds, focusing on their ability to exhibit folk psychology, including beliefs, desires, and intentions. The authors argue that LLMs satisfy several philosophical theories of mental representation, such as informational, causal, and structural theories, by demonstrating robust internal representations of the world. However, they highlight that the evidence for LLMs having action dispositions necessary for belief-desire psychology remains inconclusive. Additionally, they refute common skeptical challenges, such as the "stochastic parrots" argument and concerns over memorization, asserting that LLMs exhibit structured internal representations that align with these philosophical criteria.

David Chalmers suggests that while current LLMs lack features like recurrent processing and unified agency, advancements in AI could address these limitations within the next decade, potentially enabling systems to achieve consciousness. This perspective challenges Searle's original claim that purely "syntactic" processing cannot yield understanding or consciousness, arguing instead that such systems could have authentic mental states.

Strong AI vs. biological naturalism

Searle holds a philosophical position he calls "biological naturalism": that consciousness and understanding require specific biological machinery that is found in brains. He writes "brains cause minds" and that "actual human mental phenomena [are] dependent on actual physical–chemical properties of actual human brains". Searle argues that this machinery (known in neuroscience as the "neural correlates of consciousness") must have some causal powers that permit the human experience of consciousness. Searle's belief in the existence of these powers has been criticized.

Searle does not disagree with the notion that machines can have consciousness and understanding, because, as he writes, "we are precisely such machines". Searle holds that the brain is, in fact, a machine, but that the brain gives rise to consciousness and understanding using specific machinery. If neuroscience is able to isolate the mechanical process that gives rise to consciousness, then Searle grants that it may be possible to create machines that have consciousness and understanding. However, without the specific machinery required, Searle does not believe that consciousness can occur.

Biological naturalism implies that one cannot determine if the experience of consciousness is occurring merely by examining how a system functions, because the specific machinery of the brain is essential. Thus, biological naturalism is directly opposed to both behaviorism and functionalism (including "computer functionalism" or "strong AI"). Biological naturalism is similar to identity theory (the position that mental states are "identical to" or "composed of" neurological events); however, Searle has specific technical objections to identity theory. Searle's biological naturalism and strong AI are both opposed to Cartesian dualism, the classical idea that the brain and mind are made of different "substances". Indeed, Searle accuses strong AI of dualism, writing that "strong AI only makes sense given the dualistic assumption that, where the mind is concerned, the brain doesn't matter".

Consciousness

Searle's original presentation emphasized understanding (that is, mental states with intentionality) and did not directly address other closely related ideas such as "consciousness". However, in more recent presentations, Searle has included consciousness as the real target of the argument.

David Chalmers writes, "it is fairly clear that consciousness is at the root of the matter" of the Chinese room.

Colin McGinn argues that the Chinese room provides strong evidence that the hard problem of consciousness is fundamentally insoluble. The argument, to be clear, is not about whether a machine can be conscious, but about whether it (or anything else for that matter) can be shown to be conscious. It is plain that any other method of probing the occupant of a Chinese room has the same difficulties in principle as exchanging questions and answers in Chinese. It is simply not possible to divine whether a conscious agency or some clever simulation inhabits the room.

Searle argues that this is only true for an observer outside of the room. The whole point of the thought experiment is to put someone inside the room, where they can directly observe the operations of consciousness. Searle claims that from his vantage point within the room there is nothing he can see that could imaginably give rise to consciousness, other than himself, and clearly he does not have a mind that can speak Chinese. In Searle's words, "the computer has nothing more than I have in the case where I understand nothing".

Applied ethics

Patrick Hew used the Chinese Room argument to deduce requirements for military command and control systems if they are to preserve a commander's moral agency. He drew an analogy between a commander in their command center and the person in the Chinese Room, and analyzed it under a reading of Aristotle's notions of "compulsory" and "ignorance". Information could be "down converted" from meaning to symbols, and manipulated symbolically, but moral agency could be undermined if there was inadequate "up conversion" into meaning. Hew cited examples from the USS ''Vincennes'' incident.

Computer science

The Chinese room argument is primarily an argument in the philosophy of mind, and both major computer scientists and artificial intelligence researchers consider it irrelevant to their fields. However, several concepts developed by computer scientists are essential to understanding the argument, including symbol processing, Turing machines, Turing completeness, and the Turing test.

Strong AI vs. AI research

Searle's arguments are not usually considered an issue for AI research. The primary mission of artificial intelligence research is only to create useful systems that act intelligently, and it does not matter if the intelligence is "merely" a simulation. AI researchers Stuart J. Russell and Peter Norvig wrote in 2021: "We are interested in programs that behave intelligently. Individual aspects of consciousness—awareness, self-awareness, attention—can be programmed and can be part of an intelligent machine. The additional project making a machine conscious in exactly the way humans are is not one that we are equipped to take on."

Searle does not disagree that AI research can create machines that are capable of highly intelligent behavior. The Chinese room argument leaves open the possibility that a digital machine could be built that acts more intelligently than a person, but does not have a mind or intentionality in the same way that brains do.

Searle's "strong AI hypothesis" should not be confused with "strong AI" as defined by Ray Kurzweil and other futurists, who use the term to describe machine intelligence that rivals or exceeds human intelligence (that is, artificial general intelligence, human-level AI or superintelligence). Kurzweil is referring primarily to the amount of intelligence displayed by the machine, whereas Searle's argument sets no limit on this. Searle argues that a superintelligent machine would not necessarily have a mind and consciousness.

Turing test

The Chinese room implements a version of the Turing test. Alan Turing introduced the test in 1950 to help answer the question "can machines think?" In the standard version, a human judge engages in a natural language conversation with a human and a machine designed to generate performance indistinguishable from that of a human being. All participants are separated from one another. If the judge cannot reliably tell the machine from the human, the machine is said to have passed the test.

Turing then considered each possible objection to the proposal "machines can think", and found that there are simple, obvious answers if the question is de-mystified in this way. He did not, however, intend for the test to measure the presence of "consciousness" or "understanding". He did not believe this was relevant to the issues that he was addressing. He wrote:

"I do not wish to give the impression that I think there is no mystery about consciousness. There is, for instance, something of a paradox connected with any attempt to localise it. But I do not think these mysteries necessarily need to be solved before we can answer the question with which we are concerned in this paper."

To Searle, as a philosopher investigating the nature of mind and consciousness, these are the relevant mysteries. The Chinese room is designed to show that the Turing test is insufficient to detect the presence of consciousness, even if the room can behave or function as a conscious mind would.

Symbol processing

Computers manipulate physical objects in order to carry out calculations and do simulations. AI researchers Allen Newell and Herbert A. Simon called this kind of machine a physical symbol system. It is also equivalent to the formal systems used in the field of mathematical logic.

Searle emphasizes the fact that this kind of symbol manipulation is syntactic (borrowing a term from the study of grammar). The computer manipulates the symbols using a form of syntax, without any knowledge of the symbols' semantics (that is, their meaning).
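A minimal sketch may make the syntax/semantics distinction concrete. It assumes a hypothetical rule book stored as a lookup table; the entries, the `RULE_BOOK` and `DEFAULT_REPLY` names, and the `follow_rules` function are illustrative inventions, not anything Searle specifies. The point is only that the procedure compares symbols by shape and never consults what they stand for.

```python
# Hypothetical rule book: maps an incoming string of symbols to an outgoing one.
# The entries are illustrative placeholders, not a real conversation program.
RULE_BOOK = {
    "你好吗": "我很好",
    "你会说中文吗": "会",
}

# Assumed fallback used when no rule matches the shape of the input.
DEFAULT_REPLY = "请再说一遍"


def follow_rules(symbols: str) -> str:
    """Return the reply the rule book dictates.

    The lookup compares symbols only by their shape (string equality);
    nothing in this function represents what any symbol means.
    """
    return RULE_BOOK.get(symbols, DEFAULT_REPLY)
```

Replacing every symbol in the table with an arbitrary token would leave the procedure unchanged, which is the sense in which the manipulation is purely syntactic.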

Newell and Simon had conjectured that a physical symbol system (such as a digital computer) had all the necessary machinery for "general intelligent action", or, as it is known today, artificial general intelligence. They framed this as a philosophical position, the physical symbol system hypothesis: "A physical symbol system has the necessary and sufficient means for general intelligent action." The Chinese room argument does not refute this, because the hypothesis is framed in terms of "intelligent action", i.e. the external behavior of the machine, rather than the presence or absence of understanding, consciousness and mind.

Twenty-first century AI programs (such as "deep learning") do mathematical operations on huge matrices of unidentified numbers and bear little resemblance to the symbolic processing used by AI programs at the time Searle wrote his critique in 1980.

Nils Nilsson describes systems like these as "dynamic" rather than "symbolic". Nilsson notes that these are essentially digitized representations of dynamic systems: the individual numbers do not have a specific semantics, but are instead samples or data points from a dynamic signal, and it is the signal being approximated which would have semantics. Nilsson argues it is not reasonable to consider these signals as "symbol processing" in the same sense as the physical symbol system hypothesis.

Chinese room and Turing completeness

The Chinese room has a design analogous to that of a modern computer. It has a von Neumann architecture, which consists of a program (the book of instructions), some memory (the papers and file cabinets), a machine that follows the instructions (the man), and a means to write symbols in memory (the pencil and eraser). A machine with this design is known in theoretical computer science as "Turing complete", because it has the necessary machinery to carry out any computation that a Turing machine can do, and therefore it is capable of doing a step-by-step simulation of any other digital machine, given enough memory and time. Turing writes, "all digital computers are in a sense equivalent." The widely accepted Church–Turing thesis holds that any function computable by an effective procedure is computable by a Turing machine.
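The correspondence between the room and a computer can be sketched as a very small Turing machine simulator. This is an illustration under stated assumptions rather than a model of Searle's room: the rule table plays the book of instructions, the `tape` dictionary plays the papers, and the loop plays the man with pencil and eraser. The names `run`, `BLANK`, and `INCREMENT`, and the `max_steps` safety limit, are hypothetical choices made for this example.

```python
from collections import defaultdict

BLANK = "_"  # assumed symbol for an empty tape cell


def run(rules, tape_input, state="start", max_steps=10_000):
    """Follow the rule table one step at a time until the 'halt' state.

    rules maps (state, symbol_read) -> (symbol_to_write, move, next_state),
    where move is "L" or "R".
    """
    tape = defaultdict(lambda: BLANK, enumerate(tape_input))  # the "papers"
    head = 0
    steps = 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("step budget exhausted")
        # Look up the instruction by shape alone, then write and move.
        write, move, state = rules[(state, tape[head])]
        tape[head] = write
        head += 1 if move == "R" else -1
        steps += 1
    cells = [tape[i] for i in range(min(tape), max(tape) + 1)]
    return "".join(cells).strip(BLANK)


# Example rule book: append one "1" to a unary number.
INCREMENT = {
    ("start", "1"): ("1", "R", "start"),   # skip over existing marks
    ("start", BLANK): ("1", "R", "halt"),  # write one more mark and stop
}
```

Calling `run(INCREMENT, "111")` walks right across the three marks and writes a fourth. A person could carry out the same table by hand, more slowly, which is the sense of equivalence the paragraph above relies on.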

The Turing completeness of the Chinese room implies that it can do whatever any other digital computer can do (albeit much, much more slowly). Thus, if the Chinese room does not or cannot contain a Chinese-speaking mind, then no other digital computer can contain a mind. Some replies to Searle begin by arguing that the room, as described, cannot have a Chinese-speaking mind. Arguments of this form, according to Stevan Harnad, are "no refutation (but rather an affirmation)" of the Chinese room argument, because these arguments actually imply that no digital computers can have a mind.

There are some critics, such as Hanoch Ben-Yami, who argue that the Chinese room cannot simulate all the abilities of a digital computer, such as being able to determine the current time.

Complete argument

Searle has produced a more formal version of the argument of which the Chinese Room forms a part. He presented the first version in 1984. The version given below is from 1990. The Chinese room thought experiment is intended to prove point A3.

He begins with three axioms:

:(A1) "Programs are formal (syntactic)."

::A program uses syntax to manipulate symbols and pays no attention to the semantics of the symbols. It knows where to put the symbols and how to move them around, but it does not know what they stand for or what they mean. For the program, the symbols are just physical objects like any others.

:(A2) "Minds have mental contents (semantics)."

::Unlike the symbols used by a program, our thoughts have meaning: they represent things and we know what it is they represent.

:(A3) "Syntax by itself is neither constitutive of nor sufficient for semantics."

::This is what the Chinese room thought experiment is intended to prove: the Chinese room has syntax (because there is a man in there moving symbols around). The Chinese room has no semantics (because, according to Searle, there is no one or nothing in the room that understands what the symbols mean). Therefore, having syntax is not enough to generate semantics.

Searle posits that these lead directly to this conclusion:

:(C1) Programs are neither constitutive of nor sufficient for minds.

::This should follow without controversy from the first three: Programs don't have semantics. Programs have only syntax, and syntax is insufficient for semantics. Every mind has semantics. Therefore no programs are minds.
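The step from A1, A2 and A3 to C1 can be checked mechanically under one particular reading. The Lean 4 sketch below assumes that A3 is read strongly, as "nothing that is purely syntactic has semantics", which matches the gloss above ("Programs have only syntax, and syntax is insufficient for semantics") but is stronger than Searle's own wording. The predicate names `Program`, `OnlySyntax`, `HasSemantics` and `Mind` are hypothetical labels introduced for this illustration.

```lean
-- Assumed reading: a1, a2, a3 paraphrase axioms A1, A2, A3; c1 is conclusion C1.
theorem c1 {α : Type} (Program OnlySyntax HasSemantics Mind : α → Prop)
    (a1 : ∀ x, Program x → OnlySyntax x)          -- programs are purely syntactic
    (a2 : ∀ x, Mind x → HasSemantics x)           -- every mind has semantics
    (a3 : ∀ x, OnlySyntax x → ¬ HasSemantics x)   -- strong reading of A3
    : ∀ x, Program x → ¬ Mind x :=
  fun x hp hm => a3 x (a1 x hp) (a2 x hm)
```

The proof shows only that the inference is valid on this reading. Whether the premises are true, and A3 in particular, is what the replies dispute.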

This much of the argument is intended to show that artificial intelligence can never produce a machine with a mind by writing programs that manipulate symbols. The remainder of the argument addresses a different issue. Is the human brain running a program? In other words, is the computational theory of mind correct? He begins with an axiom that is intended to express the basic modern scientific consensus about brains and minds:

:(A4) Brains cause minds.

Searle claims that we can derive "immediately" and "trivially" that:

:(C2) Any other system capable of causing minds would have to have causal powers (at least) equivalent to those of brains.

::Brains must have something that causes a mind to exist. Science has yet to determine exactly what it is, but it must exist, because minds exist. Searle calls it "causal powers". "Causal powers" is whatever the brain uses to create a mind. If anything else can cause a mind to exist, it must have "equivalent causal powers". "Equivalent causal powers" is whatever else could be used to make a mind.

And from this he derives the further conclusions:

:(C3) Any artifact that produced mental phenomena, any artificial brain, would have to be able to duplicate the specific causal powers of brains, and it could not do that just by running a formal program.

::This follows from C1 and C2: Since no program can produce a mind, and "equivalent causal powers" produce minds, it follows that programs do not have "equivalent causal powers."

:(C4) The way that human brains actually produce mental phenomena cannot be solely by virtue of running a computer program.

::Since programs do not have "equivalent causal powers", "equivalent causal powers" produce minds, and brains produce minds, it follows that brains do not use programs to produce minds.

Refutations of Searle's argument take many different forms (see below). Computationalists and functionalists reject A3, arguing that "syntax" (as Searle describes it) can have "semantics" if the syntax has the right functional structure. Eliminative materialists reject A2, arguing that minds don't actually have "semantics": thoughts and other mental phenomena are inherently meaningless but nevertheless function as if they had meaning.

Replies

Replies to Searle's argument may be classified according to what they claim to show:

* Those which identify who speaks Chinese

* Those which demonstrate how meaningless symbols can become meaningful

* Those which suggest that the Chinese room should be redesigned in some way

* Those which contend that Searle's argument is misleading

* Those which argue that the argument makes false assumptions about subjective conscious experience and therefore proves nothing

Some of the arguments (robot and brain simulation, for example) fall into multiple categories.

Systems and virtual mind replies: finding the mind

These replies attempt to answer the question: since the man in the room does not speak Chinese, where is the mind that does? These replies address the key ontological issues of mind versus body and simulation vs. reality. All of the replies that identify the mind in the room are versions of "the system reply".

System reply

The basic version of the system reply argues that it is the "whole system" that understands Chinese. While the man understands only English, when he is combined with the program, scratch paper, pencils and file cabinets, they form a system that can understand Chinese. "Here, understanding is not being ascribed to the mere individual; rather it is being ascribed to this whole system of which he is a part", Searle explains.

Searle notes that (in this simple version of the reply) the "system" is nothing more than a collection of ordinary physical objects; it grants the power of understanding and consciousness to "the conjunction of that person and bits of paper" without making any effort to explain how this pile of objects has become a conscious, thinking being. Searle argues that no reasonable person should be satisfied with the reply, unless they are "under the grip of an ideology". For this reply to be remotely plausible, one must take it for granted that consciousness can be the product of an information processing "system", and does not require anything resembling the actual biology of the brain.

Searle then responds by simplifying this list of physical objects: he asks what happens if the man memorizes the rules and keeps track of everything in his head? Then the whole system consists of just one object: the man himself. Searle argues that if the man does not understand Chinese then the system does not understand Chinese either because now "the system" and "the man" both describe exactly the same object.

Critics of Searle's response argue that the program has allowed the man to have two minds in one head. If we assume a "mind" is a form of information processing, then the theory of computation can account for two computations occurring at once, namely (1) the computation for universal programmability (which is the function instantiated by the person and note-taking materials independently from any particular program contents) and (2) the computation of the Turing machine that is described by the program (which is instantiated by everything including the specific program). The theory of computation thus formally explains the open possibility that the second computation in the Chinese Room could entail a human-equivalent semantic understanding of the Chinese inputs. The focus belongs on the program's Turing machine rather than on the person's. However, from Searle's perspective, this argument is circular. The question at issue is whether consciousness is a form of information processing, and this reply requires that we make that assumption.

More sophisticated versions of the systems reply try to identify more precisely what "the system" is and they differ in exactly how they describe it. According to these replies, the "mind that speaks Chinese" could be such things as: the "software", a "program", a "running program", a simulation of the "neural correlates of consciousness", the "functional system", a "simulated mind", an "emergent property", or "a virtual mind".

Virtual mind reply

Marvin Minsky suggested a version of the system reply known as the "virtual mind reply". The term "virtual" is used in computer science to describe an object that appears to exist "in" a computer (or computer network) only because software makes it appear to exist. The objects "inside" computers (including files, folders, and so on) are all "virtual", except for the computer's electronic components. Similarly, Minsky proposes that a computer may contain a "mind" that is virtual in the same sense as virtual machines, virtual communities and virtual reality.

To clarify the distinction between the simple systems reply given above and virtual mind reply, David Cole notes that two simulations could be running on one system at the same time: one speaking Chinese and one speaking Korean. While there is only one system, there can be multiple "virtual minds," thus the "system" cannot be the "mind".
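Cole's counting point can be sketched in a few lines. The example assumes, purely for illustration, that each simulation is a rule table with its own private conversation history; the `VirtualMind` and `Room` classes and their fields are hypothetical names. The sketch shows only that one executing system can host two separate simulations. It takes no position on whether either simulation is a mind.

```python
from dataclasses import dataclass, field


@dataclass
class VirtualMind:
    """One simulation: a rule table plus its own private conversation history."""
    name: str
    rules: dict
    transcript: list = field(default_factory=list)

    def reply(self, message: str) -> str:
        answer = self.rules.get(message, "?")
        self.transcript.append((message, answer))
        return answer


class Room:
    """A single system that executes whichever rule table a message is addressed to."""

    def __init__(self, *minds: VirtualMind):
        self.minds = {mind.name: mind for mind in minds}

    def step(self, addressee: str, message: str) -> str:
        return self.minds[addressee].reply(message)


# Placeholder rule tables: one simulation answers in Chinese, one in Korean.
chinese = VirtualMind("chinese", {"你好": "你好"})
korean = VirtualMind("korean", {"안녕하세요": "안녕하세요"})
room = Room(chinese, korean)
```

After `room.step("chinese", "你好")`, only the Chinese simulation's transcript has grown. There is one `room` object but two separately tracked conversations, which is why the reply distinguishes the system from whatever it hosts.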

Searle responds that such a mind is at best a simulation, and writes: "No one supposes that computer simulations of a five-alarm fire will burn the neighborhood down or that a computer simulation of a rainstorm will leave us all drenched." Nicholas Fearn responds that, for some things, simulation is as good as the real thing. "When we call up the pocket calculator function on a desktop computer, the image of a pocket calculator appears on the screen. We don't complain that it isn't really a calculator, because the physical attributes of the device do not matter." The question is, is the human mind like the pocket calculator, essentially composed of information, where a perfect simulation of the thing just is the thing? Or is the mind like the rainstorm, a thing in the world that is more than just its simulation, and not realizable in full by a computer simulation? For decades, this question of simulation has led AI researchers and philosophers to consider whether the term "synthetic intelligence" is more appropriate than the common description of such intelligences as "artificial."

These replies provide an explanation of exactly who it is that understands Chinese. If there is something ''besides'' the man in the room that can understand Chinese, Searle cannot argue that (1) the man does not understand Chinese, therefore (2) nothing in the room understands Chinese. This, according to those who make this reply, shows that Searle's argument fails to prove that "strong AI" is false.

These replies, by themselves, do not provide any evidence that strong AI is true, however. They do not show that the system (or the virtual mind) understands Chinese, beyond the hypothetical premise that it passes the Turing test. Searle argues that, if we are to consider Strong AI remotely plausible, the Chinese Room is an example that requires explanation, and it is difficult or impossible to explain how consciousness might "emerge" from the room or how the system would have consciousness. As Searle writes, "the systems reply simply begs the question by insisting that the system must understand Chinese", and thus it either dodges the question or is hopelessly circular.

Robot and semantics replies: finding the meaning

As far as the person in the room is concerned, the symbols are just meaningless "squiggles." But if the Chinese room really "understands" what it is saying, then the symbols must get their meaning from somewhere. These arguments attempt to connect the symbols to the things they symbolize. These replies address Searle's concerns about intentionality, symbol grounding and syntax vs. semantics.

Robot reply

Suppose that instead of a room, the program was placed into a robot that could wander around and interact with its environment. This would allow a "causal connection" between the symbols and things they represent. Hans Moravec comments: "If we could graft a robot to a reasoning program, we wouldn't need a person to provide the meaning anymore: it would come from the physical world."

Searle's reply is to suppose that, unbeknownst to the individual in the Chinese room, some of the inputs came directly from a camera mounted on a robot, and some of the outputs were used to manipulate the arms and legs of the robot. Nevertheless, the person in the room is still just following the rules, and does not know what the symbols mean. Searle writes, "he doesn't see what comes into the robot's eyes."

Derived meaning

Some respond that the room, as Searle describes it, is connected to the world: through the Chinese speakers that it is "talking" to and through the programmers who designed the knowledge base in his file cabinet. The symbols Searle manipulates are already meaningful; they are just not meaningful to him.

Searle says that the symbols only have a "derived" meaning, like the meaning of words in books. The meaning of the symbols depends on the conscious understanding of the Chinese speakers and the programmers outside the room. The room, like a book, has no understanding of its own.

Contextualist reply

Some have argued that the meanings of the symbols would come from a vast "background" of commonsense knowledge encoded in the program and the filing cabinets. This would provide a "context" that would give the symbols their meaning.

Searle agrees that this background exists, but he does not agree that it can be built into programs. Hubert Dreyfus has also criticized the idea that the "background" can be represented symbolically.

To each of these suggestions, Searle's response is the same: no matter how much knowledge is written into the program and no matter how the program is connected to the world, he is still in the room manipulating symbols according to rules. His actions are syntactic and this can never explain to him what the symbols stand for. Searle writes "syntax is insufficient for semantics."

However, for those who accept that Searle's actions simulate a mind, separate from his own, the important question is not what the symbols mean to Searle but what they mean to the virtual mind. While Searle is trapped in the room, the virtual mind is not: it is connected to the outside world through the Chinese speakers it speaks to, through the programmers who gave it world knowledge, and through the cameras and other sensors that roboticists can supply.

Brain simulation and connectionist replies: redesigning the room

These arguments are all versions of the systems reply that identify a particular kind of system as being important; they identify some special technology that would create conscious understanding in a machine. (The "robot" and "commonsense knowledge" replies above also specify a certain kind of system as being important.)

Brain simulator reply

Suppose that the program simulated in fine detail the action of every neuron in the brain of a Chinese speaker. This strengthens the intuition that there would be no significant difference between the operation of the program and the operation of a live human brain.

Searle replies that such a simulation does not reproduce the important features of the brain: its causal and intentional states. He is adamant that "human mental phenomena [are] dependent on actual physical–chemical properties of actual human brains." Moreover, he argues:

China brain

What if we ask each citizen of China to simulate one neuron, using the telephone system to simulate the connections between axons and dendrites? In this version, it seems obvious that no individual would have any understanding of what the brain might be saying. It is also obvious that this system would be functionally equivalent to a brain, so if consciousness is a function, this system would be conscious.

Brain replacement scenario

In this scenario, we are asked to imagine that engineers have invented a tiny computer that simulates the action of an individual neuron. What would happen if we replaced one neuron at a time? Replacing one would clearly do nothing to change conscious awareness. Replacing all of them would create a digital computer that simulates a brain. If Searle is right, then conscious awareness must disappear during the procedure (either gradually or all at once). Searle's critics argue that there would be no point during the procedure at which he could claim that conscious awareness ends and mindless simulation begins. (See Ship of Theseus for a similar thought experiment.)

Connectionist replies

Closely related to the brain simulator reply, this claims that a massively parallel connectionist architecture would be capable of understanding. Modern deep learning is massively parallel and has successfully displayed intelligent behavior in many domains.

Nils Nilsson argues that modern AI is using digitized "dynamic signals" rather than symbols of the kind used by AI in 1980. Here it is the sampled signal which would have the semantics, not the individual numbers manipulated by the program. This is a different kind of machine than the one that Searle visualized.

Combination reply

This response combines the robot reply with the brain simulation reply, arguing that a brain simulation connected to the world through a robot body could have a mind.

Many mansions / wait till next year reply

Better technology in the future will allow computers to understand. Searle agrees that this is possible, and that there may be hardware other than brains that has conscious understanding, but he considers the point irrelevant.

These arguments (and the robot or common-sense knowledge replies) identify some special technology that would help create conscious understanding in a machine. They may be interpreted in two ways. Either they claim that (1) this technology is required for consciousness, the Chinese room does not or cannot implement it, and therefore the Chinese room cannot pass the Turing test or, even if it did, would not have conscious understanding; or they claim that (2) it is easier to see that the Chinese room has a mind if we visualize this technology as being used to create it.

In the first case, where features like a robot body or a connectionist architecture are required, Searle claims that strong AI (as he understands it) has been abandoned. The Chinese room has all the elements of a Turing complete machine, and thus is capable of simulating any digital computation whatsoever. If Searle's room cannot pass the Turing test then there is no other digital technology that could pass the Turing test. If Searle's room could pass the Turing test, but still does not have a mind, then the Turing test is not sufficient to determine if the room has a "mind". Either way, it denies one or the other of the positions Searle thinks of as "strong AI", proving his argument.

The brain arguments in particular deny strong AI if they assume that there is no simpler way to describe the mind than to create a program that is just as mysterious as the brain was. Searle writes "I thought the whole idea of strong AI was that we don't need to know how the brain works to know how the mind works." If computation does not provide an explanation of the human mind, then strong AI has failed, according to Searle.

Other critics hold that the room as Searle described it does, in fact, have a mind, but argue that this is difficult to see: Searle's description is correct but misleading. By redesigning the room more realistically, they hope to make this more obvious. In this case, these arguments are being used as appeals to intuition (see next section).

In fact, the room can just as easily be redesigned to weaken our intuitions. Ned Block's Blockhead argument suggests that the program could, in theory, be rewritten into a simple lookup table of rules of the form "if the user writes ''S'', reply with ''P'' and goto X". At least in principle, any program can be rewritten (or "refactored") into this form, even a brain simulation. In the blockhead scenario, the entire mental state is hidden in the letter X, which represents a memory address, a number associated with the next rule. It is hard to visualize that an instant of one's conscious experience can be captured in a single large number, yet this is exactly what "strong AI" claims. On the other hand, such a lookup table would be ridiculously large (to the point of being physically impossible), and the states could therefore be overly specific.
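The rule form described above, "if the user writes S, reply with P and goto X", can be illustrated with a toy table. The sketch below is only an illustration under invented assumptions: the states, inputs, and replies are hypothetical and do not come from Block's argument, and a real table of this kind would be astronomically larger.

```python
# Hypothetical Blockhead-style table: (state X, input S) -> (reply P, next state X').
# All entries are invented for illustration.
RULES = {
    (0, "hello"): ("hi, who are you?", 1),
    (1, "a visitor"): ("welcome, visitor", 2),
    (2, "hello"): ("we have already met", 2),
}


def step(state, user_input):
    """Apply one rule: reply with P and 'goto' the next state.

    Unknown (state, input) pairs get a placeholder reply and keep the state.
    """
    return RULES.get((state, user_input), ("...", state))


def converse(inputs, state=0):
    """Run a whole conversation; the only memory carried along is the state number."""
    replies = []
    for text in inputs:
        reply, state = step(state, text)
        replies.append(reply)
    return replies, state


replies, final_state = converse(["hello", "a visitor", "hello"])
```

The same input ("hello") produces different replies depending on the current state, which is the sense in which everything the table "remembers" about the conversation is packed into a single number.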

Searle argues that however the program is written or however the machine is connected to the world, the mind is being simulated by a simple step-by-step digital machine (or machines). These machines are always just like the man in the room: they understand nothing and do not speak Chinese. They are merely manipulating symbols without knowing what they mean. Searle writes: "I can have any formal program you like, but I still understand nothing."

Speed and complexity: appeals to intuition

The following arguments (and the intuitive interpretations of the arguments above) do not directly explain how a Chinese speaking mind could exist in Searle's room, or how the symbols he manipulates could become meaningful. However, by raising doubts about Searle's intuitions they support other positions, such as the system and robot replies. These arguments, if accepted, prevent Searle from claiming that his conclusion is obvious by undermining the intuitions that his certainty requires.

Several critics believe that Searle's argument relies entirely on intuitions. Block writes "Searle's argument depends for its force on intuitions that certain entities do not think."

Daniel Dennett describes the Chinese room argument as a misleading "intuition pump" and writes "Searle's thought experiment depends, illicitly, on your imagining too simple a case, an irrelevant case, and drawing the obvious conclusion from it."

Some of the arguments above also function as appeals to intuition, especially those that are intended to make it seem more plausible that the Chinese room contains a mind, which can include the robot, commonsense knowledge, brain simulation and connectionist replies. Several of the replies above also address the specific issue of complexity. The connectionist reply emphasizes that a working artificial intelligence system would have to be as complex and as interconnected as the human brain. The commonsense knowledge reply emphasizes that any program that passed a Turing test would have to be "an extraordinarily supple, sophisticated, and multilayered system, brimming with 'world knowledge' and meta-knowledge and meta-meta-knowledge", as Daniel Dennett explains.

Speed and complexity replies

Many of these critiques emphasize the speed and complexity of the human brain, which by some estimates processes information at 100 billion operations per second. Several critics point out that the man in the room would probably take millions of years to respond to a simple question, and would require "filing cabinets" of astronomical proportions. This brings the clarity of Searle's intuition into doubt.
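A rough back-of-the-envelope calculation shows how such timescales arise. The sketch below takes the 100 billion operations per second figure from the text; everything else is an assumption made for illustration, namely that a simple reply costs about one second of brain-equivalent processing and that the man completes one rule lookup by hand every ten minutes.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def years_to_reply(brain_ops_per_second, brain_seconds_per_reply, seconds_per_manual_step):
    """Estimate how long a person executing every operation by hand would need."""
    total_operations = brain_ops_per_second * brain_seconds_per_reply
    return total_operations * seconds_per_manual_step / SECONDS_PER_YEAR


# Assumed: one manual step per second (wildly optimistic for paper lookups).
optimistic_years = years_to_reply(100e9, 1, 1)

# Assumed: ten minutes per manual step, searching through filing cabinets.
slower_years = years_to_reply(100e9, 1, 600)
```

Under these assumptions the optimistic case already comes to thousands of years per reply, and the ten-minute case to roughly two million years. The estimate scales linearly with each assumed quantity, so it should be read as an order-of-magnitude illustration only.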

An especially vivid version of the speed and complexity reply is from Paul and Patricia Churchland. They propose this analogous thought experiment: "Consider a dark room containing a man holding a bar magnet or charged object. If the man pumps the magnet up and down, then, according to Maxwell's theory of artificial luminance (AL), it will initiate a spreading circle of electromagnetic waves and will thus be luminous. But as all of us who have toyed with magnets or charged balls well know, their forces (or any other forces for that matter), even when set in motion produce no luminance at all. It is inconceivable that you might constitute real luminance just by moving forces around!" The Churchlands' point is that the man would have to wave the magnet up and down something like 450 trillion times per second in order to see anything.
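The figure of 450 trillion oscillations per second can be checked against the standard relation between wavelength and frequency, wavelength = c / f. The short sketch below is only a sanity check; the function name is hypothetical, and the visible band of roughly 380 to 750 nanometres is an approximate, commonly quoted range rather than a value from the text.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact by definition of the metre


def wavelength_nm(frequency_hz):
    """Convert an electromagnetic frequency to a vacuum wavelength in nanometres."""
    return SPEED_OF_LIGHT_M_PER_S / frequency_hz * 1e9


# 450 trillion cycles per second, the figure quoted in the text.
wavelength = wavelength_nm(450e12)

# Approximate limits of human vision (assumed, commonly quoted values).
is_visible = 380 <= wavelength <= 750
```

The result is a wavelength of about 666 nanometres, which falls in the red part of the visible spectrum, consistent with the claim that only oscillation at this rate would produce anything the man could see.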

Stevan Harnad
