The sensitivity index or discriminability index or detectability index is a dimensionless statistic used in signal detection theory. A higher index indicates that the signal can be more readily detected.

Definition

The discriminability index is the separation between the means of two distributions (typically the signal and the noise distributions), in units of the standard deviation.

Equal variances/covariances

For two univariate distributions $a$ and $b$ with the same standard deviation $\sigma$, it is denoted by $d'$ ('dee-prime'):

: $d' = \frac{\left|\mu_a - \mu_b\right|}{\sigma}$.

In higher dimensions, i.e. with two multivariate distributions with the same variance-covariance matrix $\Sigma$ (whose symmetric square root, the standard deviation matrix, is $S$), this generalizes to the Mahalanobis distance between the two distributions:

: $d' = \sqrt{(\mu_a - \mu_b)^\top \Sigma^{-1} (\mu_a - \mu_b)} = \lVert S^{-1}(\mu_a - \mu_b) \rVert = \lVert \mu_a - \mu_b \rVert / \sigma_{\mathbf{u}}$,

where $\sigma_{\mathbf{u}} = 1 / \lVert S^{-1}\mathbf{u} \rVert$ is the 1d slice of the sd along the unit vector $\mathbf{u}$ through the means, i.e. the multivariate $d'$ equals the $d'$ along the 1d slice through the means.

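For two bivariate distributions with equal variance-covariance, this is given by:

: ${d'}^2 = \frac{1}{1 - \rho^2}\left({d'_x}^2 + {d'_y}^2 - 2\rho\, d'_x d'_y\right)$,

where $\rho$ is the correlation coefficient, and here $d'_x = \frac{\mu_{b_x} - \mu_{a_x}}{\sigma_x}$ and $d'_y = \frac{\mu_{b_y} - \mu_{a_y}}{\sigma_y}$, i.e. including the signs of the mean differences instead of the absolute values.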
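As a rough check of the bivariate formula, the sketch below computes $d'$ two ways: from the Mahalanobis quadratic form with an explicit 2x2 inverse, and from the signed per-dimension values $d'_x$ and $d'_y$. The function names and the example numbers are illustrative assumptions, not part of the article.

```python
import math

def mahalanobis_dprime_2d(mu_a, mu_b, sd_x, sd_y, rho):
    """d' between two bivariate distributions sharing one covariance matrix."""
    dx = mu_b[0] - mu_a[0]
    dy = mu_b[1] - mu_a[1]
    # Shared covariance matrix [[sxx, sxy], [sxy, syy]].
    sxx, syy, sxy = sd_x**2, sd_y**2, rho * sd_x * sd_y
    det = sxx * syy - sxy**2
    # Quadratic form delta^T Sigma^-1 delta, using the closed-form 2x2 inverse.
    q = (syy * dx * dx - 2 * sxy * dx * dy + sxx * dy * dy) / det
    return math.sqrt(q)

def bivariate_dprime(mu_a, mu_b, sd_x, sd_y, rho):
    """Same quantity from the signed per-dimension d' values."""
    dpx = (mu_b[0] - mu_a[0]) / sd_x
    dpy = (mu_b[1] - mu_a[1]) / sd_y
    return math.sqrt((dpx**2 + dpy**2 - 2 * rho * dpx * dpy) / (1 - rho**2))

# Hypothetical example: d'_x = d'_y = 1 with correlation 0.5.
print(mahalanobis_dprime_2d((0, 0), (1, 2), 1, 2, 0.5))
print(bivariate_dprime((0, 0), (1, 2), 1, 2, 0.5))
```

Both calls should agree at $\sqrt{4/3} \approx 1.155$, which is smaller than the value $\sqrt{2}$ that the same per-dimension separations would give without correlation.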

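$d'$ is also estimated as $Z(\text{hit rate}) - Z(\text{false alarm rate})$, where $Z$ is the inverse cumulative distribution function of the standard normal.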
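A minimal sketch of the two univariate computations, using only the Python standard library. The function names are illustrative; both rates must lie strictly between 0 and 1, since the inverse normal CDF is undefined at the endpoints.

```python
from statistics import NormalDist

def dprime(mu_a, mu_b, sigma):
    """d' from two means and a shared standard deviation."""
    return abs(mu_a - mu_b) / sigma

def dprime_from_rates(hit_rate, false_alarm_rate):
    """d' estimated as Z(hit rate) - Z(false alarm rate)."""
    z = NormalDist().inv_cdf  # inverse CDF of the standard normal
    return z(hit_rate) - z(false_alarm_rate)

# Hypothetical observer: unit-variance noise at 0 and signal at 1,
# with the criterion placed midway between the means.
std = NormalDist()
hit, fa = std.cdf(0.5), std.cdf(-0.5)
print(dprime(0.0, 1.0, 1.0), dprime_from_rates(hit, fa))
```

In this constructed case the rate-based estimate recovers the separation of the means, up to floating-point error.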

Unequal variances/covariances

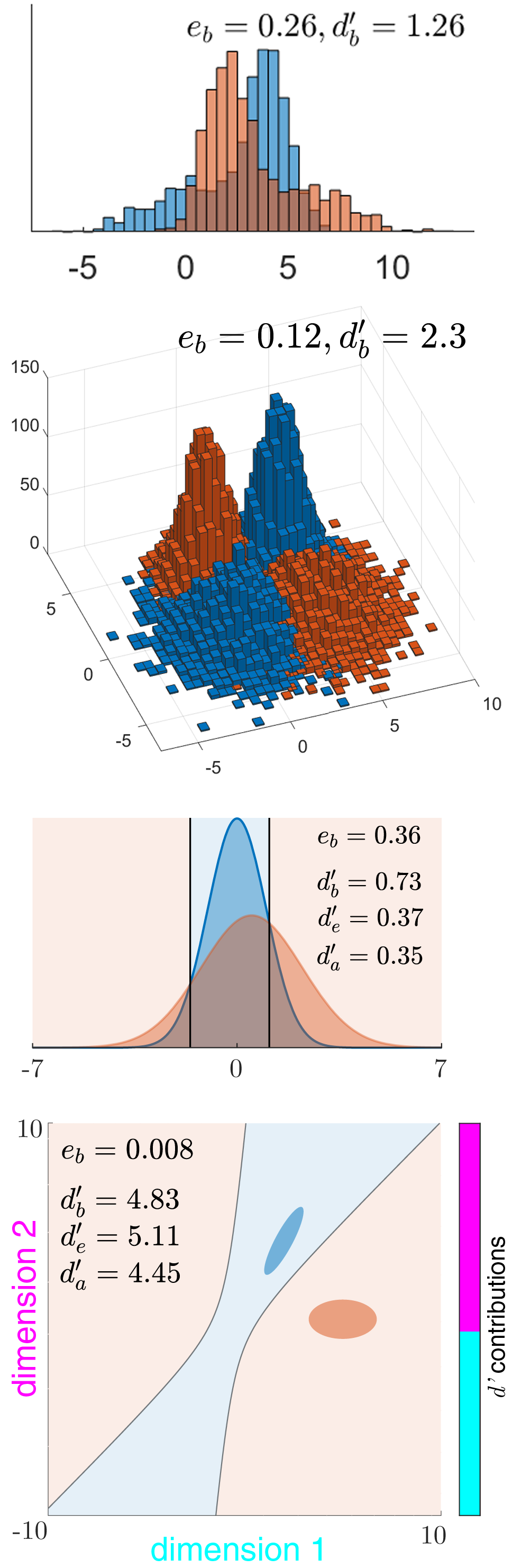

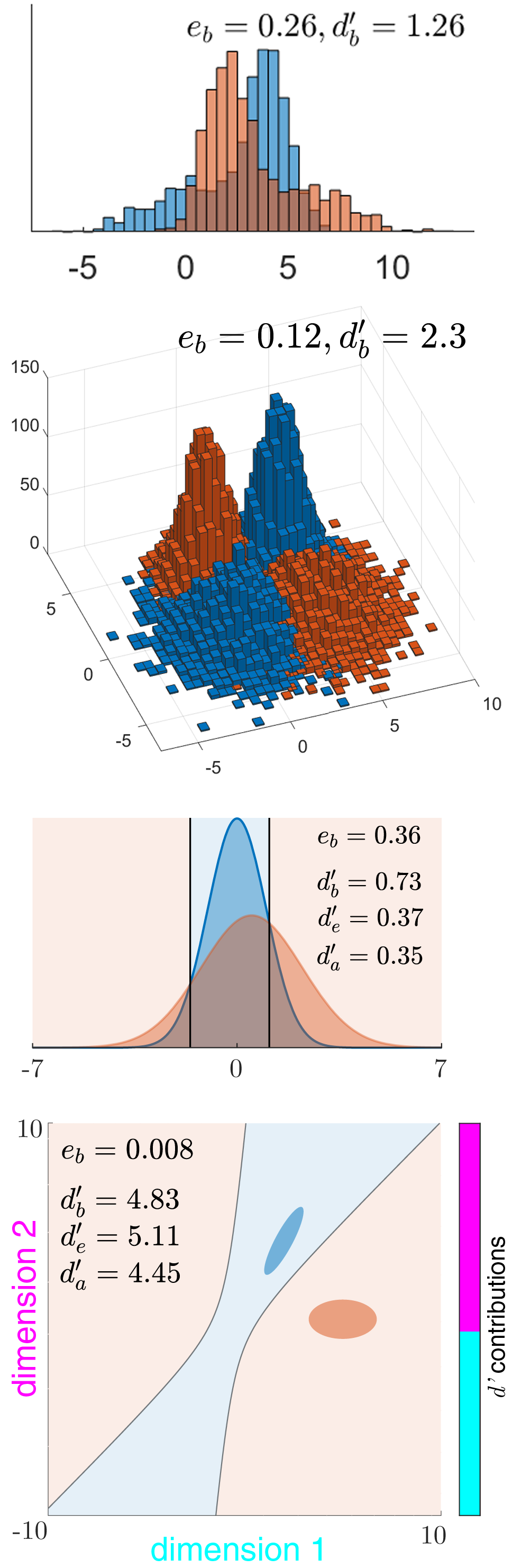

When the two distributions have different standard deviations (or in general dimensions, different covariance matrices), there exist several contending indices, all of which reduce to $d'$ for equal variance/covariance.

Bayes discriminability index

This is the maximum (Bayes-optimal) discriminability index for two distributions, based on the amount of their overlap, i.e. the optimal (Bayes) error of classification $e_b$ by an ideal observer, or its complement, the optimal accuracy $a_b$:

: $d'_b = -2Z(e_b) = 2Z(a_b)$,

where $Z$ is the inverse cumulative distribution function of the standard normal. The Bayes discriminability between univariate or multivariate normal distributions can be numerically computed (Matlab code), and may also be used as an approximation when the distributions are close to normal.

$d'_b$ is a positive-definite statistical distance measure that is free of assumptions about the distributions, like the Kullback-Leibler divergence $D_\text{KL}$. $D_\text{KL}(a,b)$ is asymmetric, whereas $d'_b(a,b)$ is symmetric for the two distributions. However, $d'_b$ does not satisfy the triangle inequality, so it is not a full metric.

In particular, for a yes/no task between two univariate normal distributions with means $\mu_a, \mu_b$ and variances $v_a > v_b$, the Bayes-optimal classification accuracies are:

: $p(A|a) = p\left({\chi'}^2_{1,\, v_a\lambda} > v_b c\right), \quad p(B|b) = p\left({\chi'}^2_{1,\, v_b\lambda} < v_a c\right)$,

where ${\chi'}^2$ denotes the non-central chi-squared distribution, $\lambda = \left(\frac{\mu_a - \mu_b}{v_a - v_b}\right)^2$, and $c = \lambda + \frac{\ln v_a - \ln v_b}{v_a - v_b}$. The Bayes discriminability is $d'_b = 2Z\left(\frac{p(A|a) + p(B|b)}{2}\right)$.

$d'_b$ can also be computed from the ROC curve of a yes/no task between two univariate normal distributions with a single shifting criterion. It can also be computed from the ROC curve of any two distributions (in any number of variables) with a shifting likelihood-ratio, by locating the point on the ROC curve that is farthest from the diagonal.

For a two-interval task between these distributions, the optimal accuracy is $a_b = p\left(\tilde{\chi}^2_{\mathbf{w}, \mathbf{k}, \boldsymbol{\lambda}, 0, 0} > 0\right)$ ($\tilde{\chi}^2$ denotes the generalized chi-squared distribution), where $\mathbf{w} = [v_a, -v_b]$, $\mathbf{k} = [1, 1]$, and $\boldsymbol{\lambda} = \left(\frac{\mu_a - \mu_b}{v_a - v_b}\right)^2 [v_a, v_b]$. The Bayes discriminability is $d'_b = 2Z(a_b)$.
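The yes/no formulas for two univariate normals can be sketched with the standard library alone, under two assumptions that are not stated in the text: equal prior probabilities for the two distributions, and the identity that a non-central chi-squared variable with 1 degree of freedom and non-centrality $s^2$ is distributed as $(Z + s)^2$ for a standard normal $Z$. The helper names are hypothetical.

```python
import math
from statistics import NormalDist

_PHI = NormalDist().cdf    # standard normal CDF
_Z = NormalDist().inv_cdf  # its inverse

def ncx2_sf_1dof(t, nc):
    """P(X > t) for a non-central chi-squared X with 1 degree of freedom.

    Uses X = (Z + sqrt(nc))**2 with Z standard normal.
    """
    if t <= 0:
        return 1.0
    r, s = math.sqrt(t), math.sqrt(nc)
    return 1.0 - (_PHI(r - s) - _PHI(-r - s))

def bayes_dprime_univariate(mu_a, var_a, mu_b, var_b):
    """Bayes d' for two univariate normals (assumes var_a > var_b, equal priors)."""
    if var_a <= var_b:
        raise ValueError("this sketch assumes var_a > var_b")
    lam = ((mu_a - mu_b) / (var_a - var_b)) ** 2
    c = lam + (math.log(var_a) - math.log(var_b)) / (var_a - var_b)
    p_A_given_a = ncx2_sf_1dof(var_b * c, var_a * lam)
    p_B_given_b = 1.0 - ncx2_sf_1dof(var_a * c, var_b * lam)
    accuracy = 0.5 * (p_A_given_a + p_B_given_b)
    # inv_cdf raises if accuracy rounds to exactly 1.0 (extremely separated inputs).
    return 2.0 * _Z(accuracy)

# Equal means but different variances: the distributions are still discriminable.
print(bayes_dprime_univariate(0.0, 4.0, 0.0, 1.0))
```

With equal means the mean-based indices are zero, while this index stays positive because the variance difference alone carries information.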

RMS sd discriminability index

A common approximate (i.e. sub-optimal) discriminability index that has a closed form is obtained by taking the average of the variances, i.e. the rms of the two standard deviations: $d'_a = \left|\mu_a - \mu_b\right| / \sigma_\text{rms}$ (also denoted by $d_a$). It is $\sqrt{2}$ times the $z$-score of the area under the receiver operating characteristic curve (AUC) of a single-criterion observer. This index is extended to general dimensions as the Mahalanobis distance using the pooled covariance, i.e. with $S_\text{rms} = \left[\left(\Sigma_a + \Sigma_b\right)/2\right]^{1/2}$ as the common sd matrix.
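The relation between the rms index and the AUC can be checked numerically for normal distributions. The sketch below assumes both distributions are normal, so that the single-criterion AUC equals the probability that a draw from the higher-mean distribution exceeds a draw from the other; function names and example values are illustrative.

```python
import math
from statistics import NormalDist

def dprime_rms(mu_a, sd_a, mu_b, sd_b):
    """Mean separation divided by the rms of the two standard deviations."""
    sd_rms = math.sqrt((sd_a**2 + sd_b**2) / 2)
    return abs(mu_a - mu_b) / sd_rms

def auc_single_criterion(mu_a, sd_a, mu_b, sd_b):
    """AUC for two normals: P(draw from higher-mean dist > draw from the other)."""
    # The difference of two independent normals is normal.
    diff = NormalDist(abs(mu_a - mu_b), math.sqrt(sd_a**2 + sd_b**2))
    return 1.0 - diff.cdf(0.0)

d_a = dprime_rms(0.0, 1.0, 2.0, 3.0)
auc = auc_single_criterion(0.0, 1.0, 2.0, 3.0)
# The two printed values should match: d'_a and sqrt(2) * Z(AUC).
print(d_a, math.sqrt(2) * NormalDist().inv_cdf(auc))
```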

Average sd discriminability index

Another index is $d'_e = \left|\mu_a - \mu_b\right| / \sigma_\text{avg}$, extended to general dimensions using $S_\text{avg} = \left(S_a + S_b\right)/2$ as the common sd matrix.

Comparison of the indices

It has been shown that for two univariate normal distributions, $d'_a \leq d'_e \leq d'_b$, and for multivariate normal distributions, $d'_a \leq d'_e$ still.

Thus, $d'_a$ and $d'_e$ underestimate the maximum discriminability $d'_b$ of univariate normal distributions. $d'_a$ can underestimate $d'_b$ by a maximum of approximately 30%. At the limit of high discriminability for univariate normal distributions, $d'_e$ converges to $d'_b$. These results often hold true in higher dimensions, but not always. Simpson and Fitter promoted $d'_a$ as the best index, particularly for two-interval tasks, but Das and Geisler have shown that $d'_b$ is the optimal discriminability in all cases, and $d'_e$ is often a better closed-form approximation than $d'_a$, even for two-interval tasks.

The approximate index $d'_{gm}$, which uses the geometric mean of the sd's, is less than $d'_b$ at small discriminability, but greater at large discriminability.
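The ordering of the three indices can be illustrated for univariate normals. This sketch assumes equal priors, so that the Bayes error is half the overlap area of the two densities, and it relies on `NormalDist.overlap` from the Python standard library to get that area. The function name and the example parameters are hypothetical.

```python
import math
from statistics import NormalDist

def dprime_indices(mu_a, sd_a, mu_b, sd_b):
    """Return (d'_a, d'_e, d'_b) for two univariate normal distributions."""
    gap = abs(mu_a - mu_b)
    d_rms = gap / math.sqrt((sd_a**2 + sd_b**2) / 2)  # rms sd index
    d_avg = gap / ((sd_a + sd_b) / 2)                 # average sd index

    # Overlap area of the two densities; with equal priors the Bayes
    # error is half of it, and the optimal accuracy is its complement.
    overlap = NormalDist(mu_a, sd_a).overlap(NormalDist(mu_b, sd_b))
    accuracy = 1.0 - 0.5 * overlap
    d_bayes = 2.0 * NormalDist().inv_cdf(accuracy)
    return d_rms, d_avg, d_bayes

for params in [(0.0, 2.0, 1.0, 1.0), (0.0, 1.5, 3.0, 1.0), (0.0, 1.0, 1.5, 1.0)]:
    print(params, dprime_indices(*params))
```

In the last parameter set the standard deviations are equal, so all three indices should coincide with the ordinary $d'$.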

Contribution to discriminability by each dimension

In general, the contribution to the total discriminability by each dimension or feature may be measured using the amount by which the discriminability drops when that dimension is removed. If the total Bayes discriminability is $d'$ and the Bayes discriminability with dimension $i$ removed is $d'_{-i}$, we can define the contribution of dimension $i$ as $\sqrt{{d'}^2 - {d'_{-i}}^2}$. This is the same as the individual discriminability of dimension $i$ when the covariance matrices are equal and diagonal, but in the other cases, this measure more accurately reflects the contribution of a dimension than its individual discriminability.

Scaling the discriminability of two distributions

We may sometimes want to scale the discriminability of two data distributions by moving them closer together or farther apart. One such case is when we are modeling a detection or classification task, and the model performance exceeds that of the subject or observed data. In that case, we can move the model variable distributions closer together so that the model matches the observed performance, while also predicting which specific data points should start overlapping and be misclassified.

There are several ways of doing this. One is to compute the mean vector and covariance matrix of the two distributions, then effect a linear transformation to interpolate the mean and sd matrix (square root of the covariance matrix) of one of the distributions towards the other.
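A univariate sketch of this first approach is given here as an illustration only. It assumes linear interpolation of the mean and of the sd with a parameter `t` (0 leaves the sample unchanged, 1 gives it the mean and sd of the other sample); the article does not specify the interpolation scheme, and the multivariate version would use sd matrices in place of the scalar sd. The function name is hypothetical.

```python
from statistics import mean, pstdev

def scale_toward(sample_a, sample_b, t):
    """Linearly move sample_a toward sample_b in mean and sd.

    Each point keeps its standardized position within sample_a, so the
    result also shows which individual points end up overlapping.
    Requires sample_a to have non-zero spread.
    """
    mu_a, mu_b = mean(sample_a), mean(sample_b)
    sd_a, sd_b = pstdev(sample_a), pstdev(sample_b)
    mu_new = (1 - t) * mu_a + t * mu_b
    sd_new = (1 - t) * sd_a + t * sd_b
    return [mu_new + (x - mu_a) * sd_new / sd_a for x in sample_a]

a = [0.0, 1.0, 2.0, 3.0, 4.0]
b = [10.0, 12.0, 14.0, 16.0, 18.0]
print(scale_toward(a, b, 0.5))  # halfway between the two samples
```

Values of `t` below 0 would push the samples farther apart, which corresponds to increasing the discriminability instead of reducing it.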

Another way is to compute the decision variables of the data points (the log likelihood ratio that a point belongs to one distribution vs. the other) under a multinormal model, then move these decision variables closer together or farther apart.

See also

* Receiver operating characteristic (ROC)
* Summary statistics
* Effect size

External links

* Interactive signal detection theory tutorial, including calculation of d′.