Statistical Randomness

A numeric sequence is said to be statistically random when it contains no recognizable patterns or regularities; sequences such as the results of an ideal dice roll or the digits of π exhibit statistical randomness. Statistical randomness does not necessarily imply "true" randomness, i.e., objective unpredictability. Pseudorandomness is sufficient for many uses, such as statistics, hence the name ''statistical'' randomness. ''Global randomness'' and ''local randomness'' are different. Most philosophical conceptions of randomness are global, because they are based on the idea that "in the long run" a sequence looks truly random, even if certain sub-sequences would ''not'' look random. In a "truly" random sequence of numbers of sufficient length, for example, it is probable there would be long sequences of nothing but repeating numbers, though on the whole the sequence might be random. ''Local'' randomness refers to the idea that there can be minimum s ...

Sequence

In mathematics, a sequence is an enumerated collection of objects in which repetitions are allowed and order matters. Like a set, it contains members (also called ''elements'', or ''terms''). The number of elements (possibly infinite) is called the ''length'' of the sequence. Unlike a set, the same elements can appear multiple times at different positions in a sequence, and the order matters. Formally, a sequence can be defined as a function from natural numbers (the positions of elements in the sequence) to the elements at each position. The notion of a sequence can be generalized to an indexed family, defined as a function from an ''arbitrary'' index set. For example, (M, A, R, Y) is a sequence of letters with the letter "M" first and "Y" last. This sequence differs from (A, R, M, Y). Also, the sequence (1, 1, 2, 3, 5, 8), which contains the number 1 at two different positions, is a valid sequence. Sequences can be ''finite'', as in these examples, or ...

George Marsaglia

George Marsaglia (March 12, 1924 – February 15, 2011) was an American mathematician and computer scientist. He is best known for creating the diehard tests, a suite of software for measuring statistical randomness.

Research on random numbers

George Marsaglia established the lattice structure of linear congruential generators in the paper "Random numbers fall mainly in the planes", later termed Marsaglia's theorem. This phenomenon means that ''n''-tuples with coordinates obtained from consecutive use of the generator will lie on a small number of equally spaced hyperplanes in ''n''-dimensional space. He also developed the diehard tests, a series of tests to determine whether or not a sequence of numbers has the statistical properties that could be expected from a random sequence. In 1995 he published a CD-ROM of random numbers, which included the diehard tests. His diehard paper came with the quotation "Nothing is random, only uncertain" attributed to ''Gail Gasram'', ...
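The lattice structure can be seen in a small experiment. The sketch below uses RANDU (multiplier 65539, modulus 2^31) purely as an illustrative linear congruential generator; the choice of generator, the seed, and the helper name `randu` are assumptions made for this example and do not come from the text above. For this generator, every three consecutive outputs satisfy one fixed linear relation modulo 2^31, which is why the triples fall on a small number of parallel planes.

```python
def randu(seed, n):
    """Yield n outputs of the illustrative LCG x -> 65539 * x mod 2**31."""
    x = seed
    for _ in range(n):
        x = (65539 * x) % 2**31
        yield x


xs = list(randu(seed=1, n=1000))

# Because 65539**2 = 6 * 65539 - 9 (mod 2**31), each consecutive triple obeys
# x[k+2] - 6*x[k+1] + 9*x[k] = 0 (mod 2**31).
planar = all(
    (xs[k + 2] - 6 * xs[k + 1] + 9 * xs[k]) % 2**31 == 0
    for k in range(len(xs) - 2)
)
print(planar)
```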

Spectral Density Estimation

In statistical signal processing, the goal of spectral density estimation (SDE) or simply spectral estimation is to estimate the spectral density (also known as the power spectral density) of a signal from a sequence of time samples of the signal. Intuitively speaking, the spectral density characterizes the frequency content of the signal. One purpose of estimating the spectral density is to detect any periodicities in the data, by observing peaks at the frequencies corresponding to these periodicities. Some SDE techniques assume that a signal is composed of a limited (usually small) number of generating frequencies plus noise and seek to find the location and intensity of the generated frequencies. Others make no assumption on the number of components and seek to estimate the whole generating spectrum.

Overview

Spectrum analysis, also referred to as frequency domain analysis or spectral density estimation, is the technical process of decomposing a complex signal into s ...
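Detecting a periodicity by looking for a spectral peak can be sketched with a plain periodogram. The example below is a minimal illustration under stated assumptions: it uses a naive discrete Fourier transform, the normalization |X_k|^2 / N (one of several conventions), and a noise-free test signal; the names `periodogram`, `signal`, and `peak` are hypothetical.

```python
import cmath
import math


def periodogram(x):
    """Return |DFT(x)[k]|**2 / N for k = 0 .. N//2 (naive O(N^2) transform)."""
    n = len(x)
    return [
        abs(sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))) ** 2 / n
        for k in range(n // 2 + 1)
    ]


n = 64
# A sine wave completing exactly 5 cycles over the 64 samples.
signal = [math.sin(2 * math.pi * 5 * t / n) for t in range(n)]
power = periodogram(signal)

# The index of the largest value identifies the dominant frequency bin.
peak = max(range(len(power)), key=power.__getitem__)
print(peak)
```

For long signals a fast Fourier transform would replace the naive sum; the quadratic version is kept here only to stay dependency-free.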

Kolmogorov–Smirnov Test

In statistics, the Kolmogorov–Smirnov test (also K–S test or KS test) is a nonparametric test of the equality of continuous (or discontinuous, see Section 2.2 on discrete and mixed null distributions), one-dimensional probability distributions. It can be used to test whether a sample came from a given reference probability distribution (one-sample K–S test), or to test whether two samples came from the same distribution (two-sample K–S test). Intuitively, it provides a method to qualitatively answer the question "How likely is it that we would see a collection of samples like this if they were drawn from that probability distribution?" or, in the second case, "How likely is it that we would see two sets of samples like this if they were drawn from the same (but unknown) probability distribution?". It is named after Andrey Kolmogorov and Nikolai Smirnov. The Kolmogorov–Smirnov statistic quantifies ...
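As an illustration of the one-sample case, the sketch below computes the usual one-sample statistic, the largest vertical gap between the empirical distribution function of the sample and a reference CDF. The reference distribution (uniform on [0, 1]), the sample values, and the function names are assumptions chosen for the example; turning the statistic into a p-value is not shown.

```python
def ks_statistic(sample, cdf):
    """Largest gap between the empirical CDF of sample and the reference cdf."""
    xs = sorted(sample)
    n = len(xs)
    d = 0.0
    for i, x in enumerate(xs, start=1):
        f = cdf(x)
        # The empirical CDF jumps from (i - 1)/n to i/n at x; check both sides.
        d = max(d, i / n - f, f - (i - 1) / n)
    return d


def uniform_cdf(x):
    """CDF of the uniform distribution on [0, 1]."""
    return min(1.0, max(0.0, x))


sample = [0.1, 0.4, 0.7, 0.9]
d = ks_statistic(sample, uniform_cdf)
print(d)
```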

Autocorrelation

Autocorrelation, sometimes known as serial correlation in the discrete time case, measures the correlation of a signal with a delayed copy of itself. Essentially, it quantifies the similarity between observations of a random variable at different points in time. The analysis of autocorrelation is a mathematical tool for identifying repeating patterns or hidden periodicities within a signal obscured by noise. Autocorrelation is widely used in signal processing, time domain and time series analysis to understand the behavior of data over time. Different fields of study define autocorrelation differently, and not all of these definitions are equivalent. In some fields, the term is used interchangeably with autocovariance. Various time series models incorporate autocorrelation, such as unit root processes, trend-stationary processes, autoregressive processes, and moving average processes.

Autocorrelation of stochastic processes

In statistics, the autocorrelation of a real ...
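A minimal sketch of one common sample estimate follows. Since definitions differ between fields, the normalization used here (lagged covariance sum divided by the lag-0 sum of squares) is an assumption, as are the function name and the alternating test series.

```python
def autocorrelation(x, lag):
    """Sample autocorrelation of x at the given lag (one common convention)."""
    n = len(x)
    mean = sum(x) / n
    var = sum((v - mean) ** 2 for v in x)
    cov = sum((x[t] - mean) * (x[t + lag] - mean) for t in range(n - lag))
    return cov / var


# A strictly alternating series repeats with period 2.
series = [1, -1] * 8
r1 = autocorrelation(series, 1)  # strongly negative: neighbours have opposite sign
r2 = autocorrelation(series, 2)  # strongly positive: values two steps apart agree
print(r1, r2)
```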

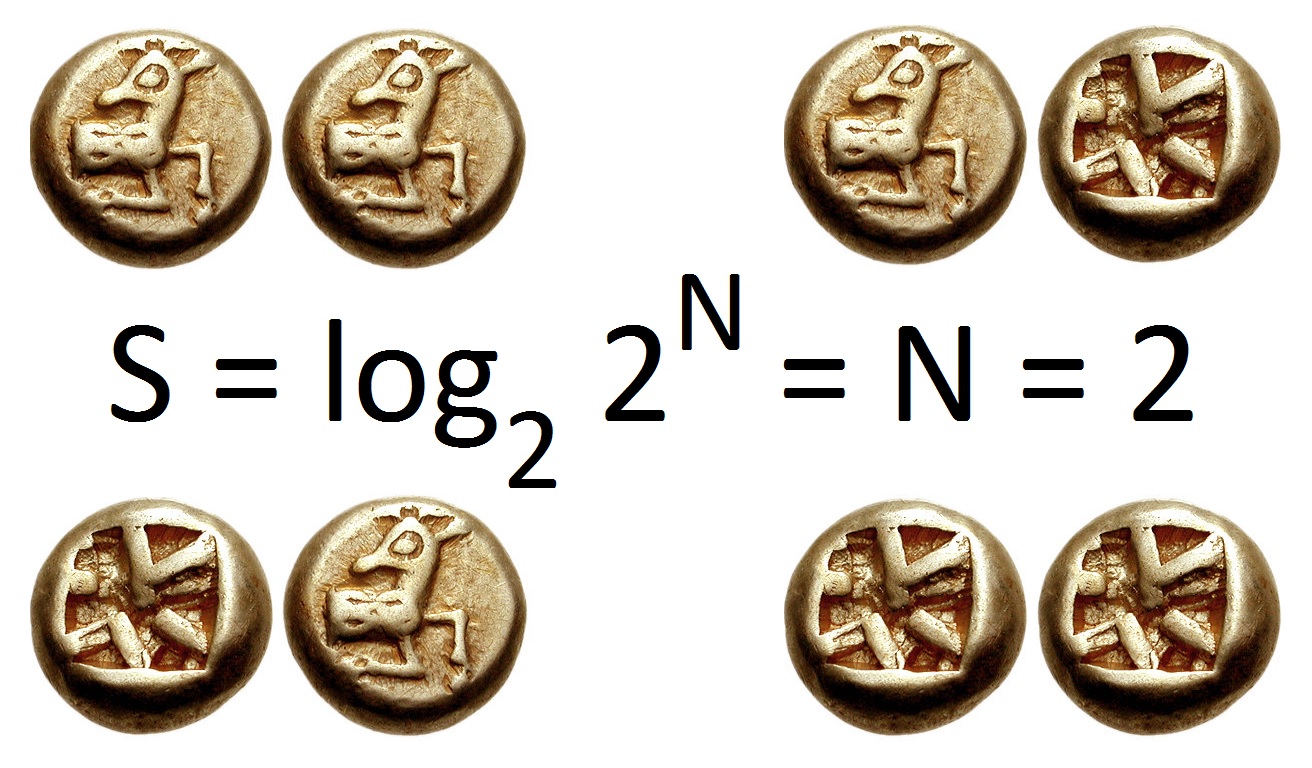

Information Entropy

In information theory, the entropy of a random variable quantifies the average level of uncertainty or information associated with the variable's potential states or possible outcomes. This measures the expected amount of information needed to describe the state of the variable, considering the distribution of probabilities across all potential states. Given a discrete random variable X, which may be any member x within the set \mathcal{X} and is distributed according to p\colon \mathcal{X}\to[0, 1], the entropy is \Eta(X) := -\sum_{x \in \mathcal{X}} p(x) \log p(x), where \Sigma denotes the sum over the variable's possible values. The choice of base for \log, the logarithm, varies for different applications. Base 2 gives the unit of bits (or "shannons"), while base ''e'' gives "natural units" (nats), and base 10 gives units of "dits", "bans", or "hartleys". An equivalent definition of entropy is the expected value of the self-information of a variable. The concept of information entropy was ...
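The definition translates directly into a few lines of code. This is a minimal sketch: the function name `entropy` and the example distributions are assumptions, and outcomes with zero probability are skipped, following the usual convention that they contribute nothing to the sum.

```python
import math


def entropy(probs, base=2):
    """Entropy of a discrete distribution given as a list of probabilities."""
    return -sum(p * math.log(p, base) for p in probs if p > 0)


fair_coin = entropy([0.5, 0.5])        # in bits (base 2)
four_way = entropy([0.25] * 4)         # in bits (base 2)
certain = entropy([1.0, 0.0])          # no uncertainty at all
fair_coin_nats = entropy([0.5, 0.5], base=math.e)
print(fair_coin, four_way, certain, fair_coin_nats)
```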

Wald–Wolfowitz Runs Test

The Wald–Wolfowitz runs test (or simply runs test), named after statisticians Abraham Wald and Jacob Wolfowitz, is a non-parametric statistical test that checks a randomness hypothesis for a two-valued data sequence. More precisely, it can be used to test the hypothesis that the elements of the sequence are mutually independent.

Definition

A ''run'' of a sequence is a maximal non-empty segment of the sequence consisting of adjacent equal elements. For example, the 21-element-long sequence : + + + + − − − + + + − + + + + + + − − − − consists of 6 runs, with lengths 4, 3, 3, 1, 6, and 4. The runs test is based on the null hypothesis that each element in the sequence is independently drawn from the same distribution. Under the null hypothesis, the number of runs in a sequence of ''N'' elements (''N'' is the number of elements, not the number of runs) is a random variable whose conditional distribution given the observation of ''N''+ positive values (''N''+ is the nu ...
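The run counting in the example above can be reproduced with a short sketch. The ASCII encoding of the sequence and the variable names are assumptions made for illustration. The last lines also evaluate the standard expression for the expected number of runs under the null hypothesis, 2·N₊·N₋/N + 1, which is not stated in the truncated text above and is included here only as a reference point.

```python
from itertools import groupby

# The 21-element example sequence, written with ASCII '+' and '-'.
sequence = "++++---+++-++++++----"

# groupby collapses adjacent equal symbols, so each group is one run.
run_lengths = [len(list(group)) for _, group in groupby(sequence)]
num_runs = len(run_lengths)

n_plus = sequence.count("+")
n_minus = sequence.count("-")
n = n_plus + n_minus

# Expected number of runs under the null hypothesis, given n_plus and n_minus.
expected_runs = 2 * n_plus * n_minus / n + 1
print(run_lengths, num_runs, expected_runs)
```

Here 6 observed runs against roughly 10.9 expected suggests more clustering than independence would typically produce, although a formal decision would also need the variance of the run count.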

Binomial Distribution

In probability theory and statistics, the binomial distribution with parameters ''n'' and ''p'' is the discrete probability distribution of the number of successes in a sequence of ''n'' independent experiments, each asking a yes–no question, and each with its own Boolean-valued outcome: ''success'' (with probability ''p'') or ''failure'' (with probability ''q'' = 1 − ''p''). A single success/failure experiment is also called a Bernoulli trial or Bernoulli experiment, and a sequence of outcomes is called a Bernoulli process; for a single trial, i.e., ''n'' = 1, the binomial distribution is a Bernoulli distribution. The binomial distribution is the basis for the binomial test of statistical significance. The binomial distribution is frequently used to model the number of successes in a sample of size ''n'' drawn with replacement from a population of size ''N''. If the sampling is carried out without replacement, the draws ar ...
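As a worked illustration, the probability of exactly ''k'' successes in ''n'' trials is C(''n'', ''k'') · ''p''^''k'' · (1 − ''p'')^(''n'' − ''k''). The sketch below evaluates it with the standard library; the function name `binomial_pmf` and the chosen parameter values are assumptions for the example.

```python
from math import comb


def binomial_pmf(k, n, p):
    """Probability of exactly k successes in n independent trials."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


# Four fair coin flips: probability of exactly two heads.
two_heads = binomial_pmf(2, 4, 0.5)

# The probabilities over k = 0 .. n should add up to 1.
total = sum(binomial_pmf(k, 10, 0.3) for k in range(11))

# With n = 1 the distribution reduces to a Bernoulli distribution.
bernoulli = [binomial_pmf(k, 1, 0.3) for k in (0, 1)]
print(two_heads, total, bernoulli)
```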

Quasi-random

In mathematics, a low-discrepancy sequence is a sequence with the property that for all values of N, its subsequence x_1, \ldots, x_N has a low discrepancy. Roughly speaking, the discrepancy of a sequence is low if the proportion of points in the sequence falling into an arbitrary set ''B'' is close to proportional to the measure of ''B'', as would happen on average (but not for particular samples) in the case of an equidistributed sequence. Specific definitions of discrepancy differ regarding the choice of ''B'' (hyperspheres, hypercubes, etc.) and how the discrepancy for every ''B'' is computed (usually normalized) and combined (usually by taking the worst value). Low-discrepancy sequences are also called quasirandom sequences, due to their common use as a replacement for uniformly distributed random numbers. The "quasi" modifier is used to denote more clearly that the values of a low-discrepancy sequence are neither random nor pseudorandom, but such sequences share some propertie ...
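A classic one-dimensional example is the van der Corput sequence, which is not named in the text above and is used here only as an illustration. The sketch mirrors the base-2 digits of the index around the radix point, so each new point lands in the largest remaining gap of the unit interval; the function name and the number of points generated are assumptions.

```python
def van_der_corput(n, base=2):
    """Return the n-th van der Corput point by reflecting the digits of n."""
    q, denom = 0.0, 1.0
    while n > 0:
        n, digit = divmod(n, base)
        denom *= base
        q += digit / denom
    return q


points = [van_der_corput(i) for i in range(1, 9)]
print(points)

# Every prefix of length N leaves no large empty stretch in [0, 1):
# after 7 points the sorted values are evenly spaced at multiples of 1/8.
first_seven = sorted(points[:7])
print(first_seven)
```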

Pseudorandom Number Generator

A pseudorandom number generator (PRNG), also known as a deterministic random bit generator (DRBG), is an algorithm for generating a sequence of numbers whose properties approximate the properties of sequences of random numbers. The PRNG-generated sequence is not truly random, because it is completely determined by an initial value, called the PRNG's ''seed'' (which may include truly random values). Although sequences that are closer to truly random can be generated using hardware random number generators, ''pseudorandom number generators'' are important in practice for their speed in number generation and their reproducibility. PRNGs are central in applications such as simulations (e.g. for the Monte Carlo method), electronic games (e.g. for procedural generation), and cryptography. Cryptographic applications require the output not to be predictable from earlier outputs, and more elabora ...
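The dependence on the seed is easy to demonstrate. The sketch below uses Python's standard `random.Random` class as the generator; the helper name `draw` and the seed values are assumptions for illustration. This generator is meant for simulation-style use and is not suitable for the cryptographic applications mentioned above.

```python
import random


def draw(seed, n=5):
    """Return the first n floats produced by a generator started from seed."""
    rng = random.Random(seed)
    return [rng.random() for _ in range(n)]


first = draw(42)
second = draw(42)   # same seed: the sequence is reproduced exactly
other = draw(43)    # different seed: a different sequence

print(first == second)
print(first == other)
```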

Yongge Wang

Yongge Wang (born 1967) is a computer science professor at the University of North Carolina at Charlotte specializing in algorithmic complexity and cryptography. He is the inventor of IEEE P1363 cryptographic standards SRP5 and WANG-KE and has contributed to the mathematical theory of algorithmic randomness. He co-authored a paper demonstrating that a recursively enumerable real number is an algorithmically random sequence if and only if it is a Chaitin's constant for some encoding of programs. He also showed the separation of Schnorr randomness from recursive randomness. He also invented a distance-based statistical testing technique to improve NIST SP800-22 testing in randomness tests. In cryptographic research, he is known fo ...