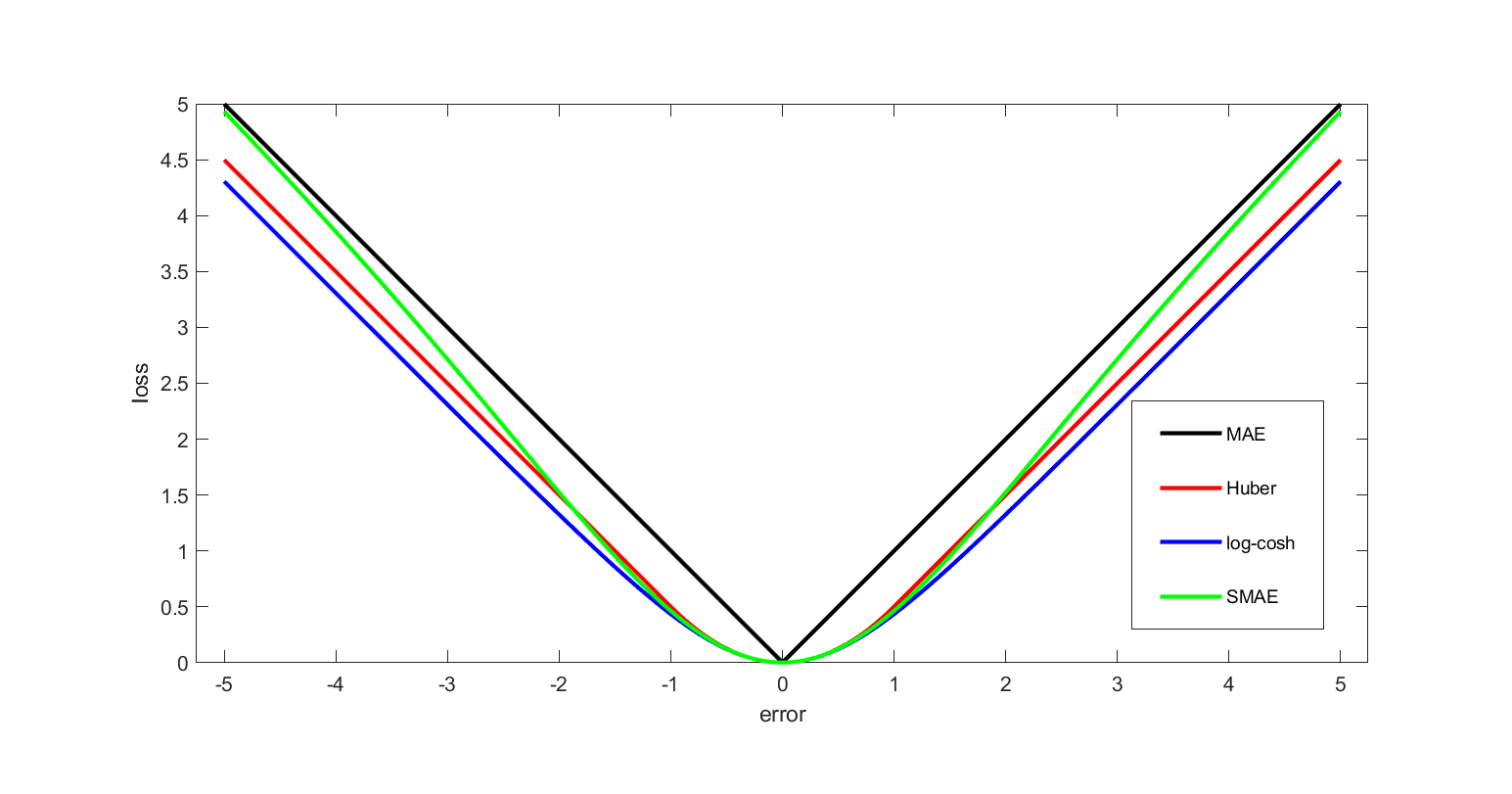

Loss Functions

In mathematical optimization and decision theory, a loss function or cost function (sometimes also called an error function) is a function that maps an event or values of one or more variables onto a real number intuitively representing some "cost" associated with the event. An optimization problem seeks to minimize a loss function. An objective function is either a loss function or its opposite (in specific domains, variously called a reward function, a profit function, a utility function, a fitness function, etc.), in which case it is to be maximized. The loss function could include terms from several levels of the hierarchy. In statistics, typically a loss function is used for parameter estimation, and the event in question is some function of the difference between estimated and true values for an instance of data. The concept, as old as Laplace, was reintroduced in statistics by Abraham Wald in the middle of the 20th century. In the context of economics, for example, th ...
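
As a rough illustration of a loss being "some function of the difference between estimated and true values", the sketch below scores candidate estimates under two common losses. The function names, the data, and the grid of candidates are all illustrative assumptions, not part of the entry.

```python
from statistics import mean

def squared_loss(estimate, true_value):
    # Penalizes large errors more heavily than small ones.
    return (estimate - true_value) ** 2

def absolute_loss(estimate, true_value):
    # Penalizes errors in proportion to their size.
    return abs(estimate - true_value)

def average_loss(loss, estimate, observations):
    # Average cost of using one estimate for every observation.
    return mean(loss(estimate, x) for x in observations)

# Hypothetical data with one outlier.
observations = [1.0, 2.0, 2.0, 3.0, 12.0]

# Candidate estimates 0.0, 0.1, ..., 15.0 (a coarse grid, for illustration only).
candidates = [i / 10 for i in range(0, 151)]

best_squared = min(candidates, key=lambda c: average_loss(squared_loss, c, observations))
best_absolute = min(candidates, key=lambda c: average_loss(absolute_loss, c, observations))

print(best_squared)   # the grid point closest to the sample mean
print(best_absolute)  # the grid point closest to the sample median
```

The choice of loss changes which estimate is considered best: on this data, squared loss is pulled toward the outlier while absolute loss is not.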

Mathematical Optimization

Mathematical optimization (alternatively spelled ''optimisation'') or mathematical programming is the selection of a best element, with regard to some criteria, from some set of available alternatives. It is generally divided into two subfields: discrete optimization and continuous optimization. Optimization problems arise in all quantitative disciplines from computer science and engineering to operations research and economics, and the development of solution methods has been of interest in mathematics for centuries. In the more general approach, an optimization problem consists of maximizing or minimizing a real function by systematically choosing input values from within an allowed set and computing the value of the function. The generalization of optimization theory and techniques to other formulations constitutes a large area of applied mathematics.

Optimization problems

Opti ...
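
The split between discrete and continuous optimization can be sketched with two small minimizers. Both helper names are hypothetical, and the continuous one uses ternary search, which is only valid under the stated assumption that the function is unimodal on the interval.

```python
def argmin_discrete(f, feasible):
    # Discrete case: evaluate f on every allowed input and keep the best one.
    return min(feasible, key=f)

def argmin_continuous(f, lo, hi, tol=1e-9):
    # Continuous case: ternary search, assuming f is unimodal on [lo, hi].
    while hi - lo > tol:
        m1 = lo + (hi - lo) / 3
        m2 = hi - (hi - lo) / 3
        if f(m1) < f(m2):
            hi = m2  # the minimum cannot lie in (m2, hi]
        else:
            lo = m1  # the minimum cannot lie in [lo, m1)
    return (lo + hi) / 2

def objective(x):
    # Illustrative real function to be minimized.
    return (x - 2.3) ** 2

best_integer = argmin_discrete(objective, range(-5, 6))
best_real = argmin_continuous(objective, -5.0, 5.0)

print(best_integer)
print(round(best_real, 6))
```

Restricting the allowed set to integers changes the answer even though the function is the same, which is why the two subfields use different solution methods.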

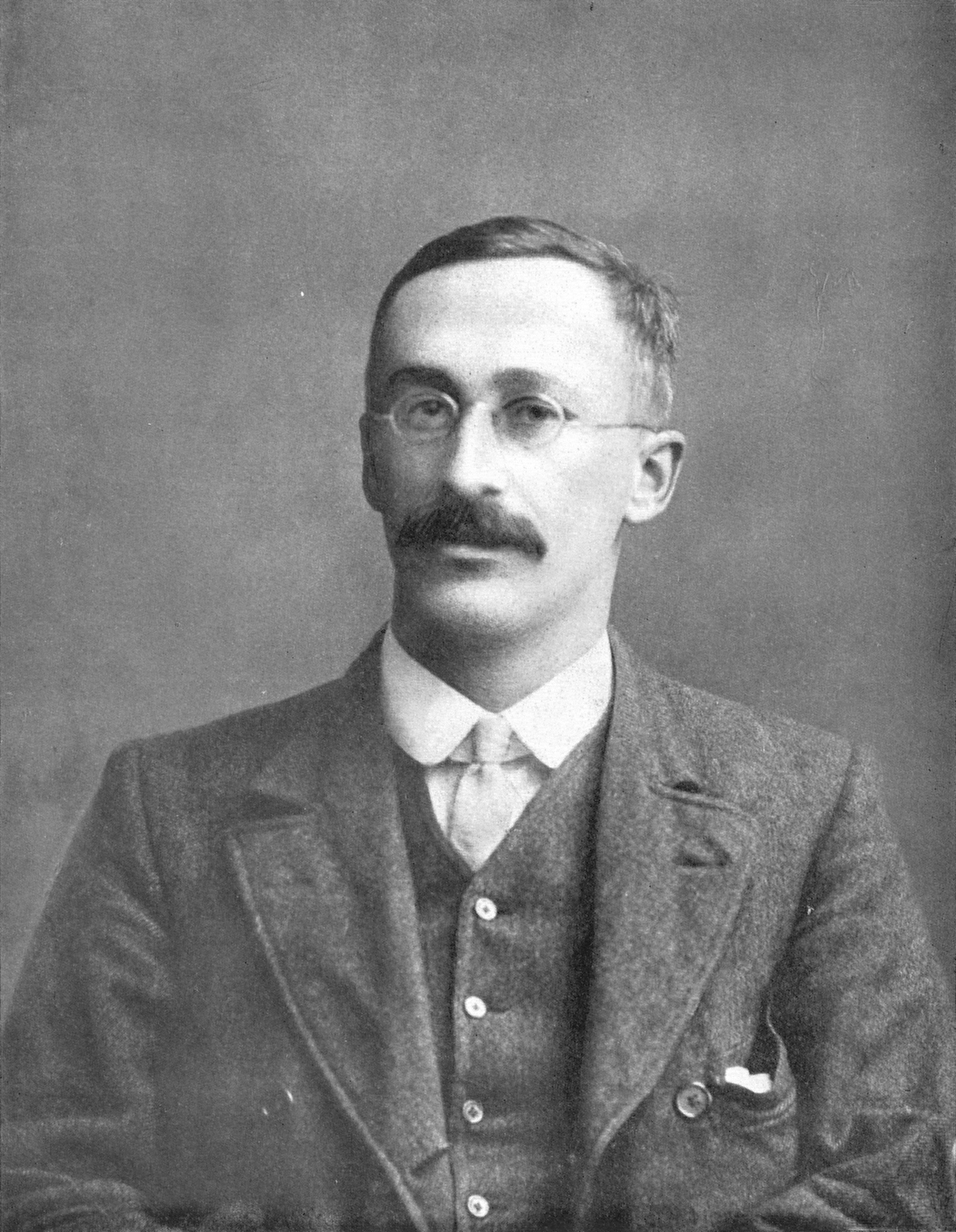

Harald Cramér

Harald Cramér (25 September 1893 – 5 October 1985) was a Swedish mathematician, actuary, and statistician, specializing in mathematical statistics and probabilistic number theory. John Kingman described him as "one of the giants of statistical theory" (Kingman 1986, p. 186).

Biography

Early life

Harald Cramér was born in Stockholm, Sweden on 25 September 1893. Cramér remained close to Stockholm for most of his life. He entered Stockholm University as an undergraduate in 1912, where he studied mathematics and chemistry. During this period, he was a research assistant under the famous chemist Hans von Euler-Chelpin, with whom he published his first five articles from 1913 to 1914. Following his lab experience, he began to focus solely on mathematics. He eventually began his doctoral studies in mathematics, which were supervised by Marcel Riesz at Stockholm University. Also influenced by G. H. Hardy, Cramér's research led to a PhD in 1917 for his thesi ...

Design Of Experiments

The design of experiments (DOE), also known as experiment design or experimental design, is the design of any task that aims to describe and explain the variation of information under conditions that are hypothesized to reflect the variation. The term is generally associated with experiments in which the design introduces conditions that directly affect the variation, but may also refer to the design of quasi-experiments, in which natural conditions that influence the variation are selected for observation. In its simplest form, an experiment aims at predicting the outcome by introducing a change of the preconditions, which is represented by one or more independent variables, also referred to as "input variables" or "predictor variables." The change in one or more independent variables is generally hypothesized to result in a change in one or more dependent variables, also referred to as "output variables" or "response variables." The experimental design may also identify ...

T-test

Student's ''t''-test is a statistical test used to test whether the difference between the response of two groups is statistically significant or not. It is any statistical hypothesis test in which the test statistic follows a Student's ''t''-distribution under the null hypothesis. It is most commonly applied when the test statistic would follow a normal distribution if the value of a scaling term in the test statistic were known (typically, the scaling term is unknown and is therefore a nuisance parameter). When the scaling term is estimated based on the data, the test statistic, under certain conditions, follows a Student's ''t''-distribution. The ''t''-test's most common application is to test whether the means of two populations are significantly different. In many cases, a ''Z''-test will yield very similar results to a ''t''-test because the latter converges to the fo ...
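
A minimal sketch of the two-sample case follows, assuming the equal-variance (pooled) form of the statistic; the function name and the sample data are hypothetical. It returns only the statistic and its degrees of freedom, since the Python standard library has no ''t''-distribution with which to compute a p-value.

```python
from math import sqrt
from statistics import mean, variance

def pooled_t_statistic(a, b):
    # Two-sample t statistic, assuming both groups share one unknown variance.
    na, nb = len(a), len(b)
    # The scaling term is estimated from the data: a pooled sample variance.
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    t = (mean(a) - mean(b)) / sqrt(pooled_var * (1 / na + 1 / nb))
    degrees_of_freedom = na + nb - 2
    return t, degrees_of_freedom

# Hypothetical responses of two groups.
group_a = [5.1, 4.9, 5.6, 5.8, 6.0]
group_b = [4.1, 4.5, 4.0, 4.8, 4.6]

t, df = pooled_t_statistic(group_a, group_b)
print(f"t = {t:.3f} with {df} degrees of freedom")
```

The statistic would then be compared against a Student's ''t''-distribution with the returned degrees of freedom, for example through a statistical table or a library that provides that distribution.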

Statistic

A statistic (singular) or sample statistic is any quantity computed from values in a sample which is considered for a statistical purpose. Statistical purposes include estimating a population parameter, describing a sample, or evaluating a hypothesis. The average (or mean) of sample values is a statistic. The term statistic is used both for the function (e.g., a calculation method of the average) and for the value of the function on a given sample (e.g., the result of the average calculation). When a statistic is being used for a specific purpose, it may be referred to by a name indicating its purpose. When a statistic is used for estimating a population parameter, the statistic is called an ''estimator''. A population parameter is any characteristic of a population under study, but when it is not feasible to directly measure the value of a population parameter, statistical methods are used to infer the likely value of the parameter on the basis of a statistic computed from a s ...
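
The two senses of "statistic" (the function and its value on a sample) can be shown side by side. The simulated population, the seed, and the names below are assumptions made for the sake of the example.

```python
import random
from statistics import mean

def sample_mean(sample):
    # The statistic as a function: a rule for computing a quantity from a sample.
    return mean(sample)

rng = random.Random(0)  # fixed seed so the sketch is repeatable

# Hypothetical population; in practice it is usually too large to measure directly.
population = [rng.gauss(50, 10) for _ in range(10_000)]
parameter = mean(population)  # the population parameter being estimated

sample = rng.sample(population, 100)
estimate = sample_mean(sample)  # the statistic as a value, used here as an estimator

print(f"population mean: {parameter:.2f}")
print(f"sample mean:     {estimate:.2f}")
```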

Variance

In probability theory and statistics, variance is the expected value of the squared deviation from the mean of a random variable. The standard deviation (SD) is obtained as the square root of the variance. Variance is a measure of dispersion, meaning it is a measure of how far a set of numbers is spread out from their average value. It is the second central moment of a distribution, and the covariance of the random variable with itself, and it is often represented by \sigma^2, s^2, \operatorname{Var}(X), V(X), or \mathbb{V}(X). An advantage of variance as a measure of dispersion is that it is more amenable to algebraic manipulation than other measures of dispersion such as the expected absolute deviation; for example, the variance of a sum of uncorrelated random variables is equal to the sum of their variances. A disadvantage of the variance for practical applications is that, unlike the standard deviation, its units differ from the random variable, which is why the standard devi ...
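
The additivity property can be checked exactly on a small case. The sketch below uses two independent fair dice, chosen purely as an illustration, and exact fractions so that no rounding is involved; the helper names are hypothetical.

```python
from fractions import Fraction
from itertools import product

def expectation(dist):
    # dist maps each value of the random variable to its probability.
    return sum(value * prob for value, prob in dist.items())

def variance(dist):
    # Expected value of the squared deviation from the mean.
    mu = expectation(dist)
    return sum((value - mu) ** 2 * prob for value, prob in dist.items())

# One fair six-sided die.
die = {face: Fraction(1, 6) for face in range(1, 7)}

# Distribution of the sum of two independent (hence uncorrelated) dice.
sum_of_two = {}
for (x, px), (y, py) in product(die.items(), repeat=2):
    sum_of_two[x + y] = sum_of_two.get(x + y, 0) + px * py

print(variance(die))         # 35/12
print(variance(sum_of_two))  # 35/6
```

The variance of the sum, 35/6, is exactly twice the variance of a single die, 35/12, as the property predicts.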

Least Squares

The method of least squares is a mathematical optimization technique that aims to determine the best fit function by minimizing the sum of the squares of the differences between the observed values and the predicted values of the model. The method is widely used in areas such as regression analysis, curve fitting and data modeling. The least squares method can be categorized into linear and nonlinear forms, depending on the relationship between the model parameters and the observed data. The method was first proposed by Adrien-Marie Legendre in 1805 and further developed by Carl Friedrich Gauss.

History

Founding

The method of least squares grew out of the fields of astronomy and geodesy, as scientists and mathematicians sought to provide solutions to the challenges of navigating the Earth's oceans during the Age of Discovery. The accurate description of the behavior of celestial bodies was the key to enabling ships to sail in open seas, where sailors could no longer rely on la ...
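
For the linear case with a single predictor, the minimizing line has a closed form. The sketch below assumes a straight-line model y = slope * x + intercept; the function names and the data points are made up for illustration.

```python
def fit_line(xs, ys):
    # Ordinary least squares for the model y = slope * x + intercept.
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

def sum_of_squared_residuals(xs, ys, slope, intercept):
    # The quantity that least squares minimizes.
    return sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))

# Hypothetical noisy observations lying roughly on a line.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.1, 2.9, 5.2, 6.8, 9.1]

slope, intercept = fit_line(xs, ys)
fitted_error = sum_of_squared_residuals(xs, ys, slope, intercept)
# Any other line, such as this perturbed one, should have a larger error.
perturbed_error = sum_of_squared_residuals(xs, ys, slope + 0.1, intercept)

print(f"slope = {slope:.3f}, intercept = {intercept:.3f}")
print(fitted_error < perturbed_error)
```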

Quadratic Function

In mathematics, a quadratic function of a single variable is a function of the form :f(x)=ax^2+bx+c,\quad a \ne 0, where x is its variable, and a, b, and c are coefficients. The expression ax^2+bx+c, especially when treated as an object in itself rather than as a function, is a quadratic polynomial, a polynomial of degree two. In elementary mathematics a polynomial and its associated polynomial function are rarely distinguished and the terms ''quadratic function'' and ''quadratic polynomial'' are nearly synonymous and often abbreviated as ''quadratic''. The graph of a real single-variable quadratic function is a parabola. If a quadratic function is equated with zero, then the result is a quadratic equation. The solutions of a quadratic equation are the zeros (or ''roots'') of the corresponding quadratic function, of which ...
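
The zeros and the turning point of the parabola can be computed directly from the coefficients. This is a small sketch with hypothetical function names; it uses complex arithmetic so that quadratics with no real roots are also handled.

```python
import cmath

def quadratic_roots(a, b, c):
    # Zeros of f(x) = a*x^2 + b*x + c via the quadratic formula.
    if a == 0:
        raise ValueError("a must be nonzero for a quadratic function")
    root_of_discriminant = cmath.sqrt(b * b - 4 * a * c)
    return ((-b + root_of_discriminant) / (2 * a),
            (-b - root_of_discriminant) / (2 * a))

def vertex(a, b, c):
    # Turning point of the parabola y = a*x^2 + b*x + c.
    x = -b / (2 * a)
    return x, a * x * x + b * x + c

print(quadratic_roots(1, -3, 2))  # two real roots, returned as complex numbers
print(quadratic_roots(1, 0, 1))   # a complex-conjugate pair
print(vertex(1, -3, 2))
```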

Regret (Decision Theory)

In decision theory, regret aversion (or anticipated regret) describes how the human emotional response of regret can influence decision-making under uncertainty. When individuals make choices without complete information, they often experience regret if they later discover that a different choice would have produced a better outcome. This regret can be quantified as the difference in value between the actual decision made and what would have been the optimal decision in hindsight. Unlike traditional models that consider regret as merely a post-decision emotional response, the theory of regret aversion proposes that decision-makers actively anticipate potential future regret and incorporate this anticipation into their current decision-making process. This anticipation can lead individuals to make choices specifically designed to minimize the possibility of experiencing regret later, even if those choices are not optimal from a purely probabilistic expected-value perspective. Regre ...
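
The quantified form of regret (best payoff in hindsight minus the payoff actually received) can be tabulated for a small decision problem. The payoff table below is invented for illustration, and the minimax-regret rule used to pick an action is one possible way to act on anticipated regret, chosen here as an assumption rather than taken from the entry.

```python
# Hypothetical payoffs: action -> state of the world -> value received.
payoffs = {
    "stocks": {"boom": 12, "flat": 4, "bust": -6},
    "bonds":  {"boom": 6,  "flat": 5, "bust": 2},
    "cash":   {"boom": 2,  "flat": 2, "bust": 2},
}

def regret_table(payoffs):
    # Regret = best achievable payoff in a state minus the payoff actually received.
    states = list(next(iter(payoffs.values())))
    best = {s: max(row[s] for row in payoffs.values()) for s in states}
    return {action: {s: best[s] - row[s] for s in states}
            for action, row in payoffs.items()}

def minimax_regret(payoffs):
    # Pick the action whose worst-case regret is smallest.
    regrets = regret_table(payoffs)
    return min(regrets, key=lambda action: max(regrets[action].values()))

for action, row in regret_table(payoffs).items():
    print(action, row)
print("minimax-regret choice:", minimax_regret(payoffs))
```

In this table an expected-value maximizer could prefer a different action depending on the probabilities of the states, whereas the minimax-regret rule ignores probabilities and looks only at the worst-case regret of each action.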

Minimax

Minimax (sometimes Minmax, MM or saddle point) is a decision rule used in artificial intelligence, decision theory, combinatorial game theory, statistics, and philosophy for ''minimizing'' the possible loss for a worst-case (''max''imum loss) scenario. When dealing with gains, it is referred to as "maximin", to maximize the minimum gain. Originally formulated for several-player zero-sum game theory, covering both the cases where players take alternate moves and those where they make simultaneous moves, it has also been extended to more complex games and to general decision-making in the presence of uncertainty.

Game theory

In general games

The maximin value is the highest value that the player can be sure to get without knowing the actions of the other players; equivalently, it is the lowest value the other players can force the player to receive when they know the player's action. Its formal definition is: :\underline{v_i} = \max_{a_i} \min_{a_{-i}} v_i(a_i, a_{-i}) W ...
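
For a two-player game given as a payoff matrix, the maximin and minimax values reduce to a pair of nested `max`/`min` calls. The matrix and function names below are illustrative assumptions; entries are the row player's payoffs, and the column player is assumed to be the only other player.

```python
def maximin_value(payoff):
    # Highest payoff the row player can guarantee: for each own action (row),
    # assume the worst response, then pick the best of those worst cases.
    return max(min(row) for row in payoff)

def minimax_value(payoff):
    # Lowest payoff the column player can hold the row player to: for each
    # column, assume the row player's best reply, then pick the smallest.
    return min(max(column) for column in zip(*payoff))

# Hypothetical payoffs to the row player; rows are its actions.
payoff = [
    [3, -1, 2],
    [1,  0, 1],
    [-2, 4, 0],
]

print(maximin_value(payoff))
print(minimax_value(payoff))
```

The maximin value can never exceed the minimax value; when the two coincide in pure strategies, the game has a saddle point.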