In information retrieval, tf–idf (also TF*IDF, TFIDF, TF–IDF, or Tf–idf), short for term frequency–inverse document frequency, is a numerical statistic that is intended to reflect how important a word is to a document in a collection or corpus. It is often used as a weighting factor in searches of information retrieval, text mining, and user modeling.

The tf–idf value increases proportionally to the number of times a word appears in the document and is offset by the number of documents in the corpus that contain the word, which helps to adjust for the fact that some words appear more frequently in general. tf–idf is one of the most popular term-weighting schemes today. A survey conducted in 2015 showed that 83% of text-based recommender systems in digital libraries use tf–idf.

Variations of the tf–idf weighting scheme are often used by search engines as a central tool in scoring and ranking a document's relevance given a user query. tf–idf can also be used successfully for stop-word filtering in various subject fields, including text summarization and classification.

One of the simplest ranking functions is computed by summing the tf–idf for each query term; many more sophisticated ranking functions are variants of this simple model.
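As a rough illustration of this simplest ranking function, the sketch below scores each document by summing the tf–idf of the query terms. The toy corpus, the whitespace tokenization, and the helper names (`tf`, `idf`, `score`) are assumptions made for the example; it uses relative term frequency and an unsmoothed logarithmic idf, which is only one of several possible choices.

```python
import math
from collections import Counter

# Hypothetical toy corpus; each document is tokenized by whitespace.
docs = {
    "d1": "the brown cow eats the green grass".split(),
    "d2": "the quick brown fox jumps over the lazy dog".split(),
    "d3": "the cow and the calf sleep in the barn".split(),
}

def tf(term, tokens):
    """Relative frequency of `term` within one tokenized document."""
    return Counter(tokens)[term] / len(tokens)

def idf(term, corpus):
    """log(N / n_t); returns 0.0 for a term absent from the whole corpus."""
    n_t = sum(1 for tokens in corpus.values() if term in tokens)
    return math.log(len(corpus) / n_t) if n_t else 0.0

def score(query, corpus):
    """Sum the tf-idf of every query term, for each document."""
    return {
        name: sum(tf(t, tokens) * idf(t, corpus) for t in query)
        for name, tokens in corpus.items()
    }

scores = score("the brown cow".split(), docs)
ranking = sorted(scores, key=scores.get, reverse=True)
```

In this toy corpus "the" occurs in every document, so its idf is zero and it contributes nothing to any score; the document containing both "brown" and "cow" ends up ranked first.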

Motivations

Term frequency

Suppose we have a set of English text documents and wish to rank them by which document is more relevant to the query, "the brown cow". A simple way to start out is by eliminating documents that do not contain all three words "the", "brown", and "cow", but this still leaves many documents. To further distinguish them, we might count the number of times each term occurs in each document; the number of times a term occurs in a document is called its ''term frequency''. However, in the case where the length of documents varies greatly, adjustments are often made (see definition below). The first form of term weighting is due to Hans Peter Luhn (1957), which may be summarized as:

The weight of a term that occurs in a document is simply proportional to the term frequency.

Inverse document frequency

Because the term "the" is so common, term frequency will tend to incorrectly emphasize documents which happen to use the word "the" more frequently, without giving enough weight to the more meaningful terms "brown" and "cow". The term "the" is not a good keyword to distinguish relevant and non-relevant documents and terms, unlike the less common words "brown" and "cow". Hence, an ''inverse document frequency'' factor is incorporated which diminishes the weight of terms that occur very frequently in the document set and increases the weight of terms that occur rarely. Karen Spärck Jones (1972) conceived a statistical interpretation of term specificity called inverse document frequency (idf), which became a cornerstone of term weighting:

The specificity of a term can be quantified as an inverse function of the number of documents in which it occurs.

Definition

1. The tf–idf is the product of two statistics, ''term frequency'' and ''inverse document frequency''. There are various ways for determining the exact values of both statistics.
2. A formula that aims to define the importance of a keyword or phrase within a document or a web page.

Term frequency

Term frequency, $\mathrm{tf}(t,d)$, is the relative frequency of term $t$ within document $d$,

$$\mathrm{tf}(t,d) = \frac{f_{t,d}}{\sum_{t' \in d} f_{t',d}},$$

where $f_{t,d}$ is the ''raw count'' of a term in a document, i.e., the number of times that term $t$ occurs in document $d$. Note the denominator is simply the total number of terms in document $d$ (counting each occurrence of the same term separately). There are various other ways to define term frequency:

* the raw count itself: $\mathrm{tf}(t,d) = f_{t,d}$;
* Boolean "frequencies": $\mathrm{tf}(t,d) = 1$ if $t$ occurs in $d$ and 0 otherwise;
* logarithmically scaled frequency: $\mathrm{tf}(t,d) = \log(1 + f_{t,d})$;
* augmented frequency, to prevent a bias towards longer documents, e.g. raw frequency divided by the raw frequency of the most frequently occurring term in the document:

$$\mathrm{tf}(t,d) = 0.5 + 0.5 \cdot \frac{f_{t,d}}{\max\{f_{t',d} : t' \in d\}}$$

Inverse document frequency

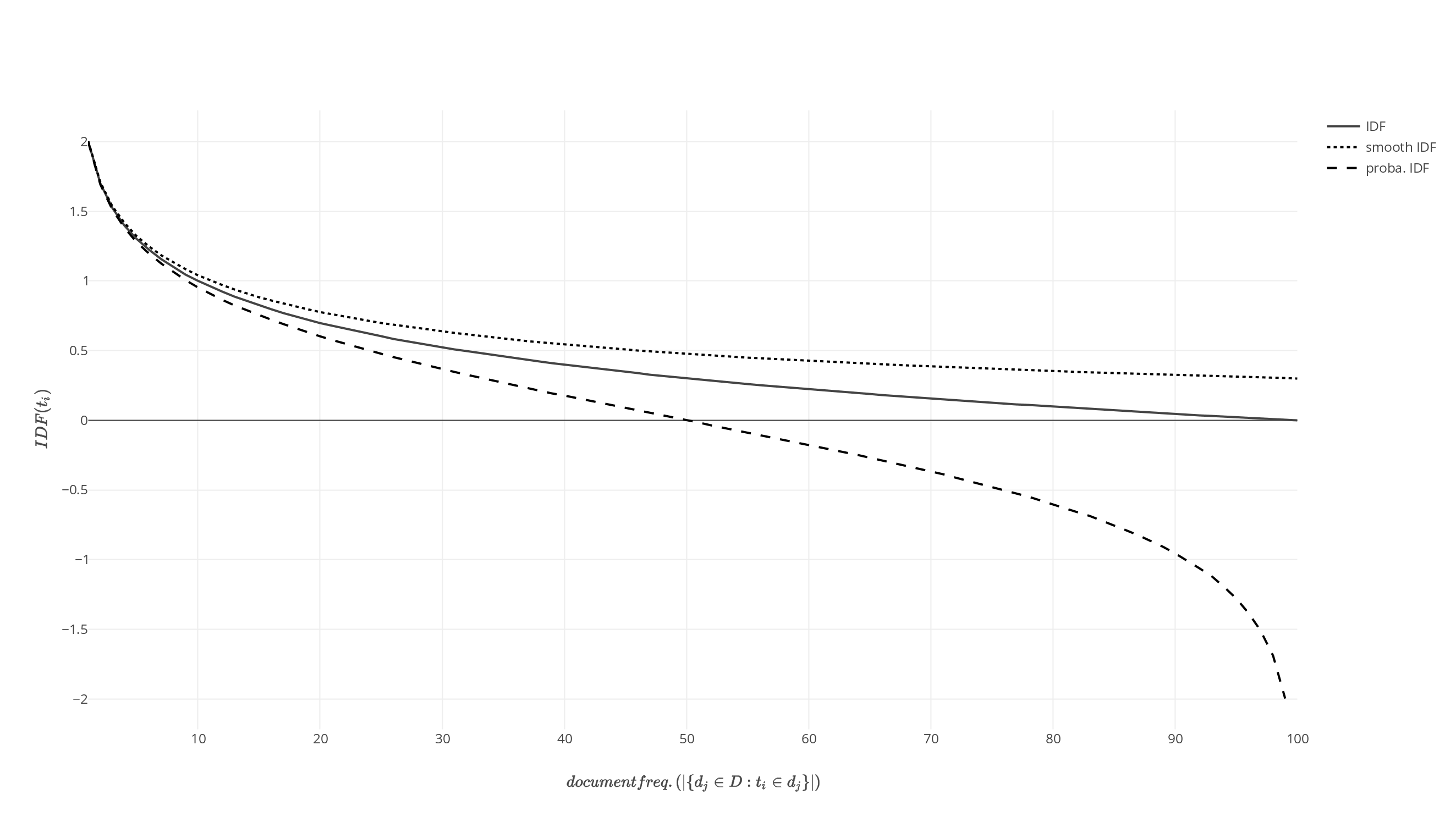

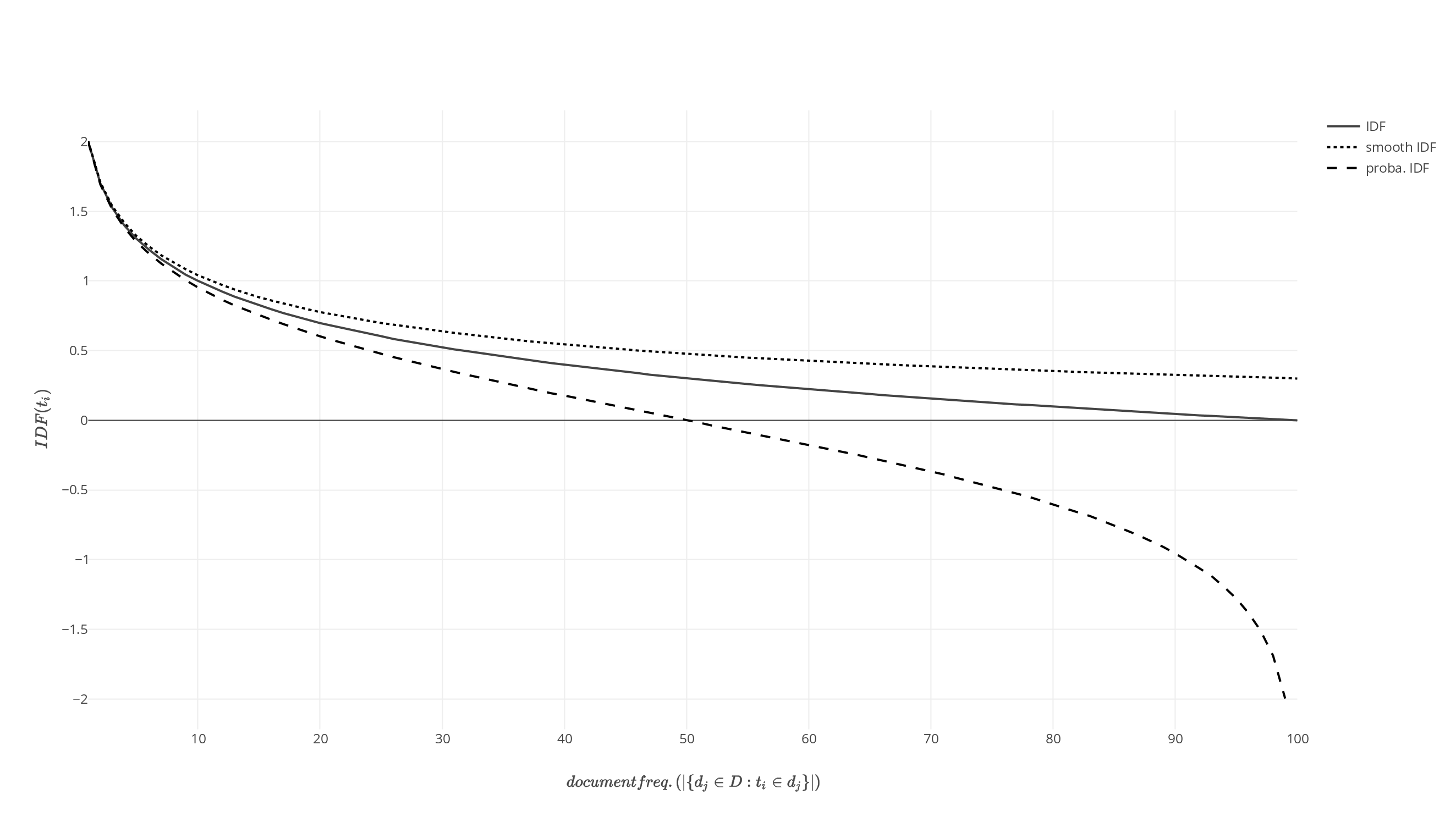

The inverse document frequency is a measure of how much information the word provides, i.e., whether it is common or rare across all documents. It is the logarithmically scaled inverse fraction of the documents that contain the word (obtained by dividing the total number of documents by the number of documents containing the term, and then taking the logarithm of that quotient):

$$\mathrm{idf}(t, D) = \log \frac{N}{|\{d \in D : t \in d\}|}$$

with

* $N$: total number of documents in the corpus, $N = |D|$
* $|\{d \in D : t \in d\}|$: number of documents where the term $t$ appears (i.e., $\mathrm{tf}(t,d) \neq 0$). If the term is not in the corpus, this will lead to a division-by-zero. It is therefore common to adjust the denominator to $1 + |\{d \in D : t \in d\}|$.
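The term-frequency variants and the inverse document frequency described above might be sketched as follows. This is a minimal illustration, not a reference implementation: the function names, the two toy documents, and the `smooth` flag are assumptions, and the 0.5/0.5 constants in the augmented variant are one commonly used choice.

```python
import math
from collections import Counter

def tf_variants(term, tokens):
    """Return several term-frequency weightings for `term` in one
    non-empty tokenized document."""
    counts = Counter(tokens)
    f = counts[term]  # raw count of the term in the document
    return {
        "raw": f,
        "relative": f / len(tokens),
        "boolean": 1 if f > 0 else 0,
        "log": math.log(1 + f),
        # Assumed constants: 0.5 + 0.5 * f / (count of the most frequent term).
        "augmented": 0.5 + 0.5 * f / max(counts.values()),
    }

def idf(term, corpus, smooth=False):
    """log(N / n_t). With smooth=True the denominator becomes 1 + n_t,
    which avoids a ZeroDivisionError for terms absent from the corpus."""
    n_t = sum(1 for tokens in corpus if term in tokens)
    return math.log(len(corpus) / (1 + n_t if smooth else n_t))

# Hypothetical two-document corpus, tokenized by whitespace.
corpus = [
    "this is a a sample".split(),
    "this is another another example example example".split(),
]
weights = tf_variants("example", corpus[1])
idf_example = idf("example", corpus)
idf_unseen = idf("unseen", corpus, smooth=True)
```

Note that with the adjusted denominator, a term that occurs in every document gets a ratio below 1 and therefore a slightly negative idf.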

Term frequency–inverse document frequency

Then tf–idf is calculated as

$$\mathrm{tfidf}(t,d,D) = \mathrm{tf}(t,d) \cdot \mathrm{idf}(t,D)$$

A high weight in tf–idf is reached by a high term frequency (in the given document) and a low document frequency of the term in the whole collection of documents; the weights hence tend to filter out common terms. Since the ratio inside the idf's log function is always greater than or equal to 1, the value of idf (and tf–idf) is greater than or equal to 0. As a term appears in more documents, the ratio inside the logarithm approaches 1, bringing the idf and tf–idf closer to 0.
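The behaviour described here can be seen numerically. The following sketch assumes a hypothetical corpus of 1000 documents and a term whose relative frequency in some document is 0.05; it tabulates the unsmoothed idf and the resulting tf–idf as the term's document frequency grows.

```python
import math

N = 1000  # hypothetical corpus size

def idf(n_t, n_docs=N):
    """Unsmoothed idf for a term that occurs in n_t of n_docs documents."""
    return math.log(n_docs / n_t)

# idf for terms of increasing document frequency
idf_by_df = {n_t: idf(n_t) for n_t in (1, 10, 100, 500, 1000)}

# tf-idf of a term with (assumed) relative frequency 0.05 in some document
tfidf_by_df = {n_t: 0.05 * value for n_t, value in idf_by_df.items()}

for n_t, value in idf_by_df.items():
    print(f"n_t={n_t:>4}  idf={value:.3f}  tf-idf={tfidf_by_df[n_t]:.4f}")
```

Both columns decrease monotonically and reach exactly zero when the term occurs in all 1000 documents.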

Justification of idf

Idf was introduced as "term specificity" by Karen Spärck Jones in a 1972 paper. Although it has worked well as a heuristic, its theoretical foundations were troublesome for at least three decades afterward, with many researchers trying to find information-theoretic justifications for it.

Spärck Jones's own explanation did not propose much theory, aside from a connection to Zipf's law. Attempts have been made to put idf on a probabilistic footing, by estimating the probability that a given document $d$ contains a term $t$ as the relative document frequency,

$$P(t \mid D) = \frac{|\{d \in D : t \in d\}|}{N},$$

so that we can define idf as

$$\mathrm{idf} = -\log P(t \mid D) = \log \frac{1}{P(t \mid D)} = \log \frac{N}{|\{d \in D : t \in d\}|}$$

Namely, the inverse document frequency is the logarithm of "inverse" relative document frequency.

This probabilistic interpretation in turn takes the same form as that of self-information. However, applying such information-theoretic notions to problems in information retrieval leads to problems when trying to define the appropriate event spaces for the required probability distributions: not only documents need to be taken into account, but also queries and terms.

Link with information theory

Both term frequency and inverse document frequency can be formulated in terms of information theory; it helps to understand why their product has a meaning in terms of joint informational content of a document. A characteristic assumption about the distribution $p(d,t)$ is that:

$$p(d \mid t) = \frac{1}{|\{d \in D : t \in d\}|}$$

This assumption and its implications, according to Aizawa: "represent the heuristic that tf–idf employs."

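The conditional entropy of a "randomly chosen" document in the corpus $D$, conditional on the fact that it contains a specific term $t$ (and assuming that all documents have equal probability to be chosen) is:

$$H(\mathcal{D} \mid \mathcal{T} = t) = -\sum_d p_{d|t} \log p_{d|t} = -\log \frac{1}{|\{d \in D : t \in d\}|} = \log \frac{|\{d \in D : t \in d\}|}{|D|} + \log |D| = -\mathrm{idf}(t) + \log |D|$$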

In terms of notation, $\mathcal{D}$ and $\mathcal{T}$ are "random variables" corresponding respectively to drawing a document or a term. The mutual information can be expressed as

$$M(\mathcal{T}; \mathcal{D}) = H(\mathcal{D}) - H(\mathcal{D} \mid \mathcal{T}) = \sum_t p_t \cdot \bigl(H(\mathcal{D}) - H(\mathcal{D} \mid \mathcal{T} = t)\bigr) = \sum_t p_t \cdot \mathrm{idf}(t)$$

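The last step is to expand $p_t$, the unconditional probability to draw a term, with respect to the (random) choice of a document, to obtain:

$$M(\mathcal{T}; \mathcal{D}) = \sum_{t,d} p_{t|d} \cdot p_d \cdot \mathrm{idf}(t) = \sum_{t,d} \mathrm{tf}(t,d) \cdot \frac{1}{|D|} \cdot \mathrm{idf}(t) = \frac{1}{|D|} \sum_{t,d} \mathrm{tf}(t,d) \cdot \mathrm{idf}(t)$$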

This expression shows that summing the tf–idf of all possible terms and documents recovers the mutual information between documents and terms, taking into account all the specificities of their joint distribution. Each tf–idf hence carries the "bit of information" attached to a term–document pair.
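The final expression can be evaluated numerically on a small corpus. The sketch below is an illustration under stated assumptions rather than part of the derivation: it uses a hypothetical two-document corpus, base-2 logarithms so that results are in bits, equally likely documents, and the relative term frequency as the probability of a term given a document. It computes the averaged tf–idf sum next to the mutual information of the resulting joint distribution. The two coincide only to the extent that the characteristic assumption on the probability of a document given a term holds, so a small gap is expected for terms spread unevenly over the documents that contain them.

```python
import math
from collections import Counter

# Hypothetical corpus; documents are assumed equally likely to be drawn.
corpus = [
    "this is a a sample".split(),
    "this is another another example example example".split(),
]
n_docs = len(corpus)
counts = [Counter(tokens) for tokens in corpus]
vocab = set().union(*counts)

def tf(t, d):
    """Relative frequency of term t in the document with index d."""
    return counts[d][t] / len(corpus[d])

def idf(t):
    """Base-2 idf, so the result is measured in bits."""
    n_t = sum(1 for c in counts if t in c)
    return math.log2(n_docs / n_t)

# (1/|D|) * sum over all (t, d) pairs of tf(t, d) * idf(t)
avg_tfidf = sum(tf(t, d) * idf(t) for t in vocab for d in range(n_docs)) / n_docs

# Mutual information of the joint p(t, d) = tf(t, d) / |D|, for comparison.
p_t = {t: sum(tf(t, d) for d in range(n_docs)) / n_docs for t in vocab}
mutual_info = sum(
    (tf(t, d) / n_docs) * math.log2(tf(t, d) / p_t[t])
    for t in vocab for d in range(n_docs) if tf(t, d) > 0
)
```

On this toy corpus the two quantities are close but not identical, because "this" and "is" are not distributed uniformly across the two documents.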

Example of tf–idf

Suppose that we have term count tables of a corpus consisting of only two documents:

Document 1: "this" 1, "is" 1, "a" 2, "sample" 1
Document 2: "this" 1, "is" 1, "another" 2, "example" 3

The calculation of tf–idf for the term "this" is performed as follows. In its raw frequency form, tf is just the frequency of "this" for each document. In each document, the word "this" appears once; but as document 2 has more words, its relative frequency is smaller.

$$\mathrm{tf}(\text{this}, d_1) = \frac{1}{5} = 0.2$$

$$\mathrm{tf}(\text{this}, d_2) = \frac{1}{7} \approx 0.14$$

An idf is constant per corpus, and accounts for the ratio of documents that include the word "this". In this case, we have a corpus of two documents and all of them include the word "this".

$$\mathrm{idf}(\text{this}, D) = \log\left(\frac{2}{2}\right) = 0$$

So tf–idf is zero for the word "this", which implies that the word is not very informative as it appears in all documents.

$$\mathrm{tfidf}(\text{this}, d_1, D) = 0.2 \times 0 = 0$$

$$\mathrm{tfidf}(\text{this}, d_2, D) = 0.14 \times 0 = 0$$

The word "example" is more interesting: it occurs three times, but only in the second document:

$$\mathrm{tf}(\text{example}, d_1) = \frac{0}{5} = 0$$

$$\mathrm{tf}(\text{example}, d_2) = \frac{3}{7} \approx 0.429$$

$$\mathrm{idf}(\text{example}, D) = \log\left(\frac{2}{1}\right) = 0.301$$

Finally,

$$\mathrm{tfidf}(\text{example}, d_1, D) = 0 \times 0.301 = 0$$

$$\mathrm{tfidf}(\text{example}, d_2, D) = 0.429 \times 0.301 \approx 0.129$$

(using the base 10 logarithm).
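The calculation can be reproduced in a few lines. The sketch assumes the following term counts, chosen to agree with the statements in the text ("this" once in each document, "example" three times in the second document only): document 1 has this 1, is 1, a 2, sample 1; document 2 has this 1, is 1, another 2, example 3. It uses relative term frequency and a base-10 logarithm; the function names are illustrative.

```python
import math

# Assumed term counts for the two documents of the example.
doc1 = {"this": 1, "is": 1, "a": 2, "sample": 1}
doc2 = {"this": 1, "is": 1, "another": 2, "example": 3}
corpus = [doc1, doc2]

def tf(term, doc):
    """Raw count divided by the total number of terms in the document."""
    return doc.get(term, 0) / sum(doc.values())

def idf(term, docs):
    """Base-10 log of (number of documents / documents containing term)."""
    n_t = sum(1 for doc in docs if term in doc)
    return math.log10(len(docs) / n_t)

def tfidf(term, doc, docs):
    """Product of term frequency and inverse document frequency."""
    return tf(term, doc) * idf(term, docs)

for term in ("this", "example"):
    for i, doc in enumerate(corpus, start=1):
        print(f"tfidf({term!r}, d{i}) = {tfidf(term, doc, corpus):.3f}")
```

Under these assumptions, only "example" in the second document receives a non-zero weight (about 0.129).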

Beyond terms

The idea behind tf–idf also applies to entities other than terms. In 1998, the concept of idf was applied to citations. The authors argued that "if a very uncommon citation is shared by two documents, this should be weighted more highly than a citation made by a large number of documents". In addition, tf–idf was applied to "visual words" with the purpose of conducting object matching in videos, and to entire sentences. However, tf–idf did not prove to be more effective than a plain tf scheme (without idf) in all cases. When tf–idf was applied to citations, researchers could find no improvement over a simple citation-count weight that had no idf component.

Derivatives

A number of term-weighting schemes have been derived from tf–idf. One of them is TF–PDF (term frequency * proportional document frequency). TF–PDF was introduced in 2001 in the context of identifying emerging topics in the media. The PDF component measures the difference of how often a term occurs in different domains. Another derivative is TF–IDuF. In TF–IDuF, idf is not calculated based on the document corpus that is to be searched or recommended. Instead, idf is calculated on users' personal document collections. The authors report that TF–IDuF was equally effective as tf–idf but could also be applied in situations when, e.g., a user modeling system has no access to a global document corpus.

See also

* Word embedding
* Kullback–Leibler divergence
* Latent Dirichlet allocation
* Latent semantic analysis
* Mutual information
* Noun phrase
* Okapi BM25
* PageRank
* Vector space model
* Word count
* SMART Information Retrieval System

External links and suggested reading

* Gensim is a Python library for vector space modeling and includes tf–idf weighting.
* Anatomy of a search engine
* tf–idf and related definitions as used in Lucene
* TfidfTransformer in scikit-learn
* Text to Matrix Generator (TMG): MATLAB toolbox that can be used for various tasks in text mining (TM), specifically i) indexing, ii) retrieval, iii) dimensionality reduction, iv) clustering, v) classification. The indexing step offers the user the ability to apply local and global weighting methods, including tf–idf.
* Term-frequency explained: explanation of term-frequency