Natural user interface

In computing, a natural user interface (NUI) or natural interface is a user interface that is effectively invisible, and remains invisible as the user continuously learns increasingly complex interactions. The word "natural" is used because most computer interfaces use artificial control devices whose operation has to be learned. Examples include voice assistants such as Alexa and Siri, touch and multitouch interactions on today's mobile phones and tablets, and touch interfaces invisibly integrated into textiles and furniture.

An NUI relies on a user being able to quickly transition from novice to expert. While the interface requires learning, that learning is eased through design which gives the user the feeling that they are instantly and continuously successful. Thus, "natural" refers to a goal in the user experience – that the interaction comes naturally, while interacting with the technology, rather than that the interface itself is natural. This is contrasted with the idea of an intuitive interface, referring to one that can be used without previous learning.

Several design strategies have been proposed which have met this goal to varying degrees of success. One strategy is the use of a "reality user interface" (RUI), also known as "reality-based interface" (RBI) methods. One example of an RUI strategy is to use a wearable computer to render real-world objects "clickable", i.e. so that the wearer can click on any everyday object to make it function as a hyperlink, thus merging cyberspace and the real world. Because the term "natural" is evocative of the "natural world", RBIs are often confused with NUIs, when in fact they are merely one means of achieving them.

One example of a strategy for designing a NUI not based in RBI is the strict limiting of functionality and customization, so that users have very little to learn in the operation of a device. Provided that the default capabilities match the user's goals, the interface is effortless to use. This is an overarching design strategy in Apple's iOS. Because this design is coincident with a direct-touch display, non-designers commonly misattribute the effortlessness of interacting with the device to that multi-touch display, and not to the design of the software where it actually resides.

History

In the 1990s, Steve Mann developed a number of user-interface strategies using natural interaction with the real world as an alternative to a command-line interface (CLI) or graphical user interface (GUI). Mann referred to this work as "natural user interfaces", "Direct User Interfaces", and "metaphor-free computing". Mann's EyeTap technology typically embodies an example of a natural user interface. Mann's use of the word "natural" refers both to action that comes naturally to human users and to the use of nature itself, i.e. physics (natural philosophy) and the natural environment. A good example of an NUI in both these senses is the hydraulophone, especially when it is used as an input device, in which touching a natural element (water) becomes a way of inputting data. More generally, a class of musical instruments called "physiphones", so named from the Greek words "physika", "physikos" (nature) and "phone" (sound), has also been proposed as "nature-based user interfaces".

In 2006, Christian Moore established an open research community with the goal of expanding discussion and development related to NUI technologies. In a 2008 conference presentation, "Predicting the Past," August de los Reyes, a Principal User Experience Director of Surface Computing at Microsoft, described the NUI as the next evolutionary phase following the shift from the CLI to the GUI. This too is an oversimplification, since NUIs necessarily include visual elements and thus graphical user interfaces. A more accurate description of this concept would be a transition from WIMP to NUI.

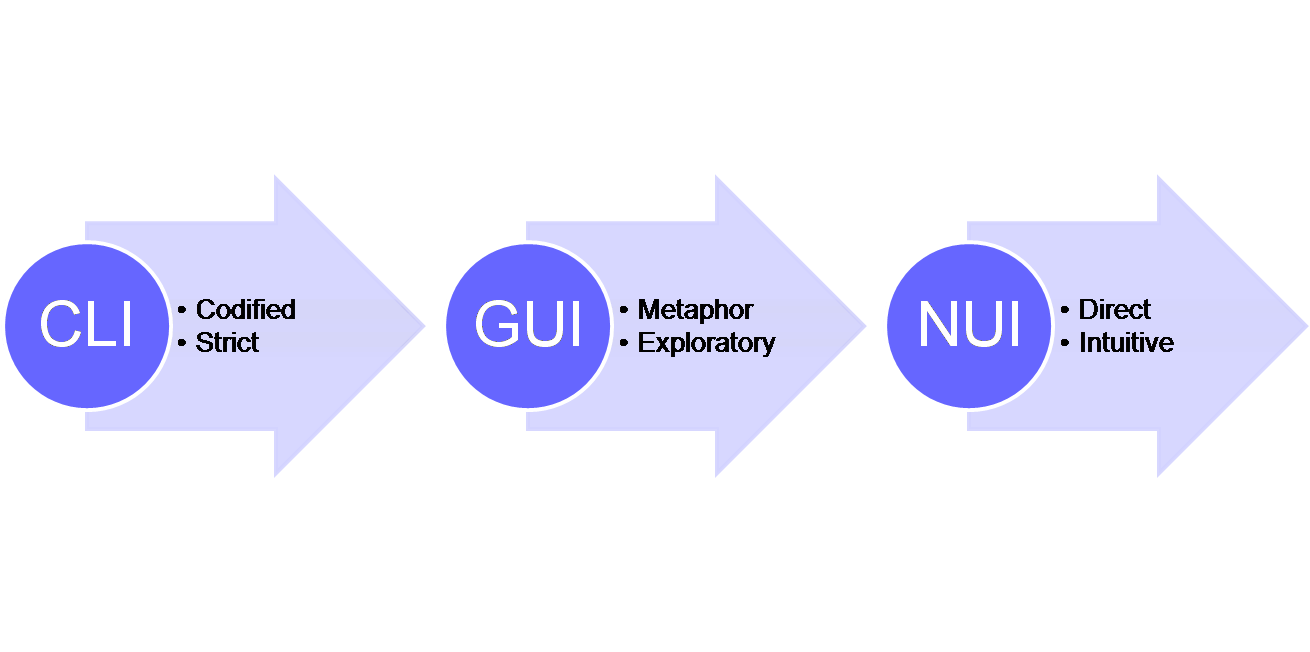

In the CLI, users had to learn an artificial means of input, the keyboard, and a series of codified inputs that had a limited range of responses and a strict command syntax.

Then, when the mouse enabled the GUI, users could more easily learn the mouse movements and actions, and were able to explore the interface much more. The GUI relied on metaphors for interacting with on-screen content or objects. The "desktop" and "drag", for example, are metaphors for a visual interface that is ultimately translated back into the strict codified language of the computer.

An example of the misunderstanding of the term NUI was demonstrated at the Consumer Electronics Show in 2010. "Now a new wave of products is poised to bring natural user interfaces, as these methods of controlling electronics devices are called, to an even broader audience."

In 2010, Microsoft's Bill Buxton reiterated the importance of the NUI within Microsoft Corporation with a video discussing technologies which could be used in creating a NUI, and its future potential.

In 2010, Daniel Wigdor and Dennis Wixon provided an operationalization of building natural user interfaces in their book Brave NUI World. In it, they carefully distinguish between natural user interfaces, the technologies used to achieve them, and reality-based UI.

Early examples

Multi-touch

When Bill Buxton was asked about the iPhone's interface, he responded "Multi-touch technologies have a long history. To put it in perspective, the original work undertaken by my team was done in 1984, the same year that the first Macintosh computer was released, and we were not the first." Multi-touch is a technology which could enable a natural user interface. However, most UI toolkits used to construct interfaces executed with such technology are traditional GUIs.

Examples of interfaces commonly referred to as NUI

Perceptive Pixel

One example is the work done by Jefferson Han on multi-touch interfaces. In a demonstration at TED in 2006, he showed a variety of means of interacting with on-screen content using both direct manipulations and gestures. For example, to shape an on-screen glutinous mass, Han literally "pinches", prods, and pokes it with his fingers. In a GUI for a design application, by contrast, a user would use the metaphor of "tools" to do this, for example by selecting a prod tool, or by selecting two parts of the mass and then applying a "pinch" action to them. Han showed that user interaction could be much more intuitive by doing away with the interaction devices that we are used to and replacing them with a screen capable of detecting a much wider range of human actions and gestures. However, this allows only for a very limited set of interactions which map neatly onto physical manipulation (RBI). Extending the capabilities of the software beyond physical actions requires significantly more design work.

Microsoft PixelSense

Microsoft PixelSense takes similar ideas on how users interact with content, but adds the ability for the device to optically recognize objects placed on top of it. In this way, users can trigger actions on the computer through the same gestures and motions as Jeff Han's touchscreen allowed, but objects also become a part of the control mechanisms. For example, when a user places a wine glass on the table, the computer recognizes it as such and displays content associated with that wine glass. Placing a wine glass on a table maps well onto actions taken with wine glasses and other tables, and thus maps well onto reality-based interfaces. Thus, it could be seen as an entrée to a NUI experience.

3D Immersive Touch

"3D Immersive Touch" is defined as the direct manipulation of 3D virtual environment objects using single or multi-touch surface hardware in multi-user 3D virtual environments. The term was first coined in 2007 to describe and define the 3D natural user interface learning principles associated with Edusim. The Immersive Touch natural user interface now appears to be taking on a broader focus and meaning with the broader adoption of surface and touch-driven hardware such as the iPhone, iPod touch, iPad, and a growing list of other hardware. Apple also seems to have taken a keen interest in "Immersive Touch" 3D natural user interfaces over the past few years. This work builds atop the broad academic base which has studied 3D manipulation in virtual reality environments.

Xbox Kinect

Kinect is a motion-sensing input device by Microsoft for the Xbox 360 video game console and Windows PCs that uses spatial gestures for interaction instead of a game controller. According to Microsoft's page, Kinect is designed for "a revolutionary new way to play: no controller required." Again, because Kinect allows the sensing of the physical world, it shows potential for RBI designs, and thus potentially also for NUI.

See also

* Edusim

* Eye tracking

* Kinetic user interface

* Organic user interface

* Intelligent personal assistant

* Post-WIMP

* Scratch input

* Spatial navigation

* Tangible user interface

* Touch user interface

References

* http://blogs.msdn.com/surface/archive/2009/02/25/what-is-nui.aspx

* https://www.amazon.com/Brave-NUI-World-Designing-Interfaces/dp/0123822319/ref=sr_1_1?ie=UTF8&qid=1329478543&sr=8-1 The book Brave NUI World, from the creators of Microsoft Surface's NUI