|

Complexity

Complexity characterises the behaviour of a system or model whose components interaction, interact in multiple ways and follow local rules, leading to nonlinearity, randomness, collective dynamics, hierarchy, and emergence. The term is generally used to characterize something with many parts where those parts interact with each other in multiple ways, culminating in a higher order of emergence greater than the sum of its parts. The study of these complex linkages at various scales is the main goal of complex systems theory. The intuitive criterion of complexity can be formulated as follows: a system would be more complex if more parts could be distinguished, and if more connections between them existed. Science takes a number of approaches to characterizing complexity; Zayed ''et al.'' reflect many of these. Neil F. Johnson, Neil Johnson states that "even among scientists, there is no unique definition of complexity – and the scientific notion has traditionally been conveyed ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Complex Systems Theory

A complex system is a system composed of many components which may interact with each other. Examples of complex systems are Earth's global climate, organisms, the human brain, infrastructure such as power grid, transportation or communication systems, complex software and electronic systems, social and economic organizations (like cities), an ecosystem, a living cell, and ultimately the entire universe. Complex systems are systems whose behavior is intrinsically difficult to model due to the dependencies, competitions, relationships, or other types of interactions between their parts or between a given system and its environment. Systems that are "complex" have distinct properties that arise from these relationships, such as nonlinearity, emergence, spontaneous order, adaptation, and feedback loops, among others. Because such systems appear in a wide variety of fields, the commonalities among them have become the topic of their independent area of research. In many cases, it ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Computational Complexity Theory

In theoretical computer science and mathematics, computational complexity theory focuses on classifying computational problems according to their resource usage, and relating these classes to each other. A computational problem is a task solved by a computer. A computation problem is solvable by mechanical application of mathematical steps, such as an algorithm. A problem is regarded as inherently difficult if its solution requires significant resources, whatever the algorithm used. The theory formalizes this intuition, by introducing mathematical models of computation to study these problems and quantifying their computational complexity, i.e., the amount of resources needed to solve them, such as time and storage. Other measures of complexity are also used, such as the amount of communication (used in communication complexity), the number of gates in a circuit (used in circuit complexity) and the number of processors (used in parallel computing). One of the roles of computationa ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Complexity Class

In computational complexity theory, a complexity class is a set of computational problems of related resource-based complexity. The two most commonly analyzed resources are time and memory. In general, a complexity class is defined in terms of a type of computational problem, a model of computation, and a bounded resource like time or memory. In particular, most complexity classes consist of decision problems that are solvable with a Turing machine, and are differentiated by their time or space (memory) requirements. For instance, the class P is the set of decision problems solvable by a deterministic Turing machine in polynomial time. There are, however, many complexity classes defined in terms of other types of problems (e.g. counting problems and function problems) and using other models of computation (e.g. probabilistic Turing machines, interactive proof systems, Boolean circuits, and quantum computers). The study of the relationships between complexity classes is a ma ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Kolmogorov Complexity

In algorithmic information theory (a subfield of computer science and mathematics), the Kolmogorov complexity of an object, such as a piece of text, is the length of a shortest computer program (in a predetermined programming language) that produces the object as output. It is a measure of the computational resources needed to specify the object, and is also known as algorithmic complexity, Solomonoff–Kolmogorov–Chaitin complexity, program-size complexity, descriptive complexity, or algorithmic entropy. It is named after Andrey Kolmogorov, who first published on the subject in 1963 and is a generalization of classical information theory. The notion of Kolmogorov complexity can be used to state and prove impossibility results akin to Cantor's diagonal argument, Gödel's incompleteness theorem, and Turing's halting problem. In particular, no program ''P'' computing a lower bound for each text's Kolmogorov complexity can return a value essentially larger than ''P'''s own leng ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Chaos Theory

Chaos theory is an interdisciplinary area of scientific study and branch of mathematics focused on underlying patterns and deterministic laws of dynamical systems that are highly sensitive to initial conditions, and were once thought to have completely random states of disorder and irregularities. Chaos theory states that within the apparent randomness of chaotic complex systems, there are underlying patterns, interconnection, constant feedback loops, repetition, self-similarity, fractals, and self-organization. The butterfly effect, an underlying principle of chaos, describes how a small change in one state of a deterministic nonlinear system can result in large differences in a later state (meaning that there is sensitive dependence on initial conditions). A metaphor for this behavior is that a butterfly flapping its wings in Brazil can cause a tornado in Texas. Small differences in initial conditions, such as those due to errors in measurements or due to rounding errors i ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Algorithmic Information Theory

Algorithmic information theory (AIT) is a branch of theoretical computer science that concerns itself with the relationship between computation and information of computably generated objects (as opposed to stochastically generated), such as strings or any other data structure. In other words, it is shown within algorithmic information theory that computational incompressibility "mimics" (except for a constant that only depends on the chosen universal programming language) the relations or inequalities found in information theory. According to Gregory Chaitin, it is "the result of putting Shannon's information theory and Turing's computability theory into a cocktail shaker and shaking vigorously." Besides the formalization of a universal measure for irreducible information content of computably generated objects, some main achievements of AIT were to show that: in fact algorithmic complexity follows (in the self-delimited case) the same inequalities (except for a constant) tha ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

NP (complexity)

In computational complexity theory, NP (nondeterministic polynomial time) is a complexity class used to classify decision problems. NP is the set of decision problems for which the problem instances, where the answer is "yes", have proofs verifiable in polynomial time by a deterministic Turing machine, or alternatively the set of problems that can be solved in polynomial time by a nondeterministic Turing machine.''Polynomial time'' refers to how quickly the number of operations needed by an algorithm, relative to the size of the problem, grows. It is therefore a measure of efficiency of an algorithm. An equivalent definition of NP is the set of decision problems ''solvable'' in polynomial time by a nondeterministic Turing machine. This definition is the basis for the abbreviation NP; " nondeterministic, polynomial time". These two definitions are equivalent because the algorithm based on the Turing machine consists of two phases, the first of which consists of a guess abou ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

P (complexity)

In computational complexity theory, P, also known as PTIME or DTIME(''n''O(1)), is a fundamental complexity class. It contains all decision problems that can be solved by a deterministic Turing machine using a polynomial amount of computation time, or polynomial time. Cobham's thesis holds that P is the class of computational problems that are "efficiently solvable" or " tractable". This is inexact: in practice, some problems not known to be in P have practical solutions, and some that are in P do not, but this is a useful rule of thumb. Definition A language ''L'' is in P if and only if there exists a deterministic Turing machine ''M'', such that * ''M'' runs for polynomial time on all inputs * For all ''x'' in ''L'', ''M'' outputs 1 * For all ''x'' not in ''L'', ''M'' outputs 0 P can also be viewed as a uniform family of boolean circuits. A language ''L'' is in P if and only if there exists a polynomial-time uniform family of boolean circuits \, such that * For all n \in \m ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Emergence

In philosophy, systems theory, science, and art, emergence occurs when an entity is observed to have properties its parts do not have on their own, properties or behaviors that emerge only when the parts interact in a wider whole. Emergence plays a central role in theories of integrative levels and of complex systems. For instance, the phenomenon of life as studied in biology is an emergent property of chemistry. In philosophy, theories that emphasize emergent properties have been called emergentism. In philosophy Philosophers often understand emergence as a claim about the etiology of a system's properties. An emergent property of a system, in this context, is one that is not a property of any component of that system, but is still a feature of the system as a whole. Nicolai Hartmann (1882–1950), one of the first modern philosophers to write on emergence, termed this a ''categorial novum'' (new category). Definitions This concept of emergence dates from at least the ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Minimum Message Length

Minimum message length (MML) is a Bayesian information-theoretic method for statistical model comparison and selection. It provides a formal information theory restatement of Occam's Razor: even when models are equal in their measure of fit-accuracy to the observed data, the one generating the most concise ''explanation'' of data is more likely to be correct (where the ''explanation'' consists of the statement of the model, followed by the lossless encoding of the data using the stated model). MML was invented by Chris Wallace, first appearing in the seminal paper "An information measure for classification". MML is intended not just as a theoretical construct, but as a technique that may be deployed in practice. It differs from the related concept of Kolmogorov complexity in that it does not require use of a Turing-complete language to model data. Definition Shannon's ''A Mathematical Theory of Communication'' (1948) states that in an optimal code, the message length (in binary) o ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Simplicity

Simplicity is the state or quality of being simple. Something easy to understand or explain seems simple, in contrast to something complicated. Alternatively, as Herbert A. Simon suggests, something is simple or complex depending on the way we choose to describe it. In some uses, the label "simplicity" can imply beauty, purity, or clarity. In other cases, the term may suggest a lack of nuance or complexity relative to what is required. The concept of simplicity is related to the field of epistemology and philosophy of science (e.g., in Occam's razor). Religions also reflect on simplicity with concepts such as divine simplicity. In human lifestyles, simplicity can denote freedom from excessive possessions or distractions, such as having a simple living style. Some other information In some contextual uses, "simplicity" can imply beauty, purity, or clarity. In other cases, the term may have negative connotations, as when referring to people as simpletons. In philosophy of sci ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

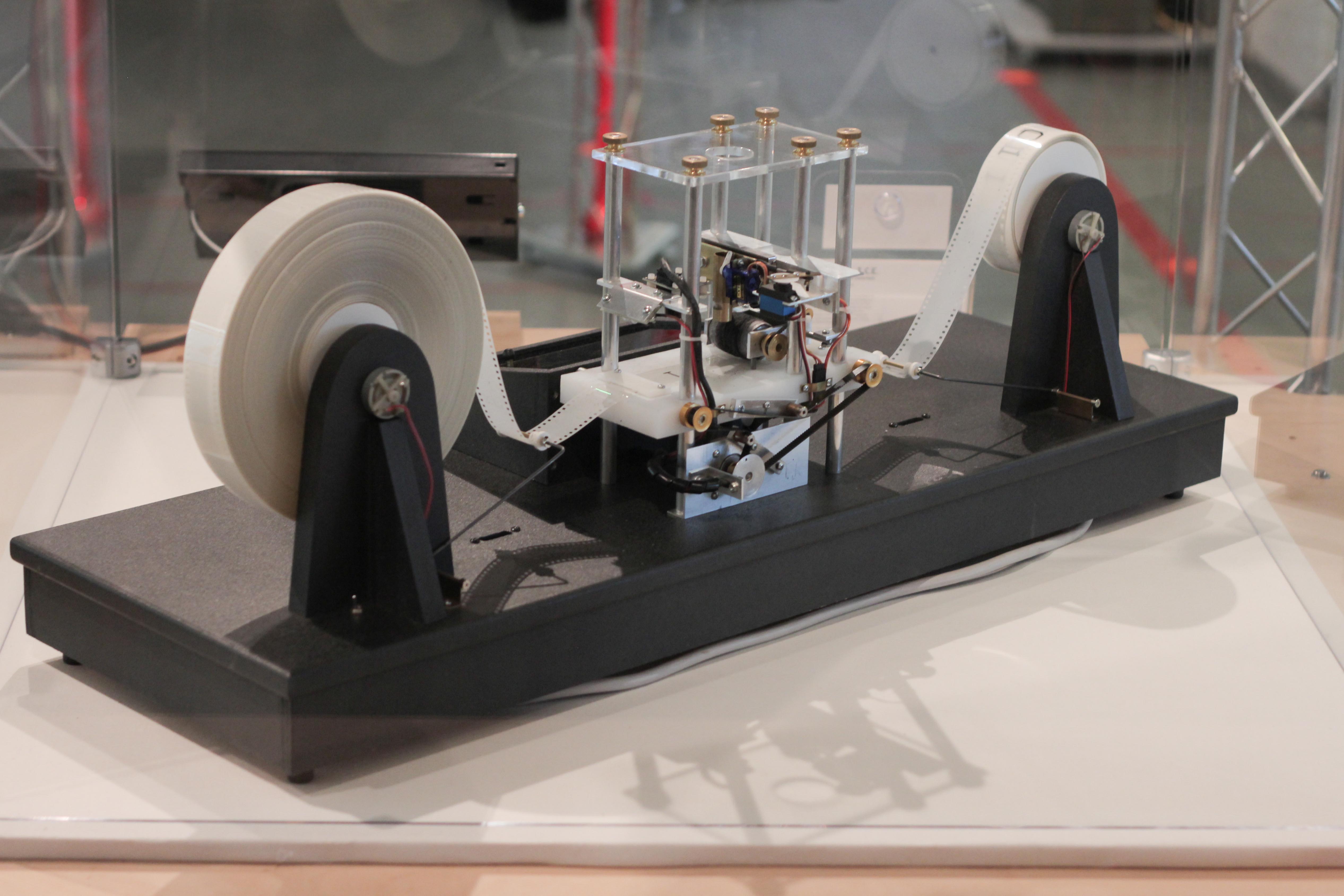

Turing Machine

A Turing machine is a mathematical model of computation describing an abstract machine that manipulates symbols on a strip of tape according to a table of rules. Despite the model's simplicity, it is capable of implementing any computer algorithm. The machine operates on an infinite memory tape divided into discrete cells, each of which can hold a single symbol drawn from a finite set of symbols called the alphabet of the machine. It has a "head" that, at any point in the machine's operation, is positioned over one of these cells, and a "state" selected from a finite set of states. At each step of its operation, the head reads the symbol in its cell. Then, based on the symbol and the machine's own present state, the machine writes a symbol into the same cell, and moves the head one step to the left or the right, or halts the computation. The choice of which replacement symbol to write and which direction to move is based on a finite table that specifies what to do for each comb ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |