
Error detection and correction

In computing, telecommunication, information theory, and coding theory, an error correction code, sometimes called an error correcting code (ECC), is used for controlling errors in data over unreliable or noisy communication channels. The central idea is that the sender encodes the message with redundant information in the form of an ECC. The redundancy allows the receiver to detect a limited number of errors that may occur anywhere in the message, and often to correct these errors without retransmission. The American mathematician Richard Hamming pioneered this field in the 1940s and invented the first error-correcting code in 1950: the Hamming (7,4) code.
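As an illustration of how redundancy lets a receiver correct an error, the following is a minimal sketch of a Hamming (7,4) encoder and syndrome decoder. The bit layout (parity bits at positions 1, 2 and 4) is the common textbook convention and is assumed here; the function names are hypothetical.

```python
def hamming74_encode(data):
    """Encode 4 data bits into 7 bits; parity bits sit at positions 1, 2 and 4."""
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4  # covers positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # covers positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(codeword):
    """Correct at most one flipped bit; return (data bits, error position or 0)."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    # The syndrome, read as a binary number, is the 1-based position of the error.
    position = s1 + 2 * s2 + 4 * s3
    if position:
        c[position - 1] ^= 1
    return [c[2], c[4], c[5], c[6]], position


sent = hamming74_encode([1, 0, 1, 1])
corrupted = list(sent)
corrupted[4] ^= 1  # flip the bit at position 5
recovered, where = hamming74_decode(corrupted)
print(recovered, where)
```

Two or more flipped bits in the same 7-bit word exceed what this code can correct and would be decoded to the wrong data.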

ECC contrasts with error detection in that errors that are encountered can be corrected, not simply detected. The advantage is that a system using ECC does not require a reverse channel to request retransmission of data when an error occurs. The downside is that a fixed overhead is added to the message, thereby requiring a higher forward-channel bandwidth. ECC is therefore applied in situations where retransmissions are costly or impossible, such as one-way communication links and when transmitting to multiple receivers in multicast. Long-latency connections also benefit; in the case of a satellite orbiting Uranus, retransmission due to errors can create a delay of five hours. ECC information is usually added to mass storage devices to enable recovery of corrupted data, is widely used in modems, and is used on systems where the primary memory is ECC memory.

ECC processing in a receiver may be applied to a digital bitstream or in the demodulation of a digitally modulated carrier. For the latter, ECC is an integral part of the initial analog-to-digital conversion in the receiver. The Viterbi decoder implements a soft-decision algorithm to demodulate digital data from an analog signal corrupted by noise. Many ECC encoders/decoders can also generate a bit-error rate (BER) signal, which can be used as feedback to fine-tune the analog receiving electronics.

The maximum fraction of errors or of missing bits that can be corrected is determined by the design of the ECC, so different error correcting codes are suitable for different conditions. In general, a stronger code induces more redundancy that needs to be transmitted using the available bandwidth, which reduces the effective bit-rate while improving the received effective signal-to-noise ratio. The noisy-channel coding theorem of Claude Shannon can be used to compute the maximum achievable communication bandwidth for a given maximum acceptable error probability. This establishes bounds on the theoretical maximum information transfer rate of a channel with some given base noise level. However, the proof is not constructive, and hence gives no insight into how to build a capacity-achieving code. After years of research, some advanced ECC systems as of 2016 come very close to the theoretical maximum.

Forward error correction

In telecommunication, information theory, and coding theory, forward error correction (FEC) or channel coding is a technique used for controlling errors in data transmission over unreliable or noisy communication channels. The central idea is that the sender encodes the message in a redundant way, most often by using an ECC.

The redundancy allows the receiver to detect a limited number of errors that may occur anywhere in the message, and often to correct these errors without re-transmission. FEC gives the receiver the ability to correct errors without needing a reverse channel to request re-transmission of data, but at the cost of a fixed, higher forward channel bandwidth. FEC is therefore applied in situations where re-transmissions are costly or impossible, such as one-way communication links and when transmitting to multiple receivers in multicast. FEC information is usually added to mass storage (magnetic, optical and solid state/flash based) devices to enable recovery of corrupted data, is widely used in modems, is used on systems where the primary memory is ECC memory, and is used in broadcast situations, where the receiver cannot request re-transmission or doing so would induce significant latency. For example, in the case of a satellite orbiting Uranus, a re-transmission because of decoding errors would create a delay of at least 5 hours.

FEC processing in a receiver may be applied to a digital bit stream or in the demodulation of a digitally modulated carrier. For the latter, FEC is an integral part of the initial analog-to-digital conversion in the receiver. The Viterbi decoder implements a soft-decision algorithm to demodulate digital data from an analog signal corrupted by noise. Many FEC coders can also generate a bit-error rate (BER) signal, which can be used as feedback to fine-tune the analog receiving electronics.

The maximum proportion of errors or missing bits that can be corrected is determined by the design of the ECC, so different forward error correcting codes are suitable for different conditions. In general, a stronger code induces more redundancy that needs to be transmitted using the available bandwidth, which reduces the effective bit-rate while improving the received effective signal-to-noise ratio. The noisy-channel coding theorem of Claude Shannon answers the question of how much bandwidth is left for data communication while using the most efficient code that drives the decoding error probability to zero. This establishes bounds on the theoretical maximum information transfer rate of a channel with some given base noise level. His proof is not constructive, and hence gives no insight into how to build a capacity-achieving code. However, after years of research, some advanced FEC systems such as polar codes achieve the Shannon channel capacity under the hypothesis of an infinite-length frame.

How it works

ECC is accomplished by adding redundancy to the transmitted information using an algorithm. A redundant bit may be a complex function of many original information bits. The original information may or may not appear literally in the encoded output; codes that include the unmodified input in the output are systematic, while those that do not are non-systematic. A simplistic example of ECC is to transmit each data bit 3 times, which is known as a (3,1) repetition code. Through a noisy channel, a receiver might see any of 8 versions of the output, as listed in the table below.

| Triplet received | Interpreted as |
|---|---|
| 000 | 0 (error-free) |
| 001 | 0 |
| 010 | 0 |
| 100 | 0 |
| 111 | 1 (error-free) |
| 110 | 1 |
| 101 | 1 |
| 011 | 1 |

This allows an error in any one of the three samples to be corrected by "majority vote", or "democratic voting". The correcting ability of this ECC is:

* Up to 1 bit of triplet in error, or

* up to 2 bits of triplet omitted (cases not shown in table).
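The majority vote described above can be sketched in a few lines. This is only an illustration of the (3,1) repetition code; the function names are hypothetical, and omitted (erased) bits are not handled.

```python
from itertools import product


def encode_repetition(bits, n=3):
    """Repeat every data bit n times."""
    return [b for b in bits for _ in range(n)]


def decode_repetition(received, n=3):
    """Decode each group of n received bits by majority vote."""
    groups = [received[i:i + n] for i in range(0, len(received), n)]
    return [1 if sum(g) > n // 2 else 0 for g in groups]


# Enumerate the 8 possible received triplets and how each one is interpreted.
decoding_table = {
    triplet: decode_repetition(list(triplet))[0]
    for triplet in product((0, 1), repeat=3)
}
for triplet, bit in decoding_table.items():
    print("".join(map(str, triplet)), "->", bit)
```

A single flipped bit per triplet is always outvoted by the two intact copies; two flipped bits in the same triplet produce a wrong decision.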

Though simple to implement and widely used, this triple modular redundancy is a relatively inefficient ECC. Better ECC codes typically examine the last several tens or even the last several hundreds of previously received bits to determine how to decode the current small handful of bits (typically in groups of 2 to 8 bits).

Averaging noise to reduce errors

ECC could be said to work by "averaging noise"; since each data bit affects many transmitted symbols, the corruption of some symbols by noise usually allows the original user data to be extracted from the other, uncorrupted received symbols that also depend on the same user data.

* Because of this "risk-pooling" effect, digital communication systems that use ECC tend to work well above a certain minimum signal-to-noise ratio and not at all below it.

* This all-or-nothing tendency, known as the cliff effect, becomes more pronounced as stronger codes are used that more closely approach the theoretical Shannon limit.

* Interleaving ECC coded data can reduce the all-or-nothing properties of transmitted ECC codes when the channel errors tend to occur in bursts. However, this method has limits; it is best used on narrowband data.
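To make the effect of interleaving on burst errors concrete, here is a minimal sketch of a block interleaver wrapped around a (3,1) repetition code. The grid size, the burst position and all function names are assumptions chosen for the illustration.

```python
def interleave(symbols, rows, cols):
    """Write row by row into a rows x cols grid, then read column by column."""
    assert len(symbols) == rows * cols
    return [symbols[r * cols + c] for c in range(cols) for r in range(rows)]


def deinterleave(symbols, rows, cols):
    """Inverse of interleave(): put every symbol back at its original index."""
    assert len(symbols) == rows * cols
    out = [None] * (rows * cols)
    positions = ((r, c) for c in range(cols) for r in range(rows))
    for symbol, (r, c) in zip(symbols, positions):
        out[r * cols + c] = symbol
    return out


def repeat3(bits):
    return [b for b in bits for _ in range(3)]


def majority3(coded):
    return [1 if sum(coded[i:i + 3]) >= 2 else 0 for i in range(0, len(coded), 3)]


def burst(symbols, start, length):
    """Flip `length` consecutive bits, modelling a burst error on the channel."""
    out = list(symbols)
    for i in range(start, start + length):
        out[i] ^= 1
    return out


data = [1, 0, 1, 1]
coded = repeat3(data)  # 4 codewords of 3 bits, one codeword per grid row

# Without interleaving, a 3-bit burst wipes out one whole codeword.
plain = majority3(burst(coded, 3, 3))

# With interleaving, the same burst touches each codeword at most once.
sent = interleave(coded, rows=4, cols=3)
protected = majority3(deinterleave(burst(sent, 3, 3), rows=4, cols=3))

print(plain == data, protected == data)
```

In this arrangement any burst no longer than the number of rows hits each codeword at most once, which is within the correcting ability of the repetition code.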

Most telecommunication systems use a fixed channel code designed to tolerate the expected worst-case bit error rate, and then fail to work at all if the bit error rate is ever worse. However, some systems adapt to the given channel error conditions: some instances of hybrid automatic repeat-request use a fixed ECC method as long as the ECC can handle the error rate, then switch to ARQ when the error rate gets too high; adaptive modulation and coding uses a variety of ECC rates, adding more error-correction bits per packet when there are higher error rates in the channel, or taking them out when they are not needed.

Types of ECC

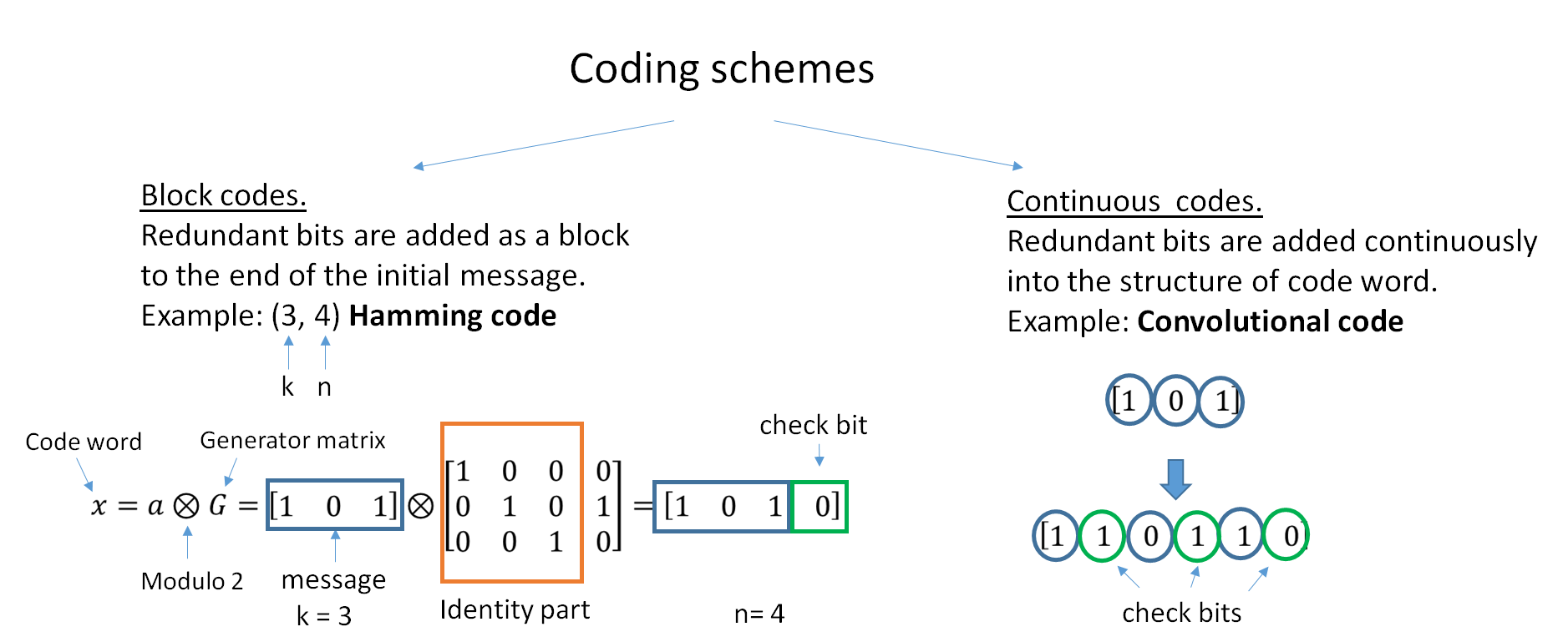

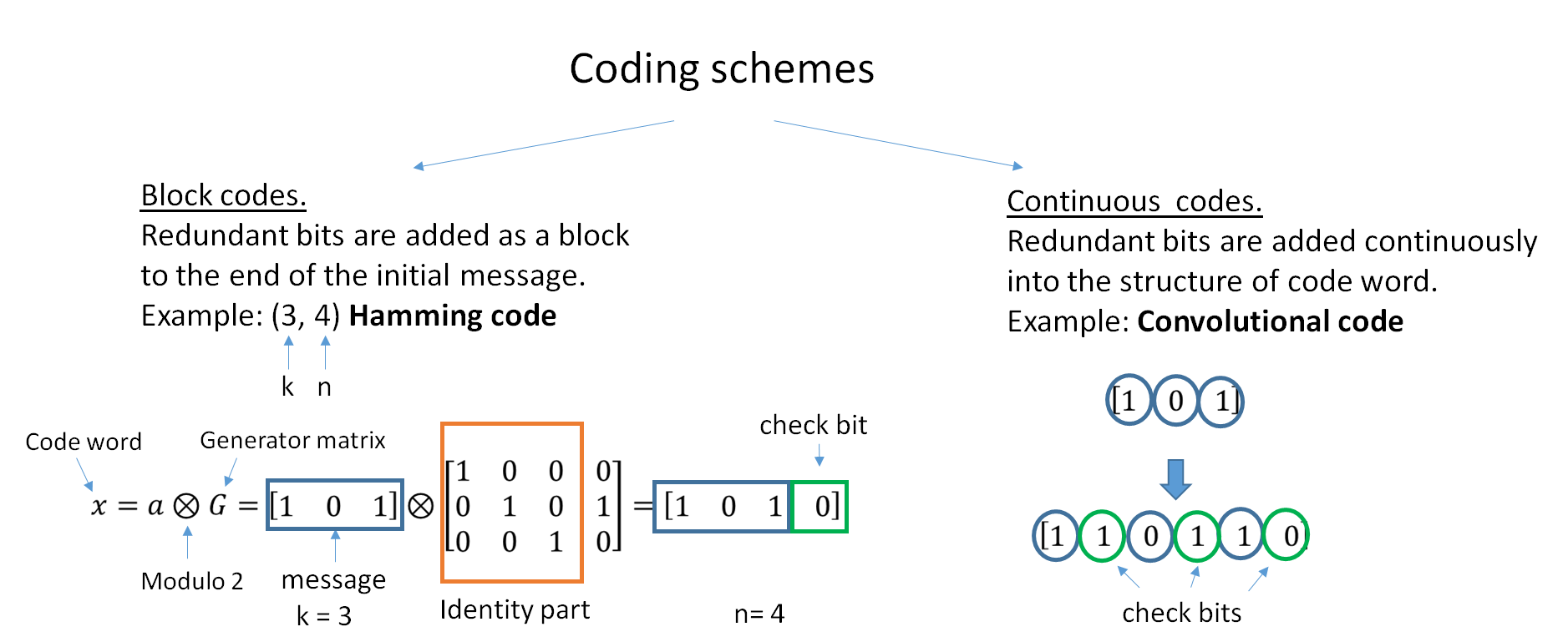

The two main categories of ECC codes are block codes and convolutional codes.

* Block codes work on fixed-size blocks (packets) of bits or symbols of predetermined size. Practical block codes can generally be hard-decoded in polynomial time to their block length.

* Convolutional codes work on bit or symbol streams of arbitrary length. They are most often soft decoded with the Viterbi algorithm, though other algorithms are sometimes used. Viterbi decoding allows asymptotically optimal decoding efficiency with increasing constraint length of the convolutional code, but at the expense of exponentially increasing complexity. A convolutional code that is terminated is also a 'block code' in that it encodes a block of input data, but the block size of a convolutional code is generally arbitrary, while block codes have a fixed size dictated by their algebraic characteristics. Types of termination for convolutional codes include "tail-biting" and "bit-flushing".
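The following sketch shows a small convolutional code and a Viterbi decoder for it. It assumes a rate-1/2 code with constraint length 3 and the generator pair (7, 5) in octal, a common textbook choice, terminated by bit-flushing. For brevity the decoder uses hard decisions (Hamming distance as the branch metric) rather than the soft decisions mentioned above; all names are hypothetical.

```python
K = 3                  # constraint length
G = (0b111, 0b101)     # generator polynomials (7, 5) in octal


def parity(x):
    return bin(x).count("1") & 1


def conv_encode(bits):
    """Rate-1/2 encoder; K-1 zero bits are appended to flush the register."""
    state = 0  # the previous K-1 input bits
    out = []
    for b in list(bits) + [0] * (K - 1):
        reg = (b << (K - 1)) | state  # newest bit in the top position
        out.extend(parity(reg & g) for g in G)
        state = reg >> 1
    return out


def viterbi_decode(received):
    """Hard-decision Viterbi decoding of a flushed (7, 5) code sequence."""
    n_states = 1 << (K - 1)
    inf = float("inf")
    metric = [0] + [inf] * (n_states - 1)  # the encoder starts in state 0
    paths = [[] for _ in range(n_states)]
    for i in range(0, len(received), len(G)):
        chunk = received[i:i + len(G)]
        new_metric = [inf] * n_states
        new_paths = [None] * n_states
        for state in range(n_states):
            if metric[state] == inf:
                continue
            for b in (0, 1):
                reg = (b << (K - 1)) | state
                expected = [parity(reg & g) for g in G]
                nxt = reg >> 1
                cost = metric[state] + sum(x != y for x, y in zip(chunk, expected))
                if cost < new_metric[nxt]:
                    new_metric[nxt] = cost
                    new_paths[nxt] = paths[state] + [b]
        metric, paths = new_metric, new_paths
    # Flushing forces the final state to 0; drop the K-1 flush bits.
    return paths[0][:-(K - 1)]


data = [1, 0, 1, 1, 0, 0, 1]
noisy = conv_encode(data)
noisy[2] ^= 1
noisy[9] ^= 1  # two channel errors
print(viterbi_decode(noisy) == data)
```

The number of trellis states is 2^(K-1), which is the exponential growth in complexity with constraint length noted above.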

There are many types of block codes; Reed–Solomon coding is noteworthy for its widespread use in compact discs, DVDs, and hard disk drives. Other examples of classical block codes include Golay, BCH, multidimensional parity, and Hamming codes.

Hamming ECC is commonly used to correct NAND flash memory errors. This provides single-bit error correction and 2-bit error detection. Hamming codes are only suitable for more reliable single-level cell (SLC) NAND. Denser multi-level cell (MLC) NAND may use multi-bit correcting ECC such as BCH or Reed–Solomon. NOR flash typically does not use any error correction.

Classical block codes are usually decoded using hard-decision algorithms, which means that for every input and output signal a hard decision is made whether it corresponds to a one or a zero bit. In contrast, convolutional codes are typically decoded using soft-decision algorithms like the Viterbi, MAP or BCJR algorithms, which process (discretized) analog signals, and which allow for much higher error-correction performance than hard-decision decoding.
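The difference between the two approaches can be seen on a single repeated bit. The sketch below assumes a (3,1) repetition code sent with a hypothetical mapping of bit 0 to +1.0 and bit 1 to -1.0, and received samples disturbed by additive noise; the sample values are made up for the illustration.

```python
def hard_decision_decode(samples):
    """Threshold every sample to a bit first, then take a majority vote."""
    bits = [0 if s > 0 else 1 for s in samples]
    return 1 if sum(bits) * 2 > len(bits) else 0


def soft_decision_decode(samples):
    """Add up the analog samples and decide once on the total."""
    return 0 if sum(samples) > 0 else 1


# Two weak samples just above zero and one strong negative sample.
received = [0.1, 0.2, -1.5]
print(hard_decision_decode(received))  # the two weak samples outvote the strong one
print(soft_decision_decode(received))  # the strong sample dominates the sum
```

The hard-decision decoder discards how reliable each sample was, whereas the soft-decision decoder lets one confident sample outweigh two marginal ones.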

Nearly all classical block codes apply the algebraic properties of finite fields. Hence classical block codes are often referred to as algebraic codes.

In contrast to classical block codes that often specify an error-detecting or error-correcting ability, many modern block codes such as LDPC codes lack such guarantees. Instead, modern codes are evaluated in terms of their bit error rates.

Most forward error correction codes correct only bit-flips, but not bit-insertions or bit-deletions. In this setting, the Hamming distance is the appropriate way to measure the bit error rate.

A few forward error correction codes are designed to correct bit-insertions and bit-deletions, such as Marker Codes and Watermark Codes. The Levenshtein distance is a more appropriate way to measure the bit error rate when using such codes.
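A short sketch shows why the two distances disagree once a bit is deleted. The bit strings are made up for the illustration, and the Levenshtein routine is the standard dynamic-programming formulation.

```python
def hamming_distance(a, b):
    """Number of positions at which two equal-length strings differ."""
    if len(a) != len(b):
        raise ValueError("Hamming distance needs equal-length inputs")
    return sum(x != y for x, y in zip(a, b))


def levenshtein_distance(a, b):
    """Minimum number of single-symbol insertions, deletions or substitutions."""
    prev = list(range(len(b) + 1))
    for i, x in enumerate(a, 1):
        cur = [i]
        for j, y in enumerate(b, 1):
            cur.append(min(prev[j] + 1,              # delete x
                           cur[j - 1] + 1,           # insert y
                           prev[j - 1] + (x != y)))  # substitute or match
        prev = cur
    return prev[-1]


sent = "10110100"
# The first bit is lost in transit and the receiver pads the tail with a 0.
received = sent[1:] + "0"

print(hamming_distance(sent, received))      # counts every shifted position
print(levenshtein_distance(sent, received))  # one deletion plus one insertion
```

A single deletion shifts every later bit, so the Hamming distance overstates the damage, while the Levenshtein distance reflects the two edits that actually occurred.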

Code-rate and the tradeoff between reliability and data rate

The fundamental principle of ECC is to add redundant bits in order to help the decoder find the true message that was encoded by the transmitter. The code-rate of a given ECC system is defined as the ratio between the number of information bits and the total number of bits (i.e., information plus redundancy bits) in a given communication package. The code-rate is hence a real number between 0 and 1. A low code-rate close to zero implies a strong code that uses many redundant bits to achieve good performance, while a large code-rate close to 1 implies a weak code.

The redundant bits that protect the information have to be transferred using the same communication resources that they are trying to protect. This causes a fundamental tradeoff between reliability and data rate. At one extreme, a strong code (with low code-rate) can induce a significant increase in the receiver SNR (signal-to-noise ratio), decreasing the bit error rate, at the cost of reducing the effective data rate. At the other extreme, not using any ECC (i.e., a code-rate equal to 1) uses the full channel for information transfer purposes, at the cost of leaving the bits without any additional protection.

One interesting question is the following: how efficient in terms of information transfer can an ECC be that has a negligible decoding error rate? This question was answered by Claude Shannon with his second theorem, which says that the channel capacity is the maximum bit rate achievable by any ECC whose error rate tends to zero. His proof relies on Gaussian random coding, which is not suitable for real-world applications. The upper bound given by Shannon's work inspired a long journey in designing ECCs that can come close to the ultimate performance boundary. Various codes today can attain almost the Shannon limit. However, capacity-achieving ECCs are usually extremely complex to implement. The most popular ECCs have a trade-off between performance and computational complexity.
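The code-rate definition and the capacity bound can be compared numerically. The sketch below assumes a binary symmetric channel with bit-flip probability p, for which the capacity is 1 - H(p) bits per channel use, where H is the binary entropy function; this channel model and the function names are assumptions for the illustration, not something the text above prescribes.

```python
from math import log2


def code_rate(k, n):
    """Information bits divided by total transmitted bits."""
    return k / n


def binary_entropy(p):
    """Entropy in bits of a coin that lands heads with probability p."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * log2(p) - (1 - p) * log2(1 - p)


def bsc_capacity(p):
    """Capacity of a binary symmetric channel with flip probability p."""
    return 1.0 - binary_entropy(p)


for name, k, n in [("(3,1) repetition", 1, 3), ("Hamming (7,4)", 4, 7), ("uncoded", 1, 1)]:
    print(f"{name}: rate {code_rate(k, n):.3f}")

for p in (0.001, 0.01, 0.1):
    print(f"flip probability {p}: capacity {bsc_capacity(p):.3f} bits per channel use")
```

A code whose rate exceeds the capacity of the channel cannot achieve a vanishing error rate, while rates below capacity are achievable in principle by some sufficiently long code.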
The parameters of popular ECCs usually give a range of possible code rates, which can be optimized depending on the scenario. Usually, this optimization is done in order to achieve a low decoding error probability while minimizing the impact on the data rate. Another criterion for optimizing the code rate is to balance a low error rate against the number of retransmissions in order to reduce the energy cost of the communication.

Concatenated ECC codes for improved performance

Classical (algebraic) block codes and convolutional codes are frequently combined in concatenated coding schemes in which a short constraint-length Viterbi-decoded convolutional code does most of the work and a block code (usually Reed–Solomon) with larger symbol size and block length "mops up" any errors made by the convolutional decoder. Single-pass decoding with this family of error correction codes can yield very low error rates, but for long-range transmission conditions (like deep space) iterative decoding is recommended. Concatenated codes have been standard practice in satellite and deep space communications since Voyager 2 first used the technique in its 1986 encounter with Uranus. The Galileo craft used iterative concatenated codes to compensate for the very high error rate conditions caused by having a failed antenna.

Low-density parity-check (LDPC)

Low-density parity-check (LDPC) codes are a class of highly efficient linear block codes made from many single parity check (SPC) codes. They can provide performance very close to the channel capacity (the theoretical maximum) using an iterated soft-decision decoding approach, at linear time complexity in terms of their block length. Practical implementations rely heavily on decoding the constituent SPC codes in parallel. LDPC codes were first introduced by Robert G. Gallager in his PhD thesis in 1960, but due to the computational effort in implementing encoder and decoder and the introduction of Reed–Solomon codes, they were mostly ignored until the 1990s. LDPC codes are now used in many recent high-speed communication standards, such as DVB-S2 (Digital Video Broadcasting – Satellite – Second Generation), WiMAX (IEEE 802.16e standard for microwave communications), High-Speed Wireless LAN (IEEE 802.11n), 10GBase-T Ethernet (802.3an) and G.hn/G.9960 (ITU-T Standard for networking over power lines, phone lines and coaxial cable). Other LDPC codes are standardized for wireless communication standards within 3GPP MBMS (see fountain codes).
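The idea of building a code from many single parity checks and decoding it iteratively can be sketched on a toy example. The code below is not a real LDPC code and does not use soft decisions: it assumes a 3 x 3 bit array with one even-parity check per row and per column, and applies a simple hard-decision bit-flipping rule. All names and the example codeword are hypothetical.

```python
def build_checks(rows, cols):
    """One single-parity check per row and per column of a rows x cols bit array."""
    checks = [[r * cols + c for c in range(cols)] for r in range(rows)]
    checks += [[r * cols + c for r in range(rows)] for c in range(cols)]
    return checks


def syndrome(word, checks):
    """1 for every parity check that fails, 0 for every check that holds."""
    return [sum(word[i] for i in check) % 2 for check in checks]


def bit_flip_decode(word, checks, max_iters=10):
    """Repeatedly flip the bits involved in the most failed checks."""
    word = list(word)
    for _ in range(max_iters):
        failed = syndrome(word, checks)
        if not any(failed):
            break  # every parity check is satisfied
        votes = [0] * len(word)
        for is_failed, check in zip(failed, checks):
            if is_failed:
                for i in check:
                    votes[i] += 1
        worst = max(votes)
        word = [b ^ 1 if v == worst else b for b, v in zip(word, votes)]
    return word


checks = build_checks(3, 3)
codeword = [1, 0, 1,
            1, 1, 0,
            0, 1, 1]  # every row and every column has even parity
noisy = list(codeword)
noisy[4] ^= 1         # flip the centre bit
print(syndrome(noisy, checks))
print(bit_flip_decode(noisy, checks) == codeword)
```

Each bit takes part in only two checks, and each check can be evaluated independently of the others, which is the property that lets practical decoders process the constituent checks in parallel.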

Turbo codes

Turbo coding is an iterated soft-decoding scheme that combines two or more relatively simple convolutional codes and an interleaver to produce a block code that can perform to within a fraction of a decibel of the Shannon limit. Predating LDPC codes in terms of practical application, they now provide similar performance.

One of the earliest commercial applications of turbo coding was the CDMA2000 1x (TIA IS-2000) digital cellular technology developed by Qualcomm and sold by Verizon Wireless, Sprint, and other carriers. It is also used for the evolution of CDMA2000 1x specifically for Internet access, 1xEV-DO (TIA IS-856). Like 1x, EV-DO was developed by Qualcomm, and is sold by Verizon Wireless, Sprint, and other carriers (Verizon's marketing name for 1xEV-DO is Broadband Access; Sprint's consumer and business marketing names for 1xEV-DO are Power Vision and Mobile Broadband, respectively).

Local decoding and testing of codes

Sometimes it is only necessary to decode single bits of the message, or to check whether a given signal is a codeword, and to do so without looking at the entire signal. This can make sense in a streaming setting, where codewords are too large to be classically decoded fast enough and where only a few bits of the message are of interest for now. Such codes have also become an important tool in computational complexity theory, e.g., for the design of probabilistically checkable proofs.

Locally decodable codes are error-correcting codes for which single bits of the message can be probabilistically recovered by only looking at a small (say constant) number of positions of a codeword, even after the codeword has been corrupted at some constant fraction of positions. Locally testable codes are error-correcting codes for which it can be checked probabilistically whether a signal is close to a codeword by only looking at a small number of positions of the signal.
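As an illustration of local decoding, the Hadamard code admits a well-known two-query decoder: to recover message bit i, pick a random position r and XOR the codeword bits at positions r and r with bit i flipped. The sketch below assumes messages are packed into Python integers; the function names and the majority vote over several trials are illustrative choices.

```python
import random


def hadamard_encode(x, k):
    """Codeword of length 2**k: position r holds the parity of (x AND r)."""
    return [bin(x & r).count("1") % 2 for r in range(2 ** k)]


def local_decode_bit(word, k, i, rng, trials=15):
    """Recover message bit i by reading only 2 * trials codeword positions.

    Each trial reads positions r and r ^ (1 << i); in an uncorrupted
    codeword their XOR equals bit i of the message. A majority vote over
    an odd number of trials absorbs a small fraction of corrupted positions.
    """
    votes = 0
    for _ in range(trials):
        r = rng.randrange(2 ** k)
        votes += word[r] ^ word[r ^ (1 << i)]
    return int(2 * votes > trials)


k = 8
x = 0b10110100
word = hadamard_encode(x, k)
rng = random.Random(1)
recovered = [local_decode_bit(word, k, i, rng) for i in range(k)]
```

Each bit is recovered without reading the whole 256-position codeword, which is the defining property of a locally decodable code.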

Interleaving

Interleaving is frequently used in digital communication and storage systems to improve the performance of forward error correcting codes. Many communication channels are not memoryless: errors typically occur in bursts rather than independently. If the number of errors within a code word exceeds the error-correcting code's capability, it fails to recover the original code word. Interleaving alleviates this problem by shuffling source symbols across several code words, thereby creating a more uniform distribution of errors. Therefore, interleaving is widely used for burst error-correction.

The analysis of modern iterated codes, like turbo codes and LDPC codes, typically assumes an independent distribution of errors. Systems using LDPC codes therefore typically employ additional interleaving across the symbols within a code word.

For turbo codes, an interleaver is an integral component and its proper design is crucial for good performance. The iterative decoding algorithm works best when there are no short cycles in the factor graph that represents the decoder; the interleaver is chosen to avoid short cycles.

Interleaver designs include:

* rectangular (or uniform) interleavers (similar to the method using skip factors described above)

* convolutional interleavers

* random interleavers (where the interleaver is a known random permutation)

* S-random interleavers (where the interleaver is a known random permutation with the constraint that no input symbols within distance S appear within a distance of S in the output)

* contention-free quadratic permutation polynomial (QPP) interleavers; an example of use is in the 3GPP Long Term Evolution mobile telecommunication standard.
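Two of the designs listed above are easy to sketch. The QPP interleaver maps index i to (f1*i + f2*i^2) mod K; the parameters K = 40, f1 = 3, f2 = 10 used below are assumed for illustration (they are commonly quoted for the smallest LTE block size, but should be checked against the specification). The S-random generator is a simple greedy search with restarts, one of several possible constructions; all function names are hypothetical.

```python
import random


def qpp_interleaver(k, f1, f2):
    """Quadratic permutation polynomial: pi(i) = (f1*i + f2*i*i) mod k."""
    return [(f1 * i + f2 * i * i) % k for i in range(k)]


def s_random_interleaver(n, s, seed=0, max_attempts=1000):
    """Random permutation in which any two entries at most s positions
    apart in the output differ by more than s as input indices (s >= 1)."""
    rng = random.Random(seed)
    for _ in range(max_attempts):
        remaining = list(range(n))
        rng.shuffle(remaining)
        perm = []
        while remaining:
            recent = perm[-s:]  # the last s accepted input indices
            for idx, cand in enumerate(remaining):
                if all(abs(cand - r) > s for r in recent):
                    perm.append(remaining.pop(idx))
                    break
            else:
                break  # no candidate fits: abandon this attempt and restart
        if len(perm) == n:
            return perm
    raise RuntimeError("no S-random permutation found; try a smaller s")


qpp = qpp_interleaver(40, 3, 10)
srand = s_random_interleaver(64, 3)
```

The greedy search tends to succeed quickly when S is small relative to the square root of the block length; larger spreads need more restarts or a different construction.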

In multi-carrier communication systems, interleaving across carriers may be employed to provide frequency diversity, e.g., to mitigate frequency-selective fading or narrowband interference.

Example
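A rectangular block interleaver can be sketched in a few lines to show how a burst is spread across codewords. The letter strings, the burst position, and the helper names below are illustrative assumptions: each run of four identical letters stands for one 4-symbol codeword that tolerates a single damaged symbol, and an underscore marks a symbol destroyed by the burst.

```python
def interleave(symbols, codeword_len):
    """Write codewords as rows, then read the block column by column."""
    rows = [symbols[i:i + codeword_len]
            for i in range(0, len(symbols), codeword_len)]
    return "".join(row[c] for c in range(codeword_len) for row in rows)


def deinterleave(symbols, codeword_len):
    """Inverse of interleave(): regroup the columns back into codewords."""
    n_words = len(symbols) // codeword_len
    cols = [symbols[i:i + n_words] for i in range(0, len(symbols), n_words)]
    return "".join(col[w] for w in range(n_words) for col in cols)


def burst(symbols, start, length):
    """Overwrite `length` consecutive symbols with '_' to model a burst."""
    return symbols[:start] + "_" * length + symbols[start + length:]


def worst_codeword(symbols, codeword_len):
    """Largest number of damaged symbols found in any single codeword."""
    return max(symbols[i:i + codeword_len].count("_")
               for i in range(0, len(symbols), codeword_len))


sent = "aaaabbbbccccddddeeeeffffgggg"
plain = burst(sent, 11, 4)
spread = deinterleave(burst(interleave(sent, 4), 11, 4), 4)
print(plain, worst_codeword(plain, 4))    # aaaabbbbccc____deeeeffffgggg 3
print(spread, worst_codeword(spread, 4))  # aa_abbbbccccdddde_eef_ffg_gg 1
```

Without interleaving, one codeword loses three symbols and cannot be corrected; with interleaving, the same four-symbol burst costs four different codewords one symbol each.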

Consider a stream of 4-bit codewords, each protected by a one-bit error-correcting code. Without interleaving, a burst error hits adjacent bits of the same codeword: a codeword altered in one bit can be corrected, but a codeword altered in three bits either cannot be decoded at all or might be decoded incorrectly.

With interleaving, the bits of the codewords are interleaved before transmission and deinterleaved after reception, so the same burst is spread across several codewords. Each affected codeword then has only one bit altered, and the one-bit error-correcting code decodes everything correctly.

The same effect applies to text. Without interleaving, a burst error in a transmitted sentence leaves the affected term mostly unintelligible and difficult to correct. With interleaving, no word is completely lost once the received sentence has been deinterleaved, and the missing letters can be recovered with minimal guesswork.

Disadvantages of interleaving

Use of interleaving techniques increases total delay. This is because the entire interleaved block must be received before the packets can be decoded. Interleavers also hide the structure of errors; without an interleaver, more advanced decoding algorithms can take advantage of the error structure and achieve more reliable communication than a simpler decoder combined with an interleaver. An example of such an algorithm is based on neural network structures.

Software for error-correcting codes

Simulating the behaviour of error-correcting codes (ECCs) in software is a common practice for designing, validating, and improving ECCs. The upcoming wireless 5G standard raises a new range of applications for software ECCs: Cloud Radio Access Networks (C-RAN) in a software-defined radio (SDR) context. The idea is to use software ECCs directly in the communications. For instance, in 5G the software ECCs could be located in the cloud, with the antennas connected to these computing resources, thereby improving the flexibility of the communication network and eventually increasing the energy efficiency of the system. In this context, various open-source software packages are available, listed below (non-exhaustive).

* AFF3CT (A Fast Forward Error Correction Toolbox): a full communication chain in C++ (many supported codes such as Turbo, LDPC, and Polar codes), very fast and specialized in channel coding (can be used as a program for simulations or as a library for SDR).

* IT++: a C++ library of classes and functions for linear algebra, numerical optimization, signal processing, communications, and statistics.

* OpenAir: implementation (in C) of the 3GPP specifications concerning the Evolved Packet Core Networks.

List of error-correcting codes

* AN codes

* BCH code, which can be designed to correct any arbitrary number of errors per code block.

* Barker code, used for radar, telemetry, ultrasound, Wi-Fi, DSSS mobile phone networks, GPS, etc.

* Berger code

* Constant-weight code

* Convolutional code

* Expander codes

* Group codes

* Golay codes, of which the Binary Golay code is of practical interest

* Goppa code, used in the McEliece cryptosystem

* Hadamard code

* Hagelbarger code

* Hamming code

* Latin square based code for non-white noise (prevalent for example in broadband over powerlines)

* Lexicographic code

* Linear Network Coding, a type of erasure correcting code across networks instead of point-to-point links

* Long code

* Low-density parity-check code, also known as Gallager code, as the archetype for sparse graph codes

* LT code, which is a near-optimal rateless erasure correcting code (Fountain code)

* m of n codes

* Nordstrom-Robinson code, used in Geometry and Group Theory

* Online code, a near-optimal rateless erasure correcting code

* Polar code (coding theory)

* Raptor code, a near-optimal rateless erasure correcting code

* Reed–Solomon error correction

* Reed–Muller code

* Repeat-accumulate code

* Repetition codes, such as triple modular redundancy

* Spinal code, a rateless, nonlinear code based on pseudo-random hash functions

* Tornado code, a near-optimal erasure correcting code, and the precursor to Fountain codes

* Turbo code

* Walsh–Hadamard code

* Cyclic redundancy checks (CRCs) can correct 1-bit errors for messages up to a maximum length that depends on the degree of the generator polynomial, provided the generator polynomial is optimal; see Mathematics of cyclic redundancy checks § Bitfilters
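The last item in the list, single-bit correction with a CRC, can be sketched as a syndrome search: the remainder of the received word equals the remainder of the error pattern alone, so a nonzero remainder that matches a single flipped position identifies it. The generator x^3 + x + 1 and the 7-bit word length are assumptions chosen for illustration (for this generator, the single-bit syndromes of a 7-bit word are all distinct); the function names are hypothetical.

```python
def crc_remainder(bits, poly, degree):
    """Remainder of the bit list (most significant bit first) modulo poly."""
    reg = 0
    for b in bits:
        reg = (reg << 1) | b
        if reg >> degree:  # the top bit is set: subtract the generator
            reg ^= poly
    return reg


def crc_encode(message, poly, degree):
    """Append `degree` check bits so the codeword is divisible by poly."""
    rem = crc_remainder(message + [0] * degree, poly, degree)
    check = [(rem >> i) & 1 for i in reversed(range(degree))]
    return message + check


def correct_single_bit(word, poly, degree):
    """Flip the one position whose syndrome matches that of the word."""
    syndrome = crc_remainder(word, poly, degree)
    if syndrome == 0:
        return list(word)
    for pos in range(len(word)):
        unit = [0] * len(word)
        unit[pos] = 1
        if crc_remainder(unit, poly, degree) == syndrome:
            fixed = list(word)
            fixed[pos] ^= 1
            return fixed
    raise ValueError("no single-bit error matches this syndrome")


POLY, DEGREE = 0b1011, 3  # assumed generator x^3 + x + 1
codeword = crc_encode([1, 1, 0, 1], POLY, DEGREE)
damaged = list(codeword)
damaged[2] ^= 1
repaired = correct_single_bit(damaged, POLY, DEGREE)
```

With longer words the single-bit syndromes start to repeat, at which point the CRC can still detect an error but can no longer locate it.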

See also

* Code rate

* Erasure codes

* Soft-decision decoder

* Burst error-correcting code

* Error detection and correction

* Error-correcting codes with feedback

References

Further reading

* "Error Correction Code in Single Level Cell NAND Flash memories", 2007-02-16

* "Error Correction Code in NAND Flash memories", 2004-11-29

* Observations on Errors, Corrections, & Trust of Dependent Systems, by James Hamilton, 2012-02-26

* Sphere Packings, Lattices and Groups, by J. H. Conway and Neil James Alexander Sloane, Springer Science & Business Media, 2013-03-09, 682 pages.

External links

* Morelos-Zaragoza, Robert (2004). "The Correcting Codes (ECC) Page". http://www.eccpage.com/ (accessed 2006-03-05)

* lpdec: library for LP decoding and related things (Python)
