In linear algebra, a rotation matrix is a transformation matrix that is used to perform a rotation in Euclidean space. For example, using the convention below, the matrix

: R = \begin{bmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{bmatrix}

rotates points in the xy plane counterclockwise through an angle θ with respect to the positive x axis about the origin of a two-dimensional Cartesian coordinate system. To perform the rotation on a plane point with standard coordinates v = (x, y), it should be written as a column vector and multiplied by the matrix R:

: R\mathbf{v} = \begin{bmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{bmatrix} \begin{bmatrix} x \\ y \end{bmatrix} = \begin{bmatrix} x\cos\theta - y\sin\theta \\ x\sin\theta + y\cos\theta \end{bmatrix}.

If x and y are the endpoint coordinates of a unit vector at angle φ, so that x = cos φ and y = sin φ, then the above equations become the trigonometric summation angle formulae: x cos θ − y sin θ = cos(φ + θ) and x sin θ + y cos θ = sin(φ + θ). Indeed, a rotation matrix can be seen as the trigonometric summation angle formulae in matrix form. One way to understand this is to suppose we have a vector at an angle of 30° from the x axis and wish to rotate it by a further 45°. We simply need to compute the vector endpoint coordinates at 75°.
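The angle-sum reading can be checked numerically. The following is a minimal sketch in plain Python (the helper name `rotate` is our own, not a library function): it rotates the endpoint of the unit vector at 30° by a further 45° and compares the result with the endpoint of the unit vector at 75°.

```python
import math

def rotate(x, y, theta):
    """Rotate the point (x, y) counterclockwise by theta radians about the origin."""
    c, s = math.cos(theta), math.sin(theta)
    # Pre-multiply the column vector (x, y) by [[c, -s], [s, c]].
    return (c * x - s * y, s * x + c * y)

# Endpoint of the unit vector at 30 degrees from the x axis.
start = (math.cos(math.radians(30)), math.sin(math.radians(30)))

# Rotate it by a further 45 degrees.
x_new, y_new = rotate(*start, math.radians(45))

# By the angle-sum formulae this should be the unit vector at 75 degrees.
expected = (math.cos(math.radians(75)), math.sin(math.radians(75)))
print((x_new, y_new), expected)
```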

The examples in this article apply to ''active rotations'' of vectors ''counterclockwise'' in a ''right-handed coordinate system'' (y counterclockwise from x) by ''pre-multiplication'' (R on the left). If any one of these is changed (such as rotating axes instead of vectors, a ''passive transformation''), then the inverse of the example matrix should be used, which coincides with its transpose.

Since matrix multiplication has no effect on the zero vector (the coordinates of the origin), rotation matrices describe rotations about the origin. Rotation matrices provide an algebraic description of such rotations, and are used extensively for computations in geometry, physics, and computer graphics. In some literature, the term ''rotation'' is generalized to include improper rotations, characterized by orthogonal matrices with a determinant of −1 (instead of +1). These combine ''proper'' rotations with ''reflections'' (which invert orientation). In other cases, where reflections are not being considered, the label ''proper'' may be dropped. The latter convention is followed in this article.

Rotation matrices are square matrices, with real entries. More specifically, they can be characterized as orthogonal matrices with determinant 1; that is, a square matrix R is a rotation matrix if and only if Rᵀ = R⁻¹ and det R = 1. The set of all orthogonal matrices of size n with determinant +1 is a representation of a group known as the special orthogonal group SO(n), one example of which is the rotation group SO(3). The set of all orthogonal matrices of size n with determinant +1 or −1 is a representation of the (general) orthogonal group O(n).
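The two defining conditions translate directly into a numerical test. This is a sketch for the 3 × 3 case only, assuming matrices stored as nested Python lists and an arbitrary tolerance `tol` to absorb floating-point round-off; `is_rotation_matrix` and the other helpers are illustrative names, not an existing API.

```python
import math

def transpose(m):
    return [list(col) for col in zip(*m)]

def matmul(a, b):
    """Product of two matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def det3(m):
    """Determinant of a 3x3 matrix by cofactor expansion along the first row."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)

def is_rotation_matrix(m, tol=1e-9):
    """True if the 3x3 matrix m satisfies R^T R = I and det R = +1, within tol."""
    rtr = matmul(transpose(m), m)
    orthogonal = all(abs(rtr[i][j] - (1.0 if i == j else 0.0)) <= tol
                     for i in range(3) for j in range(3))
    return orthogonal and abs(det3(m) - 1.0) <= tol

t = math.radians(90)
rz = [[math.cos(t), -math.sin(t), 0.0], [math.sin(t), math.cos(t), 0.0], [0.0, 0.0, 1.0]]
print(is_rotation_matrix(rz))                                  # True
print(is_rotation_matrix([[1, 0, 0], [0, 1, 0], [0, 0, -1]]))  # False: det = -1
```

A reflection such as diag(1, 1, −1) passes the orthogonality check but fails the determinant check, which is exactly the proper/improper distinction drawn above.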

In two dimensions

In two dimensions, the standard rotation matrix has the following form:

: R(\theta) = \begin{bmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{bmatrix}.

This rotates column vectors by means of the following matrix multiplication,

: \begin{bmatrix} x' \\ y' \end{bmatrix} = \begin{bmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{bmatrix} \begin{bmatrix} x \\ y \end{bmatrix}.

Thus, the new coordinates (x′, y′) of a point (x, y) after rotation are

: x' = x\cos\theta - y\sin\theta, \qquad y' = x\sin\theta + y\cos\theta.

Examples

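For example, when the vector

: \hat{\mathbf x} = \begin{bmatrix} 1 \\ 0 \end{bmatrix}

is rotated by an angle θ, its new coordinates are

: \begin{bmatrix} \cos\theta \\ \sin\theta \end{bmatrix},

and when the vector

: \hat{\mathbf y} = \begin{bmatrix} 0 \\ 1 \end{bmatrix}

is rotated by an angle θ, its new coordinates are

: \begin{bmatrix} -\sin\theta \\ \cos\theta \end{bmatrix}.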
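These two results say that the columns of R(θ) are simply the images of the basis vectors. A small sketch that checks this numerically (plain Python; `rotation_2d` and `apply` are hypothetical helper names):

```python
import math

def rotation_2d(theta):
    """Counterclockwise rotation matrix R(theta) as a nested list (column-vector convention)."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s], [s, c]]

def apply(m, v):
    """Pre-multiply the column vector v by the matrix m."""
    return [sum(m[i][k] * v[k] for k in range(len(v))) for i in range(len(m))]

theta = math.radians(30)
R = rotation_2d(theta)

x_image = apply(R, [1.0, 0.0])  # should equal the first column of R:  (cos θ, sin θ)
y_image = apply(R, [0.0, 1.0])  # should equal the second column of R: (-sin θ, cos θ)
print(x_image, y_image)
```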

Direction

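The direction of vector rotation is counterclockwise if θ is positive (e.g. 90°), and clockwise if θ is negative (e.g. −90°). Thus the clockwise rotation matrix is found as

: R(-\theta) = \begin{bmatrix} \cos\theta & \sin\theta \\ -\sin\theta & \cos\theta \end{bmatrix}.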

The two-dimensional case is the only non-trivial (i.e. not one-dimensional) case where the group of rotation matrices is commutative, so that it does not matter in which order multiple rotations are performed. An alternative convention uses rotating axes, and the above matrices also represent a rotation of the ''axes clockwise'' through an angle θ.
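Both claims in this subsection, that the clockwise matrix R(−θ) is the transpose of R(θ) and that plane rotations commute, can be spot-checked with a short sketch. It assumes nested-list 2 × 2 matrices and an absolute tolerance of 1e-12; the helper names are our own.

```python
import math

def rotation_2d(theta):
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s], [s, c]]

def matmul(a, b):
    """Product of two 2x2 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)] for i in range(2)]

def close(a, b, tol=1e-12):
    return all(abs(a[i][j] - b[i][j]) <= tol for i in range(2) for j in range(2))

theta = math.radians(40)
R = rotation_2d(theta)
R_transpose = [[R[j][i] for j in range(2)] for i in range(2)]

# The clockwise rotation R(-theta) is the transpose (and inverse) of R(theta).
print(close(rotation_2d(-theta), R_transpose))

# In two dimensions the order of rotations does not matter, and angles add.
A, B = rotation_2d(math.radians(25)), rotation_2d(math.radians(70))
print(close(matmul(A, B), matmul(B, A)), close(matmul(A, B), rotation_2d(math.radians(95))))
```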

Non-standard orientation of the coordinate system

If a standard right-handed Cartesian coordinate system is used, with the x-axis to the right and the y-axis up, the rotation R(θ) is counterclockwise. If a left-handed Cartesian coordinate system is used, with x directed to the right but y directed down, R(θ) is clockwise. Such non-standard orientations are rarely used in mathematics but are common in 2D computer graphics, which often have the origin in the top left corner and the y-axis down the screen or page.

See the section on ambiguities below for other alternative conventions which may change the sense of the rotation produced by a rotation matrix.

Common rotations

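Particularly useful are the matrices

: R(90^\circ) = \begin{bmatrix} 0 & -1 \\ 1 & 0 \end{bmatrix}, \qquad R(180^\circ) = \begin{bmatrix} -1 & 0 \\ 0 & -1 \end{bmatrix}, \qquad R(270^\circ) = \begin{bmatrix} 0 & 1 \\ -1 & 0 \end{bmatrix}

for 90°, 180°, and 270° counterclockwise rotations.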
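Because these three matrices contain only 0 and ±1, they can be applied to integer coordinates (for example pixel or grid positions) without any floating-point round-off. A minimal sketch, with hypothetical helper names `rot90`, `rot180` and `rot270` that hard-code the matrix products:

```python
def rot90(p):
    """Rotate an integer point 90 degrees counterclockwise: matrix [[0, -1], [1, 0]]."""
    x, y = p
    return (-y, x)

def rot180(p):
    """Rotate 180 degrees: matrix [[-1, 0], [0, -1]]."""
    x, y = p
    return (-x, -y)

def rot270(p):
    """Rotate 270 degrees counterclockwise (90 degrees clockwise): matrix [[0, 1], [-1, 0]]."""
    x, y = p
    return (y, -x)

p = (3, 1)
print(rot90(p), rot180(p), rot270(p))  # (-1, 3) (-3, -1) (1, -3)
```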

Relationship with complex plane

Since

: \begin{bmatrix} 0 & -1 \\ 1 & 0 \end{bmatrix}^2 = \begin{bmatrix} -1 & 0 \\ 0 & -1 \end{bmatrix} = -I,

the matrices of the shape

: \begin{bmatrix} x & -y \\ y & x \end{bmatrix}

form a ring isomorphic to the field of the complex numbers ℂ. Under this isomorphism, the rotation matrices correspond to the circle of the unit complex numbers, the complex numbers of modulus 1.

If one identifies ℝ² with ℂ through the linear isomorphism (a, b) ↦ a + ib, the action of a matrix of the above form on vectors of ℝ² corresponds to multiplication by the complex number x + iy, and rotations correspond to multiplication by complex numbers of modulus 1.

As every rotation matrix can be written

: \begin{bmatrix} \cos t & -\sin t \\ \sin t & \cos t \end{bmatrix},

the above correspondence associates such a matrix with the complex number

: \cos t + i\sin t = e^{it}

(this last equality is Euler's formula).
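The correspondence can be demonstrated with Python's standard `cmath` module: multiplying x + iy by e^(iθ) gives the same coordinates as pre-multiplying (x, y) by the rotation matrix. This is an illustrative sketch with arbitrary sample values, not a reference implementation.

```python
import cmath
import math

theta = math.radians(50)
x, y = 2.0, -1.0

# Rotation via the 2x2 matrix [[cos, -sin], [sin, cos]].
c, s = math.cos(theta), math.sin(theta)
matrix_result = (c * x - s * y, s * x + c * y)

# Rotation via multiplication by the unit complex number e^{i theta}.
z = complex(x, y) * cmath.exp(1j * theta)
complex_result = (z.real, z.imag)

print(matrix_result, complex_result)
```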

In three dimensions

Basic rotations

A basic rotation (also called elemental rotation) is a rotation about one of the axes of a coordinate system. The following three basic rotation matrices rotate vectors by an angle θ about the x-, y-, or z-axis, in three dimensions, using the right-hand rule, which codifies their alternating signs. (The same matrices can also represent a clockwise rotation of the axes.

[Note that if instead of rotating vectors, it is the reference frame that is being rotated, the signs on the sin θ terms will be reversed. If reference frame A is rotated anticlockwise about the origin through an angle θ to create reference frame B, then R_x (with the signs flipped) will transform a vector described in reference frame A coordinates to reference frame B coordinates. Coordinate frame transformations in aerospace, robotics, and other fields are often performed using this interpretation of the rotation matrix.])

: R_x(\theta) = \begin{bmatrix} 1 & 0 & 0 \\ 0 & \cos\theta & -\sin\theta \\ 0 & \sin\theta & \cos\theta \end{bmatrix}
: R_y(\theta) = \begin{bmatrix} \cos\theta & 0 & \sin\theta \\ 0 & 1 & 0 \\ -\sin\theta & 0 & \cos\theta \end{bmatrix}
: R_z(\theta) = \begin{bmatrix} \cos\theta & -\sin\theta & 0 \\ \sin\theta & \cos\theta & 0 \\ 0 & 0 & 1 \end{bmatrix}

For column vectors, each of these basic vector rotations appears counterclockwise when the axis about which they occur points toward the observer, the coordinate system is right-handed, and the angle θ is positive. R_z, for instance, would rotate toward the y-axis a vector aligned with the x-axis, as can easily be checked by operating with R_z on the vector (1, 0, 0):

: R_z(90^\circ) \begin{bmatrix} 1 \\ 0 \\ 0 \end{bmatrix} = \begin{bmatrix} 0 & -1 & 0 \\ 1 & 0 & 0 \\ 0 & 0 & 1 \end{bmatrix} \begin{bmatrix} 1 \\ 0 \\ 0 \end{bmatrix} = \begin{bmatrix} 0 \\ 1 \\ 0 \end{bmatrix}

This is similar to the rotation produced by the above-mentioned two-dimensional rotation matrix. See the section on ambiguities below for alternative conventions which may apparently or actually invert the sense of the rotation produced by these matrices.
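A sketch of the three basic rotations in plain Python (the function names `rot_x`, `rot_y`, `rot_z` are our own). It checks the claim above for R_z and the analogous cyclic claims for R_x and R_y: a positive quarter turn sends x to y, y to z, and z to x.

```python
import math

def rot_x(t):
    """Basic rotation by t radians about the x-axis."""
    c, s = math.cos(t), math.sin(t)
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]

def rot_y(t):
    """Basic rotation by t radians about the y-axis (note the sign pattern)."""
    c, s = math.cos(t), math.sin(t)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]

def rot_z(t):
    """Basic rotation by t radians about the z-axis."""
    c, s = math.cos(t), math.sin(t)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def apply(m, v):
    """Pre-multiply the column vector v by the 3x3 matrix m."""
    return [sum(m[i][k] * v[k] for k in range(3)) for i in range(3)]

quarter = math.radians(90)
x_axis, y_axis, z_axis = [1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]

# Each positive quarter turn moves one axis onto the next in the cycle x -> y -> z -> x.
print(apply(rot_z(quarter), x_axis))  # close to y_axis
print(apply(rot_x(quarter), y_axis))  # close to z_axis
print(apply(rot_y(quarter), z_axis))  # close to x_axis
```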

General rotations

Other rotation matrices can be obtained from these three using matrix multiplication. For example, the product

: R = R_z(\alpha)\, R_y(\beta)\, R_x(\gamma) = \begin{bmatrix} \cos\alpha\cos\beta & \cos\alpha\sin\beta\sin\gamma - \sin\alpha\cos\gamma & \cos\alpha\sin\beta\cos\gamma + \sin\alpha\sin\gamma \\ \sin\alpha\cos\beta & \sin\alpha\sin\beta\sin\gamma + \cos\alpha\cos\gamma & \sin\alpha\sin\beta\cos\gamma - \cos\alpha\sin\gamma \\ -\sin\beta & \cos\beta\sin\gamma & \cos\beta\cos\gamma \end{bmatrix}

represents a rotation whose yaw, pitch, and roll angles are α, β and γ, respectively. More formally, it is an intrinsic rotation whose Tait–Bryan angles are α, β, γ, about axes z, y, x, respectively.

Similarly, the product

: R = R_z(\gamma)\, R_y(\beta)\, R_x(\alpha)

represents an extrinsic rotation whose (improper) Euler angles are α, β, γ, about axes x, y, z.

These matrices produce the desired effect only if they are used to premultiply column vectors, and (since in general matrix multiplication is not commutative) only if they are applied in the specified order (see Ambiguities for more details). The order of rotation operations is from right to left; the matrix adjacent to the column vector is the first to be applied, and then the one to the left.
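The sketch below (plain Python; `rot_x`, `rot_y`, `rot_z`, `matmul` and `apply` are illustrative helpers, not a library API) builds the yaw, pitch and roll product, spot-checks a few entries against the expanded matrix, confirms that the rightmost factor acts first on a column vector, and shows that reversing the factors changes the result. The sample angles are arbitrary.

```python
import math

def rot_x(t):
    c, s = math.cos(t), math.sin(t)
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]

def rot_y(t):
    c, s = math.cos(t), math.sin(t)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]

def rot_z(t):
    c, s = math.cos(t), math.sin(t)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def matmul(a, b):
    """Product of two 3x3 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]

def apply(m, v):
    """Pre-multiply the column vector v by the 3x3 matrix m."""
    return [sum(m[i][k] * v[k] for k in range(3)) for i in range(3)]

alpha, beta, gamma = math.radians(30), math.radians(20), math.radians(10)  # yaw, pitch, roll

# R = Rz(alpha) Ry(beta) Rx(gamma)
R = matmul(rot_z(alpha), matmul(rot_y(beta), rot_x(gamma)))

# Spot-check entries against the expanded matrix.
print(R[2][0], -math.sin(beta))
print(R[0][0], math.cos(alpha) * math.cos(beta))

# Right-to-left order: Rx acts first, then Ry, then Rz.
v = [1.0, 2.0, 3.0]
step_by_step = apply(rot_z(alpha), apply(rot_y(beta), apply(rot_x(gamma), v)))
print(apply(R, v), step_by_step)

# Reversing the factors gives a different rotation.
R_reversed = matmul(rot_x(gamma), matmul(rot_y(beta), rot_z(alpha)))
print(max(abs(R[i][j] - R_reversed[i][j]) for i in range(3) for j in range(3)))
```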

Conversion from rotation matrix to axis–angle

Every rotation in three dimensions is defined by its axis (a vector along this axis is unchanged by the rotation) and its angle, the amount of rotation about that axis (Euler rotation theorem).

There are several methods to compute the axis and angle from a rotation matrix (see also axis–angle representation). Here, we only describe the method based on the computation of the eigenvectors and eigenvalues of the rotation matrix. It is also possible to use the trace of the rotation matrix.

Determining the axis

Given a 3 × 3 rotation matrix R, a vector u parallel to the rotation axis must satisfy

: R\mathbf u = \mathbf u,

since the rotation of u around the rotation axis must result in u. The equation above may be solved for u, which is unique up to a scalar factor unless R = I.

Further, the equation may be rewritten

: R\mathbf u = I\mathbf u \implies (R - I)\mathbf u = \mathbf 0,

which shows that u lies in the null space of R − I.

Viewed in another way, u is an eigenvector of R corresponding to the eigenvalue λ = 1. Every rotation matrix must have this eigenvalue, the other two eigenvalues being complex conjugates of each other. It follows that a general rotation matrix in three dimensions has, up to a multiplicative constant, only one real eigenvector.

One way to determine the rotation axis is by showing that:

: \mathbf 0 = R^\mathsf{T}\mathbf 0 + \mathbf 0 = R^\mathsf{T}(R - I)\mathbf u + (R - I)\mathbf u = (R^\mathsf{T}R - R^\mathsf{T} + R - I)\mathbf u = (I - R^\mathsf{T} + R - I)\mathbf u = (R - R^\mathsf{T})\mathbf u.

Since R − Rᵀ is a skew-symmetric matrix, we can choose u such that

: [\mathbf u]_{\times} = R - R^\mathsf{T}.

The matrix–vector product becomes a cross product of a vector with itself, ensuring that the result is zero:

: (R - R^\mathsf{T})\mathbf u = [\mathbf u]_{\times}\mathbf u = \mathbf u \times \mathbf u = \mathbf 0.

Therefore, if

: R = \begin{bmatrix} a & b & c \\ d & e & f \\ g & h & i \end{bmatrix},

then

: \mathbf u = \begin{bmatrix} h - f \\ c - g \\ d - b \end{bmatrix}.

The magnitude of u computed this way is ‖u‖ = 2 sin θ, where θ is the angle of rotation.

This does not work if R is symmetric, which happens exactly when sin θ = 0, that is, for θ = 0 or θ = 180°. Above, if R − Rᵀ is zero, then all subsequent steps are invalid. In this case, it is necessary to diagonalize R and find the eigenvector corresponding to an eigenvalue of 1.
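A minimal sketch of the skew-symmetric shortcut, assuming a 3 × 3 matrix stored as nested Python lists; `axis_from_matrix` is a hypothetical helper name. It is applied here to the cyclic permutation matrix, which rotates through 120° about the line x = y = z.

```python
import math

def axis_from_matrix(r):
    """Unnormalized rotation axis u = (h - f, c - g, d - b), i.e. the u with [u]_x = R - R^T.

    Its length is 2 sin(theta). It vanishes when R is symmetric (theta = 0 or
    180 degrees); an eigenvector for eigenvalue 1 must then be found instead.
    """
    (a, b, c), (d, e, f), (g, h, i) = r
    return (h - f, c - g, d - b)

def apply(m, v):
    """Pre-multiply the column vector v by the 3x3 matrix m."""
    return tuple(sum(m[i][k] * v[k] for k in range(3)) for i in range(3))

# Cyclic permutation matrix: a 120-degree turn about (1, 1, 1).
P = [[0, 0, 1], [1, 0, 0], [0, 1, 0]]
u = axis_from_matrix(P)            # (1, 1, 1)
length = math.sqrt(sum(x * x for x in u))

print(u, apply(P, u))              # the axis is left unchanged by P
print(length, 2 * math.sin(math.radians(120)))
```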

Determining the angle

To find the angle of a rotation, once the axis of the rotation is known, select a vector v perpendicular to the axis. Then the angle of the rotation is the angle between v and Rv.

A more direct method, however, is to simply calculate the trace: the sum of the diagonal elements of the rotation matrix. Care should be taken to select the right sign for the angle θ to match the chosen axis:

: \operatorname{tr}(R) = 1 + 2\cos\theta,

from which it follows that the angle's absolute value is

: |\theta| = \arccos\left(\frac{\operatorname{tr}(R) - 1}{2}\right).
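The trace formula is a one-liner; a sketch is below. As a variation (our own combination, not a method stated above), the second function also uses ‖u‖ = 2 sin θ from the axis computation, so that θ = atan2(‖u‖, tr(R) − 1); the two-argument form tends to be better conditioned than arccos near 0° and 180°. Both helper names are hypothetical, and the test matrix is the 3 × 3 example with rows (0.36, 0.48, −0.80), (−0.80, 0.60, 0.00), (0.48, 0.64, 0.60).

```python
import math

def angle_from_trace(r):
    """|theta| from tr(R) = 1 + 2 cos(theta); the cosine is clamped to [-1, 1] against round-off."""
    cos_theta = (r[0][0] + r[1][1] + r[2][2] - 1.0) / 2.0
    return math.acos(max(-1.0, min(1.0, cos_theta)))

def angle_from_trace_and_axis(r):
    """theta in [0, pi] as atan2(|u|, tr(R) - 1), using |u| = 2 sin(theta) with u = (h - f, c - g, d - b)."""
    (a, b, c), (d, e, f), (g, h, i) = r
    u_norm = math.sqrt((h - f) ** 2 + (c - g) ** 2 + (d - b) ** 2)
    return math.atan2(u_norm, a + e + i - 1.0)

Q = [[0.36, 0.48, -0.80], [-0.80, 0.60, 0.00], [0.48, 0.64, 0.60]]
print(math.degrees(angle_from_trace(Q)))           # about 73.74
print(math.degrees(angle_from_trace_and_axis(Q)))  # about 73.74
```

Both functions return the magnitude only; the sign is carried by the direction chosen for the axis.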

Rotation matrix from axis and angle

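The matrix of a proper rotation R by angle θ around the axis u = (u_x, u_y, u_z), a unit vector with u_x² + u_y² + u_z² = 1, is given by:

: R = \begin{bmatrix} \cos\theta + u_x^2(1 - \cos\theta) & u_x u_y(1 - \cos\theta) - u_z\sin\theta & u_x u_z(1 - \cos\theta) + u_y\sin\theta \\ u_y u_x(1 - \cos\theta) + u_z\sin\theta & \cos\theta + u_y^2(1 - \cos\theta) & u_y u_z(1 - \cos\theta) - u_x\sin\theta \\ u_z u_x(1 - \cos\theta) - u_y\sin\theta & u_z u_y(1 - \cos\theta) + u_x\sin\theta & \cos\theta + u_z^2(1 - \cos\theta) \end{bmatrix}.

A derivation of this matrix from first principles can be found in section 9.2 here. The basic idea to derive this matrix is dividing the problem into a few known simple steps.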

# First rotate the given axis and the point such that the axis lies in one of the coordinate planes (xy, yz or zx).

# Then rotate the given axis and the point such that the axis is aligned with one of the two coordinate axes for that particular coordinate plane (x, y or z).

# Use one of the fundamental rotation matrices to rotate the point depending on the coordinate axis with which the rotation axis is aligned.

# Reverse-rotate the axis–point pair so that it returns to the configuration it had before step 2 (undoing step 2).

# Reverse-rotate the axis–point pair to undo the rotation performed in step 1 (undoing step 1).

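This can be written more concisely as

: R = (\cos\theta)\, I + (\sin\theta)\, [\mathbf u]_{\times} + (1 - \cos\theta)\, (\mathbf u \otimes \mathbf u),

where [u]× is the cross product matrix of u; the expression u ⊗ u is the outer product, and I is the identity matrix. Alternatively, the matrix entries are:

: R_{jk} = \begin{cases} \cos^2\frac{\theta}{2} + \sin^2\frac{\theta}{2}\left(2u_j^2 - 1\right), & \text{if } j = k, \\ 2u_j u_k \sin^2\frac{\theta}{2} - \varepsilon_{jkl}\, u_l \sin\theta, & \text{if } j \neq k, \end{cases}

where ε_{jkl} is the Levi-Civita symbol with ε_{123} = 1 (summed over the repeated index l). This is a matrix form of Rodrigues' rotation formula (or the equivalent, differently parametrized Euler–Rodrigues formula) with

[Note that

: \mathbf u \otimes \mathbf u = \mathbf u \mathbf u^\mathsf{T} = [\mathbf u]_{\times}^2 + I,

so that, in Rodrigues' notation, equivalently,

: R = I + (\sin\theta)\, [\mathbf u]_{\times} + (1 - \cos\theta)\, [\mathbf u]_{\times}^2.]

: \mathbf u \otimes \mathbf u = \mathbf u \mathbf u^\mathsf{T} = \begin{bmatrix} u_x^2 & u_x u_y & u_x u_z \\ u_x u_y & u_y^2 & u_y u_z \\ u_x u_z & u_y u_z & u_z^2 \end{bmatrix}, \qquad [\mathbf u]_{\times} = \begin{bmatrix} 0 & -u_z & u_y \\ u_z & 0 & -u_x \\ -u_y & u_x & 0 \end{bmatrix}.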

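In ℝ³ the rotation of a vector x around the axis u by an angle θ can be written as:

: R_{\mathbf u}(\theta)\,\mathbf x = \mathbf u(\mathbf u \cdot \mathbf x) + \cos(\theta)\,(\mathbf u \times \mathbf x) \times \mathbf u + \sin(\theta)\,(\mathbf u \times \mathbf x).

If the 3D space is right-handed and θ > 0, this rotation will be counterclockwise when u points towards the observer (right-hand rule). Explicitly, with (α, β, u) a right-handed orthonormal basis,

: R_{\mathbf u}(\theta)\,\alpha = \cos(\theta)\,\alpha + \sin(\theta)\,\beta, \qquad R_{\mathbf u}(\theta)\,\beta = -\sin(\theta)\,\alpha + \cos(\theta)\,\beta, \qquad R_{\mathbf u}(\theta)\,\mathbf u = \mathbf u.

Note the striking ''merely apparent differences'' from the ''equivalent'' Lie-algebraic formulation below.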
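Both the matrix form and the vector form of Rodrigues' formula can be sketched in a few lines of plain Python. The helper names `axis_angle_to_matrix` and `rotate_vector` are our own, and the axis is assumed to be already normalized. As a consistency check, a 120° turn about (1, 1, 1)/√3 should reproduce the cyclic permutation matrix [[0, 0, 1], [1, 0, 0], [0, 1, 0]].

```python
import math

def axis_angle_to_matrix(u, theta):
    """R = cos(theta) I + sin(theta) [u]_x + (1 - cos(theta)) u u^T, for a unit axis u."""
    ux, uy, uz = u
    c, s = math.cos(theta), math.sin(theta)
    t = 1.0 - c
    return [
        [c + ux * ux * t,      ux * uy * t - uz * s, ux * uz * t + uy * s],
        [uy * ux * t + uz * s, c + uy * uy * t,      uy * uz * t - ux * s],
        [uz * ux * t - uy * s, uz * uy * t + ux * s, c + uz * uz * t],
    ]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0])

def rotate_vector(u, theta, x):
    """Vector form: u (u . x) + cos(theta) (u x x) x u + sin(theta) (u x x)."""
    dot = sum(ui * xi for ui, xi in zip(u, x))
    u_cross_x = cross(u, x)
    back = cross(u_cross_x, u)
    return tuple(u[k] * dot + math.cos(theta) * back[k] + math.sin(theta) * u_cross_x[k]
                 for k in range(3))

n = 1 / math.sqrt(3)
axis = (n, n, n)
R = axis_angle_to_matrix(axis, math.radians(120))
print(R)                                                   # approximately the permutation matrix
print(rotate_vector(axis, math.radians(120), (1.0, 2.0, 3.0)))  # approximately (3, 1, 2)
```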

Properties

For any n-dimensional rotation matrix R acting on ℝⁿ,

: R^\mathsf{T} = R^{-1}

(the rotation is an orthogonal matrix).

It follows that:

: \det R = \pm 1

A rotation is termed proper if det R = 1, and improper (or a roto-reflection) if det R = −1. For even dimensions n = 2k, the n eigenvalues λ of a proper rotation occur as pairs of complex conjugates of modulus 1: λ = e^(±iθ_j) for j = 1, …, k, which is real only for λ = ±1. Therefore, there may be no vectors fixed by the rotation (λ = 1), and thus no axis of rotation. Any fixed eigenvectors occur in pairs, and the axis of rotation is an even-dimensional subspace.

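For odd dimensions n = 2k + 1, a proper rotation R will have an odd number of eigenvalues, with at least one λ = 1, and the axis of rotation will be an odd-dimensional subspace. Proof:

: \det(R - I) = \det(R^\mathsf{T})\det(R - I) = \det(R^\mathsf{T}R - R^\mathsf{T}) = \det(I - R^\mathsf{T}) = \det(I - R) = (-1)^n \det(R - I) = -\det(R - I).

Here I is the identity matrix, and we use det(Rᵀ) = det(R) = 1, as well as (−1)ⁿ = −1 since n is odd. Therefore, det(R − I) = 0, meaning there is a nonzero vector v with (R − I)v = 0, that is Rv = v, a fixed eigenvector. There may also be pairs of fixed eigenvectors in the even-dimensional subspace orthogonal to v, so the total dimension of fixed eigenvectors is odd.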

For example, in 2-space (n = 2), a rotation by angle θ has eigenvalues λ = e^(iθ) and λ = e^(−iθ), so there is no axis of rotation except when θ = 0, the case of the null rotation. In 3-space (n = 3), the axis of a non-null proper rotation is always a unique line, and a rotation around this axis by angle θ has eigenvalues λ = 1, e^(iθ), e^(−iθ). In 4-space (n = 4), the four eigenvalues are of the form e^(±iθ), e^(±iφ). The null rotation has θ = φ = 0. The case of θ = 0, φ ≠ 0 is called a ''simple rotation'', with two unit eigenvalues forming an ''axis plane'', and a two-dimensional rotation orthogonal to the axis plane. Otherwise, there is no axis plane. The case of θ = φ is called an ''isoclinic rotation'', having eigenvalues e^(±iθ) repeated twice, so every vector is rotated through an angle θ.

The trace of a rotation matrix is equal to the sum of its eigenvalues. For n = 2, a rotation by angle θ has trace 2 cos θ. For n = 3, a rotation around any axis by angle θ has trace 1 + 2 cos θ. For n = 4, the trace is 2(cos θ + cos φ), which becomes 4 cos θ for an isoclinic rotation.

Examples

* The 2 × 2 rotation matrix

:: Q = \begin{bmatrix} 0 & 1 \\ -1 & 0 \end{bmatrix}

: corresponds to a 90° planar rotation clockwise about the origin.

* The transpose of the 2 × 2 matrix

:: M = \begin{bmatrix} 0.936 & 0.352 \\ 0.352 & -0.936 \end{bmatrix}

: is its inverse, but since its determinant is −1, this is not a proper rotation matrix; it is a reflection across the line 11y = 2x.

* The 3 × 3 rotation matrix

:: Q = \begin{bmatrix} 1 & 0 & 0 \\ 0 & \frac{\sqrt{3}}{2} & \frac{1}{2} \\ 0 & -\frac{1}{2} & \frac{\sqrt{3}}{2} \end{bmatrix}

: corresponds to a −30° rotation around the x-axis in three-dimensional space.

* The 3 × 3 rotation matrix

:: Q = \begin{bmatrix} 0.36 & 0.48 & -0.80 \\ -0.80 & 0.60 & 0.00 \\ 0.48 & 0.64 & 0.60 \end{bmatrix}

: corresponds to a rotation of approximately −74° around the axis (−1/2, 1, 1) in three-dimensional space.

* The 3 × 3 permutation matrix

:: P = \begin{bmatrix} 0 & 0 & 1 \\ 1 & 0 & 0 \\ 0 & 1 & 0 \end{bmatrix}

: is a rotation matrix, as is the matrix of any even permutation, and rotates through 120° about the axis x = y = z.

* The 3 × 3 matrix

:: M = \begin{bmatrix} 3 & -4 & 1 \\ 5 & 3 & -7 \\ -9 & 2 & 6 \end{bmatrix}

: has determinant +1, but is not orthogonal (its transpose is not its inverse), so it is not a rotation matrix.

* The 4 × 3 matrix

:: M = \begin{bmatrix} 0.5 & -0.1 & 0.7 \\ 0.1 & 0.5 & -0.5 \\ -0.7 & 0.5 & 0.5 \\ -0.5 & -0.7 & -0.1 \end{bmatrix}

: is not square, and so cannot be a rotation matrix; yet MᵀM yields a 3 × 3 identity matrix (the columns are orthonormal).

* The 4 × 4 matrix

:: Q = -I = \begin{bmatrix} -1 & 0 & 0 & 0 \\ 0 & -1 & 0 & 0 \\ 0 & 0 & -1 & 0 \\ 0 & 0 & 0 & -1 \end{bmatrix}

: describes an isoclinic rotation in four dimensions, a rotation through equal angles (180°) through two orthogonal planes.

* The 5 × 5 rotation matrix

:: Q = \begin{bmatrix} 0 & -1 & 0 & 0 & 0 \\ 1 & 0 & 0 & 0 & 0 \\ 0 & 0 & -1 & 0 & 0 \\ 0 & 0 & 0 & -1 & 0 \\ 0 & 0 & 0 & 0 & 1 \end{bmatrix}

: rotates vectors in the plane of the first two coordinate axes 90°, rotates vectors in the plane of the next two axes 180°, and leaves the last coordinate axis unmoved.
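Several of these examples can be verified mechanically. The sketch below (plain Python, nested lists, helper names of our own choosing) checks that the cyclic permutation matrix is orthogonal with determinant 1 and trace 0 (hence a 120° rotation), that the integer 3 × 3 matrix has determinant +1 yet is not orthogonal, and that the 4 × 3 matrix has orthonormal columns.

```python
import math

def transpose(m):
    return [list(col) for col in zip(*m)]

def matmul(a, b):
    """Product of an (r x n) and an (n x c) matrix stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]

def det3(m):
    """Determinant of a 3x3 matrix by cofactor expansion along the first row."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)

def is_identity(m, tol=1e-9):
    return all(abs(m[i][j] - (1 if i == j else 0)) <= tol
               for i in range(len(m)) for j in range(len(m[0])))

P = [[0, 0, 1], [1, 0, 0], [0, 1, 0]]        # cyclic permutation matrix
M = [[3, -4, 1], [5, 3, -7], [-9, 2, 6]]     # determinant +1 but not orthogonal
N = [[0.5, -0.1, 0.7], [0.1, 0.5, -0.5],     # 4 x 3 with orthonormal columns
     [-0.7, 0.5, 0.5], [-0.5, -0.7, -0.1]]

print(det3(P), is_identity(matmul(transpose(P), P)))   # 1 True
trace_P = P[0][0] + P[1][1] + P[2][2]
print(math.degrees(math.acos((trace_P - 1) / 2)))      # about 120
print(det3(M), is_identity(matmul(transpose(M), M)))   # 1 False
print(is_identity(matmul(transpose(N), N)))            # True
```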

Geometry

In Euclidean geometry, a rotation is an example of an isometry, a transformation that moves points without changing the distances between them. Rotations are distinguished from other isometries by two additional properties: they leave (at least) one point fixed, and they leave "handedness" unchanged. In contrast, a translation moves every point, a reflection exchanges left- and right-handed ordering, a glide reflection does both, and an improper rotation combines a change in handedness with a normal rotation.

If a fixed point is taken as the origin of a Cartesian coordinate system, then every point can be given coordinates as a displacement from the origin. Thus one may work with the vector space of displacements instead of the points themselves. Now suppose (p_1, …, p_n) are the coordinates of the vector p from the origin O to point P. Choose an orthonormal basis for our coordinates; then the squared distance to P, by Pythagoras, is

: d^2(O, P) = \|\mathbf p\|^2 = \sum_{r=1}^{n} p_r^2,

which can be computed using the matrix multiplication

: \|\mathbf p\|^2 = \begin{bmatrix} p_1 & \cdots & p_n \end{bmatrix} \begin{bmatrix} p_1 \\ \vdots \\ p_n \end{bmatrix} = \mathbf p^\mathsf{T}\mathbf p.

A geometric rotation transforms lines to lines, and preserves ratios of distances between points. From these properties it can be shown that a rotation is a linear transformation of the vectors, and thus can be written in matrix form, Qp. The fact that a rotation preserves, not just ratios, but distances themselves, is stated as

: \mathbf p^\mathsf{T}\mathbf p = (Q\mathbf p)^\mathsf{T}(Q\mathbf p),

or

: \mathbf p^\mathsf{T} I \mathbf p = (\mathbf p^\mathsf{T} Q^\mathsf{T})(Q\mathbf p).

Because this equation holds for all vectors p, and QᵀQ − I is symmetric (a symmetric matrix whose quadratic form vanishes for every p must be zero), one concludes that every rotation matrix Q satisfies the orthogonality condition,

: Q^\mathsf{T} Q = I.

Rotations preserve handedness because they cannot change the ordering of the axes, which implies the special matrix condition,

: \det Q = +1.

Equally important, it can be shown that any matrix satisfying these two conditions acts as a rotation.

Multiplication

The inverse of a rotation matrix is its transpose, which is also a rotation matrix:

: (Q^\mathsf{T})^\mathsf{T}(Q^\mathsf{T}) = QQ^\mathsf{T} = I, \qquad \det Q^\mathsf{T} = \det Q = +1.

The product of two rotation matrices is a rotation matrix:

: (Q_1 Q_2)^\mathsf{T}(Q_1 Q_2) = Q_2^\mathsf{T}(Q_1^\mathsf{T} Q_1) Q_2 = I, \qquad \det(Q_1 Q_2) = (\det Q_1)(\det Q_2) = +1.

For n > 2, multiplication of n × n rotation matrices is generally not commutative:

: Q_1 Q_2 \neq Q_2 Q_1.

Noting that any identity matrix is a rotation matrix, and that matrix multiplication is associative, we may summarize all these properties by saying that the n × n rotation matrices form a group, which for n > 2 is non-abelian, called a special orthogonal group and denoted by SO(n), SO(n, ℝ), SOₙ, or SOₙ(ℝ). The group of n × n rotation matrices is isomorphic to the group of rotations in an n-dimensional space. This means that multiplication of rotation matrices corresponds to composition of rotations; acting on column vectors, the rotations are applied in right-to-left order of their corresponding matrices.
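The group properties can be illustrated numerically for n = 3. This sketch (plain Python; the helpers and the sample angles are our own choices) forms a product of two basic rotations and checks that it is again orthogonal with determinant +1, that its transpose is its inverse, and that swapping the factors changes the product.

```python
import math

def rot_x(t):
    c, s = math.cos(t), math.sin(t)
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]

def rot_z(t):
    c, s = math.cos(t), math.sin(t)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]

def transpose(m):
    return [list(col) for col in zip(*m)]

def det3(m):
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)

def close(a, b, tol=1e-12):
    return all(abs(a[i][j] - b[i][j]) <= tol for i in range(3) for j in range(3))

I3 = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
Q1, Q2 = rot_z(math.radians(35)), rot_x(math.radians(-60))
Q = matmul(Q1, Q2)

closed = close(matmul(transpose(Q), Q), I3) and abs(det3(Q) - 1.0) < 1e-12  # product is a rotation
inverse_is_transpose = close(matmul(Q, transpose(Q)), I3)                 # Q^T = Q^-1
non_abelian = not close(matmul(Q1, Q2), matmul(Q2, Q1))                   # order matters for n > 2
print(closed, inverse_is_transpose, non_abelian)
```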

Ambiguities

The interpretation of a rotation matrix can be subject to many ambiguities.

In most cases the effect of the ambiguity is equivalent to the effect of a rotation matrix inversion (for these orthogonal matrices, equivalently, matrix transpose).

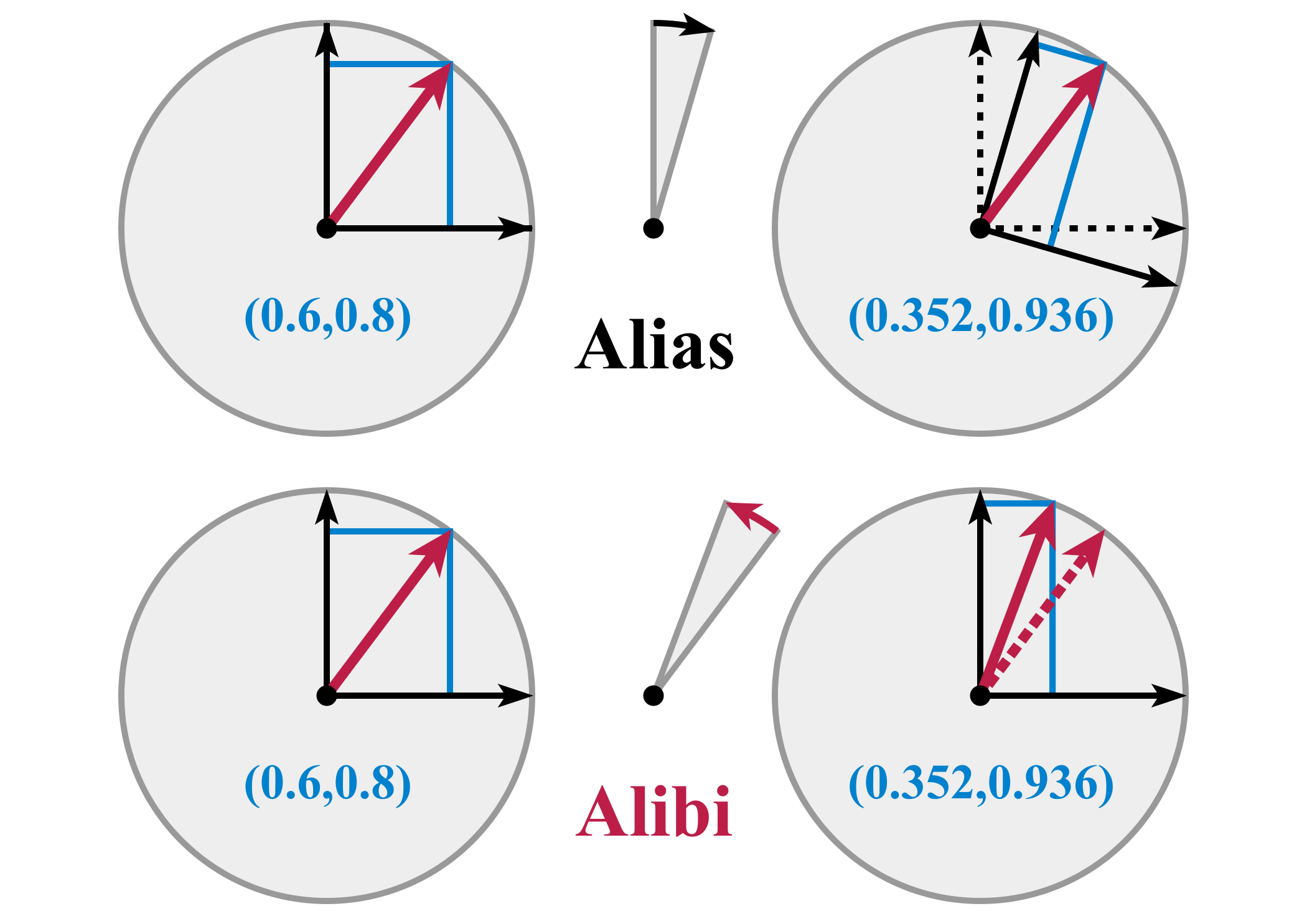

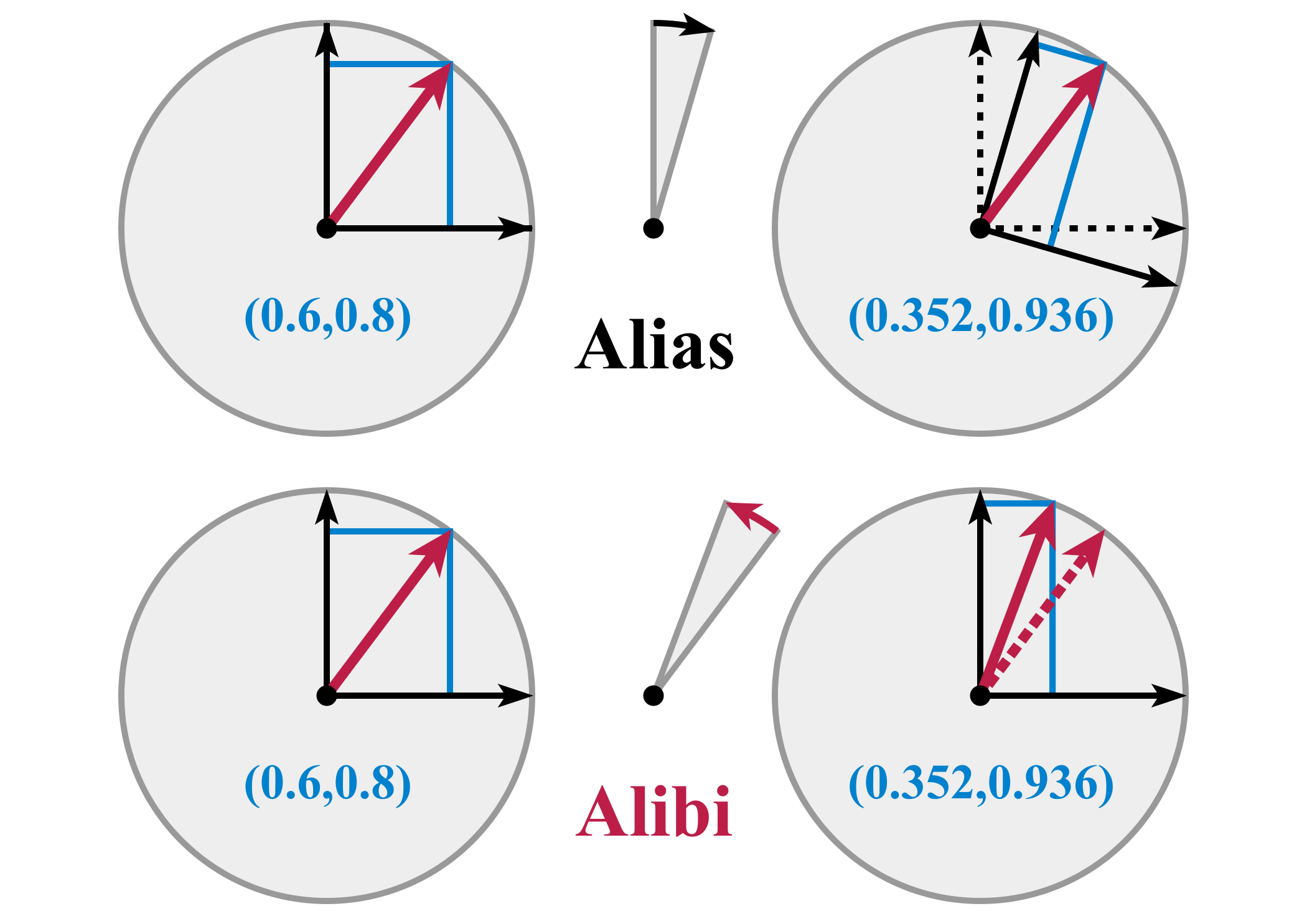

; Alias or alibi (passive or active) transformation

: The coordinates of a point P may change due to either a rotation of the coordinate system CS (alias), or a rotation of the point P (alibi). In the latter case, the rotation of P also produces a rotation of the vector v representing P. In other words, either P and v are fixed while CS rotates (alias), or CS is fixed while P and v rotate (alibi). Any given rotation can be legitimately described both ways, as vectors and coordinate systems actually rotate with respect to each other, about the same axis but in opposite directions. Throughout this article, we chose the alibi approach to describe rotations. For instance,

:: R(\theta) = \begin{bmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{bmatrix}

: represents a counterclockwise rotation of a vector v by an angle θ, or a rotation of CS by the same angle but in the opposite direction (i.e. clockwise). Alibi and alias transformations are also known as active and passive transformations, respectively.

; Pre-multiplication or post-multiplication

: The same point P can be represented either by a column vector v or a row vector w. Rotation matrices can either pre-multiply column vectors (Rv), or post-multiply row vectors (wR). However, Rv produces a rotation in the opposite direction with respect to wR. Throughout this article, rotations produced on column vectors are described by means of a pre-multiplication. To obtain exactly the same rotation (i.e. the same final coordinates of point P), the equivalent row vector must be post-multiplied by the transpose of R (i.e. wRᵀ).

; Right- or left-handed coordinates

: The matrix and the vector can be represented with respect to a right-handed or left-handed coordinate system. Throughout the article, we assumed a right-handed orientation, unless otherwise specified.

; Vectors or forms

: The vector space has a dual space of linear forms, and the matrix can act on either vectors or forms.
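The pre- versus post-multiplication ambiguity is easy to see in a 2 × 2 sketch. The helper names `pre_multiply` and `post_multiply` are hypothetical; the point is that wRᵀ reproduces Rv, while wR rotates the other way.

```python
import math

theta = math.radians(30)
c, s = math.cos(theta), math.sin(theta)
R = [[c, -s], [s, c]]                                   # counterclockwise R(theta)
R_T = [[R[j][i] for j in range(2)] for i in range(2)]   # its transpose

def pre_multiply(m, v):
    """Matrix times column vector: m v."""
    return [sum(m[i][k] * v[k] for k in range(2)) for i in range(2)]

def post_multiply(w, m):
    """Row vector times matrix: w m."""
    return [sum(w[k] * m[k][j] for k in range(2)) for j in range(2)]

p = [1.0, 0.0]
column_result = pre_multiply(R, p)     # R v: counterclockwise, (cos 30°, sin 30°)
row_same = post_multiply(p, R_T)       # w R^T: the same rotation for a row vector
row_opposite = post_multiply(p, R)     # w R: the opposite sense, (cos 30°, -sin 30°)
print(column_result, row_same, row_opposite)
```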

Decompositions

Independent planes

Consider the 3 × 3 rotation matrix

: Q = \begin{bmatrix} 0.36 & 0.48 & -0.80 \\ -0.80 & 0.60 & 0.00 \\ 0.48 & 0.64 & 0.60 \end{bmatrix}.

If Q acts in a certain direction, v, purely as a scaling by a factor λ, then we have

: Q\mathbf v = \lambda\mathbf v,

so that

: \mathbf 0 = (\lambda I - Q)\mathbf v.

Thus λ is a root of the characteristic polynomial for Q,

: 0 = \det(\lambda I - Q) = \lambda^3 - \tfrac{39}{25}\lambda^2 + \tfrac{39}{25}\lambda - 1 = (\lambda - 1)\left(\lambda^2 - \tfrac{14}{25}\lambda + 1\right).

Two features are noteworthy. First, one of the roots (or eigenvalues) is 1, which tells us that some direction is unaffected by the matrix. For rotations in three dimensions, this is the ''axis'' of the rotation (in other dimensions a single fixed line need not exist). Second, the other two roots are a pair of complex conjugates, whose product is 1 (the constant term of the quadratic), and whose sum is 2 cos θ (the negated linear term). This factorization is of interest for 3 × 3 rotation matrices because the same thing occurs for all of them. (As special cases, for a null rotation the "complex conjugates" are both 1, and for a 180° rotation they are both −1.) Furthermore, a similar factorization holds for any n × n rotation matrix. If the dimension, n, is odd, there will be a "dangling" eigenvalue of 1; and for any dimension the rest of the polynomial factors into quadratic terms like the one here (with the two special cases noted). We are guaranteed that the characteristic polynomial will have degree n and thus n eigenvalues. And since a rotation matrix commutes with its transpose, it is a normal matrix, so it can be diagonalized (over the complex numbers). We conclude that every rotation matrix, when expressed in a suitable coordinate system, partitions into independent rotations of two-dimensional subspaces, at most n/2 of them.

The sum of the entries on the main diagonal of a matrix is called the trace; it does not change if we reorient the coordinate system, and always equals the sum of the eigenvalues. This has the convenient implication for 2 × 2 and 3 × 3 rotation matrices that the trace reveals the angle of rotation, $\theta$, in the two-dimensional space (or subspace). For a 2 × 2 matrix the trace is $2\cos\theta$, and for a 3 × 3 matrix it is $1 + 2\cos\theta$. In the three-dimensional case, the subspace consists of all vectors perpendicular to the rotation axis (the invariant direction, with eigenvalue 1). Thus we can extract from any 3 × 3 rotation matrix a rotation axis and an angle, and these completely determine the rotation.
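To make the trace argument concrete, here is a minimal sketch. It assumes Python 3 with NumPy, neither of which the article prescribes, and `angle_from_trace` and `axis_from_eigenvector` are hypothetical helper names. It takes the 3 × 3 example matrix $Q$ of this section (entries written out in the code), reads the angle off the trace via $\operatorname{tr} Q = 1 + 2\cos\theta$, and takes the axis as the eigenvector whose eigenvalue is 1.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; helper names are hypothetical.
# The 3x3 example rotation matrix Q from the text.
Q = np.array([[0.36, 0.48, -0.80],
              [-0.80, 0.60, 0.00],
              [0.48, 0.64, 0.60]])

def angle_from_trace(R):
    """Angle of a 3x3 rotation, using trace(R) = 1 + 2 cos(theta)."""
    c = (np.trace(R) - 1.0) / 2.0
    # Clip guards against round-off pushing |c| slightly above 1.
    return np.arccos(np.clip(c, -1.0, 1.0))

def axis_from_eigenvector(R):
    """Unit eigenvector for the eigenvalue closest to 1 (the fixed direction)."""
    eigvals, eigvecs = np.linalg.eig(R)
    k = np.argmin(np.abs(eigvals - 1.0))
    v = np.real(eigvecs[:, k])      # real for the real eigenvalue 1
    return v / np.linalg.norm(v)

theta = angle_from_trace(Q)         # cos(theta) = 7/25
axis = axis_from_eigenvector(Q)     # +-(1, -2, -2) / 3
print(np.degrees(theta), axis)
```

For this $Q$ the trace is 1.56, so $\cos\theta = 7/25$. The axis comes out as $\pm(1, -2, -2)/3$. The sign of an eigenvector is arbitrary, so only the direction is meaningful.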

Sequential angles

The constraints on a 2 × 2 rotation matrix imply that it must have the form

: $Q = \begin{bmatrix} a & -b \\ b & a \end{bmatrix}$

with $a^2 + b^2 = 1$. Therefore, we may set $a = \cos\theta$ and $b = \sin\theta$, for some angle $\theta$. To solve for $\theta$ it is not enough to look at $a$ alone or $b$ alone; we must consider both together to place the angle in the correct quadrant, using a two-argument arctangent function.

Now consider the first column of a 3 × 3 rotation matrix,

: $\begin{bmatrix} a \\ b \\ c \end{bmatrix}.$

Although $a^2 + b^2$ will generally not equal 1, but rather some value $r^2 < 1$, we can use a slight variation of the previous computation to find a so-called Givens rotation that transforms the column to

: $\begin{bmatrix} r \\ 0 \\ c \end{bmatrix},$

zeroing $b$. This acts on the subspace spanned by the $x$- and $y$-axes. We can then repeat the process for the $xz$-subspace to zero $c$. Acting on the full matrix, these two rotations produce the schematic form

: $Q_{xz} Q_{xy} Q = \begin{bmatrix} 1 & 0 & 0 \\ 0 & \ast & \ast \\ 0 & \ast & \ast \end{bmatrix}$

(the first column becomes a unit vector along $x$ because rotations preserve its length, and the first row then follows from orthogonality). Shifting attention to the second column, a Givens rotation of the $yz$-subspace can now zero the $z$ value. This brings the full matrix to the form

: $Q_{yz} Q_{xz} Q_{xy} Q = \begin{bmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix},$

which is an identity matrix. Thus we have decomposed $Q$ as

: $Q = Q_{xy}^{-1} Q_{xz}^{-1} Q_{yz}^{-1}.$

An $n \times n$ rotation matrix will have $(n - 1) + (n - 2) + \cdots + 2 + 1$, or

: $\sum_{k=1}^{n-1} k = \tfrac{1}{2} n (n - 1)$

entries below the diagonal to zero. We can zero them by extending the same idea of stepping through the columns with a series of rotations in a fixed sequence of planes. We conclude that the set of $n \times n$ rotation matrices, each of which has $n^2$ entries, can be parameterized by $\tfrac{1}{2} n (n - 1)$ angles.
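The column-by-column reduction can be sketched as follows. This assumes Python with NumPy, and `givens`, `sequential_angles` and `rebuild` are hypothetical names. Each step uses `np.arctan2` (the two-argument arctangent) so the angle lands in the correct quadrant. One angle is recorded per zeroed entry, giving $\tfrac{1}{2}n(n-1)$ angles in all.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; function names are hypothetical.
def givens(n, i, j, theta):
    """n x n rotation by theta in the plane of coordinate axes i and j."""
    G = np.eye(n)
    c, s = np.cos(theta), np.sin(theta)
    G[i, i] = G[j, j] = c
    G[i, j], G[j, i] = -s, s
    return G

def sequential_angles(Q):
    """Zero the below-diagonal entries of a rotation matrix column by column.

    Returns the (i, j, theta) steps, one per zeroed entry (n(n-1)/2 in total),
    together with the reduced matrix, which should be the identity.
    """
    n = Q.shape[0]
    A = np.array(Q, dtype=float)
    steps = []
    for col in range(n - 1):
        for row in range(col + 1, n):
            # atan2 places the angle in the correct quadrant.
            theta = np.arctan2(A[row, col], A[col, col])
            # Rotating by -theta moves (A[col, col], A[row, col]) onto the
            # col axis, which zeroes A[row, col].
            A = givens(n, col, row, -theta) @ A
            steps.append((col, row, theta))
    return steps, A

def rebuild(n, steps):
    """Undo the reduction: Q is the product of the inverse Givens rotations."""
    R = np.eye(n)
    for i, j, theta in steps:
        R = R @ givens(n, i, j, theta)
    return R
```

In three dimensions the planes are visited in the order $xy$, $xz$, $yz$. So `rebuild` multiplies the inverse rotations in the same order as $Q = Q_{xy}^{-1} Q_{xz}^{-1} Q_{yz}^{-1}$.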

In three dimensions this restates in matrix form an observation made by Euler, so mathematicians call the ordered sequence of three angles Euler angles. However, the situation is somewhat more complicated than we have so far indicated. Despite the small dimension, we actually have considerable freedom in the sequence of axis pairs we use; and we also have some freedom in the choice of angles. Thus we find many different conventions employed when three-dimensional rotations are parameterized for physics, or medicine, or chemistry, or other disciplines. When we include the option of world axes or body axes, 24 different sequences are possible. And while some disciplines call any sequence Euler angles, others give different names (Cardano, Tait–Bryan, roll-pitch-yaw) to different sequences.

One reason for the large number of options is that, as noted previously, rotations in three dimensions (and higher) do not commute. If we reverse a given sequence of rotations, we get a different outcome. This also implies that we cannot compose two rotations by adding their corresponding angles. Thus Euler angles are not vectors, despite a similarity in appearance as a triplet of numbers.
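Here is a small numerical illustration of non-commutativity. It assumes Python with NumPy, and `rot_x` and `rot_z` are hypothetical helpers that build the coordinate-axis rotations. The code composes two 90° rotations in both orders. It then checks that repeating a rotation sequence is not the same as doubling each of its angles.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; rot_x and rot_z are hypothetical helpers.
def rot_x(t):
    """Rotation by t radians about the x-axis."""
    c, s = np.cos(t), np.sin(t)
    return np.array([[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]])

def rot_z(t):
    """Rotation by t radians about the z-axis."""
    c, s = np.cos(t), np.sin(t)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

a = np.pi / 2
xz = rot_x(a) @ rot_z(a)   # rotate about z first, then about x
zx = rot_z(a) @ rot_x(a)   # rotate about x first, then about z
print(np.allclose(xz, zx))  # the two orders are expected to differ

# Applying the sequence (x, then z) twice versus doubling both angles.
twice = (rot_z(a) @ rot_x(a)) @ (rot_z(a) @ rot_x(a))
added = rot_z(2 * a) @ rot_x(2 * a)
print(np.allclose(twice, added))
```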

Nested dimensions

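A 3 × 3 rotation matrix such as

: $Q_{3 \times 3} = \begin{bmatrix} \cos\theta & -\sin\theta & 0 \\ \sin\theta & \cos\theta & 0 \\ 0 & 0 & 1 \end{bmatrix}$

suggests a 2 × 2 rotation matrix,

: $Q_{2 \times 2} = \begin{bmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{bmatrix},$

is embedded in the upper left corner:

: $Q_{3 \times 3} = \begin{bmatrix} Q_{2 \times 2} & \mathbf{0} \\ \mathbf{0}^\mathsf{T} & 1 \end{bmatrix}.$

This is no illusion; not just one, but many, copies of $n$-dimensional rotations are found within $(n + 1)$-dimensional rotations, as subgroups. Each embedding leaves one direction fixed, which in the case of 3 × 3 matrices is the rotation axis. For example, we have

: $Q_{\mathbf{x}}(\theta) = \begin{bmatrix} 1 & 0 & 0 \\ 0 & \cos\theta & -\sin\theta \\ 0 & \sin\theta & \cos\theta \end{bmatrix}, \quad Q_{\mathbf{y}}(\theta) = \begin{bmatrix} \cos\theta & 0 & \sin\theta \\ 0 & 1 & 0 \\ -\sin\theta & 0 & \cos\theta \end{bmatrix}, \quad Q_{\mathbf{z}}(\theta) = \begin{bmatrix} \cos\theta & -\sin\theta & 0 \\ \sin\theta & \cos\theta & 0 \\ 0 & 0 & 1 \end{bmatrix},$

fixing the $x$-axis, the $y$-axis, and the $z$-axis, respectively. The rotation axis need not be a coordinate axis; if $\mathbf{u} = (x, y, z)$ is a unit vector in the desired direction, then

: $\begin{aligned} Q_{\mathbf{u}}(\theta) &= \begin{bmatrix} 0 & -z & y \\ z & 0 & -x \\ -y & x & 0 \end{bmatrix} \sin\theta + (I - \mathbf{u}\mathbf{u}^\mathsf{T})\cos\theta + \mathbf{u}\mathbf{u}^\mathsf{T} \\ &= \begin{bmatrix} (1 - x^2)c_\theta + x^2 & -z s_\theta - xy c_\theta + xy & y s_\theta - xz c_\theta + xz \\ z s_\theta - xy c_\theta + xy & (1 - y^2)c_\theta + y^2 & -x s_\theta - yz c_\theta + yz \\ -y s_\theta - xz c_\theta + xz & x s_\theta - yz c_\theta + yz & (1 - z^2)c_\theta + z^2 \end{bmatrix}, \end{aligned}$

where $c_\theta = \cos\theta$, $s_\theta = \sin\theta$, is a rotation by angle $\theta$ leaving axis $\mathbf{u}$ fixed.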
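Here is a minimal sketch of the general-axis formula. It assumes Python with NumPy, and `cross_matrix` and `rotation_about_axis` are hypothetical names. It implements $Q_{\mathbf{u}}(\theta) = [\mathbf{u}]_\times \sin\theta + (I - \mathbf{u}\mathbf{u}^\mathsf{T})\cos\theta + \mathbf{u}\mathbf{u}^\mathsf{T}$ directly. It normalizes the axis first, since the formula assumes a unit vector.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; function names are hypothetical.
def cross_matrix(u):
    """Skew-symmetric matrix [u]_x, so that cross_matrix(u) @ v == np.cross(u, v)."""
    x, y, z = u
    return np.array([[0.0, -z, y], [z, 0.0, -x], [-y, x, 0.0]])

def rotation_about_axis(u, theta):
    """Q_u(theta) = [u]_x sin(theta) + (I - u u^T) cos(theta) + u u^T."""
    u = np.asarray(u, dtype=float)
    u = u / np.linalg.norm(u)       # the formula assumes a unit axis
    uuT = np.outer(u, u)
    return (cross_matrix(u) * np.sin(theta)
            + (np.eye(3) - uuT) * np.cos(theta)
            + uuT)
```

With $\mathbf{u} = (0, 0, 1)$ this reproduces $Q_{\mathbf{z}}(\theta)$. With $\mathbf{u} = (1, -2, -2)/3$ and $\cos\theta = 7/25$ it reproduces the example matrix from the independent-planes discussion.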

A direction in $(n + 1)$-dimensional space will be a unit magnitude vector, which we may consider a point on a generalized sphere, $S^n$. Thus it is natural to describe the rotation group SO(n + 1) as combining SO(n) and $S^n$. A suitable formalism is the fiber bundle,

: $SO(n) \hookrightarrow SO(n + 1) \to S^n,$

where for every direction in the base space, $S^n$, the fiber over it in the total space, SO(n + 1), is a copy of the fiber space, SO(n), namely the rotations that keep that direction fixed.

Thus we can build an $n \times n$ rotation matrix by starting with a 2 × 2 matrix, aiming its fixed axis on $S^2$ (the ordinary sphere in three-dimensional space), aiming the resulting rotation on $S^3$, and so on up through $S^{n-1}$. A point on $S^k$ can be selected using $k$ numbers, so we again have $1 + 2 + \cdots + (n - 1) = \tfrac{1}{2} n (n - 1)$ numbers to describe any $n \times n$ rotation matrix.

In fact, we can view the sequential angle decomposition, discussed previously, as reversing this process. The composition of $n - 1$ Givens rotations brings the first column (and row) to $(1, 0, \ldots, 0)$, so that the remainder of the matrix is a rotation matrix of dimension one less, embedded so as to leave $(1, 0, \ldots, 0)$ fixed.

Skew parameters via Cayley's formula

When an $n \times n$ rotation matrix $Q$ does not include a −1 eigenvalue, so that none of the planar rotations which it comprises are 180° rotations, then $Q + I$ is an invertible matrix. Most rotation matrices fit this description, and for them it can be shown that $(Q - I)(Q + I)^{-1}$ is a skew-symmetric matrix, $A$. Thus $A^\mathsf{T} = -A$; and since the diagonal is necessarily zero, and since the upper triangle determines the lower one, $A$ contains $\tfrac{1}{2} n (n - 1)$ independent numbers.

Conveniently, $I - A$ is invertible whenever $A$ is skew-symmetric (the eigenvalues of a real skew-symmetric matrix are purely imaginary, so 1 is never one of them); thus we can recover the original matrix using the ''Cayley transform'',

: $A \mapsto (I + A)(I - A)^{-1},$

which maps any skew-symmetric matrix $A$ to a rotation matrix. In fact, aside from the noted exceptions, we can produce any rotation matrix in this way. Although in practical applications we can hardly afford to ignore 180° rotations, the Cayley transform is still a potentially useful tool, giving a parameterization of most rotation matrices without trigonometric functions.

In three dimensions, for example, we have

: $\begin{bmatrix} 0 & -z & y \\ z & 0 & -x \\ -y & x & 0 \end{bmatrix} \mapsto \frac{1}{1 + x^2 + y^2 + z^2} \begin{bmatrix} 1 + x^2 - y^2 - z^2 & 2xy - 2z & 2y + 2xz \\ 2xy + 2z & 1 - x^2 + y^2 - z^2 & 2yz - 2x \\ 2xz - 2y & 2x + 2yz & 1 - x^2 - y^2 + z^2 \end{bmatrix}.$

If we condense the skew entries into a vector, $(x, y, z)$, then we produce a 90° rotation around the $x$-axis for (1, 0, 0), around the $y$-axis for (0, 1, 0), and around the $z$-axis for (0, 0, 1). The 180° rotations are just out of reach; for, in the limit as $x \to \infty$, $(x, 0, 0)$ does approach a 180° rotation around the $x$ axis, and similarly for other directions.
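The Cayley transform and its inverse are easy to sketch numerically. The following assumes Python with NumPy, and `skew`, `cayley` and `inverse_cayley` are hypothetical names. Explicit inverses are used for clarity rather than efficiency.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; function names are hypothetical.
def skew(x, y, z):
    """Skew-symmetric 3x3 matrix with the entries used in the text."""
    return np.array([[0.0, -z, y], [z, 0.0, -x], [-y, x, 0.0]])

def cayley(A):
    """Cayley transform A -> (I + A)(I - A)^{-1} of a skew-symmetric A."""
    I = np.eye(A.shape[0])
    return (I + A) @ np.linalg.inv(I - A)

def inverse_cayley(Q):
    """A = (Q - I)(Q + I)^{-1}; requires Q to have no eigenvalue -1."""
    I = np.eye(Q.shape[0])
    return (Q - I) @ np.linalg.inv(Q + I)
```

Feeding in $(1, 0, 0)$ gives the 90° rotation about the $x$-axis. A very large $x$ gives a matrix close to, but never equal to, the 180° rotation $\operatorname{diag}(1, -1, -1)$.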

Decomposition into shears

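For the 2D case, a rotation matrix can be decomposed into three shear matrices:

: $R(\theta) = \begin{bmatrix} 1 & -\tan\frac{\theta}{2} \\ 0 & 1 \end{bmatrix} \begin{bmatrix} 1 & 0 \\ \sin\theta & 1 \end{bmatrix} \begin{bmatrix} 1 & -\tan\frac{\theta}{2} \\ 0 & 1 \end{bmatrix}$

This is useful, for instance, in computer graphics, since shears can be implemented with fewer multiplication instructions than rotating a bitmap directly. On modern computers, this may not matter, but it can be relevant for very old or low-end microprocessors.

A rotation can also be written as two shears and a scaling:

: $R(\theta) = \begin{bmatrix} 1 & 0 \\ \tan\theta & 1 \end{bmatrix} \begin{bmatrix} 1 & -\sin\theta\cos\theta \\ 0 & 1 \end{bmatrix} \begin{bmatrix} \cos\theta & 0 \\ 0 & \frac{1}{\cos\theta} \end{bmatrix}$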
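Both factorizations can be checked numerically. This sketch assumes Python with NumPy, and `three_shears` and `two_shears_and_scale` are hypothetical names. The three-shear form needs $\tan(\theta/2)$ to be finite, so it excludes $\theta = 180°$. The two-shear form needs $\cos\theta \ne 0$.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; function names are hypothetical.
def rotation_2d(t):
    c, s = np.cos(t), np.sin(t)
    return np.array([[c, -s], [s, c]])

def three_shears(t):
    """x-shear, y-shear, x-shear whose product is the rotation by t."""
    shear_x = np.array([[1.0, -np.tan(t / 2)], [0.0, 1.0]])
    shear_y = np.array([[1.0, 0.0], [np.sin(t), 1.0]])
    return shear_x, shear_y, shear_x

def two_shears_and_scale(t):
    """y-shear, x-shear and a diagonal scaling; requires cos(t) != 0."""
    c, s = np.cos(t), np.sin(t)
    return (np.array([[1.0, 0.0], [np.tan(t), 1.0]]),
            np.array([[1.0, -s * c], [0.0, 1.0]]),
            np.array([[c, 0.0], [0.0, 1.0 / c]]))
```

Each shear moves coordinates along only one axis. That is what makes it cheap to apply to a bitmap one row or column at a time.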

Group theory

Below follow some basic facts about the role of the collection of ''all'' rotation matrices of a fixed dimension (here mostly 3) in mathematics and particularly in physics, where rotational symmetry is a ''requirement'' of every truly fundamental law (due to the assumption of isotropy of space), and where the same symmetry, when present, is a ''simplifying property'' of many problems of less fundamental nature. Examples abound in classical mechanics and quantum mechanics. Knowledge of the part of the solutions pertaining to this symmetry applies (with qualifications) to ''all'' such problems and it can be factored out of a specific problem at hand, thus reducing its complexity. A prime example, in mathematics and physics, would be the theory of spherical harmonics. Their role in the group theory of the rotation groups is that of being a representation space for the entire set of finite-dimensional irreducible representations of the rotation group SO(3). For this topic, see Rotation group SO(3) § Spherical harmonics.

The main articles listed in each subsection are referred to for more detail.

Lie group

The $n \times n$ rotation matrices for each $n$ form a group, the special orthogonal group, SO(n). This algebraic structure is coupled with a topological structure inherited from $\operatorname{GL}_n(\mathbb{R})$ in such a way that the operations of multiplication and taking the inverse are analytic functions of the matrix entries. Thus SO(n) is for each $n$ a Lie group. It is compact and connected, but (for $n \ge 2$) not simply connected. For $n \ge 3$ it is also a semi-simple group, in fact a simple group (in the sense that its Lie algebra is simple) with the exception of SO(4). The relevance of this is that all theorems and all machinery from the theory of analytic manifolds (analytic manifolds are in particular smooth manifolds) apply and the well-developed representation theory of compact semi-simple groups is ready for use.

Lie algebra

The Lie algebra of SO(n) is given by

: $\mathfrak{so}_n = \mathfrak{so}(n) = \mathfrak{o}(n) = \{X \in M_n(\mathbb{R}) \mid X = -X^\mathsf{T}\},$

and is the space of skew-symmetric matrices of dimension $n$, see classical group, where $\mathfrak{o}(n)$ is the Lie algebra of O(n), the orthogonal group. For reference, the most common basis for $\mathfrak{so}(3)$ is

: $L_{\mathbf{x}} = \begin{bmatrix} 0 & 0 & 0 \\ 0 & 0 & -1 \\ 0 & 1 & 0 \end{bmatrix}, \quad L_{\mathbf{y}} = \begin{bmatrix} 0 & 0 & 1 \\ 0 & 0 & 0 \\ -1 & 0 & 0 \end{bmatrix}, \quad L_{\mathbf{z}} = \begin{bmatrix} 0 & -1 & 0 \\ 1 & 0 & 0 \\ 0 & 0 & 0 \end{bmatrix}.$

Exponential map

Connecting the Lie algebra to the Lie group is the exponential map, which is defined using the standard matrix exponential series for $e^A$. For any skew-symmetric matrix $A$, $\exp(A)$ is always a rotation matrix, since $(e^A)^\mathsf{T} = e^{A^\mathsf{T}} = e^{-A} = (e^A)^{-1}$ and $\det e^A = e^{\operatorname{tr} A} = e^0 = 1$.

[Note that this exponential map of skew-symmetric matrices to rotation matrices is quite different from the Cayley transform discussed earlier, differing to the third order,

: $e^{2A} - \frac{I + A}{I - A} = -\tfrac{2}{3} A^3 + \mathrm{O}(A^4).$

Conversely, a skew-symmetric matrix $A$ specifying a rotation matrix through the Cayley map specifies the ''same'' rotation matrix through the map $\exp(2 \operatorname{artanh} A)$.]

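An important practical example is the 3 × 3 case. In rotation group SO(3), it is shown that one can identify every $A \in \mathfrak{so}(3)$ with an Euler vector $\boldsymbol\omega = \theta\mathbf{u}$, where $\mathbf{u} = (x, y, z)$ is a unit magnitude vector.

By the properties of the identification $\mathfrak{so}(3) \cong \mathbb{R}^3$, $\mathbf{u}$ is in the null space of $A$. Thus, $\mathbf{u}$ is left invariant by $\exp(A)$ and is hence a rotation axis.

According to Rodrigues' rotation formula on matrix form, one obtains,

: $\exp(A) = \exp\bigl(\theta(\mathbf{u} \cdot \mathbf{L})\bigr) = \exp\left(\theta \begin{bmatrix} 0 & -z & y \\ z & 0 & -x \\ -y & x & 0 \end{bmatrix}\right) = I + 2cs\,(\mathbf{u} \cdot \mathbf{L}) + 2s^2 (\mathbf{u} \cdot \mathbf{L})^2,$

where

: $c = \cos\tfrac{\theta}{2}, \quad s = \sin\tfrac{\theta}{2}.$

This is the matrix for a rotation around axis $\mathbf{u}$ by the angle $\theta$. For full detail, see exponential map SO(3).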

Baker–Campbell–Hausdorff formula

The BCH formula provides an explicit expression for $Z = \log(e^X e^Y)$ in terms of a series expansion of nested commutators of $X$ and $Y$. This general expansion unfolds as

: $Z = C(X, Y) = X + Y + \tfrac{1}{2}[X, Y] + \tfrac{1}{12}[X, [X, Y]] - \tfrac{1}{12}[Y, [X, Y]] + \cdots.$

[For a detailed derivation, see Derivative of the exponential map. Issues of convergence of this series to the right element of the Lie algebra are here swept under the carpet. Convergence is guaranteed when $\|X\| + \|Y\| < \log 2$ and $\|Z\| < \log 2$. If these conditions are not fulfilled, the series may still converge. A solution always exists since $\exp$ is onto in the cases under consideration.]

In the 3 × 3 case, the general infinite expansion has a compact form,

: $Z = \alpha X + \beta Y + \gamma [X, Y],$

for suitable trigonometric function coefficients, detailed in the Baker–Campbell–Hausdorff formula for SO(3).

As a group identity, the above holds for ''all faithful representations'', including the doublet (spinor representation), which is simpler. The same explicit formula thus follows straightforwardly through Pauli matrices; see the derivation for SU(2). For the general case, one might use Ref.
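The leading BCH terms can be checked in the 3 × 3 case with a short sketch. It assumes Python with NumPy, and `exp_so3` and `log_so3` are hypothetical helpers (the logarithm formula assumes a rotation angle strictly below 180°). For two small generators, adding the third-order commutator terms should shrink the error by roughly another order of the generator size.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; helper names are hypothetical.
def hat(w):
    x, y, z = w
    return np.array([[0.0, -z, y], [z, 0.0, -x], [-y, x, 0.0]])

def exp_so3(A):
    """Rodrigues: exp(A) = I + (sin t / t) A + ((1 - cos t) / t^2) A^2."""
    t = np.linalg.norm([A[2, 1], A[0, 2], A[1, 0]])
    if t < 1e-12:
        return np.eye(3) + A
    return np.eye(3) + np.sin(t) / t * A + (1 - np.cos(t)) / t**2 * (A @ A)

def log_so3(R):
    """Inverse of exp_so3 for rotation angles in [0, pi)."""
    t = np.arccos(np.clip((np.trace(R) - 1) / 2, -1.0, 1.0))
    if t < 1e-12:
        return 0.5 * (R - R.T)
    return t / (2 * np.sin(t)) * (R - R.T)

def bracket(X, Y):
    """Commutator [X, Y]."""
    return X @ Y - Y @ X

X = hat([0.01, 0.0, 0.0])
Y = hat([0.0, 0.01, 0.0])
Z = log_so3(exp_so3(X) @ exp_so3(Y))
bch2 = X + Y + bracket(X, Y) / 2
bch3 = bch2 + bracket(X, bracket(X, Y)) / 12 - bracket(Y, bracket(X, Y)) / 12
err2 = np.linalg.norm(Z - bch2)   # dominated by third-order terms
err3 = np.linalg.norm(Z - bch3)   # dominated by fourth-order terms
print(err2, err3)
```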

Spin group

The Lie group of $n \times n$ rotation matrices, SO(n), is not simply connected, so Lie theory tells us it is a homomorphic image of a universal covering group. Often the covering group, which in this case is called the spin group denoted by Spin(n), is simpler and more natural to work with.

In the case of planar rotations, SO(2) is topologically a circle, $S^1$. Its universal covering group is isomorphic to the real line, $\mathbb{R}$, under addition. (The spin group Spin(2) is itself a circle, covering SO(2) two-to-one.) Whenever angles of arbitrary magnitude are used one is taking advantage of the convenience of the universal cover. Every 2 × 2 rotation matrix is produced by a countable infinity of angles, separated by integer multiples of $2\pi$. Correspondingly, the fundamental group of SO(2) is isomorphic to the integers, $\mathbb{Z}$.

In the case of spatial rotations, SO(3) is topologically equivalent to three-dimensional real projective space, $\mathbb{RP}^3$. Its universal covering group, Spin(3), is isomorphic to the 3-sphere, $S^3$. Every 3 × 3 rotation matrix is produced by two opposite points on the sphere. Correspondingly, the fundamental group of SO(3) is isomorphic to the two-element group, $\mathbb{Z}_2$.

We can also describe Spin(3) as isomorphic to quaternions of unit norm under multiplication, or to certain 4 × 4 real matrices, or to 2 × 2 complex special unitary matrices, namely SU(2). The covering maps for the first and the last case are given by

: $\{q \in \mathbb{H} : \|q\| = 1\} \ni w + \mathbf{i}x + \mathbf{j}y + \mathbf{k}z \mapsto \begin{bmatrix} 1 - 2y^2 - 2z^2 & 2xy - 2zw & 2xz + 2yw \\ 2xy + 2zw & 1 - 2x^2 - 2z^2 & 2yz - 2xw \\ 2xz - 2yw & 2yz + 2xw & 1 - 2x^2 - 2y^2 \end{bmatrix} \in SO(3)$

and

: $SU(2) \ni U \mapsto R \in SO(3), \qquad R_{jk} = \tfrac{1}{2} \operatorname{tr}\left(\sigma_j U \sigma_k U^\dagger\right),$

where $\sigma_1, \sigma_2, \sigma_3$ are the Pauli matrices. For a detailed account of the SU(2)-covering and the quaternionic covering, see spin group SO(3).
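The SU(2) covering map can be sketched as follows. This assumes Python with NumPy, and `su2_to_so3` and `su2_from_axis_angle` are hypothetical names. The parametrization $U = \cos\tfrac{\theta}{2} I - i \sin\tfrac{\theta}{2} (\mathbf{u} \cdot \boldsymbol\sigma)$ is a standard choice assumed here. $U$ enters twice in $\sigma_j U \sigma_k U^\dagger$, so $U$ and $-U$ give the same rotation. That is the two-to-one covering in action.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; function names are hypothetical.
# Pauli matrices sigma_1, sigma_2, sigma_3.
SIGMA = [np.array([[0, 1], [1, 0]], dtype=complex),
         np.array([[0, -1j], [1j, 0]], dtype=complex),
         np.array([[1, 0], [0, -1]], dtype=complex)]

def su2_to_so3(U):
    """R[j, k] = (1/2) tr(sigma_j U sigma_k U^dagger)."""
    Ud = U.conj().T
    R = np.empty((3, 3))
    for j in range(3):
        for k in range(3):
            R[j, k] = 0.5 * np.trace(SIGMA[j] @ U @ SIGMA[k] @ Ud).real
    return R

def su2_from_axis_angle(u, theta):
    """U = cos(theta/2) I - i sin(theta/2) (u . sigma), u normalized first."""
    u = np.asarray(u, dtype=float) / np.linalg.norm(u)
    u_sigma = sum(ui * s for ui, s in zip(u, SIGMA))
    return np.cos(theta / 2) * np.eye(2) - 1j * np.sin(theta / 2) * u_sigma
```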

Many features of these cases are the same for higher dimensions. The coverings are all two-to-one, with SO(n), $n > 2$, having fundamental group $\mathbb{Z}_2$. The natural setting for these groups is within a Clifford algebra. One type of action of the rotations is produced by a kind of "sandwich", denoted by $q\mathbf{v}q^*$. More importantly in applications to physics, the corresponding spin representation of the Lie algebra sits inside the Clifford algebra. It can be exponentiated in the usual way to give rise to a 2-valued representation, also known as a projective representation of the rotation group. This is the case with SO(3) and SU(2), where the 2-valued representation can be viewed as an "inverse" of the covering map. By properties of covering maps, the inverse can be chosen one-to-one as a local section, but not globally.

Infinitesimal rotations

The matrices in the Lie algebra are not themselves rotations; the skew-symmetric matrices are derivatives, proportional differences of rotations. An actual "differential rotation", or ''infinitesimal rotation matrix'', has the form

: $I + A\,d\theta,$

where $d\theta$ is vanishingly small and $A \in \mathfrak{so}(n)$, for instance with $A = L_{\mathbf{x}}$,

: $dL_{\mathbf{x}} = \begin{bmatrix} 1 & 0 & 0 \\ 0 & 1 & -d\theta \\ 0 & d\theta & 1 \end{bmatrix}.$

The computation rules are as usual except that infinitesimals of second order are routinely dropped. With these rules, these matrices do not satisfy all the same properties as ordinary finite rotation matrices under the usual treatment of infinitesimals. It turns out that ''the order in which infinitesimal rotations are applied is irrelevant''. To see this exemplified, consult infinitesimal rotations SO(3).
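The sketch below shows why the order stops mattering once second-order terms are dropped. It assumes Python with NumPy, and the function names are hypothetical. It compares the two orders for first-order matrices $I + \varepsilon L$ and for finite rotations. In both cases the difference is $\varepsilon^2 [L_{\mathbf{x}}, L_{\mathbf{y}}] = \varepsilon^2 L_{\mathbf{z}}$ to leading order, so it vanishes at first order.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; function names are hypothetical.
I = np.eye(3)
L_X = np.array([[0.0, 0.0, 0.0], [0.0, 0.0, -1.0], [0.0, 1.0, 0.0]])
L_Y = np.array([[0.0, 0.0, 1.0], [0.0, 0.0, 0.0], [-1.0, 0.0, 0.0]])

def finite_rotation(L, t):
    """exp(t L) for a unit generator L (L^3 = -L), via Rodrigues' formula."""
    return I + np.sin(t) * L + (1.0 - np.cos(t)) * (L @ L)

def order_gap(eps):
    """Order dependence of two first-order rotations I + eps L."""
    a = (I + eps * L_X) @ (I + eps * L_Y)
    b = (I + eps * L_Y) @ (I + eps * L_X)
    # In exact arithmetic this is eps**2 * ||[L_X, L_Y]|| = eps**2 * sqrt(2).
    return np.linalg.norm(a - b)

def finite_gap(eps):
    """Order dependence of two finite rotations by the same small angle."""
    a = finite_rotation(L_X, eps) @ finite_rotation(L_Y, eps)
    b = finite_rotation(L_Y, eps) @ finite_rotation(L_X, eps)
    return np.linalg.norm(a - b)

for eps in (1e-1, 1e-2, 1e-3):
    # Both ratios approach sqrt(2): the gap is second order in eps.
    print(eps, order_gap(eps) / eps**2, finite_gap(eps) / eps**2)
```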

Conversions

We have seen the existence of several decompositions that apply in any dimension, namely independent planes, sequential angles, and nested dimensions. In all these cases we can either decompose a matrix or construct one. We have also given special attention to 3 × 3 rotation matrices, and these warrant further attention, in both directions.

Quaternion

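Given the unit quaternion $q = w + x\mathbf{i} + y\mathbf{j} + z\mathbf{k}$, the equivalent pre-multiplied (to be used with column vectors) 3 × 3 rotation matrix is

: $Q = \begin{bmatrix} 1 - 2y^2 - 2z^2 & 2xy - 2zw & 2xz + 2yw \\ 2xy + 2zw & 1 - 2x^2 - 2z^2 & 2yz - 2xw \\ 2xz - 2yw & 2yz + 2xw & 1 - 2x^2 - 2y^2 \end{bmatrix}.$

Now every quaternion component appears multiplied by two in a term of degree two, and if all such terms are zero what is left is an identity matrix. This leads to an efficient, robust conversion from any quaternion, whether unit or non-unit, to a 3 × 3 rotation matrix. Given:

: $\begin{aligned} n &= w \times w + x \times x + y \times y + z \times z \\ s &= \begin{cases} 0 & \text{if } n = 0 \\ \frac{2}{n} & \text{otherwise} \end{cases} \end{aligned}$

we can calculate

: $Q = \begin{bmatrix} 1 - s(yy + zz) & s(xy - wz) & s(xz + wy) \\ s(xy + wz) & 1 - s(xx + zz) & s(yz - wx) \\ s(xz - wy) & s(yz + wx) & 1 - s(xx + yy) \end{bmatrix}$

Freed from the demand for a unit quaternion, we find that nonzero quaternions act as homogeneous coordinates for 3 × 3 rotation matrices. The Cayley transform, discussed earlier, is obtained by scaling the quaternion so that its $w$ component is 1. For a 180° rotation around any axis, $w$ will be zero, which explains the Cayley limitation.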
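Here is a direct transcription of the non-unit conversion. It assumes Python with NumPy, and `quat_to_matrix` is a hypothetical name. The zero quaternion is mapped to the identity, matching the $s = 0$ case.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; quat_to_matrix is a hypothetical name.
def quat_to_matrix(w, x, y, z):
    """Rotation matrix (for column vectors) from any quaternion, unit or not."""
    n = w * w + x * x + y * y + z * z
    s = 0.0 if n == 0 else 2.0 / n
    wx, wy, wz = s * w * x, s * w * y, s * w * z
    xx, xy, xz = s * x * x, s * x * y, s * x * z
    yy, yz, zz = s * y * y, s * y * z, s * z * z
    return np.array([
        [1.0 - (yy + zz), xy - wz, xz + wy],
        [xy + wz, 1.0 - (xx + zz), yz - wx],
        [xz - wy, yz + wx, 1.0 - (xx + yy)],
    ])
```

Scaling the quaternion by any nonzero factor, including −1, leaves the matrix unchanged. This is the homogeneous-coordinate behavior described above. The quaternion $(1, 1, 0, 0)$ gives the same 90° rotation about $x$ as the Cayley example.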

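The sum of the entries along the main diagonal (the trace), plus one, equals $4 - 4(x^2 + y^2 + z^2)$, which is $4w^2$. Thus we can write the trace itself as $2w^2 + 2w^2 - 1$; and from the previous version of the matrix we see that the diagonal entries themselves have the same form: $2x^2 + 2w^2 - 1$, $2y^2 + 2w^2 - 1$, and $2z^2 + 2w^2 - 1$. So we can easily compare the magnitudes of all four quaternion components using the matrix diagonal. We can, in fact, obtain all four magnitudes using sums and square roots, and choose consistent signs using the skew-symmetric part of the off-diagonal entries:

: $\begin{aligned} t &= \operatorname{tr} Q = Q_{xx} + Q_{yy} + Q_{zz} \\ r &= \sqrt{1 + t} \\ w &= \tfrac{1}{2} r \\ x &= \operatorname{sgn}(Q_{zy} - Q_{yz}) \left|\tfrac{1}{2}\sqrt{1 + Q_{xx} - Q_{yy} - Q_{zz}}\right| \\ y &= \operatorname{sgn}(Q_{xz} - Q_{zx}) \left|\tfrac{1}{2}\sqrt{1 - Q_{xx} + Q_{yy} - Q_{zz}}\right| \\ z &= \operatorname{sgn}(Q_{yx} - Q_{xy}) \left|\tfrac{1}{2}\sqrt{1 - Q_{xx} - Q_{yy} + Q_{zz}}\right| \end{aligned}$

Alternatively, use a single square root and division

: $\begin{aligned} t &= \operatorname{tr} Q \\ r &= \sqrt{1 + t} \\ s &= \tfrac{1}{2r} \\ w &= \tfrac{1}{2} r \\ x &= (Q_{zy} - Q_{yz})\,s \\ y &= (Q_{xz} - Q_{zx})\,s \\ z &= (Q_{yx} - Q_{xy})\,s \end{aligned}$

This is numerically stable so long as the trace, $t$, is not negative; otherwise, we risk dividing by (nearly) zero. In that case, suppose $Q_{xx}$ is the largest diagonal entry, so $x$ will have the largest magnitude (the other cases are derived by cyclic permutation); then the following is safe.

: $\begin{aligned} r &= \sqrt{1 + Q_{xx} - Q_{yy} - Q_{zz}} \\ s &= \tfrac{1}{2r} \\ w &= (Q_{zy} - Q_{yz})\,s \\ x &= \tfrac{1}{2} r \\ y &= (Q_{xy} + Q_{yx})\,s \\ z &= (Q_{zx} + Q_{xz})\,s \end{aligned}$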
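The single-square-root recipe with its fallback branch might look like this. It assumes Python with NumPy, and `matrix_to_quat` is a hypothetical name. Indices 0, 1, 2 stand for $x$, $y$, $z$. The fallback picks the largest diagonal entry and handles the other two cases by cyclic permutation, as described above. The returned quaternion is only defined up to sign.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; matrix_to_quat is a hypothetical name.
def matrix_to_quat(Q):
    """Unit quaternion (w, x, y, z) of a 3x3 rotation matrix, up to sign."""
    t = np.trace(Q)
    if t >= 0:
        # Non-negative trace: the single-square-root formulas are safe.
        r = np.sqrt(1.0 + t)
        s = 0.5 / r
        return np.array([0.5 * r,
                         (Q[2, 1] - Q[1, 2]) * s,
                         (Q[0, 2] - Q[2, 0]) * s,
                         (Q[1, 0] - Q[0, 1]) * s])
    # Negative trace: build on the largest diagonal entry i, with j, k cyclic.
    i = int(np.argmax(np.diag(Q)))
    j, k = (i + 1) % 3, (i + 2) % 3
    r = np.sqrt(1.0 + Q[i, i] - Q[j, j] - Q[k, k])
    s = 0.5 / r
    q = np.empty(4)
    q[0] = (Q[k, j] - Q[j, k]) * s          # w
    q[1 + i] = 0.5 * r
    q[1 + j] = (Q[i, j] + Q[j, i]) * s
    q[1 + k] = (Q[k, i] + Q[i, k]) * s
    return q
```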

If the matrix contains significant error, such as accumulated numerical error, we may construct a symmetric 4 × 4 matrix,

: $K = \frac{1}{3} \begin{bmatrix} Q_{xx} - Q_{yy} - Q_{zz} & Q_{yx} + Q_{xy} & Q_{zx} + Q_{xz} & Q_{zy} - Q_{yz} \\ Q_{yx} + Q_{xy} & Q_{yy} - Q_{xx} - Q_{zz} & Q_{zy} + Q_{yz} & Q_{xz} - Q_{zx} \\ Q_{zx} + Q_{xz} & Q_{zy} + Q_{yz} & Q_{zz} - Q_{xx} - Q_{yy} & Q_{yx} - Q_{xy} \\ Q_{zy} - Q_{yz} & Q_{xz} - Q_{zx} & Q_{yx} - Q_{xy} & Q_{xx} + Q_{yy} + Q_{zz} \end{bmatrix},$

and find the eigenvector, $(x, y, z, w)$, of its largest magnitude eigenvalue. (If $Q$ is truly a rotation matrix, that value will be 1.) The quaternion so obtained will correspond to the rotation matrix closest to the given matrix (Note: formulation of the cited article is post-multiplied, works with row vectors).
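Here is a sketch of the eigenvector method. It assumes Python with NumPy, and `quat_from_noisy_matrix` is a hypothetical name. It builds the symmetric matrix $K$ above and uses `np.linalg.eigh` since $K$ is symmetric. It returns $(x, y, z, w)$ with the sign chosen so that $w \ge 0$.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; quat_from_noisy_matrix is hypothetical.
def quat_from_noisy_matrix(Q):
    """Quaternion (x, y, z, w) from the dominant eigenvector of the symmetric K."""
    (xx, xy, xz), (yx, yy, yz), (zx, zy, zz) = Q
    K = np.array([
        [xx - yy - zz, yx + xy, zx + xz, zy - yz],
        [yx + xy, yy - xx - zz, zy + yz, xz - zx],
        [zx + xz, zy + yz, zz - xx - yy, yx - xy],
        [zy - yz, xz - zx, yx - xy, xx + yy + zz],
    ]) / 3.0
    eigvals, eigvecs = np.linalg.eigh(K)
    v = eigvecs[:, np.argmax(np.abs(eigvals))]
    return v if v[3] >= 0 else -v   # fix the sign so that w >= 0
```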

Polar decomposition

If the $n \times n$ matrix $M$ is nonsingular, its columns are linearly independent vectors; thus the Gram–Schmidt process can adjust them to be an orthonormal basis. Stated in terms of numerical linear algebra, we convert $M$ to an orthogonal matrix, $Q$, using QR decomposition. However, we often prefer a $Q$ closest to $M$, which this method does not accomplish. For that, the tool we want is the polar decomposition.

To measure closeness, we may use any matrix norm invariant under orthogonal transformations. A convenient choice is the Frobenius norm, $\|Q - M\|_\mathrm{F}$, squared, which is the sum of the squares of the element differences. Writing this in terms of the trace, $\operatorname{Tr}$, our goal is,

: Find $Q$ minimizing $\operatorname{Tr}\bigl((Q - M)^\mathsf{T}(Q - M)\bigr)$, subject to $Q^\mathsf{T} Q = I$.

Though written in matrix terms, the objective function is just a quadratic polynomial. We can minimize it in the usual way, by finding where its derivative is zero. For a 3 × 3 matrix, the orthogonality constraint implies six scalar equalities that the entries of $Q$ must satisfy. To incorporate the constraint(s), we may employ a standard technique, Lagrange multipliers, assembled as a symmetric matrix, $Y$. Thus our method is:

: Differentiate $\operatorname{Tr}\bigl((Q - M)^\mathsf{T}(Q - M) + (Q^\mathsf{T} Q - I) Y\bigr)$ with respect to (the entries of) $Q$, and equate to zero.

Consider a 2 × 2 example. Including constraints, we seek to minimize

: $\begin{aligned} &(Q_{xx} - M_{xx})^2 + (Q_{xy} - M_{xy})^2 + (Q_{yx} - M_{yx})^2 + (Q_{yy} - M_{yy})^2 \\ &\quad + (Q_{xx}^2 + Q_{yx}^2 - 1) Y_{xx} + (Q_{xy}^2 + Q_{yy}^2 - 1) Y_{yy} + 2 (Q_{xx} Q_{xy} + Q_{yx} Q_{yy}) Y_{xy}. \end{aligned}$

Taking the derivative with respect to $Q_{xx}$, $Q_{xy}$, $Q_{yx}$, $Q_{yy}$ in turn, we assemble a matrix.

: $2 \begin{bmatrix} Q_{xx} - M_{xx} + Q_{xx} Y_{xx} + Q_{xy} Y_{xy} & Q_{xy} - M_{xy} + Q_{xx} Y_{xy} + Q_{xy} Y_{yy} \\ Q_{yx} - M_{yx} + Q_{yx} Y_{xx} + Q_{yy} Y_{xy} & Q_{yy} - M_{yy} + Q_{yx} Y_{xy} + Q_{yy} Y_{yy} \end{bmatrix}$

In general, we obtain the equation

: $0 = 2(Q - M) + 2QY,$

so that

: $M = Q(I + Y) = QS,$

where $Q$ is orthogonal and $S$ is symmetric. To ensure a minimum, $S = I + Y$ must be positive definite. Linear algebra calls $QS$ the polar decomposition of $M$, with $S$ the positive square root of $S^2 = M^\mathsf{T} M$:

: $S^2 = (Q^\mathsf{T} M)^\mathsf{T} (Q^\mathsf{T} M) = M^\mathsf{T} Q Q^\mathsf{T} M = M^\mathsf{T} M.$

When $M$ is non-singular, the $Q$ and $S$ factors of the polar decomposition are uniquely determined. However, the determinant of $S$ is positive because $S$ is positive definite, so $Q$ inherits the sign of the determinant of $M$. That is, $Q$ is only guaranteed to be orthogonal, not a rotation matrix. This is unavoidable; an $M$ with negative determinant has no uniquely defined closest rotation matrix.
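The derivation above uses Lagrange multipliers. In practice the polar factors are commonly computed from the singular value decomposition instead, and that is the technique this sketch swaps in. It assumes Python with NumPy, and `polar` is a hypothetical name. With $M = U \Sigma V^\mathsf{T}$, one takes $Q = U V^\mathsf{T}$ and $S = V \Sigma V^\mathsf{T}$, so $QS = M$ and $S^2 = M^\mathsf{T} M$.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; polar is a hypothetical name.
# Swapped-in technique: SVD rather than the Lagrange-multiplier derivation.
def polar(M):
    """Polar decomposition M = Q S via the SVD M = U diag(sigma) V^T.

    Q = U V^T is orthogonal; S = V diag(sigma) V^T is symmetric positive
    (semi)definite. Q is only guaranteed orthogonal: det(Q) has the sign
    of det(M).
    """
    U, sigma, Vt = np.linalg.svd(M)
    Q = U @ Vt
    S = Vt.T @ np.diag(sigma) @ Vt
    return Q, S
```

Comparing against the sign-normalized $Q$ from `np.linalg.qr` on the same matrix illustrates that the Gram–Schmidt route generally lands farther from $M$ in the Frobenius norm.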

Axis and angle

To efficiently construct a rotation matrix $Q$ from an angle $\theta$ and a unit axis $\mathbf{u}$, we can take advantage of symmetry and skew-symmetry within the entries. If $x$, $y$, and $z$ are the components of the unit vector representing the axis, and

: $\begin{aligned} c &= \cos\theta \\ s &= \sin\theta \\ C &= 1 - c \end{aligned}$

then

: $Q(\theta) = \begin{bmatrix} xxC + c & xyC - zs & xzC + ys \\ yxC + zs & yyC + c & yzC - xs \\ zxC - ys & zyC + xs & zzC + c \end{bmatrix}$

Determining an axis and angle, like determining a quaternion, is only possible up to the sign; that is, $(\mathbf{u}, \theta)$ and $(-\mathbf{u}, -\theta)$ correspond to the same rotation matrix, just like $q$ and $-q$. Axis–angle extraction also presents further difficulties. The angle can be restricted to be from 0° to 180°, but angles are formally ambiguous by multiples of 360°. When the angle is zero, the axis is undefined. When the angle is 180°, the matrix becomes symmetric, which has implications in extracting the axis. Near multiples of 180°, care is needed to avoid numerical problems: in extracting the angle, a two-argument arctangent with $\operatorname{atan2}(\sin\theta, \cos\theta)$ equal to $\theta$ avoids the insensitivity of arccos; and in computing the axis magnitude in order to force unit magnitude, a brute-force approach can lose accuracy through underflow.

A partial approach is as follows:

: $\begin{aligned} x &= Q_{zy} - Q_{yz} \\ y &= Q_{xz} - Q_{zx} \\ z &= Q_{yx} - Q_{xy} \\ r &= \sqrt{x^2 + y^2 + z^2} \\ t &= Q_{xx} + Q_{yy} + Q_{zz} \\ \theta &= \operatorname{atan2}(r, t - 1) \end{aligned}$

Here $r = 2\sin\theta$ and $t - 1 = 2\cos\theta$. The $x$-, $y$-, and $z$-components of the axis would then be divided by $r$. A fully robust approach will use a different algorithm when $t$, the trace of the matrix $Q$, is negative, as with quaternion extraction. When $r$ is zero because the angle is zero, an axis must be provided from some source other than the matrix.
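The partial approach translates almost line for line. This sketch assumes Python with NumPy, and `axis_angle_from_matrix` is a hypothetical name. As one way to address the underflow concern, it computes $r$ with `np.hypot` rather than squaring and summing. It does not implement the separate algorithm needed near 180°, and it returns no axis when $r = 0$.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; axis_angle_from_matrix is hypothetical.
def axis_angle_from_matrix(Q):
    """Partial axis-angle extraction with atan2 (no special case near 180 deg)."""
    x = Q[2, 1] - Q[1, 2]
    y = Q[0, 2] - Q[2, 0]
    z = Q[1, 0] - Q[0, 1]
    # hypot avoids overflow/underflow in the intermediate squares.
    r = np.hypot(np.hypot(x, y), z)     # r = 2 sin(theta)
    t = np.trace(Q)                     # t - 1 = 2 cos(theta)
    theta = np.arctan2(r, t - 1.0)
    if r == 0.0:
        # Angle 0 (axis undefined) or exactly 180 degrees (needs another method).
        return None, theta
    return np.array([x, y, z]) / r, theta
```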

Euler angles

Complexity of conversion escalates with Euler angles (used here in the broad sense). The first difficulty is to establish which of the twenty-four variations of Cartesian axis order we will use. Suppose the three angles are $\theta_1$, $\theta_2$, $\theta_3$; physics and chemistry may interpret these as

: $Q(\theta_1, \theta_2, \theta_3) = Q_{\mathbf{z}}(\theta_1)\, Q_{\mathbf{y}}(\theta_2)\, Q_{\mathbf{z}}(\theta_3),$

while aircraft dynamics may use

: $Q(\theta_1, \theta_2, \theta_3) = Q_{\mathbf{z}}(\theta_3)\, Q_{\mathbf{y}}(\theta_2)\, Q_{\mathbf{x}}(\theta_1).$

One systematic approach begins with choosing the rightmost axis. Among all permutations of $(x, y, z)$, only two place that axis first; one is an even permutation and the other odd. Choosing parity thus establishes the middle axis. That leaves two choices for the left-most axis, either duplicating the first or not. These three choices give us 3 × 2 × 2 = 12 variations; we double that to 24 by choosing static or rotating axes.
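The counting argument can be made mechanical. This sketch assumes Python (standard library only), and `axis_orders` is a hypothetical name. It writes each sequence left to right as in $Q_a Q_b Q_c$, so the last letter is the rightmost, first-applied axis.

```python
from itertools import permutations

# Sketch (assumption): Python standard library; axis_orders is hypothetical.
def axis_orders():
    """List the 12 axis sequences, written left to right as in Q_a Q_b Q_c.

    For each rightmost (first-applied) axis, the two permutations that start
    with it (one even, one odd) fix the middle axis; the leftmost axis then
    either repeats the rightmost one or is the remaining axis.
    """
    orders = []
    for first in "xyz":
        for perm in permutations("xyz"):
            if perm[0] != first:
                continue
            _, middle, other = perm
            for left in (first, other):
                orders.append(left + middle + first)
    return orders

print(axis_orders())
```

The result is exactly the 12 strings with no axis repeated twice in a row. Six have the form $aba$ (such as zyz) and six use all three axes (such as zyx). The static-versus-rotating choice then doubles this to 24.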

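This is enough to construct a matrix from angles, but triples differing in many ways can give the same rotation matrix. For example, suppose we use the zyz convention above; then we have the following equivalent pairs:

: $\begin{aligned} (90^\circ, 45^\circ, -105^\circ) &\equiv (-270^\circ, -315^\circ, 255^\circ) && \text{multiples of } 360^\circ \\ (72^\circ, 0^\circ, 0^\circ) &\equiv (40^\circ, 0^\circ, 32^\circ) && \text{singular alignment} \\ (45^\circ, 60^\circ, -30^\circ) &\equiv (-135^\circ, -60^\circ, 150^\circ) && \text{bistable flip} \end{aligned}$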
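The three equivalences can be verified numerically for the zyz convention. This sketch assumes Python with NumPy; `rot_y`, `rot_z` and `zyz` are hypothetical helpers, and `zyz` takes degrees.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; helper names are hypothetical.
def rot_y(t):
    c, s = np.cos(t), np.sin(t)
    return np.array([[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]])

def rot_z(t):
    c, s = np.cos(t), np.sin(t)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

def zyz(a, b, c):
    """Q_z(a) Q_y(b) Q_z(c) with the angles given in degrees."""
    a, b, c = np.radians([a, b, c])
    return rot_z(a) @ rot_y(b) @ rot_z(c)

pairs = [((90, 45, -105), (-270, -315, 255)),   # multiples of 360 degrees
         ((72, 0, 0), (40, 0, 32)),             # singular alignment
         ((45, 60, -30), (-135, -60, 150))]     # bistable flip
for p, q in pairs:
    print(p, q, np.allclose(zyz(*p), zyz(*q)))
```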

Angles for any order can be found using a concise common routine.

The problem of singular alignment, the mathematical analog of physical gimbal lock, occurs when the middle rotation aligns the axes of the first and last rotations. It afflicts every axis order at either even or odd multiples of 90°. These singularities are not characteristic of the rotation matrix as such, and only occur with the usage of Euler angles.

The singularities are avoided when considering and manipulating the rotation matrix as orthonormal row vectors (in 3D applications often named the right-vector, up-vector and out-vector) instead of as angles. The singularities are also avoided when working with quaternions.

Vector to vector formulation

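In some instances it is interesting to describe a rotation by specifying how a vector is mapped into another through the shortest path (smallest angle). In $\mathbb{R}^3$ this completely describes the associated rotation matrix. In general, given $\mathbf{x}, \mathbf{y} \in S^n$ with $\mathbf{y} \ne -\mathbf{x}$, the matrix

: $R := I + \mathbf{y}\mathbf{x}^\mathsf{T} - \mathbf{x}\mathbf{y}^\mathsf{T} + \frac{1}{1 + \langle \mathbf{x}, \mathbf{y} \rangle} \left(\mathbf{y}\mathbf{x}^\mathsf{T} - \mathbf{x}\mathbf{y}^\mathsf{T}\right)^2$

belongs to $SO(n + 1)$ and maps $\mathbf{x}$ to $\mathbf{y}$.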
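Here is a direct transcription of the vector-to-vector matrix. It assumes Python with NumPy, and `vector_to_vector` is a hypothetical name. It works in any dimension and, as the denominator shows, fails only when $\mathbf{y} = -\mathbf{x}$.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; vector_to_vector is a hypothetical name.
def vector_to_vector(x, y):
    """Rotation in SO(n+1) taking unit vector x to unit vector y (y != -x).

    R = I + (y x^T - x y^T) + (y x^T - x y^T)^2 / (1 + <x, y>)
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    K = np.outer(y, x) - np.outer(x, y)
    return np.eye(len(x)) + K + (K @ K) / (1.0 + x @ y)
```

Vectors orthogonal to both $\mathbf{x}$ and $\mathbf{y}$ are left unchanged, so the rotation acts only in the plane they span.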

Uniform random rotation matrices

We sometimes need to generate a uniformly distributed random rotation matrix. It seems intuitively clear in two dimensions that this means the rotation angle is uniformly distributed between 0 and $2\pi$. That intuition is correct, but does not carry over to higher dimensions. For example, if we decompose 3 × 3 rotation matrices in axis–angle form, the angle should ''not'' be uniformly distributed; the probability that (the magnitude of) the angle is at most $\theta$ should be $\tfrac{1}{\pi}(\theta - \sin\theta)$, for $0 \le \theta \le \pi$.

Since SO(n) is a connected and locally compact Lie group, we have a simple standard criterion for uniformity, namely that the distribution be unchanged when composed with any arbitrary rotation (a Lie group "translation"). This definition corresponds to what is called ''

Haar measure''. show how to use the Cayley transform to generate and test matrices according to this criterion.

We can also generate a uniform distribution in any dimension using the ''subgroup algorithm'' of . This recursively exploits the nested dimensions group structure of SO(n), as follows. Generate a uniform angle and construct a 2 × 2 rotation matrix. To step from $n$ to $n + 1$, generate a vector $\mathbf{v}$ uniformly distributed on the $n$-sphere $S^n$, embed the $n \times n$ matrix in the next larger size with last column $(0, \ldots, 0, 1)$, and rotate the larger matrix so the last column becomes $\mathbf{v}$.
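Here is a sketch of the subgroup recursion. It assumes Python with NumPy, and `random_rotation` is a hypothetical name. The final "rotate so the last column becomes $\mathbf{v}$" step is an implementation choice not taken from the source. It uses a Householder reflection sending $\mathbf{e} = (0, \ldots, 0, 1)$ to $\mathbf{v}$, composed with a sign flip of the first axis that fixes $\mathbf{e}$, so that the product has determinant +1. Any fixed rule for choosing a rotation that takes $\mathbf{e}$ to $\mathbf{v}$ should do, because the embedded matrix is already uniform over the rotations fixing $\mathbf{e}$.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; random_rotation is a hypothetical name.
def random_rotation(n, rng):
    """Uniform random n x n rotation (n >= 2) via the subgroup recursion."""
    t = rng.uniform(0.0, 2.0 * np.pi)
    R = np.array([[np.cos(t), -np.sin(t)], [np.sin(t), np.cos(t)]])
    for m in range(2, n):
        v = rng.standard_normal(m + 1)
        v /= np.linalg.norm(v)              # uniform on the sphere S^m
        big = np.eye(m + 1)
        big[:m, :m] = R                     # last column is e = (0, ..., 0, 1)
        e = np.zeros(m + 1)
        e[-1] = 1.0
        w = e - v                           # zero only if v == e (probability 0)
        # Householder reflection H sends e to v; D flips the first axis and
        # fixes e, so H @ D is a rotation (det +1) that still sends e to v.
        H = np.eye(m + 1) - 2.0 * np.outer(w, w) / (w @ w)
        D = np.eye(m + 1)
        D[0, 0] = -1.0
        R = H @ D @ big
    return R
```

For $n = 3$, the fraction of samples whose angle is at most 90° should approach $(\pi/2 - 1)/\pi \approx 0.18$, in line with the axis–angle distribution stated earlier.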

As usual, we have special alternatives for the 3 × 3 case. Each of these methods begins with three independent random scalars uniformly distributed on the unit interval. takes advantage of the odd dimension to change a Householder reflection to a rotation by negation, and uses that to aim the axis of a uniform planar rotation.

Another method uses unit quaternions. Multiplication of rotation matrices is homomorphic to multiplication of quaternions, and multiplication by a unit quaternion rotates the unit sphere. Since the homomorphism is a local isometry, we immediately conclude that to produce a uniform distribution on SO(3) we may use a uniform distribution on $S^3$. In practice: create a four-element vector where each element is a sampling of a normal distribution. Normalize its length and you have a uniformly sampled random unit quaternion which represents a uniformly sampled random rotation. Note that the aforementioned only applies to rotations in dimension 3. For a generalised idea of quaternions, one should look into rotors.
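The quaternion recipe is short enough to state in full. This sketch assumes Python with NumPy, and `random_unit_quaternions` is a hypothetical name. It also compares the empirical distribution of rotation angles, $2\arccos|w|$, against the $(\theta - \sin\theta)/\pi$ law given earlier at $\theta = \pi/2$.

```python
import numpy as np

# Sketch (assumption): Python + NumPy; random_unit_quaternions is hypothetical.
def random_unit_quaternions(count, rng):
    """Uniform samples on S^3: normalize 4-vectors of standard normal draws."""
    q = rng.standard_normal((count, 4))
    return q / np.linalg.norm(q, axis=1, keepdims=True)

rng = np.random.default_rng(42)
q = random_unit_quaternions(200_000, rng)
# Rotation angle of each sample; q and -q give the same rotation, hence |w|.
angles = 2.0 * np.arccos(np.clip(np.abs(q[:, 0]), 0.0, 1.0))
# Empirical vs. predicted P(angle <= pi/2) = (pi/2 - sin(pi/2)) / pi.
empirical = np.mean(angles <= np.pi / 2)
predicted = (np.pi / 2 - 1.0) / np.pi
print(empirical, predicted)
```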

Euler angles can also be used, though not with each angle uniformly distributed.

For the axis–angle form, the axis is uniformly distributed over the unit sphere of directions, $S^2$, while the angle has the nonuniform distribution over $[0, \pi]$ noted previously.

See also

*

Euler–Rodrigues formula

*

Euler's rotation theorem

In geometry, Euler's rotation theorem states that, in three-dimensional space, any displacement of a rigid body such that a point on the rigid body remains fixed, is equivalent to a single rotation about some axis that runs through the fixed p ...

*

Rodrigues' rotation formula

*

Plane of rotation

*

Axis–angle representation

*

Rotation group SO(3)

*

Rotation formalisms in three dimensions

* Rotation operator (vector space)
* Transformation matrix
* Yaw-pitch-roll system
* Kabsch algorithm
* Isometry
* Rigid transformation
* Rotations in 4-dimensional Euclidean space
* Trigonometric identities
* Versor

References

* ; reprinted as article 52 in
* (GTM 222)
* (Als NASA-CR-53568)
* (GTM 102)

External links

* Rotation matrices at MathWorld (requires Java)
* Rotation Matrices at MathPages
* A parametrization of SO_n(R) by generalized Euler Angles
* Rotation about any point


In two dimensions, the standard rotation matrix has the following form:

: $R = \begin{bmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{bmatrix}$

This rotates

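Given a $3 \times 3$ rotation matrix $R$, a vector $\mathbf{u}$ parallel to the rotation axis must satisfy

: $R \mathbf{u} = \mathbf{u}$

since the rotation of $\mathbf{u}$ around the rotation axis must result in $\mathbf{u}$. The equation above may be solved for $\mathbf{u}$, which is unique up to a scalar factor unless $R = I$.

Further, the equation may be rewritten

: $R \mathbf{u} = I \mathbf{u} \implies (R - I)\,\mathbf{u} = \mathbf{0},$

which shows that $\mathbf{u}$ lies in the null space of $R - I$.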
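One way to compute the axis numerically follows from $(R - I)\,\mathbf{u} = \mathbf{0}$. Every row of $R - I$ is orthogonal to $\mathbf{u}$, and for a $3 \times 3$ rotation other than $I$ those rows span a plane, so the cross product of two independent rows is parallel to the axis. The Python sketch below keeps the largest of the three row cross products for numerical robustness. The helper names are hypothetical, and the tolerance `eps` for deciding that $R$ is numerically the identity is an assumed value. The sign of the returned vector is arbitrary, reflecting the scalar-factor freedom in the solution.

```python
import math


def cross(a, b):
    """Cross product of two 3-vectors."""
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]


def rotation_axis(R, eps=1e-9):
    """Hypothetical helper: unit u with (R - I) u = 0 for a 3x3 rotation R != I."""
    # Rows of R - I; each row is orthogonal to the axis u.
    rows = [[R[i][j] - (1.0 if i == j else 0.0) for j in range(3)] for i in range(3)]
    # Any cross product of two independent rows is parallel to u.
    candidates = [cross(rows[0], rows[1]), cross(rows[0], rows[2]), cross(rows[1], rows[2])]
    best = max(candidates, key=lambda v: sum(c * c for c in v))
    norm = math.sqrt(sum(c * c for c in best))
    if norm < eps:
        raise ValueError("R is numerically the identity; the axis is not unique")
    return [c / norm for c in best]
```

Unlike formulas built from the antisymmetric part $R - R^\mathsf{T}$, this approach also works for half-turns ($\theta = \pi$), where that part vanishes.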

The interpretation of a rotation matrix can be subject to many ambiguities.

In most cases the effect of the ambiguity is equivalent to the effect of a rotation matrix inversion (for these orthogonal matrices, equivalently, the matrix transpose).
