Neural cryptography on:

[Wikipedia]

[Google]

[Amazon]

Neural cryptography is a branch of

One example of a public-key protocol is given by Khalil Shihab. He describes the decryption scheme and the public key creation that are based on a backpropagation neural network. The encryption scheme and the private key creation process are based on Boolean algebra. This technique has the advantage of small time and memory complexities. A disadvantage is the property of backpropagation algorithms: because of huge training sets, the learning phase of a neural network is very long. Therefore, the use of this protocol is only theoretical so far.

: If the scalar product is 0, the output of the hidden neuron is mapped to −1 in order to ensure a binary output value. The output of neural network is then computed as the multiplication of all values produced by hidden elements:

: Output of the tree parity machine is binary.

This method is known as a bidirectional learning.

One of the following learning rules can be used for the synchronization: * Hebbian learning rule: : * Anti-Hebbian learning rule: : * Random walk: : Where: : if otherwise And: : is a function that keeps the in the range

: The output of neural network with two or more hidden neurons can be computed as the exclusive or of the values produced by hidden elements:

: Other configurations of the output layer for K>2 are also possible. This machine has proven to be robust enough against some attacks so it could be used as a cryptographic mean, but it has been shown to be vulnerable to a probabilistic attack.

Neuro-Cryptography

1995 - The first definition of the Neuro-Cryptography (AI Neural-Cryptography) applied to DES cryptanalysis by Sebastien Dourlens, France.

- Description of one kind of neural cryptography at the

Neural Synchronization and Cryptography

- Andreas Ruttor. PhD thesis, Bayerische Julius-Maximilians-Universität Würzburg, 2006. * * {{Cryptography navbox Theory of cryptography Artificial neural networks

cryptography

Cryptography, or cryptology (from grc, , translit=kryptós "hidden, secret"; and ''graphein'', "to write", or ''-logia'', "study", respectively), is the practice and study of techniques for secure communication in the presence of adver ...

dedicated to analyzing the application of stochastic

Stochastic (, ) refers to the property of being well described by a random probability distribution. Although stochasticity and randomness are distinct in that the former refers to a modeling approach and the latter refers to phenomena themselv ...

algorithms, especially artificial neural network

Artificial neural networks (ANNs), usually simply called neural networks (NNs) or neural nets, are computing systems inspired by the biological neural networks that constitute animal brains.

An ANN is based on a collection of connected unit ...

algorithms, for use in encryption

In cryptography, encryption is the process of encoding information. This process converts the original representation of the information, known as plaintext, into an alternative form known as ciphertext. Ideally, only authorized parties can decip ...

and cryptanalysis

Cryptanalysis (from the Greek ''kryptós'', "hidden", and ''analýein'', "to analyze") refers to the process of analyzing information systems in order to understand hidden aspects of the systems. Cryptanalysis is used to breach cryptographic sec ...

.

Definition

Artificial neural network

Artificial neural networks (ANNs), usually simply called neural networks (NNs) or neural nets, are computing systems inspired by the biological neural networks that constitute animal brains.

An ANN is based on a collection of connected unit ...

s are well known for their ability to selectively explore the solution space of a given problem. This feature finds a natural niche of application in the field of cryptanalysis

Cryptanalysis (from the Greek ''kryptós'', "hidden", and ''analýein'', "to analyze") refers to the process of analyzing information systems in order to understand hidden aspects of the systems. Cryptanalysis is used to breach cryptographic sec ...

. At the same time, neural networks offer a new approach to attack ciphering algorithms based on the principle that any function could be reproduced by a neural network, which is a powerful proven computational tool that can be used to find the inverse-function of any cryptographic algorithm.

The ideas of mutual learning, self learning, and stochastic behavior of neural networks and similar algorithms can be used for different aspects of cryptography, like public-key cryptography

Public-key cryptography, or asymmetric cryptography, is the field of cryptographic systems that use pairs of related keys. Each key pair consists of a public key and a corresponding private key. Key pairs are generated with cryptographic alg ...

, solving the key

Key or The Key may refer to:

Common meanings

* Key (cryptography), a piece of information that controls the operation of a cryptography algorithm

* Key (lock), device used to control access to places or facilities restricted by a lock

* Key (map ...

distribution problem using neural network mutual synchronization, hashing or generation of pseudo-random numbers.

Another idea is the ability of a neural network to separate space in non-linear pieces using "bias". It gives different probabilities of activating the neural network or not. This is very useful in the case of Cryptanalysis.

Two names are used to design the same domain of research: Neuro-Cryptography and Neural Cryptography.

The first work that it is known on this topic can be traced back to 1995 in an IT Master Thesis.

Applications

In 1995, Sebastien Dourlens applied neural networks to cryptanalyzeDES

Des is a masculine given name, mostly a short form (hypocorism) of Desmond. People named Des include:

People

* Des Buckingham, English football manager

* Des Corcoran, (1928–2004), Australian politician

* Des Dillon (disambiguation), sever ...

by allowing the networks to learn how to invert the S-tables of the DES. The bias in DES studied through Differential Cryptanalysis by Adi Shamir is highlighted. The experiment shows about 50% of the key bits can be found, allowing the complete key to be found in a short time. Hardware application with multi micro-controllers have been proposed due to the easy implementation of multilayer neural networks in hardware.One example of a public-key protocol is given by Khalil Shihab. He describes the decryption scheme and the public key creation that are based on a backpropagation neural network. The encryption scheme and the private key creation process are based on Boolean algebra. This technique has the advantage of small time and memory complexities. A disadvantage is the property of backpropagation algorithms: because of huge training sets, the learning phase of a neural network is very long. Therefore, the use of this protocol is only theoretical so far.

Neural key exchange protocol

The most used protocol for key exchange between two parties and in the practice isDiffie–Hellman key exchange

Diffie–Hellman key exchangeSynonyms of Diffie–Hellman key exchange include:

* Diffie–Hellman–Merkle key exchange

* Diffie–Hellman key agreement

* Diffie–Hellman key establishment

* Diffie–Hellman key negotiation

* Exponential key exc ...

protocol. Neural key exchange, which is based on the synchronization of two tree parity machines, should be a secure replacement for this method.

Synchronizing these two machines is similar to synchronizing two chaotic oscillators in chaos communications.

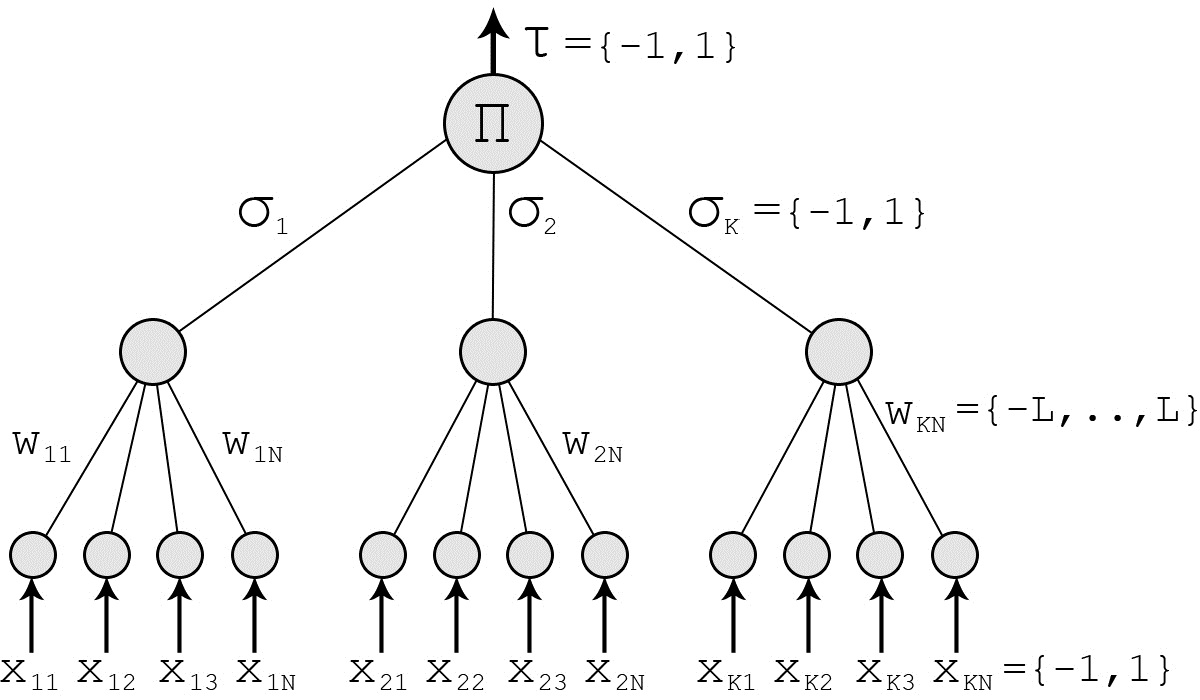

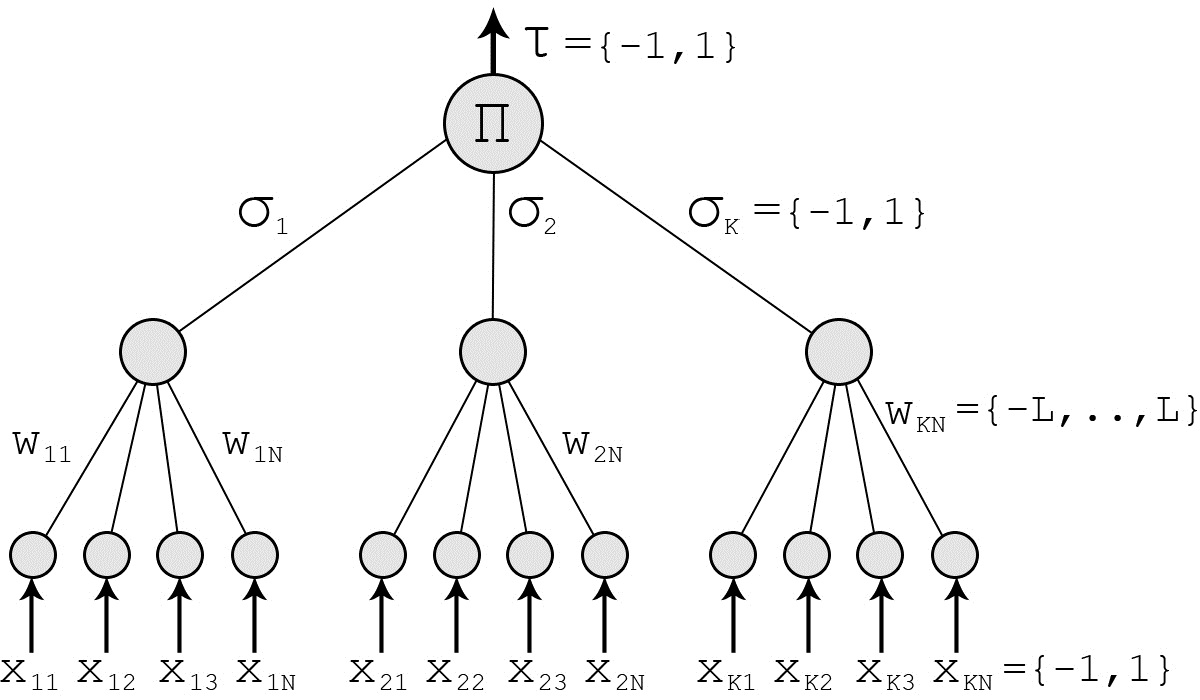

Tree parity machine

The tree parity machine is a special type of multi-layerfeedforward neural network

A feedforward neural network (FNN) is an artificial neural network wherein connections between the nodes do ''not'' form a cycle. As such, it is different from its descendant: recurrent neural networks.

The feedforward neural network was the ...

.

It consists of one output neuron, hidden neurons and × input neurons. Inputs to the network take three values:

:

The weights between input and hidden neurons take the values:

:

Output value of each hidden neuron is calculated as a sum of all multiplications of input neurons and these weights:

:

Signum is a simple function, which returns −1,0 or 1: : If the scalar product is 0, the output of the hidden neuron is mapped to −1 in order to ensure a binary output value. The output of neural network is then computed as the multiplication of all values produced by hidden elements:

: Output of the tree parity machine is binary.

Protocol

Each party ( and ) uses its own tree parity machine. Synchronization of the tree parity machines is achieved in these steps # Initialize random weight values # Execute these steps until the full synchronization is achieved ## Generate random input vector ## Compute the values of the hidden neurons ## Compute the value of the output neuron ## Compare the values of both tree parity machines ### Outputs are the same: one of the suitable learning rules is applied to the weights ### Outputs are different: go to 2.1 After the full synchronization is achieved (the weights wij of both tree parity machines are same), and can use their weights as keys.This method is known as a bidirectional learning.

One of the following learning rules can be used for the synchronization: * Hebbian learning rule: : * Anti-Hebbian learning rule: : * Random walk: : Where: : if otherwise And: : is a function that keeps the in the range

Attacks and security of this protocol

In every attack it is considered, that the attacker can eavesdrop messages between the parties and , but does not have an opportunity to change them.Brute force

To provide a brute force attack, an attacker has to test all possible keys (all possible values of weights wij). By hidden neurons, × input neurons and boundary of weights , this gives (2L+1)KN possibilities. For example, the configuration = 3, = 3 and = 100 gives us 3*10253 key possibilities, making the attack impossible with today's computer power.Learning with own tree parity machine

One of the basic attacks can be provided by an attacker, who owns the same tree parity machine as the parties and . He wants to synchronize his tree parity machine with these two parties. In each step there are three situations possible: # Output(A) ≠ Output(B): None of the parties updates its weights. # Output(A) = Output(B) = Output(E): All the three parties update weights in their tree parity machines. # Output(A) = Output(B) ≠ Output(E): Parties and update their tree parity machines, but the attacker can not do that. Because of this situation his learning is slower than the synchronization of parties and . It has been proven, that the synchronization of two parties is faster than learning of an attacker. It can be improved by increasing of the synaptic depth L of the neural network. That gives this protocol enough security and an attacker can find out the key only with small probability.Other attacks

For conventional cryptographic systems, we can improve the security of the protocol by increasing of the key length. In the case of neural cryptography, we improve it by increasing of the synaptic depth of the neural networks. Changing this parameter increases the cost of a successful attack exponentially, while the effort for the users grows polynomially. Therefore, breaking the security of neural key exchange belongs to the complexity class NP. Alexander Klimov, Anton Mityaguine, and Adi Shamir say that the original neural synchronization scheme can be broken by at least three different attacks—geometric, probabilistic analysis, and using genetic algorithms. Even though this particular implementation is insecure, the ideas behind chaotic synchronization could potentially lead to a secure implementation.Permutation parity machine

The permutation parity machine is a binary variant of the tree parity machine. It consists of one input layer, one hidden layer and one output layer. The number of neurons in the output layer depends on the number of hidden units K. Each hidden neuron has N binary input neurons: : The weights between input and hidden neurons are also binary: : Output value of each hidden neuron is calculated as a sum of all exclusive disjunctions (exclusive or) of input neurons and these weights: : (⊕ means XOR). The function is a threshold function, which returns 0 or 1:: The output of neural network with two or more hidden neurons can be computed as the exclusive or of the values produced by hidden elements:

: Other configurations of the output layer for K>2 are also possible. This machine has proven to be robust enough against some attacks so it could be used as a cryptographic mean, but it has been shown to be vulnerable to a probabilistic attack.

Security against quantum computers

Aquantum computer

Quantum computing is a type of computation whose operations can harness the phenomena of quantum mechanics, such as superposition, interference, and entanglement. Devices that perform quantum computations are known as quantum computers. Though ...

is a device that uses quantum mechanisms for computation. In this device the data are stored as qubits (quantum binary digits). That gives a quantum computer in comparison with a conventional computer the opportunity to solve complicated problems in a short time, e.g. discrete logarithm problem or factorization. Algorithms that are not based on any of these number theory problems are being searched because of this property.

Neural key exchange protocol is not based on any number theory. It is based on the difference between unidirectional and bidirectional synchronization of neural networks. Therefore, something like the neural key exchange protocol could give rise to potentially faster key exchange schemes.

See also

*Neural Network

A neural network is a network or circuit of biological neurons, or, in a modern sense, an artificial neural network, composed of artificial neurons or nodes. Thus, a neural network is either a biological neural network, made up of biological ...

* Stochastic neural network

Artificial neural networks (ANNs), usually simply called neural networks (NNs) or neural nets, are computing systems inspired by the biological neural networks that constitute animal brains.

An ANN is based on a collection of connected units ...

* Shor's algorithm

Shor's algorithm is a quantum algorithm, quantum computer algorithm for finding the prime factors of an integer. It was developed in 1994 by the American mathematician Peter Shor.

On a quantum computer, to factor an integer N , Shor's algorithm ...

References

Neuro-Cryptography

1995 - The first definition of the Neuro-Cryptography (AI Neural-Cryptography) applied to DES cryptanalysis by Sebastien Dourlens, France.

- Description of one kind of neural cryptography at the

University of Würzburg

The Julius Maximilian University of Würzburg (also referred to as the University of Würzburg, in German ''Julius-Maximilians-Universität Würzburg'') is a public research university in Würzburg, Germany. The University of Würzburg is one of ...

, Germany.

* - One of the leading papers that introduce the concept of using synchronized neural networks to achieve a public key authentication system.

* - Possible practical application of Neural Cryptography.

* - Analysis of neural cryptography in general and focusing on the weakness and possible attacks of using synchronized neural networks.

Neural Synchronization and Cryptography

- Andreas Ruttor. PhD thesis, Bayerische Julius-Maximilians-Universität Würzburg, 2006. * * {{Cryptography navbox Theory of cryptography Artificial neural networks