
Sparse Graph Code

A sparse graph code is a code that is represented by a sparse graph. Any linear code can be represented as a graph with two sets of nodes: one set representing the transmitted bits and another representing the constraints that the transmitted bits must satisfy. The state-of-the-art classical error-correcting codes are based on sparse graphs and achieve performance close to the Shannon limit. The archetypal sparse-graph codes are Gallager's low-density parity-check codes. External links: the on-line textbook Information Theory, Inference, and Learning Algorithms by David J.C. MacKay, which discusses sparse-graph codes in Chapters 47–50; Encyclopedia of Sparse Graph Codes; Iterative Error Correction: Turbo, Low-Density Parity-Chec ...
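The two-node-set representation described in this entry can be sketched in a few lines of Python. This is only an illustration: the parity-check matrix `H` below is a made-up toy example, and the helper names `tanner_graph` and `satisfies_all_checks` are hypothetical, not taken from any library.

```python
def tanner_graph(H):
    """Build adjacency lists for the bipartite graph of parity-check matrix H.

    Row i of H is a constraint (check) node, column j is a bit node, and
    H[i][j] == 1 means there is an edge between check i and bit j.
    """
    n_checks, n_bits = len(H), len(H[0])
    check_to_bits = [[j for j in range(n_bits) if H[i][j]] for i in range(n_checks)]
    bit_to_checks = [[i for i in range(n_checks) if H[i][j]] for j in range(n_bits)]
    return bit_to_checks, check_to_bits


def satisfies_all_checks(check_to_bits, word):
    """A word is a codeword when every check sees an even number of ones."""
    return all(sum(word[j] for j in bits) % 2 == 0 for bits in check_to_bits)


# Toy parity-check matrix (an assumption for illustration): 3 checks, 6 bits.
H = [
    [1, 1, 0, 1, 0, 0],
    [0, 1, 1, 0, 1, 0],
    [1, 0, 1, 0, 0, 1],
]
bit_to_checks, check_to_bits = tanner_graph(H)
num_edges = sum(len(bits) for bits in check_to_bits)

print(check_to_bits)  # bits attached to each constraint node
print(bit_to_checks)  # constraints attached to each bit node
print(num_edges, "edges out of", len(H) * len(H[0]), "possible")
```

The graph is "sparse" when the number of edges grows much more slowly than the number of possible bit-check pairs; a matrix this small only hints at that property.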

Code

In communications and information processing, code is a system of rules to convert information (such as a letter, word, sound, image, or gesture) into another form, sometimes shortened or secret, for communication through a communication channel or storage in a storage medium. An early example is the invention of language, which enabled a person, through speech, to communicate what they thought, saw, heard, or felt to others. But speech limits the range of communication to the distance a voice can carry and limits the audience to those present when the speech is uttered. The invention of writing, which converted spoken language into visual symbols, extended the range of communication across space and time. The process of encoding converts information from a source into symbols for communication or storage. Decoding is the reverse process, converting code symbols back into a form that the recipient understands, such as English or Spanish. One reason for coding is t ...

Sparse Graph

In mathematics, a dense graph is a graph in which the number of edges is close to the maximal number of edges (where every pair of vertices is connected by one edge). The opposite, a graph with only a few edges, is a sparse graph. The distinction between dense and sparse graphs is ill-defined and depends on context. The graph density of a simple graph is defined as the ratio of the number of edges to the maximum possible number of edges. For undirected simple graphs, the graph density is $D = \frac{|E|}{\binom{|V|}{2}} = \frac{2|E|}{|V|(|V|-1)}$. For directed simple graphs, the maximum possible number of edges is twice that of undirected graphs (as there are two directions to an edge), so the density is $D = \frac{|E|}{2\binom{|V|}{2}} = \frac{|E|}{|V|(|V|-1)}$, where $|E|$ is the number of edges and $|V|$ is the number of vertices in the graph. The maximum number of edges for an undirected graph is $\binom{|V|}{2} = \frac{|V|(|V|-1)}{2}$, so the maximal density is 1 (for complete graphs) and the minimal density is 0. Upper density. ''Upper density'' is an extension of the concept of g ...
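As a quick check of the density definitions in this entry, the following Python sketch computes the density of undirected and directed simple graphs from their vertex and edge counts. The function names are hypothetical and chosen only for this illustration.

```python
def undirected_density(num_vertices, num_edges):
    """Density of an undirected simple graph: edges / (V * (V - 1) / 2)."""
    if num_vertices < 2:
        raise ValueError("density is undefined for fewer than two vertices")
    max_edges = num_vertices * (num_vertices - 1) / 2
    return num_edges / max_edges


def directed_density(num_vertices, num_edges):
    """Density of a directed simple graph: edges / (V * (V - 1))."""
    if num_vertices < 2:
        raise ValueError("density is undefined for fewer than two vertices")
    return num_edges / (num_vertices * (num_vertices - 1))


# A complete undirected graph on 4 vertices has 6 edges.
print(undirected_density(4, 6))
# A path on 5 vertices has 4 of the 10 possible edges.
print(undirected_density(5, 4))
# A complete directed graph on 4 vertices has 12 edges.
print(directed_density(4, 12))
```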

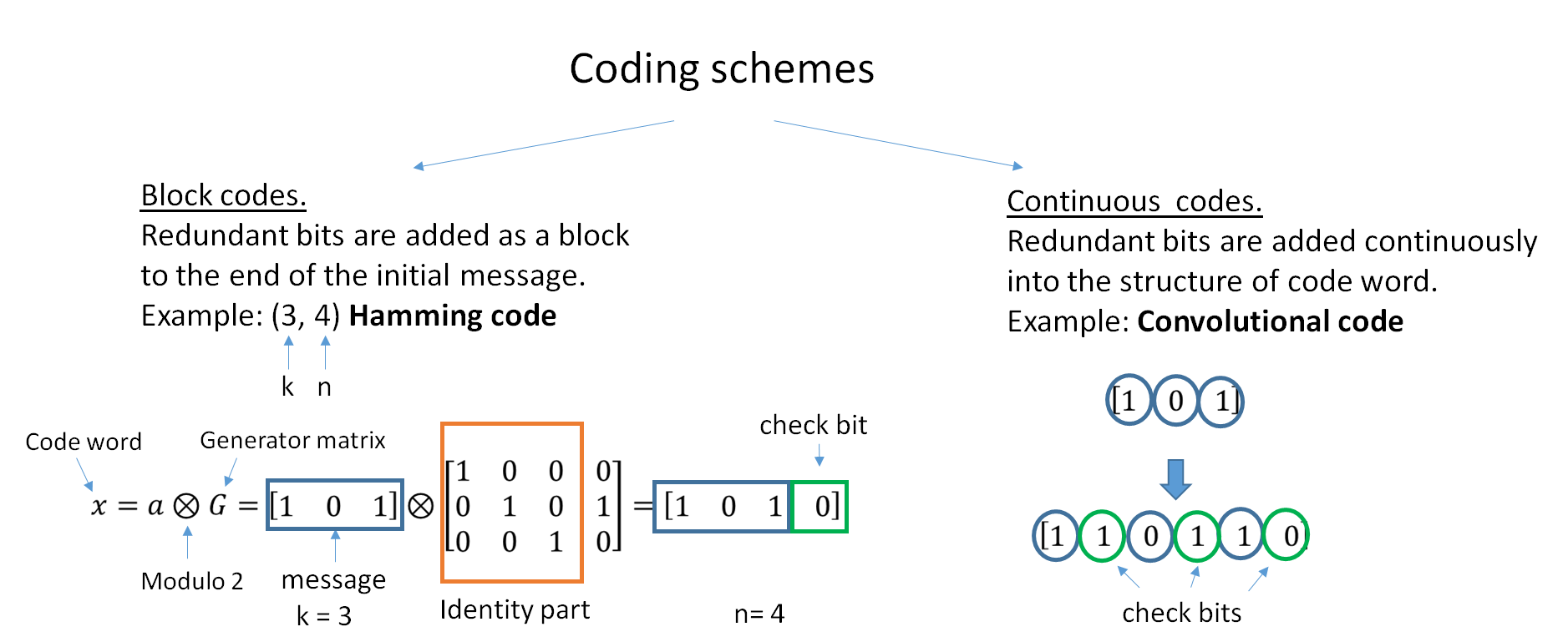

Linear Code

In coding theory, a linear code is an error-correcting code for which any linear combination of codewords is also a codeword. Linear codes are traditionally partitioned into block codes and convolutional codes, although turbo codes can be seen as a hybrid of these two types. Linear codes allow for more efficient encoding and decoding algorithms than other codes (cf. syndrome decoding). Linear codes are used in forward error correction and are applied in methods for transmitting symbols (e.g., bits) on a communications channel so that, if errors occur in the communication, some errors can be corrected or detected by the recipient of a message block. The codewords in a linear block code are blocks of symbols that are encoded using more symbols than the original value to be sent. A linear code of length ''n'' transmits blocks containing ''n'' symbols. For example, the [7,4,3] Hamming code is a linear binary code which represents 4-bit messages using 7-bit codewords. Two distinct ...
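The defining property of a linear code can be checked by brute force on the [7,4,3] Hamming code mentioned in this entry. The sketch below assumes one common systematic generator matrix `G`; other equivalent choices exist, and the helper names are hypothetical.

```python
from itertools import product

# One systematic generator matrix for a [7,4,3] Hamming code (an assumed
# choice; the first four columns copy the message, the last three are parity).
G = [
    [1, 0, 0, 0, 1, 1, 0],
    [0, 1, 0, 0, 1, 0, 1],
    [0, 0, 1, 0, 0, 1, 1],
    [0, 0, 0, 1, 1, 1, 1],
]


def encode(message):
    """Multiply a 4-bit message by G over GF(2) to get a 7-bit codeword."""
    return tuple(
        sum(m * g for m, g in zip(message, column)) % 2 for column in zip(*G)
    )


def add(a, b):
    """Add two binary words over GF(2), i.e. bitwise XOR."""
    return tuple(x ^ y for x, y in zip(a, b))


codewords = {encode(m) for m in product((0, 1), repeat=4)}

# Linearity: the sum of any two codewords is again a codeword.
is_linear = all(add(a, b) in codewords for a in codewords for b in codewords)

# For a linear code, the minimum distance equals the minimum nonzero weight.
min_distance = min(sum(c) for c in codewords if any(c))

print(len(codewords), is_linear, min_distance)
```

Because the code is linear, checking the weight of each nonzero codeword is enough to find the minimum distance; there is no need to compare every pair.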

Error-correcting Code

In computing, telecommunication, information theory, and coding theory, an error correction code, sometimes error-correcting code (ECC), is used for controlling errors in data over unreliable or noisy communication channels. The central idea is that the sender encodes the message with redundant information in the form of an ECC. The redundancy allows the receiver to detect a limited number of errors that may occur anywhere in the message, and often to correct these errors without retransmission. The American mathematician Richard Hamming pioneered this field in the 1940s and invented the first error-correcting code in 1950: the Hamming (7,4) code. ECC contrasts with error detection in that errors that are encountered can be corrected, not simply detected. The advantage is that a system using ECC does not require a reverse channel to request retransmission of data when an error occurs. The downside is that there is a fixed overhead that is added to the message, thereby requiring a ...

Shannon Limit

In information theory, the noisy-channel coding theorem (sometimes Shannon's theorem or Shannon's limit) establishes that for any given degree of noise contamination of a communication channel, it is possible to communicate discrete data (digital information) nearly error-free up to a computable maximum rate through the channel. This result was presented by Claude Shannon in 1948 and was based in part on earlier work and ideas of Harry Nyquist and Ralph Hartley. The Shannon limit or Shannon capacity of a communication channel refers to the maximum rate of error-free data that can theoretically be transferred over the channel if the link is subject to random data transmission errors, for a particular noise level. It was first described by Shannon (1948), and shortly after published in a book by Shannon and Warren Weaver entitled ''The Mathematical Theory of Communication'' (1949). This founded the modern discipline of information theory. Overview. Stated by Claude Shann ...
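To make the "computable maximum rate" concrete, here is a small sketch for one standard textbook channel that the entry itself does not discuss: the binary symmetric channel, which flips each transmitted bit independently with probability `p` and has capacity 1 − H₂(p) bits per channel use. The choice of channel and the function names are assumptions made for illustration.

```python
from math import log2


def binary_entropy(p):
    """Binary entropy H2(p) in bits; defined as 0 at p = 0 and p = 1."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * log2(p) - (1 - p) * log2(1 - p)


def bsc_capacity(p):
    """Capacity of a binary symmetric channel with crossover probability p."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be a probability")
    return 1.0 - binary_entropy(p)


for p in (0.0, 0.01, 0.11, 0.5):
    print(f"p = {p:<4}  capacity = {bsc_capacity(p):.4f} bits per channel use")
```

A noiseless channel (p = 0) carries one bit per use, while at p = 0.5 the output is independent of the input and the capacity drops to zero.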

Robert G

The name Robert is an ancient Germanic given name, from Proto-Germanic elements meaning "fame" and "bright" (''Hrōþiberhtaz''). Compare Old Dutch ''Robrecht'' and Old High German ''Hrodebert'' (a compound of ''Hruod'' (Old Norse: ''Hróðr'') "fame, glory, honour, praise, renown" and ''berht'' "bright, light, shining"). It is the second most frequently used given name of ancient Germanic origin. It is also in use as a surname. Another commonly used form of the name is Rupert. After becoming widely used in Continental Europe it entered England in its Old French form ''Robert'', where an Old English cognate form (''Hrēodbēorht'', ''Hrodberht'', ''Hrēodbēorð'', ''Hrœdbœrð'', ''Hrœdberð'', ''Hrōðberχtŕ'') had existed before the Norman Conquest. The feminine version is Roberta. The Italian, Portuguese, and Spanish form is Roberto. Robert is also a common name in many Germanic languages, including English, German, Dutch, Norwegian, Swedish, Scots, Danish, and Icelandic. It can be ...

Low-density Parity-check Code

In information theory, a low-density parity-check (LDPC) code is a linear error-correcting code, a method of transmitting a message over a noisy transmission channel. An LDPC code is constructed using a sparse Tanner graph (a subclass of the bipartite graph). LDPC codes are capacity-approaching codes, which means that practical constructions exist that allow the noise threshold to be set very close to the theoretical maximum (the Shannon limit) for a symmetric memoryless channel. The noise threshold defines an upper bound for the channel noise, up to which the probability of lost information can be made as small as desired. Using iterative belief propagation techniques, LDPC codes can be decoded in time linear in their block length. LDPC codes are finding increasing use in applications requiring reliable and highly efficient information transfer over bandwidth-constrained or return-channel-constrained links in the presence of corrupting noise. Implementation of LDPC codes has l ...
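The flavour of iterative decoding on a parity-check graph can be shown with a much simpler stand-in for belief propagation: hard-decision bit flipping. The sketch below is not the belief propagation algorithm named in this entry, and the code it uses (a 3 × 3 single-parity-check product code, where each bit sits in one row check and one column check) is a toy chosen for illustration rather than a real LDPC construction. All function names are hypothetical.

```python
def product_code_checks(n=3):
    """Checks of an n x n single-parity-check product code.

    Bits are laid out row by row in an n x n grid; each check is a list of
    bit indices whose XOR must be 0 (one check per row, one per column).
    """
    rows = [[r * n + c for c in range(n)] for r in range(n)]
    cols = [[r * n + c for r in range(n)] for c in range(n)]
    return rows + cols


def bit_flip_decode(checks, received, max_iters=10):
    """Repeatedly flip bits for which most attached checks are failing."""
    word = list(received)
    # Number of checks each bit takes part in.
    degree = [0] * len(word)
    for check in checks:
        for bit in check:
            degree[bit] += 1
    for _ in range(max_iters):
        failing = [c for c in checks if sum(word[b] for b in c) % 2]
        if not failing:
            break  # every parity check is satisfied
        votes = [0] * len(word)
        for check in failing:
            for bit in check:
                votes[bit] += 1
        # Flip only bits with a strict majority of failing checks.
        flips = [b for b in range(len(word)) if 2 * votes[b] > degree[b]]
        if not flips:
            break  # no bit is clearly at fault; give up
        for b in flips:
            word[b] ^= 1
    return word


checks = product_code_checks(3)
codeword = [1, 1, 0, 1, 1, 0, 0, 0, 0]  # every row and column has even parity
received = list(codeword)
received[8] ^= 1  # a single bit error introduced by the channel
decoded = bit_flip_decode(checks, received)
print(decoded == codeword)
```

Each iteration touches every edge of the graph a constant number of times, which is why the per-iteration cost of this kind of decoder scales with the number of edges, and hence with the block length when the graph is sparse.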

David J

David John Haskins (born 24 April 1957, Northampton, Northamptonshire, England), better known as David J, is a British alternative rock musician, producer, and writer. He is the bassist for the gothic rock band Bauhaus and for Love and Rockets. He has composed the scores for a number of plays and films, and also wrote and directed his own plays, ''Silver for Gold (The Odyssey of Edie Sedgwick)'', in 2008, which was restaged at REDCAT in Los Angeles in 2011, and ''The Chanteuse and The Devil's Muse'' in 2011. His artwork has been shown in galleries internationally, and he has been a resident DJ at venues such as the Knitting Factory. David J has released a number of singles and solo albums, and in 1990 he released one of the first No. 1 hits on the then nascent Modern Rock Tracks charts, with "I'll Be Your Chauffeur". His most recent single, "The Day That David Bowie Died" entered the UK vinyl singles chart at number 4 in 2016. The track appears on his double album, ''Vaga ...

Matrix Theory

In mathematics, a matrix (plural matrices) is a rectangular array or table of numbers, symbols, or expressions, arranged in rows and columns, which is used to represent a mathematical object or a property of such an object. For example, $\begin{bmatrix}1 & 9 & -13 \\ 20 & 5 & -6\end{bmatrix}$ is a matrix with two rows and three columns. This is often referred to as a "two by three matrix", a "$2 \times 3$ matrix", or a matrix of dimension $2 \times 3$. Without further specifications, matrices represent linear maps, and allow explicit computations in linear algebra. Therefore, the study of matrices is a large part of linear algebra, and most properties and operations of abstract linear algebra can be expressed in terms of matrices. For example, matrix multiplication represents composition of linear maps. Not all matrices are related to linear algebra. This is, in particular, the case in graph theory, of incidence matrices, and adjacency matrices. ''This article focuses on matrices related to linear algebra, and, un ...