
Semantic Compression

In natural language processing, semantic compression is a process of compacting a lexicon used to build a textual document (or a set of documents) by reducing language heterogeneity, while maintaining text semantics. As a result, the same ideas can be represented using a smaller set of words. In most applications, semantic compression is a lossy compression. Increased prolixity does not compensate for the lexical compression and an original document cannot be reconstructed in a reverse process.

By generalization

Semantic compression is basically achieved in two steps, using frequency dictionaries and a semantic network:

1. determining cumulated term frequencies to identify the target lexicon,
2. replacing less frequent terms with their hypernyms (generalization) from the target lexicon.

Step 1 requires assembling word frequencies and information on semantic relationships, specifically hyponymy. Moving upwards in the word hierarchy, a cumulative concept frequency is calculated by adding a su ...
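The two steps described above can be illustrated with a minimal Python sketch. It is not a reference implementation: the toy hierarchy `HYPERNYM`, the function names, and the rule that a term's count is added to every ancestor are all assumptions made for illustration, and a real system would draw the hierarchy from a semantic network and the counts from a frequency dictionary.

```python
from collections import Counter

# Hypothetical toy hierarchy: term -> its hypernym (None marks the root).
HYPERNYM = {
    "poodle": "dog",
    "beagle": "dog",
    "dog": "animal",
    "cat": "animal",
    "animal": None,
}


def cumulative_frequencies(tokens, hypernym):
    """Step 1: credit each term's count to itself and to all its ancestors."""
    cumulative = Counter()
    for term, count in Counter(tokens).items():
        node = term
        while node is not None:
            cumulative[node] += count
            node = hypernym.get(node)
    return cumulative


def compress(tokens, hypernym, lexicon_size):
    """Step 2: replace terms outside the target lexicon with a hypernym."""
    cumulative = cumulative_frequencies(tokens, hypernym)
    # Assumed selection rule: keep the concepts with the highest cumulative counts.
    target = {term for term, _ in cumulative.most_common(lexicon_size)}
    compressed = []
    for token in tokens:
        node = token
        # Climb the hierarchy until a target term or the root is reached.
        while node not in target and hypernym.get(node) is not None:
            node = hypernym[node]
        compressed.append(node)
    return compressed


tokens = ["poodle", "dog", "beagle", "dog", "cat", "dog"]
compressed = compress(tokens, HYPERNYM, lexicon_size=2)
print(compressed)  # ['dog', 'dog', 'dog', 'dog', 'animal', 'dog']
```

The output uses two distinct words instead of four, and the original tokens cannot be recovered from it, which matches the lossy character of the method.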


Natural Language Processing

Natural language processing (NLP) is a subfield of computer science and especially artificial intelligence. It is primarily concerned with providing computers with the ability to process data encoded in natural language and is thus closely related to information retrieval, knowledge representation and computational linguistics, a subfield of linguistics. Major tasks in natural language processing are speech recognition, text classification, natural language understanding, and natural language generation.

History

Natural language processing has its roots in the 1950s. Already in 1950, Alan Turing published an article titled "Computing Machinery and Intelligence" which proposed what is now called the Turing test as a criterion of intelligence, though at the time that was not articulated as a problem separate from artificial intelligence. The proposed test includes a task that involves the automated interpretation and generation of natural language ...


Precision And Recall

In pattern recognition, information retrieval, object detection and classification, precision and recall are performance metrics that apply to data retrieved from a collection, corpus or sample space. Precision (also called positive predictive value) is the fraction of relevant instances among the retrieved instances. Written as a formula:

$$\text{Precision} = \frac{\text{relevant retrieved instances}}{\text{all retrieved instances}}$$

Recall (also known as sensitivity) is the fraction of relevant instances that were retrieved. Written as a formula:

$$\text{Recall} = \frac{\text{relevant retrieved instances}}{\text{all relevant instances}}$$

Both precision and recall are therefore based on relevance. Consider a computer program for recognizing dogs (the relevant element) in a digital photograph. Upon processing a picture which contains ten cats and twelve dogs, the program identifies eight dogs. Of the eight elements identified as dogs, only five actually are dogs (true positives), while the other three are cats (false positives). Seven dogs were missed (false negatives), and seven cats were correctly ex ...
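The dog-recognition example can be checked with a few lines of Python. This is only a sketch: the function name `precision_recall` and its parameter names are chosen here for illustration, and returning 0.0 for an empty denominator is an assumed convention rather than part of the definitions.

```python
def precision_recall(tp, fp, fn):
    """Return (precision, recall) from true/false positive and false negative counts."""
    retrieved = tp + fp  # everything the program labelled as a dog
    relevant = tp + fn   # every dog actually in the picture
    precision = tp / retrieved if retrieved else 0.0
    recall = tp / relevant if relevant else 0.0
    return precision, recall


# Counts from the example: 5 true positives, 3 false positives, 7 false negatives.
precision, recall = precision_recall(tp=5, fp=3, fn=7)
print(f"precision = {precision:.3f}, recall = {recall:.3f}")
# precision = 0.625, recall = 0.417
```

Precision is 5/8 because eight elements were retrieved, and recall is 5/12 because the picture contains twelve dogs.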


Information Retrieval Techniques

Information is an abstract concept that refers to something which has the power to inform. At the most fundamental level, it pertains to the interpretation (perhaps formally) of that which may be sensed, or their abstractions. Any natural process that is not completely random and any observable pattern in any medium can be said to convey some amount of information. Whereas digital signals and other data use discrete signs to convey information, other phenomena and artifacts such as analogue signals, poems, pictures, music or other sounds, and currents convey information in a more continuous form. Information is not knowledge itself, but the meaning that may be derived from a representation through interpretation. The concept of information is relevant or connected to various concepts, including constraint, communication, control, data, form, education, knowledge, meaning, understanding, mental stimuli, pattern, perception, proposition, representation, ...


Text Simplification

Text simplification is an operation used in natural language processing to change, enhance, classify, or otherwise process an existing body of human-readable text so that its grammar and structure are greatly simplified while the underlying meaning and information remain the same. Text simplification is an important area of research because of communication needs in an increasingly complex and interconnected world more dominated by science, technology, and new media. But natural human languages pose huge problems because they ordinarily contain large vocabularies and complex constructions that machines, no matter how fast and well-programmed, cannot easily process. However, researchers have discovered that, to reduce linguistic diversity, they can use methods of semantic compression to limit and simplify the set of words used in given texts.

Example

Text simplification is illustrated with an example used by Siddharthan (2006). The first sentence contains two relative clauses and one c ...


Quantities Of Information

The mathematical theory of information is based on probability theory and statistics, and measures information with several quantities of information. The choice of logarithmic base in the following formulae determines the unit of information entropy that is used. The most common unit of information is the bit, or more correctly the shannon, based on the binary logarithm. Although "bit" is more frequently used in place of "shannon", its name is not distinguished from the bit as used in data processing to refer to a binary value or stream regardless of its entropy (information content). Other units include the nat, based on the natural logarithm, and the hartley, based on the base 10 or common logarithm. In what follows, an expression of the form $p \log p$ is considered by convention to be equal to zero whenever $p$ is zero. This is justified because $\lim_{p \to 0^+} p \log p = 0$ for any logarithmic base.

Self-information

Shannon derived a measure of information content cal ...
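The effect of the logarithmic base and of the zero-probability convention can be seen in a short Python sketch. The function names `self_information` and `entropy` are chosen here for illustration, and the formulas used are the standard definitions $-\log p$ and $-\sum p \log p$, which are assumed because the passage above breaks off before stating them.

```python
import math


def self_information(p, base=2):
    """Information content of an outcome with probability p (0 < p <= 1)."""
    return -math.log(p, base)


def entropy(probs, base=2):
    """Entropy of a distribution; terms with p == 0 contribute zero by convention."""
    return -sum(p * math.log(p, base) for p in probs if p > 0)


dist = [0.5, 0.25, 0.25, 0.0]  # the zero-probability outcome is skipped

h_shannons = entropy(dist, base=2)       # unit: shannon (bit)
h_nats = entropy(dist, base=math.e)      # unit: nat
h_hartleys = entropy(dist, base=10)      # unit: hartley

print(h_shannons, h_nats, h_hartleys)
```

The three results describe the same quantity in different units: the value in nats is the value in shannons multiplied by $\ln 2$.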


Lexical Substitution

Lexical substitution is the task of identifying a substitute for a word in the context of a clause. For instance, given the following text: "After the *match*, replace any remaining fluid deficit to prevent chronic dehydration throughout the tournament", a substitute of *game* might be given. Lexical substitution is closely related to word sense disambiguation (WSD), in that both aim to determine the meaning of a word. However, while WSD consists of automatically assigning the appropriate sense from a fixed sense inventory, lexical substitution does not impose any constraint on which substitute to choose as the best representative for the word in context. By not prescribing the inventory, lexical substitution overcomes the issue of the granularity of sense distinctions and provides a level playing field for systems that automatically acquire word senses (a task referred to as Word Sense Induction).

Evaluation

In order to evaluate automatic systems on lexical su ...


Information Theory

Information theory is the mathematical study of the quantification, storage, and communication of information. The field was established and formalized by Claude Shannon in the 1940s, though early contributions were made in the 1920s through the works of Harry Nyquist and Ralph Hartley. It is at the intersection of electronic engineering, mathematics, statistics, computer science, neurobiology, physics, and electrical engineering. A key measure in information theory is entropy. Entropy quantifies the amount of uncertainty involved in the value of a random variable or the outcome of a random process. For example, identifying the outcome of a fair coin flip (which has two equally likely outcomes) provides less information (lower entropy, less uncertainty) than identifying the outcome from a roll of a die (which has six equally likely outcomes). Some other important measu ...
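The coin and die comparison can be made concrete with a short worked calculation. It assumes the standard entropy formula $H = -\sum_i p_i \log_2 p_i$ with base-2 logarithms, which the excerpt above does not state explicitly. For $n$ equally likely outcomes, each $p_i = 1/n$, so the sum reduces to $\log_2 n$:

$$
\begin{aligned}
H_{\text{coin}} &= -2 \cdot \tfrac{1}{2} \log_2 \tfrac{1}{2} = \log_2 2 = 1 \text{ bit}, \\
H_{\text{die}} &= -6 \cdot \tfrac{1}{6} \log_2 \tfrac{1}{6} = \log_2 6 \approx 2.585 \text{ bits}.
\end{aligned}
$$

The die roll therefore carries about 1.585 bits more uncertainty than the coin flip.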


Controlled Natural Language

Controlled natural languages (CNLs) are subsets of natural languages that are obtained by restricting the grammar and vocabulary in order to reduce or eliminate ambiguity and complexity. Traditionally, controlled languages fall into two major types: those that improve readability for human readers (e.g. non-native speakers), and those that enable reliable automatic semantic analysis of the language. Languages of the first type (often called "simplified" or "technical" languages), for example ASD Simplified Technical English, Caterpillar Technical English, and IBM's Easy English, are used in industry to increase the quality of technical documentation, and possibly to simplify the semi-automatic translation of the documentation. These languages restrict the writer by general rules such as "Keep sentences short", "Avoid the use of pronouns", "Only use dictionary-approved words", and "Use only the active voice". Languages of the second type have a formal syntax and formal semantics, ...


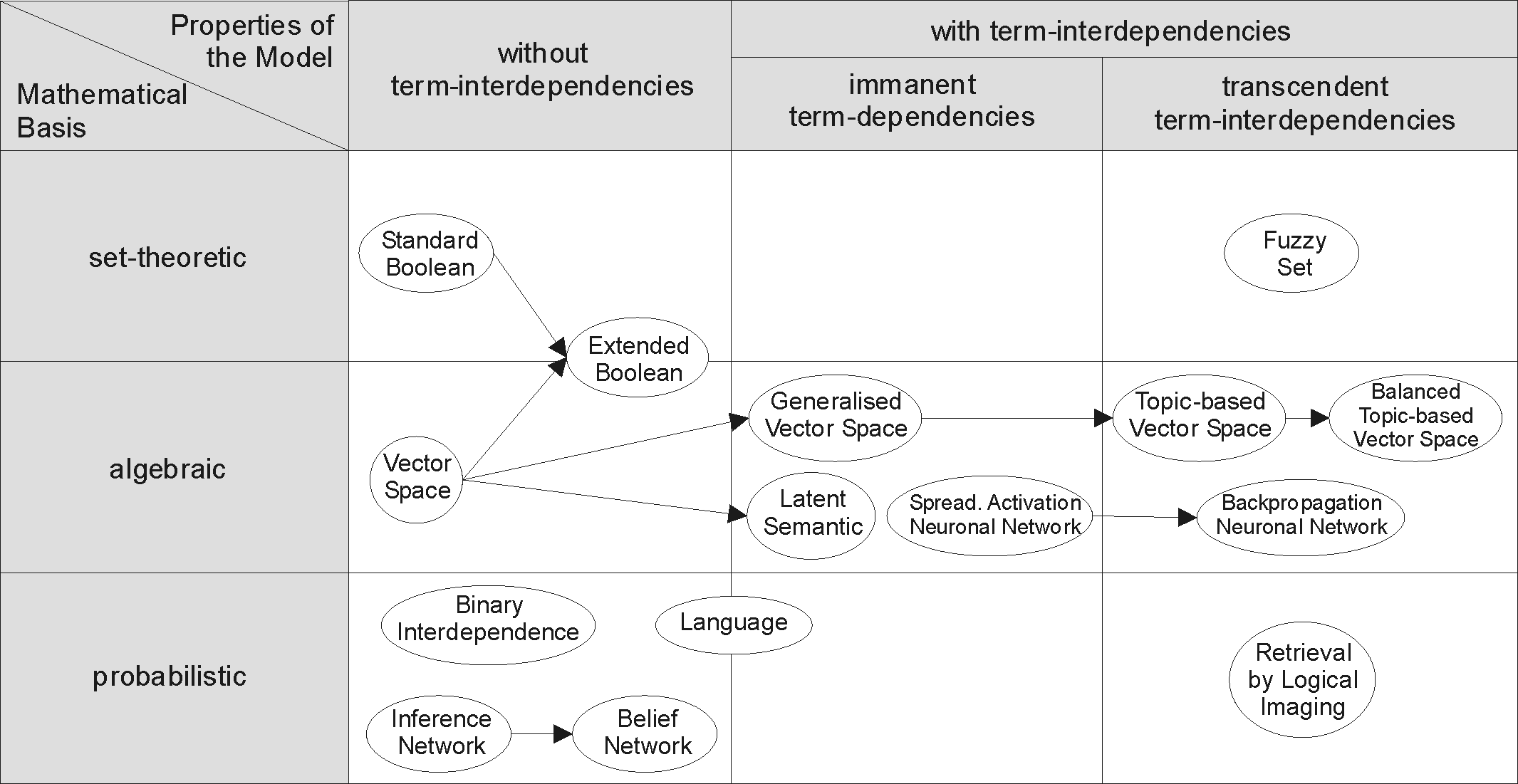

Information Retrieval

Information retrieval (IR) in computing and information science is the task of identifying and retrieving information system resources that are relevant to an information need. The information need can be specified in the form of a search query. In the case of document retrieval, queries can be based on full-text or other content-based indexing. Information retrieval is the science of searching for information in a document, searching for documents themselves, and also searching for the metadata that describes data, and for databases of texts, images or sounds. Automated information retrieval systems are used to reduce what has been called information overload. An IR system is a software system that provides access to books, journals and other documents; it also stores and manages those documents. Web search engines are the most visible IR applications.

Overview

An information retrieval process begins when a user enters a query into the sys ...


Semantics

Semantics is the study of linguistic meaning. It examines what meaning is, how words get their meaning, and how the meaning of a complex expression depends on its parts. Part of this process involves the distinction between sense and reference. Sense is given by the ideas and concepts associated with an expression while reference is the object to which an expression points. Semantics contrasts with syntax, which studies the rules that dictate how to create grammatically correct sentences, and pragmatics, which investigates how people use language in communication. Lexical semantics is the branch of semantics that studies word meaning. It examines whether words have one or several meanings and in what lexical relations they stand to one another. Phrasal semantics studies the meaning of sentences by exploring the phenomenon of compositionality or how new meanings can be created by arranging words. Formal semantics relies o ...


Computational Complexity Theory

In theoretical computer science and mathematics, computational complexity theory focuses on classifying computational problems according to their resource usage, and explores the relationships between these classifications. A computational problem is a task solved by a computer. A computational problem is solvable by mechanical application of mathematical steps, such as an algorithm. A problem is regarded as inherently difficult if its solution requires significant resources, whatever the algorithm used. The theory formalizes this intuition by introducing mathematical models of computation to study these problems and quantifying their computational complexity, i.e., the amount of resources needed to solve them, such as time and storage. Other measures of complexity are also used, such as the amount of communication (used in communication complexity), the number of gates in a circuit (used in circuit complexity) and the number of processors (used in parallel computing). O ...