
Non-stationary

In mathematics and statistics, a stationary process (also called a strict/strictly stationary process or strong/strongly stationary process) is a stochastic process whose statistical properties, such as mean and variance, do not change over time. More formally, the joint probability distribution of the process remains the same when shifted in time. This implies that the process is statistically consistent across different time periods. Because many statistical procedures in time series analysis assume stationarity, non-stationary data are frequently transformed to achieve stationarity before analysis. A common cause of non-stationarity is a trend in the mean, which can be due to either a unit root or a deterministic trend. In the case of a unit root, stochastic shocks have permanent effects, and the process is not mean-reverting. With a deterministic trend, the process is called trend-stationary, and shocks have only transitory effects, with the variable tending towards a determin ...

Differencing

In time series analysis used in statistics and econometrics, autoregressive integrated moving average (ARIMA) and seasonal ARIMA (SARIMA) models are generalizations of the autoregressive moving average (ARMA) model to non-stationary series and periodic variation, respectively. All these models are fitted to time series in order to better understand them and predict future values. The purpose of these generalizations is to fit the data as well as possible. Specifically, ARMA assumes that the series is stationary, that is, its expected value is constant in time. If instead the series has a trend (but a constant variance/autocovariance), the trend is removed by "differencing", leaving a stationary series. This operation generalizes ARMA and corresponds to the "integrated" part of ARIMA. Analogously, periodic variation is removed by "seasonal differencing". Components: As in ARMA, the "autoregressive" (AR) part of ARIMA indicates that the evolving variable of interest is regressed on ...
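The differencing and seasonal differencing operations described above can be sketched in a few lines. This is a minimal illustration, not a full ARIMA implementation; the helper name `difference` and the toy series are assumptions made for the example.

```python
def difference(series, lag=1):
    """Return the lag-differenced series y[t] - y[t - lag]."""
    if lag < 1:
        raise ValueError("lag must be a positive integer")
    return [series[t] - series[t - lag] for t in range(lag, len(series))]


# A purely linear trend: ordinary (lag-1) differencing leaves a constant.
trend = [2.0 * t + 5.0 for t in range(8)]
first_diff = difference(trend)

# A purely periodic pattern with period 4: seasonal (lag-4) differencing
# removes the repeating component entirely.
seasonal = [10, 20, 30, 40] * 3
seasonal_diff = difference(seasonal, lag=4)

print(first_diff)
print(seasonal_diff)
```

Real series contain noise as well as trend, so the differenced values would fluctuate around a constant rather than equal it exactly.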

Unit Root

In probability theory and statistics, a unit root is a feature of some stochastic processes (such as random walks) that can cause problems in statistical inference involving time series models. A linear stochastic process has a unit root if 1 is a root of the process's characteristic equation. Such a process is non-stationary but does not always have a trend. If the other roots of the characteristic equation lie inside the unit circle, that is, have a modulus (absolute value) less than one, then the first difference of the process will be stationary; otherwise, the process will need to be differenced multiple times to become stationary. If there are d unit roots, the process will have to be differenced d times in order to make it stationary. Due to this characteristic, unit root processes are also called difference stationary. Unit root processes may sometimes be confused with trend-stationary processes; while they share many properties, they are different in m ...
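As a rough illustration of the "d unit roots need d differences" statement, the sketch below builds a random walk (one unit root) and a doubly integrated series (two unit roots) from the same shocks, then differences them back. The seed, sample size, and variable names are assumptions chosen for the example.

```python
import random
from itertools import accumulate

rng = random.Random(0)  # fixed seed so the run is repeatable
shocks = [rng.gauss(0.0, 1.0) for _ in range(200)]

# One unit root: y_t = y_{t-1} + e_t, i.e. a cumulative sum of the shocks.
walk = list(accumulate(shocks))
# Two unit roots: integrate the random walk once more.
double_walk = list(accumulate(walk))


def diff(xs):
    """First difference: xs[t] - xs[t - 1]."""
    return [b - a for a, b in zip(xs, xs[1:])]


# Differencing once recovers the shocks from the walk (from index 1 on);
# the doubly integrated series needs two passes (from index 2 on).
recovered_once = diff(walk)
recovered_twice = diff(diff(double_walk))
```

Up to floating-point rounding, `recovered_once` matches `shocks[1:]` and `recovered_twice` matches `shocks[2:]`, so each series returns to stationary noise after as many differences as it has unit roots.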

Trend-stationary Process

In the statistical analysis of time series, a trend-stationary process is a stochastic process from which an underlying trend (function solely of time) can be removed, leaving a stationary process. The trend does not have to be linear. Conversely, if the process requires differencing to be made stationary, then it is called difference stationary and possesses one or more unit roots. Those two concepts may sometimes be confused, but while they share many properties, they are different in many aspects. It is possible for a time series to be non-stationary, yet have no unit root and be trend-stationary. In both unit root and trend-stationary processes, the mean can be growing or decreasing over time; however, in the presence of a shock, trend-stationary processes are mean-reverting (i.e. the effect is transitory: the time series will converge again towards the growing mean, which was not affected by the shock) while in unit-root processes the shock has a permanent impact on the mean (i.e. no convergence ove ...
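The contrast between transitory and permanent shocks can be made concrete with a deterministic sketch: apply a single unit shock to each kind of process and track how far the path sits from its unshocked baseline. The specific models (a linear trend plus AR(1) deviations, and a random walk with drift), the parameter values, and the function names are assumptions made for this illustration.

```python
def trend_stationary_path(n, shock_time, shock, slope=0.5, phi=0.6):
    """y_t = slope * t + u_t, with u_t = phi * u_{t-1} + e_t."""
    u, path = 0.0, []
    for t in range(n):
        e = shock if t == shock_time else 0.0
        u = phi * u + e
        path.append(slope * t + u)
    return path


def unit_root_path(n, shock_time, shock, drift=0.5):
    """y_t = y_{t-1} + drift + e_t (random walk with drift)."""
    y, path = 0.0, []
    for t in range(n):
        e = shock if t == shock_time else 0.0
        y = y + drift + e
        path.append(y)
    return path


def shock_effect(path_fn, n=40, shock_time=5, shock=1.0):
    """Gap between the shocked path and the unshocked baseline."""
    shocked = path_fn(n, shock_time, shock)
    baseline = path_fn(n, shock_time, 0.0)
    return [s - b for s, b in zip(shocked, baseline)]


ts_effect = shock_effect(trend_stationary_path)  # decays like phi ** k
ur_effect = shock_effect(unit_root_path)         # stays at 1 forever
```

In the trend-stationary case the gap shrinks geometrically and the path rejoins the trend line; in the unit-root case the whole future path is shifted by the size of the shock.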

Autoregressive Moving Average Model

In the statistical analysis of time series, autoregressive–moving-average (ARMA) models are a way to describe a (weakly) stationary stochastic process using autoregression (AR) and a moving average (MA), each with a polynomial. They are a tool for understanding a series and predicting future values. AR involves regressing the variable on its own lagged (i.e., past) values. MA involves modeling the error as a linear combination of error terms occurring contemporaneously and at various times in the past. The model is usually denoted ARMA(p, q), where p is the order of AR and q is the order of MA. The general ARMA model was described in the 1951 thesis of Peter Whittle, Hypothesis testing in time series analysis, and it was popularized in the 1970 book by George E. P. Box and Gwilym Jenkins. ARMA models can be estimated by using the Box–Jenkins method. Mathematical formulation: Autoregressive model: The notation AR(p) refers to the autoregressi ...
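A small sketch of the ARMA(p, q) recursion may help fix ideas. It assumes the common convention x_t = sum_i phi_i x_{t-i} + e_t + sum_j theta_j e_{t-j} with zero constant term (sign conventions for the MA coefficients vary between texts), and the function name `simulate_arma` is hypothetical.

```python
def simulate_arma(phi, theta, errors):
    """Generate x_t = sum_i phi[i] * x_{t-1-i} + e_t + sum_j theta[j] * e_{t-1-j}.

    phi holds the p AR coefficients, theta the q MA coefficients, and
    errors the sequence of error terms e_0, e_1, ...; values before
    time 0 are treated as zero.
    """
    xs = []
    for t, e in enumerate(errors):
        ar_part = sum(c * xs[t - 1 - i]
                      for i, c in enumerate(phi) if t - 1 - i >= 0)
        ma_part = sum(c * errors[t - 1 - j]
                      for j, c in enumerate(theta) if t - 1 - j >= 0)
        xs.append(ar_part + e + ma_part)
    return xs


# Response of an ARMA(1, 1) model to a single unit error at time 0.
impulse_response = simulate_arma(phi=[0.5], theta=[0.4],
                                 errors=[1.0, 0.0, 0.0, 0.0])
print(impulse_response)
```

Feeding in a single unit error shows both parts at work: the MA term contributes only at lag 1, while the AR term keeps halving the previous value.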

Autoregressive

In statistics, econometrics, and signal processing, an autoregressive (AR) model is a representation of a type of random process; as such, it can be used to describe certain time-varying processes in nature, economics, behavior, etc. The autoregressive model specifies that the output variable depends linearly on its own previous values and on a stochastic term (an imperfectly predictable term); thus the model is in the form of a stochastic difference equation (or recurrence relation) which should not be confused with a differential equation. Together with the moving-average (MA) model, it is a special case and key component of the more general autoregressive–moving-average (ARMA) and autoregressive integrated moving average (ARIMA) models of time series, which have a more complicated stochastic structure; it is also a special case of the vector autoregressive model (VAR), which consists of a system of more than one interlocking stochastic difference equation in more than one ev ...

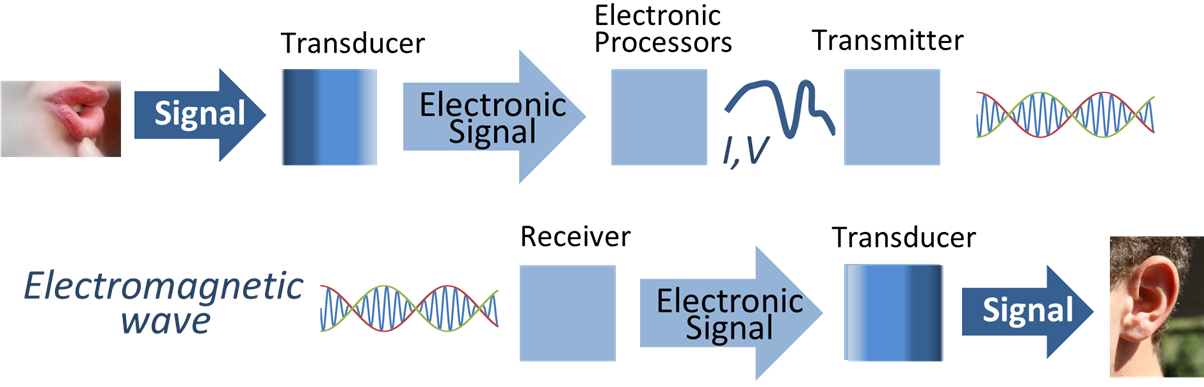

Signal Processing

Signal processing is an electrical engineering subfield that focuses on analyzing, modifying and synthesizing signals, such as sound, images, potential fields, seismic signals, altimetry processing, and scientific measurements. Signal processing techniques are used to optimize transmissions, improve digital storage efficiency, correct distorted signals, improve subjective video quality, and detect or pinpoint components of interest in a measured signal. History: According to Alan V. Oppenheim and Ronald W. Schafer, the principles of signal processing can be found in the classical numerical analysis techniques of the 17th century. They further state that the digital refinement of these techniques can be found in the digital control systems of the 1940s and 1950s. In 1948, Claude Shannon wrote the influential paper "A Mathematical Theory of Communication" which was publis ...

Time Series Analysis

In mathematics, a time series is a series of data points indexed (or listed or graphed) in time order. Most commonly, a time series is a sequence taken at successive equally spaced points in time. Thus it is a sequence of discrete-time data. Examples of time series are heights of ocean tides, counts of sunspots, and the daily closing value of the Dow Jones Industrial Average. A time series is very frequently plotted via a run chart (which is a temporal line chart). Time series are used in statistics, signal processing, pattern recognition, econometrics, mathematical finance, weather forecasting, earthquake prediction, electroencephalography, control engineering, astronomy, communications engineering, and largely in any domain of applied science and engineering which involves temporal measurements. Time series analysis comprises methods for analyzing time series data in order to extract meaningful statistics and other characteristics of the data. Time series forec ...

Uniform Distribution (continuous)

In probability theory and statistics, the continuous uniform distributions or rectangular distributions are a family of symmetric probability distributions. Such a distribution describes an experiment where there is an arbitrary outcome that lies between certain bounds. The bounds are defined by the parameters, a and b, which are the minimum and maximum values. The interval can either be closed (i.e. [a,b]) or open (i.e. (a,b)). Therefore, the distribution is often abbreviated U(a,b), where U stands for uniform distribution. The difference between the bounds defines the interval length; all intervals of the same length on the distribution's support are equally probable. It is the maximum entropy probability distribution for a random variable X under no constraint other than that it is contained in the distribution's support. Definitions: Probability density function: The probability density function of the continuous uniform distribution is f(x) = \begin \dfrac & ...
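The statement that equal-length intervals within the support are equally probable follows from the probability of a sub-interval being its length divided by b - a. A minimal sketch, with a hypothetical helper name and example bounds chosen for illustration:

```python
def uniform_interval_probability(a, b, c, d):
    """P(c <= X <= d) for X ~ U(a, b); [c, d] is clipped to the support."""
    if not a < b:
        raise ValueError("require a < b")
    lo, hi = max(a, c), min(b, d)
    return max(0.0, hi - lo) / (b - a)


# Two intervals of length 2 inside the support [0, 10] get the same mass.
p_left = uniform_interval_probability(0, 10, 1, 3)
p_right = uniform_interval_probability(0, 10, 6, 8)
print(p_left, p_right)
```

Only the part of an interval that overlaps the support counts, which is why the helper clips [c, d] to [a, b] before measuring its length.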

Ergodic Process

In physics, statistics, econometrics and signal processing, a stochastic process is said to be in an ergodic regime if an observable's ensemble average equals the time average. In this regime, any collection of random samples from a process must represent the average statistical properties of the entire regime. Conversely, a regime of a process that is not ergodic is said to be in non-ergodic regime. A regime implies a time-window of a process whereby ergodicity measure is applied. Specific definitions: One can discuss the ergodicity of various statistics of a stochastic process. For example, a wide-sense stationary process X(t) has constant mean \mu_X = E[X(t)] and autocovariance r_X(\tau) = E[(X(t)-\mu_X)(X(t+\tau)-\mu_X)] that depends only on the lag \tau and not on time t. The properties \mu_X and r_X(\tau) are ensemble averages (calculated over all possible sample functions X), not time averages. The process X(t) is said to be mean-ergodic (Papoulis, p. 428) or m ...
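The difference between time averages and ensemble averages can be sketched with two toy processes. Both examples are assumptions chosen for illustration: independent Gaussian noise, whose time average approaches the ensemble mean, and a "frozen" process X(t) = A with A drawn once as +1 or -1, which is stationary but not mean-ergodic.

```python
import random
from statistics import fmean

rng = random.Random(42)  # fixed seed so the run is repeatable
N = 20_000

# Independent Gaussian noise: the time average along one realization
# should be close to the ensemble mean of 0.
noise_path = [rng.gauss(0.0, 1.0) for _ in range(N)]
noise_time_avg = fmean(noise_path)


def frozen_path(n, rng):
    """One realization of X(t) = A for all t, with A = +1 or -1."""
    a = rng.choice([-1.0, 1.0])
    return [a] * n


# The time average of a single frozen realization is exactly +1 or -1 ...
frozen_time_avg = fmean(frozen_path(N, rng))
# ... while the ensemble average over many realizations at a fixed time
# is close to 0, so time and ensemble averages disagree.
frozen_ensemble_avg = fmean(frozen_path(1, rng)[0] for _ in range(N))

print(noise_time_avg, frozen_time_avg, frozen_ensemble_avg)
```

No matter how long a single frozen realization is observed, its time average never moves toward the ensemble mean, which is what failing to be mean-ergodic looks like in practice.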

Bernoulli Scheme

In mathematics, the Bernoulli scheme or Bernoulli shift is a generalization of the Bernoulli process to more than two possible outcomes. Bernoulli schemes appear naturally in symbolic dynamics, and are thus important in the study of dynamical systems. Many important dynamical systems (such as Axiom A systems) exhibit a repellor that is the product of the Cantor set and a smooth manifold, and the dynamics on the Cantor set are isomorphic to that of the Bernoulli shift. This is essentially the Markov partition. The term shift is in reference to the shift operator, which may be used to study Bernoulli schemes. The Ornstein isomorphism theorem shows that Bernoulli shifts are isomorphic when their entropy is equal. Definition: A Bernoulli scheme is a discrete-time stochastic process where each independent random variable may take on one of N distinct possible values, with the outcome i occurring with probability p_i, with i = 1, ..., N, and : ...

White Noise

In signal processing, white noise is a random signal having equal intensity at different frequencies, giving it a constant power spectral density. The term is used with this or similar meanings in many scientific and technical disciplines, including physics, acoustical engineering, telecommunications, and statistical forecasting. White noise refers to a statistical model for signals and signal sources, not to any specific signal. White noise draws its name from white light, although light that appears white generally does not have a flat power spectral density over the visible band. In discrete time, white noise is a discrete signal whose samples are regarded as a sequence of serially uncorrelated random variables with zero mean and finite variance; a single realization of white noise is a random shock. In some contexts, it is also required that the samples be independent and have identical probability distribution (in other words independent and identically distribu ...
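The "serially uncorrelated" property of discrete-time white noise can be checked empirically with a sample autocorrelation. The sketch below uses Gaussian samples, which is one possible choice (white noise does not have to be Gaussian); the helper name, seed, and sample size are assumptions made for the example.

```python
import random
from statistics import fmean


def sample_autocorrelation(xs, lag):
    """Sample autocorrelation of xs at the given non-negative lag."""
    m = fmean(xs)
    dev = [x - m for x in xs]
    denom = sum(d * d for d in dev)
    num = sum(dev[t] * dev[t + lag] for t in range(len(xs) - lag))
    return num / denom


rng = random.Random(1)  # fixed seed so the run is repeatable
white = [rng.gauss(0.0, 1.0) for _ in range(5000)]

acf_lag0 = sample_autocorrelation(white, 0)  # 1 by construction
acf_lag1 = sample_autocorrelation(white, 1)  # near 0 for white noise
acf_lag5 = sample_autocorrelation(white, 5)  # near 0 for white noise
```

For a finite sample the nonzero-lag values are not exactly zero; with n samples they typically fluctuate on the order of 1/sqrt(n) around it.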