|

K-SVD

In applied mathematics, ''k''-SVD is a dictionary learning algorithm for creating a dictionary for sparse representations, via a singular value decomposition approach. ''k''-SVD is a generalization of the ''k''-means clustering method, and it works by iteratively alternating between sparse coding the input data based on the current dictionary, and updating the atoms in the dictionary to better fit the data. It is structurally related to the expectation–maximization (EM) algorithm. ''k''-SVD can be found widely in use in applications such as image processing, audio processing, biology, and document analysis. ''k''-SVD algorithm ''k''-SVD is a kind of generalization of ''k''-means, as follows. The ''k''-means clustering can be also regarded as a method of sparse representation. That is, finding the best possible codebook to represent the data samples \^M_ by nearest neighbor, by solving : \quad \min \limits _ \ \qquad \text \forall i, x_i = e_k \text k. which is near ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Dictionary Learning

Sparse dictionary learning (also known as sparse coding or SDL) is a representation learning method which aims to find a sparse representation of the input data in the form of a linear combination of basic elements as well as those basic elements themselves. These elements are called ''atoms'', and they compose a ''dictionary''. Atoms in the dictionary are not required to be orthogonal, and they may be an over-complete spanning set. This problem setup also allows the dimensionality of the signals being represented to be higher than any one of the signals being observed. These two properties lead to having seemingly redundant atoms that allow multiple representations of the same signal, but also provide an improvement in sparsity and flexibility of the representation. One of the most important applications of sparse dictionary learning is in the field of compressed sensing or signal recovery. In compressed sensing, a high-dimensional signal can be recovered with only a few linear me ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Singular Value Decomposition

In linear algebra, the singular value decomposition (SVD) is a Matrix decomposition, factorization of a real number, real or complex number, complex matrix (mathematics), matrix into a rotation, followed by a rescaling followed by another rotation. It generalizes the eigendecomposition of a square normal matrix with an orthonormal eigenbasis to any matrix. It is related to the polar decomposition#Matrix polar decomposition, polar decomposition. Specifically, the singular value decomposition of an m \times n complex matrix is a factorization of the form \mathbf = \mathbf, where is an complex unitary matrix, \mathbf \Sigma is an m \times n rectangular diagonal matrix with non-negative real numbers on the diagonal, is an n \times n complex unitary matrix, and \mathbf V^* is the conjugate transpose of . Such decomposition always exists for any complex matrix. If is real, then and can be guaranteed to be real orthogonal matrix, orthogonal matrices; in such contexts, the SVD ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

K-means Clustering

''k''-means clustering is a method of vector quantization, originally from signal processing, that aims to partition of a set, partition ''n'' observations into ''k'' clusters in which each observation belongs to the cluster (statistics), cluster with the nearest mean (cluster centers or cluster centroid), serving as a prototype of the cluster. This results in a partitioning of the data space into Voronoi cells. ''k''-means clustering minimizes within-cluster variances (squared Euclidean distances), but not regular Euclidean distances, which would be the more difficult Weber problem: the mean optimizes squared errors, whereas only the geometric median minimizes Euclidean distances. For instance, better Euclidean solutions can be found using k-medians clustering, ''k''-medians and k-medoids, ''k''-medoids. The problem is computationally difficult (NP-hardness, NP-hard); however, efficient heuristic algorithms converge quickly to a local optimum. These are usually similar to the ex ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

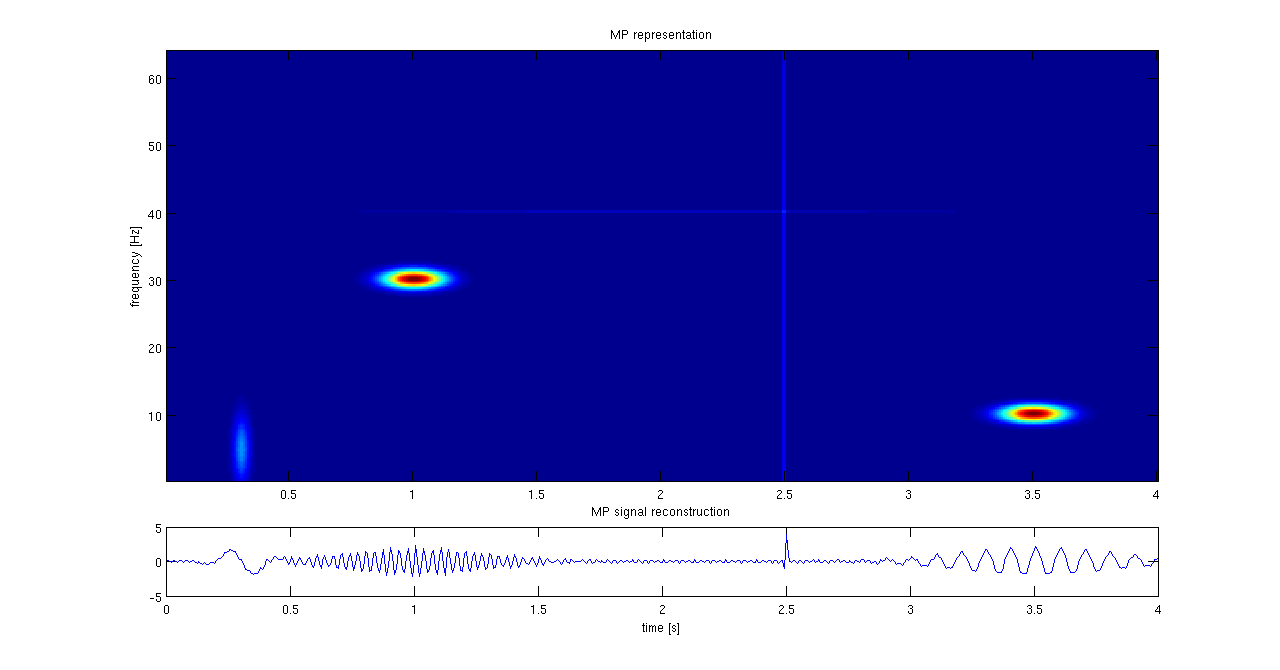

Matching Pursuit

Matching pursuit (MP) is a sparse approximation algorithm which finds the "best matching" projections of multidimensional data onto the span of an over-complete (i.e., redundant) dictionary D. The basic idea is to approximately represent a signal f from Hilbert space H as a weighted sum of finitely many functions g_ (called atoms) taken from D. An approximation with N atoms has the form : f(t) \approx \hat f_N(t) := \sum_^ a_n g_(t) where g_ is the \gamma_nth column of the matrix D and a_n is the scalar weighting factor (amplitude) for the atom g_. Normally, not every atom in D will be used in this sum. Instead, matching pursuit chooses the atoms one at a time in order to maximally (greedily) reduce the approximation error. This is achieved by finding the atom that has the highest inner product with the signal (assuming the atoms are normalized), subtracting from the signal an approximation that uses only that one atom, and repeating the process until the signal is satisfactoril ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Sparse Approximation

Sparse approximation (also known as sparse representation) theory deals with sparse solutions for systems of linear equations. Techniques for finding these solutions and exploiting them in applications have found wide use in image processing, signal processing, machine learning, medical imaging, and more. Sparse decomposition Noiseless observations Consider a linear system of equations x = D\alpha, where D is an underdetermined m\times p matrix (m < p) and . The matrix (typically assumed to be full-rank) is referred to as the dictionary, and is a signal of interest. The core sparse representation problem is defined as the quest for the sparsest possible representation satisfying . Due to the underdetermined nature of , this linear system admits in general infinitely many possible solutions, and among these we seek the one with the fewe ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Singular Value Decomposition

In linear algebra, the singular value decomposition (SVD) is a Matrix decomposition, factorization of a real number, real or complex number, complex matrix (mathematics), matrix into a rotation, followed by a rescaling followed by another rotation. It generalizes the eigendecomposition of a square normal matrix with an orthonormal eigenbasis to any matrix. It is related to the polar decomposition#Matrix polar decomposition, polar decomposition. Specifically, the singular value decomposition of an m \times n complex matrix is a factorization of the form \mathbf = \mathbf, where is an complex unitary matrix, \mathbf \Sigma is an m \times n rectangular diagonal matrix with non-negative real numbers on the diagonal, is an n \times n complex unitary matrix, and \mathbf V^* is the conjugate transpose of . Such decomposition always exists for any complex matrix. If is real, then and can be guaranteed to be real orthogonal matrix, orthogonal matrices; in such contexts, the SVD ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Applied Mathematics

Applied mathematics is the application of mathematics, mathematical methods by different fields such as physics, engineering, medicine, biology, finance, business, computer science, and Industrial sector, industry. Thus, applied mathematics is a combination of mathematical science and specialized knowledge. The term "applied mathematics" also describes the profession, professional specialty in which mathematicians work on practical problems by formulating and studying mathematical models. In the past, practical applications have motivated the development of mathematical theories, which then became the subject of study in pure mathematics where abstract concepts are studied for their own sake. The activity of applied mathematics is thus intimately connected with research in pure mathematics. History Historically, applied mathematics consisted principally of Mathematical analysis, applied analysis, most notably differential equations; approximation theory (broadly construed, ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Sparse Representation

Sparse is a computer software tool designed to find possible coding faults in the Linux kernel. Unlike other such tools, this static analysis tool was initially designed to only flag constructs that were likely to be of interest to kernel developers, such as the mixing of pointers to user and kernel address spaces. Sparse checks for known problems and allows the developer to include annotations in the code that convey information about data types, such as the address space that pointers point to and the locks that a function acquires or releases. Linus Torvalds started writing Sparse in 2003. Josh Triplett was its maintainer from 2006, a role taken over by Christopher Li in 2009 and by Luc Van Oostenryck in November 2018. Sparse is released under the MIT License. Annotations Some of the checks performed by Sparse require annotating the source code using the __attribute__ GCC extension, or the Sparse-specific __context__ specifier. Sparse defines the following list of attribu ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Expectation–maximization Algorithm

In statistics, an expectation–maximization (EM) algorithm is an iterative method to find (local) maximum likelihood or maximum a posteriori (MAP) estimates of parameters in statistical models, where the model depends on unobserved latent variables. The EM iteration alternates between performing an expectation (E) step, which creates a function for the expectation of the log-likelihood evaluated using the current estimate for the parameters, and a maximization (M) step, which computes parameters maximizing the expected log-likelihood found on the ''E'' step. These parameter-estimates are then used to determine the distribution of the latent variables in the next E step. It can be used, for example, to estimate a mixture of gaussians, or to solve the multiple linear regression problem. History The EM algorithm was explained and given its name in a classic 1977 paper by Arthur Dempster, Nan Laird, and Donald Rubin. They pointed out that the method had been "proposed man ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Nearest Neighbor Search

Nearest neighbor search (NNS), as a form of proximity search, is the optimization problem of finding the point in a given set that is closest (or most similar) to a given point. Closeness is typically expressed in terms of a dissimilarity function: the less similar the objects, the larger the function values. Formally, the nearest-neighbor (NN) search problem is defined as follows: given a set ''S'' of points in a space ''M'' and a query point ''q'' ∈ ''M'', find the closest point in ''S'' to ''q''. Donald Knuth in vol. 3 of '' The Art of Computer Programming'' (1973) called it the post-office problem, referring to an application of assigning to a residence the nearest post office. A direct generalization of this problem is a ''k''-NN search, where we need to find the ''k'' closest points. Most commonly ''M'' is a metric space and dissimilarity is expressed as a distance metric, which is symmetric and satisfies the triangle inequality. Even more common, ''M'' is take ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |

Frobenius Norm

In the field of mathematics, norms are defined for elements within a vector space. Specifically, when the vector space comprises matrices, such norms are referred to as matrix norms. Matrix norms differ from vector norms in that they must also interact with matrix multiplication. Preliminaries Given a field \ K\ of either real or complex numbers (or any complete subset thereof), let \ K^\ be the -vector space of matrices with m rows and n columns and entries in the field \ K ~. A matrix norm is a norm on \ K^~. Norms are often expressed with double vertical bars (like so: \ \, A\, \ ). Thus, the matrix norm is a function \ \, \cdot\, : K^ \to \R^\ that must satisfy the following properties: For all scalars \ \alpha \in K\ and matrices \ A, B \in K^\ , * \, A\, \ge 0\ (''positive-valued'') * \, A\, = 0 \iff A=0_ (''definite'') * \left\, \alpha\ A \right\, = \left, \alpha \\ \left\, A\right\, \ (''absolutely homogeneous'') * \, A + B \, \le \, A \, + \, ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] [Amazon] |