
Entropy Power Inequality

In information theory, the entropy power inequality (EPI) is a result about the so-called "entropy power" of random variables. It shows that the entropy power of suitably well-behaved random variables is a superadditive function. The entropy power inequality was proved in 1948 by Claude Shannon in his seminal paper "A Mathematical Theory of Communication". Shannon also provided a sufficient condition for equality to hold; Stam (1959) showed that the condition is in fact necessary. Statement of the inequality: For a random vector X : \Omega \to \mathbb{R}^n with probability density function f : \mathbb{R}^n \to \mathbb{R}, the differential entropy of X, denoted h(X), is defined to be h(X) = - \int_{\mathbb{R}^n} f(x) \log f(x) \, dx and the entropy power of X, denoted N(X), is defined to be N(X) = \frac{1}{2 \pi e} e^{\frac{2}{n} h(X)}. In particular, N(X) = |K|^{1/n} when X is normally distributed with covariance matrix K. Let X and Y be independent random variables with probability density functions in the L^p space L^p(\ma ...
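As a rough numerical illustration of superadditivity, the sketch below evaluates the entropy power of two independent 2-dimensional Gaussian vectors and of their sum, and checks N(X + Y) >= N(X) + N(Y). It relies on the standard closed form h(X) = (1/2) log((2 pi e)^n |K|) for a Gaussian, which is not part of the excerpt above; the covariance matrices and the helper names (`det2`, `add2`, `gaussian_entropy`, `entropy_power`) are illustrative assumptions.

```python
import math

def det2(m):
    """Determinant of a 2x2 matrix given as nested lists."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

def add2(a, b):
    """Entrywise sum of two 2x2 matrices."""
    return [[a[i][j] + b[i][j] for j in range(2)] for i in range(2)]

def gaussian_entropy(cov):
    """Differential entropy (nats) of a 2-dim Gaussian with covariance cov.
    Uses the closed form 0.5 * log((2*pi*e)^n * det(cov)) with n = 2."""
    n = 2
    return 0.5 * math.log((2 * math.pi * math.e) ** n * det2(cov))

def entropy_power(h, n):
    """Entropy power N = exp(2h/n) / (2*pi*e)."""
    return math.exp(2.0 * h / n) / (2 * math.pi * math.e)

# Hypothetical covariance matrices of two independent Gaussian vectors.
k_x = [[2.0, 0.5], [0.5, 1.0]]
k_y = [[1.0, 0.0], [0.0, 3.0]]

n_x = entropy_power(gaussian_entropy(k_x), 2)
n_y = entropy_power(gaussian_entropy(k_y), 2)
# For independent X and Y, the covariance of X + Y is k_x + k_y.
n_sum = entropy_power(gaussian_entropy(add2(k_x, k_y)), 2)

print(n_x, n_y, n_sum, n_sum >= n_x + n_y)
```

For Gaussians the entropy power reduces to |K|^{1/n}, so `n_x` should agree with the square root of the determinant of `k_x` up to rounding.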

Information Theory

Information theory is the mathematical study of the quantification, storage, and communication of information. The field was established and formalized by Claude Shannon in the 1940s, though early contributions were made in the 1920s through the works of Harry Nyquist and Ralph Hartley. It is at the intersection of electronic engineering, mathematics, statistics, computer science, neurobiology, physics, and electrical engineering. A key measure in information theory is entropy. Entropy quantifies the amount of uncertainty involved in the value of a random variable or the outcome of a random process. For example, identifying the outcome of a fair coin flip (which has two equally likely outcomes) provides less information (lower entropy, less uncertainty) than identifying the outcome from a roll of a die (which has six equally likely outcomes). Some other important measu ...
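The coin-versus-die comparison can be made concrete with a short worked calculation. It assumes entropy is measured in bits (base-2 logarithm), a choice the excerpt does not state:

```latex
H(\text{coin}) = -\sum_{i=1}^{2} \tfrac{1}{2} \log_2 \tfrac{1}{2} = \log_2 2 = 1 \text{ bit},
\qquad
H(\text{die}) = -\sum_{i=1}^{6} \tfrac{1}{6} \log_2 \tfrac{1}{6} = \log_2 6 \approx 2.585 \text{ bits}.
```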

Multivariate Normal

In probability theory and statistics, the multivariate normal distribution, multivariate Gaussian distribution, or joint normal distribution is a generalization of the one-dimensional (univariate) normal distribution to higher dimensions. One definition is that a random vector is said to be ''k''-variate normally distributed if every linear combination of its ''k'' components has a univariate normal distribution. Its importance derives mainly from the multivariate central limit theorem. The multivariate normal distribution is often used to describe, at least approximately, any set of (possibly) correlated real-valued random variables, each of which clusters around a mean value. Definitions: Notation and parametrization. The multivariate normal distribution of a ''k''-dimensional random vector \mathbf{X} = (X_1,\ldots,X_k)^{\mathrm{T}} can be written in the following notation: \mathbf{X}\ \sim\ \mathcal{N}(\boldsymbol\mu,\, \boldsymbol\Sigma), or to make it explicitly known that \mathbf{X} i ...
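The linear-combination definition can be sketched in code. If X is multivariate normal with mean vector mu and covariance Sigma, the combination a^T X is univariate normal with mean a^T mu and variance a^T Sigma a; this is a standard consequence of the definition rather than something spelled out in the excerpt. The function name `linear_combination_params` and the numbers are illustrative assumptions.

```python
def linear_combination_params(a, mu, sigma):
    """Mean and variance of a^T X when X ~ N(mu, sigma).

    a, mu: lists of length k; sigma: k x k covariance as nested lists.
    """
    k = len(a)
    mean = sum(a[i] * mu[i] for i in range(k))
    # Quadratic form a^T sigma a.
    var = sum(a[i] * sigma[i][j] * a[j] for i in range(k) for j in range(k))
    return mean, var

# Hypothetical 2-variate example.
mu = [1.0, -2.0]
sigma = [[4.0, 1.0], [1.0, 9.0]]
a = [2.0, -1.0]

mean, var = linear_combination_params(a, mu, sigma)
print(mean, var)
```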

Bell System Technical Journal

The ''Bell Labs Technical Journal'' was the in-house scientific journal for scientists of Bell Labs, published yearly by the IEEE society. The journal was originally established as ''The Bell System Technical Journal'' (BSTJ) in New York by the American Telephone and Telegraph Company (AT&T) in 1922. It was published under this name until 1983, when the breakup of the Bell System placed various parts of the companies in the system into independent corporate entities. The journal was devoted to the scientific fields and engineering disciplines practiced in the Bell System for improvements in the wide field of electrical communication. After the restructuring of Bell Labs in 1984, the journal was renamed to ''AT&T Bell Laboratories Technical Journal''. In 1985, it was published as the ''AT&T Technical Journal'' until 1996, when it was renamed to ''Bell Labs Technical Journal''. The journal was discontinued in 2020. The last managing editor was Charles Bahr. History The Bell System ...

Entropy Estimation

In various science/engineering applications, such as independent component analysis, image analysis, genetic analysis, speech recognition, manifold learning, and time delay estimation (Benesty, J.; Yiteng Huang; Jingdong Chen (2007). Time Delay Estimation via Minimum Entropy. In ''Signal Processing Letters'', Volume 14, Issue 3, March 2007, 157–160), it is useful to estimate the differential entropy of a system or process, given some observations. The simplest and most common approach uses histogram-based estimation, but other approaches have been developed and used, each with its own benefits and drawbacks (J. Beirlant, E. J. Dudewicz, L. Gyorfi, and E. C. van der Meulen (1997). Nonparametric entropy estimation: An overview. In ''International Journal of Mathematical and Statistical Sciences'', Volume 6, pp. 17–39). The main factor in choosing a method is often a trade-off between the bias and the variance of the estimate (T. Schürmann, Bias analysis in entropy estimation. In ''J. Ph ...
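A minimal sketch of the histogram-based approach mentioned above, under stated assumptions: equal-width bins over a known range [lo, hi], all samples inside that range, and the plug-in estimate h ≈ -sum_i p_i log(p_i / w), where p_i is the fraction of samples in bin i and w is the bin width. The function name and the test data are hypothetical, and no bias correction is attempted.

```python
import math

def histogram_entropy(samples, lo, hi, bins):
    """Plug-in histogram estimate of differential entropy, in nats.

    Assumes every sample lies in [lo, hi] and uses `bins` equal-width bins.
    """
    width = (hi - lo) / bins
    counts = [0] * bins
    for x in samples:
        # Clamp so that x == hi falls into the last bin.
        idx = min(int((x - lo) / width), bins - 1)
        counts[idx] += 1
    n = len(samples)
    h = 0.0
    for c in counts:
        if c:  # empty bins contribute nothing
            p = c / n
            h -= p * math.log(p / width)
    return h

# Evenly spaced points standing in for uniform samples on [0, 1) and [0, 2).
uniform_01 = [(i + 0.5) / 1000 for i in range(1000)]
uniform_02 = [2 * x for x in uniform_01]

est_01 = histogram_entropy(uniform_01, 0.0, 1.0, 10)
est_02 = histogram_entropy(uniform_02, 0.0, 2.0, 10)
print(est_01, est_02)
```

For a uniform density on an interval of length a the true differential entropy is log a, so the two estimates should land near 0 and log 2 respectively. With real data the result depends strongly on the bin count, which is one face of the bias/variance trade-off the paragraph refers to.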

Kullback–Leibler Divergence

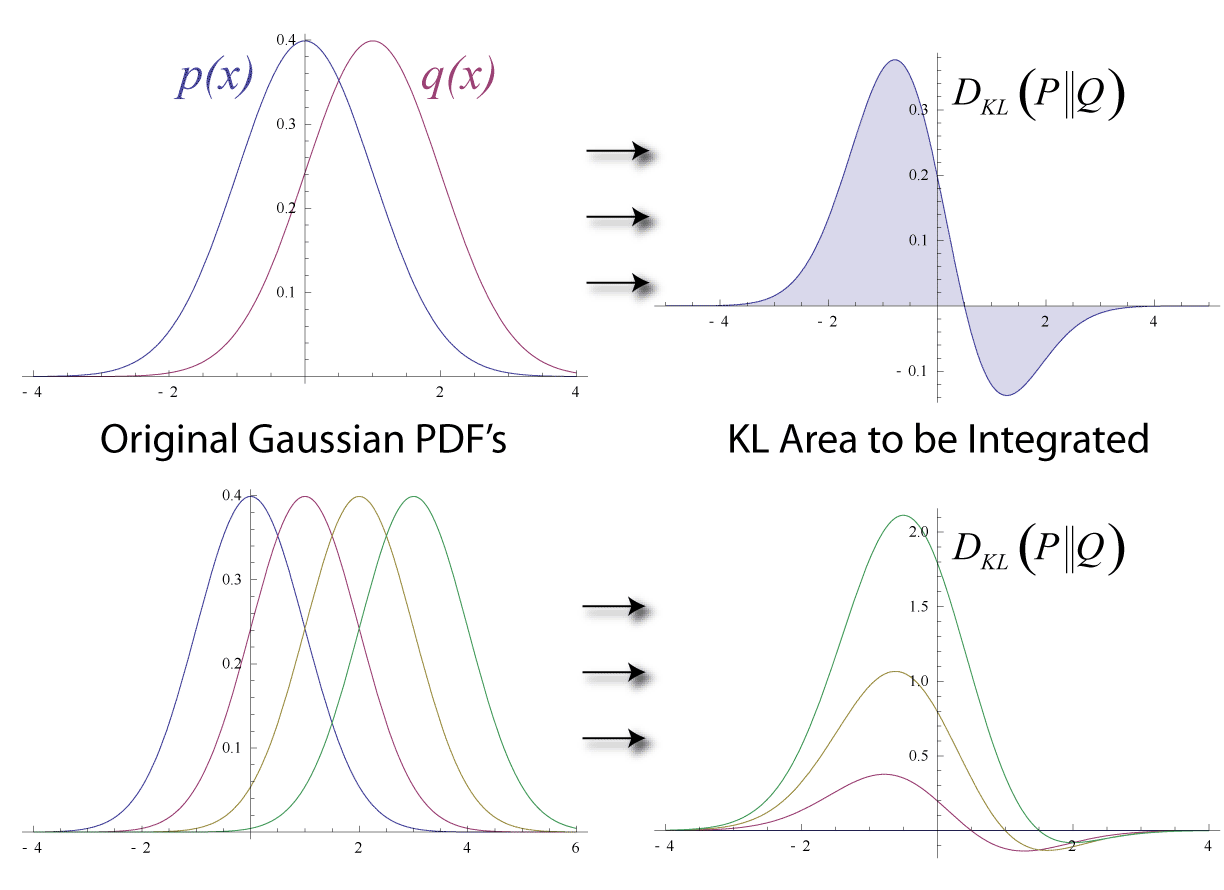

In mathematical statistics, the Kullback–Leibler (KL) divergence (also called relative entropy and I-divergence), denoted D_\text{KL}(P \parallel Q), is a type of statistical distance: a measure of how much a model probability distribution Q is different from a true probability distribution P. Mathematically, it is defined as D_\text{KL}(P \parallel Q) = \sum_{x \in \mathcal{X}} P(x) \, \log \frac{P(x)}{Q(x)}. A simple interpretation of the KL divergence of P from Q is the expected excess surprise from using Q as a model instead of P when the actual distribution is P. While it is a measure of how different two distributions are and is thus a distance in some sense, it is not actually a metric, which is the most familiar and formal type of distance. In particular, it is not symmetric in the two distributions (in contrast to variation of information), and does not satisfy the triangle inequality. Instead, in terms of information geometry, it is a type of divergence, a generalization of squared distance, and for cer ...
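The asymmetry noted above is easy to see numerically. The sketch below implements the discrete sum for two distributions given as probability lists over the same outcomes, using the natural logarithm; the function name `kl_divergence`, the handling of zero probabilities, and the example distributions are assumptions made for illustration.

```python
import math

def kl_divergence(p, q):
    """D_KL(P || Q) in nats for discrete distributions over the same outcomes."""
    if len(p) != len(q):
        raise ValueError("p and q must have the same length")
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0:
            continue  # terms with P(x) = 0 contribute nothing
        if qi == 0:
            return math.inf  # P puts mass where Q has none
        total += pi * math.log(pi / qi)
    return total

# Hypothetical distributions over two outcomes.
p = [0.5, 0.5]
q = [0.9, 0.1]

d_pq = kl_divergence(p, q)
d_qp = kl_divergence(q, p)
print(d_pq, d_qp)
```

Swapping the arguments changes the value, and `kl_divergence(p, p)` is zero, consistent with the description of a divergence that is not a metric.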

Self-information

In information theory, the information content, self-information, surprisal, or Shannon information is a basic quantity derived from the probability of a particular event occurring from a random variable. It can be thought of as an alternative way of expressing probability, much like odds or log-odds, but which has particular mathematical advantages in the setting of information theory. The Shannon information can be interpreted as quantifying the level of "surprise" of a particular outcome. As it is such a basic quantity, it also appears in several other settings, such as the length of a message needed to transmit the event given an optimal source coding of the random variable. The Shannon information is closely related to ''entropy'', which is the expected value of the self-information of a random variable, quantifying how surprising the random variable is "on average". This is the average amount of self-information an observer would expect to gain about a random variable wh ...
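A small sketch of the quantity itself, assuming the usual definition I(x) = -log p(x) (the excerpt describes it but does not write the formula) and base-2 logarithms so the result is in bits. It also checks one of the "mathematical advantages" alluded to: the surprisal of two independent events is the sum of their surprisals. The function name `surprisal_bits` is hypothetical.

```python
import math

def surprisal_bits(p):
    """Self-information -log2(p) of an event with probability p, in bits."""
    if not 0 < p <= 1:
        raise ValueError("p must be in (0, 1]")
    return -math.log2(p)

coin = surprisal_bits(1 / 2)   # one face of a fair coin
die = surprisal_bits(1 / 6)    # one face of a fair die
# Independent events multiply in probability, so their surprisals add.
both = surprisal_bits((1 / 2) * (1 / 6))

print(coin, die, both)
```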

Limiting Density Of Discrete Points

In information theory, the limiting density of discrete points is an adjustment to the formula of Claude Shannon for differential entropy. It was formulated by Edwin Thompson Jaynes to address defects in the initial definition of differential entropy. Definition: Shannon originally wrote down the following formula for the entropy of a continuous distribution, known as differential entropy: h(X)=-\int p(x)\log p(x)\,dx. Unlike Shannon's formula for the discrete entropy, however, this is not the result of any derivation (Shannon simply replaced the summation symbol in the discrete version with an integral), and it lacks many of the properties that make the discrete entropy a useful measure of uncertainty. In particular, it is not invariant under a change of variables and can become negative. In addition, it is not even dimensionally correct. Since h(X) would be dimensionless, p(x) must have units of \frac{1}{dx}, which means that the argument to the logarithm is not dimensionless ...
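Both defects named above, negativity and lack of invariance under a change of variables, show up in a short worked example. The uniform distribution and the scaling map Y = cX are illustrative choices, not taken from the excerpt:

```latex
X \sim \mathrm{Uniform}[0,a]: \quad
h(X) = -\int_0^a \frac{1}{a} \log \frac{1}{a} \, dx = \log a,
\qquad a = \tfrac{1}{2} \;\Rightarrow\; h(X) = -\log 2 < 0.

Y = cX,\ c \neq 0: \quad h(Y) = h(X) + \log |c|.
```

Rescaling the variable, for example changing its units, therefore shifts the differential entropy by log |c|, whereas a discrete entropy is unaffected by relabeling outcomes.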

Information Theory

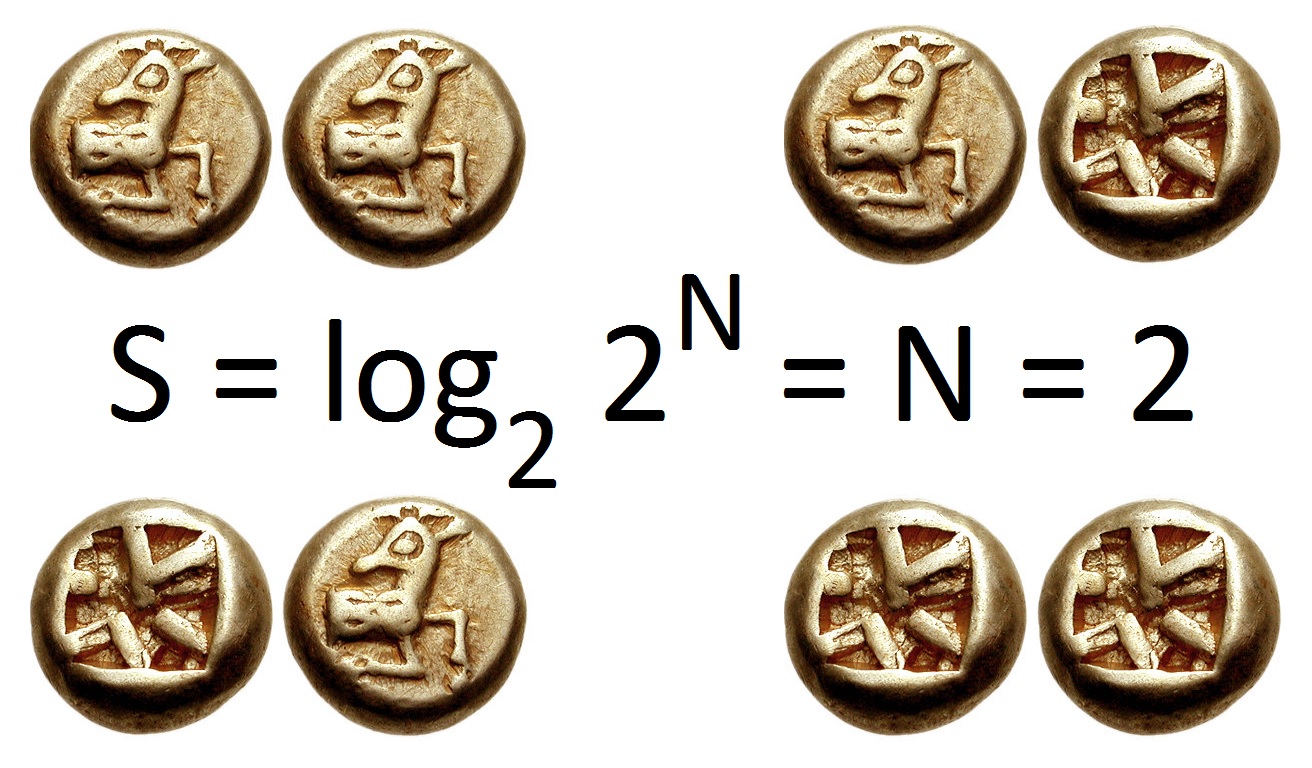

Information theory is the mathematical study of the quantification (science), quantification, Data storage, storage, and telecommunications, communication of information. The field was established and formalized by Claude Shannon in the 1940s, though early contributions were made in the 1920s through the works of Harry Nyquist and Ralph Hartley. It is at the intersection of electronic engineering, mathematics, statistics, computer science, Neuroscience, neurobiology, physics, and electrical engineering. A key measure in information theory is information entropy, entropy. Entropy quantifies the amount of uncertainty involved in the value of a random variable or the outcome of a random process. For example, identifying the outcome of a Fair coin, fair coin flip (which has two equally likely outcomes) provides less information (lower entropy, less uncertainty) than identifying the outcome from a roll of a dice, die (which has six equally likely outcomes). Some other important measu ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Information Entropy

In information theory, the entropy of a random variable quantifies the average level of uncertainty or information associated with the variable's potential states or possible outcomes. This measures the expected amount of information needed to describe the state of the variable, considering the distribution of probabilities across all potential states. Given a discrete random variable X, which may be any member x within the set \mathcal{X} and is distributed according to p\colon \mathcal{X}\to [0, 1], the entropy is \mathrm{H}(X) := -\sum_{x \in \mathcal{X}} p(x) \log p(x), where \Sigma denotes the sum over the variable's possible values. The choice of base for \log, the logarithm, varies for different applications. Base 2 gives the unit of bits (or "shannons"), while base ''e'' gives "natural units" nat, and base 10 gives units of "dits", "bans", or "hartleys". An equivalent definition of entropy is the expected value of the self-information of a variable. The concept of information entropy was ...
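The effect of the logarithm base can be sketched as follows: the same distribution is evaluated in bits, nats, and hartleys. The function name `entropy`, the convention that zero-probability outcomes contribute nothing, and the fair-die example are assumptions for illustration.

```python
import math

def entropy(probs, base=2.0):
    """Shannon entropy -sum p log p of a discrete distribution.

    base=2 gives bits, base=math.e gives nats, base=10 gives hartleys.
    Outcomes with probability zero are skipped (0 log 0 taken as 0).
    """
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    return -sum(p * math.log(p, base) for p in probs if p > 0)

fair_die = [1 / 6] * 6

bits = entropy(fair_die, 2.0)
nats = entropy(fair_die, math.e)
hartleys = entropy(fair_die, 10.0)
print(bits, nats, hartleys)
```

The three values describe the same uncertainty and differ only by constant factors, for example nats = bits × ln 2.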

Covariance Matrix

In probability theory and statistics, a covariance matrix (also known as auto-covariance matrix, dispersion matrix, variance matrix, or variance–covariance matrix) is a square matrix giving the covariance between each pair of elements of a given random vector. Intuitively, the covariance matrix generalizes the notion of variance to multiple dimensions. As an example, the variation in a collection of random points in two-dimensional space cannot be characterized fully by a single number, nor would the variances in the x and y directions contain all of the necessary information; a 2 \times 2 matrix would be necessary to fully characterize the two-dimensional variation. Any covariance matrix is symmetric and positive semi-definite and its main diagonal contains variances (i.e., the covariance of each element with itself). The covariance matrix of a random vector \mathbf{X} is typically denoted by \operatorname{K}_{\mathbf{X}\mathbf{X}}, \Sigma or S. Definition Throughout this article, boldfaced u ...
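The two-dimensional example can be sketched with a sample covariance matrix computed from a handful of points. The unbiased estimator with divisor n - 1 is one common convention and is an assumption here, as are the function name `covariance_matrix` and the data.

```python
from statistics import mean

def covariance_matrix(samples):
    """Sample covariance matrix (divisor n - 1) of equal-length tuples."""
    n = len(samples)
    d = len(samples[0])
    means = [mean(row[j] for row in samples) for j in range(d)]
    return [
        [
            sum((row[i] - means[i]) * (row[j] - means[j]) for row in samples)
            / (n - 1)
            for j in range(d)
        ]
        for i in range(d)
    ]

# Hypothetical points in the plane.
points = [(1.0, 2.0), (2.0, 1.0), (3.0, 4.0), (4.0, 3.0)]
cov = covariance_matrix(points)

for row in cov:
    print(row)
```

The diagonal entries are the variances in the x and y directions, and the off-diagonal entry carries the extra information about how the two coordinates vary together, which neither variance alone captures.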

If And Only If

In logic and related fields such as mathematics and philosophy, "if and only if" (often shortened as "iff") is paraphrased by the biconditional, a logical connective between statements. The biconditional is true in two cases, where either both statements are true or both are false. The connective is biconditional (a statement of material equivalence), and can be likened to the standard material conditional ("only if", equal to "if ... then") combined with its reverse ("if"); hence the name. The result is that the truth of either one of the connected statements requires the truth of the other (i.e. either both statements are true, or both are false), though it is controversial whether the connective thus defined is properly rendered by the English "if and only if", with its pre-existing meaning. For example, ''P if and only if Q'' means that ''P'' is true whenever ''Q'' is true, and the only case in which ''P'' is true is if ''Q'' is also true, whereas in the case of ''P if Q ...
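The decomposition described above, "only if" combined with its reverse "if", can be checked by enumerating the truth table. The function names are hypothetical, and the connectives are read as material conditionals, as in the paragraph.

```python
from itertools import product

def only_if(p, q):
    """'P only if Q': material conditional, P implies Q."""
    return (not p) or q

def if_(p, q):
    """'P if Q': the reverse conditional, Q implies P."""
    return (not q) or p

def iff(p, q):
    """'P if and only if Q': true exactly when both values agree."""
    return p == q

rows = []
for p, q in product([True, False], repeat=2):
    rows.append((p, q, only_if(p, q), if_(p, q), iff(p, q)))
    print(p, q, only_if(p, q), if_(p, q), iff(p, q))
```

In every row the last column equals the conjunction of the two conditionals, and it is true only when P and Q are both true or both false.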

Random Variable

A random variable (also called random quantity, aleatory variable, or stochastic variable) is a mathematical formalization of a quantity or object which depends on random events. The term 'random variable' in its mathematical definition refers to neither randomness nor variability but instead is a mathematical function in which

* the domain is the set of possible outcomes in a sample space (e.g. the set \{H, T\}, the possible upper sides of a flipped coin, heads H or tails T, as the result from tossing a coin); and
* the range is a measurable space (e.g. corresponding to the domain above, the range might be the set \{-1, 1\} if, say, heads H is mapped to -1 and tails T is mapped to 1).

Typically, the range of a random variable is a subset of the real numbers. Informally, randomness typically represents some fundamental element of chance, such as in the roll of a dice, d ...
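The coin example can be written out directly as a function from outcomes to numbers. The sketch assumes a fair coin (the excerpt does not give probabilities) and uses exact fractions; the names `sample_space`, `X`, and `prob` are illustrative.

```python
from fractions import Fraction

# Domain: the sample space of a single coin toss.
sample_space = ["H", "T"]

def X(outcome):
    """The random variable: heads maps to -1, tails maps to 1."""
    return {"H": -1, "T": 1}[outcome]

# Assumed probability measure on the sample space (fair coin).
prob = {"H": Fraction(1, 2), "T": Fraction(1, 2)}

# Distribution of X: push the outcome probabilities through the function.
distribution = {}
for outcome in sample_space:
    value = X(outcome)
    distribution[value] = distribution.get(value, 0) + prob[outcome]

expected_value = sum(value * p for value, p in distribution.items())
print(distribution, expected_value)
```

Note that `X` itself is deterministic; all of the randomness lives in which outcome occurs, matching the remark that the term refers to a function rather than to randomness or variability.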