|

Weak Equivalence (formal Languages)

In formal language theory, weak equivalence of two grammars means they generate the same set of strings, i.e. that the formal language they generate is the same. In compiler theory the notion is distinguished from strong (or structural) equivalence, which additionally means that the two parse trees are reasonably similar in that the same semantic interpretation can be assigned to both. Vijay-Shanker and Weir (1994) demonstrates that Linear Indexed Grammars, Combinatory Categorial Grammars, Tree-adjoining Grammars, and Head Grammars are weakly equivalent formalisms, in that they all define the same string languages. On the other hand, if two grammars generate the same set of derivation trees (or more generally, the same set of abstract syntactic objects), then the two grammars are strongly equivalent. Chomsky (1963) introduces the notion of strong equivalence, and argues that only strong equivalence is relevant when comparing grammar formalisms. Kornai and Pullum (1990)Kornai, A ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Formal Language

In logic, mathematics, computer science, and linguistics, a formal language consists of words whose letters are taken from an alphabet and are well-formed according to a specific set of rules. The alphabet of a formal language consists of symbols, letters, or tokens that concatenate into strings of the language. Each string concatenated from symbols of this alphabet is called a word, and the words that belong to a particular formal language are sometimes called ''well-formed words'' or '' well-formed formulas''. A formal language is often defined by means of a formal grammar such as a regular grammar or context-free grammar, which consists of its formation rules. In computer science, formal languages are used among others as the basis for defining the grammar of programming languages and formalized versions of subsets of natural languages in which the words of the language represent concepts that are associated with particular meanings or semantics. In computational compl ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Context-free Grammar

In formal language theory, a context-free grammar (CFG) is a formal grammar whose production rules are of the form :A\ \to\ \alpha with A a ''single'' nonterminal symbol, and \alpha a string of terminals and/or nonterminals (\alpha can be empty). A formal grammar is "context-free" if its production rules can be applied regardless of the context of a nonterminal. No matter which symbols surround it, the single nonterminal on the left hand side can always be replaced by the right hand side. This is what distinguishes it from a context-sensitive grammar. A formal grammar is essentially a set of production rules that describe all possible strings in a given formal language. Production rules are simple replacements. For example, the first rule in the picture, :\langle\text\rangle \to \langle\text\rangle = \langle\text\rangle ; replaces \langle\text\rangle with \langle\text\rangle = \langle\text\rangle ;. There can be multiple replacement rules for a given nonterminal symbol. Th ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Context-free Grammar

In formal language theory, a context-free grammar (CFG) is a formal grammar whose production rules are of the form :A\ \to\ \alpha with A a ''single'' nonterminal symbol, and \alpha a string of terminals and/or nonterminals (\alpha can be empty). A formal grammar is "context-free" if its production rules can be applied regardless of the context of a nonterminal. No matter which symbols surround it, the single nonterminal on the left hand side can always be replaced by the right hand side. This is what distinguishes it from a context-sensitive grammar. A formal grammar is essentially a set of production rules that describe all possible strings in a given formal language. Production rules are simple replacements. For example, the first rule in the picture, :\langle\text\rangle \to \langle\text\rangle = \langle\text\rangle ; replaces \langle\text\rangle with \langle\text\rangle = \langle\text\rangle ;. There can be multiple replacement rules for a given nonterminal symbol. Th ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Generative Grammar

Generative grammar, or generativism , is a linguistic theory that regards linguistics as the study of a hypothesised innate grammatical structure. It is a biological or biologistic modification of earlier structuralist theories of linguistics, deriving ultimately from glossematics. Generative grammar considers grammar as a system of rules that generates exactly those combinations of words that form grammatical sentences in a given language. It is a system of explicit rules that may apply repeatedly to generate an indefinite number of sentences which can be as long as one wants them to be. The difference from structural and functional models is that the object is base-generated within the verb phrase in generative grammar. This purportedly cognitive structure is thought of as being a part of a universal grammar, a syntactic structure which is caused by a genetic mutation in humans. Generativists have created numerous theories to make the NP VP (NP) analysis work in nat ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Postfix Traversal

In computer science, tree traversal (also known as tree search and walking the tree) is a form of graph traversal and refers to the process of visiting (e.g. retrieving, updating, or deleting) each node in a tree data structure, exactly once. Such traversals are classified by the order in which the nodes are visited. The following algorithms are described for a binary tree, but they may be generalized to other trees as well. Types Unlike linked lists, one-dimensional arrays and other linear data structures, which are canonically traversed in linear order, trees may be traversed in multiple ways. They may be traversed in depth-first or breadth-first order. There are three common ways to traverse them in depth-first order: in-order, pre-order and post-order. Beyond these basic traversals, various more complex or hybrid schemes are possible, such as depth-limited searches like iterative deepening depth-first search. The latter, as well as breadth-first search, can also be used t ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Order Of Operations

In mathematics and computer programming, the order of operations (or operator precedence) is a collection of rules that reflect conventions about which procedures to perform first in order to evaluate a given mathematical expression. For example, in mathematics and most computer languages, multiplication is granted a higher precedence than addition, and it has been this way since the introduction of modern algebraic notation. Thus, the expression is interpreted to have the value , and not . When exponents were introduced in the 16th and 17th centuries, they were given precedence over both addition and multiplication, and could be placed only as a superscript to the right of their base. Thus and . These conventions exist to eliminate notational ambiguity, while allowing notation to be as brief as possible. Where it is desired to override the precedence conventions, or even simply to emphasize them, parentheses ( ) can be used. For example, forces addition to precede multipl ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Concrete Syntax Tree

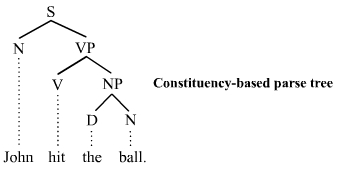

A parse tree or parsing tree or derivation tree or concrete syntax tree is an ordered, rooted tree that represents the syntactic structure of a string according to some context-free grammar. The term ''parse tree'' itself is used primarily in computational linguistics; in theoretical syntax, the term ''syntax tree'' is more common. Concrete syntax trees reflect the syntax of the input language, making them distinct from the abstract syntax trees used in computer programming. Unlike Reed-Kellogg sentence diagrams used for teaching grammar, parse trees do not use distinct symbol shapes for different types of constituents. Parse trees are usually constructed based on either the constituency relation of constituency grammars (phrase structure grammars) or the dependency relation of dependency grammars. Parse trees may be generated for sentences in natural languages (see natural language processing), as well as during processing of computer languages, such as programming languages. ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Start Symbol (formal Languages)

In formal language theory, a grammar (when the context is not given, often called a formal grammar for clarity) describes how to form strings from a language's alphabet that are valid according to the language's syntax. A grammar does not describe the meaning of the strings or what can be done with them in whatever context—only their form. A formal grammar is defined as a set of production rules for such strings in a formal language. Formal language theory, the discipline that studies formal grammars and languages, is a branch of applied mathematics. Its applications are found in theoretical computer science, theoretical linguistics, formal semantics, mathematical logic, and other areas. A formal grammar is a set of rules for rewriting strings, along with a "start symbol" from which rewriting starts. Therefore, a grammar is usually thought of as a language generator. However, it can also sometimes be used as the basis for a "recognizer"—a function in computing that det ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Parse Tree Derivations ETF

Parsing, syntax analysis, or syntactic analysis is the process of analyzing a string of symbols, either in natural language, computer languages or data structures, conforming to the rules of a formal grammar. The term ''parsing'' comes from Latin ''pars'' (''orationis''), meaning part (of speech). The term has slightly different meanings in different branches of linguistics and computer science. Traditional sentence parsing is often performed as a method of understanding the exact meaning of a sentence or word, sometimes with the aid of devices such as sentence diagrams. It usually emphasizes the importance of grammatical divisions such as subject and predicate. Within computational linguistics the term is used to refer to the formal analysis by a computer of a sentence or other string of words into its constituents, resulting in a parse tree showing their syntactic relation to each other, which may also contain semantic and other information (p-values). Some parsing ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Formal Grammars

In formal language theory, a grammar (when the context is not given, often called a formal grammar for clarity) describes how to form strings from a language's alphabet that are valid according to the language's syntax. A grammar does not describe the meaning of the strings or what can be done with them in whatever context—only their form. A formal grammar is defined as a set of production rules for such strings in a formal language. Formal language theory, the discipline that studies formal grammars and languages, is a branch of applied mathematics. Its applications are found in theoretical computer science, theoretical linguistics, formal semantics, mathematical logic, and other areas. A formal grammar is a set of rules for rewriting strings, along with a "start symbol" from which rewriting starts. Therefore, a grammar is usually thought of as a language generator. However, it can also sometimes be used as the basis for a " recognizer"—a function in computing that dete ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Head-driven Phrase Structure Grammar

Head-driven phrase structure grammar (HPSG) is a highly lexicalized, constraint-based grammar developed by Carl Pollard and Ivan Sag. It is a type of phrase structure grammar, as opposed to a dependency grammar, and it is the immediate successor to generalized phrase structure grammar. HPSG draws from other fields such as computer science ( data type theory and knowledge representation) and uses Ferdinand de Saussure's notion of the sign. It uses a uniform formalism and is organized in a modular way which makes it attractive for natural language processing. An HPSG grammar includes principles and grammar rules and lexicon entries which are normally not considered to belong to a grammar. The formalism is based on lexicalism. This means that the lexicon is more than just a list of entries; it is in itself richly structured. Individual entries are marked with types. Types form a hierarchy. Early versions of the grammar were very lexicalized with few grammatical rules (schema). Mo ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |