
NAS Benchmarks

NAS Parallel Benchmarks (NPB) are a set of benchmarks targeting performance evaluation of highly parallel supercomputers. They are developed and maintained by the NASA Advanced Supercomputing (NAS) Division (formerly the NASA Numerical Aerodynamic Simulation Program) based at the NASA Ames Research Center. NAS solicits performance results for NPB from all sources.

Motivation: Traditional benchmarks that existed before NPB, such as the Livermore loops, the LINPACK Benchmark and the NAS Kernel Benchmark Program, were usually specialized for vector computers. They generally suffered from inadequacies, including parallelism-impeding tuning restrictions and insufficient problem sizes, which rendered them inappropriate for highly parallel systems. Full-scale application benchmarks were equally unsuitable because of their high porting cost and the unavailability of automatic software parallelization tools. As a result, NPB were developed in 1991 and released in 1992 to address the ensuing lack o ...

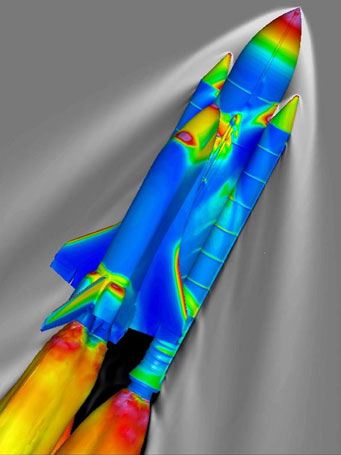

NASA Advanced Supercomputing Division

The NASA Advanced Supercomputing (NAS) Division is located at NASA Ames Research Center, Moffett Field, in the heart of Silicon Valley in Mountain View, California. It has been the major supercomputing and modeling and simulation resource for NASA missions in aerodynamics, space exploration, studies in weather patterns and ocean currents, and space shuttle and aircraft design and development for almost forty years. The facility currently houses the petascale Pleiades, Aitken, and Electra supercomputers, as well as the terascale Endeavour supercomputer. The systems are based on Silicon Graphics (SGI) and Hewlett Packard Enterprise (HPE) architectures with Intel processors. The main building also houses disk and archival tape storage systems with a capacity of over an exabyte of data, the hyperwall visualization system, and one of the largest InfiniBand network fabrics in the world. ...

Sparse Matrix

In numerical analysis and scientific computing, a sparse matrix or sparse array is a matrix in which most of the elements are zero. There is no strict definition regarding the proportion of zero-value elements for a matrix to qualify as sparse, but a common criterion is that the number of non-zero elements is roughly equal to the number of rows or columns. By contrast, if most of the elements are non-zero, the matrix is considered dense. The number of zero-valued elements divided by the total number of elements (e.g., $m \times n$ for an $m \times n$ matrix) is sometimes referred to as the sparsity of the matrix. Conceptually, sparsity corresponds to systems with few pairwise interactions. For example, consider a line of balls connected by springs from one to the next: this is a sparse system, as only adjacent balls are coupled. By contrast, if the same line of balls were to have springs connecting each ball to all other balls, the system would correspond to a dense matrix. The ...
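The spring-chain picture can be made concrete with a small sketch. The helper names `spring_chain_matrix` and `sparsity` are illustrative, not taken from any library, and the matrix is stored densely as nested lists only to keep the zero count easy to see.

```python
def spring_chain_matrix(n, k=1.0):
    """Coupling matrix for n balls in a line, with a spring of
    stiffness k between each pair of adjacent balls (hypothetical helper)."""
    a = [[0.0] * n for _ in range(n)]
    for i in range(n - 1):
        # The spring between ball i and ball i + 1 touches only those two.
        a[i][i] += k
        a[i + 1][i + 1] += k
        a[i][i + 1] -= k
        a[i + 1][i] -= k
    return a


def sparsity(matrix):
    """Number of zero-valued entries divided by the total number of entries."""
    total = sum(len(row) for row in matrix)
    zeros = sum(1 for row in matrix for value in row if value == 0)
    return zeros / total


chain = spring_chain_matrix(10)
print(sparsity(chain))  # 28 of the 100 entries are non-zero
```

Only the main diagonal and its two neighbours are populated, so the fraction of zeros grows towards 1 as the chain gets longer.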

Marsaglia Polar Method

The Marsaglia polar method is a pseudo-random number sampling method for generating a pair of independent standard normal random variables. Standard normal random variables are frequently used in computer science, computational statistics, and in particular, in applications of the Monte Carlo method. The polar method works by choosing random points $(x, y)$ in the square $-1 < x < 1$, $-1 < y < 1$ until $0 < s = x^2 + y^2 < 1$, and then returning the required pair of normal random variables as $x\sqrt{\frac{-2\ln s}{s}},\ y\sqrt{\frac{-2\ln s}{s}}$ or, equivalently, $\frac{x}{\sqrt{s}}\sqrt{-2\ln s},\ \frac{y}{\sqrt{s}}\sqrt{-2\ln s}$, where $x/\sqrt{s}$ and $y/\sqrt{s}$ represent the cosine and ...
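A minimal sketch of the polar method follows: it rejection-samples a point inside the unit circle and then scales both coordinates by $\sqrt{-2\ln s / s}$, where $s = x^2 + y^2$. The function name `marsaglia_polar` and the `rng` parameter are illustrative choices; any object with a `uniform(a, b)` method, such as `random.Random`, should work.

```python
import math
import random


def marsaglia_polar(rng=random):
    """Return a pair of independent standard normal variates."""
    while True:
        # Pick a point uniformly in the square [-1, 1] x [-1, 1].
        x = rng.uniform(-1.0, 1.0)
        y = rng.uniform(-1.0, 1.0)
        s = x * x + y * y
        # Accept only points strictly inside the unit circle, excluding the origin.
        if 0.0 < s < 1.0:
            break
    factor = math.sqrt(-2.0 * math.log(s) / s)
    return x * factor, y * factor


rng = random.Random(12345)
z0, z1 = marsaglia_polar(rng)
print(z0, z1)
```

About 79% of candidate points (the ratio of the circle's area to the square's, $\pi/4$) are accepted, so the loop usually ends after one or two draws.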

Random Variate

In probability and statistics, a random variate or simply variate is a particular outcome of a random variable; other variates drawn from the same random variable might have different values (random numbers). A random deviate or simply deviate is the difference of a random variate with respect to the distribution's central location (e.g., mean), often divided by the standard deviation of the distribution (i.e., as a standard score). Random variates are used when simulating processes driven by random influences (stochastic processes). In modern applications, such simulations would derive random variates corresponding to any given probability distribution from computer procedures designed to create random variates corresponding to a uniform distribution, where these procedures would actually provide values chosen from a uniform distribution of pseudorandom numbers. Procedures to generate random variates corresponding to a given distribution are known as ...

Normal Distribution

In statistics, a normal distribution or Gaussian distribution is a type of continuous probability distribution for a real-valued random variable. The general form of its probability density function is

$$f(x) = \frac{1}{\sigma\sqrt{2\pi}}\, e^{-\frac{1}{2}\left(\frac{x-\mu}{\sigma}\right)^{2}}$$

The parameter $\mu$ is the mean or expectation of the distribution (and also its median and mode), while the parameter $\sigma$ is its standard deviation. The variance of the distribution is $\sigma^2$. A random variable with a Gaussian distribution is said to be normally distributed, and is called a normal deviate. Normal distributions are important in statistics and are often used in the natural and social sciences to represent real-valued random variables whose distributions are not known. Their importance is partly due to the central limit theorem. It states that, under some conditions, the average of many samples (observations) of a random variable with finite mean and variance is itself a random variable whose distribution converges to a normal dist ...

Embarrassingly Parallel

In parallel computing, an embarrassingly parallel workload or problem (also called embarrassingly parallelizable, perfectly parallel, delightfully parallel or pleasingly parallel) is one where little or no effort is needed to separate the problem into a number of parallel tasks. This is often the case where there is little or no dependency or need for communication between those parallel tasks, or for results between them. Thus, these are different from distributed computing problems that need communication between tasks, especially communication of intermediate results. They are easy to perform on server farms which lack the special infrastructure used in a true supercomputer cluster. They are thus well suited to large, Internet-based volunteer computing platforms such as BOINC, and do not suffer from parallel slowdown. The opposite of embarrassingly parallel problems are inherently serial problems, which cannot be parallelized at all. A common example of a ...
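As an illustration of tasks that need no communication, the sketch below splits a Monte Carlo estimate of $\pi$ into independent batches. The function names, the per-task seeding scheme and the batch sizes are assumptions made for this example. A thread pool is used only to keep the snippet portable; in CPython, a `ProcessPoolExecutor` would be the usual choice for real CPU-bound speedup.

```python
import random
from concurrent.futures import ThreadPoolExecutor


def count_hits(seed, trials):
    """Count random points in the unit square that fall inside the quarter circle.
    Each task owns its generator, so tasks share no state."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        x, y = rng.random(), rng.random()
        if x * x + y * y <= 1.0:
            hits += 1
    return hits


def estimate_pi(tasks=4, trials_per_task=50_000):
    """Run the batches independently; the only combination step is a final sum."""
    with ThreadPoolExecutor(max_workers=tasks) as pool:
        hits = pool.map(count_hits, range(tasks), [trials_per_task] * tasks)
        total = sum(hits)
    return 4.0 * total / (tasks * trials_per_task)


print(estimate_pi())
```

The tasks exchange nothing while running, which is the property that makes the workload embarrassingly parallel.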

Bucket Sort

Bucket sort, or bin sort, is a sorting algorithm that works by distributing the elements of an array into a number of buckets. Each bucket is then sorted individually, either using a different sorting algorithm, or by recursively applying the bucket sorting algorithm. It is a distribution sort, a generalization of pigeonhole sort that allows multiple keys per bucket, and is a cousin of radix sort in the most-to-least significant digit flavor. Bucket sort can be implemented with comparisons and therefore can also be considered a comparison sort algorithm. The computational complexity depends on the algorithm used to sort each bucket, the number of buckets to use, and whether the input is uniformly distributed. Bucket sort works as follows:

1. Set up an array of initially empty "buckets".
2. Scatter: Go over the original array, putting each object in its bucket.
3. Sort each non-empty bucket.
4. Gather: Visit the buckets in order and put all elements back into the original array.

P ...
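The four steps can be sketched as follows. This is one possible realization: it assumes numeric input, uses equal-width buckets spanning the observed minimum and maximum, sorts each bucket with Python's built-in `sorted`, and returns a new list instead of writing back into the original array.

```python
def bucket_sort(values, num_buckets=10):
    """Sort numbers by scattering them into equal-width buckets."""
    if not values:
        return []
    lo, hi = min(values), max(values)
    if lo == hi:
        return list(values)  # all keys equal, nothing to order

    # Step 1: set up an array of initially empty buckets.
    buckets = [[] for _ in range(num_buckets)]
    width = (hi - lo) / num_buckets

    # Step 2 (scatter): put each value into its bucket.
    for v in values:
        index = min(int((v - lo) / width), num_buckets - 1)  # clamp the maximum
        buckets[index].append(v)

    # Steps 3 and 4 (sort, gather): sort each bucket and concatenate in order.
    result = []
    for bucket in buckets:
        result.extend(sorted(bucket))
    return result


print(bucket_sort([0.42, 0.32, 0.33, 0.52, 0.37, 0.47, 0.51]))
```

With roughly uniform input each bucket stays small, which is where the method gains over sorting the whole array at once.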

Integer Sorting

In computer science, integer sorting is the algorithmic problem of sorting a collection of data values by integer keys. Algorithms designed for integer sorting may also often be applied to sorting problems in which the keys are floating point numbers, rational numbers, or text strings. The ability to perform integer arithmetic on the keys allows integer sorting algorithms to be faster than comparison sorting algorithms in many cases, depending on the details of which operations are allowed in the model of computing and how large the integers to be sorted are. Integer sorting algorithms including pigeonhole sort, counting sort, and radix sort are widely used and practical. Other integer sorting algorithms with smaller worst-case time bounds are not believed to be practical for computer architectures with 64 or fewer bits per word. Many such algorithms are known, with performance depending on a combination of the number of items to be sorted, number of bits per key, and number of ...
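Counting sort, one of the practical algorithms named above, shows how arithmetic on keys replaces comparisons. The sketch below assumes non-negative integer keys no larger than `max_key`; the function signature is an illustrative choice.

```python
def counting_sort(records, key, max_key):
    """Stable sort of records by an integer key in the range 0..max_key."""
    # Count how many records carry each key.
    counts = [0] * (max_key + 1)
    for record in records:
        counts[key(record)] += 1

    # Turn the counts into the starting output position of each key.
    start = 0
    for k in range(max_key + 1):
        counts[k], start = start, start + counts[k]

    # Place each record at the next free slot for its key.
    output = [None] * len(records)
    for record in records:
        k = key(record)
        output[counts[k]] = record
        counts[k] += 1
    return output


records = [(3, "a"), (1, "b"), (3, "c"), (0, "d"), (1, "e")]
print(counting_sort(records, key=lambda r: r[0], max_key=3))
```

The running time is proportional to the number of records plus `max_key`, and records with equal keys keep their input order, which is what lets radix sort use counting sort as a building block.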

Fast Fourier Transform

A fast Fourier transform (FFT) is an algorithm that computes the discrete Fourier transform (DFT) of a sequence, or its inverse (IDFT). Fourier analysis converts a signal from its original domain (often time or space) to a representation in the frequency domain and vice versa. The DFT is obtained by decomposing a sequence of values into components of different frequencies. This operation is useful in many fields, but computing it directly from the definition is often too slow to be practical. An FFT rapidly computes such transformations by factorizing the DFT matrix into a product of sparse (mostly zero) factors. As a result, it manages to reduce the complexity of computing the DFT from $O(N^2)$, which arises if one simply applies the definition of DFT, to $O(N \log N)$, where $N$ is the data size. The difference in speed can be enormous, especially for long data sets where $N$ may be in the thousands or millions. In the presence of round-off error, many FFT algorith ...
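To make the $O(N^2)$ versus $O(N \log N)$ contrast concrete, the sketch below puts a direct evaluation of the DFT definition next to a recursive radix-2 Cooley-Tukey FFT. Radix-2 is just one of many FFT variants, and this version assumes the input length is a power of two.

```python
import cmath


def dft(x):
    """Direct O(N^2) evaluation of the DFT definition."""
    n = len(x)
    return [
        sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def fft(x):
    """Recursive radix-2 FFT; the length must be a power of two."""
    n = len(x)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    if n == 1:
        return list(x)
    even = fft(x[0::2])  # DFT of the even-indexed samples
    odd = fft(x[1::2])   # DFT of the odd-indexed samples
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


signal = [1, 2, 3, 4, 0, 0, 0, 0]
print(max(abs(a - b) for a, b in zip(fft(signal), dft(signal))))
```

Each recursion level does $O(N)$ work and there are $\log_2 N$ levels, which is where the $O(N \log N)$ bound comes from.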

Partial Differential Equation

In mathematics, a partial differential equation (PDE) is an equation which imposes relations between the various partial derivatives of a multivariable function. The function is often thought of as an "unknown" to be solved for, similarly to how $x$ is thought of as an unknown number to be solved for in an algebraic equation like $x^2 - 3x + 2 = 0$. However, it is usually impossible to write down explicit formulas for solutions of partial differential equations. There is, correspondingly, a vast amount of modern mathematical and scientific research on methods to numerically approximate solutions of certain partial differential equations using computers. Partial differential equations also occupy a large sector of pure mathematical research, in which the usual questions are, broadly speaking, on the identification of general qualitative features of solutions of various partial differential equations, such as existence, uniqueness, regularity, and stability. Among the many open questions are the e ...

System Of Linear Equations

In mathematics, a system of linear equations (or linear system) is a collection of one or more linear equations involving the same variables. For example,

$$\begin{cases} 3x+2y-z=1\\ 2x-2y+4z=-2\\ -x+\frac{1}{2}y-z=0 \end{cases}$$

is a system of three equations in the three variables $x$, $y$, $z$. A solution to a linear system is an assignment of values to the variables such that all the equations are simultaneously satisfied. A solution to the system above is given by the ordered triple $(x,y,z)=(1,-2,-2)$, since it makes all three equations valid. The word "system" indicates that the equations are to be considered collectively, rather than individually. In mathematics, the theory of linear systems is the basis and a fundamental part of linear algebra, a subject which is used in most parts of modern mathematics. Computational algorithms for finding the solutions are an important part of numerical linear algebra, and play a prominent role in engineering, physics, chemistry, computer science, and economics. ...
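The stated solution can be checked mechanically. The sketch below solves a three-equation system with coefficients 3, 2, −1; 2, −2, 4; −1, 1/2, −1 and right-hand side 1, −2, 0 by Gauss-Jordan elimination in exact rational arithmetic. The function name `solve` is illustrative, and the routine assumes the system has a unique solution.

```python
from fractions import Fraction


def solve(a, b):
    """Gauss-Jordan elimination over the rationals (assumes a unique solution)."""
    n = len(b)
    # Augmented matrix [A | b] with exact fractions.
    m = [[Fraction(v) for v in row] + [Fraction(rhs)] for row, rhs in zip(a, b)]
    for col in range(n):
        # Find a row with a non-zero entry in this column and move it up.
        pivot = next(r for r in range(col, n) if m[r][col] != 0)
        m[col], m[pivot] = m[pivot], m[col]
        # Scale the pivot row so the pivot becomes 1.
        m[col] = [v / m[col][col] for v in m[col]]
        # Eliminate this column from every other row.
        for r in range(n):
            if r != col:
                factor = m[r][col]
                m[r] = [vr - factor * vc for vr, vc in zip(m[r], m[col])]
    return [row[n] for row in m]


A = [[3, 2, -1], [2, -2, 4], [-1, Fraction(1, 2), -1]]
b = [1, -2, 0]
print(solve(A, b))  # [Fraction(1, 1), Fraction(-2, 1), Fraction(-2, 1)]
```

Using `Fraction` avoids round-off, so the result matches the triple $(1, -2, -2)$ exactly rather than approximately.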

Conjugate Gradient Method

In mathematics, the conjugate gradient method is an algorithm for the numerical solution of particular systems of linear equations, namely those whose matrix is symmetric and positive-definite. The conjugate gradient method is often implemented as an iterative algorithm, applicable to sparse systems that are too large to be handled by a direct implementation or other direct methods such as the Cholesky decomposition. Large sparse systems often arise when numerically solving partial differential equations or optimization problems. The conjugate gradient method can also be used to solve unconstrained optimization problems such as energy minimization. It is commonly attributed to Magnus Hestenes and Eduard Stiefel, who programmed it on the Z4 and researched it extensively. The biconjugate gradient method provides a generalization to non-symmetric matrices. Various nonlinear conjugate gradient methods seek minima of nonlinear optimization problems. Description of the problem address ...
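A minimal sketch of the iterative form is given below, assuming a symmetric positive-definite matrix. The matrix is stored as dense nested lists for brevity, whereas a practical solver would use a sparse format. The function name, tolerance and iteration cap are illustrative choices.

```python
def conjugate_gradient(a, b, tol=1e-10, max_iter=None):
    """Solve a x = b for a symmetric positive-definite matrix a."""
    n = len(b)
    if max_iter is None:
        max_iter = 10 * n

    def dot(u, v):
        return sum(ui * vi for ui, vi in zip(u, v))

    def matvec(m, v):
        return [dot(row, v) for row in m]

    x = [0.0] * n
    r = list(b)   # residual b - a x for the initial guess x = 0
    p = list(r)   # first search direction
    rs_old = dot(r, r)
    for _ in range(max_iter):
        if rs_old ** 0.5 < tol:
            break
        ap = matvec(a, p)
        alpha = rs_old / dot(p, ap)  # step length along p
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, ap)]
        rs_new = dot(r, r)
        # The new direction is conjugate to the previous ones with respect to a.
        p = [ri + (rs_new / rs_old) * pi for ri, pi in zip(r, p)]
        rs_old = rs_new
    return x


print(conjugate_gradient([[4.0, 1.0], [1.0, 3.0]], [1.0, 2.0]))
```

The matrix enters the loop only through matrix-vector products, which is why the method suits large sparse systems. For the 2 × 2 example the exact solution is $(1/11, 7/11)$.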